AWS News Blog

GE Oil & Gas – Digital Transformation in the Cloud

GE Oil & Gas is a relatively young division of General Electric, the product of a series of acquisitions that the parent company began making in the late 1980s. Today GE Oil & Gas is pioneering the digital transformation of the company. In the guest post below, Ben Cabanas, the CTO of GE Transportation and formerly the cloud architect for GE Oil & Gas, talks about some of the key steps involved in a major enterprise cloud migration, the theme of his recent presentation at the 2016 AWS Summit in Sydney, Australia.

You may also want to learn more about Enterprise Cloud Computing with AWS.

— Jeff;

Challenges and Transformation

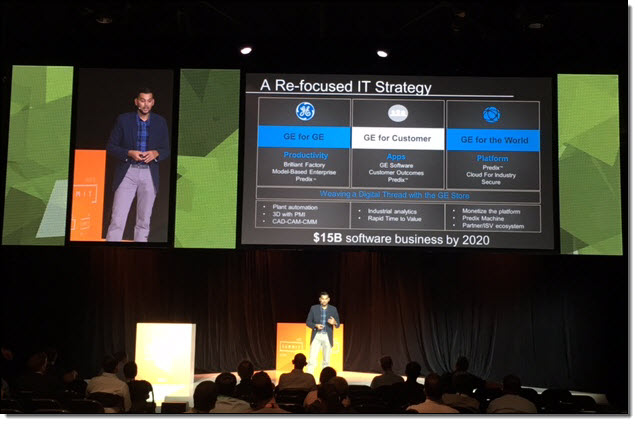

GE Oil & Gas is at the forefront of GE’s digital transformation, a key strategy for the company going forward. The division is also operating at a time when the industry is facing enormous competitive and cost challenges, so embracing technological innovation is essential. As GE CIO Jim Fowler has noted, today’s industrial companies have to become digital innovators to thrive.

Moving to the cloud is a central part of this transformation for GE. Of course, that’s easier said than done for a large enterprise division of our size, global reach, and role in the industry. GE Oil & Gas has more than 45,000 employees working across 11 different regions and seven research centers. About 85 percent of the world’s offshore oil rigs use our drilling systems, and we spend $5 billion annually on energy-related research and development—work that benefits the entire industry. To support all of that work, GE Oil & Gas has about 900 applications, part of a far larger portfolio of about 9,000 apps used across GE. A lot of those apps may have 100 users or fewer, but are still vital to the business, so it’s a huge undertaking to move them to the cloud.

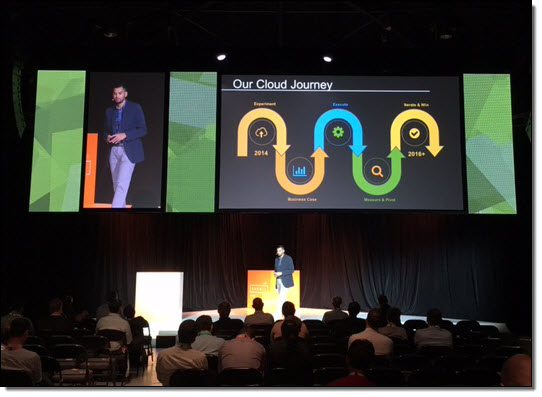

Our cloud journey started in late 2013 with a couple of goals. We wanted to improve productivity on our shop floors and in our manufacturing operations. We sought to build applications and solutions that could reduce downtime and improve operations. Most importantly, we wanted to cut costs while improving the speed and agility of our IT processes and infrastructure.

Iterative Steps

Working with AWS Professional Services and Sogeti, we launched the cloud initiative in 2013 with a highly iterative approach. In the beginning, we didn’t know what we didn’t know, and had to learn agile practices as well as how to move apps to the cloud. We took steps that, in retrospect, were crucial in supporting later success and accelerated cloud adoption. For example, we sent more than 50 employees to Seattle for training and immersion in AWS technologies so we could keep critical technical IP in-house. We built foundational services on AWS, such as monitoring, backup, DNS, and SSO automation, that after a year or so fostered the operational maturity to speed the cloud journey. In the process, we discovered that by using AWS, we can build things at a much faster pace than we could ever accomplish internally.

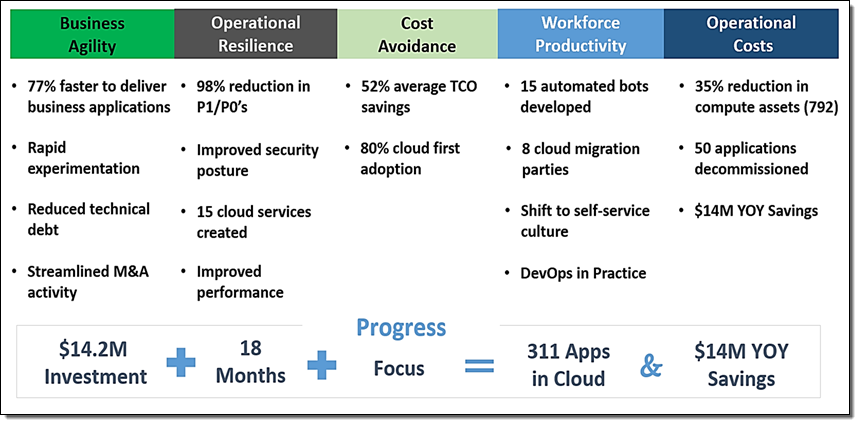

Moving to AWS has delivered both cost and operational benefits to GE Oil & Gas.

We architected for resilience, and strove to automate as much as possible to reduce touch times. Because automation was an overriding consideration, we created a “bot army” that is aligned with loosely coupled microservices to support continuous development without sacrificing corporate governance and security practices. We built in security at every layer with smart designs that could insulate and protect GE in the cloud, and set out to measure as much as we could—TCO, benchmarks, KPIs, and business outcomes. We also tagged everything for greater accountability and to understand the architecture and business value of the applications in the portfolio.

Moving Forward

All of these efforts are now starting to pay off. To date, we’ve realized a 52 percent reduction in TCO. That stems from a number of factors, including the bot-enabled automation, a push for self-service, dynamic storage allocation, using lower-cost VMs when possible, shutting off compute instances when they’re not needed, and moving from Oracle to Amazon Aurora. Ultimately, these savings are a byproduct of doing the right thing.
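To make one of those factors concrete, here is a minimal sketch of how an automation bot might decide which compute instances to shut off outside working hours. It is illustrative only and not GE's implementation: the `Instance` record, the `schedule` tag and its `office-hours` value, and the 07:00 to 19:00 weekday window are all assumptions made for this example, and the code makes no AWS API calls.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List


@dataclass(frozen=True)
class Instance:
    """Hypothetical, simplified view of a compute instance."""
    instance_id: str
    state: str              # "running" or "stopped"
    tags: Dict[str, str]


def in_business_hours(now: datetime, start_hour: int = 7, end_hour: int = 19) -> bool:
    """True on weekdays (Mon-Fri) between start_hour and end_hour (assumed window)."""
    return now.weekday() < 5 and start_hour <= now.hour < end_hour


def instances_to_stop(instances: List[Instance], now: datetime) -> List[str]:
    """Return IDs of running instances tagged for office-hours use
    when the current time falls outside the assumed business window."""
    if in_business_hours(now):
        return []
    return [
        inst.instance_id
        for inst in instances
        if inst.state == "running" and inst.tags.get("schedule") == "office-hours"
    ]


# Example fleet; the IDs and tag values are made up for illustration.
fleet = [
    Instance("i-aaa", "running", {"schedule": "office-hours", "app": "reporting"}),
    Instance("i-bbb", "running", {"schedule": "always-on", "app": "monitoring"}),
    Instance("i-ccc", "stopped", {"schedule": "office-hours", "app": "training"}),
]

# Saturday at noon: only the running, office-hours instance is selected.
print(instances_to_stop(fleet, datetime(2016, 6, 4, 12, 0)))
```

Keeping the decision logic separate from the call that actually stops an instance makes the policy easy to test, and it relies on consistent tagging to tell the bot which workloads are safe to pause.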

The other big return we’ve seen so far is an increase in productivity. With more resilient, cloud-enabled applications and a focus on self-service capability, we’re getting close to a “NoOps” environment, one where we can move away from “DevOps” and “ArchOps,” and all the other “ops,” using automation and orchestration to scale effectively without needing an army of people. We’ve also seen a 50 percent reduction in “tickets” and a 98 percent reduction in impactful business outages and incidents—an unexpected benefit that is as valuable as the cost savings.

For large organizations, the cloud journey is an extended process. But we’re seeing clear benefits and, from the emerging metrics, can draw a few conclusions. NoOps is our future, and automation is essential for speed and agility, although robust monitoring and automation require investments of skill, time, and money. People with the right skill sets and passion are a must, and it’s important to have plenty of good talent in-house. It’s essential to partner with business leaders and application owners in the organization to minimize friction and resistance to what is a major business transition. And we’ve found AWS to be a valuable service provider. AWS has helped us move from a business grounded in legacy IT to an organization that is far more agile and cost efficient, in a transformation that is adding value to our business and to our people.

— Ben Cabanas, Chief Technology Officer, GE Transportation