AWS Big Data Blog

Automate building an integrated analytics solution with AWS Analytics Automation Toolkit

This blog post was last reviewed and updated July 2022, to be consistent with the new menu interface launched by the AWS Analytics Automation Toolkit.

Amazon Redshift is a fast, fully managed, widely popular cloud data warehouse that powers the modern data architecture enabling fast and deep insights or machine learning (ML) predictions using SQL across your data warehouse, data lake, and operational databases. A key differentiating factor of Amazon Redshift is its native integration with other AWS services, which makes it easy to build complete, comprehensive, and enterprise-level analytics applications.

As analytics solutions have moved away from the one-size-fits-all model to choosing the right tool for the right function, architectures have become more optimized and performant while simultaneously becoming more complex. You can use Amazon Redshift for a variety of use cases, along with other AWS services for ingesting, transforming, and visualizing the data.

Manually deploying these services is time-consuming. It also runs the risk of making human errors and deviating from best practices.

In this post, we discuss how to automate the process of building an integrated analytics solution by using a simple menu interface.

Solution overview

The framework described in this post uses Infrastructure as Code (IaC) to solve the challenges with manual deployments, by using AWS Cloud Development Kit (CDK) to automate provisioning AWS analytics services. You can indicate the services, resources and its respective configuration that you want to incorporate in your infrastructure by utilizing a multi-select bash menu.

The script then instantly auto-provisions all the required infrastructure components in a dynamic manner, while simultaneously integrating them according to AWS recommended best practices.

In this post, we go into further detail on the specific steps to build this solution.

Prerequisites

Prior to deploying the AWS CDK stack, complete the following prerequisite steps:

- Verify that you’re deploying this solution in a Region that supports AWS CloudShell. For more information, see AWS CloudShell endpoints and quotas.

- Have an AWS Identity and Access Management (IAM) user with the following permissions:

AWSCloudShellFullAccessIAM Full AccessAWSCloudFormationFullAccessAmazonSSMFullAccessAmazonRedshiftFullAccessAmazonS3ReadOnlyAccessSecretsManagerReadWriteAmazonEC2FullAccess- Create a custom AWS Database Migration Service (AWS DMS) policy called

AmazonDMSRoleCustomwith the following permissions:

- Create a key pair that you have access to. This is only required if deploying the AWS Schema Conversion Tool (AWS SCT) or Apache Jmeter ( an open-source load testing application).

- Optionally, if using resources outside your AWS account, open firewalls and security groups to allow traffic from AWS. This is only applicable for AWS DMS and AWS SCT deployments.

Launch the toolkit

This project uses CloudShell, a browser-based shell service, to programatically initiate the deployment through the AWS Management Console. Prior to opening CloudShell, you need to configure an IAM user, as described in the prerequisites.

- On the CloudShell console, clone the Git repository:

- Run the deployment script. This will install any dependencies and requirements. Once the installation is complete, a shell menu will prompt.

- Follow the prompts and select desired resources using Y/y/N/n or corresponding numbers. Press Enter to confirm your selection.

- At the end of the menu you may go back and reconfigure any desired inputs. Else, enter

6and press Enter to launch the resources.

- Depending on the resources being deployed, you may have to provide additional information, such as the password for an existing database or Amazon Redshift cluster.

Monitor the deployment

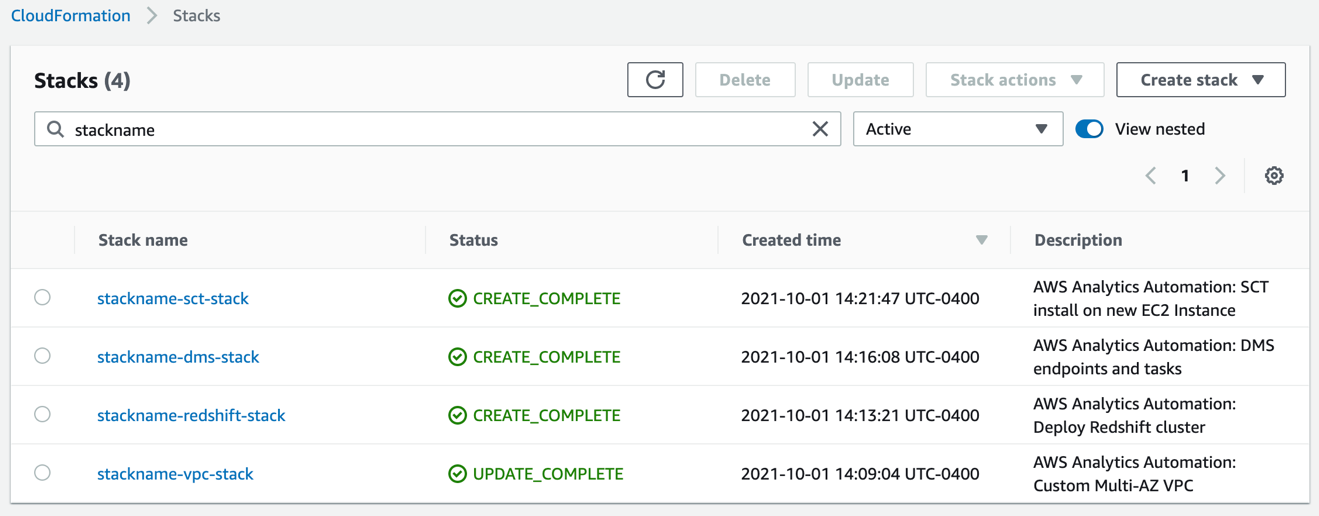

After you run the script, you can monitor the deployment of resource stacks through the CloudShell terminal, or through the AWS CloudFormation console, as shown in the following screenshot.

Each stack corresponds to the creation of a resource from the config file. You can see the newly created VPC, Amazon Redshift cluster, EC2 instance running AWS SCT, and AWS DMS instance.

Troubleshooting

If the stack launch stalls at any point, visit our GitHub repository for troubleshooting instructions.

Clean up

Delete the created resources to avoid unexpected charges:

- Open CloudShell.

- Run the destroy script.

- Enter the stackname when prompted, and click Yes when prompted, to destroy the stack.

Conclusion

In this post, we discussed how you can use the AWS Analytics Infrastructure Automation utility to quickly get started with Amazon Redshift and other AWS services. It helps you provision your entire solution on AWS instantly without any spending any time on challenges around integrating the services or scaling your solution.

About the Authors

Manash Deb is a Software Development Engineer in the AWS Directory Service team. He has worked on building end-to-end applications in different database and technologies for over 15 years. He loves to learn new technologies and solving, automating, and simplifying customer problems on AWS.

Manash Deb is a Software Development Engineer in the AWS Directory Service team. He has worked on building end-to-end applications in different database and technologies for over 15 years. He loves to learn new technologies and solving, automating, and simplifying customer problems on AWS.

Samir Kakli is an Analytics Specialist Solutions Architect at AWS. He has worked with building and tuning databases and data warehouse solutions for over 20 years. His focus is architecting end-to-end analytics solutions designed to meet the specific needs for each customer.

Samir Kakli is an Analytics Specialist Solutions Architect at AWS. He has worked with building and tuning databases and data warehouse solutions for over 20 years. His focus is architecting end-to-end analytics solutions designed to meet the specific needs for each customer.

Julia Beck is a Specialist Solutions Architect at AWS. She supports customers building analytics proof of concept workloads. Outside of work, she enjoys traveling, cooking, and puzzles.

Julia Beck is a Specialist Solutions Architect at AWS. She supports customers building analytics proof of concept workloads. Outside of work, she enjoys traveling, cooking, and puzzles.

Shawn Patel is a Solutions Architect at AWS. His primary interest is working on internal toolkits that simplify deploying analytics stacks on AWS. He loves enabling customers to make simplified and smarter decisions when building on the AWS Platform.

Shawn Patel is a Solutions Architect at AWS. His primary interest is working on internal toolkits that simplify deploying analytics stacks on AWS. He loves enabling customers to make simplified and smarter decisions when building on the AWS Platform.