AWS Compute Blog

New for AWS Lambda – Predictable start-up times with Provisioned Concurrency

Since the launch of AWS Lambda five years ago, thousands of customers such as iRobot, Fender, and Expedia have experienced the benefits of the serverless operational model. Because they spend less time managing scaling and availability, builders are increasingly using serverless for more sophisticated workloads with more exacting latency requirements.

As customers have progressed to building mission-critical serverless applications at scale, we have continued to invest in performance at every level of the service. From the updates in Lambda’s execution environment to the major improvements in VPC networking rolled out this year, performance remains a top priority. In addition to changes that broadly impact Lambda customers, we have also been working on a new feature specifically for the most latency-sensitive workloads, to provide even more granular control over application performance.

Currently, when the Lambda service runs “on demand,” it decides when to launch new execution environments for your function in response to incoming requests. As a result, the latency profile of your function will vary as it scales up, which may not meet the requirements of some application workloads. Today, we are providing builders with a significant new feature called Provisioned Concurrency, which allows more precise control over start-up latency when Lambda functions are invoked.

When enabled, Provisioned Concurrency is designed to keep your functions initialized and hyper-ready to respond in double-digit milliseconds at the scale you need. This new feature provides a reliable way to keep functions ready to respond to requests, giving you more precise control over the performance characteristics of your serverless applications. Builders can now choose the concurrency level for each Lambda function version or alias, including when and for how long these levels are in effect.

This powerful feature is controlled via the AWS Management Console, AWS CLI, AWS Lambda API, or AWS CloudFormation, and it’s simple to implement. This blog post introduces how to use Provisioned Concurrency, and how you can gain the benefits for your most latency-sensitive serverless workloads.

Using Provisioned Concurrency

This feature is ideal where you need predictable function start times: for example, interactive workloads such as mobile and web backends, synchronously invoked APIs, and latency-sensitive processes. For applications that experience heavy loads on a predictable schedule, you can also increase the amount of concurrency during times of high demand and decrease it when demand falls.

The simplest way to benefit from Provisioned Concurrency is by using AWS Auto Scaling. You can use Application Auto Scaling to configure schedules for concurrency, or have Auto Scaling automatically add or remove concurrency in real time as demand changes.
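As a rough sketch of what such a configuration might look like, the helpers below build request parameters for the Application Auto Scaling operations RegisterScalableTarget, PutScalingPolicy, and PutScheduledAction. The function name `my-function`, the alias `Test`, the policy and action names, the 0.7 utilization target, and the cron expression are all illustrative assumptions, not values from this post. The code only constructs plain objects; sending them with an SDK client is left out.

```javascript
// Resource ID format that Application Auto Scaling uses for a Lambda alias.
function resourceId(functionName, alias) {
  return `function:${functionName}:${alias}`
}

// Fields shared by every request that refers to the same scalable target.
function targetFields(functionName, alias) {
  return {
    ServiceNamespace: "lambda",
    ResourceId: resourceId(functionName, alias),
    ScalableDimension: "lambda:function:ProvisionedConcurrency"
  }
}

// Parameters for RegisterScalableTarget: the range concurrency may move within.
function scalableTarget(functionName, alias, min, max) {
  return { ...targetFields(functionName, alias), MinCapacity: min, MaxCapacity: max }
}

// Parameters for PutScalingPolicy: track a utilization target as demand changes.
function targetTrackingPolicy(functionName, alias, targetUtilization) {
  return {
    ...targetFields(functionName, alias),
    PolicyName: `${functionName}-${alias}-utilization`, // hypothetical name
    PolicyType: "TargetTrackingScaling",
    TargetTrackingScalingPolicyConfiguration: {
      TargetValue: targetUtilization,
      PredefinedMetricSpecification: {
        PredefinedMetricType: "LambdaProvisionedConcurrencyUtilization"
      }
    }
  }
}

// Parameters for PutScheduledAction: pin concurrency to a level on a schedule.
function scheduledAction(functionName, alias, name, schedule, capacity) {
  return {
    ...targetFields(functionName, alias),
    ScheduledActionName: name,
    Schedule: schedule,
    ScalableTargetAction: { MinCapacity: capacity, MaxCapacity: capacity }
  }
}

// Illustrative values only.
const target = scalableTarget("my-function", "Test", 1, 100)
const policy = targetTrackingPolicy("my-function", "Test", 0.7)
const weekdayMorning = scheduledAction(
  "my-function", "Test", "weekday-morning", "cron(0 8 ? * MON-FRI *)", 100
)
```

The scheduled action suits load that follows a known timetable, while the target tracking policy suits load that varies less predictably. Both can be attached to the same scalable target.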

Turning Provisioned Concurrency on or off does not require any changes to your function’s code, Lambda Layers, or runtime configurations. There is no change to the Lambda invocation and execution model. It’s simply a matter of configuring your function parameters via the AWS Management Console or CLI, and the Lambda service manages the rest.

Provisioned Concurrency adds a pricing dimension to the existing dimensions of Duration and Requests. You only pay for the amount of concurrency that you configure and for the period of time that you configure it. When Provisioned Concurrency is enabled for your function and you execute it, you also pay for Requests and execution Duration.
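To make the three dimensions concrete, here is a minimal sketch of how a bill could be composed from them. It is a simplified model, not the actual pricing formula: the rates are supplied by the caller, the field names are hypothetical, and the numbers in the usage lines are placeholders rather than real prices. Check the Lambda pricing page for the rates that apply to your configuration.

```javascript
// Simplified cost model with one term per pricing dimension.
// `rates` must be supplied by the caller; nothing here encodes real prices.
function estimateCost(usage, rates) {
  // Charged for the configured concurrency for as long as it is configured,
  // whether or not the function is invoked.
  const provisioned =
    usage.concurrency * usage.hoursEnabled * 3600 * rates.perConcurrencySecond
  // Charged only when the function is actually executed.
  const requestCharge = usage.requests * rates.perRequest
  const durationCharge = usage.durationSeconds * rates.perDurationSecond
  return {
    provisioned,
    requestCharge,
    durationCharge,
    total: provisioned + requestCharge + durationCharge
  }
}

// Placeholder usage and rates, chosen only to make the arithmetic easy to follow.
const estimate = estimateCost(
  { concurrency: 100, hoursEnabled: 1, requests: 1000000, durationSeconds: 50000 },
  { perConcurrencySecond: 0.000004, perRequest: 0.0000002, perDurationSecond: 0.00001 }
)
```

The point of the sketch is that the first term depends only on what you configure, while the other two depend on traffic.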

How it works

Typically, the overhead in starting a Lambda invocation – commonly called a cold-start – consists of two components. The first is the time taken to set up the execution environment for your function’s code, which is entirely controlled by AWS.

The second is the code initialization duration, which is managed by the developer. This is impacted by code outside of the main Lambda handler function, and is often responsible for tasks like setting up database connections, initializing objects, downloading reference data, or loading heavy frameworks like Spring Boot. In our analysis of production usage, this causes by far the largest share of overall cold start latency. It also cannot be automatically optimized by AWS in a typical on-demand Lambda execution.
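The distinction between initialization code and handler code can be sketched as follows. `createClient` is a hypothetical stand-in for expensive setup such as opening a database connection, and the counter exists only to show how often it runs.

```javascript
// Counts how many times the expensive setup runs (for illustration only).
let initCount = 0

// Hypothetical stand-in for costly setup work, such as connecting to a database.
function createClient() {
  initCount += 1
  return {
    query: async (id) => ({ id, name: `item-${id}` })
  }
}

// Code outside the handler: runs once, when the execution environment
// initializes, and contributes to cold-start latency.
const client = createClient()

// Code inside the handler: runs on every invocation and reuses the client.
const handler = async (event) => {
  const item = await client.query(event.id)
  return {
    statusCode: 200,
    body: JSON.stringify(item)
  }
}

exports.handler = handler
```

However many times `handler` is called within one execution environment, `createClient` runs only once. That one-time cost is what a cold start pays.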

Provisioned Concurrency targets both causes of cold-start latency. First, the execution environment set-up happens during the provisioning process, rather than during execution, which eliminates this issue. Second, by keeping functions initialized, which includes initialization actions and code dependencies, there is no unnecessary initialization in subsequent invocations. Once initialized, functions are hyper-ready to respond in double-digit milliseconds of being invoked. This is the key to understanding how this feature helps you obtain predictable start-up latency for both causes of cold-starts.

While all Provisioned Concurrency functions start more quickly than the existing on-demand Lambda execution style, this is particularly beneficial for certain function profiles. Runtimes like C# and Java have much slower initialization times than Node.js or Python, but significantly faster execution times once initialized. With Provisioned Concurrency turned on, users of these runtimes benefit from both the consistent low latency of the function’s start-up, and the runtime’s performance during execution.

When you configure concurrency on your functions, the Lambda service initializes execution environments for running your code. If you exceed this level, you can choose to deny further invocations, or allow any additional function invocations to use the on-demand model. You can do this by setting the per-function concurrency limit. In the latter case, while these invocations exhibit a more typical Lambda start-up performance profile, you are not throttled or limited from running invocations at high levels of throughput.
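The two choices can be illustrated with a small model. This is a deliberately simplified sketch, not how the Lambda service is implemented: it looks at a single moment in time, ignores account-level limits, and assumes the per-function limit is at least as large as the provisioned amount. The function and parameter names are hypothetical.

```javascript
// Splits a number of simultaneous requests into those served by provisioned
// environments, those served on demand, and those denied.
// `functionLimit` models the per-function concurrency limit; the default of
// Infinity means no per-function limit is set in this model.
function routeInvocations(concurrentRequests, provisioned, functionLimit = Infinity) {
  const accepted = Math.min(concurrentRequests, functionLimit)
  const onProvisioned = Math.min(accepted, provisioned)
  return {
    provisioned: onProvisioned,
    onDemand: accepted - onProvisioned,
    throttled: concurrentRequests - accepted
  }
}

// No per-function limit: the extra 50 requests fall back to on-demand.
const spillover = routeInvocations(150, 100)

// Limit equal to the provisioned amount: the extra 50 requests are denied.
const capped = routeInvocations(150, 100, 100)
```

Setting the limit equal to the provisioned amount gives the "deny" behavior. Leaving headroom above it gives the on-demand fallback.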

Using CloudWatch metrics or the Monitoring tab for your function in the Lambda console, you can see metrics for the number of Provisioned Concurrency invocations, compared with the total. This can help identify when total load is above the amount of concurrency, and you can make changes accordingly. Alternatively, you can use the Auto Scaling CLI commands to have this managed by the service, so that the amount of concurrency tracks more closely with actual usage.

Turning on Provisioned Concurrency

Configuring Provisioned Concurrency is straightforward. In this post, I demonstrate how to do this in the AWS Management Console, but you can also use the AWS CLI, AWS SDK, and AWS CloudFormation to modify these settings.

1. Go to the AWS Lambda console and then choose your existing Lambda function.

2. Settings must be applied to a published version or an alias. Go to the Actions drop-down and choose Publish new version.

3. Enter an optional description for your version and choose Publish.

4. Go to the Actions drop-down and choose Create alias.

5. Enter a name for the alias (for example, “Test”), select 1 from the Version drop-down, and choose Create.

6. Locate the Concurrency card and choose Add.

7. Select the Alias radio button for Qualifier Type, choose Test in the Alias drop-down, and enter 100 for the Provisioned concurrency. Choose Save.

8. The Provisioned Concurrency card in the Lambda console will then show the status In progress.

After a few minutes, the initialization process is complete, and your function’s published alias can now be used with the Provisioned Concurrency feature.

Since the feature is applied explicitly to a function alias, ensure that your invocation method is calling this alias, and not the $LATEST version. Provisioned Concurrency cannot be applied to the $LATEST version.
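For readers who prefer the API over the console, the sketch below builds the request parameters for the Lambda PutProvisionedConcurrencyConfig and Invoke operations. The function name `my-function` is a placeholder, the helper names are hypothetical, and the alias `Test` and value 100 mirror the console steps above. The code only constructs plain objects; passing them to an SDK client is left out.

```javascript
// Builds parameters for PutProvisionedConcurrencyConfig.
// Rejects $LATEST, since the setting applies to a published version or alias.
function provisionedConcurrencyRequest(functionName, qualifier, executions) {
  if (qualifier === "$LATEST") {
    throw new Error("Provisioned Concurrency requires a published version or alias")
  }
  if (!Number.isInteger(executions) || executions < 1) {
    throw new Error("executions must be a positive integer")
  }
  return {
    FunctionName: functionName,
    Qualifier: qualifier,
    ProvisionedConcurrentExecutions: executions
  }
}

// Builds parameters for Invoke so that the call reaches the alias
// rather than the $LATEST version.
function invokeRequest(functionName, alias, payload) {
  return {
    FunctionName: functionName,
    Qualifier: alias,
    Payload: JSON.stringify(payload)
  }
}

// "my-function" is a placeholder; "Test" and 100 match the console steps.
const configRequest = provisionedConcurrencyRequest("my-function", "Test", 100)
const callRequest = invokeRequest("my-function", "Test", { ping: true })
```

Omitting `Qualifier` from the invoke request would target `$LATEST`, which is the mistake the paragraph above warns about.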

Comparing results for on-demand and Provisioned Concurrency

In this example, I use a simple Node.js Lambda function that simulates 5 seconds of activity before exiting. The handler contains the following code:

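// Handler: waits for the simulated work to finish, then returns a 200 response.
exports.handler = async (event) => {
    await doWork()
    return {
        "statusCode": 200
    }
}

// Simulates 5 seconds of activity by resolving a promise after a timer fires.
function doWork() {
    return new Promise(resolve => {
        setTimeout(() => {
            resolve()
        }, 5000)
    })
}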

I include multiple NPM packages to increase the function’s zip file to around 10 MB, to approximate a reasonable package size for a typical production function. I also enable AWS X-Ray tracing on the Lambda function to collect and compare detailed performance statistics.

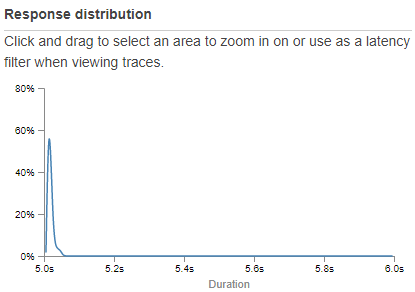

I add an API Gateway trigger to call the function for testing. This endpoint invokes the $LATEST version and has no Provisioned Concurrency settings applied, so it performs as a typical on-demand Lambda function. For testing, I use the Artillery NPM package to simulate user load. After the test is completed, the X-Ray report for this on-demand Lambda function shows an expected response distribution:

While most of the requests are completed near the expected 5-second execution time, there is a long tail where p95 and p99 performance times are slower. This is because the function is scaling up, and new concurrent invocations are slower to start due to execution environment initialization. The detailed distribution chart in X-Ray shows this more clearly, where these cold-starts are notable on the far right of the graph:

Finding the slowest-performing execution in this test, AWS X-Ray shows that the cold-start latency added approximately 650 ms to the overall performance:

This is the slowest execution in the test, but the initialization process could take significantly longer in many production scenarios. This test shows how on-demand Lambda performance exhibits higher latencies at the p95 and p99 intervals when functions scale up.
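For readers unfamiliar with the notation, p95 and p99 are the response times that 95% and 99% of requests complete within. The sketch below computes them with the nearest-rank method, which is one of several common definitions and not necessarily the one X-Ray uses. The sample durations are made up for illustration and are not the measurements from this test.

```javascript
// Nearest-rank percentile: the smallest sample such that at least p percent
// of all samples are less than or equal to it.
function percentile(samples, p) {
  if (samples.length === 0) {
    throw new Error("at least one sample is required")
  }
  const sorted = [...samples].sort((a, b) => a - b)
  const rank = Math.ceil((p * sorted.length) / 100)
  return sorted[Math.max(rank, 1) - 1]
}

// Made-up durations in milliseconds: nine warm invocations and one cold start.
const durations = [5010, 5012, 5008, 5650, 5011, 5009, 5013, 5007, 5014, 5015]

const p50 = percentile(durations, 50)
const p95 = percentile(durations, 95)
const p99 = percentile(durations, 99)
```

With these samples the median stays close to 5 seconds, while p95 and p99 both land on the single slow invocation. A few cold starts can leave the typical response time unchanged and still dominate the tail.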

For the comparison, I configure Provisioned Concurrency for this function using the same steps in the previous section. I also update the API Gateway integration to target the published version of the function, instead of the $LATEST version. When I run the same load test using Artillery, the performance is dramatically different:

There is no performance long-tail, and zooming into the detailed chart in X-Ray shows that there were no extended latencies caused by execution environment initialization:

Finding the fastest and slowest executions in this test, the fastest completed in 5007 ms, while the slowest finished in 5066 ms, representing a spread of 7-66 ms overhead in total execution time.

If you compare the results of each load test using the standard on-demand invocation model and Provisioned Concurrency, the combined response time distribution shows the impact:

The brown line is the on-demand test, showing the long-tail latency caused by both execution environment initialization and code initialization inherent in the scaling up of Lambda functions. The blue line is the Provisioned Concurrency test where the long-tail is eliminated completely, showing much more consistent function latency using this new feature.

Conclusion

AWS Lambda continues to make significant performance improvements for all Lambda users, and remains committed to improving execution times for the existing on-demand scaling model. This new feature provides an option for builders with the most demanding, latency-sensitive workloads to execute their functions with predictable start-up times at any scale.

In this post, I reviewed how to set this feature on an alias of a Lambda function. I compared the total execution times between on-demand and Provisioned Concurrency for the same function over load. The results show that the start-up latency variability is eliminated when Provisioned Concurrency is enabled.

You can also use Provisioned Concurrency today with AWS Partner tools, including configuring Provisioned Concurrency settings with the Serverless Framework, or viewing metrics with Datadog, Epsagon, Lumigo, SignalFx, SumoLogic, and Thundra.

Provisioned Concurrency is available today in the following Regions: US East (Ohio), US East (N. Virginia), US West (N. California), US West (Oregon), Asia Pacific (Hong Kong), Asia Pacific (Mumbai), Asia Pacific (Seoul), Asia Pacific (Singapore), Asia Pacific (Sydney), Asia Pacific (Tokyo), Canada (Central), Europe (Frankfurt), Europe (Ireland), Europe (London), Europe (Paris), Europe (Stockholm), Middle East (Bahrain), and South America (Sao Paulo).

To learn more about Provisioned Concurrency, visit our documentation, or read the launch blog post.