Containers

Optimize WebSocket application scaling with API Gateway on Amazon EKS

Introduction

WebSocket is a common communication protocol that web applications use for real-time, bidirectional data exchange between client and server. However, when the server must maintain a direct connection with each client, long-running clients can limit the server’s ability to scale down. Scaling down is most needed when nodes are underutilized during periods of low usage.

In this post, we demonstrate how to redesign a web application to achieve auto scaling even for long-running clients, with minimal changes to the original application.

Background

In many cases, existing on-premises applications are containerized and deployed to Amazon Elastic Kubernetes Service (Amazon EKS) or another Kubernetes environment, or new applications are written natively to handle WebSocket connections. These applications may be written in various languages with supporting libraries and frameworks (e.g., Spring Boot for Java, SignalR or the WebSocket APIs for .NET, and the websockets package for Python, available via pip).

When an application that includes a WebSocket server is deployed, it accepts connections from clients. These connections can remain open for an extended period of time, sometimes for hours, depending on the use case. During periods of heavy traffic, the Kubernetes Horizontal Pod Autoscaler scales up pods to handle the demand. However, scaling down can be difficult when pods still hold persistent connections from long-running clients that have not yet disconnected.

Furthermore, every command that arrives over a client’s WebSocket connection may require additional calls to various microservices before a response is sent. This limits the use of an asynchronous calling model or event-driven architecture, because the same pod that holds the WebSocket connection must respond to the client, which in turn limits the scalability and design of other application components.

The application architecture can be simplified by offloading WebSocket connection maintenance from the Amazon EKS pod to another service, such as Amazon API Gateway. This approach allows for easier scaling up and down of pods without worrying about lingering connections, and enables the use of async calling models and event-driven architecture for other components.

Additionally, Amazon API Gateway provides other benefits, such as advanced traffic management (e.g., routing, throttling, and caching). These features can further optimize the application’s performance while providing greater flexibility and control over traffic flow.

Scaling Amazon EKS

With Kubernetes Event-driven Autoscaling (KEDA), we can use more granular metrics to scale our Amazon EKS backend out as traffic to the WebSocket API increases and back in when it subsides. For those who are not familiar with KEDA, it is an open-source project that enables automatic scaling of Kubernetes workloads based on various event sources, not just CPU or memory metrics. With KEDA, you can drive the scaling of any container on Amazon EKS based on the number of events that need to be processed. CPU and memory, the metrics the Horizontal Pod Autoscaler (HPA) typically scales on, may not be the best signals because they may not reflect the actual load on your application. KEDA lets you scale your application more precisely and efficiently while responding quickly to changes in traffic patterns.

The number of WebSocket connections open at the API Gateway at any given time can be used to scale up pods to handle more client requests, and to scale them down when the number of open connections decreases. In addition, we can add a second metric: the WebSocket message rate, which measures how quickly WebSocket messages are sent. If the message rate exceeds a certain threshold, we can add pods to handle the increased traffic.
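To make the two-metric scaling concrete, here is a minimal Python sketch of the replica arithmetic, assuming KEDA’s default `AverageValue` target type. KEDA feeds its metrics to an HPA, which computes `ceil(metric total / target per pod)` for each metric, clamps the result to the minimum and maximum replica counts, and acts on the largest recommendation. The function names, thresholds, and readings below are hypothetical. In a real cluster you would declare the targets in a KEDA `ScaledObject` (for example, with its AWS CloudWatch scaler) rather than compute them yourself.

```python
import math
from typing import Iterable


def desired_replicas(metric_total: float, target_per_pod: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Replica recommendation for one metric with an AverageValue target.

    Follows the HPA rule ceil(total / target_per_pod), clamped to the
    configured bounds. The HPA's default 10% tolerance is ignored here.
    """
    if target_per_pod <= 0:
        raise ValueError("target_per_pod must be positive")
    raw = math.ceil(metric_total / target_per_pod)
    return max(min_replicas, min(max_replicas, raw))


def combined_replicas(recommendations: Iterable[int]) -> int:
    """With several metrics, the HPA acts on the largest recommendation."""
    return max(recommendations)


# Hypothetical readings: 450 open connections with a target of 100 per pod,
# and 900 messages per second with a target of 150 per pod.
by_connections = desired_replicas(450, 100, min_replicas=1, max_replicas=20)
by_message_rate = desired_replicas(900, 150, min_replicas=1, max_replicas=20)
print(combined_replicas([by_connections, by_message_rate]))  # prints 6
```

Taking the maximum means that a burst on either signal is enough to add pods, while scaling in happens only when both signals have dropped.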

There is one more aspect of scaling that we need to address. KEDA scales pods to handle additional load as the Amazon API Gateway sends more requests to the Amazon EKS backend. As pods are added, they consume more resources on the nodes until the nodes have no capacity left for additional pods. At that point, new nodes need to be added to the cluster. Kubernetes Cluster Autoscaler is the most commonly used node scaling mechanism for Kubernetes deployments; however, Cluster Autoscaler isn’t as flexible as Karpenter because it requires every node in an autoscaling group to have the same resource profile (i.e., vCPU and memory) and taints. Karpenter is an open-source project that lowers compute costs and minimizes operational overhead by right-sizing your compute for your workload. Karpenter can choose from the full range of Amazon Elastic Compute Cloud (Amazon EC2) instance types available at AWS. With Karpenter, we can efficiently scale nodes up and down as needed. You can learn more about Karpenter with this post.

Solution overview

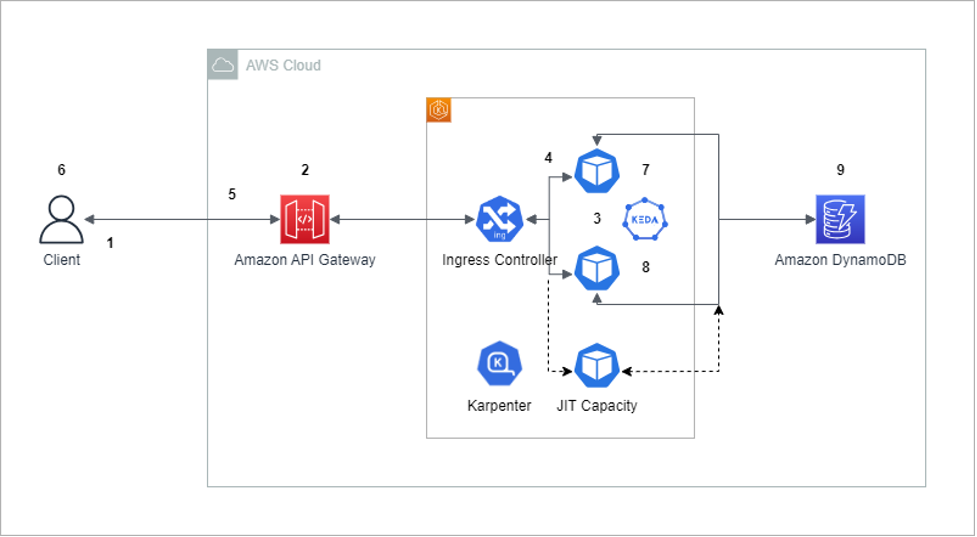

To overcome the scaling limitations caused by open WebSocket connections, one solution is to use Amazon API Gateway to establish WebSocket connections with clients and to communicate with the backend Amazon EKS services through a REST API instead of WebSocket. The application stores its session IDs in Amazon DynamoDB.

To implement this solution, we create an Amazon API Gateway WebSocket API, which manages incoming connections from clients. Clients can connect to the WebSocket API through either a custom domain name or the default API Gateway endpoint. API Gateway manages the lifecycle of the WebSocket connections and handles incoming messages from clients.

When the client sends a message, API Gateway forwards it to the application running on Amazon EKS as a REST API call. The Amazon EKS services process the message and send a response back to API Gateway, which forwards it to the client.
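The backend pod itself can be an ordinary HTTP service. The following minimal sketch uses only the Python standard library and assumes a two-way route (a route response configured in API Gateway), so that the integration’s HTTP response is returned to the client. It also assumes that the API Gateway integration maps `context.connectionId` into a request header. The header name `connectionId`, the port, and the echo behavior are illustrative choices, not part of the original sample.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical header name: assumes the API Gateway integration maps
# context.connectionId into this request header.
CONNECTION_ID_HEADER = "connectionId"


def handle_message(connection_id: str, body: bytes) -> dict:
    """Business logic for one forwarded WebSocket message (here, a simple echo)."""
    return {"connectionId": connection_id, "message": body.decode("utf-8")}


class MessageHandler(BaseHTTPRequestHandler):
    """Plain REST endpoint: no WebSocket state lives in the pod."""

    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        body = self.rfile.read(length)
        connection_id = self.headers.get(CONNECTION_ID_HEADER, "unknown")
        payload = json.dumps(handle_message(connection_id, body)).encode("utf-8")
        # With a route response configured, API Gateway relays this to the client.
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def run(port: int = 8080) -> None:
    """Start the server. Port 8080 is an assumption; match it to your Service."""
    HTTPServer(("0.0.0.0", port), MessageHandler).serve_forever()
```

Call `run()` from the container’s entrypoint. Because the handler keeps no per-connection state, any pod behind the load balancer can serve any message.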

With this approach, API Gateway manages the WebSocket connections, so the Amazon EKS backend services can scale up or down as needed without affecting clients. Because API Gateway communicates with the Amazon EKS backend services through REST API calls instead of WebSockets, no open connections prevent pods from being terminated. Note that the following walkthrough doesn’t install Karpenter, but you can add it by following the official documentation.
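Even without open WebSocket connections, a pod can be in the middle of a request when it is scaled in. Kubernetes first sends the container SIGTERM and then, after the termination grace period (30 seconds by default), SIGKILL. The following is a minimal standard-library sketch that finishes the current request and then exits on SIGTERM. It assumes a single-threaded `http.server.HTTPServer` backend, and the class and function names are hypothetical.

```python
import signal
import threading
from http.server import HTTPServer


class GracefulShutdown:
    """Records SIGTERM so the serving loop can stop after the current request."""

    def __init__(self) -> None:
        self.stopping = threading.Event()

    def install(self) -> None:
        # Python only allows installing signal handlers from the main thread.
        signal.signal(signal.SIGTERM, self.handle)

    def handle(self, signum, frame) -> None:
        self.stopping.set()


def serve_until_sigterm(server: HTTPServer, shutdown: GracefulShutdown) -> None:
    """Serve one request at a time until SIGTERM arrives, then close the socket."""
    server.timeout = 1.0  # handle_request() returns after 1 s with no traffic
    while not shutdown.stopping.is_set():
        server.handle_request()  # a request already in progress runs to completion
    server.server_close()
```

In the container entrypoint, create a `GracefulShutdown` and call `install()` from the main thread. Then pass it to `serve_until_sigterm()` together with an `HTTPServer` built around your request handler class. A multithreaded server would also need to wait for its worker threads before exiting.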

The additional hop through Amazon API Gateway and the Amazon DynamoDB lookups add some latency, which should be minimal in most cases. If your application is latency sensitive, run a performance test to confirm that you can meet the response times required by your Service Level Agreement (SLA).

Prerequisites

You need an AWS account with the following resources created before you perform the implementation steps.

- An Amazon EKS cluster

- An Amazon Elastic Container Registry (Amazon ECR) repository or equivalent Docker registry to host the Docker image. In this post, we use Amazon ECR to host the application image.

- The AWS Load Balancer Controller installed on the Amazon EKS cluster.

- A test machine with the wscat client installed.

- AWS Identity and Access Management (AWS IAM) roles for service accounts configured for the Kubernetes deployment, with an appropriate policy that allows the pods to make API calls against Amazon API Gateway. You can follow this user guide to set up IAM roles for service accounts (IRSA) and this policy to enable your pods to invoke the API call.
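As an example of a least-privilege policy, the following sketch builds an IAM policy document in Python. It assumes that the pods send messages back to clients through the API Gateway Management API (`PostToConnection`). That call requires the `execute-api:ManageConnections` action on the `@connections` resource of one API stage. The region, account ID, API ID, and stage are placeholders.

```python
import json


def manage_connections_policy(region: str, account_id: str,
                              api_id: str, stage: str) -> dict:
    """Allow posting to WebSocket connections on a single API stage only."""
    resource = (f"arn:aws:execute-api:{region}:{account_id}:"
                f"{api_id}/{stage}/POST/@connections/*")
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": "execute-api:ManageConnections",
            "Resource": resource,
        }],
    }


# Placeholder values: substitute your own region, account, API ID, and stage.
print(json.dumps(
    manage_connections_policy("us-east-1", "111122223333", "abc123", "prod"),
    indent=2))
```

Scoping the resource to one stage and to `POST` keeps the role from touching other APIs or from reading or deleting connections.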

Walkthrough

- The client initiates a WebSocket connection to the Amazon API Gateway.

- Amazon API Gateway receives the WebSocket connection request and creates a WebSocket session for the client. When the client sends a message, Amazon API Gateway routes it to the backend REST API running on Amazon EKS.

- The message is sent to a pod in the cluster through the ingress controller; the AWS Load Balancer Controller can serve this role. The application running on the pod checks whether the session ID exists in the Amazon DynamoDB table and, if it doesn’t, creates a new item in the table.

- The application running on the pod processes the request and returns a response to Amazon API Gateway.

- Amazon API Gateway receives the response and forwards it to the client through the WebSocket connection.

- The client receives the response and can continue to send and receive messages through the WebSocket connection.

- When the number of WebSocket connections or the message rate increases, KEDA scales out the number of Kubernetes replicas to handle the additional load. See the Scaling Amazon EKS section for further scaling details.

- Conversely, when the number of WebSocket connections or the message rate drops, KEDA reduces the number of replicas. Pods can be removed without disrupting clients because they hold no open WebSocket connections. When a pod is scaled in, Kubernetes sends it a SIGTERM signal, which gives the application a chance to finish in-flight requests and shut down gracefully.

- During scale-in, if clients send more messages over their open WebSocket connections, the pod that receives the request retrieves the session ID from the Amazon DynamoDB table. Setting a Time to Live (TTL) attribute on the Amazon DynamoDB table lets DynamoDB delete expired sessions automatically, so you don’t need to garbage collect them yourself.
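The session lookup in the walkthrough follows a get-or-create pattern. The sketch below models it with a hypothetical in-memory table so that it runs without AWS credentials. With boto3, you would swap in `Table.get_item(Key=...)` and `Table.put_item(Item=...)`. The attribute names `session_id` and `expires_at` and the one-hour lifetime are assumptions. DynamoDB expects the TTL attribute to be a Number holding a Unix epoch time in seconds. It deletes expired items in the background rather than immediately, so the code also treats expired items as absent.

```python
import time
from typing import Optional

SESSION_TTL_SECONDS = 3600  # assumption: sessions expire one hour after creation


class InMemorySessionTable:
    """Hypothetical stand-in for a DynamoDB table keyed on "session_id"."""

    def __init__(self) -> None:
        self.items: dict = {}

    def get(self, session_id: str) -> Optional[dict]:
        return self.items.get(session_id)

    def put(self, item: dict) -> None:
        self.items[item["session_id"]] = item


def get_or_create_session(table: InMemorySessionTable, session_id: str,
                          now: Optional[int] = None) -> dict:
    """Return the live session for session_id, creating one if missing or expired."""
    now = int(time.time()) if now is None else now
    item = table.get(session_id)
    # TTL deletion is not instantaneous, so an expired item may still be readable.
    if item is None or item["expires_at"] <= now:
        item = {
            "session_id": session_id,
            "created_at": now,
            "expires_at": now + SESSION_TTL_SECONDS,  # TTL attribute, epoch seconds
        }
        table.put(item)
    return item
```

Because any pod can run this lookup, a message that lands on a different pod after scale-in still finds the same session.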

Implementation steps

Refer to the Prerequisites section before performing the following steps.

1. Download the project files:

2. Create the container image for the sample application. Open your Amazon ECR repository and choose View push commands, which shows the instructions for building the Docker image and pushing it to Amazon ECR.

3. Create and apply the deployment file for the service:

4. Create a NodePort service:

5. Create an Ingress, which provisions an Application Load Balancer (ALB):

6. Create the WebSocket API in Amazon API Gateway by running the following command. Replace the ALB address with your own, as returned by the command in step 5:

7. Edit the update-deployment-Service.yml file: replace <IRSA ServiceAccount> with the name of the service account you created in the prerequisites, and update the container image reference.

Run the following command to apply the update to the deployment:

As a security best practice, scope your AWS IAM policy so that the role can access only your API Gateway API and no other resources.

8. Test with a client. Retrieve the API Gateway URL from the AWS CloudFormation stack outputs and run the following command:

9. At the wscat prompt, type any message (e.g., hello). The message should be echoed back, indicating that the end-to-end solution is working.

Cleaning up

To avoid incurring future charges, clean up the resources afterward. Delete the AWS CloudFormation stack using the following command:

Delete the Kubernetes resources:

Delete any other resources created as part of the prerequisites.

Cost

Adding components such as Amazon API Gateway and Amazon DynamoDB to the architecture provides the ability to scale but also introduces additional cost. Refer to Amazon API Gateway pricing and Amazon DynamoDB pricing to get an estimate based on your usage.

Alternate architecture

The proposed architecture also applies, with minor differences, to applications running on Amazon Elastic Container Service (Amazon ECS). An ALB replaces the ingress controller, and KEDA and Karpenter are not needed: Application Auto Scaling can scale the service using custom metrics instead.

Another approach would be to use Amazon API Gateway with AWS Lambda. The AWS Lambda function handles the processing logic similar to the Amazon EKS pod. This approach requires significant code changes but would benefit from being completely serverless.

Conclusion

In this post, we showed you how to move WebSocket handling from the Kubernetes pod to Amazon API Gateway. When Amazon API Gateway handles the WebSocket connections, the pod is no longer responsible for managing them, which makes pod autoscaling easier. Overall, this approach simplifies the management of WebSocket connections and allows for more efficient use of resources, which can ultimately lead to better application performance.

Below are some references that you can read for additional information:

Websocket with API Gateway and Lambda Backend