Under the hood: Amazon Elastic Container Service and AWS Fargate increase task launch rates

Since 2015, hundreds of thousands of developers have chosen Amazon Elastic Container Service (Amazon ECS) as their orchestration service for cluster management. Developers trust Amazon ECS with the lifecycle of their mission-critical applications, from initial deployment to rolling out new versions of their code and autoscaling in response to changing traffic levels.

Alongside these long-lived application tasks, Amazon ECS is also used to launch standalone tasks. Standalone tasks might be compute jobs that run on a schedule or in response to an event. They could also be AWS Batch jobs that run in response to work items arriving on a queue. Each second, Amazon ECS launches thousands of application tasks and batch tasks across Amazon EC2, Fargate, and Amazon ECS Anywhere managed on-premises hardware. Over the past year, we have increased task launch rates for Amazon ECS customers. This enables customers to deploy faster, scale faster, and complete batch workloads faster.

In this article, you’ll get a peek under the hood at how Amazon ECS works, what task launch rate is, and why increases in task launch rates are so important.

How does Amazon ECS work?

Developers choose Amazon ECS so they can move quickly and focus on building applications rather than infrastructure. Amazon ECS provides a managed control plane and a simplicity-focused experience that frees development teams from the burden of managing, upgrading, and understanding the underlying infrastructure. Amazon ECS is designed from the ground up to be a secure, multi-tenant control plane. From the outside, using Amazon ECS is as simple as calling the fully managed, serverless API. This API is the front end for a control plane that is made up of several core components:

- Agent communication: Amazon ECS agents run on Fargate managed tasks, Amazon EC2 hardware, or a customer’s on-premises servers. They report back to the Amazon ECS control plane to make their CPU, memory, and GPU capacity available for running tasks. The control plane also talks back to these agents to tell them to launch application tasks. Additionally, the control plane monitors these agents for unexpected disconnects from the agent or termination notices from Amazon EC2 that indicate that the host has been shut down.

- Task monitoring: This component monitors task lifecycle and task health checks for unexpected state changes, such as an application task crashing, freezing, or being killed by an operating system out-of-memory reaper.

- API: This is how developers and operations engineers can configure Amazon ECS with their intent. The API accepts declarative configuration for scenarios like “run this container as a standalone task that runs to completion” or “run 10 copies of this application container as a service, and keep them running indefinitely” or “this service already has 10 copies of my application running, but I would like to do a zero-downtime rolling deploy to update to a new version of my application container, without dropping any traffic.” Developers interact with the Amazon ECS API directly from their own deploy scripts or indirectly by using the AWS Management Console, AWS Command Line Interface, AWS CloudFormation, or other higher-level Amazon ECS tooling such as AWS Copilot.

- Task scheduling: This is the engine that creates and runs plans to make a customer’s declarative configuration into reality. When developers provide their declarative configuration, the scheduler is responsible for picking it up and finding capacity to run the application containers. Additionally, when task monitoring indicates that the real state of an Amazon ECS managed service has diverged from the plan, such as when a task crashes unexpectedly, the scheduler comes up with a plan to fix this. If the customer changes their intent, such as asking for a new version of the application to be rolled out or asking to scale the application in or out, the scheduler comes up with a plan to make this new intent a reality.

Task scheduling is at the heart of what Amazon ECS does. This scheduling can become very complex and resource-intensive because it requires keeping track of many instances and many tasks on those instances. EC2 instances come in different sizes and are often running multiple tasks of varying sizes. The scheduler is often doing concurrent task launches for multiple services, onto the same instances, so it must schedule tasks while avoiding conflicts that would result in two tasks competing for resources on an overburdened host. However, Amazon ECS handles all this complexity beneath the hood. Developers just have to specify a few goals for what they want the task scheduler to accomplish, and the Amazon ECS control plane does the rest.
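To make the placement problem concrete, here is a toy first-fit sketch. It is not the algorithm Amazon ECS actually uses; the `Instance` class and `place_task` function are hypothetical names invented for this illustration. It only shows the basic idea of finding an instance with enough free CPU and memory and reserving that space so a later placement cannot overcommit the host.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Instance:
    """Hypothetical view of one registered instance's remaining capacity."""
    name: str
    free_cpu: int      # CPU units, where 1024 units = 1 vCPU
    free_memory: int   # MiB


def place_task(instances: list[Instance], cpu: int, memory: int) -> Optional[str]:
    """First-fit placement: reserve space on the first instance that can hold the task.

    Returns the chosen instance name, or None when no instance has room.
    """
    for inst in instances:
        if inst.free_cpu >= cpu and inst.free_memory >= memory:
            # Reserve the resources immediately so that a later placement
            # cannot land on capacity that is already spoken for.
            inst.free_cpu -= cpu
            inst.free_memory -= memory
            return inst.name
    return None


cluster = [Instance("small", 512, 1024), Instance("large", 2048, 4096)]
print(place_task(cluster, cpu=1024, memory=2048))  # fits only on "large"
print(place_task(cluster, cpu=512, memory=512))    # fits on "small"
print(place_task(cluster, cpu=2048, memory=512))   # no instance has 2048 units left
```

A real scheduler has to do this concurrently for many services and task sizes, which is where most of the complexity described above comes from.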

Another factor that makes task scheduling interesting is that the complexity of scheduling can have considerable variance from one workload to another. Some developers are deploying tiny applications with a single container running in a single task. Other developers have an on-premises server farm with hundreds of machines or a huge cloud deployment with tens of thousands of application containers running on EC2 instances or as AWS Fargate tasks. Some developers deploy infrequently and have statically sized services. Other developers deploy many times per day and use autoscaling to adjust their service size up and down throughout the day.

No matter the size or the complexity of the use case, there is no charge for workload scheduling when using Amazon ECS. Developers only have to pay for the compute capacity that runs their application tasks. There is no charge for the placement of the application onto that capacity. It has been possible to offer workload scheduling for free because Amazon ECS is designed from the ground up as a multi-tenant architecture. With a single-tenant control plane design, each customer cluster would require its own dedicated control plane to do the scheduling for that cluster. This personal control plane would cost money no matter how much usage it received. As a result, single tenancy control planes are wasteful for small workloads that do not fully utilize their control plane. On the other hand, the Amazon ECS control plane uses a shared tenancy model that serves all users at once with slices of its gigantic, shared scheduling capacity. Small clusters and tiny service deployments take up tiny fractions of the scheduler’s time, so there is no waste. Large clusters use more resources but have the control plane’s large shared capacity to draw from when needed.

How Amazon ECS protects customer experience with rate limits

Amazon ECS currently schedules thousands of tasks per second across all the AWS customers that are using Amazon ECS as their orchestrator. The overall scheduling workload is fairly distributed among Amazon ECS customers by using rate limits for task launches. Rate limits prevent one customer from overwhelming the Amazon ECS control plane or AWS Fargate capacity pool with task launches that could impact the experience of other concurrent AWS customers.

Amazon ECS rate limits are implemented using a token bucket algorithm. You can read more about how AWS uses token buckets in the Amazon Builders’ Library. The gist is that the token bucket fills up with tokens at a set rate, up to a configurable maximum number of tokens. When you use Amazon ECS, such as by calling the RunTask API, the control plane removes a token from the bucket. If the bucket becomes empty, then further actions such as calling the RunTask API have to wait for a new batch of tokens to be added into the token bucket. This approach allows for bursts of activity when necessary, but it also limits the sustained rate of activity.
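The behavior described above can be sketched in a few lines. This is a minimal, generic token bucket and not the Amazon ECS implementation; the `TokenBucket` class and its method names are hypothetical, and it refills continuously based on elapsed time rather than in discrete batches. The example numbers (a burst of 100 and a refill of 20 per second) are only illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBucket:
    """Generic token bucket: refills at `refill_rate` tokens/sec, up to `capacity`."""
    capacity: float            # maximum tokens held, which sets the burst size
    refill_rate: float         # tokens added per second, which sets the sustained rate
    last_refill: float = 0.0   # time of the last refill, in seconds
    tokens: float = field(init=False, default=0.0)

    def __post_init__(self) -> None:
        # Start full so that an initial burst is allowed.
        self.tokens = self.capacity

    def try_acquire(self, now: float, cost: float = 1.0) -> bool:
        """Remove `cost` tokens if available; return False when throttled."""
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


bucket = TokenBucket(capacity=100, refill_rate=20)
burst = sum(bucket.try_acquire(now=0.0) for _ in range(150))
later = sum(bucket.try_acquire(now=1.0) for _ in range(50))
print(burst, later)  # 100 requests succeed in the burst, then 20 more one second later
```

Time is passed in explicitly so the example is deterministic; a real client would read a monotonic clock instead.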

There are three rate limit types you should be aware of when using Amazon ECS:

- Service Deployment rate limit: When you create or update a service this controls how fast the service can roll out new tasks or replace existing tasks with a new version.

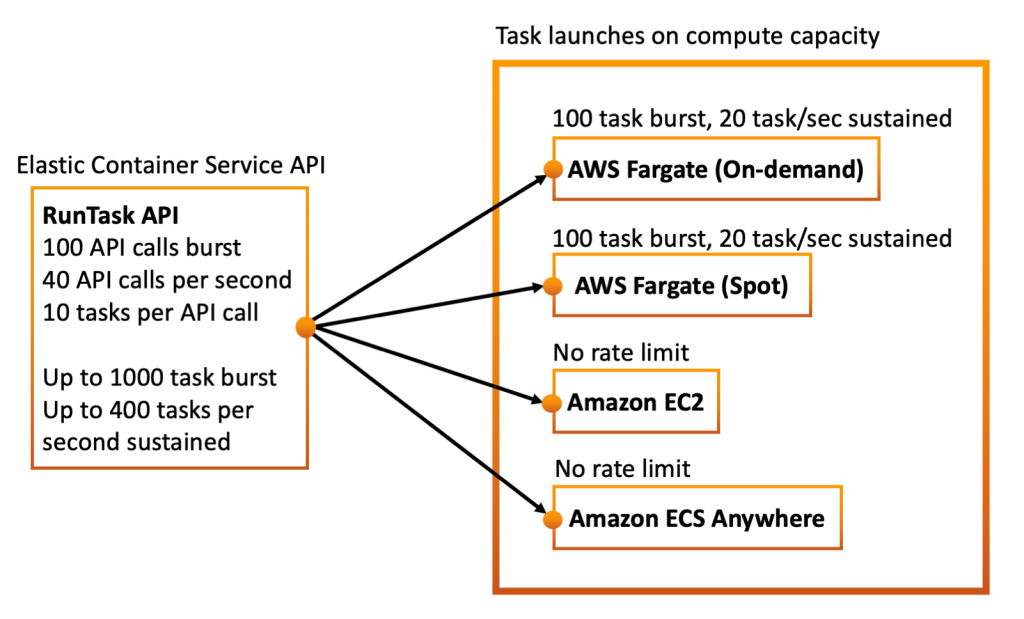

RunTaskAPI rate limit. This controls the rate at which you can launch standalone tasks.- AWS Fargate capacity rate limits. These are separate rate limits specifically for AWS Fargate task launches.

Each of these rate limits can be adjusted on a case-by-case basis by opening a support ticket. To explain how these default rate limits impact you, let’s dig a bit deeper into each one.

Capacity Type and Task Launch Rate

Your task launch rate is affected by the type of capacity that you pick with Amazon ECS. The following table shows the current rate limits for each capacity type.

| Capacity Type | Task Burst | Sustained Task Launches | Adjustable |
| --- | --- | --- | --- |
| AWS Fargate on-demand capacity | 100 tasks | 20 per second | Yes |
| AWS Fargate spot capacity | 100 tasks | 20 per second | Yes |
| EC2 | No limit | No limit | |
| ECS Anywhere | No limit | No limit | |

Rate limits for AWS Fargate are account wide. This means all services in all clusters on an AWS account are sharing the same AWS Fargate task launch rate limit. However, the token buckets for AWS Fargate on-demand and AWS Fargate spot are separate from each other. This means that you can reach a sustained rate of up to 20 on-demand task launches per second, while also launching up to 20 spot tasks per second, as long as there is spot capacity available.

When considering the AWS Fargate rate limits, it is important to remember that task size can be adjusted. At the smallest task size of 0.25 vCPU, 20 task launches per second add up to 5 vCPUs of on-demand capacity and 5 vCPUs of spot capacity per second, while at the largest task size of 4 vCPUs you can launch up to 80 vCPUs of on-demand capacity and 80 vCPUs of spot capacity per second. So if you need to launch more capacity faster, you can increase your task size to get more computing resources within the same time frame. In cases where this still isn’t enough, you can also open an AWS support ticket to request a rate limit increase that allows you to launch more tasks per second.
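The arithmetic behind those figures is simply task size multiplied by the sustained launch rate. A small sketch, assuming the default limit of 20 task launches per second from the table above (the function name is made up for this example):

```python
FARGATE_TASKS_PER_SECOND = 20  # default sustained launch rate per capacity type


def vcpus_per_second(task_vcpu: float,
                     tasks_per_second: float = FARGATE_TASKS_PER_SECOND) -> float:
    """Compute capacity launched per second for a given task size."""
    return task_vcpu * tasks_per_second


for size in (0.25, 4):
    print(f"{size} vCPU tasks: {vcpus_per_second(size):g} vCPUs per second")
```

Because on-demand and spot have separate token buckets, the same figure applies to each capacity type independently.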

When using EC2 capacity, there is no rate limit on task launches onto your existing EC2 instances. Service deployments and higher-level APIs like RunTask have their own rate limits that control how fast you can launch tasks on EC2 capacity, but you can have many services all launching tasks onto the same EC2 capacity at once. You may still want to consider the EC2 quotas, as these limit how much EC2 capacity you can register into your cluster, and how fast you can do so. For ECS Anywhere there is also no rate limit on task launches, but you will need to consider the quotas for SSM Managed Instances.

Service Deployment Rate Limit – Deploy and update services faster

From 2021 to 2022, we improved Amazon ECS scheduling to be able to deploy each service at a rate of up to 500 tasks per minute when using AWS Fargate capacity, and 250 tasks per minute when using EC2 capacity. For AWS Fargate the deploy rate is 16X faster than last year, and for EC2 capacity the deploy rate has doubled.

To explain how this impacts the Amazon ECS customer experience, imagine that you create an Amazon ECS service for a large web API that receives high traffic. Because there is so much web traffic to this API, the service is going to require 1,000 tasks to serve all of the traffic. So you set the service’s desired count to 1,000. The Amazon ECS control plane now needs to respond to this request by launching 1,000 new tasks. The control plane will not launch all 1,000 tasks at once. Instead, it will begin executing workflow cycles to bring the current state (zero tasks) towards the desired state (1,000 tasks). Each workflow cycle will launch a batch of new tasks. The increased rate of task scheduling now allows those 1,000 tasks to be launched faster, with fewer workflow cycles. When using AWS Fargate as the capacity, these 1,000 tasks can be scheduled in around two minutes (1,000 tasks at up to 500 tasks per minute).
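As a back-of-the-envelope check, the scheduling time is the desired count divided by the per-service deployment rate. The sketch below uses the rates quoted earlier (500 tasks per minute on AWS Fargate, 250 on EC2); the function name is hypothetical, and the result is a lower bound on scheduling only, not an end-to-end deployment time.

```python
# Per-service deployment rates quoted in this article, in tasks per minute.
DEPLOY_RATE_PER_MINUTE = {"fargate": 500, "ec2": 250}


def scheduling_minutes(desired_tasks: int, capacity: str) -> float:
    """Lower bound on the time needed to schedule `desired_tasks` for one service."""
    return desired_tasks / DEPLOY_RATE_PER_MINUTE[capacity]


print(scheduling_minutes(1000, "fargate"))  # 2.0 minutes
print(scheduling_minutes(1000, "ec2"))      # 4.0 minutes
```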

For a real-world scenario, there is more going on than just task scheduling, though. Your container images need to be downloaded and unpacked, and your application probably has health checks that validate that it is properly starting. Additionally, you may have integrations enabled, such as Amazon ECS automatically registering your tasks into a load balancer. You may see variation in task launch rate based on the features that you enable for your Amazon ECS service and the type of capacity being used (Amazon EC2 or Fargate). To give you an idea of how fast Amazon ECS services can now roll out tasks in a service, we ran some benchmarks for various real-world service configurations. Note that these benchmarks count the end-to-end time for scheduling the tasks onto compute infrastructure, downloading the container image, running it, and evaluating its health, so you’ll see that the total deployment time takes a bit longer than two minutes for 1000 tasks.

| Benchmark | Size | Duration | Rate |
| --- | --- | --- | --- |
| AWS Fargate on-demand capacity, no load balancer | 1000 tasks | 208 seconds | 4.8 tasks/sec |
| AWS Fargate spot capacity, no load balancer | 1000 tasks | 353 seconds | 2.8 tasks/sec |
| AWS Fargate 50/50 on-demand and spot capacity | 1000 tasks | 213 seconds | 4.7 tasks/sec |
| AWS Fargate on-demand, no public IP address | 1000 tasks | 199 seconds | 5 tasks/sec |
| AWS Fargate on-demand, load balanced service | 1000 tasks | 252 seconds | 4 tasks/sec |
| EC2 capacity, capacity provider starts empty | 1000 tasks | 752 seconds | 1.3 tasks/sec |
| EC2 capacity, with all the EC2 instances up already | 1000 tasks | 270 seconds | 3.7 tasks/sec |
| EC2 capacity, host networking instead of AWS VPC | 1000 tasks | 270 seconds | 3.7 tasks/sec |

If you would like to test out some of these task launch scenarios yourself, you can find the CloudFormation templates that were used in these tests on GitHub.

Some specific things to note from the table above:

- For a single service you will see faster service-managed task launch rates on AWS Fargate than on Amazon EC2 capacity. AWS Fargate has standardized task sizes that can be prepared ahead of time and kept in a prewarmed state for anyone who wants to run a task of that size. The Amazon ECS scheduler just requests a task from this AWS Fargate task pool whenever a task needs to be started. For Amazon ECS on Amazon EC2, the scheduler can’t take advantage of preprepared tasks. It must find an EC2 instance that has space for an arbitrarily sized task, reserve that space, and then launch a task on that instance on the fly.

- The default rate limits of 20 AWS Fargate task launches per second mean that once you get beyond 3 services deploying in parallel you will see services deploying faster on EC2 capacity than on AWS Fargate capacity.

- If you are launching a service that uses Amazon EC2 capacity but with a cold capacity provider that has no EC2 instances ready yet, then this means that Amazon ECS has to start EC2 instances on the fly and wait for them to boot up before it can launch containers on them. This significantly lowers the task launch rate. Having the Amazon EC2 capacity already launched and ready to run tasks increases the effective task launch rate.

- When launching AWS Fargate tasks that use Amazon VPC networking mode, if you turn off public IP addresses per task, then you can see a slight increase in task launch rate.

- Launching AWS Fargate spot tasks may be slower than launching AWS Fargate on-demand tasks, because it depends on the amount of available spot capacity in the Region. In general, on-demand task launches are faster. However, if you are facing rate limits, you can still achieve a higher overall task launch rate by using a 50/50 mix of spot and on-demand tasks.

- Adding a load balancer to your service decreases task launch rate slightly. For some workloads that are not public facing and that you want to launch quickly, you might prefer to use DNS-based service discovery or a service mesh rather than a load balancer.

There are also some other built-in rate limits that may apply to scheduling tasks in Amazon ECS. One important rate limit to know about is exponential backoff when a service is failing to successfully launch stable containers that stay running. If the application container fails to start, crashes shortly after starting, or fails to pass health checks, then Amazon ECS detects that the service’s tasks are failing to stabilize, and it automatically slows down scheduling for this service using an exponential backoff. This protects any adjacent workloads that are running in the same cluster from being impacted by an endless loop of task launches and crashes. This exponential backoff on task launches can be reset by updating the service to a new task definition revision or by doing an UpdateService API call with the force-new-deployment flag set to true.
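For example, forcing a new deployment with the AWS SDK for Python might look like the sketch below. The `update_service` call and its `forceNewDeployment` parameter are part of the boto3 ECS client; the wrapper function name and the cluster and service names in the usage comment are placeholders. The client is passed in so that the function can be exercised without AWS credentials.

```python
def reset_launch_backoff(ecs_client, cluster: str, service: str) -> dict:
    """Force a new deployment of a service, which resets its launch backoff.

    `ecs_client` is expected to be a boto3 ECS client (or anything that
    exposes the same `update_service` method).
    """
    return ecs_client.update_service(
        cluster=cluster,
        service=service,
        forceNewDeployment=True,
    )


# Usage, assuming boto3 is installed and AWS credentials are configured:
#
#   import boto3
#   reset_launch_backoff(boto3.client("ecs"), "my-cluster", "my-service")
```

Fix the underlying startup or health check problem first; otherwise the new deployment is likely to run into the same backoff again.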

It is important to remember that any underlying capacity rate limits still apply. Specifically, if you are deploying multiple ECS services that all use AWS Fargate on-demand capacity, then they all share the same underlying AWS Fargate task launch rate limit of 20 tasks per second. In this case, you may need to open a support ticket to request that your AWS Fargate task launch rate be raised in order to reach the maximum service deployment task launch rate when deploying all services in parallel.
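A rough way to reason about this interaction is sketched below. It assumes, purely for illustration, that parallel deployments split the account-wide limit evenly, which the control plane does not guarantee; the function names are hypothetical. The per-service figure is the 500 tasks per minute quoted earlier.

```python
PER_SERVICE_TASKS_PER_MINUTE = 500   # service deployment rate on AWS Fargate
ACCOUNT_TASKS_PER_SECOND = 20.0      # default account-wide Fargate launch rate


def per_service_rate(n_services: int,
                     account_limit: float = ACCOUNT_TASKS_PER_SECOND) -> float:
    """Tasks/sec each service gets, assuming an even split of the shared limit."""
    wanted = n_services * PER_SERVICE_TASKS_PER_MINUTE / 60
    return min(wanted, account_limit) / n_services


def required_account_limit(n_services: int) -> float:
    """Account-wide tasks/sec needed for every service to deploy at full speed."""
    return n_services * PER_SERVICE_TASKS_PER_MINUTE / 60


print(per_service_rate(4))          # 5.0 tasks/sec each, capped by the shared limit
print(required_account_limit(6))    # 50.0 tasks/sec for six parallel deployments
```

The second number is the kind of figure you would bring to a support ticket when requesting a higher Fargate task launch rate.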

RunTask API Limit – Run more standalone jobs faster

In addition to Amazon ECS services, some customers use the RunTask API to launch standalone tasks in their cluster. This API is generally used for batch workloads that run a new Amazon ECS task for each job. Examples include machine learning, video rendering, and scientific applications. The RunTask API is also used for use cases such as running a cron job on a schedule, running an Amazon ECS task in response to an Amazon EventBridge event, or running an Amazon ECS task as part of an AWS Step Functions workflow.

The RunTask API has a few limits. First, you can launch up to 10 tasks with each API call. Additionally, there is a token bucket rate limit that controls how fast you can call the RunTask API. This rate limit allows you to burst up to 100 calls to the API. The token bucket refreshes to allow a sustained rate of 40 RunTask API calls per second. Because the API allows you to launch up to 10 tasks per API call, this means that you can use the RunTask API to burst up to 1,000 task launches and reach a maximum sustained task launch rate of up to 400 task launches per second on EC2 or ECS Anywhere capacity. Note that because there is no underlying rate limit on task launches on EC2, you can have multiple service deployments to EC2 at the same time as you max out your RunTask API limits.
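To get the most out of each API call, a client can pack tasks into calls of up to 10. The sketch below only plans the per-call task counts and does the rate arithmetic from the paragraph above; it makes no API calls, and the function names are hypothetical.

```python
MAX_TASKS_PER_CALL = 10       # tasks that one RunTask call can launch
BURST_CALLS = 100             # token bucket burst size, in API calls
SUSTAINED_CALLS_PER_SEC = 40  # token bucket refill rate, in API calls per second


def plan_run_task_calls(total_tasks: int) -> list[int]:
    """Split `total_tasks` into per-call task counts of at most 10 each."""
    full_calls, remainder = divmod(total_tasks, MAX_TASKS_PER_CALL)
    return [MAX_TASKS_PER_CALL] * full_calls + ([remainder] if remainder else [])


print(plan_run_task_calls(25))                       # [10, 10, 5]
print(len(plan_run_task_calls(1000)))                # 100 calls, exactly one full burst
print(BURST_CALLS * MAX_TASKS_PER_CALL)              # 1000 tasks in a burst
print(SUSTAINED_CALLS_PER_SEC * MAX_TASKS_PER_CALL)  # 400 tasks per second sustained
```

Each planned count would become the task count of one RunTask call, so launching 1,000 tasks consumes the whole burst allowance in 100 calls.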

However, when running tasks on AWS Fargate capacity, the underlying AWS Fargate rate limit of 20 task launches per second takes precedence over higher-level rate limits such as the RunTask API rate limit and the service deployment rate. The rate limits on the RunTask API may allow you to attempt to launch up to 400 tasks per second, but if those tasks are Fargate tasks, then you would still get a rate limit error back if attempting to launch more than 20 on-demand tasks per second. However, even if the rate limit for on-demand Fargate tasks has been reached, you could still launch AWS Fargate spot tasks or EC2 tasks as long as you have not yet reached the overall rate limits of the RunTask API. Additionally, the underlying AWS Fargate rate limit is shared between service deployment task launches and RunTask task launches. So if you are doing a service deployment to Fargate while also launching tasks with RunTask, you may hit Fargate capacity rate limits more quickly. Once again, this Fargate capacity rate limit can be increased via a support ticket if this is an issue.

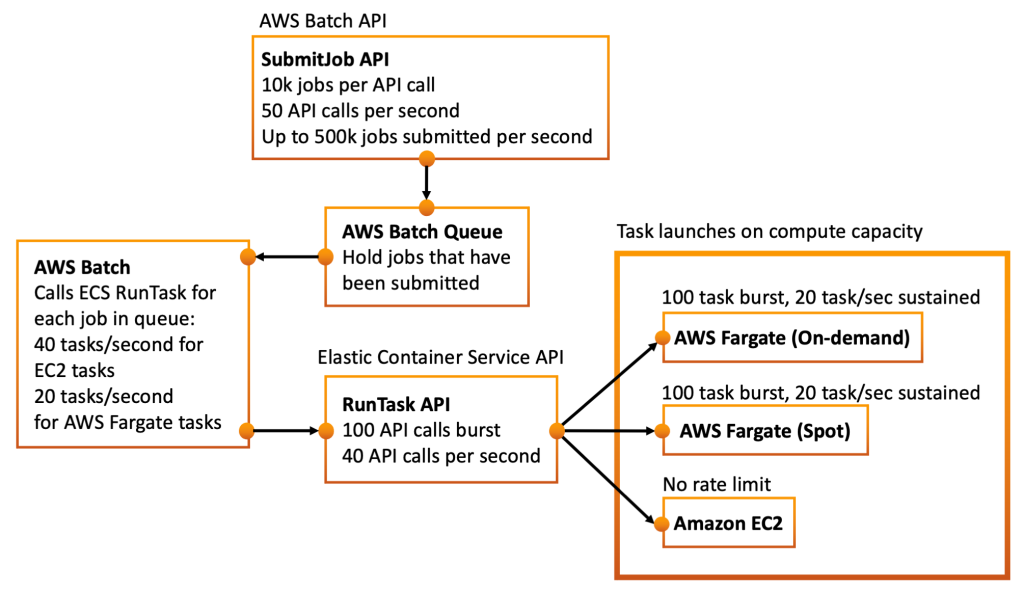

Many customers choose to use AWS Batch to help run large batch workloads without needing to think about rate limits and API throttling. With AWS Batch, you can use the SubmitJob API to submit batches of up to 10,000 jobs at a time. This SubmitJob API can be called up to 50 times per second, allowing you to submit up to 500,000 jobs per second. The jobs will be stored in a queue that holds them until they can be launched as ECS tasks on AWS Fargate or EC2 capacity. AWS Batch will process queued jobs by calling the Amazon ECS RunTask API on your behalf, at the maximum rate that it can, until all the jobs have been run. This makes it much easier for you to process large batch workloads without having to implement your own code to handle Amazon ECS RunTask API limits.
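The gap between how fast jobs can be submitted and how fast they can be launched is why the queue matters. The sketch below works through the numbers under simplifying assumptions: jobs are launched at a constant sustained rate, and job runtime and compute capacity are ignored. The function names are hypothetical.

```python
SUBMIT_CALLS_PER_SECOND = 50
JOBS_PER_SUBMIT_CALL = 10_000


def max_submit_rate() -> int:
    """Maximum jobs per second that can be submitted to the queue."""
    return SUBMIT_CALLS_PER_SECOND * JOBS_PER_SUBMIT_CALL


def drain_seconds(queued_jobs: int, launches_per_second: float) -> float:
    """Time to launch every queued job at a constant sustained launch rate."""
    return queued_jobs / launches_per_second


jobs = max_submit_rate()            # 500,000 jobs submitted in one second
print(jobs)
print(drain_seconds(jobs, 20))      # 25000.0 seconds at the default Fargate rate
print(drain_seconds(jobs, 400))     # 1250.0 seconds at the RunTask rate on EC2
```

In other words, one second of submissions at full speed can represent hours of task launches at default limits, and the queue absorbs that difference for you.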

The rate limits on the RunTask API now allow you to launch more Amazon ECS tasks per second, and larger bursts of tasks, whether you are calling the RunTask API directly, using AWS Batch as an intermediary for running work in an Amazon ECS cluster, or implementing Amazon EventBridge rules that trigger an Amazon ECS task to run. Once again, if these rate limits are too low for you, they can be raised on a case-by-case basis by opening a support ticket.

Conclusion

Developers are building larger and larger services, which operate at ever higher scale. They are using Amazon ECS to orchestrate these services. As Amazon ECS task launch rate continues to increase, it will better enable these developers to roll out larger web services more quickly and orchestrate high-volume batch jobs using Amazon ECS. We are excited to continue our efforts to make Amazon ECS even more powerful and to see what new and existing builders can create using Amazon ECS as their control plane.

If you are interested in reading another deep dive into container launch rates, Vlad Ionescu, an AWS Container Hero, has written an extensive article researching container scaling rates in Amazon ECS. His article shows task launch rate history from 2020, 2021, and 2022, so you can see the trends over time. It also compares container launch rates between Amazon Elastic Container Service and Amazon Elastic Kubernetes Service, with both EC2 and AWS Fargate capacity.

For more about the quotas that may affect your task launch rate, you can also read:

For more general purpose tips on how to get the maximum speed out of Amazon ECS:

- Amazon ECS Best Practices: Speeding up Task Launch

- Amazon ECS Best Practices: Speeding up Cluster Autoscaling

- Amazon ECS Best Practices: Speeding up Deployments

Updated Feb 21, 2023: As of Jan 24, 2023, ECS has increased the speed of task launches onto EC2 capacity when using an EC2 capacity provider that starts out empty. This improvement was achieved by increasing the limit on the number of PROVISIONING tasks in a cluster to 500 (previously 300). This allows EC2 capacity providers to observe greater demand for task launches onto EC2 and respond to this demand faster by launching larger quantities of EC2 instances to host those tasks. The benchmarks in this article have been updated to reflect this improvement. In a previous version of this post, it took 951 seconds to launch 1000 tasks onto EC2 capacity when the capacity provider started out empty. It now takes 752 seconds to launch 1000 tasks in the same scenario, as the capacity provider launches EC2 instances more quickly. This represents an approximately 20% improvement for this scenario.