AWS Database Blog

Increase write throughput on Amazon RDS for MariaDB using the MyRocks storage engine

In 2022, Amazon Relational Database Service (Amazon RDS) for MariaDB introduced several new features to enhance the performance, scalability, reliability, and manageability of your workloads, including the MyRocks storage engine, AWS Identity and Access Management (IAM) integration, delayed replication, and multi-major upgrades.

Open-source database engines like MySQL and MariaDB implement a pluggable storage engine architecture. This architecture allows different database tables to use different storage engines without compromising scalability, flexibility, or performance. Each storage engine implements its own data structures and data access methods designed to cater to specific workload types. For example, the optimal data structure for mixed read/write workloads may not be suitable for write-intensive workloads, and vice versa. The same is true for transactional vs. analytical workloads.

In this post, we focus on the newly launched MyRocks storage engine architecture in Amazon RDS for MariaDB 10.6. We start by covering MyRocks and its architecture, use cases of MyRocks, and demonstrate our benchmarking results, so you can determine if the MyRocks storage engine can help you get increased performance for your workload.

Overview of MyRocks

The MyRocks storage engine is based on RocksDB, which was developed by Meta and is based on LevelDB. MyRocks has been extended by the MariaDB community to be a pluggable storage engine that you can start using in your Amazon RDS for MariaDB 10.6 solutions. It works seamlessly with MariaDB features. MyRocks is based on the log-structured merge-tree (or LSM tree). It’s optimized for fast storage and provides improved space and write efficiency. When evaluating storage engines that could give greater performance for web-scale type applications, MyRocks was a clear choice because of its superior handling of data compression and I/O efficiency.

MyRocks uses an LSM data structure format to store data, as shown in the following diagram (source).

When data is written to MyRocks, it’s stored in an in-memory write buffer called MemTable, as well as a Write Ahead Log (WAL). Otherwise, there will be data loss if the server crashes before the data in-memory (MemTable) is flushed to the disk. When the size of the MemTable reaches a defined size, it gets copied in-memory and becomes an immutable MemTable. Then the data of the MemTable is flushed to a Sorted Strings Table (SST) data file on disk and made immutable. Each SST stores data in sorted order to facilitate easy lookup of keys. As more SSTs are created on L0, they start to pour over to L1…L(n). To maintain the size for each level, some SSTs in level-L are selected and merged with the overlapping SSTs in level (L+1). The process is called compaction. Ultimately, data reaches the lowest level. In addition to the built-in compactness of the SSTs, data is also compressed, which optimizes storage consumption.

The overall write throughput of an LSM database directly depends on the speed at which compaction can occur, especially when the data is stored in fast storage like SSD or RAM. By default, MyRocks is configured to issue concurrent compaction requests from multiple threads. Sustained write rates and throughput may increase by as much as a factor of 10 with multi-threaded compaction when the database is on SSDs, as compared to single-threaded compaction.

In the read path, key lookup starts by searching all MemTables (most recent data) and then the block cache (most recently accessed data). If the key doesn’t exist in-memory, a key lookup occurs at each successive level until the key is found or it’s determined that the key isn’t present in the last level. It begins by searching all MemTables, followed by all level-0 SSTs, and then the SSTs at the following levels. At each of these successive levels, a whole binary search is used. In addition to using the LSM-tree structure, MyRocks provides extra optimization to improve performance by implementing bloom filters. Bloom filters are kept in each SST file to eliminate unnecessary search within an SST file.

Use cases of MyRocks

MyRocks has the following advantages compared to other storage engines, when your workload is running on SSDs, such as Amazon RDS: greater space efficiency, greater write efficiency, and faster data loading. MyRocks is useful for applications that have a lot of concurrent writes and large storage requirements such as web-scale applications and write-intensive workloads.

Benchmarking

We tested the MyRocks storage engine within Amazon RDS for MariaDB 10.6 to evaluate its performance compared to InnoDB. To conduct our tests, we used r5.12xlarge instances with 40,000 Provisioned IOPS on the EBS volume. We performed the benchmarking tests using sysbench and the genome dataset. We ran three separate benchmarks to understand storage optimization, write efficiency, and read efficiency. Each test was performed with 30 tables and with a table size of 100 million rows.

To evaluate storage optimization, we first compared bulk loads on MyRocks and InnoDB. To do this, we configured the parameter rocksdb_bulk_load = ON in the RDS parameter group. MyRocks performed significantly better than InnoDB, with a 1-hour load time, whereas InnoDB took 1 hour and 30 minutes to load the same amount of data. This is also evident in the write throughput and IOPS, where MyRocks peaked at 722.8MB/s in peak throughput and 15,621 IOPS, whereas InnoDB peaked at 363.6MB/s in throughput and 14,892 IOPS. We observed a small improvement in storage consumption with the bulk loads (600 GB on MyRocks vs. 700 GB on InnoDB). The following table summarizes these findings.

| Storage Engine | MyRocks | InnoDB |

| Load Time | 1 hour | 1 hour, 30 minutes |

| Write Throughput | 722.8 MB/s | 363.6 MB/s |

| IOPS | 15,621 | 14,892 |

| Storage consumption | 600 GB | 700 GB |

The following are the sample sysbench commands used for benchmarking.

For InnoDB, we used the following commands:

For MyRocks, we used the following commands:

The performance improvement became much starker when we changed our testing to use the genome dataset. We observed 50% higher storage consumption on InnoDB vs. MyRocks, where InnoDB consumed 1.2 TB vs. 807 GB on MyRocks. We saw that the degree of compression also varies with the change in the nature of the dataset. However, because these are synthetic load tests, we encourage you to perform load tests with your workloads to take advantage of the MyRocks compression to get greater space efficiency.

Next, we tested the write workload using the Amazon RDS default parameters on MyRocks vs. InnoDB. In this workload, we varied the number of threads to show the impact of load on the storage engine. The following graphs show the write performance difference between InnoDB and MyRocks.

The following are the sample sysbench commands used for benchmarking.

For InnoDB, we used the following commands:

For MyRocks, we used the following commands:

As the number of threads increase (x-axis), we see InnoDB peak out much earlier than MyRocks. This is where MyRocks shines, scaling up to 14,255 transactions/sec, vs. InnoDB’s peak at around 5,461 transactions/sec. The number of transactions also peaked much earlier in InnoDB at around 32 threads vs. MyRocks at around 128 threads.

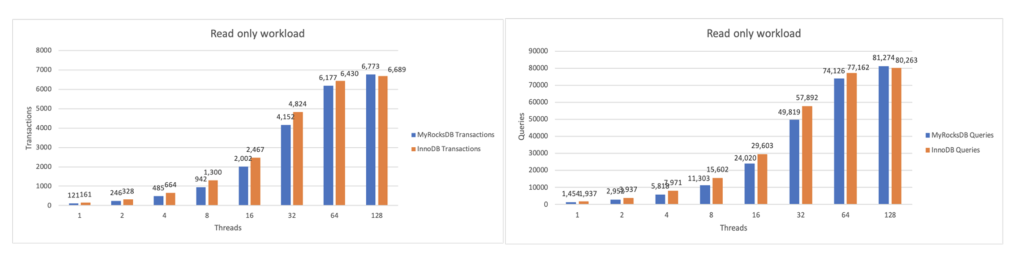

Finally, we tested the read workload with a similar parameter configuration as the write workload. As shown in the following graphs, both InnoDB and MyRocks exhibited similar read performance. We didn’t see any significant differences in read transactions per second between the two storage engines. However, your results may vary depending on your workload and instance configuration. Therefore, we recommend to benchmarking with your actual workload.

The following are the sample sysbench commands used for benchmarking.

For InnoDB, we used the following commands:

For MyRocks, we used the following commands:

Summary

Amazon RDS for MariaDB 10.6 introduced the MyRocks storage engine. Now your write-intensive workloads can benefit from greater space efficiency, faster data loads, and greater write efficiency. It uses an LSM architecture that has better compression than the B-tree used by the InnoDB storage engine. We observed a 3-times improvement in write performance, and a 33% reduction in storage consumption with our MyRocks test setup. We encourage you to try out the MyRocks storage engine with your MariaDB workloads to see how it can improve the performance of your applications.

About the authors

Vijay Karumajji is a Database Specialist Solutions Architect at Amazon Web Services based in Dallas. Vijay specializes in MySQL on Amazon RDS and Amazon Aurora. He works with our customers to provide guidance and technical assistance on database projects, helping them improve the value of their solutions when using AWS.

Vijay Karumajji is a Database Specialist Solutions Architect at Amazon Web Services based in Dallas. Vijay specializes in MySQL on Amazon RDS and Amazon Aurora. He works with our customers to provide guidance and technical assistance on database projects, helping them improve the value of their solutions when using AWS.

Justin Lim is a Business Development Manager at Amazon Web Services based in Seattle. In his role, Justin focuses on developing programs to help companies accelerate their workloads using AWS native databases. Prior to becoming a Business Development Manager, Justin held technical roles such as Solutions Architect for both databases and startups.

Justin Lim is a Business Development Manager at Amazon Web Services based in Seattle. In his role, Justin focuses on developing programs to help companies accelerate their workloads using AWS native databases. Prior to becoming a Business Development Manager, Justin held technical roles such as Solutions Architect for both databases and startups.