AWS Developer Tools Blog

F# Tooling Support for AWS Lambda

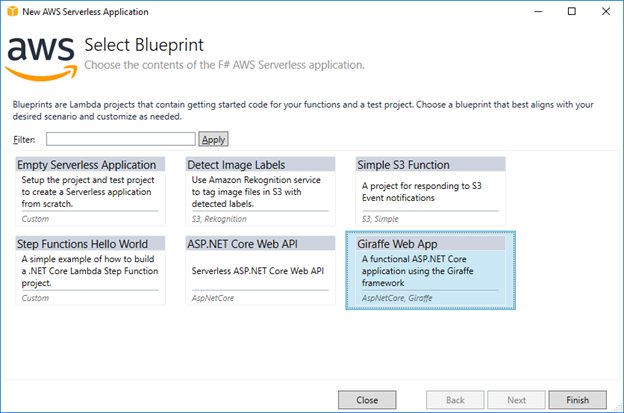

F# is a functional language that runs on .NET and lets you use packages written in other .NET languages, such as the AWS SDK for .NET, which is written in C#. Today we released a new version of the AWS Toolkit for Visual Studio 2017 that supports writing AWS Lambda functions in F#. These […]

Autopagination feature in the AWS SDK for Java 2.0

This blog post is part of a series that outlines changes coming in the AWS SDK for Java 2.0. Read our Developer Preview announcement for more information about why we're so excited about this new version of the SDK. We're pleased to announce support for automatic pagination in the AWS SDK for Java 2.0. Many […]

AWS SDK for Go 2.0 – Generated Marshalers

The AWS SDK for Go 2.0 has released generated marshalers for the restjson and restxml protocols. Generated marshalers will help address the performance problems and customer-reported issues the SDK had been receiving. To better understand what was causing the performance hit, we used Go's benchmark tooling to determine the main bottleneck: reflection. The reflection package […]
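To illustrate why generated marshalers avoid the reflection cost described above, here is a minimal sketch contrasting the two approaches. The `GetObjectInput` type, the `marshalReflect` function, and the `MarshalFields` method are hypothetical, simplified stand-ins and are not the SDK's actual API. The reflection-based version discovers field names and kinds at run time on every call. The "generated" version hard-codes them, as a code generator could, so no reflection runs. Both produce the same JSON-like output for ASCII input.

```go
package main

import (
	"fmt"
	"reflect"
	"strconv"
	"strings"
)

// GetObjectInput is a hypothetical request shape standing in for an SDK input struct.
type GetObjectInput struct {
	Bucket string `json:"Bucket"`
	Key    string `json:"Key"`
	Limit  int    `json:"Limit"`
}

// marshalReflect inspects the struct at run time with the reflect package,
// reading each field's tag and kind on every call (the costly path).
func marshalReflect(v interface{}) string {
	rv := reflect.ValueOf(v)
	rt := rv.Type()
	parts := make([]string, 0, rt.NumField())
	for i := 0; i < rt.NumField(); i++ {
		name := rt.Field(i).Tag.Get("json")
		f := rv.Field(i)
		switch f.Kind() {
		case reflect.String:
			parts = append(parts, strconv.Quote(name)+":"+strconv.Quote(f.String()))
		case reflect.Int:
			parts = append(parts, strconv.Quote(name)+":"+strconv.FormatInt(f.Int(), 10))
		}
	}
	return "{" + strings.Join(parts, ",") + "}"
}

// MarshalFields is a hypothetical example of what a code generator might emit
// for GetObjectInput: field names and types are fixed when the code is
// generated, so no reflection happens when it runs.
func (in GetObjectInput) MarshalFields() string {
	var b strings.Builder
	b.WriteString(`{"Bucket":`)
	b.WriteString(strconv.Quote(in.Bucket))
	b.WriteString(`,"Key":`)
	b.WriteString(strconv.Quote(in.Key))
	b.WriteString(`,"Limit":`)
	b.WriteString(strconv.Itoa(in.Limit))
	b.WriteString("}")
	return b.String()
}

func main() {
	in := GetObjectInput{Bucket: "my-bucket", Key: "photo.jpg", Limit: 10}
	fmt.Println(marshalReflect(in))  // reflection-based output
	fmt.Println(in.MarshalFields()) // generated-style output, same result
}
```

To measure the difference yourself, you could wrap each function in a `Benchmark…` function and run `go test -bench .`. This is the same kind of benchmark tooling the post refers to.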

Publishing to HTTP/HTTPS Endpoints Using SNS and the AWS SDK for Java

We're pleased to announce new additions to the AWS SDK for Java (version 1.11.274 or later) that make it easy to securely process Amazon SNS messages via an HTTP/HTTPS endpoint. Before this update, customers had to handle unmarshalling Amazon SNS messages sent to HTTP endpoints and validating their authenticity themselves. Not only was this tedious, […]

New AWS X-Ray .NET Core Support

In our AWS re:Invent talk this year, we preannounced support for .NET Core 2.0 with AWS Lambda and support for .NET Core 2.0 with AWS X-Ray. Last month we released the AWS Lambda support for .NET Core 2.0. This week we released the AWS X-Ray support for .NET Core 2.0, with new 2.0 beta versions […]

AWS Service Provider for Symfony v2 with Support for Symfony v4

Version 2.0.0 of the AWS Service Provider for Symfony has been released with support for Symfony v4. You can upgrade through Composer using the following command: `composer require aws/aws-sdk-php-symfony ~2.0`. This AWS Service Provider for Symfony release is compatible with version 3 of the AWS SDK for PHP and versions 2, 3, and 4 of […]

Serverless ASP.NET Core 2.0 Applications

In our previous post, we announced the release of the .NET Core 2.0 AWS Lambda runtime and new versions of our .NET tooling to help you develop .NET Core 2.0-based serverless applications. Along with the new .NET Core 2.0 Lambda runtime, we've made our ASP.NET Core NuGet package, Amazon.Lambda.AspNetCoreServer, generally available. Version 2.0.0 of […]

AWS Lambda .NET Core 2.0 Support Released

Today we've released the highly anticipated .NET Core 2.0 AWS Lambda runtime, which is available in all Lambda-supported regions. With .NET Core 2.0, it's easier to move existing .NET Framework code to .NET Core because of the much larger API defined in .NET Standard 2.0, which .NET Core 2.0 implements.

Using Visual Studio 2017

The easiest […]

Remote Debug an IIS .NET Application Running in AWS Elastic Beanstalk

In this guest post by AWS Partner Solution Architect Sriwantha Attanayake, we take a look at how you can set up remote debugging for ASP.NET applications deployed to AWS Elastic Beanstalk. We love to run IIS websites on AWS Elastic Beanstalk. With Elastic Beanstalk, you can quickly deploy and manage applications in the AWS Cloud […]

AWS Support for PowerShell Core 6.0

As announced in a Microsoft blog post yesterday, PowerShell Core 6.0 is now generally available. AWS continues to support this new cross-platform version of PowerShell with our AWS Tools for PowerShell Core module, also known by its module name, AWSPowerShell.NetCore. This post recaps the modules available from AWS for PowerShell users wanting to script their AWS […]