AWS HPC Blog

Customize Slurm settings with AWS ParallelCluster 3.6

AWS ParallelCluster simplifies management and configuration of the Slurm scheduler. However, if you need to fine-tune Slurm’s behavior, further configuration might be necessary. For instance, you may want to adjust the number of concurrently-managed jobs or modify the resource configuration for compute resources. Historically, you have been able to change these settings by editing Slurm configuration files directly. However, this can be tedious since you have to provide the mechanism for doing so.

With AWS ParallelCluster 3.6, customizing Slurm settings has become much more convenient. Now, you can directly specify these adjustments in the cluster configuration itself. This enhancement enables you to create reproducible and well-optimized HPC systems, as your scheduler customizations can be specified declaratively. This also makes your cluster configuration files an even more valuable part of your infrastructure’s documentation.

Today, we’ll show you how this works and how you can implement it yourself.

How to customize Slurm settings

To get started, you’ll need to be using AWS ParallelCluster 3.6.0 or higher. You can follow this online guide to help you upgrade. Next, you will need to edit your cluster configuration as described in the examples below and in the AWS ParallelCluster documentation. You can always retrieve the configuration for a currently-running cluster using the pcluster describe-cluster command.

Finally, you’ll need to create a cluster using the new configuration.
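On the command line, the workflow above might look like the following sketch. The cluster name, Region, and file name are placeholders we made up for illustration. Each step is wrapped in a small shell function so you can source the file and run the steps one at a time.

```shell
#!/usr/bin/env bash
# Placeholder values (assumptions for illustration): replace with your own.
CLUSTER_NAME="my-cluster"
REGION="us-east-1"
CONFIG_FILE="cluster-config.yaml"

# Step 1: confirm the installed CLI reports version 3.6.0 or higher.
check_version() {
  pcluster version
}

# Step 2: describe a running cluster. The JSON output should include a
# link to the configuration the cluster was created with.
describe_running_cluster() {
  pcluster describe-cluster --cluster-name "$CLUSTER_NAME" --region "$REGION"
}

# Step 3: create a cluster from your edited configuration file.
create_cluster() {
  pcluster create-cluster \
    --cluster-name "$CLUSTER_NAME" \
    --region "$REGION" \
    --cluster-configuration "$CONFIG_FILE"
}
```

After sourcing the file, you would call check_version, then describe_running_cluster, then create_cluster once your configuration file is ready.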

Using key-value pairs in CustomSlurmSettings

You can define custom Slurm settings by adding CustomSlurmSettings to three sections of your cluster configuration:

- SlurmSettings – specify cluster-wide settings
- SlurmQueues – customize specific queues
- ComputeResources – configure particular sets of instances

You can have one custom settings section at the cluster level, and one for each queue or compute resource you wish to customize. In terms of precedence, compute resource settings override queue-level settings, which override cluster-level settings.

Here is an excerpt from a cluster configuration which illustrates all three levels.

    Scheduling:
      Scheduler: slurm
      SlurmSettings:
        CustomSlurmSettings:
          - MaxJobCount: 50000
      SlurmQueues:
        - Name: normal
          CustomSlurmSettings:
            KillWait: 300
          ComputeResources:
            - Name: cr1
              CustomSlurmSettings:
                MemSpecLimit: 2048
              Instances:
                - InstanceType: m6a.xlarge
              MinCount: 0
              MaxCount: 10

In this example configuration, we set the maximum number of jobs the cluster manages at one time to 50,000; increase the amount of time before a job is forcibly terminated to 300 seconds; and reserve 2 GB (2,048 MB) of RAM on each node in cr1 for system use, so it is not available for user allocations.

The examples shown here are quite simple, mapping to single-parameter lines in the Slurm configuration file. More complex configurations like sub-parameters – and even entire settings file sections – can be set using key-value pairs. This is well-documented in the AWS ParallelCluster documentation on this topic.

You can find the complete list of Slurm settings that you can configure in the Slurm configuration file documentation. However, some are not available for you to use because AWS ParallelCluster relies on them for its business logic. We’ve enumerated these reserved settings in the AWS ParallelCluster documentation.

Importing a settings file via CustomSlurmSettingsIncludeFile

You may already have a settings file that you want to import into new cluster systems. AWS ParallelCluster supports this.

To use a standalone file:

- Write a slurm.conf file as defined in the Slurm documentation for the version of Slurm that ships with your release of AWS ParallelCluster (currently 23.02.2).
- Upload the file so that it’s accessible via HTTPS or S3.
- Add Scheduling:SlurmSettings:CustomSlurmSettingsIncludeFile to your cluster configuration, pointing to the file URL.

    Scheduling:
      Scheduler: slurm
      SlurmSettings:
        CustomSlurmSettingsIncludeFile: s3://MYBUCKET/slurm-settings.conf
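As a rough sketch, the first two steps could look like this in a shell. The file name and bucket are placeholders, and the two settings are only examples of cluster-wide parameters. The upload is wrapped in a function because it needs the AWS CLI and valid credentials.

```shell
#!/usr/bin/env bash
# Placeholder names (assumptions): match them to your own bucket and file.
SETTINGS_FILE="slurm-settings.conf"
BUCKET="MYBUCKET"

# Write cluster-wide settings in slurm.conf syntax, one Key=Value per line.
cat > "$SETTINGS_FILE" <<'EOF'
MaxJobCount=50000
KillWait=300
EOF

# Upload the file so the cluster can fetch it (needs the AWS CLI and credentials).
upload_settings() {
  aws s3 cp "$SETTINGS_FILE" "s3://${BUCKET}/${SETTINGS_FILE}"
}
```

Calling upload_settings copies the file to the bucket. The resulting S3 URL is the value you would give to CustomSlurmSettingsIncludeFile.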

There are two concerns you should be aware of when using a standalone file.

First, the URL must be accessible to AWS ParallelCluster. If you’re using an HTTPS URL, it should not require any form of authentication. If you are using an S3 URL, the file should be accessible from the head node. You can achieve this by setting the file to be publicly-readable or by adding an S3 access configuration for the URL to HeadNode:Iam:S3Access as described in the AWS ParallelCluster documentation.
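If you want a quick way to test accessibility yourself, a small helper like the one below can be run on the head node (or, for an unauthenticated HTTPS URL, from any machine). This is our own illustrative sketch, not something ParallelCluster provides: the function name is hypothetical, and it simply tries to read the file with the AWS CLI or curl.

```shell
#!/usr/bin/env bash
# Return 0 if the given settings URL can be read with the current
# credentials, and non-zero otherwise.
can_read_settings() {
  local url="$1"
  case "$url" in
    # Stream the object to stdout and discard it; fails if it is not readable.
    s3://*)    aws s3 cp "$url" - >/dev/null ;;
    # Fetch the file and discard it; --fail makes HTTP errors return non-zero.
    https://*) curl --fail --silent --output /dev/null "$url" ;;
    *)         echo "unsupported URL scheme: $url" >&2; return 2 ;;
  esac
}

# Example:
#   can_read_settings "s3://MYBUCKET/slurm-settings.conf" && echo "readable"
```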

Second, an external settings file can only affect cluster-wide settings. If you need to modify queue- or compute resource-specific settings, use key-value pairs instead. Related to this limitation, key-value pairs and standalone files are mutually exclusive: you can use only one method to configure any given cluster, and you’ll get a validation error from the CLI or UI if you try to use both at once.

We recommend you choose the key-value approach over a standalone file if you are new to customizing Slurm, unless you have an overarching need to use a configuration file. This will help make sure that you are not modifying any Slurm configuration parameters that could severely impact the native integration of Slurm in ParallelCluster.

Validation and debugging

Beyond checking the deny-list for restricted parameters, AWS ParallelCluster doesn’t validate the syntax or values you provide in your custom Slurm settings. It is your responsibility to make sure that any configuration changes you make to Slurm work as you intend them to. If there are actions such as restarting services that we don’t cover in standard AWS ParallelCluster operations, it’s also your responsibility to make sure those happen.

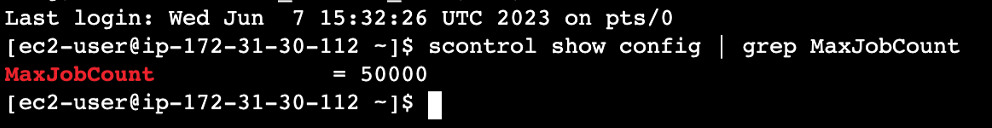

If you want to confirm that ParallelCluster is correctly incorporating your custom Slurm settings into the Slurm configuration, you can inspect the configuration directly. To do this, log into the head node and use the scontrol command. For example, on a cluster created from our example configuration, scontrol show config | grep MaxJobCount will show the line in the Slurm configuration that was generated using your CustomSlurmSettings (Figure 1).

Figure 1: You can use scontrol to inspect the Slurm configuration

You can also inspect the contents of various logfiles under /var/log/parallelcluster as well as /var/log/slurmctld.log to validate your cluster’s behavior against your custom settings.
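If you check several settings regularly, you could wrap the scontrol lookup in a small helper. The sketch below is our own (the function name is hypothetical) and assumes scontrol show config prints one "Name = value" pair per line, as in Figure 1.

```shell
#!/usr/bin/env bash
# Print the live value of each named setting from the running Slurm
# configuration. Returns non-zero if any name is not found.
show_slurm_settings() {
  local name status=0
  for name in "$@"; do
    scontrol show config | grep -E "^${name}[[:space:]]*=" || status=1
  done
  return "$status"
}

# Example, on the head node:
#   show_slurm_settings MaxJobCount
```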

Additional configuration files

There are other configuration files associated with Slurm such as gres.conf and cgroup.conf. It’s not currently possible to modify them using the AWS ParallelCluster custom Slurm settings mechanism. You can, however, use custom bootstrap actions to do so. Keep in mind the same shared responsibility model applies to changes you make to these files as for standard custom settings.
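To give a sense of what such a bootstrap script might contain, here is a minimal sketch that sets one key in cgroup.conf. Treat it as an illustration only: the helper name is hypothetical, the file path is our assumption about where Slurm’s configuration lives on a ParallelCluster node, and the key is just an example. You would still need to test the change and restart any affected services yourself.

```shell
#!/bin/bash
# Hypothetical custom bootstrap script: sets one key in cgroup.conf.
# The default path is an assumption; confirm it on your own cluster.
CGROUP_CONF="${CGROUP_CONF:-/opt/slurm/etc/cgroup.conf}"

# Set KEY=VALUE in a Slurm-style config file by dropping any existing
# line for KEY and appending the new assignment at the end.
set_conf_value() {
  local file="$1" key="$2" value="$3" tmp
  tmp="$(mktemp)"
  # Keep every line except an existing assignment of KEY.
  grep -v -E "^${key}[[:space:]]*=" "$file" > "$tmp" || true
  echo "${key}=${value}" >> "$tmp"
  # Overwrite the contents in place so ownership and mode are preserved.
  cat "$tmp" > "$file"
  rm -f "$tmp"
}

# Example call (commented out so that sourcing this file changes nothing):
#   set_conf_value "$CGROUP_CONF" ConstrainRAMSpace yes
```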

Conclusion

AWS ParallelCluster 3.6.0 enables you to customize the settings for the Slurm scheduler in your cluster configuration file. This can help you tweak the behavior of your cluster to meet your specific use cases. To use this new capability, you’ll need to update your ParallelCluster installation to version 3.6.0 or higher, then provide your settings either as key-value pairs or in a standalone settings file.

Try out custom Slurm configurations and let us know how we can improve them, or any other feature of AWS ParallelCluster. You can reach us on Twitter at @TechHPC or by email at ask-hpc@amazon.com.