AWS for Industries

Building custom connectors using OSDU and Amazon AppFlow Custom Connector SDK

About OSDU

OSDU is an industry initiative that aims to create an open-source, technology-agnostic data platform for the energy industry. The OSDU platform supports most data types found in the energy industry. Its components include a common data model, APIs, and data governance frameworks that facilitate integration and interoperability of energy data across systems and applications. To learn more about energy data insights for the OSDU data platform on Amazon Web Services (AWS), visit here.

Application integration and OSDU

OSDU provides support for commonly used industry data types, including well data, seismic data, and reservoir data. OSDU offers a set of APIs for data ingestion, querying, retrieval, and metadata management, facilitating efficient data exchange, system interoperability, and ecosystem expansion.

Integration is crucial for the long-term success of OSDU because it facilitates the following:

- Data standardization: Data from various sources and systems can be standardized to a common data model, which improves data consistency and quality across the OSDU platform and reduces data silos, duplication, and discrepancies.

- Interoperability: Interoperability can be achieved between different systems, applications, and organizations to seamlessly exchange and share energy data within the OSDU platform.

- Workflows: Data exchange and near-real-time access to energy data can be streamlined.

- Extensibility: New data sources, applications, and technologies can be integrated as the needs of the industry evolve.

In this blog post, we will explore how the AWS solution Amazon AppFlow—which automates data flows between software as a service (SaaS) and AWS services—can be used with OSDU to facilitate different integration patterns to deliver on the results outlined above.

Amazon AppFlow

Using Amazon AppFlow, you can automate bidirectional data flows between SaaS applications and AWS services in just a few clicks. The data flows can be run at the frequency you choose, whether on a schedule, in response to a business event, or on demand. Amazon AppFlow simplifies data preparation with transformations, partitioning, and aggregation. The service is fully managed, meaning users are not required to provision any infrastructure, which makes it easy to use and helps customers to focus on business needs and outcomes. The process is outlined in the following diagram:

Figure 1. The Amazon AppFlow process


A flow can be run on demand, on a schedule, or in response to a business event:

- On demand: Users can run data flows in response to an action, either manually by choosing “Run Flow” on the AWS console or programmatically through an API or software development kit (SDK) call.

- Event-based: Users can run data flows in response to business events, like the creation of a sales opportunity, the status change of a support ticket, or the completion of a registration form.

- Scheduled: Users can run data flows on a routine schedule at chosen time intervals, for example to keep data in sync.
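As a minimal sketch of the programmatic on-demand option, the function below calls the AppFlow `start_flow` operation on a client object that is assumed to behave like `boto3.client("appflow")`. The flow name `osdu-to-s3-sync` and the `FakeAppFlowClient` stand-in are hypothetical and exist only so the example runs without AWS credentials.

```python
from typing import Any, Dict


def run_flow_on_demand(appflow_client: Any, flow_name: str) -> Dict[str, Any]:
    """Start one execution of an existing on-demand flow.

    `appflow_client` is assumed to expose `start_flow(flowName=...)`,
    as the boto3 AppFlow client does.
    """
    response = appflow_client.start_flow(flowName=flow_name)
    return {
        "flow_status": response.get("flowStatus"),
        "execution_id": response.get("executionId"),
    }


class FakeAppFlowClient:
    """Hypothetical stand-in that mimics the shape of a start_flow response."""

    def start_flow(self, flowName: str) -> Dict[str, Any]:
        return {
            "flowArn": f"arn:aws:appflow:example:flow/{flowName}",  # made-up ARN
            "flowStatus": "Active",
            "executionId": "example-execution-id",
        }


if __name__ == "__main__":
    # With real credentials, replace the fake with:
    #   import boto3; client = boto3.client("appflow")
    print(run_flow_on_demand(FakeAppFlowClient(), "osdu-to-s3-sync"))
```

Passing the client in as a parameter keeps the function testable and leaves credential handling to the caller.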

Amazon AppFlow and AWS energy data insights for OSDU integration patterns

OSDU to AWS

Amazon AppFlow can facilitate data syncing between OSDU and AWS services. For example, Amazon AppFlow can be used to sync a machine learning (ML) dataset from OSDU to Amazon Simple Storage Service (Amazon S3)—an object storage service offering cutting-edge scalability, data availability, security, and performance—so that it can be accessed by Amazon SageMaker, a service used to build, train, and deploy ML models for any use case with fully managed infrastructure, tools, and workflows. This data syncing provides the flexibility to combine the data available in OSDU with AWS services to obtain new insights. Amazon AppFlow can also be used to bring data from other applications to Amazon services, effectively combining OSDU data and non-OSDU data.

Figure 2. Data flow between OSDU and Amazon AppFlow


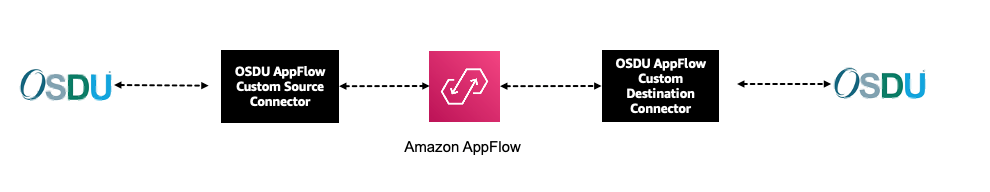

OSDU to OSDU

In a scenario where an organization has globally distributed teams working across multiple regions, deploying multiple instances of OSDU can help reduce the time to access large files, minimizing network latency and improving the user experience. Amazon AppFlow can then be used to sync data between the two instances. Amazon AppFlow provides the flexibility to define the data to be synced, the frequency of syncing, and the events that trigger the sync.

Figure 3. Data flow between OSDU instances


OSDU to custom in-house and AWS ISV Applications

Amazon AppFlow can be used to connect OSDU to custom in-house or independent software vendor (ISV) applications, so that applications that do not natively support OSDU can still share data with it. In-house application development teams can use Amazon AppFlow to build custom connectors to interact with OSDU. ISVs can build connectors and publish them on the AWS Marketplace.

Figure 4. Data flow between in-house or ISV applications and OSDU


Amazon AppFlow Custom Connector SDK

Using Amazon AppFlow, you can easily create data flows for services that are not natively supported using the Custom Connector SDK. By using custom connectors, you gain the ability to interact with these services directly from the Amazon AppFlow console, just like you would with any of the connectors that are natively supported.

The open-source Custom Connector SDK now makes it easier to build a custom connector using the Python SDK or Java SDK. The Custom Connector SDK provides the ability to integrate with private API endpoints, proprietary applications, or other cloud services. It provides access to all available managed integrations and the ability to build your own custom integration.

You can deploy custom connectors in different ways:

- Private: The connector is available only inside the AWS account where it is deployed.

- Shared: The connector can be shared for use with other AWS accounts.

- Public: The connector can be published on the AWS Marketplace. For more information, refer to Sharing AppFlow connectors via AWS Marketplace.

How to create an Amazon AppFlow OSDU Custom Connector

The process for building, deploying, and using an Amazon AppFlow custom connector is as follows:

- Implement your data source APIs using the Custom Connector SDK and deploy the package as a function on AWS Lambda, a serverless, event-driven compute service.

- Register the custom connector using the Amazon AppFlow console or AWS CLI.

- Create one or more connections using the registered connector.

- Create one or more flows using the registered connector and connections.
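The registration step above can be sketched with the AppFlow `register_connector` operation. This is an illustration under stated assumptions, not a complete deployment script: the connector label, the Lambda ARN, and the `FakeAppFlowClient` class are hypothetical, and the client passed in is assumed to behave like `boto3.client("appflow")`.

```python
from typing import Any, Dict


def build_register_request(label: str, lambda_arn: str,
                           description: str = "") -> Dict[str, Any]:
    """Build the keyword arguments for AppFlow's register_connector call."""
    request: Dict[str, Any] = {
        "connectorLabel": label,
        "connectorProvisioningType": "LAMBDA",
        "connectorProvisioningConfig": {"lambda": {"lambdaArn": lambda_arn}},
    }
    if description:
        request["description"] = description
    return request


def register_connector(appflow_client: Any, label: str, lambda_arn: str) -> str:
    """Register the Lambda-backed connector and return its ARN."""
    response = appflow_client.register_connector(
        **build_register_request(label, lambda_arn)
    )
    return response["connectorArn"]


class FakeAppFlowClient:
    """Hypothetical stand-in so the sketch runs without AWS credentials."""

    def __init__(self) -> None:
        self.last_request: Dict[str, Any] = {}

    def register_connector(self, **kwargs: Any) -> Dict[str, Any]:
        self.last_request = kwargs
        # Made-up ARN, returned only to mimic the response shape.
        return {"connectorArn": "arn:aws:appflow:example:connector/"
                                + kwargs["connectorLabel"]}


if __name__ == "__main__":
    client = FakeAppFlowClient()
    lambda_arn = "arn:aws:lambda:example:function:osdu-connector"  # placeholder
    print(register_connector(client, "OsduConnector", lambda_arn))
```

After registration, connections and flows are created against the connector label, either in the console or through the corresponding API calls.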

Figure 5. Architecture using custom connectors


The Amazon AppFlow custom connector function for AWS Lambda must implement the following three classes from the Custom Connector SDK, along with the methods within each class. The method names that follow are taken from the Amazon AppFlow Custom Connector Python SDK.

ConfigurationHandler:

ConfigurationHandler allows the connector to declare its runtime settings and authentication configuration using the describe_connector_configuration method. This information is fetched during the connector registration process and stored in the Amazon AppFlow connector registry. Using this information, Amazon AppFlow renders the connection information to the user.

describe_connector_configuration method: describe_connector_configuration defines the capabilities of the connector, such as supported modes, supported Auth types, scheduling frequencies, and runtime settings of different scopes (source mode, destination mode, both, and connector profile).

ConfigurationHandler also includes the implementation for the following callbacks:

validate_connector_runtime_settings and validate_credentials methods: After a connector is registered successfully, the user can create a connector profile according to the supported Auth types, and flows according to the supported modes. Creating a connector profile involves validating the user credentials and the connector profile runtime settings. During this process, Amazon AppFlow invokes validate_credentials to validate the user credentials and validate_connector_runtime_settings with the connector_profile scope to validate the runtime settings.
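To make the three ConfigurationHandler methods concrete, here is a simplified, self-contained sketch. It does not import the Custom Connector SDK: requests and responses are plain dictionaries rather than the SDK's own types, and the setting names (`instance_url`, `data_partition_id`), the `access_token` credential key, and the capability values are assumptions chosen for illustration.

```python
from typing import Any, Dict, List

# Hypothetical runtime settings an OSDU connection might require.
REQUIRED_PROFILE_SETTINGS: List[str] = ["instance_url", "data_partition_id"]


class OsduConfigurationHandler:
    """Simplified stand-in for the SDK's ConfigurationHandler interface."""

    def describe_connector_configuration(self, request: Dict[str, Any]) -> Dict[str, Any]:
        # Declares what the connector supports; read at registration time.
        return {
            "is_success": True,
            "connector_modes": ["SOURCE", "DESTINATION"],
            "supported_auth_types": ["OAUTH2"],
            "supported_trigger_types": ["ON_DEMAND", "SCHEDULED"],
            "connector_profile_settings": list(REQUIRED_PROFILE_SETTINGS),
        }

    def validate_connector_runtime_settings(self, request: Dict[str, Any]) -> Dict[str, Any]:
        # Only the connector_profile scope is checked in this sketch.
        if request.get("scope") != "connector_profile":
            return {"is_success": True}
        settings = request.get("settings", {})
        missing = [key for key in REQUIRED_PROFILE_SETTINGS if not settings.get(key)]
        if missing:
            return {"is_success": False,
                    "error": f"Missing settings: {', '.join(missing)}"}
        return {"is_success": True}

    def validate_credentials(self, request: Dict[str, Any]) -> Dict[str, Any]:
        # A real connector would call the target service to test the credentials;
        # here we only check that a token was supplied.
        token = request.get("credentials", {}).get("access_token")
        if not token:
            return {"is_success": False, "error": "Missing access token"}
        return {"is_success": True}


if __name__ == "__main__":
    handler = OsduConfigurationHandler()
    print(handler.validate_connector_runtime_settings(
        {"scope": "connector_profile",
         "settings": {"instance_url": "https://osdu.example.com"}}))
```

A production connector would subclass the SDK's handler and return its response objects; the control flow would be similar.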

MetadataHandler:

Amazon AppFlow works with the metadata of the object to create a query for fetching the data from the source and applying the transformations given in the flow tasks. Amazon AppFlow allows customers to interact with the entities that are returned from the list_entities method, where the connector developer may implement fine-grained control. Amazon AppFlow also ensures that flow tasks use only the fields and field properties defined by describe_entity when applying transformations such as mapping, masking, and filtering during the data processing step of the flow execution. The properties of each field, such as datatype, queryable, and updatable, help customers define filter conditions, mapping tasks, and flow triggers correctly.

list_entities method: list_entities is called during flow creation and returns a list of schema definitions (Entity). This Entity is defined for each supported API.

describe_entity method: describe_entity is called during data field mapping when creating a flow and returns the field definitions for the entity.
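The two MetadataHandler methods can be sketched against a small in-memory catalog. This is a simplified stand-in that does not import the SDK: the `well` and `wellbore` entities, their fields, and the dictionary-based request and response shapes are all hypothetical.

```python
from typing import Any, Dict, List

# Hypothetical catalog: entity name -> field definitions.
ENTITY_CATALOG: Dict[str, List[Dict[str, Any]]] = {
    "well": [
        {"name": "id", "data_type": "String", "queryable": True, "updatable": False},
        {"name": "name", "data_type": "String", "queryable": True, "updatable": True},
    ],
    "wellbore": [
        {"name": "id", "data_type": "String", "queryable": True, "updatable": False},
        {"name": "well_id", "data_type": "String", "queryable": True, "updatable": True},
    ],
}


class OsduMetadataHandler:
    """Simplified stand-in for the SDK's MetadataHandler interface."""

    def list_entities(self, request: Dict[str, Any]) -> Dict[str, Any]:
        # Called during flow creation: return every entity the user may pick.
        entities = [{"entity_identifier": name, "label": name.title()}
                    for name in sorted(ENTITY_CATALOG)]
        return {"is_success": True, "entities": entities}

    def describe_entity(self, request: Dict[str, Any]) -> Dict[str, Any]:
        # Called during field mapping: return the fields of one entity.
        name = request.get("entity_identifier", "")
        fields = ENTITY_CATALOG.get(name)
        if fields is None:
            return {"is_success": False, "error": f"Unknown entity: {name}"}
        return {"is_success": True, "entity_identifier": name, "fields": fields}


if __name__ == "__main__":
    handler = OsduMetadataHandler()
    print(handler.list_entities({}))
    print(handler.describe_entity({"entity_identifier": "well"}))
```

In a real connector, the catalog would typically be derived from the target service's schema API rather than hard-coded.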

RecordHandler:

Flow execution for a custom connector in Amazon AppFlow depends mostly on the implementation of the RecordHandler interface. This is where we expect customers to implement the core functionality of making requests to the source or destination application in order to get or post data.

query_data method: query_data is called when the flow is run to retrieve records from the source for the fields defined in the flow.

write_data method: write_data is called when the flow is run to write the fields to the destination. It is invoked when the custom connector is the destination of the flow, for example when the flow writes to another OSDU instance.
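A simplified sketch of the two RecordHandler methods follows. It does not import the SDK and does not call OSDU: an in-memory dictionary stands in for the source or destination application, and the request keys (`entity_identifier`, `selected_field_names`, `records`) and the choice to exchange records as JSON strings are assumptions made for illustration.

```python
import json
from typing import Any, Dict, List


class OsduRecordHandler:
    """Simplified stand-in for the SDK's RecordHandler interface."""

    def __init__(self, store: Dict[str, List[Dict[str, Any]]]) -> None:
        # `store` maps an entity name to its records; a real connector
        # would issue HTTP requests to the application instead.
        self._store = store

    def query_data(self, request: Dict[str, Any]) -> Dict[str, Any]:
        # Return records for one entity, projected onto the selected fields.
        entity = request["entity_identifier"]
        selected = request.get("selected_field_names")
        records: List[str] = []
        for record in self._store.get(entity, []):
            if selected:
                record = {name: record.get(name) for name in selected}
            records.append(json.dumps(record))
        return {"is_success": True, "records": records}

    def write_data(self, request: Dict[str, Any]) -> Dict[str, Any]:
        # Append incoming records to the destination entity.
        entity = request["entity_identifier"]
        incoming = [json.loads(raw) for raw in request.get("records", [])]
        self._store.setdefault(entity, []).extend(incoming)
        results = [{"record_id": rec.get("id"), "is_success": True}
                   for rec in incoming]
        return {"is_success": True, "write_record_results": results}


if __name__ == "__main__":
    source = OsduRecordHandler({"well": [{"id": "w1", "name": "Alpha", "depth": 10}]})
    target = OsduRecordHandler({})
    queried = source.query_data(
        {"entity_identifier": "well", "selected_field_names": ["id", "name"]})
    print(target.write_data(
        {"entity_identifier": "well", "records": queried["records"]}))
```

The usage at the bottom mirrors the OSDU to OSDU pattern: records queried from one handler are passed to the write_data method of another.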

Summary

In this blog post, we have explored how Amazon AppFlow can facilitate seamless and secure data integration across three integration patterns: OSDU to AWS, OSDU to OSDU, and OSDU to custom in-house and ISV applications.

Amazon AppFlow simplifies the data integration process for OSDU, making it easier for organizations to seamlessly exchange and share energy data with the OSDU platform. The service does this by reducing the development overhead required to build, test, and operate integration workflows. Amazon AppFlow scales up without the need to plan or provision resources, so you can move large volumes of data without breaking them down into multiple batches. Amazon AppFlow uses a highly available architecture with redundant, isolated resources to prevent any single points of failure while running within the resilient AWS infrastructure.