AWS for M&E Blog

Serverless fixity for digital preservation compliance

When The Digital Dilemma was published in 2007, Amazon had just started Amazon Web Services with the release of Amazon Simple Storage Service (Amazon S3) in the cloud. The industry was struggling with the short lifespan of digital tape formats, affordable disaster recovery, and technology refresh standards. Since that time, AWS has established itself as a leader in digital preservation, matching tape-level economics while providing 11 nines of durability and removing the overhead of a technology refresh schedule. AWS now manages many of the world’s most prominent archives, several of which have indefinite retention requirements, including the U.S. National Archives.

To help maintain durability, AWS storage performs periodic background checking that confirms the integrity of stored content. While AWS performs this function on behalf of its customers as part of the service, some customers must maintain and use their own integrity or fixity checking processes as part of a content distribution package or internal standard. Several checksum algorithms are used in the industry for fixity checking; however, MD5 and SHA1 are the dominant algorithms used in the media and entertainment industry.

Broadcast and media production workflows execute a checksum as a standard part of a file copy, usually associated with the movement of a media file from one location to another. This enforces a valid “chain of custody” for managing content, as corrupted files can lead to a massive waste of time and resources.

The National Digital Stewardship Alliance (NDSA) offers defined tiers of digital preservation and has dedicated a row to fixity checking for protecting media archives.

The process of running a checksum against video content can be time-consuming due to the sheer size of video content. It’s not uncommon for a checksum process on a 100 gigabyte file to take up to 30 minutes on a standard server configuration. Content aggregation platforms set up to support incoming content will frequently dedicate servers to run 24×7 to support the ingest process and checksum files as they arrive. This creates a bottleneck for large media companies, as ingest is only as fast as the number of servers dedicated to the task allows. Aggregation platforms may also waste large amounts of resources because they are sized to manage peak capacity needs.

With regard to the NDSA levels of digital preservation, AWS has options to ensure an archive is protected at every level. AWS recommends that customers with high preservation requirements also adopt best practices:

- Content integrity should be validated after upload or copy operations, and validated again during read operations.

- Content integrity should be validated for chain of custody verification, especially where regulatory compliance is necessary.
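As an illustration of the two practices above, here is a minimal sketch of a fixity check: it streams a file, computes MD5 and SHA1 digests, and compares them against previously recorded values. The function names (`compute_digests`, `verify_fixity`) and the block size are hypothetical choices for this example and are not part of any AWS solution.

```python
import hashlib
import hmac


def compute_digests(path, algorithms=("md5", "sha1"), block_size=1024 * 1024):
    """Stream a file once and return hex digests for each requested algorithm."""
    hashers = {name: hashlib.new(name) for name in algorithms}
    with open(path, "rb") as f:
        # Read fixed-size blocks so large media files never load fully into memory.
        for block in iter(lambda: f.read(block_size), b""):
            for hasher in hashers.values():
                hasher.update(block)
    return {name: hasher.hexdigest() for name, hasher in hashers.items()}


def verify_fixity(path, expected):
    """Compare freshly computed digests with recorded ones.

    `expected` maps an algorithm name to the hex digest recorded earlier,
    for example at upload time. Returns a dict of algorithm -> bool.
    """
    actual = compute_digests(path, algorithms=tuple(expected))
    return {
        name: hmac.compare_digest(actual[name], expected[name].lower())
        for name in expected
    }
```

Running `verify_fixity` once after an upload or copy and again on read gives two comparable results for the same recorded digests, which is the kind of evidence a chain of custody record would typically hold.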

In 2019, AWS launched a serverless ingest solution known as Media2Cloud. It’s a solution designed to support the movement of large-scale content and leverages services like AWS Lambda and AWS Step Functions to provide an automated, elastic ingest workflow that takes video content and performs a series of steps to create proxies and standardized metadata for managing the assets in AWS. Part of this solution is a serverless checksum solution for MD5. AWS recently added SHA1 to this solution as well, creating the ability for serverless fixity for digital preservation compliance.

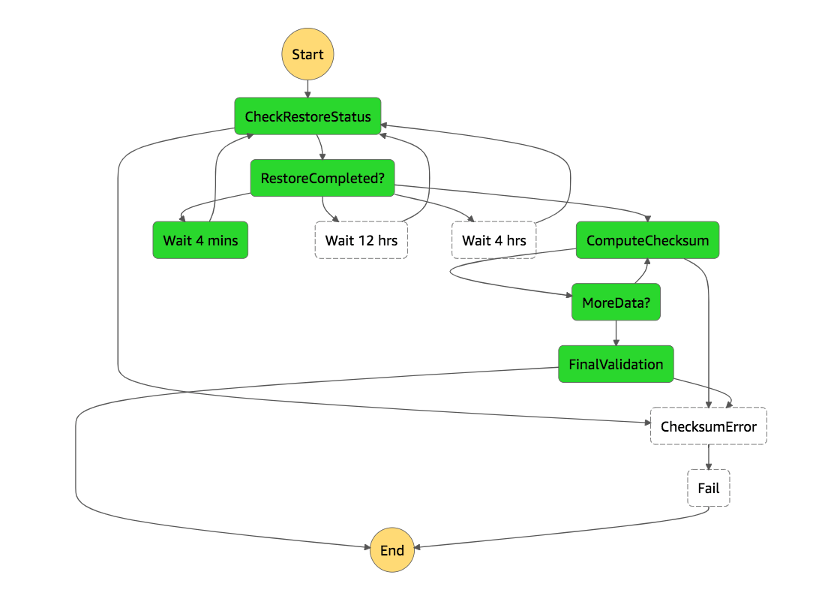

AWS Step Functions makes it simple to support checksum processing on large video files. AWS Lambda is an event-driven service that can run a process for up to fifteen minutes. This would normally be a problem for large video files; however, by using AWS Step Functions, a simple loop state machine is created that chunks large video files and passes the intermediate hash of each chunk on to the processing of the next sequential chunk of the video file. The workflow diagram below is automatically created as JSON is written to create the loop for the checksum.
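The loop can be pictured with a small local simulation. This is only a sketch under stated assumptions, not the solution's actual code: `checksum_step` stands in for one Lambda invocation, the `state` dict stands in for the data Step Functions passes between iterations, and `CHUNK_SIZE` is deliberately tiny. Python's `hashlib` does not expose a serializable intermediate state, so the sketch keeps the hash object in memory; a real workflow would need a hash implementation whose state can be saved and restored between invocations.

```python
import hashlib

CHUNK_SIZE = 4  # bytes per iteration; tiny on purpose for illustration


def checksum_step(data, state, hasher):
    """One loop iteration: hash the next chunk and advance the offset."""
    start = state["offset"]
    chunk = data[start:start + CHUNK_SIZE]
    hasher.update(chunk)
    offset = start + len(chunk)
    # "done" plays the role of the Choice state that decides whether to loop again.
    return {"offset": offset, "done": offset >= len(data)}


def run_checksum_loop(data, algorithm="md5"):
    """Drive the loop until every chunk is hashed; return (digest, iterations)."""
    hasher = hashlib.new(algorithm)  # held in memory here (simplifying assumption)
    state = {"offset": 0, "done": len(data) == 0}
    iterations = 0
    while not state["done"]:
        state = checksum_step(data, state, hasher)
        iterations += 1
    return hasher.hexdigest(), iterations
```

Because the hash is updated chunk by chunk in order, the final digest is identical to hashing the whole file in one pass, while each iteration only has to handle a bounded amount of data.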

The serverless fixity for digital preservation compliance solution addresses two major issues with a media workflow. The first is the avoidance of queuing of checksum jobs waiting for finite resources. In most ingest workflows, content is transferred and received in batches, not as a steady stream. If you’re managing your own servers, files need to wait for available resources to go through the fixity process. This can delay critical workflows like digital dailies that need to process content within minutes or hours of arrival. Candidly, the fixity process is often skipped in a dailies workflow due to this technical bottleneck. The downstream impact is a broken chain of custody, leaving open the possibility of corruption introduced during capture going undetected in the workflow. A serverless checksum uses the elasticity of serverless workflows to process all video files in parallel and minimize processing time.

The following graphic reflects three different scenarios for processing an MD5 fixity checksum: one is serverless, and the other two show processing time based on the number of dedicated checksum servers used to process files. If you receive ten 200 gigabyte files that each take close to an hour to process, running the checksums could take ten hours to complete on one server or five hours on two. Using a serverless infrastructure takes less than an hour to complete the same processing, regardless of the number of files being ingested. An added benefit is that you no longer pay for unused processing time. Running servers 24×7 is costly if your workflow has extended downtime.
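The arithmetic behind these scenarios can be sketched as follows. The model is a simplifying assumption: every file takes the same time, each server handles one file at a time, and the serverless case (`servers=None`) processes all files in parallel. The function name `wall_clock_hours` is hypothetical.

```python
import math


def wall_clock_hours(num_files, hours_per_file, servers=None):
    """Estimate elapsed time to checksum a batch of equally sized files.

    servers=None models the serverless case, where every file is
    processed in parallel and the batch finishes in one file's time.
    """
    if num_files == 0:
        return 0
    if servers is None:
        return hours_per_file
    # Each server works through its share of files one after another.
    batches = math.ceil(num_files / servers)
    return batches * hours_per_file


one_server = wall_clock_hours(10, 1, servers=1)   # 10 hours
two_servers = wall_clock_hours(10, 1, servers=2)  # 5 hours
serverless = wall_clock_hours(10, 1)              # 1 hour
```

Under this model the dedicated-server time grows with the size of the batch, while the serverless time stays at the duration of a single file.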

AWS is focused on providing a best-in-class service for ensuring chain of custody file integrity on media workflows as well as providing a robust service for archive preservation.

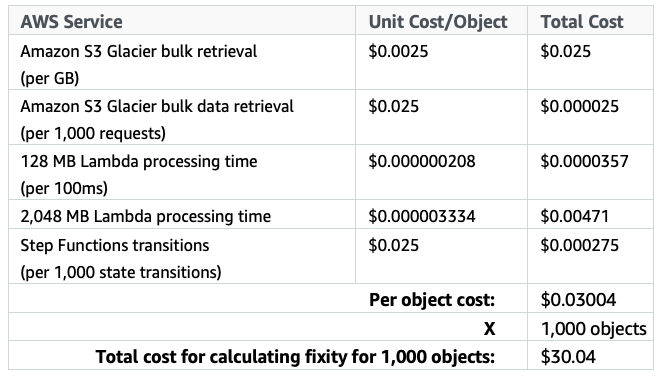

For example, the cost for running this solution with default settings in the US East (N. Virginia) Region is approximately $30.04 per month for 10 TB of data in total. This cost estimate assumes the following:

- The solution calculates fixity for 1,000 objects with each object being 10 GB in size.

- The objects are stored in Amazon S3 Glacier.

- For each fixity request, the solution consumes:

  - 10 GB of S3 Glacier bulk retrieval data

  - 1 S3 Glacier bulk data retrieval (restore) request

  - 17,185 ms of 128 MB AWS Lambda processing time

  - 141,263 ms of 2014 MB AWS Lambda processing time

  - 11 AWS Step Functions state transitions

Note: The Lambda processing time and Step Functions transitions in this example take the average of 10 iterations of the fixity check process. The actual Lambda processing time and Step Functions transitions may vary.
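To see how the per-request figures scale to the full 1,000-object example, the sketch below totals the usage quantities only. It deliberately applies no prices, since rates are not given here and change over time. The GB-second conversion (duration in seconds times memory in GB) is the usual way Lambda compute is metered; the memory sizes are taken as written in the list above, and all names in the code are hypothetical.

```python
NUM_OBJECTS = 1_000
OBJECT_SIZE_GB = 10

# Per-request Lambda usage as (duration in ms, memory in MB), copied from the list above.
LAMBDA_USAGE = [(17_185, 128), (141_263, 2014)]
STATE_TRANSITIONS_PER_REQUEST = 11


def lambda_gb_seconds(duration_ms, memory_mb):
    """Convert one Lambda invocation's usage to GB-seconds."""
    return (duration_ms / 1000.0) * (memory_mb / 1024.0)


per_request_gb_seconds = sum(lambda_gb_seconds(ms, mb) for ms, mb in LAMBDA_USAGE)

totals = {
    "glacier_retrieval_gb": NUM_OBJECTS * OBJECT_SIZE_GB,
    "glacier_restore_requests": NUM_OBJECTS,
    "lambda_gb_seconds": NUM_OBJECTS * per_request_gb_seconds,
    "state_transitions": NUM_OBJECTS * STATE_TRANSITIONS_PER_REQUEST,
}
```

With these inputs, each fixity request uses roughly 280 GB-seconds of Lambda compute, and the retrieval total of 10,000 GB matches the 10 TB stated in the estimate. Multiplying each total by the current rate for the Region gives a cost figure to compare against the published estimate.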

For more information about serverless fixity for digital preservation compliance, visit the AWS Solutions page.