AWS Cloud Operations Blog

Ingest AWS Config data into Splunk with ease

AWS Config continuously monitors and records your AWS resource configurations and allows you to automate the evaluation of recorded configurations against your desired configurations. Today, many customers choose to use Splunk as their centralized monitoring system. In addition to displaying Amazon CloudWatch logs and metrics in Splunk dashboards, you can use AWS Config data to bring security and configuration management insights to your stakeholders.

The current recommended way to get AWS Config data to Splunk is a pull strategy. This involves configuring services like Amazon Simple Notification Service (SNS) and Amazon Simple Queue Service (SQS) with a dead letter queue, and installing and setting up the Splunk Add-on application that polls for messages in an SQS queue. Data delivery is guaranteed, but you have to wait for the next poll job to receive AWS Config information. In addition, this approach requires operations effort to manage and orchestrate the dedicated pollers on the Splunk side, which continuously poll the SQS queues. With this setup, customers pay for the infrastructure even when it’s idle.

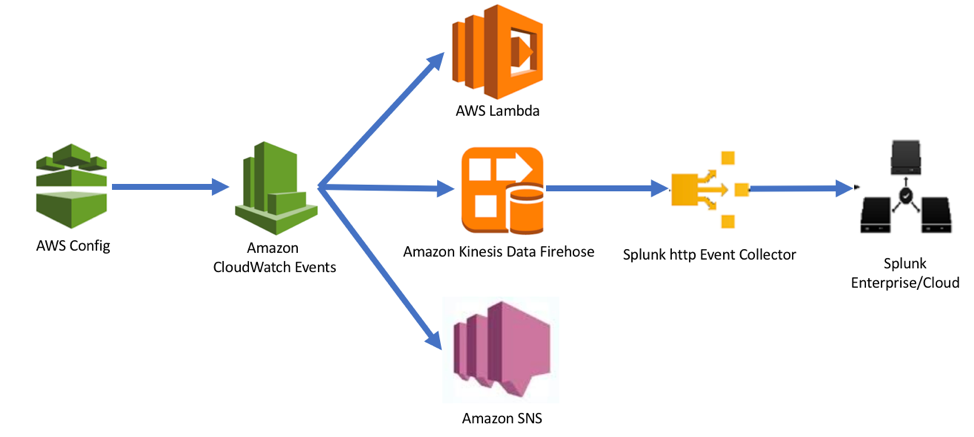

In late March 2018, Amazon Web Services announced a new integration between AWS Config and Amazon CloudWatch Events, which opened up a new and more efficient path to push AWS Config data into Splunk. The push approach, which uses Amazon CloudWatch Events and Amazon Kinesis Data Firehose, allows you to achieve near real-time data ingestion into Splunk. Prior to March 2018, AWS Config sent both configuration and compliance change notifications only via Amazon SNS, to a single topic. This made it difficult to filter the various types of notifications. With the CloudWatch Events integration, you can now filter AWS Config events by event type, resource type, or resource ID. This integration also allows you to route specific events to specific targets, such as AWS Lambda functions or Amazon Kinesis Data Firehose, and take appropriate action. For example, if you set up an AWS Config rule to check for wide-open security groups, with this new integration path only the AWS Config information relevant to that rule is delivered to your Kinesis Data Firehose delivery stream.

Key concepts to know before you set up an end-to-end integration:

AWS Config: Provides a detailed view of the configuration of AWS resources in your AWS account. This is best illustrated with an example: If a specific Amazon S3 bucket is made public or if security group changes are made that open up more ports, AWS Config captures such configuration changes in near real time. You can set up notifications or call Lambda functions through SNS to take specific actions. AWS Config also includes information such as how the resources are related to one another and how they were configured in the past so that you can see how the configurations and relationships change over time. For more details on how AWS Config works, refer to the documentation.

Amazon CloudWatch Events: Delivers a near real-time stream of system events that describe changes in AWS resources. CloudWatch Events supports a number of AWS services. Using CloudWatch Events you can create event rules that are triggered based on the information captured by AWS CloudTrail, which is another service that automatically records events such as AWS Service API calls.

Amazon Kinesis Data Firehose: Is a fully managed service for delivering real-time streaming data to destinations such as Amazon Simple Storage Service (Amazon S3), Amazon Redshift, Amazon Elasticsearch Service (Amazon ES), and Splunk.

Splunk HEC cluster: Splunk has built a rich portfolio of components to access data from various applications, index huge files, and parse them to make sense of the data. Splunk developed HTTP Event Collector (HEC), which lets customers send data and application events to Splunk clusters over HTTP and secure HTTPS protocols. This process eliminates the need for a Splunk forwarder and enables sending application events in real time.
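To make the HEC concept concrete, the following minimal Python sketch builds (but does not send) an HTTPS request for an HEC endpoint. The host name, token, and payload are placeholders, and port 8088 is assumed as the HEC port; your Splunk deployment may use a different port, and may also require a channel identifier header when indexer acknowledgment is enabled.

```python
import json
import urllib.request


def build_hec_request(host, token, payload, raw=True, port=8088):
    """Build an HTTPS POST for a Splunk HEC endpoint without sending it.

    host, token, and port are placeholders for your own HEC settings.
    """
    # HEC exposes a raw endpoint and an event endpoint.
    path = "/services/collector/raw" if raw else "/services/collector/event"
    # The event endpoint expects the data wrapped in an "event" key;
    # the raw endpoint accepts the body as-is.
    body = payload if raw else {"event": payload}
    return urllib.request.Request(
        url=f"https://{host}:{port}{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Splunk {token}",  # HEC token authentication
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical values, for illustration only.
request = build_hec_request(
    "splunk.example.com",
    "00000000-0000-0000-0000-000000000000",
    {"source": "aws.config"},
)
print(request.full_url)
```

In the integration described in this post you do not send these requests yourself; Kinesis Data Firehose delivers to HEC on your behalf. The sketch only shows what an HEC request looks like.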

Now let’s walk through the end-to-end integration setup. Setting up this integration involves two main steps:

- Set up an Amazon Kinesis Data Firehose delivery stream that points to the Splunk HTTP Event Collector cluster endpoint.

- Set up an Amazon CloudWatch Events rule that matches specific AWS Config changes or AWS Config rule triggers and sends the data directly to the Kinesis Data Firehose delivery stream that was just created. This push strategy makes the data available in near real time.

Let’s step through how to easily ingest AWS Config data into Splunk. To process AWS Config data, we use the following architecture:

Steps for setting up Amazon Kinesis Data Firehose data stream

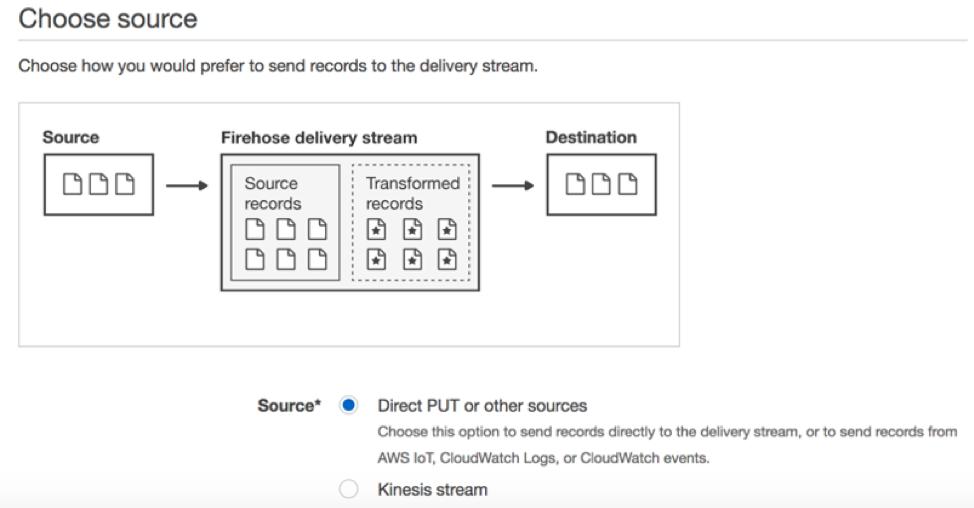

In the Amazon Kinesis Data Firehose console, after you choose the button to create a new stream, enter a relevant name for your stream (such as ConfigStream) and for Source choose Direct PUT or other sources. In our example, CloudWatch Events will be the source.

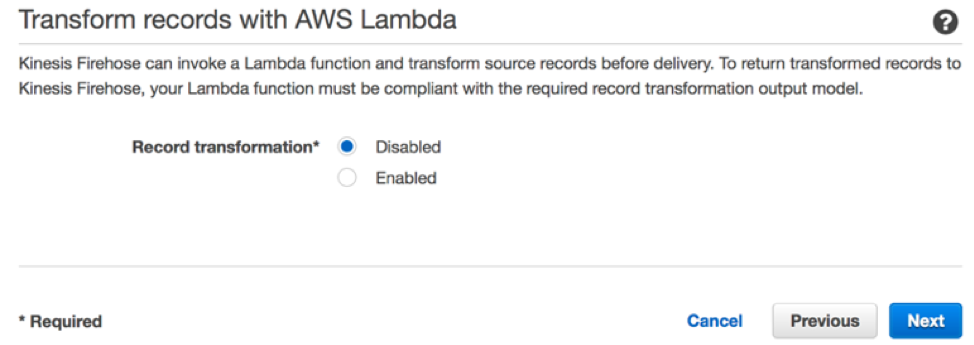

Next, you have an option to choose AWS Lambda to transform your data if you need to massage your data before transferring it to your destination. For our example, you can disable this option because the incoming data will be in a JSON format that can be interpreted by Splunk.

Next, choose Splunk for the destination and enter the details for the Splunk HEC server, such as the Splunk cluster endpoint and the Authentication token that you noted when you initially configured Splunk data inputs. Choose an appropriate endpoint type based on the data formatting. We will go with Raw endpoint in this example.

Note: Amazon Kinesis Data Firehose requires the Splunk HTTP Event Collector (HEC) endpoint to be terminated with a valid CA-signed certificate matching the DNS hostname used to connect to your HEC endpoint. You will receive delivery errors if you are using a self-signed certificate.

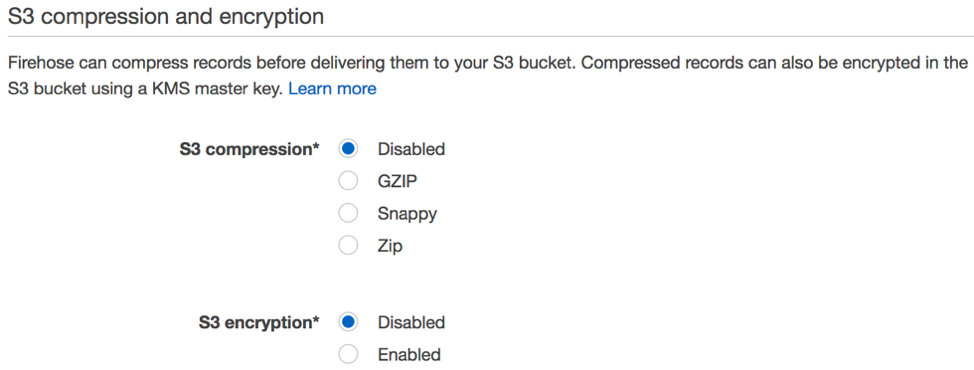

Set the Amazon S3 backup options to either back up all events or to back up failed events only. Enter the S3 bucket name and prefix. In this example we have left the default S3 buffer conditions and disabled S3 compression and encryption options. You can choose these options based on your requirements.

We recommend that you enable error logging initially for troubleshooting purposes and that you also create a firehose_delivery_role IAM role to grant access to the Kinesis Data Firehose service to resources like an S3 bucket and an AWS KMS key. Choose the Create delivery stream button on the review page after you review all the options you have chosen.
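If you prefer to script the console steps above, the following sketch assembles the parameters that the Kinesis Data Firehose `CreateDeliveryStream` API accepts for a Splunk destination. It is an illustration under stated assumptions, not the method used in this walkthrough: the endpoint URL, token, ARNs, S3 prefix, and log group and stream names are all hypothetical placeholders.

```python
def splunk_delivery_stream_params(
    stream_name, hec_endpoint, hec_token, role_arn, bucket_arn,
    backup_all_events=False,
):
    """Return keyword arguments for Firehose CreateDeliveryStream.

    Mirrors the console choices in this post: Direct PUT source, Raw HEC
    endpoint type, S3 backup, no compression, error logging enabled.
    """
    return {
        "DeliveryStreamName": stream_name,
        "DeliveryStreamType": "DirectPut",  # CloudWatch Events puts records directly
        "SplunkDestinationConfiguration": {
            "HECEndpoint": hec_endpoint,
            "HECEndpointType": "Raw",
            "HECToken": hec_token,
            # Back up either every event or only those that failed delivery.
            "S3BackupMode": "AllEvents" if backup_all_events else "FailedEventsOnly",
            "S3Configuration": {
                "RoleARN": role_arn,
                "BucketARN": bucket_arn,
                "Prefix": "config-backup/",  # hypothetical prefix
                "CompressionFormat": "UNCOMPRESSED",
            },
            "CloudWatchLoggingOptions": {
                "Enabled": True,
                "LogGroupName": "/aws/kinesisfirehose/ConfigStream",  # hypothetical
                "LogStreamName": "SplunkDelivery",  # hypothetical
            },
        },
    }


# Hypothetical values, for illustration only.
params = splunk_delivery_stream_params(
    stream_name="ConfigStream",
    hec_endpoint="https://hec.example.com:8088",
    hec_token="00000000-0000-0000-0000-000000000000",
    role_arn="arn:aws:iam::111122223333:role/firehose_delivery_role",
    bucket_arn="arn:aws:s3:::example-config-backup-bucket",
)
print(params["SplunkDestinationConfiguration"]["S3BackupMode"])
```

With the AWS SDK for Python installed and credentials configured, these parameters could be passed as `boto3.client("firehose").create_delivery_stream(**params)`. The IAM role and S3 bucket must already exist.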

Steps for setting up CloudWatch Event rules

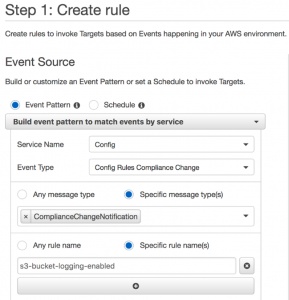

Navigate to the CloudWatch console, and choose Rules to configure event rules. Choose Create rule to create a new rule, and for Service Name select “Config”. In this example we have chosen the Event type as “Config Rules Compliance Change” to push the data whenever the compliance status of a specific rule changes.

You can also filter on specific resource types or resource IDs, or just choose Any resource type and Any resource ID.
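The console builds an event pattern from these selections. As a rough sketch of what such a pattern can look like, the Python helper below produces a pattern for “Config Rules Compliance Change” events and optionally narrows it by rule name, resource type, or resource ID. The helper name and the rule name `restricted-ssh` are illustrative assumptions; check the pattern preview in the console for the exact pattern generated for your rule.

```python
import json


def config_compliance_pattern(rule_names=None, resource_types=None, resource_ids=None):
    """Build a CloudWatch Events pattern for AWS Config compliance changes.

    Each optional filter is omitted when not given, which corresponds to
    choosing "Any" in the console.
    """
    detail = {"messageType": ["ComplianceChangeNotification"]}
    if rule_names:
        detail["configRuleName"] = list(rule_names)
    if resource_types:
        detail["resourceType"] = list(resource_types)
    if resource_ids:
        detail["resourceId"] = list(resource_ids)
    return {
        "source": ["aws.config"],
        "detail-type": ["Config Rules Compliance Change"],
        "detail": detail,
    }


# Hypothetical rule name; matches only security group evaluations for that rule.
pattern = config_compliance_pattern(
    rule_names=["restricted-ssh"],
    resource_types=["AWS::EC2::SecurityGroup"],
)
print(json.dumps(pattern, indent=2))
```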

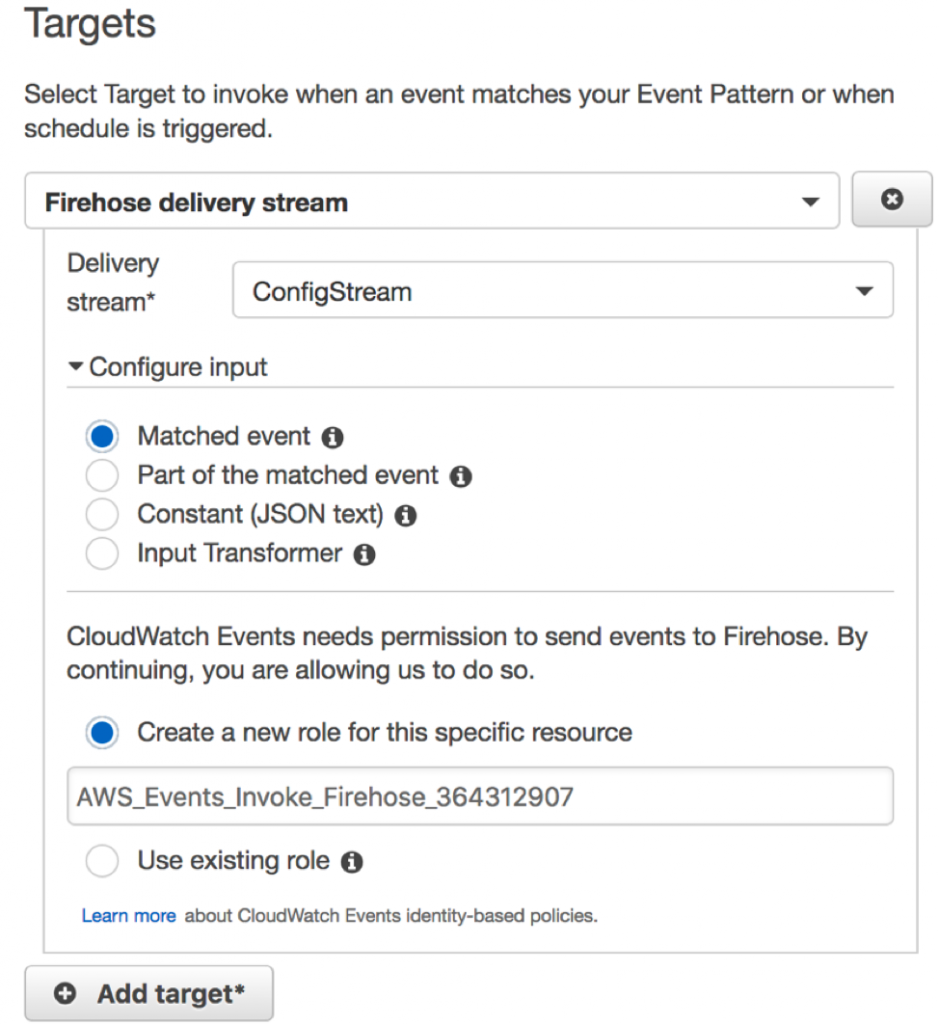

As part of the last step, choose Firehose delivery stream as the Target on the right side of the page. Select the delivery stream you created initially. Choose Matched Events while configuring events, and create a new role to grant CloudWatch Events permissions to send data to the stream.
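For readers who script this step, the sketch below shows one way the rule, target, and role policies might be expressed in Python. It assumes a CloudWatch Events client object (for example, one created with `boto3.client("events")`) is passed in; the target ID, rule name, and ARNs are hypothetical. Leaving out any input transformation on the target corresponds to the Matched Events option, so the whole event is forwarded.

```python
import json


def events_to_firehose_policies(stream_arn):
    """Return (trust policy, permissions policy) for the CloudWatch Events role."""
    trust = {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "events.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }],
    }
    permissions = {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": ["firehose:PutRecord", "firehose:PutRecordBatch"],
            "Resource": [stream_arn],
        }],
    }
    return trust, permissions


def attach_firehose_target(events_client, rule_name, event_pattern, stream_arn, role_arn):
    """Create or update the rule, then point it at the delivery stream."""
    events_client.put_rule(
        Name=rule_name,
        EventPattern=json.dumps(event_pattern),  # the API expects a JSON string
        State="ENABLED",
    )
    events_client.put_targets(
        Rule=rule_name,
        Targets=[{
            "Id": "splunk-firehose",  # hypothetical target ID
            "Arn": stream_arn,
            "RoleArn": role_arn,  # role that CloudWatch Events assumes
        }],
    )


# Hypothetical ARN, for illustration only.
trust_policy, permissions_policy = events_to_firehose_policies(
    "arn:aws:firehose:us-east-1:111122223333:deliverystream/ConfigStream"
)
print(json.dumps(permissions_policy, indent=2))
```

The console creates an equivalent role for you when you choose to create a new role; the policy documents here only illustrate what that role needs to allow.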

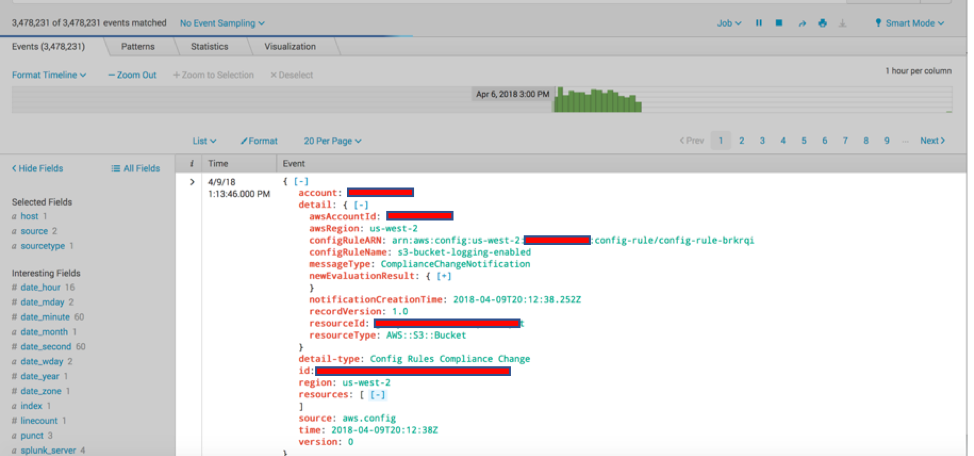

If your CloudWatch Events rules are based on compliance status changes, the next trigger occurs when AWS Config next re-evaluates compliance and the status changes.

Conclusion

You can now transfer AWS Config data to Splunk in near real time by using AWS services like Amazon CloudWatch Events and Amazon Kinesis Data Firehose. This enables you to keep your Splunk monitoring dashboards current. In addition, you can alert other teams to take necessary actions quickly, or, even better, you can automate specific configuration changes in near real time, thus minimizing business impacts.

About the Authors

Umesh Kumar Ramesh is a Cloud Infrastructure Architect with Amazon Web Services. He delivers proof-of-concept projects and topical workshops, and leads implementation projects for various AWS customers. He holds a Bachelor’s degree in Computer Science & Engineering from National Institute of Technology, Jamshedpur (India).

Tarik Makota is a solutions architect with the Amazon Web Services Partner Network. He provides technical guidance, design advice, and thought leadership to AWS’ most strategic software partners. His career includes work in an extremely broad range of software development and architecture roles across ERP, financial printing, benefit delivery and administration, and financial services. He holds an M.S. in Software Development and Management from Rochester Institute of Technology.
