
Approaches to Transport Layer Tenant Routing for SaaS using AWS PrivateLink

In today’s ecosystem, Software as a Service (SaaS) offerings are primarily delivered in a low-friction, service-centric approach over the Internet. These services are often mobile applications or websites delivered via a Content Delivery Network (CDN), such as Amazon CloudFront, that in turn issues requests to the backend SaaS platform. As a SaaS provider, your SaaS solution is the main touch point that your customers have with your core offering (excluding your Marketing, Sales, and Customer Success organisations for the purposes of this post), and you can address tenant identification, isolation, and routing using the usual Layer 7 (Application Layer) suspects, such as JSON Web Tokens (JWT) and HTTP headers.

However, not all SaaS offerings are the same, and increasingly SaaS businesses are finding the need to meet their customers “where they are” by enabling private integration between the SaaS offering and the customer’s IT environment. This gives end customers strong security and integration benefits. However, it also presents challenges for SaaS businesses, including security and integration issues between the SaaS offering’s AWS environment and the customer’s IT environment. A prominent example is Classless Inter-Domain Routing (CIDR) collisions between private (RFC 1918) address ranges. Your SaaS application, deployed into a Virtual Private Cloud (VPC), is built on a CIDR range (e.g., 10.0.0.0/16). The odds are that the range used by one or more of your customers will clash with either your own or another customer’s, and a network routing challenge will ensue. If you’re having this exact challenge, then check out our post on Connecting Networks with Overlapping IP Ranges.

Now imagine that you are providing a Database as a Service offering that has a functional requirement to securely accept private Transport Layer (Layer 4) connections to your platform while still providing the isolation and tenancy routing capabilities that your customers expect. When working with Layer 4 connections, we’re often constrained by the communication protocols in use. Furthermore, we don’t immediately have the SaaS Tenant Identifiers that SaaS businesses routinely use to route tenants to the correct back-end infrastructure (e.g., commonly supplied using HTTP Headers or JWT).

To address this, we launched AWS PrivateLink in 2017. PrivateLink is a highly available, scalable technology that enables you to privately connect your VPC to services as if they’re located within your VPC.

When designing SaaS offerings, you must evaluate and decide whether resources should be pooled (shared) or siloed (not shared) between tenants based on the requirements of your SaaS offering. In this post, I’ll discuss a few approaches and what you should consider when architecting your SaaS offering for tenant routing and the delivery of Layer 4 solutions via PrivateLink.

PrivateLink recap

Before diving in, let’s quickly recap the architecture for PrivateLink. For a detailed look, see the documentation on how to share your services through PrivateLink.

In short, PrivateLink is powered by the Network Load Balancer (NLB) service:

- You need an NLB deployed in front of the services that you want to expose from your VPC.

- Your NLB must be configured to listen on your required TCP port(s).

- Note that PrivateLink doesn’t support UDP connections at the time of writing this post.

- You will need one or more Target Groups and your NLB listener(s) configured to route connections to them.

Then, you create a VPC endpoint service configuration, based on that NLB, to set up your PrivateLink. From here, you grant permission to specific AWS principals (AWS accounts, AWS Identity and Access Management (IAM) users, or IAM roles) so that they can connect to your service from the comfort of their own VPC.
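The two steps above can be sketched as the request parameters for the corresponding EC2 API calls. This is a minimal illustration, assuming boto3's `create_vpc_endpoint_service_configuration` and `modify_vpc_endpoint_service_permissions` calls. The helper function names are hypothetical, and the ARNs, account IDs, and service ID are placeholders. The helpers only build parameter dictionaries, so nothing is sent to AWS.

```python
from typing import Dict, List


def endpoint_service_params(nlb_arns: List[str], acceptance_required: bool = True) -> Dict:
    """Build kwargs for ec2.create_vpc_endpoint_service_configuration()."""
    if not nlb_arns:
        raise ValueError("at least one NLB ARN is required")
    return {
        "NetworkLoadBalancerArns": list(nlb_arns),
        # When True, the provider must approve each endpoint connection request.
        "AcceptanceRequired": acceptance_required,
    }


def allow_principals_params(service_id: str, principal_arns: List[str]) -> Dict:
    """Build kwargs for ec2.modify_vpc_endpoint_service_permissions()."""
    return {
        "ServiceId": service_id,
        "AddAllowedPrincipals": list(principal_arns),
    }


# Placeholder values for illustration only.
NLB_ARN = (
    "arn:aws:elasticloadbalancing:eu-west-1:111111111111:"
    "loadbalancer/net/example-nlb/0123456789abcdef"
)
TENANT_PRINCIPAL = "arn:aws:iam::222222222222:root"

service_params = endpoint_service_params([NLB_ARN])
permission_params = allow_principals_params(
    "vpce-svc-0123456789abcdef0", [TENANT_PRINCIPAL]
)

# With boto3 installed and credentials configured, these could be passed as:
#   ec2 = boto3.client("ec2")
#   ec2.create_vpc_endpoint_service_configuration(**service_params)
#   ec2.modify_vpc_endpoint_service_permissions(**permission_params)
```

Keeping `AcceptanceRequired` enabled is a design choice in this sketch: it means a tenant's endpoint connection stays pending until you accept it.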

Option 1 – Pooled NLB with Ports as Tenant Routing Identifier

Pooling (or sharing) your NLB between multiple tenants is a commonly considered solution when looking to implement multi-tenanted SaaS solutions via PrivateLink, as it provides benefits in simplicity (fewer NLBs to deploy and configure) and cost efficiency (as you’re charged for each hour or partial hour that an NLB is running). This pooled approach works extremely well in scenarios where you can perform application-level tenant routing using Layer 7 (Application Layer) constructs, such as HTTP Headers and JWT within your platform. Without these constructs (e.g., dealing with Transport layer connections for our hypothetical Database as a Service offering) we must rely on other techniques for tenant routing.

To identify and route Transport Layer traffic for SaaS tenants under this pooled NLB model, we can implement a port (or NLB Listener) per tenant to act as the Tenant Identifier. In turn, this enables you to route the traffic to the correct Target Group and through to the backing services. Remember that the Target Group lets you direct all of the traffic to a certain backend port, thereby removing the need to flow custom tenant ports throughout your SaaS platform. Under this model, you can cater for siloed or pooled backend resources as outlined in diagram 1.

Diagram 1 – Pooled NLB with Siloed Listeners as Tenant Routing Identifier
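To make the port-as-tenant-identifier idea concrete, here is a minimal in-memory sketch of the mapping you would need to maintain. It is not an AWS API: the class names, the starting port of 6000, the target group names, and the backend port of 5432 are all assumptions chosen for illustration. The limit of 50 listeners reflects the NLB Service Quota discussed in the considerations for this option.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class TenantRoute:
    tenant_id: str
    listener_port: int  # tenant-specific port exposed on the shared NLB
    target_group: str   # hypothetical target group name
    backend_port: int   # port the backing service actually listens on


class PooledNlbRouter:
    """In-memory model of one shared NLB with one listener per tenant."""

    def __init__(self, first_port: int = 6000, max_listeners: int = 50,
                 backend_port: int = 5432) -> None:
        self._next_port = first_port
        self._max_listeners = max_listeners
        self._backend_port = backend_port
        self._by_port: Dict[int, TenantRoute] = {}
        self._by_tenant: Dict[str, TenantRoute] = {}

    def onboard(self, tenant_id: str, target_group: str) -> TenantRoute:
        """Allocate the next free listener port to a tenant (idempotent)."""
        if tenant_id in self._by_tenant:
            return self._by_tenant[tenant_id]
        if len(self._by_port) >= self._max_listeners:
            raise RuntimeError("listener quota reached; another NLB is needed")
        route = TenantRoute(tenant_id, self._next_port, target_group,
                            self._backend_port)
        self._next_port += 1
        self._by_port[route.listener_port] = route
        self._by_tenant[tenant_id] = route
        return route

    def route_for_port(self, port: int) -> TenantRoute:
        """Resolve the tenant route from the port a connection arrived on."""
        return self._by_port[port]


router = PooledNlbRouter()
tenant_a = router.onboard("tenant-a", "tg-tenant-a")      # siloed backend
tenant_b = router.onboard("tenant-b", "tg-shared-basic")  # pooled backend
```

Both tenants receive distinct listener ports, while their target groups forward to the same backend port, so the custom ports never leak into the rest of the platform.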

There are important considerations for this tenant routing approach that you should evaluate, including:

- Each tenant will likely be allocated non-standard ports that can introduce complexity and friction during the tenant on-boarding experience, as your customers may need to raise and explain the need for unusual ports in their firewalls.

- Theoretically you could pool a listener if tenants share a Target Group. However, this significantly limits your flexibility if you must transparently adjust the routing for a tenant (e.g., a tenant moved SaaS plans that require different backend resources) and you’ll want to maintain a mapping of which tenants are using which port.

- Consider your Service Quotas associated with NLBs – you can have a maximum of 50 listeners (tenants in this case) per NLB. If you outgrow this Service Quota, then you must add additional NLBs and manage tenant mapping/allocation between them.

- Network traffic bandwidth metrics are recorded per NLB in CloudWatch. By pooling this resource, it becomes challenging to understand and attribute network bandwidth usage at a per-tenant level.

- When you present a PrivateLink endpoint to your tenant, all of the ports present on the NLB are presented to the customer. This should be a significant consideration for the Tenant Isolation and Security of your platform, as it means that one tenant (say, Tenant B) could attempt to access your platform via ports allocated to other tenants. Under this pooled routing model, this would mean that the tenant is routed to another tenant’s backend infrastructure.

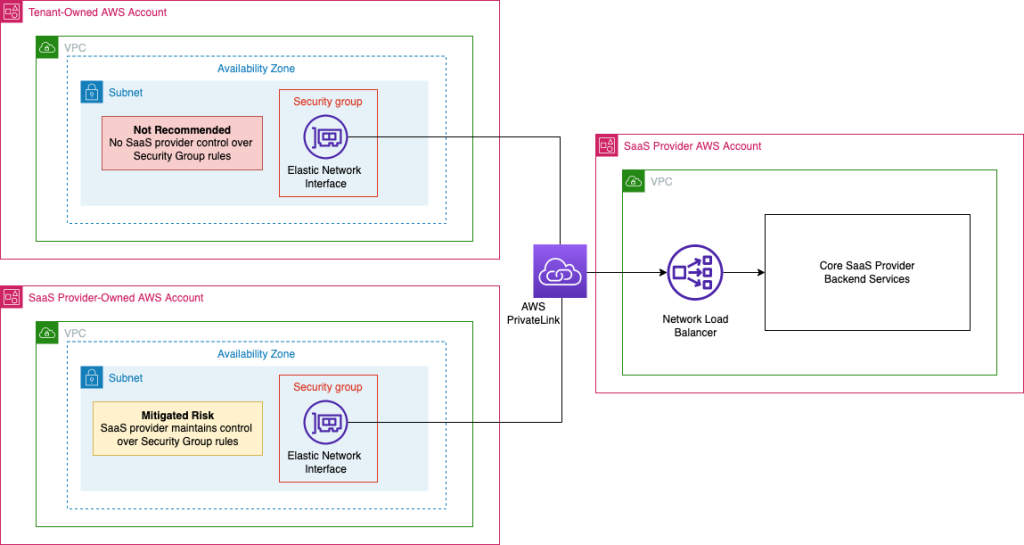

- This cross-tenancy risk can be mitigated if the deployed PrivateLink endpoint is owned and controlled in a SaaS vendor-provided integration VPC, where you control the security group associated with the endpoint and can restrict access to specific ports. This is detailed in diagram 2.

- You may additionally mitigate some of the risk that this poses if your backend resources require authentication, but this isn’t always the case.
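The listener quota consideration above implies some allocation arithmetic once you outgrow a single NLB. The following sketch assumes tenants are numbered in on-boarding order starting at 0 and that each NLB hands out ports from the same hypothetical starting port of 6000. The function names are illustrative, not part of any AWS API.

```python
import math
from typing import Tuple

LISTENERS_PER_NLB = 50  # maximum listeners per NLB, as stated in the list above


def nlbs_required(tenant_count: int,
                  listeners_per_nlb: int = LISTENERS_PER_NLB) -> int:
    """Number of pooled NLBs needed for a given number of tenants."""
    if tenant_count < 0:
        raise ValueError("tenant_count must not be negative")
    return math.ceil(tenant_count / listeners_per_nlb)


def place_tenant(sequence_number: int, first_port: int = 6000,
                 listeners_per_nlb: int = LISTENERS_PER_NLB) -> Tuple[int, int]:
    """Map a zero-based tenant sequence number to (nlb_index, listener_port)."""
    if sequence_number < 0:
        raise ValueError("sequence_number must not be negative")
    nlb_index, slot = divmod(sequence_number, listeners_per_nlb)
    return nlb_index, first_port + slot


# The 51st tenant (sequence number 50) lands on the second NLB.
placement = place_tenant(50)
```

A real allocator would also need to persist the mapping and reuse ports freed by off-boarded tenants, which this sketch leaves out.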

Diagram 2 – Pooled NLB deployment scenarios
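For the vendor-controlled integration VPC scenario, the port restriction could be expressed as a single security group ingress rule on the endpoint. The sketch below builds the parameters for boto3's `authorize_security_group_ingress` call without sending anything to AWS. The function name, security group ID, tenant port, and source CIDR are placeholder assumptions.

```python
import ipaddress
from typing import Dict


def endpoint_ingress_params(security_group_id: str, tenant_port: int,
                            source_cidr: str) -> Dict:
    """Build kwargs for ec2.authorize_security_group_ingress().

    Allows TCP traffic to the endpoint on the tenant's own port only.
    """
    if not 1 <= tenant_port <= 65535:
        raise ValueError("tenant_port must be a valid TCP port")
    # Raises ValueError if the CIDR is malformed.
    ipaddress.ip_network(source_cidr)
    return {
        "GroupId": security_group_id,
        "IpPermissions": [
            {
                "IpProtocol": "tcp",
                "FromPort": tenant_port,
                "ToPort": tenant_port,
                "IpRanges": [
                    {"CidrIp": source_cidr,
                     "Description": "tenant access to its own listener port"}
                ],
            }
        ],
    }


# Placeholder values for illustration only.
ingress = endpoint_ingress_params("sg-0123456789abcdef0", 6001, "10.20.0.0/24")

# With boto3 available:
#   boto3.client("ec2").authorize_security_group_ingress(**ingress)
```

Because security groups deny inbound traffic that no rule allows, a rule covering only the tenant's port leaves the other tenants' ports unreachable through that endpoint.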

Option 2 – Siloed NLB as Tenant Routing Identifier

Depending on your specific scenario, one or more of the above considerations may make a pooled NLB infeasible or unacceptable. In that case, consider implementing your NLB and PrivateLink in a siloed model.

Under this model, you essentially have a 1:1 mapping of NLBs to your SaaS tenants, which supports both siloed and pooled backend resources via Target Groups. The clear benefits here are simplicity (each NLB and PrivateLink is dedicated to a single tenant), the ability to expose the industry-standard port(s) that your customers will expect, and the most flexibility for configuration, tenant routing, and ongoing updates.

As you can see in diagram 3, this siloed NLB model can readily cater for most backend routing configurations, including both siloed and pooled models for target groups and backend resources.

Diagram 3 – Siloed NLB as Tenant Routing Identifier
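As a rough illustration of the 1:1 model, the sketch below plans the per-tenant resources for two tenants. Everything here is hypothetical: the naming scheme, the standard port of 5432, and the backend identifiers are assumptions. For simplicity, every tenant gets its own target group, and only the backend that the group points at varies between dedicated and shared.

```python
from dataclasses import dataclass

STANDARD_PORT = 5432  # assumption: the port customers expect for this service


@dataclass(frozen=True)
class SiloedTenantStack:
    tenant_id: str
    nlb_name: str       # one NLB (and PrivateLink endpoint service) per tenant
    listener_port: int  # the same standard port for every tenant
    target_group: str
    backend: str        # dedicated or shared backend resource


def plan_siloed_stack(tenant_id: str, dedicated_backend: bool) -> SiloedTenantStack:
    """Describe the resources to create when on-boarding one tenant."""
    backend = f"db-{tenant_id}" if dedicated_backend else "db-shared-pool"
    return SiloedTenantStack(
        tenant_id=tenant_id,
        nlb_name=f"nlb-{tenant_id}",
        listener_port=STANDARD_PORT,
        target_group=f"tg-{tenant_id}",
        backend=backend,
    )


premium = plan_siloed_stack("tenant-a", dedicated_backend=True)
basic = plan_siloed_stack("tenant-b", dedicated_backend=False)
```

Moving a tenant between plans then only changes what its own target group points at, while its NLB, port, and endpoint service stay the same.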

However, as with Option 1 (pooled), there are some additional considerations that you’ll want to weigh when implementing this tenant routing approach:

- As you’re implementing an NLB per tenant, you should keep an eye on your Service Quotas for NLBs and Target Groups. Both of these resources have a default quota (number of NLBs per region, number of Target Groups per region) that you may need to adjust to cater for your SaaS platform’s requirements.

- Network bandwidth monitoring and attribution to specific tenants is relatively simple under this model, because each NLB, and therefore its CloudWatch metrics, belongs to a single tenant.

- This model will impact your AWS spend (more than the pooled model) as you’re charged for each hour or partial hour that an NLB is running. In a 1:1 model, this pricing dimension can be expected to increase with each tenant on-boarded to this solution.

- Note that the pricing dimension for this component is readily known and consistent. Therefore, it can readily be incorporated into your per-tenant cost modelling.
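The hourly pricing dimension described above lends itself to simple per-tenant cost modelling. The sketch below compares the NLB-hour charge for the siloed and pooled models. The hourly rate of 0.02 is a made-up placeholder, not a published AWS price, and 730 hours is a common approximation for one month. The model covers only the per-hour charge and leaves out any usage-based charges.

```python
import math
from decimal import Decimal
from typing import Dict

HOURS_PER_MONTH = 730  # common approximation of the hours in a month


def nlb_hours_cost(nlb_count: int, hourly_rate: Decimal,
                   hours: int = HOURS_PER_MONTH) -> Decimal:
    """Cost of running nlb_count NLBs for the given number of hours."""
    return hourly_rate * hours * nlb_count


def compare_models(tenant_count: int, hourly_rate: Decimal,
                   listeners_per_nlb: int = 50) -> Dict[str, Decimal]:
    """Monthly NLB-hour cost for siloed (1 NLB per tenant) vs pooled NLBs."""
    pooled_nlbs = math.ceil(tenant_count / listeners_per_nlb)
    return {
        "siloed_total": nlb_hours_cost(tenant_count, hourly_rate),
        "siloed_per_tenant": nlb_hours_cost(1, hourly_rate),
        "pooled_total": nlb_hours_cost(pooled_nlbs, hourly_rate),
    }


HYPOTHETICAL_RATE = Decimal("0.02")  # placeholder; check current NLB pricing
costs = compare_models(tenant_count=120, hourly_rate=HYPOTHETICAL_RATE)
```

The siloed per-tenant figure is constant regardless of tenant count, which is what makes it straightforward to fold into per-tenant pricing.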

Conclusion

This post has shown several approaches to implementing Transport Layer Tenant Routing using PrivateLink. I recommend implementing Option 2 (Siloed NLBs) for most Transport Layer routing scenarios via PrivateLink.

The following table shows a comparison between the options:

| Option | Tenant Routing Method | Complexity | Security Considerations | Flexibility | Cost Profile | Tenant Bandwidth Monitoring |
| --- | --- | --- | --- | --- | --- | --- |
| Pooled NLB | Per Port | Moderate | Moderate | Moderate | Low | Hard |
| Siloed NLB | Per NLB | Low | Low | High | Moderate | Easy |

Further Reading

I strongly recommend reviewing our other articles and whitepapers on this topic: