AWS Open Source Blog

Category: PyTorch on AWS

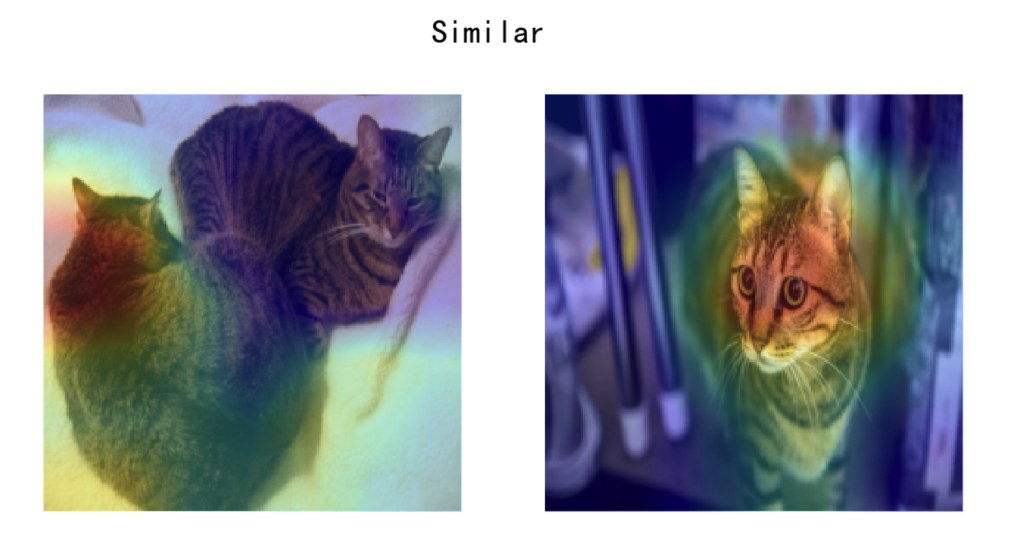

Twin Neural Network Training with PyTorch and Fast.ai and its Deployment with TorchServe on Amazon SageMaker

In this post we demonstrate how to train a Twin Neural Network with PyTorch and Fast.ai and deploy it with TorchServe on an Amazon SageMaker inference endpoint. For demonstration purposes, we also build an interactive web application with Streamlit, an open source framework that lets data scientists create interactive web-based data applications in pure Python. Users can upload images to the application and run inference against the trained and deployed model.

Deploy fast.ai-trained PyTorch model in TorchServe and host in Amazon SageMaker inference endpoint

Over the past few years, fast.ai has become one of the most cutting-edge open source deep learning frameworks and the go-to choice for many machine learning use cases based on PyTorch. It has not only democratized deep learning and made it approachable to general audiences, but has also become a role model on how […]

How TalkingData uses AWS open source Deep Java Library with Apache Spark for machine learning inference at scale

This post is contributed by Xiaoyan Zhang, a Data Scientist from TalkingData. TalkingData is a data intelligence service provider whose data products and services give businesses insights into consumer behavior, preferences, and trends. One of TalkingData’s core services is leveraging machine learning and deep learning models to predict consumer behaviors (e.g., likelihood of […]

Running TorchServe on Amazon Elastic Kubernetes Service

This article was contributed by Josiah Davis, Charles Frenzel, and Chen Wu. TorchServe is a model serving library that makes it easy to deploy and manage PyTorch models at scale in production environments. TorchServe removes the heavy lifting of deploying and serving PyTorch models with Kubernetes. TorchServe is built and maintained by AWS in collaboration […]