AWS Startups Blog

Brand Tracking with Bayesian Statistics and AWS Batch

Guest post by Corrie Bartelheimer, Senior Data Scientist, Latana

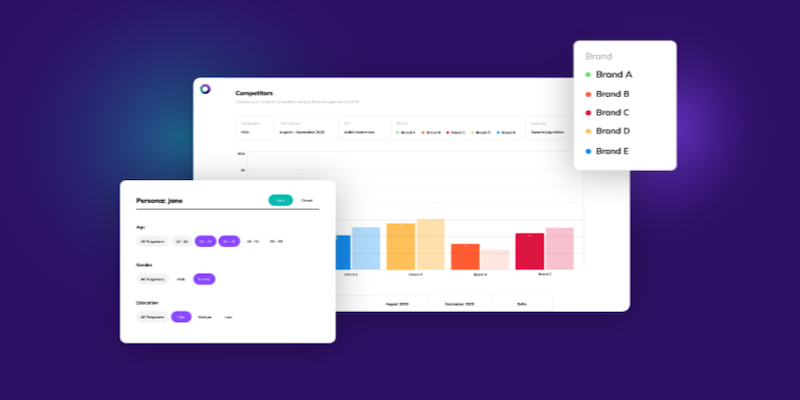

At Latana, we help customers make better marketing decisions about their brand by using advanced algorithms to track consumer perception. For example, a common problem with brand tracking is bias; while online surveys are relatively cheap, the respondents are usually not representative of the general population and the results can often falsely favor certain groups. Additionally, clients are often interested in how their brand fares in specific target groups, like households with young children, and reliable estimates are especially hard to obtain for very small target groups. The smaller the target group, the more difficult it is to distinguish between signal and noise: if there is a small change from one month to the next, is that due to random changes in survey respondents or is it actually a meaningful change? This last point is especially relevant for smaller companies that are not yet widely known, where a small absolute change implies a big relative change.

In this post, we outline how mathematical models and probability theory, specifically Bayesian methods, address some of the big problems in brand marketing and how AWS Batch, together with Metaflow, solves many of the technical issues that used to be major obstacles to using Bayesian methods at scale. Methods for fitting Bayesian models, such as Markov chain Monte Carlo (MCMC), are notorious for being slow and computationally demanding, which is why their adoption in the data industry has been relatively low compared to machine learning (ML) methods. Using Metaflow with AWS Batch allows us to provide a brand tracking solution powered by Bayesian statistics to our clients at scale.

Better Target Group Estimates through Hierarchies

At the core of our tracking tool is a statistical technique called MRP (Multilevel Regression with Poststratification), sometimes also affectionately known as Mister P. In its simplest form, MRP is a hierarchical linear model combined with poststratification. If we, for example, want to estimate how many people in the population know the brand FancyTech, and we are interested in the demographics gender, education, and age, we would set up the following model:

![]()

The variable `knows_brand` would be a binary variable (yes or no), so we use the inverse logit as the link function. Following a hierarchical model, each demographic is grouped. This means that, for example, for education, we estimate one coefficient for each education level (low, medium, high), and all three coefficients come from a common probability distribution like Normal(0,1). This ensures that we get different estimates for each level but also helps regularize the estimates. If there are only a handful of respondents with high education, it’s better to stick closer to the estimate of the other two levels; otherwise, those few respondents could skew the estimate. Additionally, since we’re using a Bayesian approach, we get uncertainty estimates for free, which is especially important for small target groups where the estimate might be based on very few observations.
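As a sketch, a model of this kind could be written as below. The notation is assumed for illustration only: $\alpha$ is a global intercept, the $\beta$ terms are the grouped coefficients, and $g[i]$, $e[i]$, $a[i]$ denote the gender, education level, and age group of respondent $i$. In many hierarchical models the scale of the common distribution is itself estimated rather than fixed at 1.

```latex
\begin{align}
\text{knows\_brand}_i &\sim \text{Bernoulli}(p_i) \\
p_i &= \text{logit}^{-1}\left(\alpha
      + \beta^{\text{gender}}_{g[i]}
      + \beta^{\text{education}}_{e[i]}
      + \beta^{\text{age}}_{a[i]}\right) \\
\beta^{\text{gender}}_{k},\;
\beta^{\text{education}}_{k},\;
\beta^{\text{age}}_{k} &\sim \text{Normal}(0, 1)
\end{align}
```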

So far, we’ve addressed the problem of small target groups (through the hierarchical groups) as well as the problem of distinguishing between signal and noise (through the regularizing features of the Bayesian model). But how do we solve the problem of biased samples?

Poststratification of Biased Samples

The good news is that we generally do have information about how common different target groups are in the general population. Many countries collect extensive census data that give exact counts, like how many women live in Germany with a high education level between 40 and 50 years of age. If we have the proportions for each target group, we can use these to weight our predictions that we get from the hierarchical model. For example, if we only care about the gender demographics, we’d compute the prediction for both men and women and get the estimate for the general population as follows:

![]()

This formula easily extends to more demographics. As we’re using a generative Bayesian model, all uncertainty estimates also propagate across these calculations.
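To make the weighting concrete, here is a minimal sketch in plain Python. The population shares and the posterior draws are made-up values (the draws are simulated from Beta distributions as a stand-in for real model output), and `poststratify` is a hypothetical helper name. The weighting is applied draw by draw, which is why the uncertainty carries over to the population estimate.

```python
import random
import statistics

# Hypothetical population shares, e.g. taken from census data.
population_share = {"women": 0.51, "men": 0.49}

# Hypothetical posterior draws of P(knows_brand) per group. In practice
# these would come from the fitted hierarchical model.
rng = random.Random(42)
posterior_draws = {
    "women": [rng.betavariate(30, 70) for _ in range(4000)],
    "men": [rng.betavariate(20, 80) for _ in range(4000)],
}


def poststratify(draws_by_group, share_by_group):
    """Weight each group's posterior draws by its population share."""
    total_share = sum(share_by_group.values())
    n_draws = len(next(iter(draws_by_group.values())))
    return [
        sum(share_by_group[g] * draws_by_group[g][i] for g in share_by_group)
        / total_share
        for i in range(n_draws)
    ]


population_draws = poststratify(posterior_draws, population_share)

# The result is again a set of posterior draws, so we can summarize it
# with a point estimate and an interval.
estimate = statistics.mean(population_draws)
sorted_draws = sorted(population_draws)
lower = sorted_draws[int(0.025 * len(sorted_draws))]
upper = sorted_draws[int(0.975 * len(sorted_draws))]
print(f"estimate: {estimate:.3f} (95% interval {lower:.3f} to {upper:.3f})")
```

Because the weights are divided by their sum, the same function also accepts raw census counts instead of shares.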

Challenges when Using Bayes

While using a Bayesian approach has multiple advantages, it also brings its challenges. Bayesian methods are known to be much slower than their frequentist counterparts and, traditionally, their speed and computational complexity have been major obstacles to using these methods at scale. Fortunately, recent developments such as the NUTS sampler and new probabilistic programming languages such as Stan mean that our models already run relatively fast; one of our models takes on average less than twenty minutes on a normal work laptop.

However, we need to run at least one model per question and, depending on how the survey questions are coded, sometimes several. A question where a user can select multiple answers requires binarization, meaning we end up with one model per answer option. As a result, one survey can produce a few hundred models, which would take a total compute time of multiple days. To speed up runtime, we therefore decided to run the models in parallel using AWS Batch, a service that simplifies running hundreds of jobs in parallel. It was just what we needed.
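The binarization step can be illustrated with a small sketch. The question name, answer options, and responses below are invented for the example, and `binarize` is a hypothetical helper; each resulting 0/1 list would serve as the dependent variable of its own model.

```python
# Hypothetical multi-select question: each respondent may tick several options.
responses = [
    {"respondent": 1, "used_brands": ["FancyTech", "OtherCo"]},
    {"respondent": 2, "used_brands": []},
    {"respondent": 3, "used_brands": ["OtherCo"]},
]
answer_options = ["FancyTech", "OtherCo", "ThirdBrand"]


def binarize(rows, question, options):
    """Return one 0/1 outcome list per answer option."""
    return {
        option: [int(option in row[question]) for row in rows]
        for option in options
    }


binary_outcomes = binarize(responses, "used_brands", answer_options)
for option, outcome in binary_outcomes.items():
    print(option, outcome)
```

A question with three answer options thus yields three binary outcomes, and therefore three models.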

Parallelizing Hundreds of Models

In each model, the same predictor variables are used (the demographic variables), and only the dependent variable changes (the question we predict). It thus makes sense to do all the data transformation once and only parallelize the models themselves. Using AWS Batch, this means we would have one batch job to load and transform the data, then one job per model in parallel, and a single job afterwards to collect and combine the results. However, this also means we need to take care of data sharing between the different batch jobs. We quickly noticed that orchestrating the different batch jobs ourselves was difficult to maintain and was also taking away time and resources that we’d rather spend on improving the statistical models.
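The three-stage structure (transform once, fit in parallel, combine) can be sketched locally with Python's standard library. This is only an illustration of the pattern: threads stand in for separate batch jobs, the data is a toy example, and `fit_model` simply computes the share of "yes" answers instead of fitting a Bayesian model.

```python
from concurrent.futures import ThreadPoolExecutor


def load_and_transform():
    """Stage 1: prepare the shared predictors once (toy data here)."""
    return {
        "predictors": [("female", "high"), ("male", "low"), ("female", "medium")],
        "outcomes": {"q1": [1, 0, 1], "q2": [0, 0, 1]},
    }


def fit_model(question, predictors, outcome):
    """Stage 2: stand-in for fitting one model per question."""
    assert len(predictors) == len(outcome)
    return question, sum(outcome) / len(outcome)


def combine(results):
    """Stage 3: collect the per-question results into one structure."""
    return dict(results)


data = load_and_transform()
with ThreadPoolExecutor(max_workers=4) as pool:
    # Fan out: one task per question, all sharing the same predictors.
    futures = [
        pool.submit(fit_model, question, data["predictors"], outcome)
        for question, outcome in data["outcomes"].items()
    ]
    # Fan in: wait for every task and merge the results.
    results = combine(future.result() for future in futures)
print(results)
```

On a single machine the shared data simply lives in memory; across separate batch jobs it has to be stored and passed along explicitly, which is the orchestration burden described above.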

In a previous project, our Data Science team had already worked with the framework Metaflow, and we concluded that it was also a good fit for our model pipeline. Metaflow structures a model pipeline, called a flow, into multiple steps, and each step is computed in its own separate conda environment. We decided to create a few intermediate steps because this not only helps us structure our code better, but also gives us more fine-grained control over the environment and the resources needed for each step.

![]()

To run the models in parallel, we create a single model step in our MRPFlow code and tell Metaflow to run it for each of the different question parameters. Metaflow takes care of saving and sharing data across the steps as well as handling the parallelization.

One of the advantages of working with Metaflow is that there is barely any difference between running the code locally and running the code on AWS Batch. In our first outline of the model pipeline, we still had a few lines in our code that checked if it was running locally or on AWS Batch and then ran different code branches to either parallelize the models (on AWS Batch) or not (running locally). This made debugging the code rather difficult, and the process was generally more error-prone. Many times, errors happening on AWS Batch were not reproducible locally. Using Metaflow ensures that the same piece of code is run locally and on AWS Batch, and it also creates the same conda environment in both places. This helps tremendously with reproducibility and generally makes development easier since we can be sure that what “works on my machine” will also work in the cloud and give the same results.

Results

By using a Bayesian approach, we can address some of the most common problems in brand tracking, and results even for small target groups are more reliable and less susceptible to random noise. For us, the benefits of Bayesian methods far outweigh the technical challenges that come with them. Furthermore, with Metaflow, we found a framework that takes care of those challenges for us and makes it feasible to run Bayesian models such as MRP at scale on AWS without requiring in-depth knowledge of AWS operations.

Switching to Metaflow with AWS Batch allowed us to speed up our development process and focus on improving the statistical models. In a previous version of the model pipeline, we ran everything on a single instance and could thus only parallelize a few dozen models. Moving to a fully parallelized setup meant we could run more than ten times faster and, on top of that, have more flexibility in designing our models to deliver more accurate results as well as narrower credible intervals.

The speedup in development has been especially noticeable in a recent project, where we had been working on stacking multiple models for each question, all run in parallel. Adding this to our existing MRPFlow was remarkably straightforward. In this sense, Metaflow has been extremely helpful in letting us focus on making our models more flexible and more accurate.