AWS Startups Blog

From 0 to 100 K in seconds: Instant Scale with AWS Lambda

Guest post by Michael Mendlawy, Senior developer, Crazylister

“What is the best infrastructure for processing massive amounts of requests at unpredictable times?” In this blog post, we describe what led us to ask this question and how AWS Lambda helped us answer it.

Before we dive into the nuts and bolts of the solution, a bit of background:

Here at Crazylister we help online retailers easily expand to new sales channels by removing the technological barriers. One way we tackle this problem is by giving eBay sellers the ability to easily create professional HTML product pages for their eBay listings, without writing a single line of code.

Many eBay sellers use “cross-selling galleries” in the descriptions of their products. Cross-selling galleries promote related products from the same seller, as shown in the following example.

Recently, eBay announced that it will begin phasing out “active content” from product descriptions in June 2017. This includes banning JavaScript from product descriptions, which will break many existing JavaScript-based cross-selling galleries. We used this opportunity to introduce a prominent feature of our product: a JavaScript-free cross-selling gallery, which eBay sellers can add to their listings in a single click.

How does it work?

When an eBay seller comes to our dedicated landing page and enters their eBay credentials, we inject our cross-selling gallery code into each of their product pages. To do that, we use eBay’s API to retrieve all of the seller’s product pages, insert the cross-selling gallery code into each page’s description, and send the updated page back to eBay.

Sounds simple enough, right? Well… yes and no.

The problem is volume. Some eBay sellers have hundreds of thousands (and some even have millions) of product pages.

A volume like this is typically handled in one of two ways:

- Overprovisioning – determining an upper limit for the number of supported concurrent requests and having a large enough instance ready at all times.

- Automatic scaling – launching Amazon EC2 instances on demand. This can be done using AWS Elastic Beanstalk and Auto Scaling.

The following table summarizes the main issues that arise from these solutions.

As you can see, these solutions pose problems in terms of response time and cost efficiency. The nature of our cross-selling gallery feature means that seller requests come in peaks. Most of the time, few (if any) requests are sent. However, when a seller wants to make an update, we have to process a massive number of requests as fast as possible.

Although overprovisioning lets us start handling these peaks instantly, we have to pay for a monstrous instance that sits idle most of the time. Our benchmark was 100 K product pages. Processing a single product requires about 5 MB of RAM, so to update all 100 K products at the speed we need, the instance would need about 100,000 × 5 MB = 500 GB of RAM.
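The sizing above is a single multiplication. A minimal sketch, assuming decimal units (1 GB = 1,000 MB) and that every product is held in memory at the same time; the function name is hypothetical:

```python
def required_ram_gb(products: int, mb_per_product: float) -> float:
    """Estimate the RAM (GB) needed to process every product at once.

    Assumes decimal units (1 GB = 1,000 MB) and no memory shared between products.
    """
    return products * mb_per_product / 1_000


if __name__ == "__main__":
    # The benchmark from the post: 100 K products at about 5 MB each
    print(required_ram_gb(100_000, 5))  # 500.0
```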

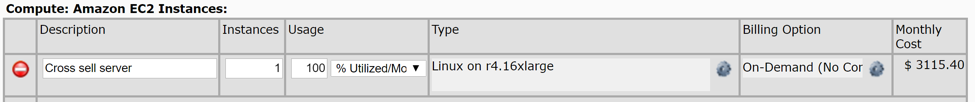

One EC2 instance type closely matches this load: the r4.16xlarge, with 488 GiB of memory. As you can see from the AWS Simple Monthly Calculator shown in the following screenshot, this amounts to over $3,000 a month!

For an early-stage startup (pre-Series A funding), that’s too much to pay for a single feature. Needless to say, we couldn’t afford it.

Auto Scaling allows us to “pay for what we use,” but launching new instances takes about five minutes, which results in an unacceptable user experience.

So…

Overprovisioning – too expensive

Auto Scaling – too slow

Is there a better solution out there?

Guess what? We found one.

AWS Lambda to the rescue!

We had used AWS Lambda in the past for other microservices in our application, and when we considered it for this problem, we found that it offers everything we were looking for. It lets us run as many concurrent functions as we want,* and we pay only while a Lambda function is running. The price per run is also low: using the same 100 K listing benchmark as before, the monthly cost comes to a mere $13.

AWS Lambda gives us the best of both worlds: unlimited computing power instantly!

* There is a cap to the number of concurrent Lambda functions that you can have running, but asking nicely for an increase from the AWS support team will get you more Lambda functions up and running than you’ll know what to do with.
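A Lambda bill has two parts: a per-request charge and a compute charge measured in GB-seconds (allocated memory × run time). The sketch below estimates it. The rates are assumptions based on AWS’s published x86 prices of $0.20 per million requests and $0.0000166667 per GB-second; they vary by region and over time, and the free tier is ignored. The inputs in the usage line are hypothetical and are not meant to reproduce the $13 figure above.

```python
# Assumed rates; check current AWS Lambda pricing for your region.
PRICE_PER_MILLION_REQUESTS = 0.20   # USD
PRICE_PER_GB_SECOND = 0.0000166667  # USD


def lambda_cost(invocations: int, memory_mb: int, avg_duration_s: float) -> float:
    """Estimate Lambda cost in USD, ignoring the free tier."""
    request_cost = invocations / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    # Lambda bills memory in GB where 1 GB = 1,024 MB
    gb_seconds = invocations * (memory_mb / 1024) * avg_duration_s
    return request_cost + gb_seconds * PRICE_PER_GB_SECOND


if __name__ == "__main__":
    # Hypothetical workload: 1 million invocations, 1,024 MB, 1 second each
    print(round(lambda_cost(1_000_000, 1024, 1.0), 2))  # 16.87
```

The point of the formula is that an idle function costs nothing: both terms scale with invocations, unlike an always-on instance.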

How did we implement it?

We designed an architecture that allows us to handle as many requests as we want using AWS Lambda. It is divided into three layers of Lambda invocations:

- Orchestration Lambda function

- Page Group Lambda function

- Page Lambda function

Orchestration Lambda function

This is the Lambda function that starts it all. It does several things:

- Initializes all the required data and makes sure that the user input is valid.

- Gets the number of page requests to be made. To keep each call fast, eBay paginates product information requests. This means that to receive all of a given seller’s products, we tell eBay how many products we want returned per call (up to 200 products per page), and eBay responds with the number of pages to request.

- Invokes as many concurrent Page Group Lambda functions as needed.
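The orchestration steps above could be sketched as follows. This is a minimal illustration, not Crazylister’s code: `page_count` mirrors the arithmetic behind the page total (which in practice comes back from eBay), the group size is an arbitrary tuning knob, and `invoke_async` is a hypothetical callable standing in for an asynchronous Lambda invocation (with boto3, that would be the Lambda client’s `invoke` call with `InvocationType="Event"`).

```python
import math
from typing import Callable, Dict, List

MAX_ENTRIES_PER_PAGE = 200  # eBay's per-page cap mentioned in the post


def page_count(total_items: int, entries_per_page: int = MAX_ENTRIES_PER_PAGE) -> int:
    """Number of result pages needed to cover total_items."""
    if not 1 <= entries_per_page <= MAX_ENTRIES_PER_PAGE:
        raise ValueError("entries_per_page must be between 1 and 200")
    return math.ceil(total_items / entries_per_page)


def group_pages(total_pages: int, group_size: int) -> List[List[int]]:
    """Split 1-based page numbers into consecutive groups of at most group_size."""
    if group_size < 1:
        raise ValueError("group_size must be positive")
    pages = list(range(1, total_pages + 1))
    return [pages[i:i + group_size] for i in range(0, total_pages, group_size)]


def orchestrate(seller_id: str, total_pages: int, group_size: int,
                invoke_async: Callable[[str, Dict], None]) -> int:
    """Fire one asynchronous Page Group invocation per group; return the count."""
    groups = group_pages(total_pages, group_size)
    for group in groups:
        # Hypothetical function name and payload shape
        invoke_async("page-group", {"seller_id": seller_id, "pages": group})
    return len(groups)


if __name__ == "__main__":
    pages = page_count(100_000)  # 500 pages of 200 items
    calls = []
    sent = orchestrate("example-seller", pages, 20,
                       lambda fn, payload: calls.append(payload))
    print(pages, sent)  # 500 25
```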

Page Group Lambda function

We bundle several pages together to be processed by a single Lambda function, which invokes concurrent Page Lambda functions to handle each page in the group. We do this to reduce Lambda invocation overhead. Yes, we can run thousands of Lambda functions in parallel, but issuing thousands of asynchronous invocations one after another from a single function takes too long to meet our performance requirements. Spreading the invocations across several Page Group functions lets the invoking itself happen in parallel.
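A Page Group function might fan out its pages with a thread pool, so that its invocation calls overlap instead of running one after another. A minimal sketch, assuming a hypothetical `invoke_async(function_name, payload)` callable that triggers an asynchronous Lambda invocation and a hypothetical event shape of `{"seller_id": ..., "pages": [...]}`; the worker count is an arbitrary choice.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def handle_page_group(event: Dict, invoke_async: Callable[[str, Dict], None],
                      max_workers: int = 10) -> int:
    """Invoke one Page function per page in the group, using parallel threads."""
    seller_id = event["seller_id"]
    pages: List[int] = event["pages"]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [
            pool.submit(invoke_async, "page", {"seller_id": seller_id, "page": page})
            for page in pages
        ]
        for future in futures:
            future.result()  # surface any invocation error to the caller
    return len(pages)


if __name__ == "__main__":
    sent = []
    count = handle_page_group({"seller_id": "example-seller", "pages": [1, 2, 3]},
                              lambda fn, payload: sent.append(payload["page"]))
    print(count, sorted(sent))  # 3 [1, 2, 3]
```

For example, with 500 pages and groups of 20, the orchestrator issues 25 invocations instead of 500, and each group’s 20 invocations are issued concurrently.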

Page Lambda function

Each Page Lambda function handles one eBay page that contains a number of the seller’s products. It inserts the cross-selling gallery into each product’s description and sends the updated product information back to eBay.
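The core of a Page function is a text transformation. The sketch below assumes, hypothetically, that the gallery is wrapped in HTML comment markers so that re-running an update replaces the previous gallery instead of appending a second one; `revise` is a hypothetical callable standing in for the eBay API call that writes the description back.

```python
import re
from typing import Callable, Dict, List

# Hypothetical markers that delimit the injected gallery
START = "<!-- xsell-gallery:start -->"
END = "<!-- xsell-gallery:end -->"
_GALLERY = re.compile(re.escape(START) + r".*?" + re.escape(END), re.DOTALL)


def insert_gallery(description: str, gallery_html: str) -> str:
    """Append the gallery, or replace one inserted by an earlier run."""
    block = START + gallery_html + END
    if _GALLERY.search(description):
        # A function replacement avoids backslash-escape processing in block
        return _GALLERY.sub(lambda _match: block, description, count=1)
    return description + block


def handle_page(items: List[Dict], gallery_html: str,
                revise: Callable[[str, str], None]) -> int:
    """Update every item on one eBay result page; return how many were sent."""
    for item in items:
        revise(item["item_id"], insert_gallery(item["description"], gallery_html))
    return len(items)


if __name__ == "__main__":
    first = insert_gallery("<p>Blue mug</p>", "<div>v1</div>")
    second = insert_gallery(first, "<div>v2</div>")
    print(second.count(START), "v2" in second, "v1" in second)  # 1 True False
```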

This architecture empowers us to process a virtually unlimited number of product pages simultaneously.

A few things we learned along the way

Invest time in finding the right solution; it might save you time and money later on.

AWS Lambda wasn’t our go-to solution for this feature. We had little experience with it, and our use case is probably not what Lambda functions were made for. But sometimes, if you understand the problem at hand, if you know your available resources, and if you use a little “outside of the box” thinking, you might find the most fitting solutions in places you didn’t think were relevant.

The Serverless Framework is awesome!

We use the Serverless Framework to write, check, and deploy our AWS Lambda functions with ease. If you want to work with AWS Lambda, you want to use the Serverless Framework. Serverless does all the AWS Lambda configuration for you and lets you deploy changes to your Lambda functions in seconds. But the best thing is that it allows you to invoke your Lambda function locally, making testing as easy as it can be and development much faster.

Make sure your Lambda functions are testable.

Local invoke is, for us, the best feature the Serverless Framework has to offer, so make sure you get the most out of it. Invoking locally doesn’t require deploying your Lambda functions, so testing is much faster. Try to keep each Lambda function testable independently of the rest of the feature flow, so that you can run it locally. For example, we created test inputs in the same format as an Invoke call to make sure our local Lambda functions behave exactly as their remote versions will.
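One way to keep a function testable is a thin `handler(event, context)` entry point (the standard Python Lambda handler signature) that only validates the event and delegates to plain functions. The sketch below is an assumption about structure with a hypothetical event shape; the same JSON could be saved to a file and passed to the Serverless Framework with `serverless invoke local --function <name> --path <file>`.

```python
import json
from typing import Any, Dict, List


def schedule_pages(seller_id: str, pages: List[int]) -> Dict[str, Any]:
    """Business logic kept free of AWS dependencies (hypothetical example)."""
    return {"seller_id": seller_id, "pages_scheduled": len(pages)}


def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Thin Lambda entry point: validate the event, then delegate."""
    missing = [key for key in ("seller_id", "pages") if key not in event]
    if missing:
        raise ValueError(f"event is missing {missing}")
    return schedule_pages(event["seller_id"], event["pages"])


# The same JSON payload a real Invoke call would carry
SAMPLE_EVENT = '{"seller_id": "example-seller", "pages": [1, 2, 3]}'

if __name__ == "__main__":
    print(handler(json.loads(SAMPLE_EVENT), None))
```

Because `handler` never touches AWS directly, the test input exercises exactly the code path that runs remotely.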

Use stages for environment separation.

The obvious use case for stages is to separate production code from development code, but you can take it a step further by using different stages for different features. If several people are working on the same Lambda function, you might want each person to deploy their code to a different stage, so that nobody overwrites anyone else’s deployment during final testing.

Wrap it up!

As a startup, we’re used to doing things lean, but lean doesn’t always have to mean the minimum effort to get something done. In our case, the lean way led us to build a far superior solution. We started with a sub-par solution that would have cost over $3,000 a month. With the help of AWS Lambda, we ended up with an excellent implementation for a fraction of the cost.