AWS Partner Network (APN) Blog

Generative AI Trends and Opportunities for AWS Partners: A Conversation with McKinsey & Company

By Priya Arora, Global Head of Generative AI Center of Excellence – AWS Partner Organization

As the AWS Partner community continues to build and evolve its generative artificial intelligence (AI) offerings to meet customer needs, several recurring questions have emerged. Answering them helps inform where partners invest, and where and how they recommend their customers invest.

These questions include:

- What generative AI use cases have the most potential to unlock business value? How do they manifest across priority industry verticals?

- How can partners accelerate customers’ realization of value with generative AI?

- What are best practices for building a long-term and scalable generative AI strategy and infrastructure?

Through the newly introduced Generative AI Center of Excellence for AWS Partners, we are excited to share perspectives on many of these questions. This includes leveraging the AWS Partner Network (APN) to bring unique insights from a variety of partner contributors and thought leaders.

To kick off this series, I recently sat down for a Q&A session with McKinsey & Company Senior Partner Naveen Sastry to talk about generative AI opportunities and best practices in implementation.

Recent whitepapers published by McKinsey & Company share data and insights that can help inform generative AI planning and execution:

- The economic potential of generative AI: The next productivity frontier

- Technology’s generational moment with generative AI: A CIO and CTO guide

These whitepapers note the roughly $4 trillion of economic value that generative AI could enable, and the Q&A below delves into the direct implications and how companies can capture that value.

Q&A with McKinsey & Company

AWS: Can you share a little about your background and role at McKinsey & Company?

McKinsey: I am Naveen Sastry, Senior Partner at McKinsey in our digital practice. I serve clients across industries, as well as technology and software companies, on cloud and AI. Through QuantumBlack, AI by McKinsey, our firm’s AI arm, we work with clients to improve performance by deploying cutting-edge advanced analytics, including generative AI. QuantumBlack unites more than 5,000 practitioners, including more than 1,500 data scientists and engineers, and has five R&D centers around the globe.

McKinsey is uniquely positioned to serve clients on generative AI. We believe that value at scale from generative AI requires much more than foundation models (FMs), including large language models (LLMs). Through QuantumBlack, we have developed a set of ready-to-deploy code assets that we’re bringing to our clients’ tech stack to build generative AI applications at scale, augmented with relevant domain (industry or function) expertise and toolkits. These best practices that we follow and implement can inspire and guide AWS Partners as they help their customers on generative AI.

AWS: Both whitepapers share perspectives on the potential value to be derived through generative AI; what are the risks and opportunity costs to businesses not investing or deploying generative AI? How can they think about proactively addressing potential hurdles to implementation?

McKinsey: We see two main risks for organizations not investing in or deploying generative AI: 1) losing competitive advantage by waiting to invest; and 2) not scaling generative AI quickly, leading to organizations getting stuck in pilot purgatory. Speed is a strategy here. There are material costs to waiting to start with generative AI, so an early and rapid path to production is key.

A big hurdle we see is organizations not scaling quickly enough. Organizations often experiment with “cool ideas,” but few identify a business challenge and deploy a solution to address the pain. This happens because organizations are either stuck too long in figuring out use cases or wait to have all their data perfectly lined up. AWS Partners can help their customers on generative AI across three phases: building a strategic roadmap, delivering a minimally viable generative AI product, and scaling across the organization.

AWS: What should AWS Partners know about building a strategic roadmap for generative AI?

McKinsey: Using a two-speed approach to “learn into” generative AI accelerates the strategic roadmap phase. To do this, AWS Partners should help their customers identify two use cases that can be delivered quickly to prove impact—that’s the first speed. Then, help them pick another two use cases that will fundamentally alter their business—this is the second speed. These four use cases should be prioritized based on impact and feasibility, setting the course to realize generative AI impact quickly.
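To make the impact-and-feasibility prioritization above concrete, here is a minimal Python sketch. It is an illustration only, not a McKinsey or AWS method: the `UseCase` fields, the 1-5 scoring scale, the impact-times-feasibility score, and every candidate name and number are hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    impact: int           # hypothetical scale: 1 (low) to 5 (high)
    feasibility: int      # hypothetical scale: 1 (hard) to 5 (easy)
    transformative: bool  # True if it would fundamentally alter the business


def pick_two_speed(candidates, per_speed=2):
    """Return (quick_wins, transformative_bets), each ranked by impact * feasibility."""
    def score(use_case):
        return use_case.impact * use_case.feasibility

    # First speed: use cases that can be delivered quickly to prove impact.
    quick = sorted((c for c in candidates if not c.transformative),
                   key=score, reverse=True)
    # Second speed: use cases that would fundamentally alter the business.
    bets = sorted((c for c in candidates if c.transformative),
                  key=score, reverse=True)
    return quick[:per_speed], bets[:per_speed]


# Made-up candidates and scores, for illustration only.
candidates = [
    UseCase("support ticket summarization", 3, 5, False),
    UseCase("marketing copy drafts", 3, 4, False),
    UseCase("internal FAQ search", 2, 4, False),
    UseCase("AI-assisted product design", 5, 2, True),
    UseCase("automated claims handling", 5, 3, True),
    UseCase("personalized pricing", 4, 2, True),
]

quick_wins, bets = pick_two_speed(candidates)
print([u.name for u in quick_wins])
print([u.name for u in bets])
```

In practice the scores would come from discussions with the customer rather than fixed numbers, and a simple product of two scores is only one of many possible ranking rules.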

AWS: Once the use cases are prioritized, how should AWS Partners think about proving the value?

McKinsey: By delivering the generative AI product as fast as possible. Start with a proof of concept (PoC) or demo that can be built quickly in 1-2 weeks to show the art of the possible. Then, build a minimally viable product (MVP) on the organization’s infrastructure, and scale the MVP across the organization. To do this, organizations must think through talent needs and gaps, tools, and budget. Some organizations will have internal talent, tools, and budget to develop their generative AI use cases in-house. Most organizations, however, will likely need to consider outsourcing aspects of development to move quickly.

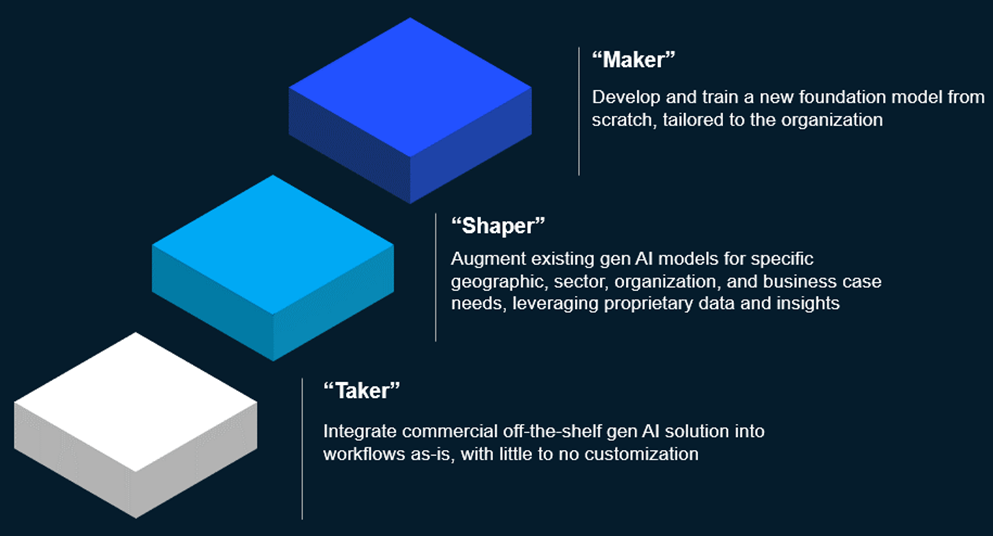

We see three archetypes for deploying generative AI solutions based on the talent, tools, and budget available: takers, shapers, and makers.

- Takers lean heavily on integrating off-the-shelf generative AI solutions into their workflows.

- Shapers (most corporate clients, outside of the technology space) customize existing generative AI models and tools based on their organization’s needs.

- Makers build foundation models from scratch.

Each of these archetypes can lead to successful deployments. While the degree of outsourcing can vary, they can all benefit from outsourcing. The key takeaway across each is moving away from getting stuck in the experimentation phase and embarking on a path to production-grade deployments as quickly as possible.

AWS: Once an MVP is built, how should AWS Partners think about scaling it?

McKinsey: Over time, organizations will need help becoming more efficient with generative AI development and deployments. Most tech-forward organizations are moving towards a product and platform operating model. Organizations could use the same construct and create a centralized, cross-functional platform team to enable generative AI across the enterprise. By moving generative AI capabilities into this model, AWS Partners can help create reusable components and scalable infrastructure, and drive organizational alignment.

In addition to technology scaling, organizations will need help evolving their talent and operating model strategy to accommodate generative AI. The adoption strategy (taker, shaper, maker) and use-case roadmap define the talent needed to deliver and scale, with the shaper and maker approaches requiring more talent than the taker approach. Over time, organizations should periodically re-evaluate talent needs and strategy to ensure efficient resource allocation as more use cases get added and existing ones evolve. This could mean building internal teams and/or working with partners to scale the use cases and maintain them on an ongoing basis.

McKinsey has invested more than three years into this topic and a few months ago launched a product suite, QB Horizon, which includes recent acquisitions, like Iguazio, to allow our clients to scale AI.

AWS: There is a robust analysis of the potential impact of generative AI on business functions across 20+ industries; how important is industry specialization and knowledge to fully realize the value associated with generative AI applications?

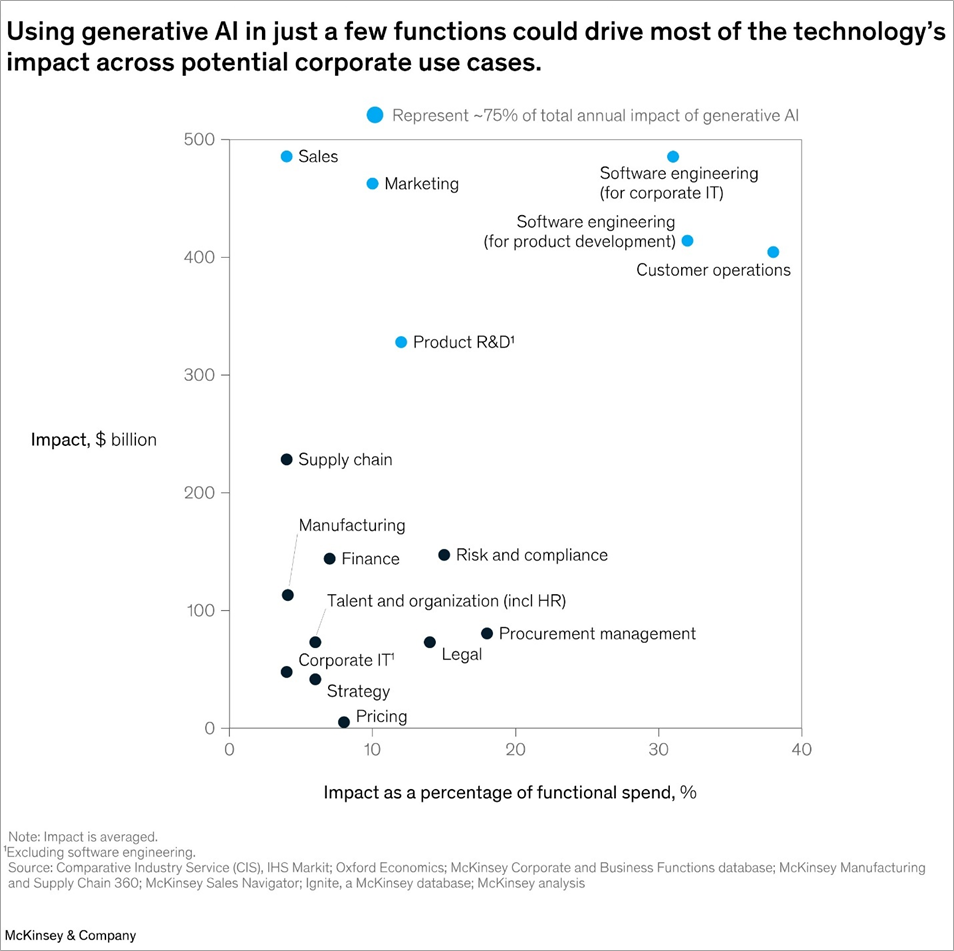

McKinsey: The research in the whitepaper “The economic potential of generative AI” estimates that generative AI could add the equivalent of $2.6-$4.4 trillion annually across the 60+ use cases we analyzed. About 75% of that value falls across four functional areas: customer operations, marketing and sales, software engineering, and R&D.
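For a rough sense of scale, applying the 75% share to the quoted range is simple arithmetic. The short Python sketch below is illustrative only: the function name is hypothetical, and the resulting range is derived here from the figures above, not stated in the whitepaper.

```python
def share_of_range(low, high, share):
    """Apply a fractional share to both ends of a (low, high) range."""
    return round(low * share, 2), round(high * share, 2)


# Figures quoted above: $2.6-$4.4 trillion annually, about 75% in four functions.
low, high = share_of_range(2.6, 4.4, 0.75)
print(f"${low}-${high} trillion")  # prints "$1.95-$3.3 trillion"
```

Under this back-of-the-envelope reading, the four functional areas would account for very roughly $2-$3.3 trillion of the annual estimate.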

The impact potential of generative AI in each functional area depends on the industry in which applications are deployed. Due to factors like industry need, regulatory requirements, and diversity of data used, we recommend AWS Partners invest in and build industry-specific applications to help their customers succeed with generative AI. To illustrate this, we can examine applications in each functional area.

Figure 1 – From McKinsey’s “The economic potential of generative AI: The next productivity frontier.”

- Customer operations: We are advising banks and financial services companies on how to use generative AI to augment or altogether automate call center activities. These applications can steer live client calls based on the bank’s product offerings, previous Q&A, policies, and past client logs. Banks and financial services companies are highly regulated and, as such, applications built for this use case require enhanced controls for using customer data, leveraging human-in-the-loop feedback methods to ensure compliance. Consider customer privacy needs and regulatory restrictions when building MVPs for customers in this realm.

- Marketing and sales: Retail and consumer packaged goods (CPG) companies can realize generative AI’s value via creative content generation. We’re helping our CPG clients create drafts at scale in the form of idea generation (storyboarding) and mass-version creation (personalized emails with different media). These applications leverage customer data from many sources, including purchase intent data from ecommerce sites, web browsing behavior, and social media posts, to generate targeted, multi-modal content for end customers. For customers with marketing and sales applications, consider the data that can be leveraged to support multi-modal content generation based on the customer’s target audience.

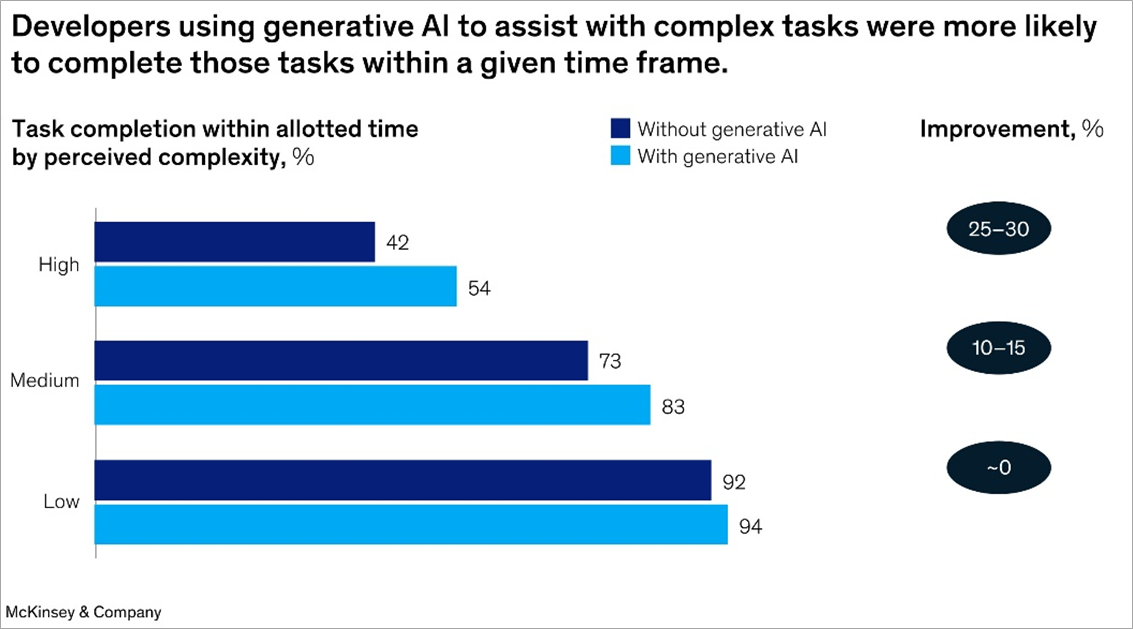

- Software engineering: Generative AI can assist in code creation through the build and test stages for tech-centric companies. In our work with software developer teams, we found that gains vary by role type and activity, with some highly skilled developers reporting 50-80% gains and others reporting less. This gap highlights that coding copilots alone would be insufficient and that additional levers, such as developer upskilling and putting in place enterprise enablers (DevSecOps for generative AI), can help unlock full productivity.

Figure 2 – Taken from McKinsey’s “Unleashing developer productivity with generative AI.”

- Research and development: Life sciences and MedTech companies have specific use cases that may require fine-tuned models to realize optimal value from generative AI within their R&D function. While large, all-encompassing models can be great for broad use cases (such as conversational AI bots), through our work with life sciences and MedTech companies we are finding that certain use cases, such as large molecule design and protein sequencing, will require fine-tuning of LLMs. As such, customers may also need value-added services around large language model fine-tuning, data engineering, and LLMOps while ensuring the industry’s stringent requirements are met.

AWS: You cite nine actions all technology leaders can take to create value and manage risks for generative AI; how do those actions intersect with build vs. buy decisions related to generative AI?

McKinsey: The nine actions help technology leaders assess what they need to do to create value and manage risks for generative AI. As a quick recap, these nine actions are:

- Determine the company’s posture for adopting generative AI.

- Identify use cases that build value.

- Reimagine the technology function.

- Leverage existing services including open-source generative AI models.

- Upgrade enterprise technology architecture.

- Develop data architecture to enable access to quality data and to optimize costs.

- Create a centralized, cross-functional generative AI platform team.

- Upskill talent.

- Set up risk guidelines.

Considering each action will give a technology leader a good sense of where they stand with generative AI and will influence their build vs. buy strategy. Earlier, I mentioned three archetypes for deploying generative AI solutions: taker, shaper, and maker. Each archetype lends itself to different build vs. buy considerations, with shapers and makers more in the “build” camp and takers in the “buy” camp.

- Takers use publicly available models through a chat interface or API, with little-to-no customization. Takers focus less on “building” and more on “consuming” generative AI solutions, such as an off-the-shelf coding assistant for software developers or a general-purpose customer service chatbot delivered through as-a-service models. Takers may still require help with services like workflow integration, custom user interface (UI), and user experience (UX).

- Shapers integrate commercially available models with internal data and systems to generate more customized results. With shapers, there are two methods: 1) bringing the model to the data; or 2) bringing the data to the model. In the former, the model is hosted on the organization’s infrastructure or virtual private cloud (VPC), reducing the need for data transfers. In the latter, the organization uses models hosted on public cloud infrastructure. Say a company wants to launch a customer service chatbot, fine-tuned with industry-specific knowledge and chat history. To do this, they may need help building the data and model pipeline, fine-tuning the model, and building a plugin layer, in addition to other aspects such as MLOps, business process redesign, workflow integration, UI/UX, and ongoing maintenance of the solutions.

- Makers build a foundation model to address a specific set of use cases. For this, makers require a substantial one-off investment to build and train the model, depending on the scope and complexity of the use cases. Further, makers will also incur annual costs for model inference and maintenance as well as plugin layer maintenance. While organizations choosing this strategy typically have internal data and analytics resources, there could be specific aspects of the build (such as app modernization and security) and ongoing maintenance that AWS Partners can help them with.

Figure 3 – From McKinsey’s “Technology’s generational moment with generative AI: A CIO/CTO guide.”

AWS: You talk about the importance of thinking about the enterprise technology architecture and not just the LLMs; how should AWS Partners guide their customers on this?

McKinsey: One of the biggest misconceptions we hear in generative AI discussions is that it’s just about foundation models, including large language models. While models are required, companies will not realize generative AI’s value by focusing only on the model. They must think beyond the model and consider the creativity, infrastructure, data architecture, MLOps, business process redesign, and change management aspects to ensure full value capture.

- Creativity is required to provide an innovative vision of how to apply the technology to disrupt your industry and business model.

- Infrastructure provides the computing resources and scalability required for training and inference of AI models. AWS Partners can help their customers understand the tradeoffs between different infrastructure options and help with integrations or migrations as needed.

- Data architecture involves structuring data in a way that’s accessible, secure, and optimized for training and inference of AI models. AWS Partners can help customers design and implement the right data architecture for generative AI, prep data to be ingested by the generative AI solution, and build pipelines to ensure data can be efficiently ingested over time.

- MLOps includes processes like model versioning, monitoring, and continuous integration and deployment (CI/CD). AWS Partners can help their customers establish MLOps processes and best practices, and provide tools and platforms for managing and deploying machine learning models.

- Business process redesign is important as generative AI scales within an organization because these processes need to be updated or sometimes altogether changed. Generative AI introduces the need for new workflows, roles, and responsibilities for full value capture. AWS Partners can provide guidance on how to redesign business processes to incorporate generative AI and identify areas where it can have the greatest impact.

- Change management is key as generative AI introduces change within the organization. Companies should consider the impact on employees, stakeholders, and overall culture. Helping companies develop and roll out change management strategies, including training and communication initiatives, will help ensure smooth adoption and integration of generative AI.

Next Steps for AWS Partners

I’d like to thank Naveen Sastry, Senior Partner at McKinsey & Company, for taking the time to provide these insights and practical advice, which can help AWS Partners build actionable strategies to drive generative AI outcomes with customers.

At AWS, we are excited to continue bringing market insights and learnings to our partner channel to help inform generative AI strategies, as well as tools and resources that help AWS Partners act on those plans.

Stay engaged and up to date with training, content, and resources by visiting the Generative AI Center of Excellence for AWS Partners. To get started, log in to AWS Partner Central (login required), navigate to Resources > Guides > Generative AI Center of Excellence, and explore the generative AI playbook. You can also access the Center of Excellence through the AWS Generative AI Partner Resources page.