AWS News Blog

Amazon Kinesis Video Streams – Serverless Video Ingestion and Storage for Vision-Enabled Apps

Cell phones, security cameras, baby monitors, drones, webcams, dashboard cameras, and even satellites can all generate high-intensity, high-quality video streams. Homes, offices, factories, cities, streets, and highways are now host to massive numbers of cameras. They survey properties after floods and other natural disasters, increase public safety, let you know that your child is safe and sound, capture one-off moments for endless “fail” videos (a personal favorite), collect data that helps to identify and solve traffic problems, and more.

Dealing with this flood of video data can be challenging, to say the least. Incoming streams arrive unannounced, individually or by the millions. Each stream contains valuable, real-time data that cannot be deferred, paused, or set aside to be dealt with at a more opportune time. Once you have the raw data, other challenges emerge: storing, encrypting, and indexing the video data all come to mind. Extracting value (diving deep into the content, understanding what’s there, and driving action) is the next big step.

New Amazon Kinesis Video Streams

Today I would like to introduce you to Amazon Kinesis Video Streams, the newest member of the Amazon Kinesis family of real-time streaming services. You now have the power to ingest streaming video (or other time-encoded data) from millions of camera devices without having to set up or run your own infrastructure. Kinesis Video Streams accepts your incoming streams, stores them durably and in encrypted form, creates time-based indexes, and enables the creation of vision-enabled applications. You can process the incoming streams using Amazon Rekognition Video, MXNet, TensorFlow, OpenCV, or your own custom code, all in support of the cool new robotics, analytics, and consumer apps that I know you will dream up.

We manage all of the infrastructure for you. First, you use our Producer SDK (device-side) to create an app and then send us video from the device of your choice. The incoming video arrives over a secure TLS connection, is encrypted with an AWS Key Management Service (AWS KMS) key, and is stored in time-indexed form. Next, you use the Video Streams Parser Library (cloud-side) to consume the video stream and to extract value from it.
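To make “time-indexed” a little more concrete, here is a toy, in-memory sketch of the idea: fragments are kept sorted by start time so that a time-range query can use binary search. The class and method names are hypothetical and are not part of any AWS SDK; the real service handles indexing, KMS encryption, and durable storage for you, and this sketch does none of the latter two.

```python
import bisect
from typing import List, Tuple


class TimeIndexedFragmentStore:
    """Hypothetical toy store: keeps fragments sorted by start time (ms).

    No encryption or durability here; the real service does both.
    """

    def __init__(self) -> None:
        self._starts: List[int] = []  # sorted fragment start times
        self._fragments: List[Tuple[int, int, bytes]] = []  # (start, end, payload)

    def add(self, start_ms: int, end_ms: int, payload: bytes) -> None:
        # Find the insertion point that keeps both lists ordered by start time.
        i = bisect.bisect_right(self._starts, start_ms)
        self._starts.insert(i, start_ms)
        self._fragments.insert(i, (start_ms, end_ms, payload))

    def query(self, from_ms: int, to_ms: int) -> List[bytes]:
        """Return payloads of fragments that overlap [from_ms, to_ms)."""
        # Binary search: fragments starting at or after to_ms cannot overlap.
        hi = bisect.bisect_left(self._starts, to_ms)
        # Of the remaining candidates, keep those that end after from_ms.
        return [p for (_, end, p) in self._fragments[:hi] if end > from_ms]


store = TimeIndexedFragmentStore()
store.add(2000, 4000, b"b")
store.add(0, 2000, b"a")  # arrives out of order; the index stays sorted
store.add(4000, 6000, b"c")
print(store.query(2500, 4500))  # [b'b', b'c']
```

The linear filter after the binary search is fine for a sketch; a production index would also bound the scan from below.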

Regardless of how much you send – low resolution or high, from one device or from millions – Kinesis Video Streams will scale to meet your needs. You can, as I never get tired of saying, focus on your application and on your business. Amazon Kinesis Video Streams builds on parts of AWS that you already know. It stores video in S3 for cost-effective durability, uses AWS Identity and Access Management (IAM) for access control, and is accessible from the AWS Management Console, AWS Command Line Interface (AWS CLI), and through a set of APIs.
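Because access control goes through IAM, a device typically gets credentials scoped to its own stream. The sketch below builds such a policy as a Python dictionary. The helper name, the placeholder account ID, and the exact set of actions a producer needs are assumptions for illustration, so check the Kinesis Video Streams IAM documentation before relying on anything like it.

```python
import json


def producer_policy(region: str, account_id: str, stream_name: str) -> dict:
    """Hypothetical helper: IAM policy letting one device write to one stream."""
    # Stream ARNs end in a creation-time suffix, hence the trailing wildcard.
    stream_arn = f"arn:aws:kinesisvideo:{region}:{account_id}:stream/{stream_name}/*"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Assumed minimal set for a producer: look up the stream,
                # find its data endpoint, and upload media.
                "Action": [
                    "kinesisvideo:DescribeStream",
                    "kinesisvideo:GetDataEndpoint",
                    "kinesisvideo:PutMedia",
                ],
                "Resource": stream_arn,
            }
        ],
    }


# 123456789012 is a placeholder account ID.
print(json.dumps(producer_policy("us-east-1", "123456789012", "camera-lobby"), indent=2))
```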

Amazon Kinesis Video Streams Concepts

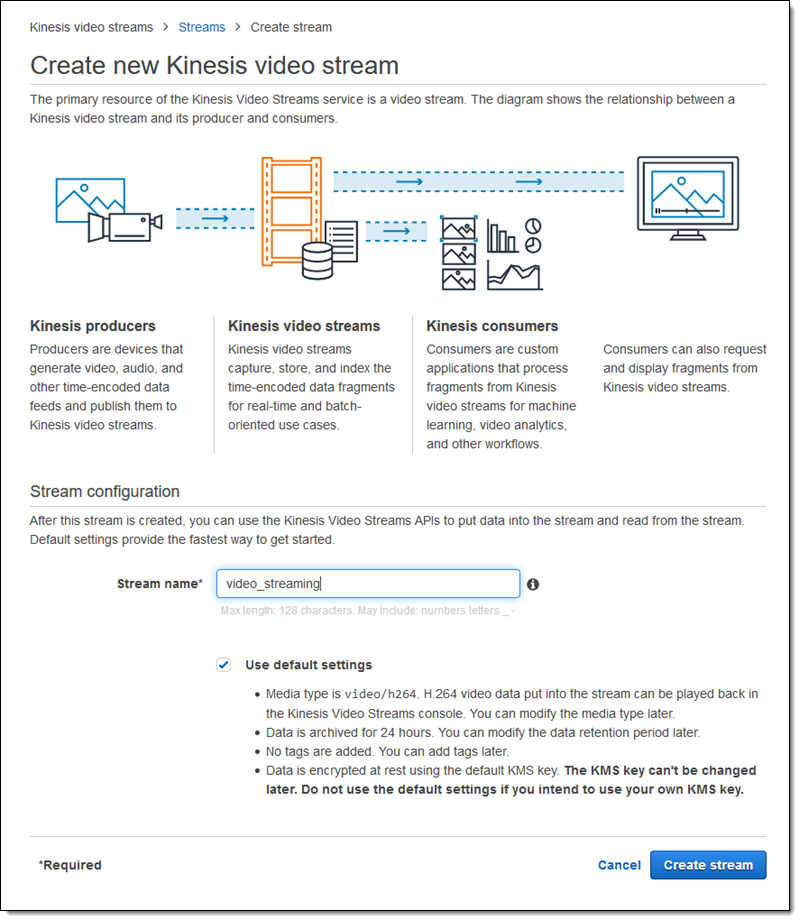

Let’s run through a couple of concepts and then set up a stream.

Producer – A producer is a data source that puts data into a stream. It could be a baby monitor, a video camera on a drone, or something more exotic: perhaps a temperature sensor or a satellite! The Amazon Kinesis Video Producer SDK provides a set of functions that make it easy to establish a connection and to stream video.

Stream – A stream allows you to transport live video data, optionally store it, and make it available for real-time or batch consumption. Streams can also carry other types of time-encoded data including audio, radar, lidar, and sensor readings. In most cases, there’s a 1-to-1 mapping between producers and streams. Multiple independent applications can consume and process data from a single stream.
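The point about multiple independent consumers is easiest to see with a toy model: reading from a stream does not remove data, and each application keeps its own position. The class below is a hypothetical in-memory stand-in, not the Kinesis Video Streams API.

```python
from typing import Dict, List


class InMemoryStream:
    """Hypothetical append-only stream with one read cursor per consumer."""

    def __init__(self) -> None:
        self._items: List[str] = []
        self._cursors: Dict[str, int] = {}

    def put(self, item: str) -> None:
        self._items.append(item)

    def read(self, consumer: str, max_items: int = 10) -> List[str]:
        # Reading only advances this consumer's cursor; the data stays put,
        # so other consumers see the same items at their own pace.
        pos = self._cursors.get(consumer, 0)
        batch = self._items[pos:pos + max_items]
        self._cursors[consumer] = pos + len(batch)
        return batch


stream = InMemoryStream()
for name in ("frag-1", "frag-2", "frag-3"):
    stream.put(name)

print(stream.read("detector", max_items=2))  # ['frag-1', 'frag-2']
print(stream.read("archiver"))               # ['frag-1', 'frag-2', 'frag-3']
print(stream.read("detector"))               # ['frag-3']
```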

Fragments & Frames – A frame is a single unit of time-encoded data, such as one image from a video. A fragment is a time-bound set of individual frames from a stream.
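Here is one way to model frames and fragments in Python. The class and field names are hypothetical, and the rule that a fragment begins with a keyframe (a frame that can be decoded without earlier frames) is an assumption that matches how video is usually fragmented, not a detail taken from the service documentation.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Frame:
    timestamp_ms: int   # capture time on the producer's clock
    is_keyframe: bool   # True if decodable without earlier frames
    data: bytes = b""


@dataclass(frozen=True)
class Fragment:
    frames: Tuple[Frame, ...]

    def __post_init__(self) -> None:
        # Assumed invariants for this sketch: non-empty, starts on a
        # keyframe, and timestamps never go backwards.
        if not self.frames or not self.frames[0].is_keyframe:
            raise ValueError("a fragment must start with a keyframe")
        stamps = [f.timestamp_ms for f in self.frames]
        if stamps != sorted(stamps):
            raise ValueError("frame timestamps must be non-decreasing")

    @property
    def start_ms(self) -> int:
        return self.frames[0].timestamp_ms

    @property
    def end_ms(self) -> int:
        return self.frames[-1].timestamp_ms

    def covers(self, t_ms: int) -> bool:
        """True if t_ms falls within this fragment's time span."""
        return self.start_ms <= t_ms <= self.end_ms


frag = Fragment((Frame(0, True), Frame(33, False), Frame(66, False)))
print(frag.start_ms, frag.end_ms, frag.covers(50))  # 0 66 True
```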

Consumer – A consumer gets data (fragments or frames) from a stream and processes, analyzes, or displays it. Consumers can run in real time or after the fact, and are built atop the Video Streams Parser Library.

Using Amazon Kinesis Video Streams

As I noted earlier, there’s a 1-to-1 mapping between producers and streams. In most cases, each instance of a producer will create a unique stream using the Kinesis Video Streams API. However, you can create streams manually for test or demo purposes, or if you need a small, fixed number of them.
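If you use Python, a per-device stream could be created with the `create_stream` call on the AWS SDK for Python (boto3) `kinesisvideo` client, roughly as sketched below. The naming scheme, the `video/h264` media type, and the 24-hour retention are assumptions chosen for illustration. The boto3 import sits inside the function so that the name helper can be used without boto3 or AWS credentials.

```python
import re


def stream_name_for_device(device_id: str, prefix: str = "camera") -> str:
    """Hypothetical naming scheme: one stream per producer device.

    Stream names may contain letters, digits, '_', '.', and '-', up to
    256 characters, so anything else is replaced with '-'.
    """
    safe = re.sub(r"[^A-Za-z0-9_.-]", "-", device_id)
    return f"{prefix}-{safe}"[:256]


def create_stream_for_device(device_id: str, region: str = "us-east-1") -> str:
    """Create the device's stream and return its ARN (needs AWS credentials)."""
    import boto3  # imported here so stream_name_for_device works without boto3

    client = boto3.client("kinesisvideo", region_name=region)
    name = stream_name_for_device(device_id)
    response = client.create_stream(
        StreamName=name,
        DeviceName=name[:128],      # device names have a shorter length limit
        MediaType="video/h264",     # assumption: H.264 video
        DataRetentionInHours=24,    # assumption: keep one day of video
    )
    return response["StreamARN"]


print(stream_name_for_device("cam 01/lobby"))  # camera-cam-01-lobby
```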

To create a stream manually, I open up the Kinesis Video Streams Console and click on Create Kinesis video stream.

I simply enter the name of my stream and click on Create stream:

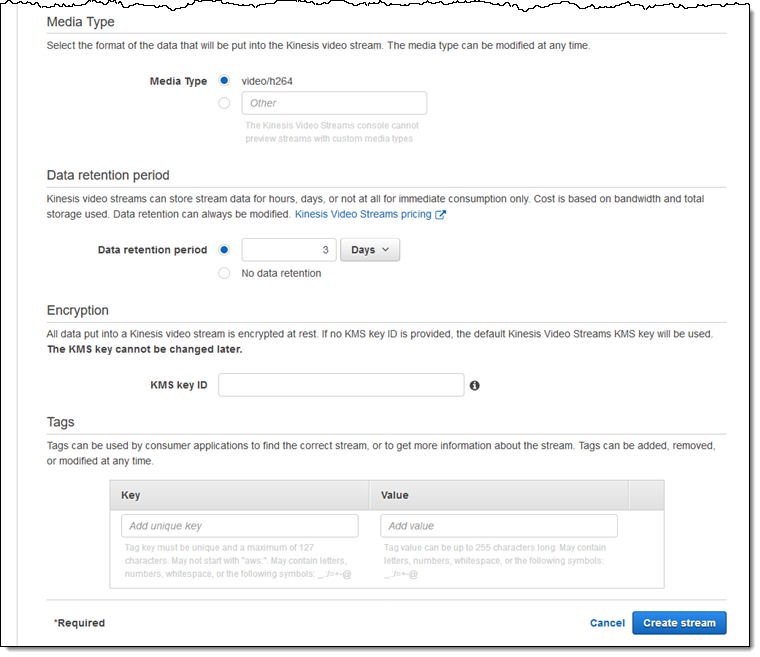

I can uncheck Use default settings if I want to customize my stream (most of the settings can be changed later):
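Retention is one of the settings that can be changed later. In boto3, `update_data_retention` takes a relative change in hours plus the stream’s current version (as returned by `describe_stream`), so a small helper has to translate “I want N hours” into that form. This is a sketch under those assumptions; `retention_change` and `set_retention` are hypothetical names.

```python
from typing import Dict, Optional, Union


def retention_change(current_hours: int,
                     desired_hours: int) -> Optional[Dict[str, Union[str, int]]]:
    """Express a target retention as UpdateDataRetention arguments (None = no change)."""
    if desired_hours == current_hours:
        return None
    if desired_hours > current_hours:
        return {"Operation": "INCREASE_DATA_RETENTION",
                "DataRetentionChangeInHours": desired_hours - current_hours}
    return {"Operation": "DECREASE_DATA_RETENTION",
            "DataRetentionChangeInHours": current_hours - desired_hours}


def set_retention(stream_name: str, desired_hours: int) -> None:
    """Change a stream's retention in place (needs AWS credentials)."""
    import boto3  # imported here so retention_change works without boto3

    client = boto3.client("kinesisvideo")
    info = client.describe_stream(StreamName=stream_name)["StreamInfo"]
    change = retention_change(info["DataRetentionInHours"], desired_hours)
    if change is None:
        return  # already at the desired retention
    client.update_data_retention(
        StreamName=stream_name,
        CurrentVersion=info["Version"],  # guards against concurrent updates
        **change,
    )


print(retention_change(24, 72))
# {'Operation': 'INCREASE_DATA_RETENTION', 'DataRetentionChangeInHours': 48}
```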

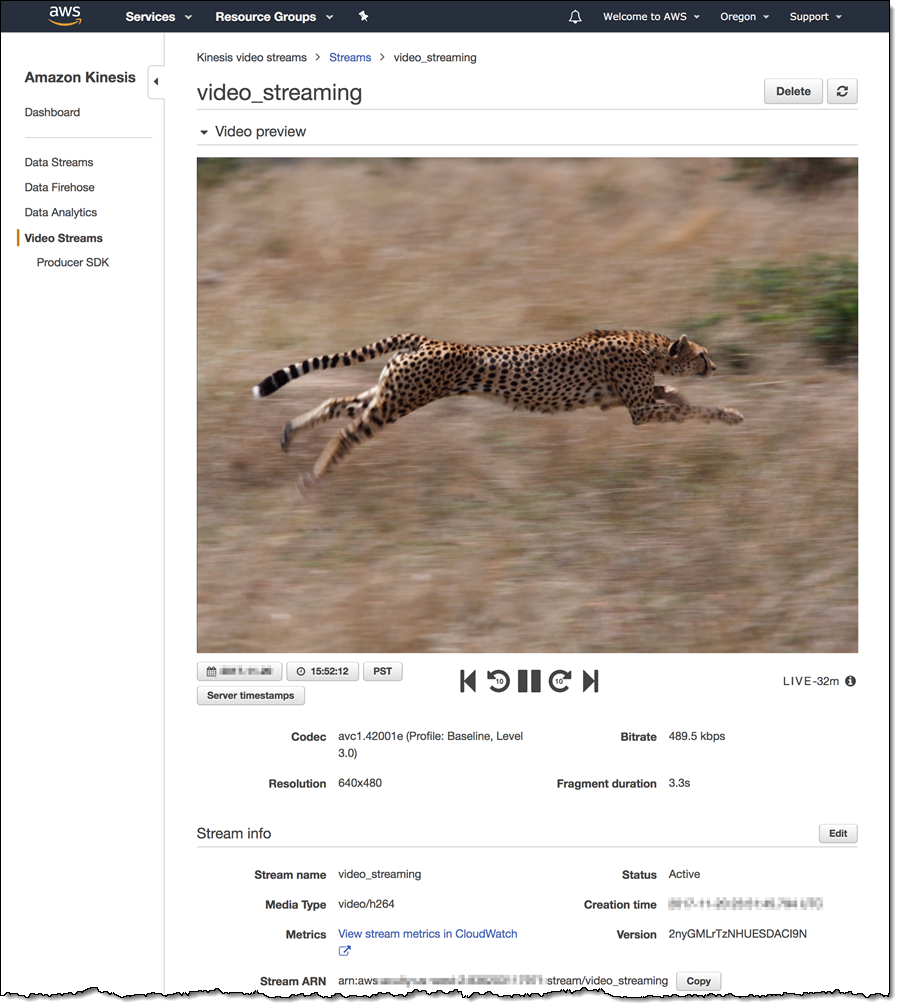

My stream is ready for use immediately. The console will display video as soon as I start to stream it.

The Kinesis team shared this screen with me; I did not have time to take a field trip. Does that make me a Cheetah?

Developing for Amazon Kinesis Video Streams

The next step is to use the Producer SDK to build the producer app. The app runs on the device or out in the field, and is responsible for creating a stream and then sending a series of fragments to it (each typically representing 2 to 10 seconds of video) by calling the PutMedia API.
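The Producer SDK takes care of fragmenting and uploading for you, and PutMedia is reached through that SDK rather than through boto3. Still, the grouping logic is simple enough to sketch: start a new fragment at the first keyframe after the current fragment has reached a target duration. The function name, the `(timestamp_ms, is_keyframe)` tuple, and the 2-second default target are assumptions for illustration.

```python
from typing import Iterable, Iterator, List, Tuple

Frame = Tuple[int, bool]  # hypothetical minimal frame: (timestamp_ms, is_keyframe)


def fragment_frames(frames: Iterable[Frame], target_ms: int = 2000) -> Iterator[List[Frame]]:
    """Group frames into fragments that begin on a keyframe.

    A new fragment starts at the first keyframe that arrives once the
    current fragment spans at least target_ms.
    """
    current: List[Frame] = []
    for ts, is_key in frames:
        if is_key and current and ts - current[0][0] >= target_ms:
            yield current  # hand the finished fragment to the uploader
            current = []
        if not current and not is_key:
            continue  # frames before the first keyframe cannot be decoded; skip them
        current.append((ts, is_key))
    if current:
        yield current  # flush the last, possibly shorter, fragment


# 2 frames per second, with a keyframe every second.
frames = [(t, t % 1000 == 0) for t in range(0, 5000, 500)]
for fragment in fragment_frames(frames):
    print(fragment[0][0], len(fragment))  # start time and frame count
```

With these inputs the fragments start at 0, 2000, and 4000 ms, holding 4, 4, and 2 frames. Cutting only at keyframes means every fragment can be decoded on its own, at the cost of fragment lengths that drift a little from the target.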

The consumer side calls the GetMedia and GetMediaForFragmentList APIs to access content from the stream in Matroska (MKV) container format, and uses the included Video Streams Parser Library to extract the desired content. GetMedia is intended for continuous streaming with very low latency; GetMediaForFragmentList is batch-oriented and allows selective processing.
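A Python consumer can reach both paths through boto3, as sketched below. Each call first asks the `kinesisvideo` client for a stream-specific data endpoint (`get_data_endpoint`) and then uses the `kinesis-video-media` client for `get_media`, or the `kinesis-video-archived-media` client for `list_fragments` and `get_media_for_fragment_list`. The helper names are hypothetical, the sketch returns raw MKV bytes rather than parsed frames (the Parser Library is a separate Java library), and it ignores `list_fragments` pagination and per-request limits.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, Iterator


def live_selector() -> Dict[str, str]:
    # GetMedia: begin at the newest fragment and keep reading as data arrives.
    return {"StartSelectorType": "NOW"}


def time_range_selector(start: datetime, end: datetime) -> Dict[str, Any]:
    # ListFragments: choose fragments by the time the service received them.
    return {
        "FragmentSelectorType": "SERVER_TIMESTAMP",
        "TimestampRange": {"StartTimestamp": start, "EndTimestamp": end},
    }


def _media_client(service: str, stream_name: str, api_name: str):
    import boto3  # imported here so the selector helpers work without boto3

    # Each media API is served from a stream-specific data endpoint.
    endpoint = boto3.client("kinesisvideo").get_data_endpoint(
        StreamName=stream_name, APIName=api_name
    )["DataEndpoint"]
    return boto3.client(service, endpoint_url=endpoint)


def read_live(stream_name: str, chunk_size: int = 64 * 1024) -> Iterator[bytes]:
    """Yield raw MKV bytes from GetMedia (continuous, low latency)."""
    client = _media_client("kinesis-video-media", stream_name, "GET_MEDIA")
    payload = client.get_media(StreamName=stream_name, StartSelector=live_selector())["Payload"]
    while chunk := payload.read(chunk_size):
        yield chunk


def read_range(stream_name: str, start: datetime, end: datetime) -> bytes:
    """Return raw MKV bytes for a time window (batch, selective)."""
    lister = _media_client("kinesis-video-archived-media", stream_name, "LIST_FRAGMENTS")
    fragments = lister.list_fragments(
        StreamName=stream_name, FragmentSelector=time_range_selector(start, end)
    )["Fragments"]  # sketch: ignores NextToken pagination
    if not fragments:
        return b""
    # Put fragments back in time order before fetching them.
    ordered = sorted(fragments, key=lambda f: f["ServerTimestamp"])
    numbers = [f["FragmentNumber"] for f in ordered]
    getter = _media_client("kinesis-video-archived-media", stream_name,
                           "GET_MEDIA_FOR_FRAGMENT_LIST")
    return getter.get_media_for_fragment_list(
        StreamName=stream_name, Fragments=numbers
    )["Payload"].read()


now = datetime.now(timezone.utc)
print(time_range_selector(now - timedelta(minutes=5), now)["FragmentSelectorType"])
```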

Now Available

Amazon Kinesis Video Streams is available in the US East (N. Virginia), US West (Oregon), Europe (Ireland), Europe (Frankfurt), and Asia Pacific (Tokyo) Regions and you can start building your vision-enabled apps with it today.

Pricing is based on three factors: amount of video produced, amount of video consumed, and amount of video stored.
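Since all three factors are volumes of data, a back-of-the-envelope estimate only needs a bitrate and a duration. The sketch below converts a bitrate in megabits per second into decimal gigabytes. The function names and the simplifying assumptions (every consumer reads all produced video exactly once, and recording is continuous so the stored amount is capped by the retention window) are mine for illustration, not the service’s billing rules. No prices are hard-coded; multiply the results by the current rates on the pricing page.

```python
from dataclasses import dataclass


def gigabytes(bitrate_mbps: float, hours: float) -> float:
    """Decimal GB produced by a stream at bitrate_mbps for `hours` hours."""
    bytes_per_second = bitrate_mbps * 1e6 / 8  # megabits/s -> bytes/s
    return bytes_per_second * hours * 3600 / 1e9


@dataclass
class VolumeEstimate:
    produced_gb: float  # video sent into the stream
    consumed_gb: float  # video read back out by consumers
    stored_gb: float    # video held at any one time


def estimate_volumes(bitrate_mbps: float, hours_recorded: float,
                     consumers: int, retention_hours: float) -> VolumeEstimate:
    produced = gigabytes(bitrate_mbps, hours_recorded)
    # Assumption: each consumer reads everything exactly once.
    consumed = produced * consumers
    # Assumption: continuous recording, so at most retention_hours of video is kept.
    stored = gigabytes(bitrate_mbps, min(hours_recorded, retention_hours))
    return VolumeEstimate(produced, consumed, stored)


# One 2 Mbps camera for 30 days, two consumers, 24-hour retention.
print(estimate_volumes(2, 30 * 24, consumers=2, retention_hours=24))
```

For that example, the arithmetic works out to 648 GB produced, 1,296 GB consumed, and about 21.6 GB held in storage at any one time.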

— Jeff;