AWS News Blog

Powerful AWS Platform Features, Now for Containers

Containers are great but they come with their own management challenges. Our customers have been using containers on AWS for quite some time to run workloads ranging from microservices to batch jobs. They told us that managing a cluster, including the state of the EC2 instances and containers, can be tricky, especially as the environment grows. They also told us that integrating the capabilities you get with the AWS platform, such as load balancing, scaling, security, monitoring, and more, with containers is a key requirement. Amazon Elastic Container Service (Amazon ECS) was designed to meet all of these needs and more.

We created Amazon ECS to make it easy for customers to run containerized applications in production. There is no container management software to install and operate because it is all provided to you as a service. You just add the EC2 capacity you need to your cluster and upload your container images. Amazon ECS takes care of the rest, deploying your containers across a cluster of EC2 instances and monitoring their health. Customers such as Expedia and Remind have built Amazon ECS into their development workflow, creating PaaS platforms on top of it. Others, such as Prezi and Shippable, are leveraging Amazon ECS to eliminate operational complexities of running containers, allowing them to spend more time delivering features for their apps.

AWS has highly reliable and scalable fully managed services for load balancing, auto scaling, identity and access management, logging, and monitoring. Over the past year, we have continued to natively integrate the capabilities of the AWS platform with your containers through ECS, giving you the same capabilities you are used to on EC2 instances.

Amazon ECS recently delivered container support for application load balancing (today), IAM roles (July), and Auto Scaling (May). We look forward to bringing more of the AWS platform to containers over time.

Let’s take a look at the new capabilities!

Application Load Balancing

Load balancing and service discovery are essential parts of any microservices architecture. Because Amazon ECS uses Elastic Load Balancing, you don’t need to manage and scale your own load balancing layer. You also get direct access to other AWS services that support ELB such as AWS Certificate Manager (ACM) to automatically manage your service’s certificates and Amazon API Gateway to authenticate callers, among other features.

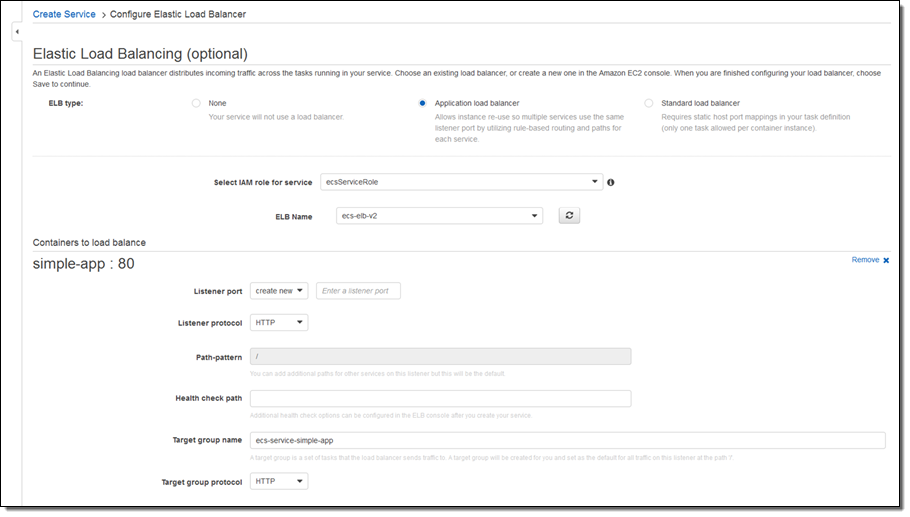

Today, I am happy to announce that ECS supports the new application load balancer, a high-performance load balancing option that operates at the application layer and allows you to define content-based routing rules. The application load balancer includes two features that simplify running microservices on ECS: dynamic ports and the ability for multiple services to share a single load balancer.

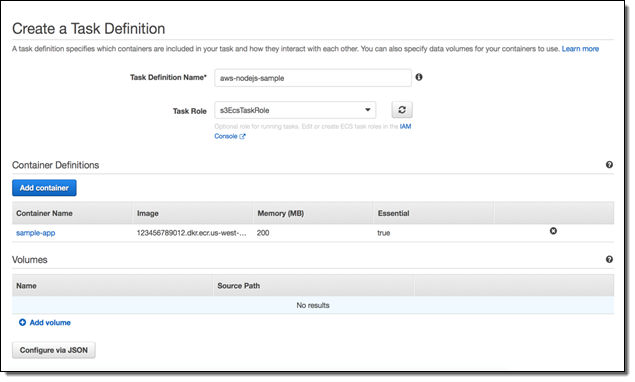

Dynamic ports make it easier to start tasks in your cluster without having to worry about port conflicts. Previously, to use Elastic Load Balancing to route traffic to your applications, you had to define a fixed host port in the ECS task. This added operational complexity, as you had to track the ports each application used, and it reduced cluster efficiency, as only one task using a given host port could be placed on each instance. Now, you can specify a dynamic port in the ECS task definition, which gives the container an unused port when it is scheduled on the EC2 instance. The ECS scheduler automatically adds the task to the application load balancer’s target group using this port. To get started, create an application load balancer from the EC2 Console or with the AWS Command Line Interface (AWS CLI). Then create a task definition in the ECS console with a container that sets the host port to 0. This container automatically receives a port in the ephemeral port range when it is scheduled.
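As a rough illustration of the host port setting described above, here is a minimal Python sketch that builds a task definition payload requesting a dynamic host port. The family name, image, container port, and memory value are placeholders invented for this example; only the host port value of 0 comes from the text above.

```python
import json


def build_task_definition(family, image, container_port):
    """Return a task definition payload that requests a dynamic host port."""
    return {
        "family": family,
        "containerDefinitions": [
            {
                "name": family,
                "image": image,          # hypothetical image name
                "memory": 128,           # MiB; placeholder value
                "essential": True,
                "portMappings": [
                    {
                        "containerPort": container_port,
                        # 0 asks for an unused host port to be assigned
                        # when the task is placed on an instance.
                        "hostPort": 0,
                        "protocol": "tcp",
                    }
                ],
            }
        ],
    }


# Hypothetical service used only for illustration.
task_def = build_task_definition("stock-service", "example/stock:latest", 8080)
print(json.dumps(task_def, indent=2))
```

The printed JSON could be saved to a file and registered with `aws ecs register-task-definition --cli-input-json file://<file>`, assuming the placeholder values are replaced with real ones.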

Previously, there was a one-to-one mapping between ECS services and load balancers. Now, a load balancer can be shared with multiple services, using path-based routing. Each service can define its own URI, which can be used to route traffic to that service. In addition, you can create an environment variable with the service’s DNS name, supporting basic service discovery. For example, a stock service could be http://example.com/stock and a weather service could be http://example.com/weather, both served from the same load balancer. A news portal could then use the load balancer to access both the stock and weather services.
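To make the path-based routing idea concrete, here is a small Python simulation of how rules might map request paths to services behind one load balancer. It is a toy model, not the load balancer's actual implementation: the rule priorities, the service names, and the `default-service` fallback are assumptions made for this sketch, and `fnmatchcase` accepts more pattern syntax than a real path rule would.

```python
from fnmatch import fnmatchcase

# (priority, path pattern, target service). Lower priority numbers are
# evaluated first. All values are hypothetical.
RULES = [
    (10, "/stock*", "stock-service"),
    (20, "/weather*", "weather-service"),
]
DEFAULT_TARGET = "default-service"  # used when no rule matches


def route(path):
    """Return the service that should receive a request for `path`."""
    for _priority, pattern, target in sorted(RULES):
        if fnmatchcase(path, pattern):
            return target
    return DEFAULT_TARGET


for path in ("/stock", "/weather/today", "/"):
    print(path, "->", route(path))
```

In this sketch, the first matching rule wins and unmatched paths fall through to the default target, so several services can sit behind a single hostname.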

IAM Roles for ECS Tasks

In Amazon ECS, you have always been able to use IAM roles for your Amazon EC2 container instances to simplify the process of making API requests from your containers. This also allows you to follow AWS best practices by not storing your AWS credentials in your code or configuration files, as well as providing benefits such as automatic key rotation.

With the introduction of the recently launched IAM roles for ECS tasks, you can secure your infrastructure by assigning an IAM role directly to the ECS task rather than to the EC2 container instance. This way, you can have one task that uses a specific IAM role for access to, let’s say, S3 and another task that uses an IAM role to access a DynamoDB table, both running on the same EC2 instance.
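The two-task scenario above can be sketched as a pair of task definition payloads, each carrying its own role. This is an illustrative sketch only: the role names, account ID, family names, and images are hypothetical placeholders, and the permissions attached to each role are not shown.

```python
import json

# Trust policy that allows ECS tasks to assume a role.
TASK_TRUST_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {"Service": "ecs-tasks.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }
    ],
}


def task_definition_with_role(family, image, task_role_arn):
    """Return a task definition payload bound to a task-level IAM role."""
    return {
        "family": family,
        "taskRoleArn": task_role_arn,  # role applies to this task only
        "containerDefinitions": [
            {"name": family, "image": image, "memory": 128, "essential": True}
        ],
    }


# Hypothetical ARNs; 123456789012 is a placeholder account ID.
s3_task = task_definition_with_role(
    "s3-reader", "example/s3-reader:latest",
    "arn:aws:iam::123456789012:role/S3ReaderTaskRole",
)
dynamodb_task = task_definition_with_role(
    "table-writer", "example/table-writer:latest",
    "arn:aws:iam::123456789012:role/TableWriterTaskRole",
)

print(json.dumps([s3_task, dynamodb_task], indent=2))
```

Both tasks could be scheduled on the same EC2 instance while each one receives only the credentials for its own role.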

Service Auto Scaling

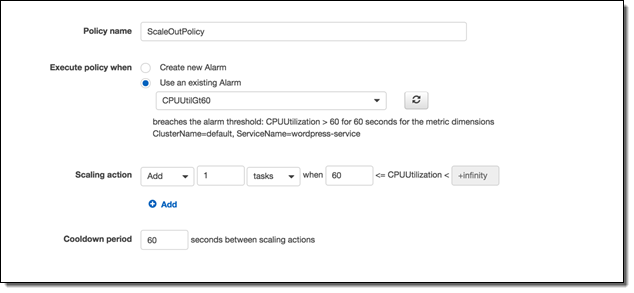

The third feature I want to highlight is Service Auto Scaling. With Service Auto Scaling and Amazon CloudWatch alarms, you can define scaling policies that scale your ECS services up and down in the same way that you scale your EC2 instances. You can achieve high availability by scaling up when demand is high, and optimize costs by scaling down your service and the cluster when demand is lower, all automatically and in real time.

You simply choose the desired, minimum and maximum number of tasks, create one or more scaling policies, and Service Auto Scaling handles the rest. The service scheduler is also Availability Zone–aware, so you don’t have to worry about distributing your ECS tasks across multiple zones.
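The role of the minimum and maximum values can be illustrated with a toy Python model. It assumes a policy that adds or removes a fixed number of tasks each time an alarm fires; the function name, the bounds, and the adjustment values are invented for this sketch and do not describe the service's internal algorithm.

```python
def next_desired_count(current, adjustment, minimum, maximum):
    """Apply a scaling adjustment and clamp the result to [minimum, maximum]."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    return max(minimum, min(maximum, current + adjustment))


# Hypothetical bounds and starting point.
MIN_TASKS, MAX_TASKS = 2, 10
desired = 4

history = []
for adjustment in (+3, +5, -20):  # two scale-up events, then a large scale-down
    desired = next_desired_count(desired, adjustment, MIN_TASKS, MAX_TASKS)
    history.append(desired)
    print(f"adjustment {adjustment:+d} -> desired count {desired}")
```

However large an adjustment is, the desired count in this model never leaves the range set by the minimum and maximum number of tasks.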

Available Now

These features are available now and you can start using them today!

— Jeff;