AWS Big Data Blog

Use Amazon Kinesis Data Firehose to extract data insights with Coralogix

February 9, 2024: Amazon Kinesis Data Firehose has been renamed to Amazon Data Firehose. Read the AWS What’s New post to learn more.

This is a guest blog post co-written by Tal Knopf at Coralogix.

Digital data is expanding exponentially, and the existing limits on how we store and analyze it are constantly being challenged and overcome. Following a trend often compared to Moore's Law, digital storage becomes larger, cheaper, and faster with each successive year. The advent of cloud databases is just one example of how this is happening: since their introduction, previous hard limits on storage size have become obsolete.

In recent years, the amount of available data storage in the world has increased rapidly, reflecting this new reality. If you took all the information just from US academic research libraries and lumped it together, it would add up to 2 petabytes.

Coralogix has worked with AWS on a solution that uses Amazon Kinesis Data Firehose to integrate high volumes of data with the Coralogix platform for analysis.

Kinesis Data Firehose and Coralogix

Kinesis Data Firehose delivers real-time streaming data to destinations like Amazon Simple Storage Service (Amazon S3), Amazon Redshift, or Amazon OpenSearch Service, and now supports delivering streaming data to Coralogix. You can create many delivery streams (up to a per-Region quota that you can request to raise), so you can use it to collect data from multiple AWS services.

Kinesis Data Firehose provides built-in, fully managed error handling, transformation, conversion, aggregation, and compression functionality, so you don’t need to write applications to handle these complexities.
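To make the delivery side more concrete: Firehose delivers to HTTP endpoint destinations such as Coralogix by sending batches of records as JSON. The following minimal Python sketch is based on our reading of the Firehose HTTP endpoint delivery request specification and assumes a body with `requestId`, `timestamp`, and a `records` list of base64-encoded `data` fields, optionally GZIP-compressed. The function names and sample values are hypothetical. Coralogix performs this decoding on its side, so this is only an illustration of what travels over the wire, not something you need to run.

```python
import base64
import gzip
import json
from typing import List, Optional


def decode_firehose_request(body: bytes, content_encoding: Optional[str] = None) -> List[bytes]:
    """Return the raw record payloads from one Firehose HTTP endpoint request.

    Assumes the JSON shape {"requestId": ..., "timestamp": ..., "records": [{"data": <base64>}]}.
    """
    # With GZIP content encoding enabled, the whole JSON body arrives compressed.
    if content_encoding and content_encoding.lower() == "gzip":
        body = gzip.decompress(body)
    payload = json.loads(body)
    # Each record's "data" field is base64-encoded; decode it back to the original bytes.
    return [base64.b64decode(record["data"]) for record in payload["records"]]


def build_example_request(lines: List[str]) -> bytes:
    """Build a GZIP-compressed body in the same assumed shape (illustrative values only)."""
    payload = {
        "requestId": "example-request-id",  # hypothetical value
        "timestamp": 1700000000000,         # epoch milliseconds, illustrative
        "records": [
            {"data": base64.b64encode(line.encode("utf-8")).decode("ascii")}
            for line in lines
        ],
    }
    return gzip.compress(json.dumps(payload).encode("utf-8"))


if __name__ == "__main__":
    body = build_example_request(['{"level": "INFO", "msg": "service started"}'])
    for record in decode_firehose_request(body, content_encoding="gzip"):
        print(record.decode("utf-8"))
```

Compressing the entire JSON body, rather than each record, is what lets the GZIP option reduce transfer size for batches of similar log lines.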

Coralogix is an AWS Partner Network (APN) Advanced Technology Partner with AWS DevOps Competency. The platform enables you to easily explore and analyze logs to gain deeper insights into the state of your applications and AWS infrastructure. You can analyze all your AWS service logs while storing only the ones you need, and generate metrics from aggregated logs to uncover and alert on trends in your AWS services.

Solution overview

Imagine a pipe flowing with data, or more specifically, messages. These messages can contain log lines, metrics, or any other type of data you want to collect.

Clearly, there must be something pushing data into the pipe; this is the provider. There must also be a mechanism for pulling data out of the pipe; this is the consumer.

Kinesis Data Firehose makes it easy to collect, process, and deliver real-time streaming data by managing these pipes for you, grouping them efficiently so they are simple to manage and scale.
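On the provider side of the pipe, an application that uses the Direct PUT source writes records to the stream itself. Here is a minimal sketch, assuming Python and the Firehose `PutRecordBatch` API: because each call accepts a bounded number of records and bytes, the producer groups records into batches first. The helper name `batch_records` and the stream name are hypothetical, and the limits below reflect the AWS documentation at the time of writing, so check the current Firehose quotas before relying on them.

```python
from typing import Iterable, Iterator, List

# PutRecordBatch limits as documented by AWS at the time of writing
# (verify against the current Firehose quotas before relying on them).
MAX_RECORDS_PER_BATCH = 500
MAX_BYTES_PER_BATCH = 4 * 1024 * 1024   # 4 MiB per request
MAX_BYTES_PER_RECORD = 1000 * 1024      # 1,000 KiB per record, before base64 encoding


def batch_records(
    records: Iterable[bytes],
    max_records: int = MAX_RECORDS_PER_BATCH,
    max_bytes: int = MAX_BYTES_PER_BATCH,
) -> Iterator[List[bytes]]:
    """Yield lists of records that each fit in one PutRecordBatch call."""
    batch: List[bytes] = []
    batch_bytes = 0
    for record in records:
        size = len(record)
        if size > MAX_BYTES_PER_RECORD or size > max_bytes:
            raise ValueError(f"record of {size} bytes exceeds the per-record limit")
        # Flush the current batch if adding this record would break either limit.
        if batch and (len(batch) >= max_records or batch_bytes + size > max_bytes):
            yield batch
            batch, batch_bytes = [], 0
        batch.append(record)
        batch_bytes += size
    if batch:
        yield batch


if __name__ == "__main__":
    lines = [f'{{"msg": "event {i}"}}\n'.encode("utf-8") for i in range(1200)]
    for batch in batch_records(lines):
        # With boto3 installed and credentials configured, a producer could send each batch:
        # boto3.client("firehose").put_record_batch(
        #     DeliveryStreamName="my-stream",  # hypothetical stream name
        #     Records=[{"Data": r} for r in batch],
        # )
        # and then resend any records counted in the response's FailedPutCount.
        print(len(batch))
```

Checking both limits before appending keeps every batch valid without a second pass. Sources such as AWS WAF or CloudWatch Logs handle this batching for you when they deliver to the stream directly.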

It offers a few significant benefits compared to other solutions:

- Keeps monitoring simple – With this solution, you can configure AWS Web Application Firewall (AWS WAF), Amazon Route 53 Resolver Query Logs, or Amazon API Gateway to deliver log events directly to Kinesis Data Firehose.

- Integrates flawlessly – Most AWS services use Amazon CloudWatch by default to collect logs, metrics, and other event data. You can stream CloudWatch logs to the Firehose delivery stream with a subscription filter.

- Flexible with minimum maintenance – To configure Kinesis Data Firehose with the Coralogix API as a destination, you only need to set up authentication in one place, regardless of the number of services or integrations providing the actual data. You can also configure an S3 bucket as a backup. You can back up all log events, or only those that couldn't be delivered within the specified retry duration.

- Scale, scale, scale – Kinesis Data Firehose scales automatically to meet your needs, with no infrastructure for you to maintain. The Coralogix platform is also built for scale and can meet all your monitoring needs as your system grows.

Prerequisites

To get started, you must have the following:

- A Coralogix account. If you don’t already have an account, you can sign up for one.

- A Coralogix private key.

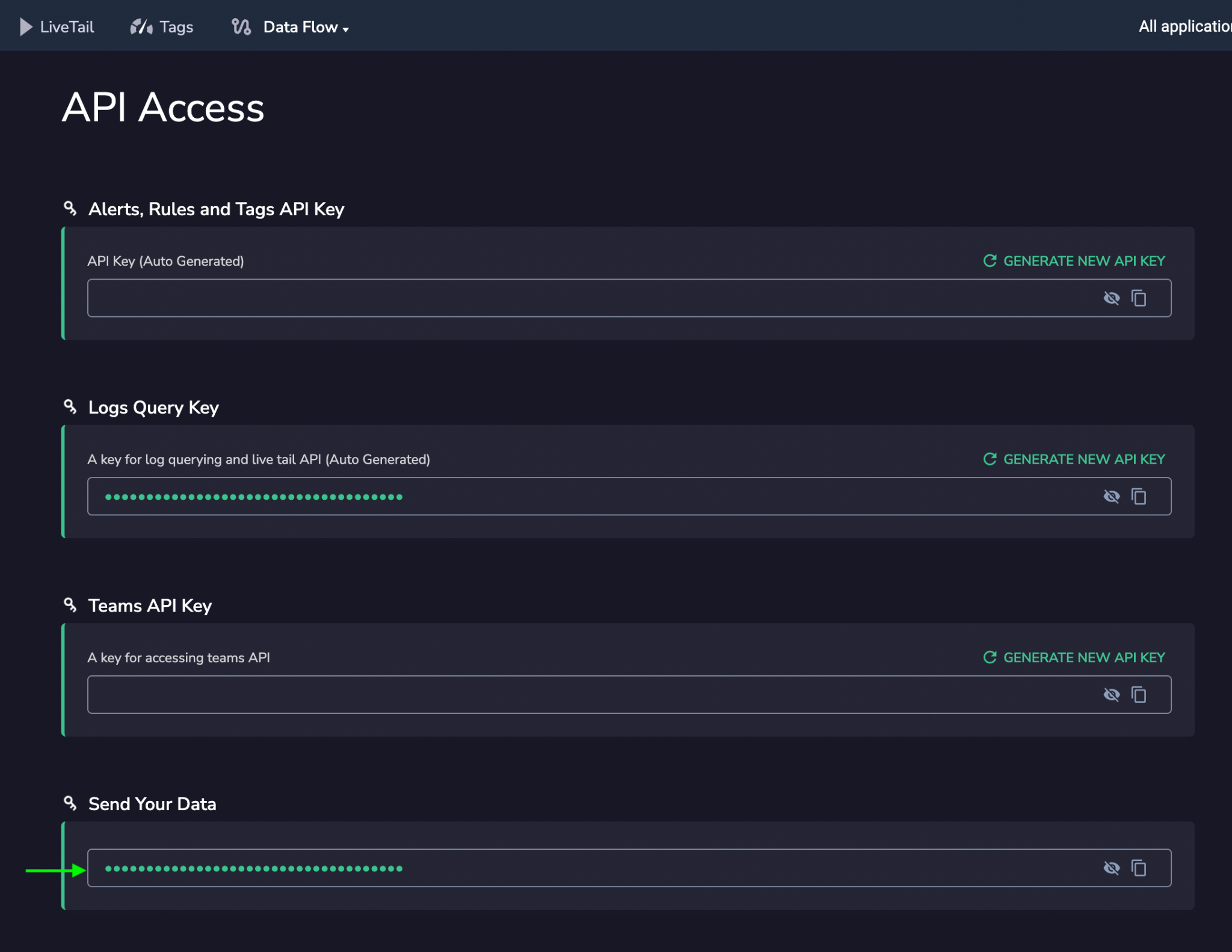

To find your key, in your Coralogix account, choose API Keys on the Data Flow menu.

Locate the key for Send Your Data.

Set up your delivery stream

To configure your delivery stream, complete the following steps:

- On the Kinesis Data Firehose console, choose Create delivery stream.

- Under Choose source and destination, for Source, choose Direct PUT.

- For Destination, choose Coralogix.

- For Delivery stream name, enter a name for your stream.

- Under Destination settings, for HTTP endpoint name, enter a name for your endpoint.

- For HTTP endpoint URL, enter your endpoint URL based on your Region and Coralogix account configuration.

- For Access key, enter your Coralogix private key.

- For Content encoding, select GZIP.

- For Retry duration, enter 60 (seconds).

To override the applicationName, subsystemName, or computerName of your logs, complete the optional steps under Parameters.

- For Key, enter the name of the field to override (applicationName, subsystemName, or computerName).

- For Value, enter a new value to override the default.

- For this post, leave the configuration under Buffer hints as is.

- In the Backup settings section, for Source record in Amazon S3, select Failed data only (recommended).

- For S3 backup bucket, choose an existing bucket or create a new one.

- Leave the settings under Advanced settings as is.

- Review your settings and choose Create delivery stream.
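If you prefer to script the same setup, the console choices can be expressed as a request to the Firehose `CreateDeliveryStream` API. The following is a minimal Python sketch under two assumptions: that the console's Coralogix destination corresponds to the HTTP endpoint destination type in the API, and that you already have an IAM role Firehose can assume to write to your backup bucket. The function name and every argument value are hypothetical placeholders. In particular, the Region-specific Coralogix endpoint URL is not filled in here; use the one from your Coralogix account. Buffer hints are omitted so the service defaults apply, matching the console step above.

```python
from typing import Dict, Optional


def build_coralogix_stream_request(
    stream_name: str,
    endpoint_url: str,
    private_key: str,
    role_arn: str,
    backup_bucket_arn: str,
    overrides: Optional[Dict[str, str]] = None,
    endpoint_name: str = "Coralogix",
) -> dict:
    """Build CreateDeliveryStream keyword arguments that mirror the console steps."""
    request_config: dict = {"ContentEncoding": "GZIP"}  # Content encoding: GZIP
    if overrides:
        # Optional Parameters, e.g. {"applicationName": "my-app"}.
        request_config["CommonAttributes"] = [
            {"AttributeName": key, "AttributeValue": value}
            for key, value in overrides.items()
        ]
    return {
        "DeliveryStreamName": stream_name,
        "DeliveryStreamType": "DirectPut",  # Source: Direct PUT
        "HttpEndpointDestinationConfiguration": {
            "EndpointConfiguration": {
                "Name": endpoint_name,
                "Url": endpoint_url,       # Region-specific Coralogix endpoint URL
                "AccessKey": private_key,  # Coralogix private key
            },
            "RequestConfiguration": request_config,
            "RetryOptions": {"DurationInSeconds": 60},  # Retry duration: 60 seconds
            "RoleARN": role_arn,
            "S3BackupMode": "FailedDataOnly",  # Failed data only (recommended)
            "S3Configuration": {
                "RoleARN": role_arn,
                "BucketARN": backup_bucket_arn,
            },
        },
    }


if __name__ == "__main__":
    request = build_coralogix_stream_request(
        stream_name="coralogix-logs",                                # hypothetical
        endpoint_url="https://<your-coralogix-endpoint>",            # placeholder
        private_key="<your-private-key>",                            # placeholder
        role_arn="arn:aws:iam::123456789012:role/firehose-backup",   # hypothetical
        backup_bucket_arn="arn:aws:s3:::my-firehose-backup",         # hypothetical
        overrides={"applicationName": "my-app"},
    )
    # With boto3 installed and credentials configured:
    # boto3.client("firehose").create_delivery_stream(**request)
    print(request["HttpEndpointDestinationConfiguration"]["S3BackupMode"])
```

Building the request as plain data keeps the private key handling in one place. In practice, you would load the key from a secrets store rather than hard-code it.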

Logs from sources subscribed to your delivery stream are now sent to Coralogix, where they become available for analysis as soon as Firehose delivers each buffered batch.
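For CloudWatch Logs, one way to subscribe a log group to the stream is a subscription filter. The following minimal Python sketch builds the arguments for the CloudWatch Logs `PutSubscriptionFilter` API. It assumes you have already created an IAM role that CloudWatch Logs can assume and that allows `firehose:PutRecord` and `firehose:PutRecordBatch` on the delivery stream. The function name, filter name, log group, and ARNs are hypothetical placeholders.

```python
def build_subscription_filter_request(
    log_group: str,
    stream_arn: str,
    role_arn: str,
    filter_name: str = "coralogix-firehose",
    filter_pattern: str = "",
) -> dict:
    """Build PutSubscriptionFilter keyword arguments that forward a log group to Firehose."""
    return {
        "logGroupName": log_group,
        "filterName": filter_name,
        "filterPattern": filter_pattern,  # an empty pattern forwards every log event
        "destinationArn": stream_arn,     # ARN of the Firehose delivery stream
        "roleArn": role_arn,              # role CloudWatch Logs assumes to write to Firehose
    }


if __name__ == "__main__":
    request = build_subscription_filter_request(
        log_group="/aws/lambda/my-function",  # hypothetical
        stream_arn="arn:aws:firehose:us-east-1:123456789012:deliverystream/coralogix-logs",
        role_arn="arn:aws:iam::123456789012:role/cwlogs-to-firehose",
    )
    # With boto3 installed and credentials configured:
    # boto3.client("logs").put_subscription_filter(**request)
    print(request["filterName"])
```

A non-empty `filter_pattern` lets you forward only matching events, which is one way to reduce the volume you send for analysis.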

Conclusion

Coralogix provides you with full visibility into your logs, metrics, tracing, and security data without relying on indexing to provide analysis and insights. When you use Kinesis Data Firehose to send data to Coralogix, you can easily centralize all your AWS service data for streamlined analysis and troubleshooting.

To get the most out of the platform, check out Getting Started with Coralogix, which provides information on everything from parsing and enrichment to alerting and data clustering.

About the Authors

Tal Knopf is the Head of Customer DevOps at Coralogix. He uses his vast experience in designing and building customer-focused solutions to help users extract the full value from their observability data. Previously, he was a DevOps engineer at Akamai and other companies, where he specialized in large-scale systems and CDN solutions.

Ilya Rabinov is a Solutions Architect at AWS. He works with ISVs at late stages of their journey to help build new products, migrate existing applications, or optimize workloads on AWS. His areas of interest include machine learning, artificial intelligence, security, DevOps culture, CI/CD, and containers.