AWS Big Data Blog

Write prepared data directly into JDBC-supported destinations using AWS Glue DataBrew

July 2023: This post was reviewed for accuracy.

AWS Glue DataBrew offers over 250 pre-built transformations to automate data preparation tasks (such as filtering anomalies, standardizing formats, and correcting invalid values) that would otherwise require days or weeks writing hand-coded transformations.

You can now write cleaned and normalized data directly into JDBC-supported databases and data warehouses without having to move large amounts of data into intermediary data stores. In just a few clicks, you can configure recipe jobs to specify the following output destinations: Amazon Redshift, Snowflake, Microsoft SQL Server, MySQL, Oracle Database, and PostgreSQL.

In this post, we walk you through how to connect and transform data from an Amazon Simple Storage Service (Amazon S3) data lake and write prepared data directly into an Amazon Redshift destination on the DataBrew console.

Solution overview

The following diagram illustrates our solution architecture.

In our solution, DataBrew queries sales order data from an Amazon S3 data lake and performs the data transformation. Then the DataBrew job writes the final output to Amazon Redshift.

To implement the solution, you complete the following high-level steps:

- Create your datasets.

- Create a DataBrew project with the datasets.

- Build a transformation recipe in DataBrew.

- Run the DataBrew recipe.

Prerequisites

To complete this solution, you should have an AWS account. Make sure you have the required permissions to create the resources required as part of the solution.

For our use case, we use a mock dataset. You can download the data files from GitHub.

Complete the following prerequisite steps:

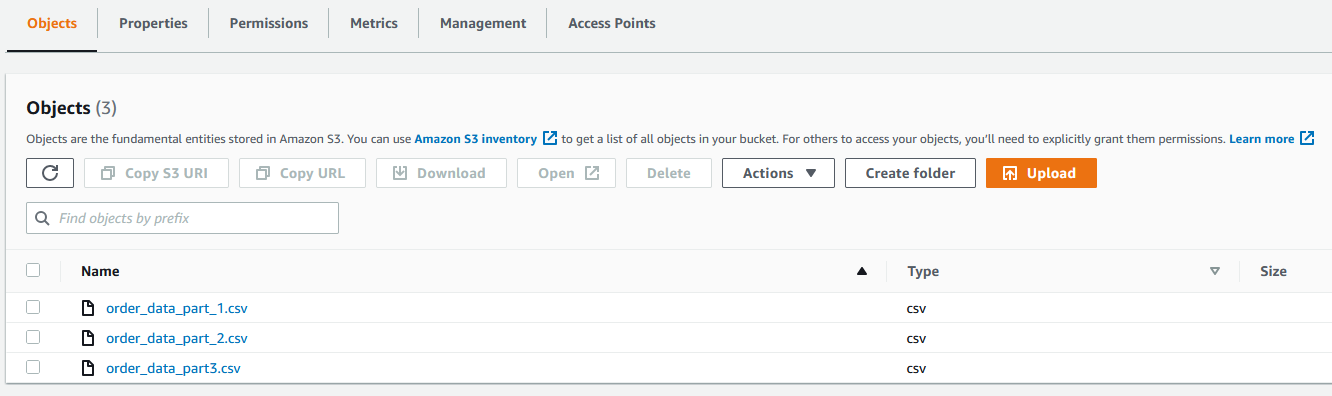

- On the Amazon S3 console, upload all three CSV files to an S3 bucket.

- Create the Amazon Redshift cluster to capture the product wise sales data.

- Set up a security group for Amazon Redshift.

- Create a schema in Amazon Redshift if required. For this post, we use the existing

publicschema.

Create datasets

To create the datasets, complete the following steps:

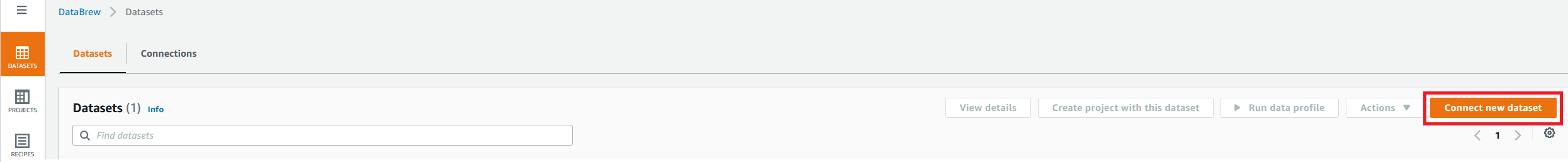

- On the Datasets page of the DataBrew console, choose Connect new dataset.

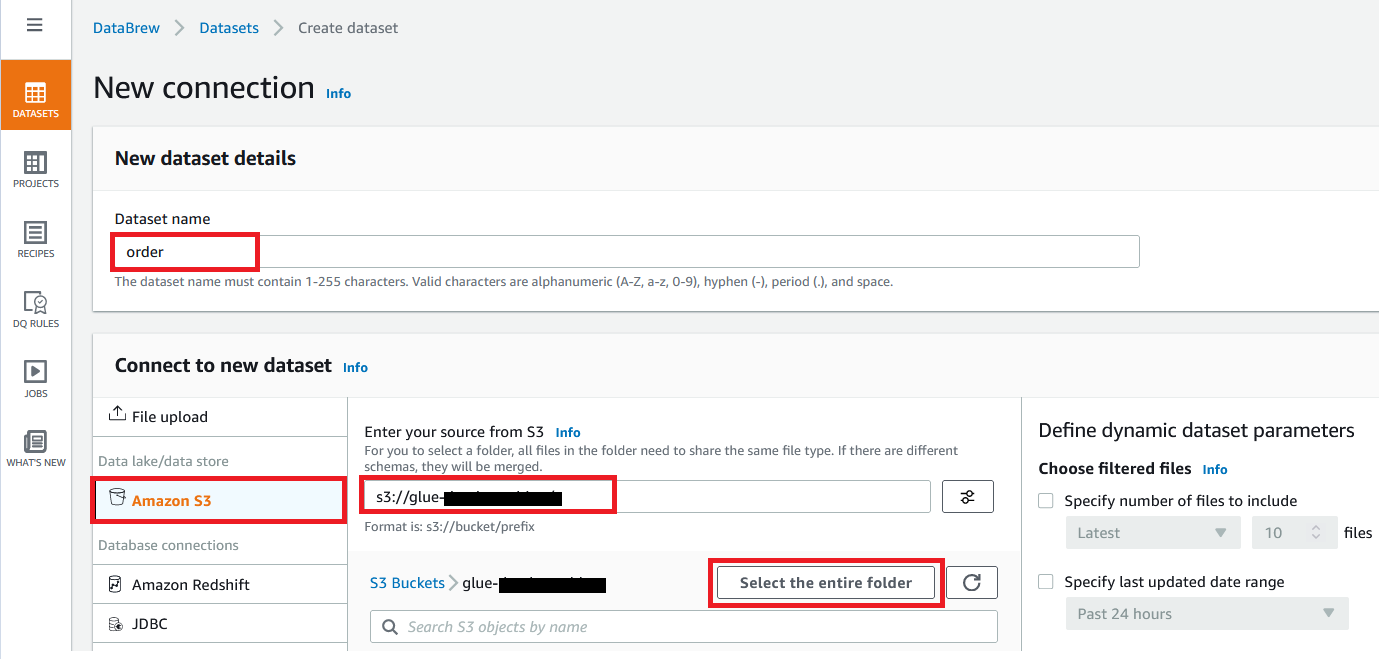

- For Dataset name, enter a name (for example,

order). - Enter the S3 bucket path where you uploaded the data files as part of the prerequisites.

- Choose Select the entire folder.

- For Selected file type, select CSV.

- For CSV delimiter, choose Comma.

- For Column header values, select Treat first row as header.

- Choose Create dataset.

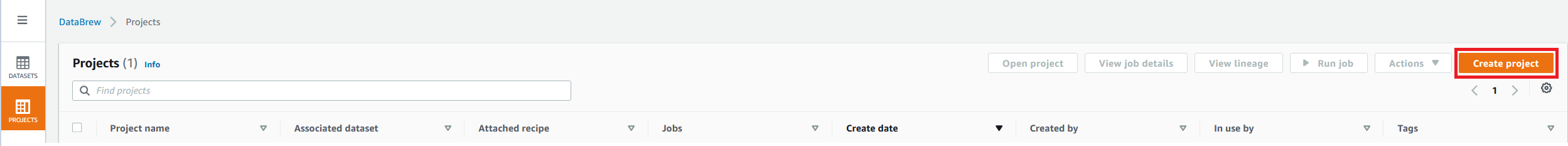

Create a project using the datasets

To create your DataBrew project, complete the following steps:

- On the DataBrew console, on the Projects page, choose Create project.

- For Project Name, enter

order-proj. - For Attached recipe, choose Create new recipe.

The recipe name is populated automatically.

- For Select a dataset, select My datasets.

- Select the

orderdataset.

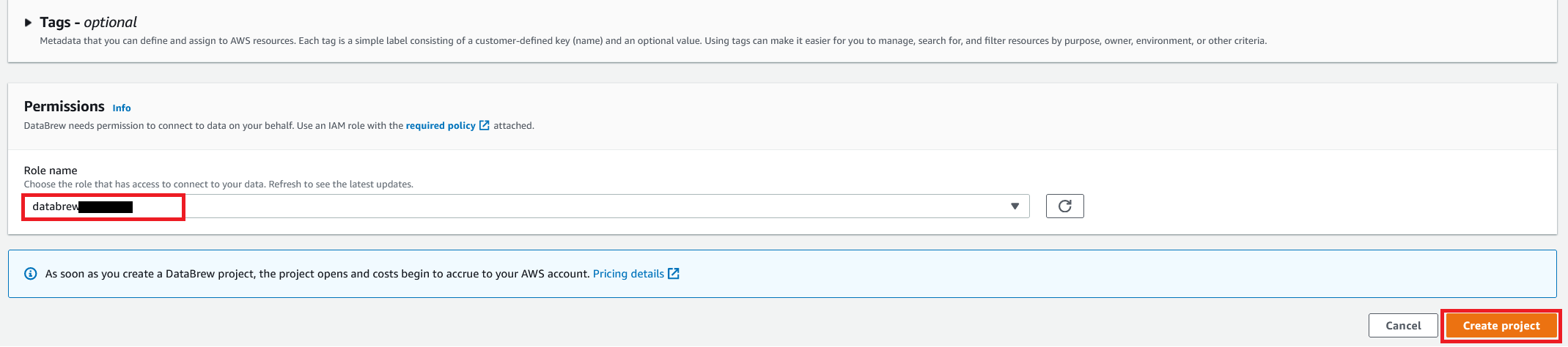

- For Role name, choose the AWS Identity and Access Management (IAM) role to be used with DataBrew.

- Choose Create project.

You can see a success message along with our Amazon S3 order table with 500 rows.

After the project is opened, a DataBrew interactive session is created. DataBrew retrieves sample data based on your sampling configuration selection.

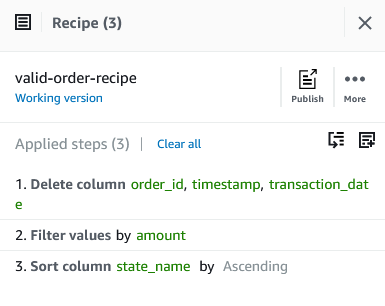

Build a transformation recipe

In a DataBrew interactive session, you can cleanse and normalize your data using over 250 pre-built transformations. In this post, we use DataBrew to perform a few transforms and filter only valid orders with order amounts greater than $0.

To do this, you perform the following steps:

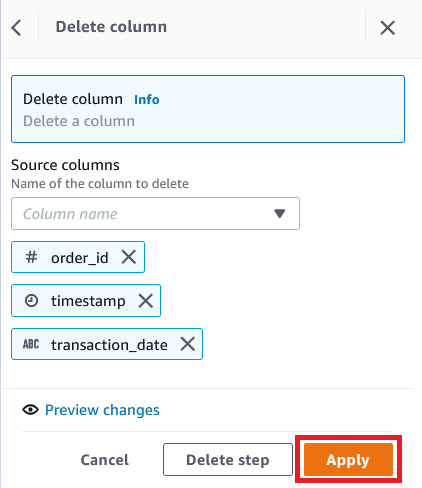

- On the Column menu, choose Delete.

- For Source columns, choose the columns

order_id,timestamp, andtransaction_date. - Choose Apply.

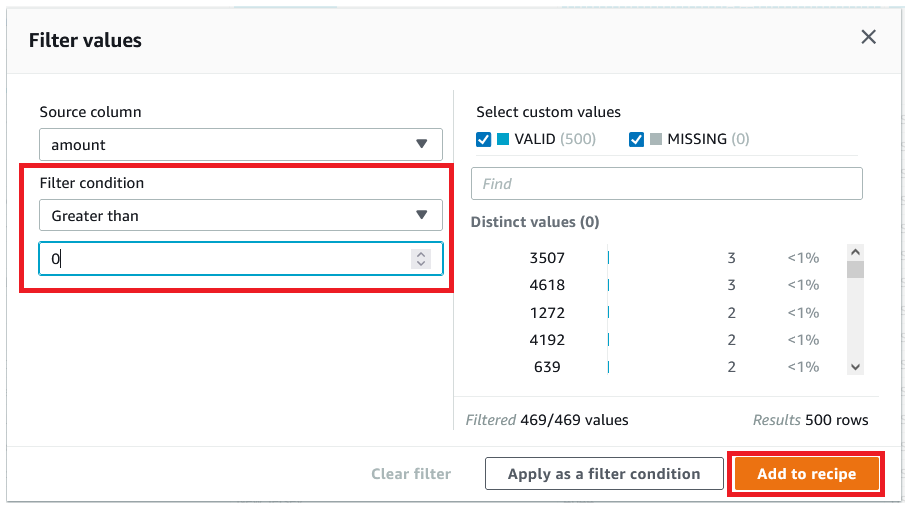

- We filter the rows based on an

amountvalue greater than $0. - Choose Add to recipe to add the condition as a recipe step.

- To perform a custom sort based on state, on the Sort menu, choose Ascending.

- For Source, choose

state_name. - Select Sort by custom

values. - Specify an ordered list of state names separated by commas.

- Choose Apply.

The following screenshot shows the full recipe that we applied to our dataset.

Run the DataBrew recipe job on the full data

Now that we have built the recipe, we can create and run a DataBrew recipe job.

- On the project details page, choose Create job.

- For Job name, enter

product-wise-sales-job.

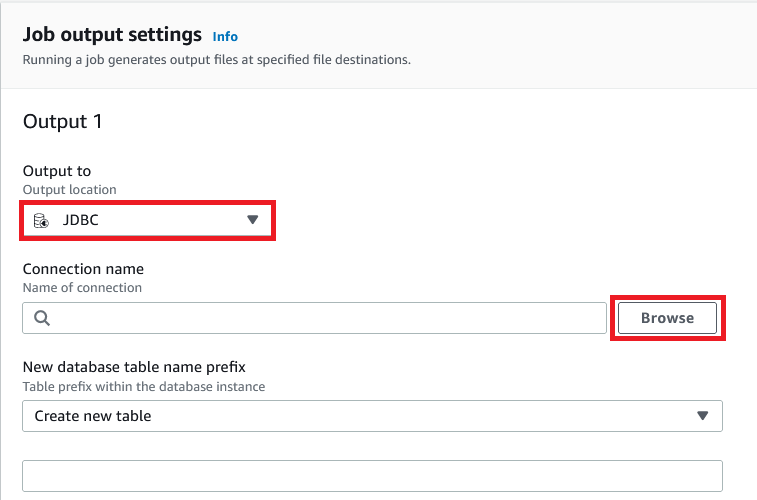

- For Output to, choose JDBC.

- For connection name, choose Browse.

- Choose Add JDBC connection.

- For Connection name, enter a name (for example,

redshift-connection). - Provide details like the host, database name, and login credentials of your Amazon Redshift cluster.

- In the Network options section, choose the VPC, subnet, and security groups of your Amazon Redshift cluster.

- Choose Create connection.

- Provide a table prefix with schema name (for example,

public.product_wise_sales).

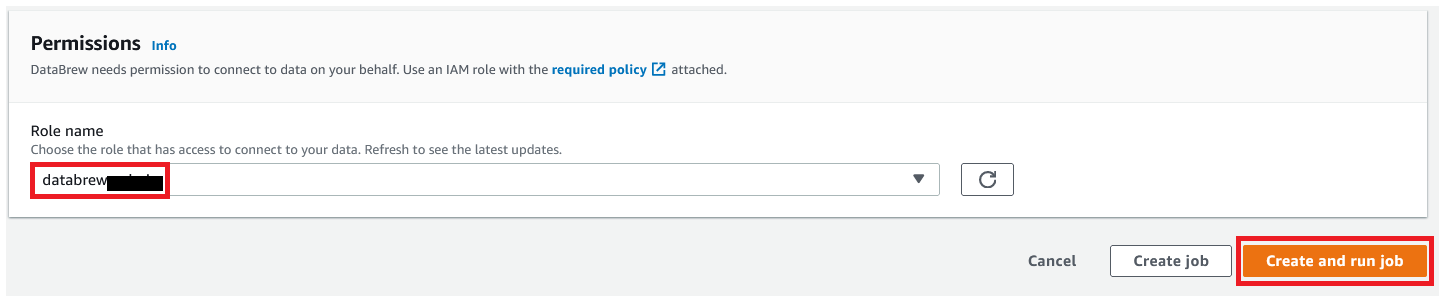

- For Role name, choose the IAM role to be used with DataBrew.

- Choose Create and run job.

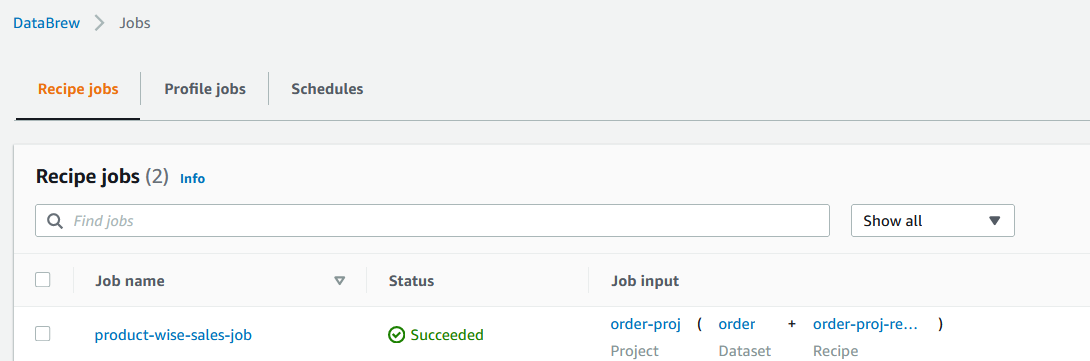

- Navigate to the Jobs page and wait for the

product-wise-sales-jobjob to complete.

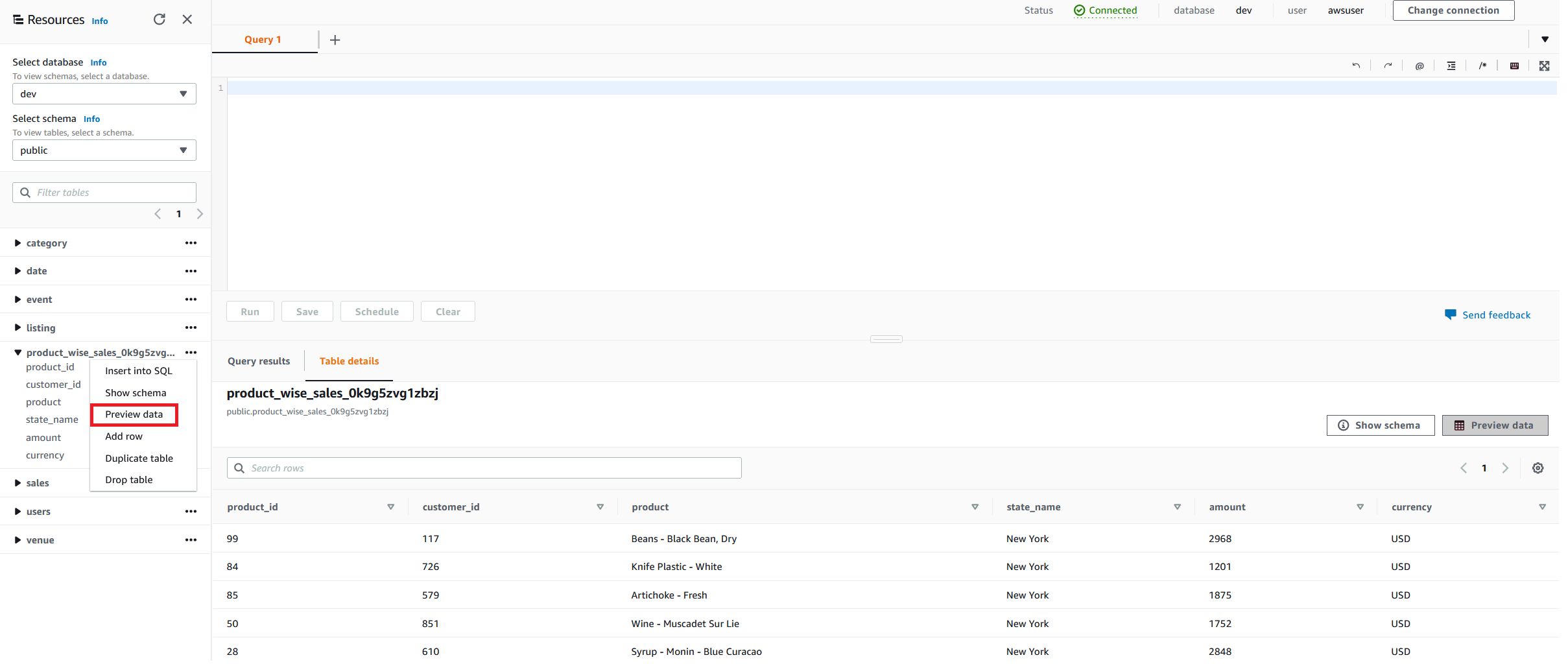

- Navigate to the Amazon Redshift cluster to confirm the output table starts with

product_wise_sales_*.

Clean up

Delete the following resources that might accrue cost over time:

- The Amazon Redshift cluster

- The recipe job

product-wise-sales-job - Input files stored in your S3 bucket

- The job output stored in your S3 bucket

- The IAM roles created as part of projects and jobs

- The DataBrew project order-proj and its associated recipe

order-proj-recipe - The DataBrew datasets

Conclusion

In this post, we saw how to how to connect and transform data from an Amazon S3 data lake and create a DataBrew dataset. We also saw how easily we can bring data from a data lake into DataBrew, seamlessly apply transformations, and write prepared data directly into an Amazon Redshift destination.

To learn more, refer to the DataBrew documentation.

About the Author

Dhiraj Thakur is a Solutions Architect with Amazon Web Services. He works with AWS customers and partners to provide guidance on enterprise cloud adoption, migration, and strategy. He is passionate about technology and enjoys building and experimenting in the analytics and AI/ML space.

Dhiraj Thakur is a Solutions Architect with Amazon Web Services. He works with AWS customers and partners to provide guidance on enterprise cloud adoption, migration, and strategy. He is passionate about technology and enjoys building and experimenting in the analytics and AI/ML space.

Amit Mehrotra is a Solution Architecture leader with Amazon Web Services. He leads an org that customers cloud journey.

Amit Mehrotra is a Solution Architecture leader with Amazon Web Services. He leads an org that customers cloud journey.