AWS Compute Blog

EFA-enabled C5n instances to scale Simcenter STAR-CCM+

This post was contributed by Dnyanesh Digraskar, Senior Partner SA, High Performance Computing; Linda Hedges, Principal SA, High Performance Computing

In this blog, we define and demonstrate the scalability metrics for a typical real-world application using Computational Fluid Dynamics (CFD) software from Siemens, Simcenter STAR-CCM+, running on a High Performance Computing (HPC) cluster on Amazon Web Services (AWS). This scenario demonstrates the scaling of an external aerodynamics CFD case with 97 million cells to over 4,000 physical cores. Simcenter STAR-CCM+ software was run on Amazon EC2 C5n.18xlarge instances, each providing 36 Intel Skylake 3.0 GHz processor cores with Hyper-Threading disabled. We also discuss the effects of scaling on efficiency, simulation turn-around time, and total simulation costs. TLG Aerospace, a Seattle-based aerospace engineering services company, contributed the data used in this blog. For a detailed case study describing TLG Aerospace’s experience and the results they achieved, see the TLG Aerospace case study.

For HPC workloads that use multiple nodes, the cluster setup, including the network, is at the heart of scalability concerns. CFD and HPC engineers commonly ask: “How well will my application scale on AWS?”, “How do I optimize the associated costs for the best performance of my application on AWS?”, and “What are the best practices for setting up an HPC cluster on AWS to reduce simulation turn-around time and maintain high efficiency?” This post addresses these questions by defining the important scalability-related parameters and illustrating them with results from the CFD case. For detailed HPC-specific information, visit the High Performance Computing page and download the CFD whitepaper, Computational Fluid Dynamics on AWS.

CFD scaling on AWS

Scale-up

HPC applications, such as CFD, depend heavily on their ability to scale compute tasks efficiently in parallel across multiple compute resources. We often evaluate parallel performance by determining an application’s scale-up. Scale-up, a function of the number of cores used, is the time to complete a run on one core divided by the time to complete the same run on the number of cores used for the parallel run.
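As a minimal sketch of this definition, the following Python helper computes scale-up from two wall-clock times. The function name and the sample timings are illustrative assumptions, not measurements from the TLG Aerospace case.

```python
def scale_up(time_one_core: float, time_n_cores: float) -> float:
    """Scale-up: single-core run time divided by the parallel run time."""
    if time_one_core <= 0 or time_n_cores <= 0:
        raise ValueError("run times must be positive")
    return time_one_core / time_n_cores


# Hypothetical timings in seconds, chosen only to illustrate the arithmetic.
print(scale_up(7200.0, 100.0))
```

With ideal scaling, the value returned equals the number of cores used for the parallel run.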

Note that the term “processor” in the figures in this post refers to a single physical processor core, or to multiples of them as indicated. It does not refer to vCPUs or CPU sockets.

In addition to characterizing the scale-up of an application, scalability can be further characterized as “strong” or “weak”. Strong scaling offers a traditional view of application scaling, where a problem size is fixed and spread over an increasing number of cores. As more cores are added to the calculation, good strong scaling means that the time to complete the calculation decreases proportionally with increasing core count. In comparison, weak scaling does not fix the problem size used in the evaluation, but purposely increases the problem size as the number of cores also increases. An application demonstrates good weak scaling when the time to complete the calculation remains constant as the ratio of compute effort to the number of cores is held constant. Weak scaling offers insight into how an application behaves with varying case size.

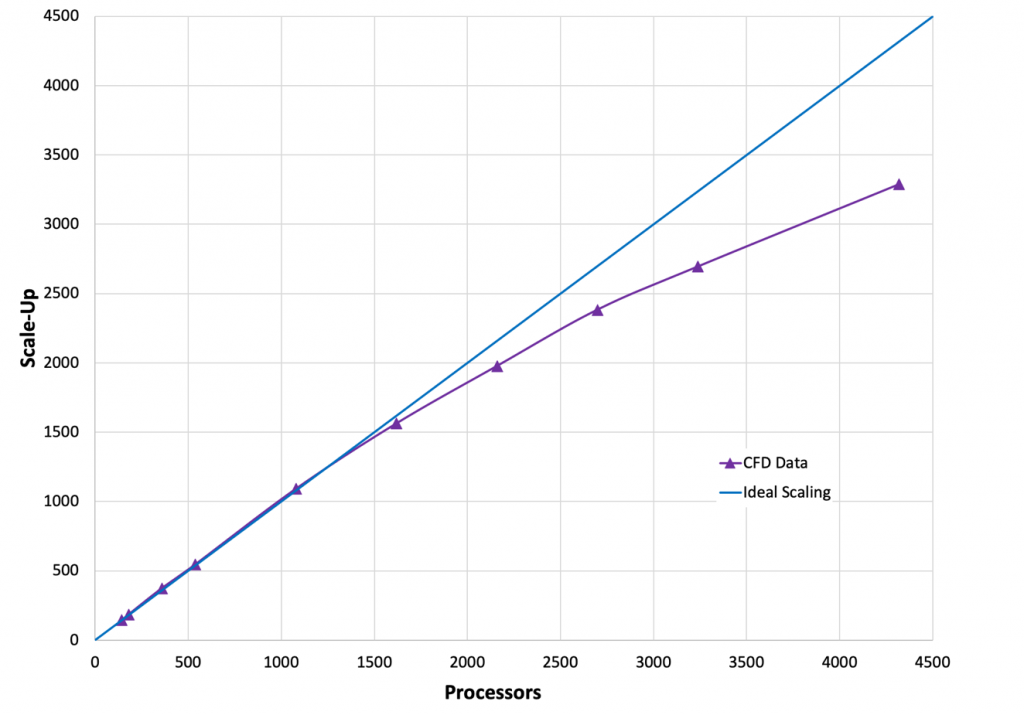

Figure 1 shows scale-up as a function of increasing core count for the Simcenter STAR-CCM+ case data provided by TLG Aerospace. This is a demonstration of “strong” scalability. The blue line shows what ideal or perfect scalability looks like. The purple triangles show the actual scale-up for the case as a function of increasing core count. The closeness of these two curves demonstrates excellent scaling to well over 3,000 cores for this mid-to-large-sized 97M cell case. This example was run on Amazon EC2 C5n.18xlarge Intel Skylake instances, 3.0 GHz, each providing 36 cores with Hyper-Threading disabled.

Efficiency

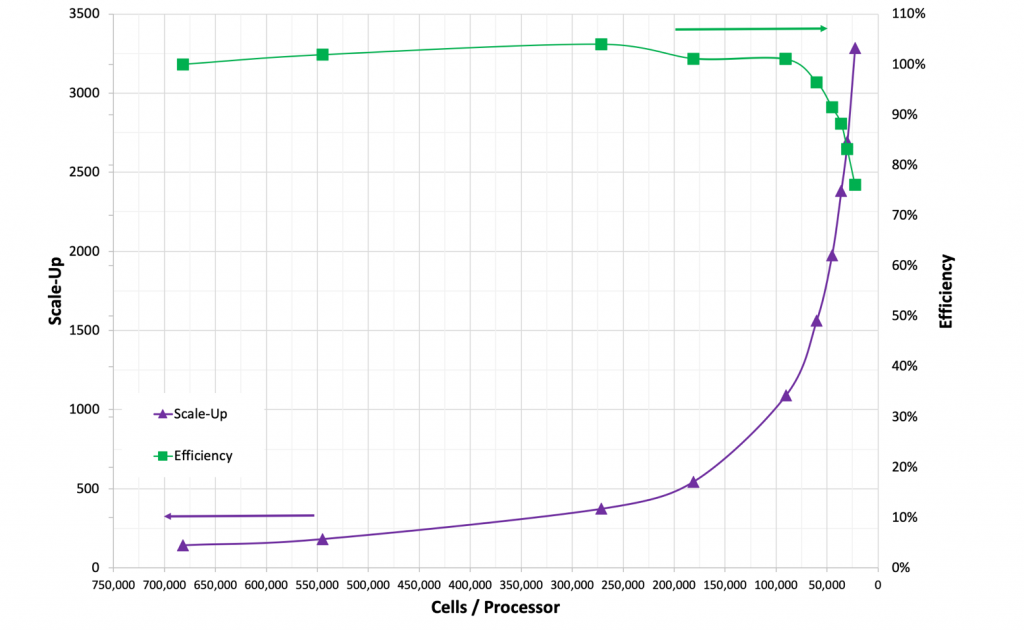

Now that you understand the variation of scale-up with the number of cores, we discuss the relation of scale-up with number of grid cells per core, which determines the efficiency of the parallel simulation. Efficiency is the scale-up divided by the number of cores used in the calculation. By plotting grid cells per core, as in Figure 2, scaling estimates can be made for simulations with different grid sizes with Simcenter STAR-CCM+. The purple line in Figure 2 shows scale-up as a function of grid cells per core. The vertical axis for scale-up is on the left-hand side of the graph as indicated by the purple arrow. The green line in Figure 2 shows efficiency as a function of grid cells per core. The vertical axis for efficiency is on the right side of the graph and is indicated by the green arrow.

Fewer grid cells per core means reduced computational effort per core. Maintaining efficiency while reducing cells per core demonstrates the strong scalability of Simcenter STAR-CCM+ on AWS.

Efficiency remains at about 100% between approximately 700,000 cells per core and 60,000 cells per core. Efficiency starts to fall off at about 60,000 cells per core. An efficiency of at least 80% is maintained until 25,000 cells per core. Decreasing cells per core leads to decreased efficiency because the total computational effort per core is reduced, so communication between cores takes up a larger share of the run time. Achieving more than 100% efficiency (here, at about 250,000 cells per core) is common in scaling studies, is case-specific, and is often related to smaller effects such as timing variation and memory caching.
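The relationship between cells per core and cluster size can be sketched with a few lines of Python. This is an illustrative calculation only: it takes the grid size as exactly 97 million cells (the post gives it as approximate), and the function names are hypothetical. `efficiency` returns a fraction, so 1.0 corresponds to 100%.

```python
import math

TOTAL_CELLS = 97_000_000   # approximate grid size of the case in this post
CORES_PER_INSTANCE = 36    # physical cores per C5n.18xlarge


def efficiency(scale_up: float, cores: int) -> float:
    """Parallel efficiency: scale-up divided by the number of cores used."""
    return scale_up / cores


def instances_for_target(cells_per_core: int) -> int:
    """Whole instances needed so each core holds at most cells_per_core cells."""
    cores_needed = math.ceil(TOTAL_CELLS / cells_per_core)
    return math.ceil(cores_needed / CORES_PER_INSTANCE)


# The three cells-per-core values discussed in the text.
for target in (700_000, 60_000, 25_000):
    n = instances_for_target(target)
    cores = n * CORES_PER_INSTANCE
    print(f"{target:,} cells/core target: {n} instances, {cores} cores, "
          f"{TOTAL_CELLS / cores:,.0f} cells/core actual")
```

Because instances come in 36-core increments, the actual cells-per-core figure lands slightly below each target.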

Turn-around time and cost

Case turn-around time and cost are what really matter to most HPC users. Figure 3 plots cost per run against turn-around time for this case. As the number of cores increases, the total turn-around time decreases. But as the number of cores increases, the inefficiency also increases, which leads to increased costs. The cost, represented by the solid blue curve, is based on the On-Demand price for the C5n.18xlarge and includes only the computational costs. Small costs are also incurred for data storage. The minimum cost is achieved with approximately 60,000 cells per core; adding cores beyond that point shortens the turn-around time further but raises the cost.

Many users choose a cells-per-core count that achieves the lowest possible cost. Others may choose a cells-per-core count that achieves the fastest turn-around time. If a run is desired in one-third the time of the lowest price point, it can be achieved with approximately 25,000 cells per core.

Additional information about the test scenario

TLG Aerospace used the Simcenter STAR-CCM+ Power-On-Demand (POD) license to run the simulations for this case. The POD license enables flexible, on-demand usage of the software on unlimited cores for a fixed price of $22 per hour. The total cost per run, which is the computational cost plus the POD license cost, is represented in Figure 3 by the dashed blue curve. Because the POD license is charged per hour, the total cost per run increases with longer turn-around times. Note that many users run Simcenter STAR-CCM+ with fewer cells per core than this case. While this increases the compute cost, other concerns, such as license costs or schedules, can be overriding factors, and many find the reduced turn-around time well worth the price of the additional instances.
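The cost structure described here can be sketched as follows. The hourly prices are the ones quoted in this post and in the Figure 3 caption; the instance count and run duration in the example are hypothetical, and the sketch assumes that both charges scale linearly with run time, which may not match actual billing granularity.

```python
ON_DEMAND_PRICE = 3.888    # USD per C5n.18xlarge instance-hour (US-East-1, Figure 3)
POD_LICENSE_PRICE = 22.0   # USD per hour of Simcenter STAR-CCM+ POD usage


def cost_per_run(instances: int, hours: float, with_license: bool = True) -> float:
    """Compute cost plus, optionally, the per-hour POD license cost for one run."""
    compute = instances * ON_DEMAND_PRICE * hours
    # The license price does not depend on core count, only on elapsed time.
    license_cost = POD_LICENSE_PRICE * hours if with_license else 0.0
    return compute + license_cost


# Hypothetical run: 45 instances for 2 hours, with and without the license.
print(round(cost_per_run(45, 2.0, with_license=False), 2))
print(round(cost_per_run(45, 2.0), 2))
```

Because the license term grows with elapsed time while the compute term grows with instance-hours, a shorter run on more instances reduces the license share of the total.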

AWS also offers Savings Plans, a flexible pricing model that offers substantially lower prices on EC2 instances compared to On-Demand prices in exchange for a 1- or 3-year usage commitment. For example, the 3-year all-upfront pricing of the C5n.18xlarge instance is 62% cheaper than the On-Demand pricing. The total cost per run using the 3-year all-upfront pricing model is illustrated in Figure 3 by the solid green line. The 3-year all-upfront pricing plan offers a substantial reduction in price for running the simulations.
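The 62% figure follows from the two hourly prices given in the Figure 3 caption. A quick check, with a hypothetical helper name:

```python
ON_DEMAND_PRICE = 3.888           # USD per instance-hour, On-Demand (Figure 3)
THREE_YEAR_UPFRONT_PRICE = 1.475  # USD per instance-hour, 3-year all-upfront (Figure 3)


def percent_saving(on_demand: float, committed: float) -> float:
    """Saving of the committed price relative to On-Demand, in percent."""
    return 100.0 * (on_demand - committed) / on_demand


print(round(percent_saving(ON_DEMAND_PRICE, THREE_YEAR_UPFRONT_PRICE)))
```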

Amazon Linux is optimized to run on AWS and offers excellent performance for running HPC applications. For the case presented here, the operating system used was Amazon Linux 2. While other Linux distributions are also performant, we strongly recommend that for Linux HPC applications, you use a current Linux kernel.

Amazon Elastic Block Store (Amazon EBS) provides persistent, block-level storage that is often used for cluster storage on AWS. A standard EBS General Purpose SSD (gp2) volume was used for this scenario. For other HPC applications that may require faster I/O to prevent data writes from becoming a bottleneck to turn-around speed, we recommend FSx for Lustre. FSx for Lustre integrates with Amazon S3, allowing users to exchange data with Amazon S3 efficiently.

AWS customers can choose to run their applications on either threads or cores. With Hyper-Threading, a single physical CPU core appears as two logical CPUs to the operating system. For an application like Simcenter STAR-CCM+, excellent linear scaling can be seen when using either threads or cores, though we generally recommend disabling Hyper-Threading. Most HPC applications benefit from disabling Hyper-Threading, so running with it disabled tends to be the preferred environment for HPC workloads. For more information, see Well-Architected Framework HPC Lens.

Elastic Fabric Adapter (EFA)

Elastic Fabric Adapter (EFA) is a network device that can be attached to Amazon EC2 instances to accelerate HPC applications by providing lower and more consistent latency and higher throughput than the Transmission Control Protocol (TCP) transport. The C5n.18xlarge instances used for running Simcenter STAR-CCM+ for this case support EFA, which is generally recommended for best scaling.
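One way to request both settings, Hyper-Threading disabled and an EFA network interface, is through the parameters of the EC2 `run_instances` API. The sketch below only builds the keyword arguments for boto3 and does not call AWS. It is not necessarily how the cluster in this post was launched, and the function name and the AMI, subnet, and security group IDs are placeholder assumptions.

```python
def c5n_launch_parameters(ami_id: str, subnet_id: str,
                          security_group_id: str, count: int) -> dict:
    """Keyword arguments for the boto3 EC2 client's run_instances call."""
    return {
        "ImageId": ami_id,
        "InstanceType": "c5n.18xlarge",
        "MinCount": count,
        "MaxCount": count,
        # One thread per core disables Hyper-Threading: 36 cores, 36 vCPUs.
        "CpuOptions": {"CoreCount": 36, "ThreadsPerCore": 1},
        # An EFA is requested through the interface type of the network interface.
        "NetworkInterfaces": [
            {
                "DeviceIndex": 0,
                "InterfaceType": "efa",
                "SubnetId": subnet_id,
                "Groups": [security_group_id],
            }
        ],
    }


# Placeholder IDs; replace them with real resources before use.
params = c5n_launch_parameters("ami-EXAMPLE", "subnet-EXAMPLE", "sg-EXAMPLE", count=2)
# With boto3 installed and credentials configured, this could be passed as:
#   boto3.client("ec2").run_instances(**params)
print(params["CpuOptions"], params["NetworkInterfaces"][0]["InterfaceType"])
```

The instances also need the EFA software stack and an MPI library that supports it before an application can use the adapter.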

Summary

This post demonstrates the scalability of the commercial CFD software Simcenter STAR-CCM+ for an external aerodynamics simulation performed on Amazon EC2 C5n.18xlarge instances. The availability of EFA, a high-performing network device, on these instances results in excellent scalability of the application. The case turn-around time and associated costs of running Simcenter STAR-CCM+ on AWS hardware are discussed. In general, excellent performance can be achieved on AWS for most HPC applications. In addition to low cost and quick turn-around time, important considerations for HPC also include throughput and availability. AWS offers high throughput, scalability, security, cost savings, and high availability, which shortens queue times and reduces the case turn-around time.

![Figure 3. Cost per run for: On-Demand pricing ($3.888 per hour for C5n.18xlarge in US-East-1) with and without the Simcenter STAR-CCM+ POD license cost as a function of turn-around time [Blue]; 3-yr all-upfront pricing ($1.475 per hour for C5n.18xlarge in US-East-1) [Green]](https://d2908q01vomqb2.cloudfront.net/1b6453892473a467d07372d45eb05abc2031647a/2020/09/21/Figure-3.-1024x676.png)