Achieve Consistent Application-level Tagging for Cost Tracking in AWS

Introduction

As organizations transform their business or grow due to market demand, they often struggle to implement the right tools to understand their AWS footprint and associated cost. A large AWS footprint may include multiple AWS accounts, different infrastructure environments, and application environments for specific projects. The complexity of this footprint grows by an order of magnitude if applications are built using a microservice architecture with individual build and deployment pipelines, and frequent release cycles.

To keep pace with growth and manage a large AWS footprint effectively, two approaches can help:

- Automation of infrastructure and resource provisioning

- Standardized cost tracking

First is automation, where organizations define their infrastructure using code (Infrastructure-as-Code [IaC]). The next level of maturity comes from defining a release lifecycle for infrastructure and applications: continuous integration (CI), in which code is built and tested continuously, and continuous delivery and/or deployment (CD), in which releases are automated. Together, these bring agility to your product release cycle. To help speed up CI/CD adoption while maintaining control and standards, platform engineering teams can provide application teams (software developers) with a self-service mechanism to deploy standardized infrastructure.

In this post, we look at how AWS Proton helps with standardizing and automating across an organization.

Second is cost tracking, where organizational level visibility and a consistent view on cost is important to understand how teams spend on AWS resources. Every resource needs to be tracked for cost, and the tracking mechanism needs to be responsive to changes in resources. This becomes a big challenge with the proliferation of microservices and different environments. An application can have several microservices with each having multiple resources (such as Amazon ECS tasks, AWS Lambda functions, and Kubernetes deployments) and each microservice has its own release cycle (CI/CD) in different environments (development/test/stage/production).

How can you effectively track costs for modern applications built with microservices?

Using tags for cost allocation

AWS Billing and Cost Management provides detailed billing information at the account level. From the billing console page, you can get a cost breakdown at an AWS service level for the whole account. However, out of the box, this does not provide visibility at a granular microservices level. How can you get to this level of granularity?

AWS provides cost allocation tags for cost tracking. Tags are key-value pairs assigned to the resources you create. These tags are set by the organization’s administrators based on their organization’s method of classifying and tracking workloads. The following screenshot shows one way of tracking, using Cost Center and Environment tags.

Solution overview

Once these tags are activated and applied to resources, all resources with a particular tag can be queried and their costs consolidated to provide a granular view, such as by a specific cost center (CostCenter:78925) or by environment (userStack:Production). We now have a mechanism available, but how are these tags applied, and how are they used to gain visibility and get actionable insights?
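As a rough illustration of the consolidation described above, the following Python sketch sums costs per tag value. The line items, resource IDs, and amounts are hypothetical; in practice this data would come from a billing export or AWS Cost Explorer rather than a hard-coded list.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical billing line items: (resource id, tags, cost in USD).
LINE_ITEMS = [
    ("vpc-1", {"CostCenter": "78925", "userStack": "Production"}, Decimal("12.50")),
    ("ecs-task-1", {"CostCenter": "78925", "userStack": "Test"}, Decimal("3.25")),
    ("lambda-1", {"CostCenter": "10001", "userStack": "Production"}, Decimal("0.75")),
    ("bucket-1", {}, Decimal("1.00")),  # untagged resource
]


def cost_by_tag(line_items, tag_key):
    """Sum costs per value of tag_key; resources without the tag go under 'untagged'."""
    totals = defaultdict(Decimal)  # Decimal() starts each total at 0
    for _resource_id, tags, cost in line_items:
        totals[tags.get(tag_key, "untagged")] += cost
    return dict(totals)


if __name__ == "__main__":
    print(cost_by_tag(LINE_ITEMS, "CostCenter"))
    print(cost_by_tag(LINE_ITEMS, "userStack"))
```

Note that the untagged resource still incurs cost but cannot be attributed to any cost center, which is the gap that consistent tagging is meant to close.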

Challenges with tagging and effective use of tags

Applying tags to all resources is a great solution, but what are the challenges with tagging?

- First, every resource that has a cost associated with it has to be tagged, and tags must be kept current as resources are added, modified, or deleted. (Tags for individual resources can be added in code using IaC.)

- Second, tags need to be granular enough for management to see resources at the environment level (such as development/test/stage/production), the application level, and the service (microservice) level.

- Third, granular tagging at a microservices level for containerized workloads becomes daunting as applications and microservices proliferate. Maintaining versions of microservices compounds this problem.

- Finally, if each application team uses a different set of tags to classify resources for their application, then a consistent view of spend cannot be achieved at an organizational level. It’s clear that tagging needs to be managed by a central entity at the organizational level and not by individual application teams.

The reasons listed above indicate that applying tags consistently and maintaining them in a large and rapidly growing organization is not easy. For effective cost tracking, there needs to be an automated way to apply tags to resources uniformly at every level of granularity (in other words, at the AWS account level, the environment level, and the application or microservice level). How can this be achieved?
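To make the consistency problem concrete, here is a minimal Python sketch of the kind of audit a central team might otherwise have to run by hand. The required keys, resource names, and tag values are assumptions chosen for illustration, not an AWS or AWS Proton API.

```python
# Assumed organizational standard; substitute your own required tag keys.
REQUIRED_TAG_KEYS = ("CostCenter", "userStack")

# Hypothetical resources and the tags their owning teams applied.
SAMPLE_RESOURCES = {
    "frontend-task": {"CostCenter": "78925", "userStack": "Production"},
    "orders-fn": {"costcenter": "78925"},  # wrong key casing, no environment
    "legacy-bucket": {"CostCenter": "", "userStack": "Test"},  # empty value
}


def missing_tag_keys(tags, required=REQUIRED_TAG_KEYS):
    """Return the required keys that are absent or empty, in the order of `required`."""
    return [key for key in required if not tags.get(key)]


def audit(resources, required=REQUIRED_TAG_KEYS):
    """Map each non-compliant resource id to the tag keys it is missing."""
    report = {}
    for resource_id, tags in resources.items():
        missing = missing_tag_keys(tags, required)
        if missing:
            report[resource_id] = missing
    return report


if __name__ == "__main__":
    for resource_id, missing in audit(SAMPLE_RESOURCES).items():
        print(f"{resource_id}: missing {', '.join(missing)}")
```

Tag keys are case-sensitive, so a team that writes `costcenter` instead of `CostCenter` effectively creates a different tag. Small drifts like this are what make per-team tagging hard to consolidate.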

Walkthrough

AWS Proton

AWS Proton is a managed container and serverless application delivery service that helps platform teams automate the creation of consistent infrastructure and application templates that application teams can consume. AWS Proton automatically tags the resources that are created in AWS CloudFormation, and supports consistent Terraform tagging. As a result, AWS Proton is a central location from which consistent resource definition and tracking can be achieved.

AWS Proton achieves this through the use of Environment templates and Service templates:

- Environment Templates: Create common infrastructure resources (such as Amazon VPC, Amazon ECS, and AWS ParallelCluster) to provide an easy way to version control and replicate infrastructure.

- Service Templates: Create application-specific infrastructure resources (such as AWS Fargate and AWS Lambda) that provide a self-service mechanism for application developers to develop and test their applications.

The above templates are version controlled by AWS Proton, which makes it easy to manage and maintain changes to infrastructure and applications in a dynamic organization from a central location.

AWS Proton supports AWS CloudFormation and Terraform (HashiCorp Configuration Language [HCL]). Templates written in these two IaC languages are managed differently. AWS-managed provisioning (CloudFormation) provides an integrated experience, while self-managed provisioning (HCL using Terraform) uses a pull request to a source code repository.

See the Provisioning Methods documentation for how AWS-managed and self-managed provisioning work, and read the blog post AWS Proton Self-Managed Provisioning for more details.

How does this help with all the challenges associated with consistent tagging?

AWS Proton automatic tagging

The key mechanism that AWS Proton uses is template definition for infrastructure and applications. When templates are created, tags can be easily injected. Since AWS Proton serves as a central location for creating infrastructure for both platform and application teams, the tagging becomes consistent automatically. This provides a consistent way of visualizing resources based on cost tags, both at the infrastructure environment level and at each service.

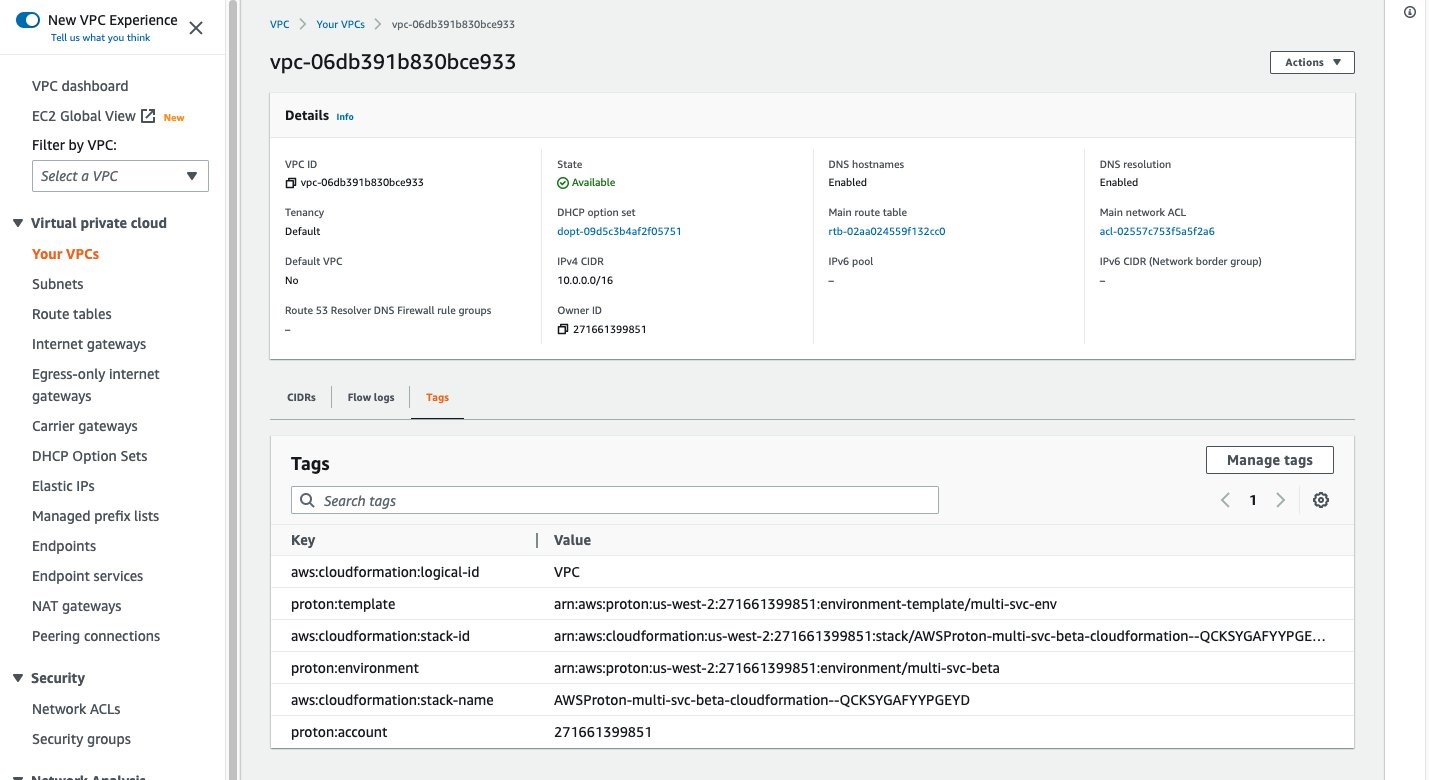

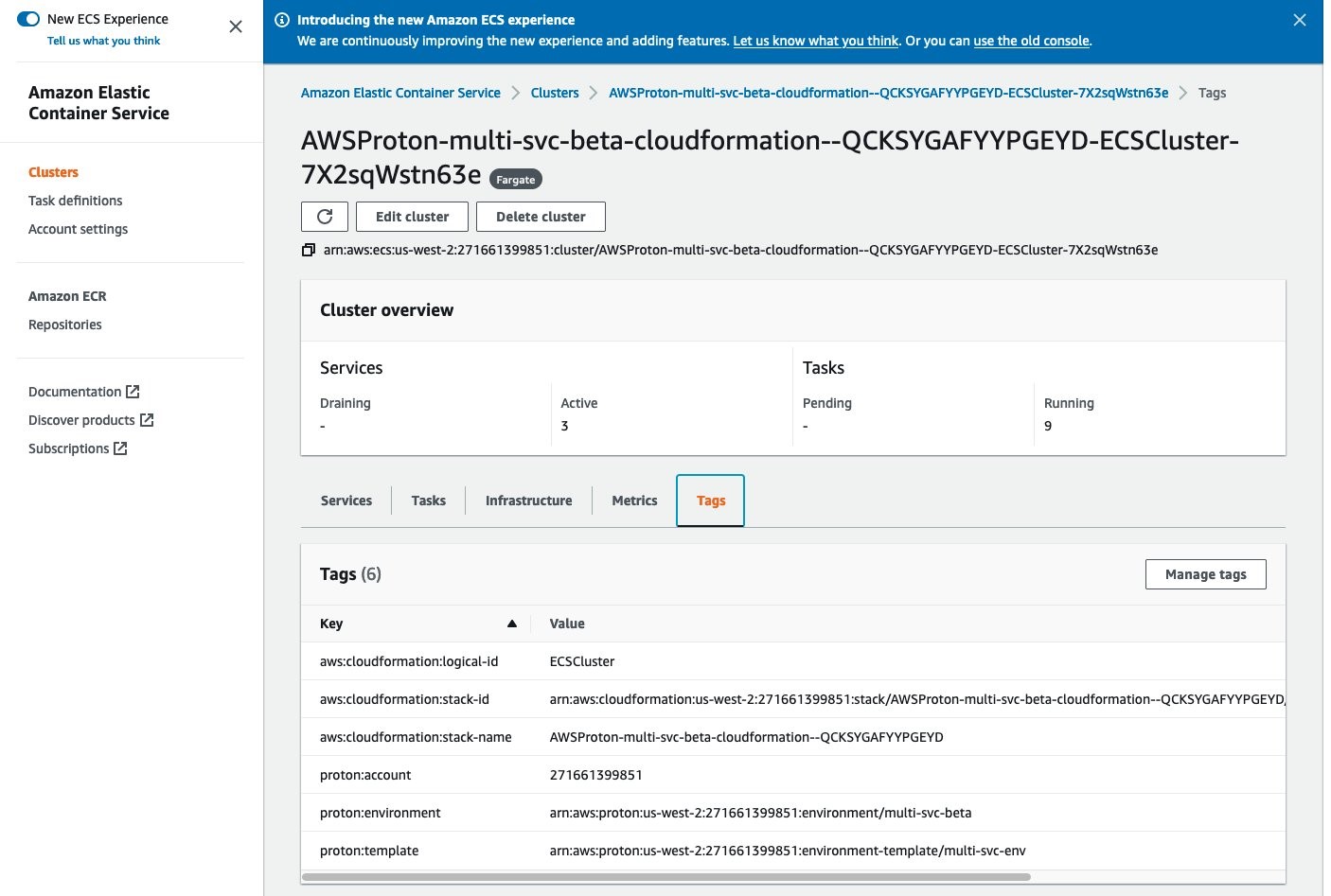

Tags at infrastructure level (such as VPC and ECS Cluster).

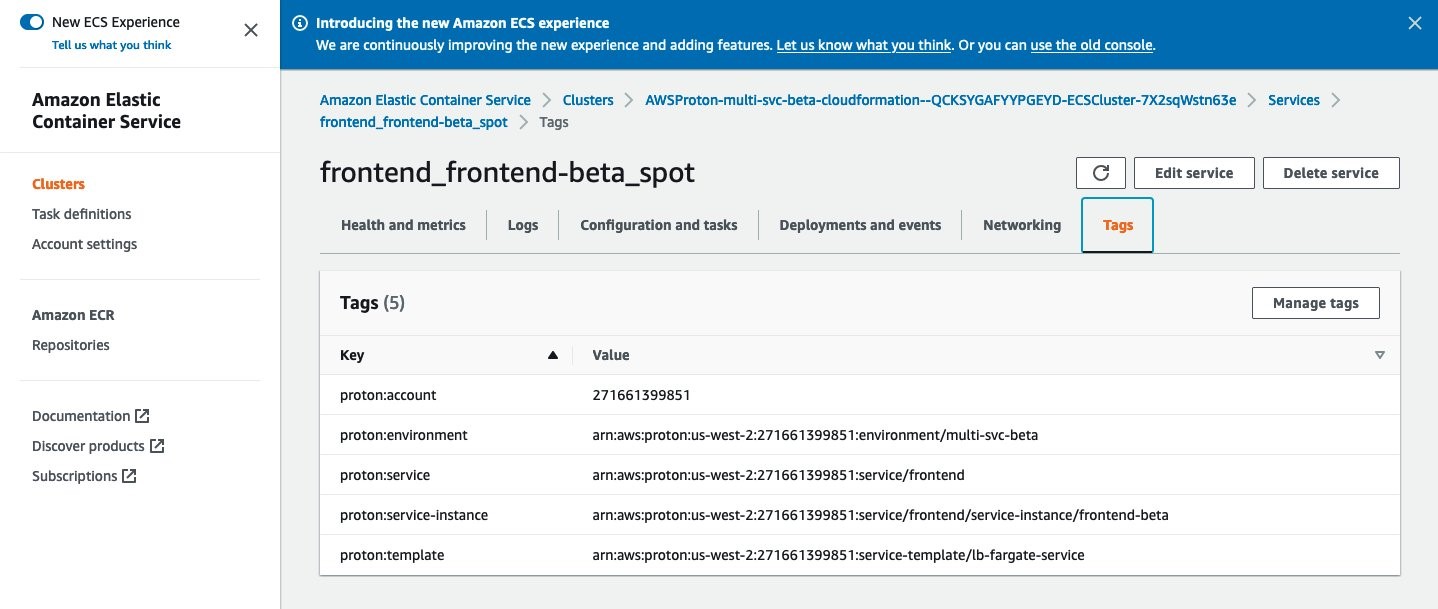

Tags at application and service level (such as frontend service).

Note: For AWS-managed provisioning (CloudFormation templates), tags are added automatically by AWS Proton. For self-managed provisioning (Terraform templates), tagging is accomplished using the default_tags feature of Terraform (read about default tags in the Terraform AWS Provider). A Terraform variable named proton_tags needs to be defined in your AWS Proton template and included as default_tags in your AWS provider.

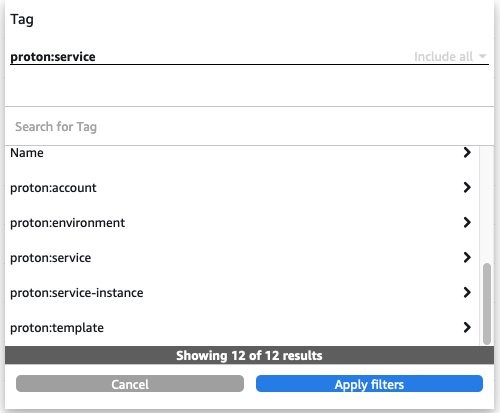

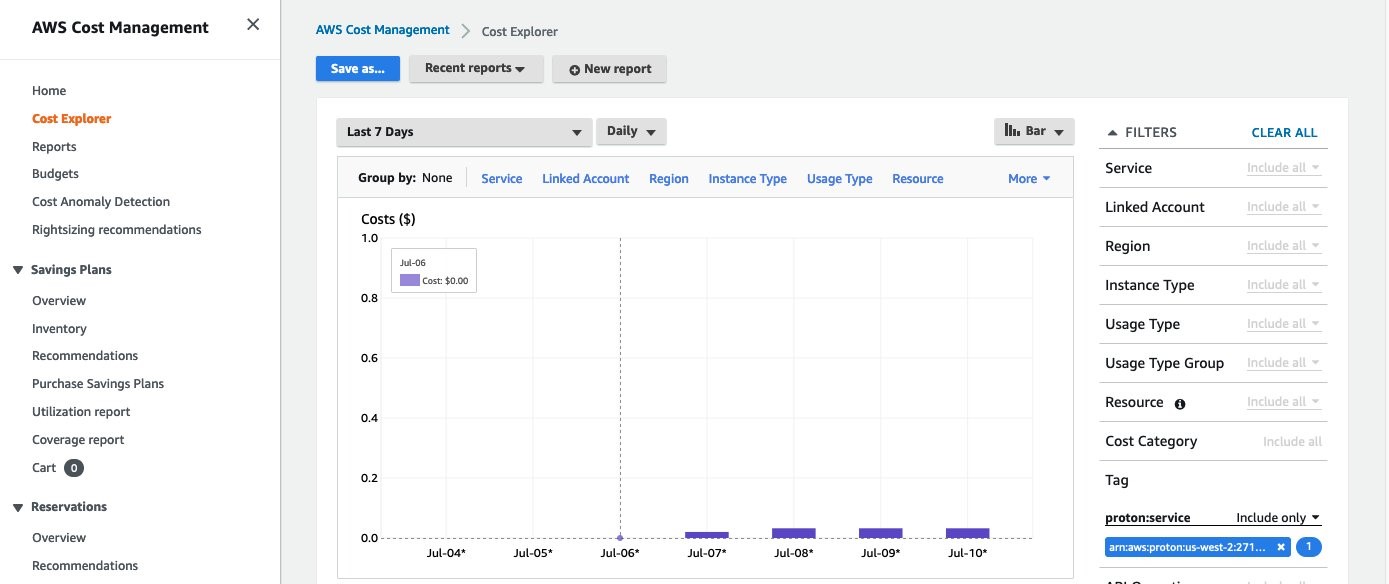

AWS Cost Explorer tag based visualization

AWS Cost Explorer (and other third-party tools) can be used to visualize costs at a granular level (for example, by environment and by service) using the cost tags automatically injected by AWS Proton. The following images show how this can be done from AWS Cost Explorer.

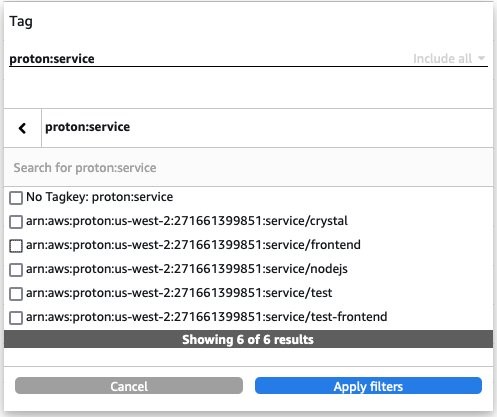

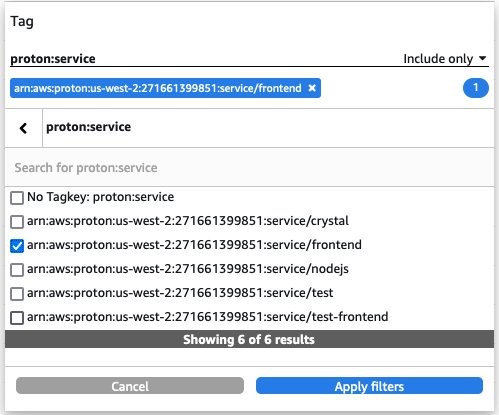

- Select Tag in the Filter section on the right-hand side.

- Select the proton:service tag.

- Select the service name you wish to view (such as arn:aws:proton:us-west-2:271661399851:service/frontend).
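The console steps above have a programmatic counterpart in the Cost Explorer GetCostAndUsage API. The following Python sketch builds such a request; the date range, the monthly granularity, the grouping by AWS service, and the helper names are assumptions for illustration. The `fetch_costs` helper additionally assumes that boto3 is installed and that credentials with Cost Explorer access are configured.

```python
from datetime import date

# Example service ARN from the console steps above.
FRONTEND_ARN = "arn:aws:proton:us-west-2:271661399851:service/frontend"


def build_cost_query(service_arn, start, end):
    """Build keyword arguments for GetCostAndUsage, filtered by the proton:service tag.

    `start` is inclusive and `end` is exclusive, as Cost Explorer expects.
    """
    return {
        "TimePeriod": {"Start": start.isoformat(), "End": end.isoformat()},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        # Only include costs for resources tagged with this AWS Proton service.
        "Filter": {"Tags": {"Key": "proton:service", "Values": [service_arn]}},
        # Break the result down by AWS service (an illustrative choice).
        "GroupBy": [{"Type": "DIMENSION", "Key": "SERVICE"}],
    }


def fetch_costs(query):
    """Send the query to Cost Explorer (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the query builder works without it

    return boto3.client("ce").get_cost_and_usage(**query)


if __name__ == "__main__":
    # Hypothetical reporting period: January 2023.
    print(build_cost_query(FRONTEND_ARN, date(2023, 1, 1), date(2023, 2, 1)))
```

As with the console, the tag only appears in results once it has been activated as a cost allocation tag.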

This filter applies to all application resources consumed by the frontend service. The following report shows sample output from AWS Cost Explorer.

The post on AWS Proton Template Libraries provides sample templates for both AWS CloudFormation and Terraform. Also included are sample applications corresponding to the AWS Proton Environment templates. This provides an easy way to get started with AWS Proton. You can also learn more about AWS Proton as a provisioning mechanism for Amazon EKS clusters and about the AWS Proton Sample Multi Service deployment.

Additional considerations for Terraform: self-managed provisioning

As mentioned earlier, AWS Proton tagging of resources for Terraform-based templates is accomplished using the default_tags feature of Terraform (read about Default Tags in the Terraform AWS Provider). AWS Proton uses a Terraform variable named proton_tags. The variable needs to be defined in your AWS Proton template and included as default_tags in your AWS provider. See the linked example of tag propagation to learn more. The following example shows how you can achieve automatic tagging with Terraform.

Terraform AWS provider (with default_tags)

AWS Proton renders the HashiCorp Configuration Language (HCL) files with the necessary tags in the proton_tags variable, and the AWS provider is updated to apply those tags by default to all resources it creates.

Another consideration is automating Terraform provisioning using GitHub Actions. The AWS Samples GitHub repository provides a GitHub Actions sample repo that can be used to automate the Terraform infrastructure provisioning process when AWS Proton submits a pull request.
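To illustrate what default tagging means for an individual resource, the following Python sketch models how provider-level default tags combine with tags set on a resource. This is a conceptual model, not Terraform code: the `effective_tags` helper and the resource-level `Name` tag are hypothetical, and the only behavior it encodes is that a tag set directly on a resource takes precedence over a default tag with the same key.

```python
# Tags as AWS Proton might supply them through the proton_tags variable.
# The ARN is the example service used elsewhere in this post.
PROTON_TAGS = {
    "proton:service": "arn:aws:proton:us-west-2:271661399851:service/frontend",
}


def effective_tags(default_tags, resource_tags=None):
    """Return the tags a resource ends up with under provider-level default tags.

    Default tags apply to every resource; a resource-level tag with the
    same key overrides the default. Neither input dict is modified.
    """
    merged = dict(default_tags)
    merged.update(resource_tags or {})
    return merged


if __name__ == "__main__":
    # A resource with no tags of its own still receives the Proton tags.
    print(effective_tags(PROTON_TAGS))
    # A resource with its own (hypothetical) Name tag keeps both.
    print(effective_tags(PROTON_TAGS, {"Name": "frontend-alb"}))
```

Because the tags are set once at the provider level, template authors do not need to repeat them on each resource definition.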

Prerequisites

To get started, make sure you have an AWS account and at least one IaC file in CloudFormation or Terraform that you can use to define a service, then get started with AWS Proton.

Cleaning up

After trying this tagging process, remember to delete example resources to avoid future costs.

Conclusion

In this post, we showed you how to use AWS Proton to manage resources and track costs effectively in an organization that builds applications using modern development methodologies. Using AWS Proton’s automatic tagging feature, an organization can apply consistent infrastructure and application-level tags, visualize cost by environment and by application (per microservice and serverless application), and get actionable insights using tools such as AWS Cost Explorer.