This post also had contributions from Jiwon Yeom, Solutions Architect, AWS.

Introduction

AWS customers use infrastructure as code (IaC) to create cloud resources in a repeatable and predictable manner. IaC is especially helpful in managing environments with identical stacks, which is a common occurrence in active-active multi-region systems. Instead of managing each regional deployment individually, teams avoid infrastructure sprawl by managing infrastructure in both regions using code and pipelines.

Teams need fewer resources to manage and troubleshoot infrastructure when their system configuration is identical across regions or accounts. Using IaC tools like AWS CloudFormation, Terraform, Pulumi, and AWS Cloud Development Kit (CDK), operators create and manage multiple environments from a single codebase and avoid system failures caused by ad hoc changes. IaC also makes it easier to roll back when a change fails.

Similarly, the GitOps approach allows you to externalize your Kubernetes cluster configuration. GitOps tools like Argo CD allow you to store your Kubernetes cluster state as code in a Git repository. If you manage multiple replica clusters, which is a common scenario in multi-region deployments, you can control their configuration by pointing each cluster’s GitOps operator to the same Git repository.

Unified infrastructure and workload deployments

Customers that want to manage their infrastructure and Kubernetes resources using the same codebase can choose from a variety of available solutions. Teams already using Terraform can package their applications using the Helm and Kubernetes providers. Pulumi users have the Pulumi Kubernetes Provider.

Similarly, CDK and cdk8s allow you to code both the cloud infrastructure and Kubernetes resources using familiar programming languages. Customers can declare their Virtual Private Clouds (VPCs), subnets, Amazon EKS clusters, Kubernetes namespaces, role-based access control (RBAC) policies, deployments, services, ingresses, and other resources in the same codebase. The Git repository that hosts the application code also becomes the source of truth for infrastructure and workload configuration.

This post shows you how to create Amazon EKS clusters in multiple AWS Regions using CDK and create a continuous deployment pipeline for infrastructure and application changes.

Use cases

Imagine you work for an Independent Software Vendor (ISV) with a security-sensitive product that can’t be multi-tenant. The system design requires one Amazon EKS cluster per tenant. When the product becomes successful, your organization may be responsible for operating a multitude of replica clusters.

In scenarios where the cluster lifecycle is closely tied to the application’s, you can store the definition of your infrastructure, Amazon EKS cluster, and application resources in the same codebase. To deploy a new stack, you rerun the CDK code, which creates the infrastructure resources required to run the application and then deploys the application in an Amazon EKS cluster.

Day 2 operations, such as deploying new versions of applications and upgrading clusters, are also managed using CDK. We recommend checking code using a tool like cdk-nag, along with using standardization and parametrization to minimize the variability between regions.

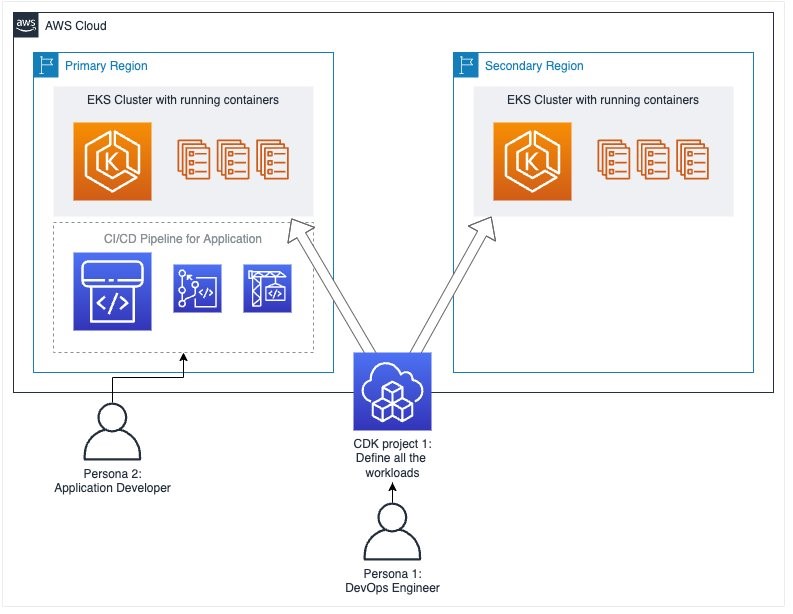

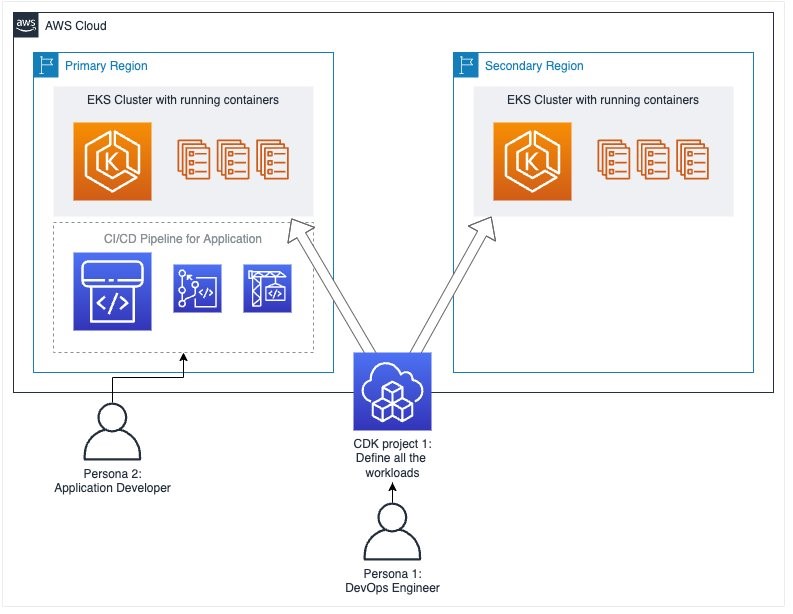

Multi-region architecture

The code included in this post creates two proof-of-concept Amazon EKS clusters and the supporting infrastructure in two AWS Regions.

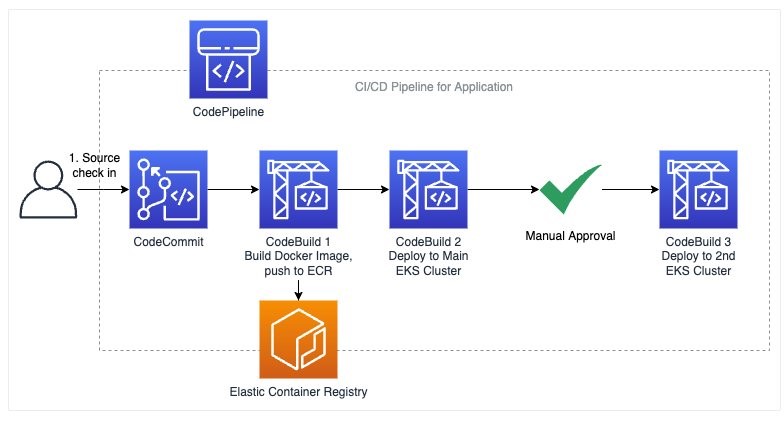

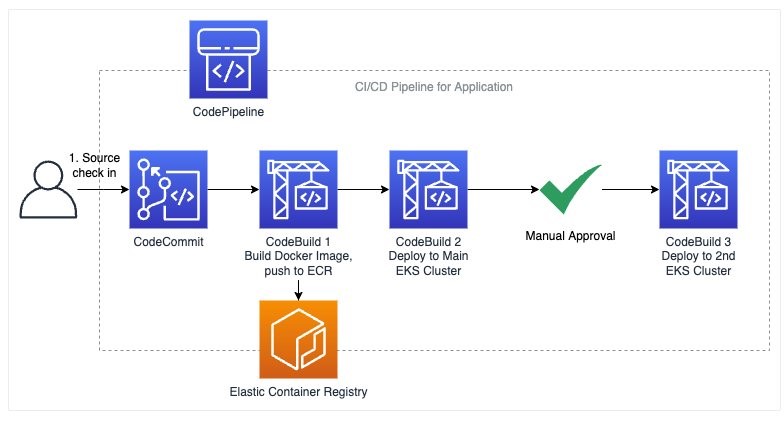

CDK also creates a stack with CodePipeline to build a container image from the sample application’s source code. Users trigger an automated deployment to the primary AWS Region by checking in code to the AWS CodeCommit Git repository that CDK creates. When new changes are checked in to the Git repository, CodePipeline triggers AWS CodeBuild, which builds an image and pushes it to an Amazon Elastic Container Registry (Amazon ECR) repository.

The CDK code this post provides doesn’t include multi-region traffic routing. Customers can add a Global Accelerator, as explained in this post, or use Domain Name System (DNS) to route traffic between regions.

Solution overview

Prerequisites

You will need the following to complete the steps in this post:

Let’s start by setting a few environment variables:

ACCOUNT_ID=$(aws sts get-caller-identity --query 'Account' --output text)

AWS_PRIMARY_REGION=us-east-2

AWS_SECONDARY_REGION=eu-west-2

Clone the sample repository and install dependency packages. This repository contains CDK v2 code written in TypeScript.

git clone https://github.com/aws-samples/containers-blog-maelstrom

cd containers-blog-maelstrom/aws-cdk-eks-multi-region-skeleton/

npm install

The Amazon EKS cluster definition is stored in lib/cluster-stack.ts. The following is the snippet of the code:

const cluster = new eks.Cluster(this, 'demoeks--cluster', {

clusterName: `demoeks`,

mastersRole: clusterAdmin,

version: eks.KubernetesVersion.V1_21,

defaultCapacity: 2,

defaultCapacityInstance: new ec2.InstanceType(props.onDemandInstanceType)

});

As defined in bin/multi-cluster-ts.ts, the code creates two clusters and deploys the supporting infrastructure, the continuous integration and continuous delivery/continuous deployment (CI/CD) stack (CodePipeline, CodeBuild, and CodeCommit), Amazon EKS clusters, and a sample web application.

You can customize the multi-cluster-ts.ts file to change regions and instance types based on your requirements.

const primaryRegion = {account: account, region: 'us-east-2'};

const secondaryRegion = {account: account, region: 'eu-west-2'};

const primaryOnDemandInstanceType = 'r5.2xlarge';

const secondaryOnDemandInstanceType = 'm5.2xlarge';
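One way to keep the differences between regions small is to hold the shared settings in one place and pass only the region-specific values separately. The following is a minimal, dependency-free sketch of that idea, not the code from the sample repository. The names SharedSettings, RegionalOverride, and clusterSettings are hypothetical; the values mirror the snippets above.

```typescript
// Settings that should be identical in every Region
// (values taken from the cluster snippet in this post).
interface SharedSettings {
  kubernetesVersion: string;
  defaultCapacity: number;
}

// Settings that are allowed to differ per Region.
interface RegionalOverride {
  region: string;
  onDemandInstanceType: string;
}

type ClusterSettings = SharedSettings & RegionalOverride;

// Hypothetical helper: merges the shared settings with one Region's overrides.
function clusterSettings(shared: SharedSettings, regional: RegionalOverride): ClusterSettings {
  return { ...shared, ...regional };
}

const sharedSettings: SharedSettings = { kubernetesVersion: '1.21', defaultCapacity: 2 };

const primarySettings = clusterSettings(sharedSettings, {
  region: 'us-east-2',
  onDemandInstanceType: 'r5.2xlarge',
});
const secondarySettings = clusterSettings(sharedSettings, {
  region: 'eu-west-2',
  onDemandInstanceType: 'm5.2xlarge',
});

console.log(primarySettings, secondarySettings);
```

With this shape, a reviewer can see at a glance that only the region and the instance type vary between the two clusters.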

Bootstrap AWS Regions

The first step to any CDK deployment is bootstrapping the environment. cdk bootstrap is a command in the AWS CDK command-line interface (CDK CLI) that prepares an environment (that is, a combination of AWS account and AWS Region) with the resources CDK requires to perform deployments into that environment. If you already use CDK in a region, you don’t need to repeat the bootstrapping process.

Execute the commands below to bootstrap the AWS environment in us-east-2 and eu-west-2:

cdk bootstrap aws://$ACCOUNT_ID/$AWS_PRIMARY_REGION

cdk bootstrap aws://$ACCOUNT_ID/$AWS_SECONDARY_REGION
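To illustrate what an environment means here, the sketch below builds the aws://ACCOUNT/REGION identifiers passed to cdk bootstrap in the commands above. The function name bootstrapTargets and the placeholder account ID are assumptions made for this example and are not part of the sample repository.

```typescript
// Hypothetical helper: builds the environment identifiers that
// `cdk bootstrap` accepts, one per account/Region pair.
function bootstrapTargets(accountId: string, regions: string[]): string[] {
  return regions.map((region) => `aws://${accountId}/${region}`);
}

// Placeholder account ID; in this post the real value comes from
// `aws sts get-caller-identity`.
const targets = bootstrapTargets('123456789012', ['us-east-2', 'eu-west-2']);

console.log(targets.join('\n'));
```

Each entry corresponds to one environment that must be bootstrapped before CDK can deploy into it.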

The CDK code creates five stacks:

- A cluster-stack that creates an Amazon EKS cluster in the primary region, us-east-2

- A container-stack that deploys sample nginx containers to the Amazon EKS cluster in the primary region, us-east-2

- A cluster-stack that creates an Amazon EKS cluster in the secondary region, eu-west-2

- A container-stack that deploys sample nginx containers to the Amazon EKS cluster in the secondary region, eu-west-2

- A cicd-stack that creates a CI/CD pipeline using AWS CodePipeline, AWS CodeBuild, and AWS CodeCommit to containerize a sample Flask application and deploy it to the Amazon EKS clusters in both regions

Run cdk list to see the list of the stacks to be created:

$ cdk list

ClusterStack-us-east-2

ClusterStack-eu-west-2

ContainerStack-us-east-2

ContainerStack-eu-west-2

CicdStack
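The naming pattern in this output can be modeled with a short sketch: one cluster stack and one container stack per region, plus a single CI/CD stack. This is only a model of the pattern visible in the cdk list output, and the function name expectedStackNames is hypothetical; the sample repository may construct its stack names differently.

```typescript
// Hypothetical model of the stack names shown by `cdk list`:
// ClusterStack-<region> and ContainerStack-<region> for each Region,
// followed by one shared CicdStack.
function expectedStackNames(regions: string[]): string[] {
  const clusterStacks = regions.map((region) => `ClusterStack-${region}`);
  const containerStacks = regions.map((region) => `ContainerStack-${region}`);
  return clusterStacks.concat(containerStacks, ['CicdStack']);
}

const stackNames = expectedStackNames(['us-east-2', 'eu-west-2']);

console.log(stackNames.join('\n'));
```

Comparing a list like this with the real cdk list output is a quick way to confirm that the region values in bin/multi-cluster-ts.ts were picked up as intended.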

You can use cdk diff to get a list of resources CDK will create:

cdk diff

If the result doesn’t match the output below, then refer to this step and check if npm run watch is running in the background.

You should see the following output:

Stack ClusterStack-ap-southeast-2

IAM Statement Changes

┌───┬────────────────────────┬────────┬────────────────────────┬────────────────────────┬───────────┐

│ │ Resource │ Effect │ Action │ Principal │ Condition │

├───┼────────────────────────┼────────┼────────────────────────┼────────────────────────┼───────────┤

│ + │ ${AdminRole.Arn} │ Allow │ sts:AssumeRole │ AWS:arn:${AWS::Partiti│ │

│ │ │ │ │ on}:iam::<<ACCOUNT_ID>│ │

│ │ │ │ │ root │ │

...

...

Resources

[+] AWS::IAM::Role AdminRole AdminRole38563C57

[+] AWS::EC2::VPC demogo-cluster/DefaultVpc demogoclusterDefaultVpc0F0EA8D6

[+] AWS::EC2::Subnet demogo-cluster/DefaultVpc/PublicSubnet1/Subnet demogoclusterDefaultVpcPublicSubnet1Subnet9B5D84CC

[+] AWS::EC2::RouteTable demogo-cluster/DefaultVpc/PublicSubnet1/RouteTable demogoclusterDefaultVpcPublicSubnet1RouteTableA9719167

[+] AWS::EC2::SubnetRouteTableAssociation demogo-cluster/DefaultVpc/PublicSubnet1/RouteTableAssociation demogoclusterDefaultVpcPublicSubnet1RouteTableAssociationF6BCC682

[+] AWS::EC2::Route demogo-cluster/DefaultVpc/PublicSubnet1/DefaultRoute demogoclusterDefaultVpcPublicSubnet1DefaultRoute0A0FDBF1

[+] AWS::EC2::EIP demogo-cluster/DefaultVpc/PublicSubnet1/EIP demogoclusterDefaultVpcPublicSubnet1EIP42D57092

[+] AWS::EC2::NatGateway demogo-cluster/DefaultVpc/PublicSubnet1/NATGateway

The code supports region-specific customization. It looks for Kubernetes deployment files in region-specific directories. The sample code contains directories for primary and secondary regions (called yaml-us-east-2 and yaml-eu-west-2). CDK will apply the deployment files in these folders to deploy the Kubernetes application in the two regions.

If your regions differ, create the directories manually and copy the Kubernetes deployment manifest into each directory:

# mkdir yaml-<region-name> for primary and secondary regions

mkdir yaml-$AWS_PRIMARY_REGION

mkdir yaml-$AWS_SECONDARY_REGION

cp yaml-us-east-2/00_ap_nginx.yaml yaml-$AWS_PRIMARY_REGION/

cp yaml-us-east-2/00_ap_nginx.yaml yaml-$AWS_SECONDARY_REGION/
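The directory convention described above (one yaml-<region-name> folder per region) can be expressed as a small check. The sketch below is an illustration only: the helper names manifestDirFor and regionsMissingManifests are hypothetical, and the list of existing directories is passed in as plain strings rather than read from disk.

```typescript
// Hypothetical helper mirroring the convention described above:
// manifests for a Region live in a folder named yaml-<region-name>.
function manifestDirFor(region: string): string {
  return `yaml-${region}`;
}

// Returns the Regions whose manifest directory is not in `existingDirs`.
function regionsMissingManifests(regions: string[], existingDirs: string[]): string[] {
  return regions.filter((region) => existingDirs.indexOf(manifestDirFor(region)) === -1);
}

// Example: the repository ships with the two default directories,
// but the primary Region has been changed to us-west-2.
const missingRegions = regionsMissingManifests(
  ['us-west-2', 'eu-west-2'],
  ['yaml-us-east-2', 'yaml-eu-west-2'],
);

console.log(missingRegions); // Regions that still need a yaml-<region-name> directory
```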

Create the clusters

If you are following the tutorial using different AWS Regions than the ones used in the post, please update the values of primary and secondary region in bin/multi-cluster-ts.ts before proceeding.

The cdk deploy subcommand deploys the specified stack(s) to your AWS account. Let’s deploy all five stacks:

cdk deploy --all

Upon completion, you can check the pipeline in the CodePipeline console. Because there is no master branch in CodeCommit yet, the pipeline will be in a failed state.

CDK creates the following resources:

- Amazon EKS clusters in two regions and the required infrastructure, including VPCs, 3x public and private subnets, Internet Gateways, and Network Address Translation (NAT) Gateways

- Amazon EC2 instances: r5.2xlarge, m5.2xlarge

- CI/CD pipeline to deploy the sample application to Amazon EKS clusters in us-east-2 and eu-west-2

- Amazon ECR Repository in both to store sample application container images

- A Kubernetes namespace in each cluster

Deploy sample app to multiple regions

Navigate to the sample application in the cloned repo and register the created CodeCommit repository in the application project with the following commands:

mkdir ../../sample-app

cp -R ../aws-cdk-multi-region-sample-app ../../sample-app

cd ../../sample-app/aws-cdk-multi-region-sample-app

EKS_CDK_CODECOMMIT_REPO=$(aws codecommit list-repositories --region $AWS_PRIMARY_REGION --query "repositories[?starts_with(repositoryName,'hello-py')].repositoryName" --output text)

EKS_CDK_CODECOMMIT_REPO_URL=$(aws codecommit get-repository --region $AWS_PRIMARY_REGION --repository-name $EKS_CDK_CODECOMMIT_REPO --query 'repositoryMetadata.cloneUrlHttp' --output text)

git init

git remote add codecommit $EKS_CDK_CODECOMMIT_REPO_URL

Before checking code into the CodeCommit repository, we have to configure the Git client with CodeCommit credentials. This step requires using the AWS Management Console.

In the AWS Identity and Access Management (IAM) console, create HTTPS Git credentials for AWS CodeCommit for the IAM user that your terminal is currently configured to use. For more information, please refer to the AWS documentation.

Push the code in the directory to CodeCommit with the following command:

git add .

git commit -am "initial commit"

git push codecommit master

If this is your first time using CodeCommit, you will be prompted for username and password to push code to the CodeCommit Git repository. You can use the CodeCommit credentials downloaded in the prior step.

After you push to the repository, you can see in the CodePipeline console that the pipeline has been triggered.

Once the pipeline deploys the sample application to the Amazon EKS cluster in the primary region, it waits for a manual review before proceeding. Let’s check the deployment’s health in the primary cluster. Configure your kubectl to use your primary cluster:

CLUSTER1_KUBECONFIG_COMMAND=$(aws cloudformation describe-stacks \

--stack-name "ClusterStack-$AWS_PRIMARY_REGION" \

--region $AWS_PRIMARY_REGION \

--query 'Stacks[0].Outputs[?starts_with(OutputKey,`demoeksclusterConfig`)].OutputValue' \

--output text)

$(echo $CLUSTER1_KUBECONFIG_COMMAND)

See running services:

kubectl get services

Let’s curl the hello-py service:

curl $(kubectl get service hello-py -o \

jsonpath='{.status.loadBalancer.ingress[*].hostname}') && echo ""

The service responds to the curl request with a Hello World from <AWS Region> message. Since the service is functional in the primary region, this deployment is assumed successful. As a result, the pipeline can proceed with deploying the application into the secondary region.

Before we resume the pipeline, let’s verify that the hello-py service doesn’t exist in the secondary region.

Set your kubectl's current context to the Amazon EKS cluster in the secondary region:

CLUSTER2_KUBECONFIG_COMMAND=$(aws cloudformation describe-stacks \

--stack-name "ClusterStack-$AWS_SECONDARY_REGION" \

--region $AWS_SECONDARY_REGION \

--query 'Stacks[0].Outputs[?starts_with(OutputKey,`demoeksclusterConfig`)].OutputValue' \

--output text)

$(echo $CLUSTER2_KUBECONFIG_COMMAND)

Verify that the hello-py service is not running in the secondary cluster:

kubectl get service hello-py

You should get an error:

Error from server (NotFound): services "hello-py" not found

Now we know there is no hello-py service in the secondary region. Let’s return to CodePipeline and approve the manual step.

In the CodePipeline console, select Review, and then select Approve in the next prompt. You can also use the CodePipeline PutApprovalResult API to automate this approval process.
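As a rough sketch of what automating the approval involves, the code below assembles the request parameters that PutApprovalResult expects (pipelineName, stageName, actionName, result, and token). It does not call AWS. The pipeline, stage, and action names and the token value are placeholders invented for this example; in practice the token has to be read from the pipeline's current state, for example with the GetPipelineState API.

```typescript
// Shape of the request parameters accepted by the CodePipeline
// PutApprovalResult API.
interface ApprovalRequest {
  pipelineName: string;
  stageName: string;
  actionName: string;
  result: { summary: string; status: 'Approved' | 'Rejected' };
  token: string;
}

// Hypothetical helper: builds an "Approved" request for a manual approval action.
// The token identifies the pending approval and must be fetched separately.
function buildApproval(
  pipelineName: string,
  stageName: string,
  actionName: string,
  token: string,
  summary: string,
): ApprovalRequest {
  return {
    pipelineName,
    stageName,
    actionName,
    result: { summary, status: 'Approved' },
    token,
  };
}

// All names and the token below are placeholders, not values from the sample repository.
const approval = buildApproval(
  'example-pipeline',
  'ExampleApprovalStage',
  'ExampleApprovalAction',
  'example-token',
  'hello-py verified in the primary region',
);

console.log(JSON.stringify(approval, null, 2));
```

An object of this shape could then be passed to an AWS SDK client or serialized for the AWS CLI once the primary-region checks pass.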

When the pipeline gets the approval to deploy to the secondary region, it applies the deployment manifest (app-deployment.yaml) to the second Amazon EKS cluster.

Once the pipeline finishes successfully, check that the hello-py service is functional:

kubectl get service hello-py

Once the service has a load balancer attached, send a request to verify that the service responds with the secondary region’s name in the response.

curl $(kubectl get service hello-py -o \

jsonpath='{.status.loadBalancer.ingress[*].hostname}') && echo ""
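The manual check above can also be written as a small assertion. The sketch below assumes the response body has the form "Hello World from <AWS Region>" as described earlier; the exact text returned by the sample application may differ, and the function name respondsFromRegion is hypothetical.

```typescript
// Hypothetical check: does the response body mention the Region we expect?
function respondsFromRegion(body: string, expectedRegion: string): boolean {
  return body.indexOf(expectedRegion) !== -1;
}

// Assumed response format, based on the message described in this post.
const sampleBody = 'Hello World from eu-west-2';

const servedBySecondary = respondsFromRegion(sampleBody, 'eu-west-2');
const servedByPrimary = respondsFromRegion(sampleBody, 'us-east-2');

console.log({ servedBySecondary, servedByPrimary });
```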

This completes the deployment process. The hello-py service is deployed and functional in both regions.

Upgrades

As new code is checked in to the CodeCommit repository, the pipeline will automatically attempt to create a new deployment. When operators verify the changes are successful in the primary region, they can approve the deployment to the secondary region.
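The rollout order described here (primary region first, then a manual gate, then the secondary region) can be modeled as a tiny state machine. This is a conceptual sketch only; the stage names and the nextStage function are invented for illustration and do not correspond to the stage names in the sample pipeline.

```typescript
// Hypothetical stages of one rollout.
type Stage = 'deploy-primary' | 'awaiting-approval' | 'deploy-secondary' | 'done' | 'stopped';

// Advances the rollout by one step. `approved` is only consulted at the gate:
// a rejected change never reaches the secondary Region.
function nextStage(current: Stage, approved: boolean): Stage {
  switch (current) {
    case 'deploy-primary':
      return 'awaiting-approval';
    case 'awaiting-approval':
      return approved ? 'deploy-secondary' : 'stopped';
    case 'deploy-secondary':
      return 'done';
    default:
      return current; // 'done' and 'stopped' are terminal
  }
}

// Walk one approved rollout from start to finish.
const approvedPath: Stage[] = ['deploy-primary'];
while (approvedPath.length < 4) {
  approvedPath.push(nextStage(approvedPath[approvedPath.length - 1], true));
}

console.log(approvedPath.join(' -> '));
```

The point of the gate is that a faulty change stops after the primary region, which leaves the secondary region serving the previous version.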

The file lib/cluster-stack.ts contains the declaration of your Amazon EKS cluster settings. You can modify it to control cluster properties and upgrade clusters.

As you onboard newer services into these clusters, you can replicate the CI/CD stack created in this post for each service.

Cleanup

You will continue to incur costs until you delete the infrastructure that you created for this post. Use the following commands to clean up the AWS resources created for this post:

$(echo $CLUSTER1_KUBECONFIG_COMMAND)

kubectl delete svc hello-py

$(echo $CLUSTER2_KUBECONFIG_COMMAND)

kubectl delete svc hello-py

cd ../../containers-blog-maelstrom/aws-cdk-eks-multi-region-skeleton

AWS_ECR_REPO=$(aws ecr describe-repositories --query "repositories[].[repositoryName]" --region $AWS_PRIMARY_REGION | grep 'cicdstack-ecrforhellopy' | sed -e 's/^[[:space:]]*//' | sed -e 's/^"//' -e 's/"$//')

AWS_ECR_IMAGES_TO_DELETE=$(aws ecr list-images --region $AWS_PRIMARY_REGION --repository-name $AWS_ECR_REPO --query 'imageIds[*]' --output json )

aws ecr batch-delete-image --region $AWS_PRIMARY_REGION --repository-name $AWS_ECR_REPO --image-ids "$AWS_ECR_IMAGES_TO_DELETE" || true

cdk destroy "*"

CDK will ask: Are you sure you want to delete: CicdStack, ContainerStack-<primary region>, ContainerStack-<secondary region>, ClusterStack-<primary region>, ClusterStack-<secondary region> (y/n)? Enter y to delete.

Conclusion

This post demonstrated how to create a continuous deployment pipeline that deploys applications to multiple Amazon EKS clusters running in different regions. The accompanying CDK code creates the EKS clusters and the CI/CD stack that continuously deploys the application to the clusters.

CDK helps you define your cloud infrastructure, Kubernetes resources, and application in the same codebase. If your codebase is in a language that CDK supports, your applications, configuration, and the underlying infrastructure can be coded in the same language. If you must generate Kubernetes manifests using code, you can also use cdk8s to define Kubernetes resources programmatically.

As a next step, you can also explore EKS Blueprints, a collection of IaC modules that helps you configure and deploy consistent, batteries-included Amazon EKS clusters across accounts and Regions. You can use EKS Blueprints to bootstrap an Amazon EKS cluster with Amazon EKS add-ons as well as a wide range of popular open-source add-ons, including Prometheus, Karpenter, Nginx, Traefik, AWS Load Balancer Controller, Fluent Bit, KEDA, Argo CD, and more.

This post is also available as a workshop that uses CDK v1. You can find the changes between AWS CDK v1 and v2 here.