AWS Database Blog

Profiling slow-running queries in Amazon DocumentDB (with MongoDB compatibility)

Amazon DocumentDB (with MongoDB compatibility) is a fast, scalable, highly available, and fully managed document database service that supports MongoDB workloads. You can use the same MongoDB 3.6 or 4.0 or 5.0 application code, drivers, and tools to run, manage, and scale workloads on Amazon DocumentDB without having to worry about managing the underlying infrastructure. As a document database, Amazon DocumentDB makes it easy to store, query, and index JSON data.

AWS built Amazon DocumentDB to uniquely solve your challenges around availability, performance, reliability, durability, scalability, backup, and more. In doing so, we built several tools, like the profiler, to help you run analyze your workload on Amazon DocumentDB. The profiler gives you the ability to log the time and details of slow-running operations on your cluster. In this post, we show you how to use the profiler in Amazon DocumentDB to analyze slow-running queries to identify bottlenecks and improve individual query performance and overall cluster performance.

Prerequisites

To use the Amazon DocumentDB profiler, create an AWS Cloud9 environment and an Amazon DocumentDB cluster. You use the AWS Cloud9 terminal to run queries against the cluster.

For instructions on setting up your environment and creating your cluster, see Getting Started with Amazon DocumentDB (with MongoDB compatibility); Part 2 – using AWS Cloud9.

Solution overview

The solution described in this post includes the following tasks:

- Turn on the profiler for your cluster to profile slow-running queries

- Load a dataset and run sample queries

- Use AWS CloudWatch Logs to analyze logs and identify bottlenecks for slow-running queries

- Improve performance of slow-running queries by adding a missing index

Turning on the profiler

To enable the profiler, you must first create a custom cluster parameter group.

A cluster parameter group is a group of settings that determine how your cluster is configured. When you provision an Amazon DocumentDB cluster, it’s provisioned with the default cluster parameter group. The default settings are immutable; to make changes to your cluster, you need a custom parameter group.

- On the Amazon DocumentDB console, choose your parameter group.

If you don’t have one, you can create a cluster parameter group. - Select the profiler parameter and change its value to enabled.

You can also modifyprofiler_sampling_rateandprofiler_threshold_msparameters based on your preferences.profiler_sampling_rateis a fraction of slow operations that should be profiled or logged.profiler_threshold_msis a threshold in milliseconds; all commands that take longer thanprofiler-threshold-msare logged. For more information about parameters, see Enabling the Amazon DocumentDB Profiler.

For this post, I setprofiler_sampling_rateto 1 andprofiler_threshold_msto 50.

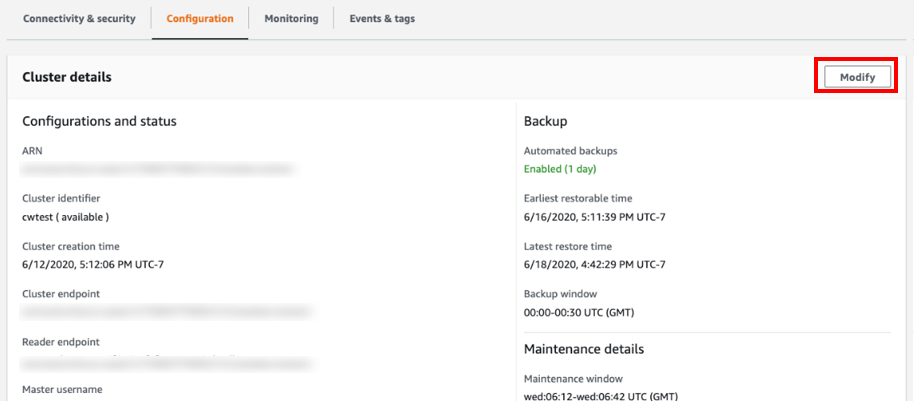

- Select the cluster you want to turn on the profiler for, and choose the Configuration

- Choose Modify.

- For Cluster parameter group, choose your custom parameter group.

If you’re already using a custom parameter group, you can skip this step. - For Log exports, select Profiler logs.

This enables your cluster to export the logs to CloudWatch. If you have already enabled log exports for profiler logs, you can skip this step.

- For When to apply modifications, select Apply immediately.

Alternatively, you can apply the change during your next scheduled maintenance window. - Choose Modify cluster.

You now need to reboot your instances to apply the new parameter group.

You now need to reboot your instances to apply the new parameter group. - On the Instances page, select your instance.

- From the Actions drop-down menu, choose Reboot.

You have successfully turned on the profiler and turned on log exports to CloudWatch.

Loading a dataset

For this post, I load a sample dataset on to my Amazon DocumentDB cluster and run some queries. The dataset consists of JSON documents that capture data related to the spread and characteristics, case count, and location info of the novel coronavirus (SARS-CoV-2).

- Download the dataset with the following code:

- Load the dataset using

mongoimport:Use

mongoimportversion 3.6.18 with Amazon DocumentDB. If you don’t havemongoimport, you can install it from the MongoDB download center.

To installmongoimport3.6 on Amazon Linux, run:To install

mongoimport3.6 on Ubuntu:For more information on using

mongoimport, see Dumping, Restoring, Importing, and Exporting Data.

To export a log to CloudWatch, I need to run a query that is longer than 50 milliseconds. To do that, I run two queries on my dataset, using the mongo shell on my AWS Cloud9 environment. For installation instructions, see Installing the mongo shell. - Run the first query:

- Run the second query:

Analyzing slow-running query logs in CloudWatch

To view the logs of your slow-running queries, complete the following steps:

- On the CloudWatch console, under Logs, choose Log groups.

- Select the log group associated with your cluster.

The log group should have the following format:/aws/docdb/<yourclustername>/profiler.

It can take up to 1 hour for the log group to show up after enabling the profiler and turning on log exports.

It can take up to 1 hour for the log group to show up after enabling the profiler and turning on log exports. - Select the log stream.

Typically, every Amazon DocumentDB instance has its own log stream. For this use case, because you only have one instance, you only see one log stream.

- Set the time period filter to your desired value.

For this use case, I filter it to 1 hour; in the following screenshot, you can see the two queries I ran earlier. It typically takes 1–2 minutes for your queries to show up in the log events.

You can now analyze the logs. The following two screenshots are EXPLAIN plans for the queries we ran. The plan outlines the stages and times for a query, and helps you discover potential bottlenecks. Looking at the logs, you can see that the first query took 485 milliseconds, and the second query took 559 milliseconds. Because both took longer than 50 milliseconds, they showed up in the profiler logs.

You can now analyze the logs. The following two screenshots are EXPLAIN plans for the queries we ran. The plan outlines the stages and times for a query, and helps you discover potential bottlenecks. Looking at the logs, you can see that the first query took 485 milliseconds, and the second query took 559 milliseconds. Because both took longer than 50 milliseconds, they showed up in the profiler logs.

Improving performance by adding an index

When you analyze the query logs, you can identify bottlenecks. The profiler logged both queries we ran earlier in CloudWatch because the runtime was over 50 milliseconds. From the logs, you can see that both the queries perform a COLLSCAN, which is a full collection sequential scan. COLLSCANs are often slow because you read your entire dataset. To reduce COLLSCANs, you can create indexes on fields that have a high degree of uniqueness and are queried frequently for a certain value or range of values for the field. For this use case, we can reduce COLLSCANS by creating an index on Cases. See the following code:

For more information about indexes, see Working with Indexes.

You can now rerun the queries to observe the new times. Ideally, the queries should run faster because the indexes prevent collection scans. To view the query plan and the amount of time it took to run at runtime, you can add explain("executionStats") to your query.

To rerun the first query, enter the following code:

The following screenshot shows the query plan.

To run the second query, enter the following code:

The following screenshot shows the query plan.

Instead of COLLSCANS, the query now uses IXSCANS and IXONLYSCANS. Instead of sequentially scanning the entire collection, the query optimizer uses indexes, which reduces the number of documents that need to be read to answer the query. This improved the runtime for the queries: the first query went from 485 milliseconds to 0.35 milliseconds, and the second query went from 559 milliseconds to 70 milliseconds.

Conclusion

In this post, you learned how to turn on the profiler in Amazon DocumentDB to analyze slow-running queries to identify performance issues, bottlenecks, and improve individual query performance and overall cluster performance. For more information, see Profiling Amazon DocumentDB Operations and Performance and Resource Utilization.

If you have any questions or comments about this post, please use the comments section. If you have any features requests for Amazon DocumentDB, email us at documentdb-feature-request@amazon.com.

About the Author

Meet Bhagdev is a Sr. Product Manager with Amazon Web Services