AWS Database Blog

Validating database objects after migration using AWS SCT and AWS DMS

Database migration can be a complicated task. It presents all the challenges of changing your software platform, understanding source data complexity, data loss checks, thoroughly testing existing functionality, comparing application performance, and validating your data.

AWS provides several tools and services that provide a pre-migration checklist and migration assessments. You can use the AWS Schema Conversion Tool (AWS SCT) to convert your existing database schema from one database engine to another. AWS Database Migration Service (AWS DMS) makes it easy to migrate relational databases, data warehouses, NoSQL databases, and other types of data stores. You can use AWS DMS to migrate your data into the AWS Cloud, between on-premises instances (through an AWS Cloud setup), or between combinations of cloud and on-premises setups.

In addition, AWS offers a wide range of documentation to guide you through your database migration. For more information, see Migrating an Oracle Database to PostgreSQL.

AWS SCT helps convert your schema objects and builds a report showing the percentage of Oracle code that it converted to PostgreSQL and how much code requires manual efforts for conversion. During database objects migration, you always risk a possibility of missing objects or creating new objects at the target database, or ignoring an entire source object intentionally. Validation is the process where you demonstrate to your customers that everything intended for migration is migrated successfully.

This post provides an outline on how to validate objects between a source Oracle database and a PostgreSQL target after completing database object migration and code conversion.

Validating objects

The question is what to validate? To understand this, you must know the different types of Oracle database objects and their equivalent PostgreSQL database object type.

The following table shows Oracle (source) database objects and the corresponding PostgreSQL (target) object type. To know that your DB conversion succeeded, you must thoroughly validate these objects.

| Oracle Objects | Migrated to PostgreSQL as |

| TABLE | TABLE |

| VIEW | VIEW |

| MATERIALIZED VIEW | MATERIALIZED VIEW |

| SEQUENCE | SEQUENCE |

| SYNONYM | Not available in PostgreSQL. Ignored in PostgreSQL, but can be partially achieved through Views. SET search_path can also be a work-around for synonyms. |

| TYPE | DOMAIN/ TYPE |

| FUNCTION | FUNCTION |

| PROCEDURE | Not available in PostgreSQL. Achieved through PostgreSQL Functions. |

| PACKAGE | Not available in PostgreSQL. Achieved through PostgreSQL Schemas to organize functions into groups. |

| TRIGGER | TRIGGER |

How to perform schema validation

Database migration involves lot many tasks, tools and processes. The size and type of Oracle database migration you want to do greatly determines the tools you should use. For example, a heterogeneous migration, where you are migrating from an Oracle database to a different database engine on AWS, is best accomplished using AWS DMS. A homogeneous migration, where you are migrating from an Oracle database to an Oracle database on AWS, is best accomplished using native Oracle tools.

Following task list shows when you should conduct schema validation during your migration process.

- Task 1: Configure Your Oracle Source Database

- Task 2: Configure Your PostgreSQL Target Database

- Task 3: Use the AWS SCT to Convert the Oracle Schema objects to PostgreSQL

- Task 4: Manually convert Oracle schema objects those AWS SCT couldn’t convert

- Task 5: Use AWS DMS to migrate data from Oracle to PostgreSQL

- Task 6: Perform the schema object validation

To validate the schema conversion, compare the objects found in the Oracle database and PostgreSQL database using any query editor.

The standard validation method compares object counts in the source database against the counts in the target database. You can perform count verification for any schema object, but count validation alone does not always satisfy end users. Users often look for object definition-level verification. This is why you must write your custom queries to retrieve the data definition language (DDL) from the database and compare them.

Schema objects validation queries

Oracle uses the LONG data type to store large character sets, with a maximum size of up to 2 GB. Oracle Long data type appears in many Oracle metadata tables or views. Few Oracle metadata tables used in validation queries that are explained later in this topic contains LONG data type. Unlike other data types, LONG data type in Oracle database doesn’t behave the same way and are subject to some restrictions.

The limitations to the LONG type include the following:

LONGcolumns can’t appear inWHEREclauses.- You can’t specify

LONGdata in regular expressions. - A stored function can’t return a

LONGvalue. - You can declare a variable or argument of a PL/SQL program unit using the

LONGdata type. However, you can’t then call the program unit from SQL.

The Oracle validation queries described in this topic use the DBMS_XMLGEN package to handle Oracle LONG data type differently and helps bypass few restrictions. DBMS_XMLGEN package takes an arbitrary SQL query as input, converts the entire record set into XML format and returns the result as CLOB data type, which then becomes easier to process and manipulate the data.

Step 1: Validate Oracle packages

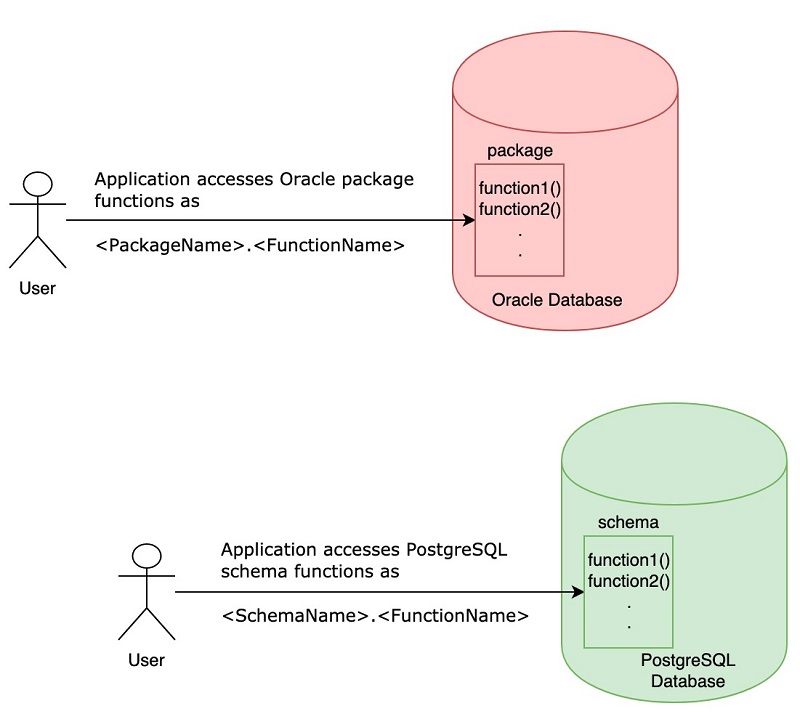

PostgreSQL schemas let you store all related functions in the same way as package groups functions in Oracle. Migrating Oracle packages as PostgreSQL schemas helps avoid unnecessary application changes, because applications accessing the Oracle database functions can access PostgreSQL individual functions in the same way. For example:

- Source Oracle database before migration: The application accesses Oracle package functions as PackageName.FunctionName.

- Target PostgreSQL database after migration: The application can access PostgreSQL functions as SchemaName.FunctionName.

Assume that you have one user in Oracle that contains 10 packages and other objects. You migrate all 10 packages as PostgreSQL schemas, and the Oracle user itself is another schema in PostgreSQL that stores all the other objects. So, in PostgreSQL after migration you will have 11 (10 package = 10 schemas; 1 user = 1 schema) schemas.

Use the following query to validate whether all the Oracle packages migrated as schemas in PostgreSQL:

Oracle

PostgreSQL

- If you exclude any object from migration in the source database or introduce one in the target database, then exclude those objects from the count by adding a

WHEREclause filter in the preceding and subsequent queries.

Step 2: Validate tables

AWS SCT converts Oracle tables with proper structure and provides the option to either deploy to a target PostgreSQL database or save the converted code as .sql files. You must verify whether all tables are migrated to target PostgreSQL database by checking the count on both source and target database. The following script performs counts verification for all tables:

Oracle

PostgreSQL

Step 3: Validate views

AWS SCT helps in migrating Oracle views code to views in PostgreSQL. Validate the count of views after migration in Oracle source and PostgreSQL target database using the following script:

Oracle

PostgreSQL

Step 4: Validate sequences

The sequences count must match at both source and target to be confident that the migration didn’t miss any sequences. Validate the sequences count after migration into PostgreSQL using the following script:

Oracle

PostgreSQL

Step 5: Validate triggers

You can perform a count validation of any database object. Because database triggers are distinctly important, it is essential to verify all attributes of the triggers. Validate the triggers after migration into PostgreSQL using the following script:

Oracle

PostgreSQL

Step 6: Validate primary keys

Constraints help to maintain data integrity in a relational database. During database migration, it is crucial to validate constraints carefully.

You can validate primary keys by checking if you have created a similar “primary key” constraint on the target table, which means column(s) and column order must be same on both source Oracle and target PostgreSQL database. The validation explained he re does not check the primary key columns and column order. Primary keys by default create a UNIQUE index in both Oracle and PostgreSQL. So, during index validation, you can verify primary key columns and the column order. Validate the primary keys after migration into PostgreSQL using the following script:

Oracle

PostgreSQL

Step 7: Validate indexes

Indexes speed up searching the database, and a bad index migration or missing an index creation can severely impact target database queries. Comparing indexes is not simple because the order of columns in each index matters. If the column order changes, the index behaves differently and considered as a new index completely.

The primary key in a relational database creates an index internally. While validating indexes, you must include the indexes created implicitly for primary keys and indexes created explicitly through CREATE INDEX syntax. The following query validates the type of index and column order for all tables for all indexes:

Oracle

PostgreSQL

Step 8: Validate check constraints

When you perform CHECK constraint validation, make sure that you include constraints created with condition checking as well as columns declared NOT NULL during table creation. You can validate check constraints by verifying the specific condition on the respective table column. Validate the check constraints after migration into PostgreSQL using the following script:

Oracle

PostgreSQL

Step 9: Validate foreign keys

Foreign keys help maintain links between relational tables by referring to the primary or unique key of another table. PostgreSQL allows users to create foreign keys with the NOT VALID option. If the constraint is marked NOT VALID, the potentially lengthy initial check to verify that all rows in the table satisfy the constraint is skipped. The constraint will still be enforced against subsequent inserts or updates (that is, they will fail unless there is a matching row in the referenced table).

Migrate your foreign keys with the NOT VALID option in PostgreSQL, if you are sure that source existing data conforms to foreign keys validation rules and you don’t want the same checks again in the target PostgreSQL database.

The following foreign keys validation query takes both parent and child tables into consideration and assumes you have created foreign keys with NOT VALID option in PostgreSQL during migration:

Oracle

PostgreSQL

Useful PostgreSQL catalog tables

The following PostgreSQL catalog tables and views help you build custom queries.

| Source Oracle Metadata | Target PostgreSQL Metadata |

| all_tables | pg_tables

information_schema.tables |

| all_views | pg_views

information_schema.views |

| all_sequences | information_schema.sequences |

| all_triggers | information_schema.triggers |

| all_indexes | pg_indexes |

| all_constraints | pg_constraint join to pg_namespace |

| all_procedures | information_schema.routines |

Conclusion

Validating database objects is essentially a view of the database migration accuracy and confirms that all objects migrated successfully with proper attributes. Validating various constraints helps you double-check target database integrity and keep bad data out of your database. Indexes speed up data retrieval operations, and validation of indexes prevents existing queries from slowing down at the target database.

You can use the queries in this post as-is in any programming language to retrieve record sets from your Oracle and PostgreSQL databases. You can always write your custom validation queries based on your requirement, but this post demonstrates one possible way to conduct post-migration object validation.

The following related resources help you understand more about database migration.

- How to Migrate Your Oracle Database to PostgreSQL

- Things to know before you start database migration

If you have any questions or suggestions about this post, feel free to leave a comment.

About the Author

Sashikanta Pattanayak is an Associate Consultant with Amazon Web Services. He works with customers to build scalable, highly available and secure solutions in the AWS cloud. His focus area is homogenous and heterogeneous database migrations.