AWS Developer Tools Blog

Tuning Apache Kafka and Confluent Platform for Graviton2 using Amazon Corretto

By Guest Blogger Liz Fong-Jones, Principal Developer Advocate, Honeycomb.io

Background

Honeycomb is a leading observability platform used by high-performance engineering teams to quickly visualize, analyze, and improve cloud application quality and performance. We utilize the OpenTelemetry standard to ingest data from our clients, including those hosted on AWS and using the AWS Distribution of OpenTelemetry. Once data is optionally pre-sampled within client Amazon Virtual Private Clouds (Amazon VPC), it flows to Honeycomb for analysis, resulting in a data volume of millions of trace spans per second passing through our systems.

To ensure durable and reliable processing across software upgrades, Spot Instance retirements, and other continuous chaos, we use Application Load Balancers (ALBs) to route traffic to stateless ingest workers, which publish the data into a pub-sub or message queuing system. We then read from this system to consume any telemetry added since the last checkpoint. This decouples ingest from indexing and allows indexing to be paused and restarted. For the entire history of Honeycomb, dating back to 2016, we have used variants of Apache Kafka to perform this crucial pub-sub role.

Factors in Kafka performance

After having chosen im4gn.4xlarge instances for our Kafka brokers, we were curious how much further we could push the already excellent performance that we were seeing. Although it’s true that Kafka and other JVM workloads “just work” on ARM without modification in a majority of cases, a little bit of fine-tuning and polish can really pay off.

To understand the critical factors underlying the performance of our Kafka brokers, let’s recap what Kafka brokers do in general, as well as what additional features our specific Kafka distribution contains.

Apache Kafka is written in a mix of Scala and Java, with some JNI-wrapped C libraries for performance-sensitive code, such as ZSTD compression. A Kafka broker serializes and batches incoming data from producers or replicated from its peers, serves data to its peers to allow for replication, and responds to requests to consume recently produced data. Furthermore, it manages the data lifecycle and ages out expired segments, tracks consumer group offsets, and manages metadata in collaboration with a fleet of ZooKeeper nodes.

As explained in the im4gn post, we use Confluent Enterprise Platform (a distribution of Apache Kafka customized by Confluent) to tier older data to Amazon Simple Storage Service (Amazon S3) to free up space on the local NVMe SSDs. This additional workload introduces the overhead of high-throughput HTTPS streams, rather than just the Kafka internal protocols and disk serialization.

Profiling the Kafka broker

Immediately after switching to im4gn instances from i3en, we saw 40% peak CPU utilization, 15% off-peak CPU utilization, and utilized 30% of disk at peak (15% off-peak). We were hoping to keep these two utilization numbers roughly in line with each other to maximize the usage of our instances and keep cost economics under control. By using Async Profiler for short runs, and later Pyroscope to continuously verify our results, we could see where in the broker’s code CPU time was being spent and identify wasteful usage.

The first thing that jumped out at us was the 20% of time being spent doing zstd in JNI.

We hypothesized that we could obtain modest improvements from updating the ZSTD JNI JAR from the 1.5.0-x bundled with Confluent’s distro to a more recent 1.5.2-x version.

However, our most important finding from profiling was the large share of time (12% of consumed CPU) being spent in com/sun/crypto/provider/GaloisCounterMode.encryptFinal, as part of the kafka/tier/store/S3TierObjectStore.putFile Confluent Tiered Storage process (which accounted for 28.4% of total broker consumed CPU). This was surprising, as we hadn’t seen this much overhead on the i3en instances, and others who ran vanilla Kafka on ARM had seen CPU profiles comparable to x86.

At this point, we began our collaboration with the Corretto team at AWS, which has been working to improve the performance of JVM applications on Graviton. Yishai reached out and asked if we were interested in trying the Corretto team’s branch that supplies an ARM AES-CTR intrinsic, because AES-CTR is a hot codepath used by TLS.

After upgrading to the branch build of the JDK and enabling -XX:+UnlockDiagnosticVMOptions -XX:+UseAESCTRIntrinsics in our extra JVM flags, we saw a significant performance improvement. Profiling showed com/sun/crypto/provider/GaloisCounterMode.encryptFinal taking only 0.4% of time (with an additional 1% each in ghash_processBlocks_wide and counterMode_AESCrypt attributed to unknown_Java). This brings the total cost of the kafka/tier/store/S3TierObjectStore.putFile workload down from 28.4% to 16.4% of total broker consumed CPU, a reduction of 12 percentage points for tiering.
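To get a rough feel for this codepath outside of a broker, a small harness like the following can help. It is a minimal sketch, not the profiling setup described above: the class name AesGcmBench, the buffer size, and the round counts are hypothetical choices, and a simple timing loop like this is far less reliable than a profiler or a proper benchmark harness. It only uses the standard javax.crypto API to encrypt buffers with AES/GCM, and prints the JVM input arguments so that it is clear which flags a given run used.

```java
import java.lang.management.ManagementFactory;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

// Hypothetical helper class, for illustration only.
final class AesGcmBench {
    private static final SecureRandom RANDOM = new SecureRandom();

    /** Generates a fresh 128-bit AES key. */
    static SecretKey newKey() {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(128);
            return generator.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Encrypts one buffer with AES/GCM using a fresh random 96-bit IV. */
    static byte[] encrypt(SecretKey key, byte[] plaintext) {
        try {
            byte[] iv = new byte[12];
            RANDOM.nextBytes(iv);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(128, iv));
            // Output is the ciphertext followed by the 16-byte authentication tag.
            return cipher.doFinal(plaintext);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Returns approximate throughput in MiB/s over the given number of rounds. */
    static double throughputMiBps(int bufferBytes, int rounds) {
        SecretKey key = newKey();
        byte[] buffer = new byte[bufferBytes];
        long start = System.nanoTime();
        for (int i = 0; i < rounds; i++) {
            encrypt(key, buffer);
        }
        double seconds = (System.nanoTime() - start) / 1e9;
        return (bufferBytes / 1048576.0) * rounds / seconds;
    }

    public static void main(String[] args) {
        // Shows which -XX flags this JVM was actually started with.
        System.out.println("JVM flags: "
                + ManagementFactory.getRuntimeMXBean().getInputArguments());
        throughputMiBps(1 << 20, 200); // warm-up so the JIT compiles the hot path
        System.out.printf("AES/GCM: %.1f MiB/s%n", throughputMiBps(1 << 20, 500));
    }
}
```

Running this once with and once without the two flags, on a JVM build that recognizes them, gives a coarse before-and-after comparison. The numbers will not map directly onto the broker percentages quoted here.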

We don’t currently use TLS between Kafka brokers or clients; otherwise, the savings would likely have been even greater. Speculating, this change likely lowers the performance cost of enabling TLS between brokers to roughly 1% overhead, something we might otherwise have been hesitant to do because of the large penalty.

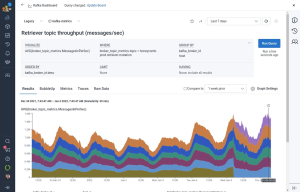

Show me the pictures and graphs!

Before any experimentation:

After applying UseAESCTRIntrinsics:

This result was clearly better. However, running a bleeding-edge JVM build was not a great long-term approach for our production workload, and it wouldn’t necessarily make sense to ask every Confluent customer, or every JVM or Kafka user, to switch to the latest Corretto JVM in order to reproduce our results. Furthermore, there was another problem not solved by the UseAESCTRIntrinsics patch: MD5 checksum time.

Enter AWS Corretto Crypto Provider

After addressing TLS/AES overhead, the remaining work was to fix the 9.5% of CPU time being spent calculating MD5 (which is also part of the process of doing an Amazon S3 upload). The best solution here would be to not perform the MD5 digest at all (since Amazon S3 removed the requirement for the Content-MD5 header). Today, TLS already includes HMAC stream checksumming, and if bits are changing on the disk, then we have bigger problems regardless of whether tiering is happening. The Confluent team is working on allowing for MD5 opt-out. Meanwhile, we wanted something that could address both TLS/AES overhead and MD5 overhead, all without having to patch the JVM.
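For readers unfamiliar with where this MD5 cost comes from, the sketch below computes a Content-MD5 style value (the Base64 encoding of the 128-bit MD5 digest of a request body) with the standard java.security API. It is only an illustration under stated assumptions: the class and method names are hypothetical, and this is not the Confluent Tiered Storage code or the S3 client's implementation. The point is that every byte uploaded has to pass through the digest.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.Base64;

// Hypothetical helper class, for illustration only.
final class ContentMd5 {
    /** Returns the Base64-encoded MD5 digest of the body, as used by Content-MD5. */
    static String contentMd5(byte[] body) {
        try {
            MessageDigest md5 = MessageDigest.getInstance("MD5");
            // digest() hashes the whole body; cost grows linearly with its size.
            return Base64.getEncoder().encodeToString(md5.digest(body));
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 is not available on this JVM", e);
        }
    }

    public static void main(String[] args) {
        byte[] body = "example segment bytes".getBytes(StandardCharsets.UTF_8);
        System.out.println("Content-MD5: " + contentMd5(body));
    }
}
```

Because MessageDigest.getInstance resolves the implementation through the Java security provider list, the same call can be served by a faster provider without any change to the calling code.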

The AWS Corretto Crypto Provider is a Java security API provider that implements digest and encryption algorithms with compiled C/JNI wrapped code. Although official binaries aren’t yet supplied for ARM, it was easy to grab a spare Graviton2 instance and compile a build, then set it as the -Djava.security.properties provider in the Kafka startup scripts. With ACCP enabled, only 1.8% of time is spent in AESGCM (better than the 0.4%+1%+1% = 2.4% seen with UseAESCTRIntrinsics), and only 4.7% of time is spent in MD5 (better than the 9.5% we previously saw).
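One way to confirm that a provider override has taken effect is to ask the JVM which provider serves each algorithm. The sketch below uses only the standard java.security and javax.crypto APIs. The class name ProviderCheck is hypothetical, and the provider names in the comments are assumptions: a stock OpenJDK typically reports SUN for MD5 and SunJCE for AES/GCM, while we would expect a name such as AmazonCorrettoCryptoProvider once ACCP is first in the provider list.

```java
import java.security.GeneralSecurityException;
import java.security.MessageDigest;
import java.security.Provider;
import java.security.Security;
import javax.crypto.Cipher;

// Hypothetical helper class, for illustration only.
final class ProviderCheck {
    /** Name of the provider that serves the given message digest algorithm. */
    static String digestProvider(String algorithm) {
        try {
            return MessageDigest.getInstance(algorithm).getProvider().getName();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Name of the provider that serves the given cipher transformation. */
    static String cipherProvider(String transformation) {
        try {
            return Cipher.getInstance(transformation).getProvider().getName();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        // Providers are consulted in this order; position 1 wins when it
        // implements the requested algorithm.
        int position = 1;
        for (Provider provider : Security.getProviders()) {
            System.out.println(position++ + ": " + provider.getName());
        }
        System.out.println("MD5 served by: " + digestProvider("MD5"));
        System.out.println("AES/GCM served by: " + cipherProvider("AES/GCM/NoPadding"));
    }
}
```

Running this with the same -Djava.security.properties setting and classpath as the broker should show whether the MD5 and AES/GCM calls are actually being routed to the native provider.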

This means that the total overhead of kafka/tier/store/S3TierObjectStore.putFile is now 12-14% rather than 28% (about half what it was before).

We felt ready to fully deploy this result across all of our production workloads and leave it live, knowing that we could still benefit from future rolling Corretto official releases with JVM flags set to production, non-experimental values.

The future of Kafka and ARM at Honeycomb

Although, after tuning, we’re satisfied with the behavior of our fleet of six im4gn.4xlarge instances serving our workload of 2M messages per second, we decided to preemptively scale up our fleet to nine brokers. The rationale had nothing to do with CPU performance. Instead, after repeated weekly broker-termination chaos engineering experiments, we were concerned about the network throughput required during peak hours to survive the loss of a single broker and re-replicate all of its data in a timely fashion.

By spreading the 3x duplicated data across nine brokers, only one third of all of the data, rather than half of all of the data, would need to be re-replicated in the event of broker loss, and the replacement broker would have eight healthy peers to read from, rather than five. Increasing capacity by 50% halved the time required to fully restore in-service replica quorum at peak weekday traffic from north of six hours to less than three hours.
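The arithmetic behind these fractions can be sketched as follows. This assumes partitions and their replicas are spread evenly across brokers, which is an idealization. The class and method names are hypothetical.

```java
// Hypothetical helper class, for illustration only.
final class ReReplication {
    /**
     * Fraction of the total dataset held by (and lost with) one broker,
     * assuming replicas are evenly distributed across the fleet.
     */
    static double fractionPerBroker(int replicationFactor, int brokers) {
        return (double) replicationFactor / brokers;
    }

    /** Number of surviving brokers a replacement can copy data from. */
    static int healthyPeers(int brokers) {
        return brokers - 1;
    }

    public static void main(String[] args) {
        for (int brokers : new int[] {6, 9}) {
            System.out.printf("%d brokers: each holds %.3f of the data, %d healthy peers%n",
                    brokers, fractionPerBroker(3, brokers), healthyPeers(brokers));
        }
    }
}
```

With a replication factor of 3, six brokers each hold 3/6 of the data and nine brokers each hold 3/9, which matches the one-half and one-third figures above.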

This change is just one of a series of many small changes that we’ve made to optimize and tune our AWS workload at Honeycomb. In the coming weeks, we hope to share how, without any impact on customer-visible performance, we decommissioned 100% of our Intel EC2 instances and 85% of our Intel AWS Lambda functions in our fleet for a net cost and energy savings.