AWS HPC Blog

Tag: HPC

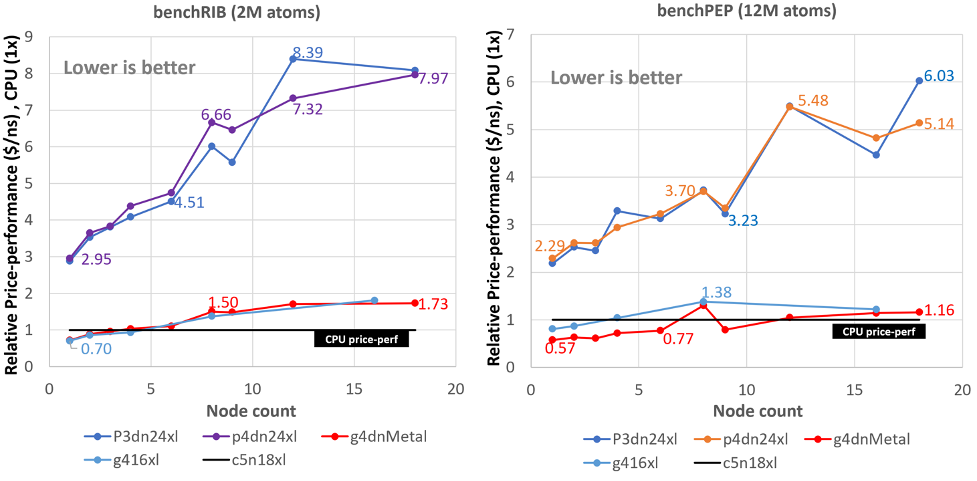

Running GROMACS on GPU instances: multi-node price-performance

This three-part series of posts covers the price-performance characteristics of running GROMACS on Amazon Elastic Compute Cloud (Amazon EC2) GPU instances. Part 1 covered some background on GROMACS and how it utilizes GPUs for acceleration. Part 2 covered the price-performance of GROMACS on a particular GPU instance family running on a single instance. […]

Running GROMACS on GPU instances: single-node price-performance

This three-part series of posts covers the price-performance characteristics of running GROMACS on Amazon Elastic Compute Cloud (Amazon EC2) GPU instances. Part 1 covered some background on GROMACS and how it utilizes GPUs for acceleration. This post (Part 2) covers the price-performance of GROMACS on a particular GPU instance family running on a […]

Running GROMACS on GPU instances

Comparing the performance of real applications across different Amazon Elastic Compute Cloud (Amazon EC2) instance types is the best way we’ve found to identify optimal configurations for HPC applications here at AWS. Previously, we wrote about price-performance optimizations for GROMACS that showed how the GROMACS molecular dynamics simulation runs on single instances, and how it […]

AWS Batch Dos and Don’ts: Best Practices in a Nutshell

AWS Batch is a service that enables scientists and engineers to run computational workloads at virtually any scale without requiring them to manage a complex architecture. In this blog post, we share a set of best practices and practical guidance derived from our experience working with customers in running and optimizing their computational workloads. Readers will learn how to optimize their costs with Amazon EC2 Spot on AWS Batch, how to troubleshoot their architecture should an issue arise, and how to tune their architecture and container layout to run at scale.

Running the Harmonie numerical weather prediction model on AWS

The Danish Meteorological Institute (DMI) is responsible for running atmospheric, climate and ocean models covering the Kingdom of Denmark. We worked together with the DMI to port a full numerical weather prediction (NWP) cycling dataflow with the Harmonie NWP model to AWS and run it there. You can find a report of the porting and operational experience in the ACCORD community newsletter. In this blog post, we expand on that report to present the initial timing results from running the forecast component of the Harmonie model on AWS. We also present these as-is timing results together with as-is timings attained on supercomputing systems based on Cray XC40 and Intel Xeon-based Cray XC50.

Cost-optimization on Spot Instances using checkpoint for Ansys LS-DYNA

A major portion of the costs incurred for running Finite Element Analysis (FEA) workloads on AWS comes from the usage of Amazon EC2 instances. Amazon EC2 Spot Instances offer a cost-effective architectural choice, allowing you to take advantage of unused EC2 capacity at up to a 90% discount compared to On-Demand Instance prices. In this post, we describe how you can run fault-tolerant FEA workloads on Spot Instances using Ansys LS-DYNA’s checkpointing and auto-restart utility.

Virtual Screening of Novel Active Drug Compounds on AWS with Orion®

Computer-aided drug discovery (CADD) has been a key player in lowering the cost and speeding up the timeline for drug development. CADD uses high performance computing (HPC) resources to virtually screen databases with billions of molecules. It can speed up the search for potential drug molecules, and filter out molecules and compounds that are unsuitable. OpenEye Scientific developed Orion®, a cloud-based molecular design platform for CADD. Orion provides computational chemists with virtually unlimited HPC resources. These include data visualization, collaboration, and workflow management tools that help them perform calculations more efficiently. In this post, we describe the Orion architecture on AWS, and its capabilities to address the challenges in drug development.

Call for participation: PRACE Winter School

The Inter University Computing Centre (IUCC) in Israel and AWS have joined forces to train Researchers and Research Software Engineers (RSEs) in the use of AWS for High Performance Computing (HPC) at the PRACE Winter School, 7-9 December 2021, and we’re calling for interested groups to sign up and join us.

New: Introducing AWS ParallelCluster 3

Running HPC workloads, like computational fluid dynamics (CFD), molecular dynamics, or weather forecasting, typically involves a lot of moving parts. You need hundreds or thousands of compute cores, a job scheduler for keeping them fed, a shared file system that’s tuned for throughput or IOPS (or both), loads of libraries, a fast network, and […]

Supporting climate model simulations to accelerate climate science

Through the Amazon Sustainability Data Initiative (ASDI), AWS is donating cloud resources, technical support, and access to scalable infrastructure and fast networking, providing high performance computing solutions to support simulations of near-term climate using the National Center for Atmospheric Research (NCAR) Community Earth System Model Version 2 (CESM2) and its Whole Atmosphere Community Climate Model (WACCM). In collaboration with ASDI, AWS, and SilverLining, a nonprofit dedicated to ensuring a safe climate, NCAR will run an ensemble of 30 climate-model simulations on AWS. The climate runs will simulate the Earth system over the years 2022-2070 under a median scenario for warming, and the results will be made available through the AWS Open Data Program. The simulation work will demonstrate the ability to use cloud infrastructure to advance climate models in support of robust scientific studies by researchers around the world, and it aims to accelerate and democratize climate science.