AWS for Industries

Remote Rendering for Real-time AR Applications at AWS Edge

Augmented Reality (AR) applications such as 3D machine design collaboration, equipment repair assisted by 3D digital twin models, and surgical training with 3D body images all require 3D object rendering in real time. Remote rendering is a technology that manipulates 3D content on a server in response to AR headset motion and streams the rendered object back to the headset in real time. Its benefits include higher performance, higher-quality AR experiences, and lower cost than rendering on mobile devices. These benefits arise because the server shares graphics processing resources across users and supports higher-resolution streaming than individual headsets, which rely on their own GPUs or otherwise limited resources. Remote rendering also provides better data protection by keeping the data on the server instead of on the individual headsets. However, to deliver an interactive experience, remote rendering must meet the common requirements of real-time AR applications: low latency, low jitter, and high throughput.

In this blog post, we describe how AWS for the Edge, specifically AWS Wavelength and AWS Snowball Edge, can support remote rendering in public and private 5G environments, respectively. We use Holo-Light’s XR engineering application AR 3S, with its integrated remote rendering technology, as an example to illustrate the remote rendering requirements and workflows in these edge computing environments.

AR 3S and Remote Rendering Requirements

Holo-Light’s AR 3S Augmented Reality Engineering Space is an XR application designed to improve engineering and product development by providing a digital workspace to work and collaborate on 3D CAD data in augmented and virtual reality. Engineers use AR 3S to visualize complex, full-scale 3D models, merge them with physical components, and evaluate as well as interact with them in the physical environment.

Figure 1. AR 3S user view showing the disassembly of a complex 3D model

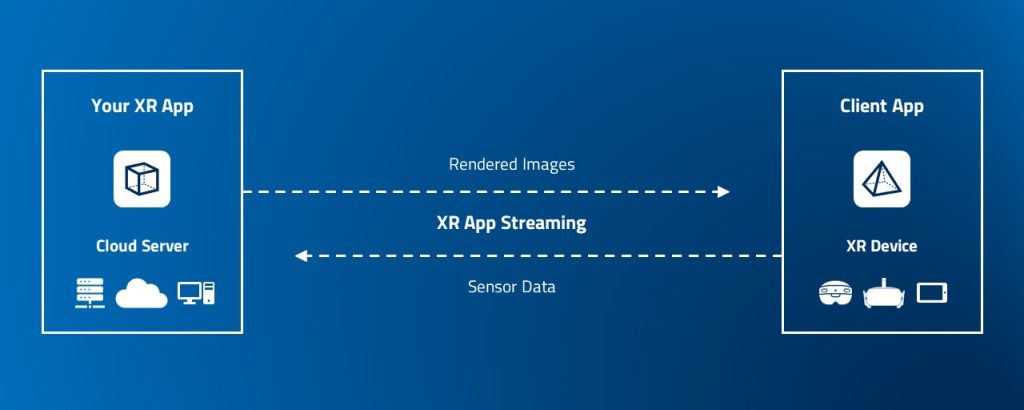

The solution includes a suite of engineering tools and technical features, including real-time 3D rendering, gesture recognition, and tracking on a server. Its built-in proprietary streaming technology, ISAR SDK, allows the XR application to run on a more powerful server, with no limits on the level of detail, data size, or polygon count of the 3D holograms displayed. The user simply connects from a client app on the XR device to the XR app on the external server. AR 3S can process more than 100 million polygons at 40-60 frames per second, whereas standalone AR headsets only work smoothly below 1 million polygons. In this way, the rendering process shifts from the low-performance XR device to the high-performance server.
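To put the frame-rate and polygon figures above in perspective, the following minimal sketch converts a frame rate into a per-frame time interval and a polygon count into polygons processed per second. It assumes, purely for illustration, that every polygon in the model is processed on every frame; the function names are hypothetical and are not part of AR 3S or the ISAR SDK.

```python
def frame_interval_ms(fps: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def polygons_per_second(polygons_per_frame: int, fps: float) -> float:
    """Polygons processed per second, assuming every polygon is drawn each frame."""
    return polygons_per_frame * fps


if __name__ == "__main__":
    model_polygons = 100_000_000  # "more than 100 million polygons" from the text
    for fps in (40, 60):
        print(
            f"{fps} fps: {frame_interval_ms(fps):.1f} ms per frame, "
            f"{polygons_per_second(model_polygons, fps):.1e} polygons/s"
        )
```

Under these assumptions, a 100-million-polygon model at 40-60 frames per second corresponds to several billion polygons per second, which illustrates why this workload is moved off the headset.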

Figure 2. Remote Rendering Scenario with ISAR SDK Example

For an immersive end-user experience, remote rendering typically requires an XR device round-trip latency, also called “motion-to-photon latency,” of less than 20 ms. In terms of network bandwidth, remote rendering requires about 10 Kbps of uplink throughput per XR device to transport its motion sensor data to the server, and about 20 Mbps of downlink throughput per XR device for the server to send rendered images back. Both the uplink and downlink throughput increase with the number of concurrent XR devices, for example, in multi-user 3D design collaboration.
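As a rough sizing aid, the sketch below estimates aggregate throughput for a multi-user session from the per-device figures quoted above. The linear scaling with device count is an assumption made here for illustration; actual throughput depends on resolution, codec, and scene content. The names `SessionBandwidth` and `estimate_bandwidth` are hypothetical.

```python
from dataclasses import dataclass

# Per-device figures quoted in the text above.
UPLINK_KBPS_PER_DEVICE = 10    # motion sensor data, device -> server
DOWNLINK_MBPS_PER_DEVICE = 20  # rendered images, server -> device


@dataclass(frozen=True)
class SessionBandwidth:
    devices: int
    uplink_mbps: float
    downlink_mbps: float


def estimate_bandwidth(devices: int) -> SessionBandwidth:
    """Aggregate throughput, ASSUMING it grows linearly with device count."""
    if devices < 1:
        raise ValueError("devices must be at least 1")
    uplink_mbps = devices * UPLINK_KBPS_PER_DEVICE / 1000  # 1 Mbps = 1000 Kbps
    downlink_mbps = float(devices * DOWNLINK_MBPS_PER_DEVICE)
    return SessionBandwidth(devices, uplink_mbps, downlink_mbps)


if __name__ == "__main__":
    for n in (1, 4, 10):
        est = estimate_bandwidth(n)
        print(
            f"{n:>2} devices: uplink ~{est.uplink_mbps:.2f} Mbps, "
            f"downlink ~{est.downlink_mbps:.0f} Mbps"
        )
```

The estimate makes the asymmetry visible: the downlink dominates by roughly three orders of magnitude, so downlink capacity is the figure to check first when planning a multi-user session.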

Within ISAR, the WebRTC standard is used for XR streaming. This typically requires a STUN (Session Traversal Utilities for NAT) or TURN (Traversal Using Relays around NAT) server if the server and the AR device are not within the same local network. STUN enables WebRTC applications to discover their addresses as seen from outside network address translators (NATs) and firewalls in order to establish a direct peer-to-peer connection between the server and client devices; TURN relays the traffic when a direct connection cannot be established. To learn more about STUN and TURN server technology, please consult online resources (e.g., WebRTC course).
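To make the STUN discovery step more concrete, here is a minimal sketch of the message format defined by the STUN specification (RFC 5389): a 20-byte Binding Request and a parser for the XOR-MAPPED-ADDRESS attribute of the response, which carries the client's address as seen by the STUN server. It handles IPv4 only and is not how ISAR or any WebRTC stack is implemented; those handle this exchange internally. The function names are hypothetical.

```python
import os
import socket
import struct
from typing import Optional, Tuple

STUN_MAGIC_COOKIE = 0x2112A442    # fixed value from RFC 5389
BINDING_REQUEST = 0x0001
BINDING_SUCCESS = 0x0101
ATTR_XOR_MAPPED_ADDRESS = 0x0020
FAMILY_IPV4 = 0x01


def build_binding_request(transaction_id: Optional[bytes] = None) -> bytes:
    """Build a STUN Binding Request: 20-byte header, no attributes."""
    tid = os.urandom(12) if transaction_id is None else transaction_id
    if len(tid) != 12:
        raise ValueError("transaction ID must be 12 bytes")
    return struct.pack("!HHI", BINDING_REQUEST, 0, STUN_MAGIC_COOKIE) + tid


def parse_xor_mapped_address(response: bytes) -> Optional[Tuple[str, int]]:
    """Return (ip, port) from a Binding Success response, or None if absent."""
    if len(response) < 20:
        return None
    msg_type, msg_len, cookie = struct.unpack("!HHI", response[:8])
    if msg_type != BINDING_SUCCESS or cookie != STUN_MAGIC_COOKIE:
        return None
    body = response[20:20 + msg_len]
    offset = 0
    while offset + 4 <= len(body):
        attr_type, attr_len = struct.unpack("!HH", body[offset:offset + 4])
        value = body[offset + 4:offset + 4 + attr_len]
        if (attr_type == ATTR_XOR_MAPPED_ADDRESS and len(value) >= 8
                and value[1] == FAMILY_IPV4):
            # Port is XORed with the top 16 bits of the cookie, address with all 32.
            port = struct.unpack("!H", value[2:4])[0] ^ (STUN_MAGIC_COOKIE >> 16)
            addr = struct.unpack("!I", value[4:8])[0] ^ STUN_MAGIC_COOKIE
            return socket.inet_ntoa(struct.pack("!I", addr)), port
        offset += 4 + attr_len + (-attr_len % 4)  # attributes are 4-byte aligned
    return None
```

In practice, the request would be sent over UDP to a STUN server (port 3478 by default), and the parsed address would be offered to the remote peer as a connection candidate.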

AWS Wavelength for Remote Rendering in a Public 5G Environment

AWS Wavelength embeds AWS compute and storage services within communication service providers’ (CSPs’) public 5G networks. It provides mobile edge computing infrastructure for developing, deploying, and scaling ultra-low-latency applications. At present, six CSPs (Bell Canada, British Telecom (BT), KDDI, SK Telecom, Verizon, and Vodafone) host AWS Wavelength services in 30 AWS Wavelength Zones.

The combination of AWS Wavelength and the CSPs’ 5G connectivity enables a class of AR and VR applications, or XR in general, that require real-time remote rendering in the cloud at the network edge in public environments. Use cases include gaming, engineering design review, and immersive training where users with XR devices are in different locations with CSP public 5G services.

A high-level solution architecture for hosting remote rendering servers on AWS Wavelength is shown in Figure 3. The Amazon VPC is extended into the AWS Wavelength Zone in a CSP network, where a virtualized remote rendering server can be deployed on one or more GPU-equipped Amazon EC2 instances. The AWS Wavelength Zone and the CSP network in a specific location are connected through AWS Wavelength’s networking construct, the Carrier Gateway (CGW), which allows inbound traffic from the CSP network and outbound traffic to the CSP network and the internet.

To make the rendering function available to the XR devices or client applications, you, as the remote rendering service owner, deploy the XR streaming server on one or more EC2 instances in a VPC subnet in the AWS Wavelength Zone. You then create a CGW. As part of the CGW creation procedure, you select the subnet in which the remote rendering servers are deployed so that the rendering stream traffic is routed to the CGW. You also allocate a Carrier IP address and assign it to the EC2 instance. The CGW performs NAT between the EC2 instance’s private IP address and the Carrier IP address. A STUN server is required, either in the CSP network or in the AWS Wavelength Zone, to enable the direct WebRTC peer-to-peer connection between the XR streaming server on the EC2 instance and the client devices.
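The networking steps above can be sketched in Python as follows. The function expects an EC2 client such as the one returned by `boto3.client("ec2")` and uses the EC2 API operations `create_carrier_gateway`, `create_route`, `allocate_address`, and `associate_address`. To keep the example runnable without AWS credentials, it includes `DryRunEc2`, a hypothetical stand-in that only records calls and returns placeholder values. All resource IDs and the Wavelength Zone network border group are illustrative, and the sketch assumes the VPC, subnet, route table, and EC2 instance already exist.

```python
from typing import Any, Dict, List, Tuple


def expose_render_server(ec2: Any, vpc_id: str, route_table_id: str,
                         network_interface_id: str,
                         network_border_group: str) -> Dict[str, str]:
    """Create a CGW, route the subnet's traffic to it, and attach a Carrier IP."""
    cgw = ec2.create_carrier_gateway(VpcId=vpc_id)
    cgw_id = cgw["CarrierGateway"]["CarrierGatewayId"]

    # Default route for the render server's subnet (via its route table).
    ec2.create_route(RouteTableId=route_table_id,
                     DestinationCidrBlock="0.0.0.0/0",
                     CarrierGatewayId=cgw_id)

    # A Carrier IP is allocated from the Wavelength Zone's network border group.
    address = ec2.allocate_address(Domain="vpc",
                                   NetworkBorderGroup=network_border_group)

    # Attach it to the network interface of the EC2 instance running the server.
    ec2.associate_address(AllocationId=address["AllocationId"],
                          NetworkInterfaceId=network_interface_id)
    return {"carrier_gateway_id": cgw_id, "carrier_ip": address["CarrierIp"]}


class DryRunEc2:
    """Hypothetical stand-in for an EC2 client: records calls, touches no AWS resources."""

    def __init__(self) -> None:
        self.calls: List[Tuple[str, Dict[str, Any]]] = []

    def create_carrier_gateway(self, **kwargs: Any) -> Dict[str, Any]:
        self.calls.append(("create_carrier_gateway", kwargs))
        return {"CarrierGateway": {"CarrierGatewayId": "cagw-EXAMPLE"}}

    def create_route(self, **kwargs: Any) -> Dict[str, Any]:
        self.calls.append(("create_route", kwargs))
        return {"Return": True}

    def allocate_address(self, **kwargs: Any) -> Dict[str, Any]:
        self.calls.append(("allocate_address", kwargs))
        return {"AllocationId": "eipalloc-EXAMPLE", "CarrierIp": "198.51.100.10"}

    def associate_address(self, **kwargs: Any) -> Dict[str, Any]:
        self.calls.append(("associate_address", kwargs))
        return {"AssociationId": "eipassoc-EXAMPLE"}


if __name__ == "__main__":
    result = expose_render_server(DryRunEc2(), "vpc-EXAMPLE", "rtb-EXAMPLE",
                                  "eni-EXAMPLE", "us-east-1-wl1-bos-wlz-1")
    print(result)
```

Passing a real EC2 client instead of `DryRunEc2` would issue the same calls against an AWS account. The returned Carrier IP is the address that XR clients connect to.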

The XR application users point their XR devices to the Carrier IP to enable the remote rendering.

Figure 3. Solution Architecture for Remote Rendering on AWS Wavelength

AWS Snowball Edge for Remote Rendering in a Private 5G Environment

AWS Snowball Edge is a physical device with cloud compute and storage services such as Amazon EC2 with GPU, Amazon EKS Anywhere, AWS Lambda, AWS IoT Greengrass, and machine learning at the Edge. In particular, the device is ruggedized and can operate in a standalone mode disconnected from the AWS Region. These capabilities make it suitable for use in private environments such as factories, hospitals, or defense installations where resources are dedicated for private use and operations and data are secured locally with restricted network connectivity from the external world.

Auto manufacturers could use AR in a private environment during their 3D design review process, aiding collaboration between designers and engineers. In this use case, the manufacturer requires that all design activities, the AR application and its remote rendering, and the data be kept on premises.

A solution architecture for using AWS Snowball Edge for remote rendering in a private 5G environment is shown in Figure 4. The AWS Snowball Edge device must be of type “Compute Optimized with GPU” and the EC2 instance type must be “sbe-g” to host the remote rendering with GPU support. The AWS Snowball Edge device has two 10G RJ45 ports, one 10G/25G SFP+/SFP28 port, and one 40G/100G QSFP28 port. To separate the management plane and the data plane, AWS recommends using one RJ45 port for the management traffic and one SFP+/SFP28 port for the application traffic.

Figure 4. Solution Architecture for Remote Rendering on AWS Snowball Edge

Note that a Virtual Network Interface (VNI) is associated with the RJ45 port and a Direct Network Interface (DNI) is associated with the SFP+/SFP28 port. The former results in IP NAT between the VNI and the EC2 instance’s eth0. The latter enables a Layer-2 connection between the DNI and the EC2 instance’s eth1. A STUN server is not required when the XR streaming traffic, i.e., the data plane traffic, goes through the DNI path.

AWS OpsHub is a graphical user interface for managing the AWS Snowball Edge device locally or remotely.

The AWS Snowball Edge device can be connected to an AWS Region for use cases such as data upload and image download. For a secure connection, you use AWS Site-to-Site VPN over the internet with IP Security (IPsec) tunnels.

The private 5G network consists of a 5G Radio Access Network (RAN) and a 5G Core network, which are deployed on premises with radio spectrum allocated for the private environment. An example of the RAN is AirSpeed 1900/2900, an all-in-one gNB solution with RU, DU, and CU in a single unit. An example of the 5G Core is Athonet 5G Core, which can run on AWS Snowball Edge.

XR devices use the remote rendering service hosted on the AWS Snowball Edge device through the private 5G network. An XR device can be connected to the 5G network through its own 5G modem, a tethered 5G phone, or a 5G-WiFi hotspot. XR devices can also use a wireline connection to the AWS Snowball Edge device for the remote rendering service.

To start XR streaming, the user simply points the XR application client running on the XR device to the IP address of the DNI on the EC2 instance that hosts the remote rendering server.

Summary

AR/VR or XR applications can use real-time remote rendering to reduce the heavy computing resources, such as GPUs, required on XR devices, thus lowering the cost and energy consumption of those devices. Remote rendering also provides better data protection by keeping the data on the server. AWS edge services address the low-latency and high-downlink-throughput requirements of remote rendering. Specifically, this blog post provides two remote rendering reference architectures: one with AWS Wavelength for public 5G environments and one with AWS Snowball Edge for private 5G environments. It describes how the two remote rendering solutions work, using Holo-Light AR 3S XR streaming as an example.

To get started with XR remote rendering on AWS edge services, please review AWS Wavelength for public 5G environments and AWS Snowball Edge for private 5G or wireline on-premises environments.

Though not presented in this blog post, AWS Local Zones at the edge can also support remote rendering applications through either an internet connection or a private connection with XR devices. Unlike CSP-hosted AWS Wavelength Zones, AWS Local Zones are managed by AWS. Please explore AWS Local Zones as another edge solution option.

To use Holo-Light XR remote rendering, please check out the Holo-Light official website and the blog post “XR Streaming: How Holo-Light Solves Major Problems of Augmented and Virtual Reality.” Contact Holo-Light for free trial access to the SDK and the XR engineering application. In addition to the solution described above, you can also test the Amazon Machine Image (AMI) of AR 3S Pro Cloud on AWS Marketplace.