The Internet of Things on AWS – Official Blog

Building an AI Assistant for Smart Manufacturing with AWS IoT TwinMaker and Amazon Bedrock

Unlocking the insights hidden within manufacturing data can enhance efficiency, reduce costs, and boost overall productivity across many industries. Finding those insights is often challenging, because most manufacturing data is unstructured and takes the form of documents, equipment maintenance records, and data sheets. Turning this data into business value requires considerable effort but offers significant potential impact.

AWS Industrial IoT services, such as AWS IoT TwinMaker and AWS IoT SiteWise, offer capabilities that allow for the creation of a data hub for manufacturing data where the work needed to gain insights can start in a more manageable way. You can securely store and access operational data like sensor readings, crucial documents such as Standard Operating Procedures (SOP), Failure Mode and Effect Analysis (FMEA), and enterprise data sourced from ERP and MES systems. The managed industrial Knowledge Graph in AWS IoT TwinMaker gives you the ability to model complex systems and create Digital Twins of your physical systems.

Generative AI (GenAI) opens up new ways to make data more accessible and approachable to end users such as shop floor operators and operation managers. You can now use natural language to ask AI complex questions, such as identifying an SOP to fix a production issue, or getting suggestions for potential root causes for issues based on observed production alarms. Amazon Bedrock, a managed service designed for building and scaling Generative AI applications, makes it easy for builders to develop and manage Generative AI applications.

In this blog post, we will walk you through how to use AWS IoT TwinMaker and Amazon Bedrock to build an AI Assistant that can help operators and other end users diagnose and resolve manufacturing production issues.

Solution overview

We implemented our AI Assistant as a module in the open-source “Cookie Factory” sample solution. The Cookie Factory sample solution is a fully customizable blueprint that builders can use to develop an operational digital twin tailored for manufacturing monitoring. Powered by AWS IoT TwinMaker, the digital twin lets operations managers monitor live production status and go back in time to investigate historical events. We recommend watching the AWS IoT TwinMaker for Smart Manufacturing video to get a comprehensive introduction to the solution.

Figure 1 shows the components of our AI Assistant module. We will focus on the Generative AI Assistant and skip the details of the rest of the Cookie Factory solution. Please feel free to refer to our previous blog post and documentation if you’d like an overview of the entire solution.

The Cookie Factory AI Assistant module is a Python application that serves a chat user interface (UI) and hosts a Large Language Model (LLM) Agent that responds to user input. In this post, we’ll show you how to build and run the module in your development environment. Please refer to the Cookie Factory sample solution GitHub repository for information on more advanced deployment options, including how to containerize our setup so that it’s easy to deploy as a serverless application using AWS Fargate.

The LLM Agent is implemented using the LangChain framework. LangChain is a flexible library to construct complex workflows that leverage LLMs and additional tools to orchestrate tasks to respond to user inputs. Amazon Bedrock provides high-performing LLMs needed to power our solution, including Claude from Anthropic and Amazon Titan. In order to implement the retrieval augmented generation (RAG) pattern, we used an open-source in-memory vector database Chroma for development environment use. For production use, we’d encourage you to swap Chroma for a more scalable solution such as Amazon OpenSearch Service.

To help the AI Assistant better respond to the user’s domain-specific questions, we ground the LLMs by using the Knowledge Graph feature in AWS IoT TwinMaker and user-provided documentation (such as equipment manuals stored in Amazon S3). We also use AWS IoT SiteWise to provide equipment measurements, and a custom data source implemented using AWS Lambda to get simulated alarm event data. These are used as input to the LLMs to generate issue diagnosis reports or troubleshooting suggestions for the user.

A typical user interaction flow can be described as follows:

- The user requests the AI Assistant in the dashboard app. The dashboard app loads the AI Assistant chat UI in the iframe.

- The user sends a prompt to the AI Assistant in the chat UI.

- The LLM Agent in the AI Assistant determines the best workflow to answer the user’s question and then executes that workflow. Each workflow has its own strategy that can allow for the use of additional tools to collect contextual information and to generate a response based on the original user input and the context data.

- The response is sent back to the user in the chat UI.

Building and running the AI Assistant

Prerequisites

For this tutorial, you’ll need a bash terminal with Python 3.8 or higher installed on Linux, Mac, or Windows Subsystem for Linux, and an AWS account. We also recommend using an AWS Cloud9 instance or an Amazon Elastic Compute Cloud (Amazon EC2) instance.

Please first follow the Cookie Factory sample solution documentation to deploy the Cookie Factory workspace and resources. In the following section, we assume you have created an AWS IoT TwinMaker Workspace named CookieFactoryV3. <PROJECT_ROOT> refers to the folder that contains the cookie factory v3 sample solution.

Running the AI Assistant

To run the AI Assistant in your development environment, complete the following steps:

- Set the environment variables. Run the following command in your terminal. The AWS_REGION and WORKSPACE_ID should match the AWS region you use and the AWS IoT TwinMaker workspace you have created.

- Install the required dependencies. Run the following commands in your current terminal.

- Launch the AI Assistant module. Run the following commands in your current terminal.

Once the module is started, it will launch your default browser and open the chat UI. You can close the chat UI.

- Launch the Cookie Factory dashboard app. Run the following commands in your current terminal.

After the server is started, visit https://localhost:8443 to open the dashboard (see Figure 2).
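The first step above depends on two environment variables, AWS_REGION and WORKSPACE_ID. As a minimal sketch of how a Python module might read and validate them at startup, consider the following. The `load_settings` helper and its error message are illustrative assumptions, not code from the Cookie Factory repository.

```python
import os

# The two variable names come from the setup steps above.
REQUIRED_VARS = ("AWS_REGION", "WORKSPACE_ID")


def load_settings(env=None):
    """Return the required settings, or fail early with a clear message.

    `env` defaults to the process environment; passing a plain dict
    makes the function easy to exercise without touching os.environ.
    """
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {name: env[name] for name in REQUIRED_VARS}
```

Failing at startup, rather than on the first AWS call, tends to make a misconfigured terminal session easier to spot.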

AI Assisted issue diagnosis and troubleshooting

We prepared an alarm event with simulated data to demonstrate how the AI Assistant can help users diagnose production quality issues. To trigger the event, click on the “Run event simulation” button on the navigation bar (see Figure 3).

The dashboard will display an alert, indicating that one of the cookie production lines is producing more deformed cookies than expected. When the alarm is acknowledged, the AI Assistant panel will open. The event details are passed to the AI Assistant so it has the context of the current event. You can click the “Run Issue Diagnosis” button to ask the AI Assistant to conduct a diagnosis based on the collected information.

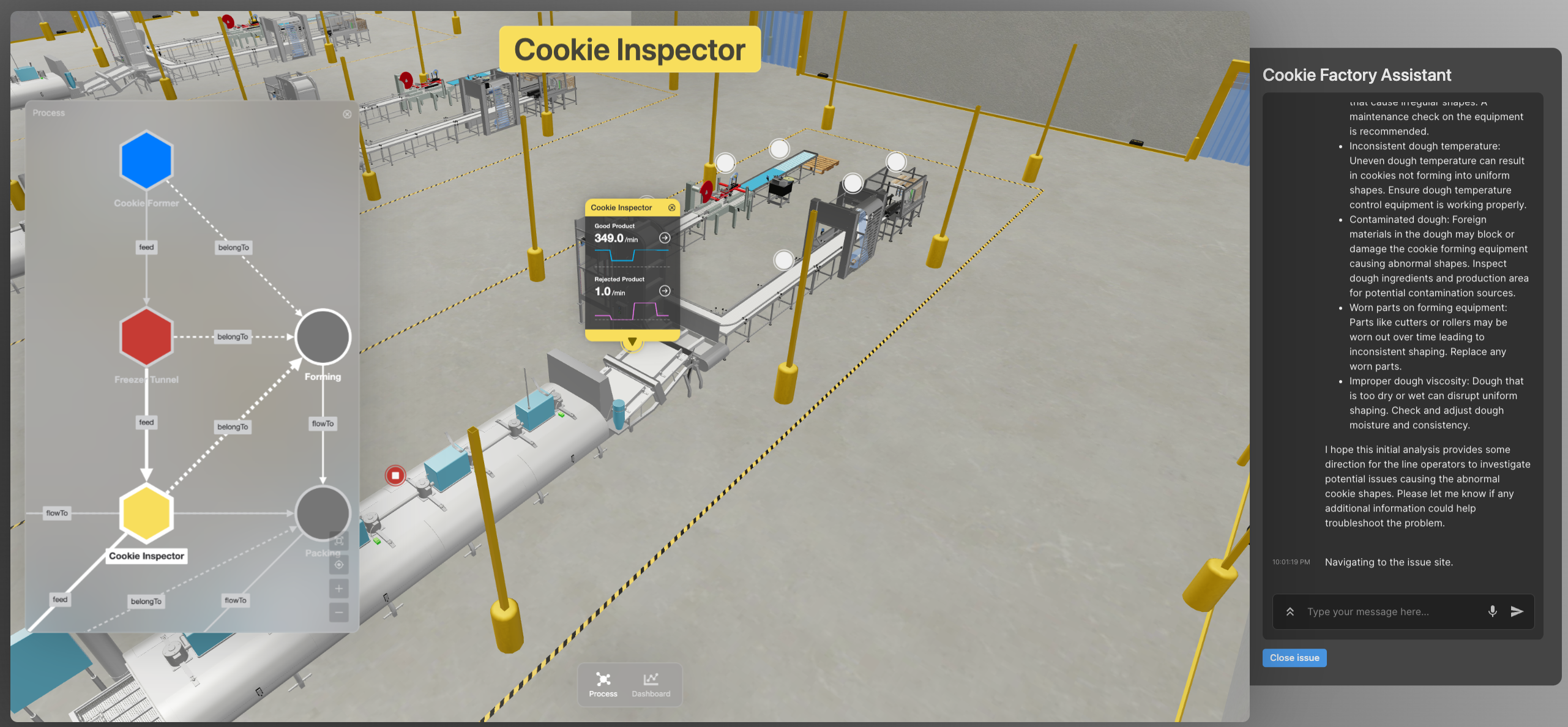

Once the diagnosis is completed, the AI Assistant will suggest a few potential root causes and provide a button to navigate to the site of the issue in the 3D viewer. Clicking on the button will change the 3D viewer’s focus to the equipment that triggered the issue. From there you can use the Process View or 3D View to inspect related processes or equipment.

Figure 5. AI Assistant shows the site of the issue in 3D. Left panel shows the related equipment and processes.

You can use the AI Assistant to find SOPs for a particular piece of equipment. Try asking “how to fix the temperature fluctuation issue in the freezer tunnel” in the chat box. The AI Assistant will respond with the SOP found in the documents associated with the related equipment and show links to the original documents.

Finally, you can click the “Close issue” button at the bottom of the panel to clear the event simulation.

Internals of the AI Assistant

The AI Assistant chooses different strategies to answer a user’s questions. This allows it to use additional tools to generate answers to real-world problems that LLMs cannot solve by themselves. Figure 6 shows a high-level execution flow that represents how user input is routed between multiple LLM Chains to generate a final output.

The MultiRouteChain is the main orchestration Chain. It invokes the LLMRouterChain to find out the destination chain that is best suited to respond to the original user input. It then invokes the destination chain with the original user input. When the response is sent back to the MultiRouteChain, it post-processes it and returns the result back to the user.
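The routing pattern described above can be sketched in plain Python. This is not the LangChain implementation: the keyword matcher below is a deliberately simple stand-in for the LLM-based router, and the handler functions and the `ROUTES` table are hypothetical placeholders for the real destination chains.

```python
def route(user_input, routes, default):
    """Pick a destination handler by keyword match.

    A stand-in for the LLM router: the real router asks an LLM to
    choose the destination instead of matching keywords.
    """
    text = user_input.lower()
    for keywords, handler in routes:
        if any(keyword in text for keyword in keywords):
            return handler
    return default


# Placeholder destinations; each just tags the input it receives.
def graph_query(question):
    return f"[graph] {question}"


def domain_qa(question):
    return f"[docs] {question}"


def general_qa(question):
    return f"[general] {question}"


# Hypothetical routing table: (trigger keywords, destination handler).
ROUTES = [
    (("list all", "3d viewer"), graph_query),
    (("sop", "how to fix"), domain_qa),
]


def answer(user_input):
    """Route the input, invoke the destination, then post-process."""
    handler = route(user_input, ROUTES, general_qa)
    return handler(user_input).strip()
```

Calling `answer("list all cookie lines")` selects the graph handler, while input that matches no route falls through to the general handler, mirroring the fallback behavior of the router.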

We use different foundation models (FMs) in different Chains so that we can balance inference cost, quality, and speed when choosing the FM for a particular use case. With Amazon Bedrock, it is easy to switch between different FMs and run experiments to optimize model selection.
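As a rough sketch of per-Chain model selection, the snippet below maps each Chain to a Bedrock model ID and sends a prompt through the `invoke_model` call of a Bedrock runtime client. The `CHAIN_MODELS` mapping is hypothetical, since this post does not state which model backs which Chain. The request body assumes the Claude text-completion format (`prompt`, `max_tokens_to_sample`); other models expect different request bodies.

```python
import json

# Hypothetical mapping: which model backs which Chain is an assumption.
CHAIN_MODELS = {
    "router": "anthropic.claude-instant-v1",
    "graph_query": "anthropic.claude-v2",
    "domain_qa": "anthropic.claude-v2",
    "general_qa": "anthropic.claude-instant-v1",
}


def build_claude_request(question, max_tokens=300):
    """Build a request body in the Claude text-completion format."""
    return json.dumps({
        "prompt": f"\n\nHuman: {question}\n\nAssistant:",
        "max_tokens_to_sample": max_tokens,
    })


def ask(client, chain_name, question):
    """Send the question to the model configured for the given Chain.

    `client` is expected to behave like boto3.client("bedrock-runtime").
    """
    response = client.invoke_model(
        modelId=CHAIN_MODELS[chain_name],
        body=build_claude_request(question),
        contentType="application/json",
        accept="application/json",
    )
    return json.loads(response["body"].read())["completion"]
```

Passing the client in as an argument keeps the sketch independent of credentials. In a real session you would create it with `boto3.client("bedrock-runtime", region_name=...)`, and trying a different model becomes a one-line change to the mapping.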

The GraphQueryChain is an LLM Chain that translates natural language into a TwinMaker Knowledge Graph query. We use this capability to find information about the entities mentioned in the user question in order to encourage LLMs to generate better output. For example, when the user asks “focus the 3D viewer to the freezer tunnel”, we use the GraphQueryChain to find out what is meant by “freezer tunnel”. This capability can also be used directly to find information in the TwinMaker Knowledge Graph in the form of a response to a question like “list all cookie lines”.
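To make the target of that translation concrete, here is a minimal sketch of running a Knowledge Graph query for a named entity through the `execute_query` call of an AWS IoT TwinMaker client. The LLM translation step is omitted, and the query is written by hand in the PartiQL-style syntax used by the Knowledge Graph. The helper names are illustrative, and the quote-escaping rule is an assumption that you should check against the query language documentation.

```python
def build_entity_query(entity_name):
    """Build a Knowledge Graph query that matches an entity by name."""
    # Assumption: single quotes are escaped by doubling them.
    safe_name = entity_name.replace("'", "''")
    return (
        "SELECT entity FROM EntityGraph MATCH (entity) "
        f"WHERE entity.entityName = '{safe_name}'"
    )


def find_entities(client, workspace_id, entity_name):
    """Run the query and return the raw row data.

    `client` is expected to behave like boto3.client("iottwinmaker").
    """
    response = client.execute_query(
        workspaceId=workspace_id,
        queryStatement=build_entity_query(entity_name),
    )
    return [row["rowData"] for row in response.get("rows", [])]
```

In the AI Assistant, an LLM would generate the query statement from the user's wording. Keeping query construction separate from execution makes it possible to inspect or validate a generated statement before it runs.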

The DomainQAChain is an LLM Chain that implements the RAG pattern. It can reliably answer domain-specific questions using only the information found in the documents the user provided. For example, this LLM Chain can answer questions such as “find SOPs to fix temperature fluctuation in freezer tunnel” by retrieving relevant passages from user-provided documentation and supplying them to the LLM as domain-specific context. The TwinMaker Knowledge Graph provides additional context for the LLM Chain, such as the location of the document stored in S3.
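The RAG pattern can be illustrated without any external services. In the sketch below, a bag-of-words cosine similarity stands in for the embedding search that a vector database such as Chroma would perform, and the two entries in `DOCS` are made-up examples, not real Cookie Factory documents. The prompt wording is likewise an assumption.

```python
import math
import re
from collections import Counter

# Made-up example documents for illustration only.
DOCS = [
    {"source": "freezer_tunnel_sop.pdf",
     "text": "If freezer tunnel temperature fluctuates, check the door "
             "seals and defrost the evaporator."},
    {"source": "cookie_former_manual.pdf",
     "text": "Clean the cookie former nozzles after every shift."},
]


def vectorize(text):
    """Turn text into a bag-of-words vector (a stand-in for embeddings)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, documents, k=1):
    """Return the k documents most similar to the question."""
    query = vectorize(question)
    ranked = sorted(documents,
                    key=lambda d: cosine(query, vectorize(d["text"])),
                    reverse=True)
    return ranked[:k]


def build_prompt(question, documents, k=1):
    """Assemble a prompt that restricts the LLM to the retrieved context."""
    context = "\n".join(f"[{d['source']}] {d['text']}"
                        for d in retrieve(question, documents, k))
    return ("Answer using only the context below. "
            "If the answer is not there, say so.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}")
```

Keeping the source name next to each retrieved passage is what lets the response link back to the original document.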

The GeneralQAChain is a fallback LLM Chain that tries to answer any question that cannot match a more specific workflow. We can put guardrails in the prompt template to help avoid the Agent being too generic when responding to a user.

This architecture is simple to customize and extend by adjusting the prompt template to fit your use case better or configuring more destination chains in the router to give the Agent additional skills.

Clean up

To stop the AI Assistant Module, run the following commands in your terminal.

Please follow the Cookie Factory sample solution documentation to clean up the Cookie Factory workspace and resources.

Conclusion

In this post, you learned what is possible by building an AI Assistant for manufacturing production monitoring and troubleshooting. Builders can use the sample solution we discussed as a starting point for more specialized solutions that best empower their customers or users. The Knowledge Graph provided by AWS IoT TwinMaker offers an extensible architecture pattern for supplying additional curated information to the LLMs, grounding their responses in facts. You also experienced how users can interact with digital twins using natural language. We believe this functionality represents a paradigm shift for human-machine interactions and demonstrates how AI can help empower us all to do more with less by extracting knowledge from data much more efficiently and effectively than was previously possible.

To see this demo in action, make sure to attend Breakout Session IOT206 at re:Invent 2023 on Tuesday at 3:30 PM.

About the authors

Jiaji Zhou is a Principal Engineer with a focus on Industrial IoT and Edge at AWS. He has 10+ years of experience in the design, development, and operation of large-scale data-intensive web services. His interest areas also include data analytics, machine learning, and simulation. He works on AWS services including AWS IoT TwinMaker and AWS IoT SiteWise.

Chris Bolen is a Sr. Design Technologist with a focus on Industrial IoT applications at AWS. He specializes in user experience design and application prototyping. He is passionate about working with industrial users and builders to innovate and create delightful user experiences for customers.

Johnny Wu is a Sr. Software Engineer in the AWS IoT TwinMaker team at AWS. He joined AWS in 2014 and worked on NoSQL services for several years before moving into IoT services. Johnny is passionate about enabling builders to do more with less. He focuses on making it easier for customers to build digital twins.

Julie Zhao is a Senior Product Manager on Industrial IoT at AWS. She joined AWS in 2021 and brings three years of startup experience leading products in Industrial IoT. Prior to startups, she spent over 10 years in networking with Cisco and Juniper across engineering and product. She is passionate about building products in Industrial IoT.
