AWS for M&E Blog

Demonstrating shoppable TV for monetization in sports

This blog was co-authored by Bleuenn Le Goffic, VP Strategy & Business Development at Accedo.tv, and Noor Hassan, Ken Shek, and Alyson Stewart at Amazon Web Services.

Whether in sports or other types of video content, audience engagement and content monetization are growing topics in the media domain. Content providers seek new ways to attract and retain customers through interactive platforms in order to increase revenues and create a more engaged user base.

One way to engage viewers is through shoppable TV, a concept that combines e-commerce with video content and allows viewers to interact with shoppable items in the video. Shopping on social media already enables brands to reach new audiences directly through influencer videos. Social influencer commerce is expected to reach $1.2 trillion globally by 2025, according to a study by Accenture, showing that audiences crave engagement with trusted influencers. We propose that the same engagement model can translate to trusted sports and media brands. The technology that enables shoppable commerce in sports is ripe for targeted experimentation.

To gauge the appetite of sports bodies for experimenting with video monetization, Accedo and AWS presented the shoppable TV concept at the Sports Innovation summit in May 2022. The concept combines machine learning and computer vision, a non-intrusive user experience, and 3D modeling to help convert viewers into buyers.

The shoppable TV concept comprises two components:

- The backend workflows: These are the system pipelines that automate the ingest and processing of video feeds and use machine learning to output metadata identifying shoppable items (“shoppable metadata”). Backend workflows are built on Amazon Web Services (AWS).

- The user experience: This is the presentation layer (application) that taps into the shoppable metadata and enables end users to interact with shoppable items.

Backend workflows

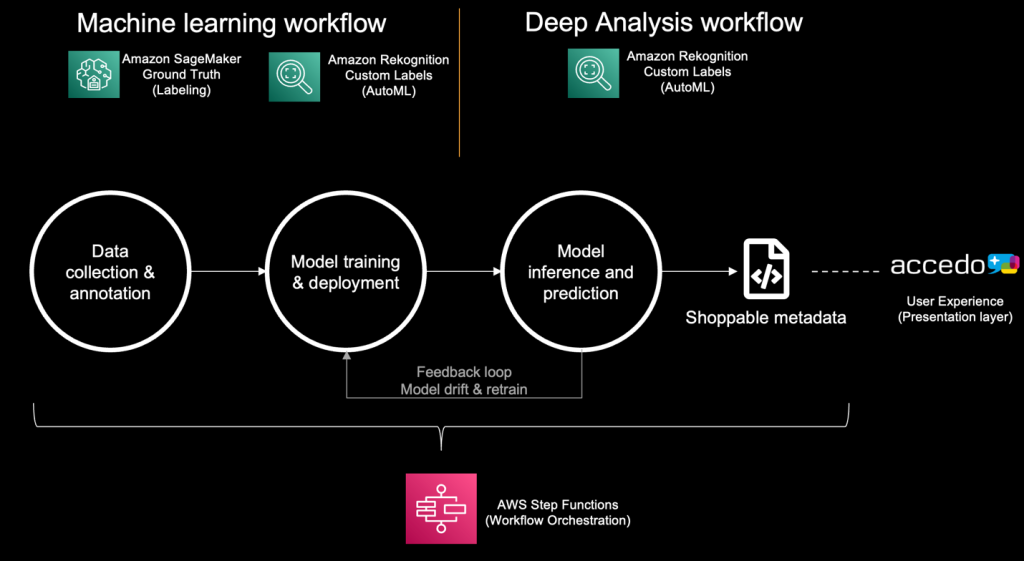

The backend workflows consist of two stages that use Amazon machine learning services and features such as Amazon Rekognition Custom Labels and Amazon SageMaker Ground Truth, orchestrated by AWS Step Functions.

Stage 1 – Machine learning workflow: this comprises data collection and annotation for various objects such as shot change events, shot types and positions, team jerseys, sponsor logos, and player emotions, followed by model training and deployment.

Stage 2 – Analysis workflow: this comprises model inference and prediction, followed by construction of shoppable metadata for use by the presentation layer built by Accedo.tv to create the end user experience.
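As a rough illustration of the last step in Stage 2, the sketch below turns one frame's detections into shoppable metadata entries. The input follows the general shape of an Amazon Rekognition `DetectCustomLabels` response (`CustomLabels` entries with `Name`, `Confidence`, and an optional `Geometry.BoundingBox`). Everything else is an assumption made for illustration: the `CATALOG` mapping, the label names, the confidence threshold, and the output field names are hypothetical, and the actual metadata schema used in the demonstration is not described here.

```python
from typing import Any, Dict, List

# Hypothetical catalog mapping model label names to purchasable products.
CATALOG: Dict[str, Dict[str, str]] = {
    "home_jersey": {"sku": "JERSEY-HOME-001", "title": "Home jersey"},
    "sponsor_logo_acme": {"sku": "ACME-CAP-014", "title": "Sponsor cap"},
}


def build_shoppable_metadata(
    timestamp_ms: int,
    response: Dict[str, Any],
    min_confidence: float = 80.0,
) -> List[Dict[str, Any]]:
    """Convert one frame's detections into shoppable metadata entries.

    Labels that are not in the catalog, or that fall below the
    confidence threshold, are skipped.
    """
    items: List[Dict[str, Any]] = []
    for label in response.get("CustomLabels", []):
        product = CATALOG.get(label["Name"])
        if product is None or label["Confidence"] < min_confidence:
            continue
        # Image-level labels carry no Geometry, so the box may be None.
        box = label.get("Geometry", {}).get("BoundingBox")
        items.append(
            {
                "timestamp_ms": timestamp_ms,
                "label": label["Name"],
                "confidence": label["Confidence"],
                "bounding_box": box,
                "sku": product["sku"],
                "title": product["title"],
            }
        )
    return items


# Hand-written sample input; no AWS call is made in this sketch.
sample_response = {
    "CustomLabels": [
        {
            "Name": "home_jersey",
            "Confidence": 93.5,
            "Geometry": {
                "BoundingBox": {"Width": 0.2, "Height": 0.4, "Left": 0.1, "Top": 0.3}
            },
        },
        {"Name": "sponsor_logo_acme", "Confidence": 41.0},  # below threshold
        {"Name": "referee", "Confidence": 99.0},  # not a shoppable item
    ]
}

metadata = build_shoppable_metadata(12_000, sample_response)
```

Keeping the timestamp and bounding box on each entry is one way a presentation layer could place a shoppable item at the right moment and position on screen.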

The backend workflows are further illustrated in the following diagram. Video frames are annotated and classified, generating datasets that are used to create and train machine learning models for later inference and prediction.

For accurate detection of key moments in a sports event or match, video frames first go through a series of machine learning processes to detect shot change events. From detected shots, a subset of frames is further analyzed to extract camera shot types and positions. Finally, deep analysis is applied to detect other components such as player emotion, text (to read scores), sponsor logos, and more.
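The step from detected shots to "a subset of frames" could be sketched as follows. This is a minimal illustration, not the method used in the demonstration: it assumes shot-change frame indices have already been produced by an upstream model, and it assumes evenly spaced sampling within each shot, which the description above does not specify. The function names are hypothetical.

```python
from typing import List, Tuple


def shots_from_boundaries(boundaries: List[int], total_frames: int) -> List[Tuple[int, int]]:
    """Turn shot-change frame indices into (start, end) ranges, end exclusive."""
    starts = sorted(set([0] + [b for b in boundaries if 0 < b < total_frames]))
    ends = starts[1:] + [total_frames]
    return list(zip(starts, ends))


def sample_frames(shot: Tuple[int, int], per_shot: int) -> List[int]:
    """Pick up to `per_shot` evenly spaced frame indices inside one shot."""
    start, end = shot
    length = end - start
    count = min(per_shot, length)
    # Place each sample at the centre of its equal-sized slice of the shot.
    return [start + (2 * i + 1) * length // (2 * count) for i in range(count)]


# Assumed shot-change events at frames 100 and 103 in a 250-frame clip.
shots = shots_from_boundaries([100, 103], total_frames=250)
selected = [sample_frames(shot, per_shot=2) for shot in shots]
```

Only the selected frames would then be passed on to the heavier analyses (shot type, emotion, text, logos), which keeps the number of inference calls proportional to the number of shots rather than the number of frames.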

User experience

Creating a seamless and intuitive user experience is crucial to unlocking the potential of shoppable TV: it must maintain viewer engagement with the video while giving the audience additional ways to interact with the content. To that end, Accedo built a TV experience that presents shoppable items linked to the context of the video, accompanied by a second-screen experience in which viewers interact with 3D models of those items. This simplifies the buying journey and makes a purchase more likely. When viewers scan a shoppable item on the TV screen (first screen) with a mobile device, the application renders a 3D model of the scanned item using the native augmented reality (AR) engine provided by the mobile OS (ARKit for iOS and ARCore for Android). From there, users can access additional options in the application, such as adding the item to a cart or viewing more details about it.

The following images depict the result of scanning detected shoppable items through the Accedo solution.

Conclusion

The demonstration of the shoppable TV concept at the Sports Innovation summit sparked discussion about how sports bodies can accelerate the opportunity to engage sponsors and build new inventory to engage fans through content monetization. The event offered valuable insights into the drivers and challenges of moving fan engagement technologies from experimentation to production-ready integration. Deep collaboration and joint innovation among sports bodies, media, brands, and technology providers will accelerate that transition.