AWS Storage Blog

Optimizing cost for your high availability SQL Server deployments on AWS

Many customers run SQL Server workloads on AWS because AWS offers higher performance and reliability, greater security and identity services, more migration support, and flexible licensing options. Customers often select the license included deployment scenario for running SQL Server on AWS to avoid long-term licensing contracts with Microsoft and preserve licensing flexibility for potential future optimization and refactoring.

The high cost of Microsoft licenses for SQL Server, especially for the Enterprise Edition, represents a point of concern for many customers planning their migration of SQL Server workloads to AWS. In this blog, we review SQL Server cost optimization on AWS for some hypothetical customer migration scenarios. Through this review, we evaluate the total cost of ownership (TCO) in each scenario based on the prices for various AWS services, and demonstrate how to use the breadth of AWS compute and storage offerings to reduce the cost of SQL Server deployments on AWS.

Initial configuration

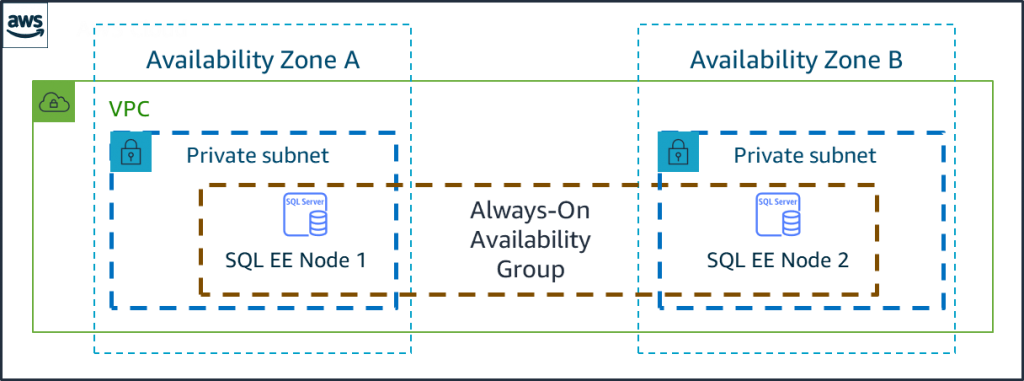

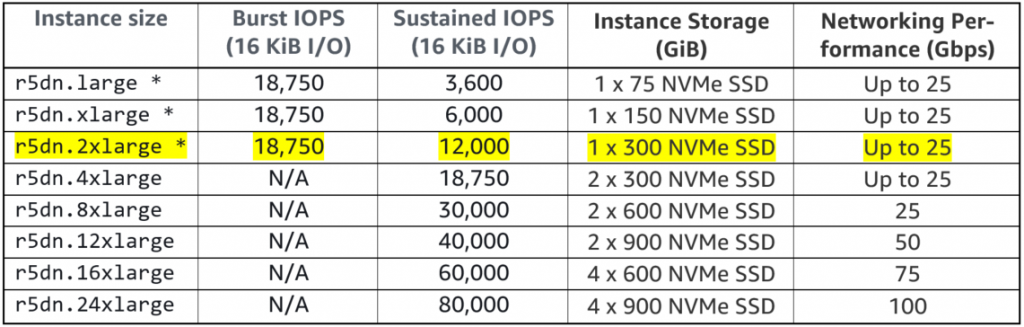

In the usual optimization scenario, a customer knows the required SQL Server performance level and looks for a compute and storage deployment configuration on AWS that reaches this level at minimum cost. As a working example, we select SQL Server Enterprise Edition (EE) in an Always-On Availability Groups (AO-AG) configuration for high availability (HA), which is typical for production deployments on AWS. Figure 1 presents this configuration.

Figure 1: SQL Server Always-On Availability Group on AWS

SQL Server benefits from a large amount of RAM but usually does not require a lot of compute resources. For this reason, instances of the Amazon EC2 r5 family are preferred for SQL Server deployments on AWS: they offer a 1-to-8 ratio between the number of vCPUs and the amount of RAM in GB (for example, r5.8xlarge provides 32 vCPUs and 256 GB of RAM).

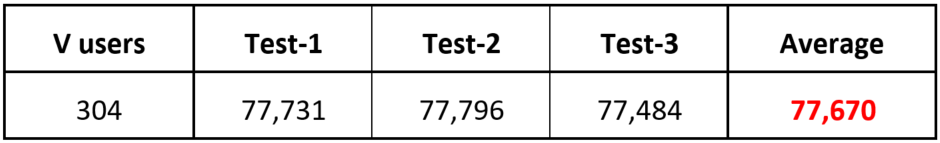

Based on the performance statistics collected from the on-premises system, the SQL Server workload requires about 30,000 IOPS to achieve the expected response time. Thus, let us pick an appropriately sized Amazon EC2 r5 instance, keeping in mind that an instance's maximum storage throughput depends on its size. The following table lists instances of various sizes in the r5 family and their supported IOPS levels (* indicates instances with burst capabilities):

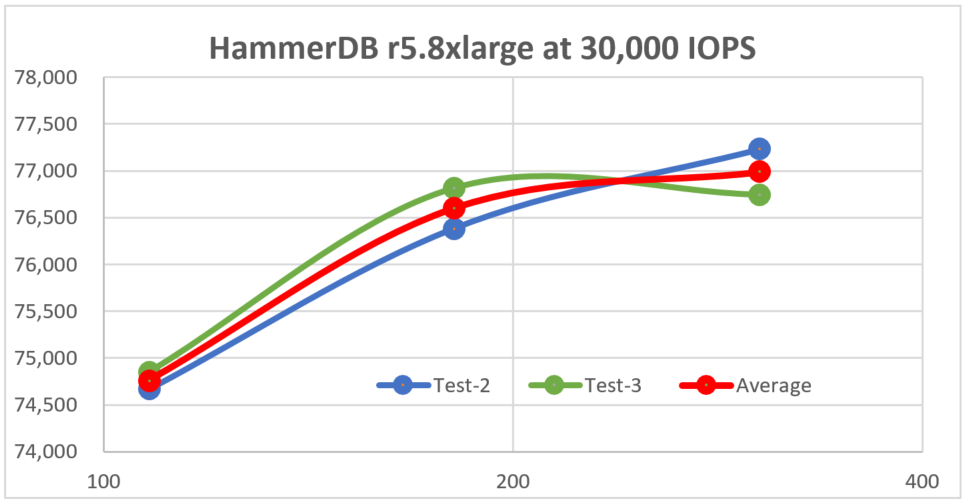

Table 1: R5 instance family IOPS levels

To satisfy the requirement of 30,000 IOPS for our workload, we select an Amazon EC2 r5.8xlarge instance for our SQL Server deployment. We provision an Amazon EBS io2 volume at 30,000 IOPS to hold SQL Server data and log files. We also attach a small 100 GB Amazon EBS gp3 volume to host the operating system and SQL Server binaries. Amazon EBS io2 and gp3 represent the next-generation volumes with enhanced features (like five 9s of durability for io2) and lower cost.

Establishing a performance baseline

For every optimization or re-architecting effort, it is critical to establish a performance baseline so that you can prove that the new solution meets your performance requirements. Performance testing should be repeatable so that you can measure various proposed architectures against the same yardstick. For this blog, we rely on HammerDB, a leading benchmarking and load testing tool, to establish the performance baseline and measure the proposed solutions.

It is important to create a test database significantly larger than the amount of RAM on the test machines; otherwise, with the whole database or a significant part of it cached in RAM, we will not get realistic performance estimates. We created a HammerDB test database using 30,000 warehouses, resulting in a database of about 3 TB, which significantly exceeds the 256 GB of RAM available on an Amazon EC2 r5.8xlarge instance. We also selected the Use All Warehouses option to increase the I/O load on the system, as typical production SQL Server workloads are I/O-bound.
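As a rough sanity check of this sizing rule, the sketch below estimates the largest share of the test database that could fit in RAM. The database and RAM sizes are the figures quoted in this post. Treating 1 TB as 1,024 GB and ignoring the memory used by the operating system and SQL Server itself are simplifying assumptions, so the result is an upper bound rather than a measured cache hit ratio.

```python
# Upper bound on the share of the test database that can be cached in RAM.
# Sizes come from this post; 1 TB = 1024 GB is an assumption for illustration.

DB_SIZE_GB = 3 * 1024  # "about 3 TB" HammerDB database (30,000 warehouses)


def max_cached_fraction(ram_gb: float, db_size_gb: float = DB_SIZE_GB) -> float:
    """Return the largest fraction of the database that could sit in RAM."""
    return min(1.0, ram_gb / db_size_gb)


if __name__ == "__main__":
    ram_gb = 256  # Amazon EC2 r5.8xlarge
    ratio = DB_SIZE_GB / ram_gb
    print(f"Database is {ratio:.0f}x larger than RAM; "
          f"at most {max_cached_fraction(ram_gb):.1%} of it can be cached")
```

With less than a tenth of the data able to reside in memory, most reads must go to storage, which is the behavior the test is meant to exercise.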

HammerDB enables us to run performance tests with various levels of load, which is defined by the number of “virtual users” accessing the test database simultaneously. Typically, measured performance increases with the increase in the load on the database server, up until the point of saturation. For initial testing, we selected load levels at 108, 181, and 304 virtual users, as measured performance increases slowly with the increase in the workload level.

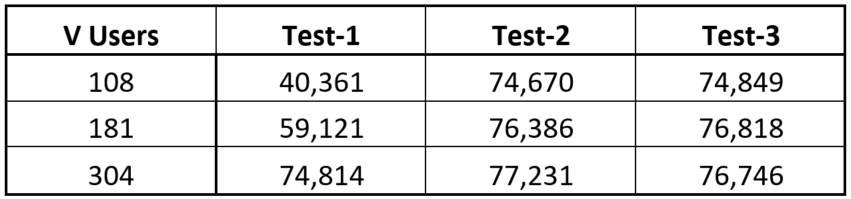

To achieve statistically consistent results, we set each test duration to 10 minutes and ran each test three times. For the rest of the setup, we followed best practices for using HammerDB to evaluate SQL Server performance. In our tests, we captured performance values in database-independent new orders per minute (NOPM) units. The results of our preliminary performance tests are presented in Table 2.

Table 2: Initial test results

We plotted the Table 2 data in the chart presented in Figure 2. The first series of tests showed much lower performance than the subsequent tests. We attribute this to the fact that, during the first series of tests, SQL Server is still loading its internal buffers. By the second series it reaches a steady state, so the results of the second and third series are consistent.

Figure 2: HammerDB performance results for r5.8xlarge at 30,000 IOPS

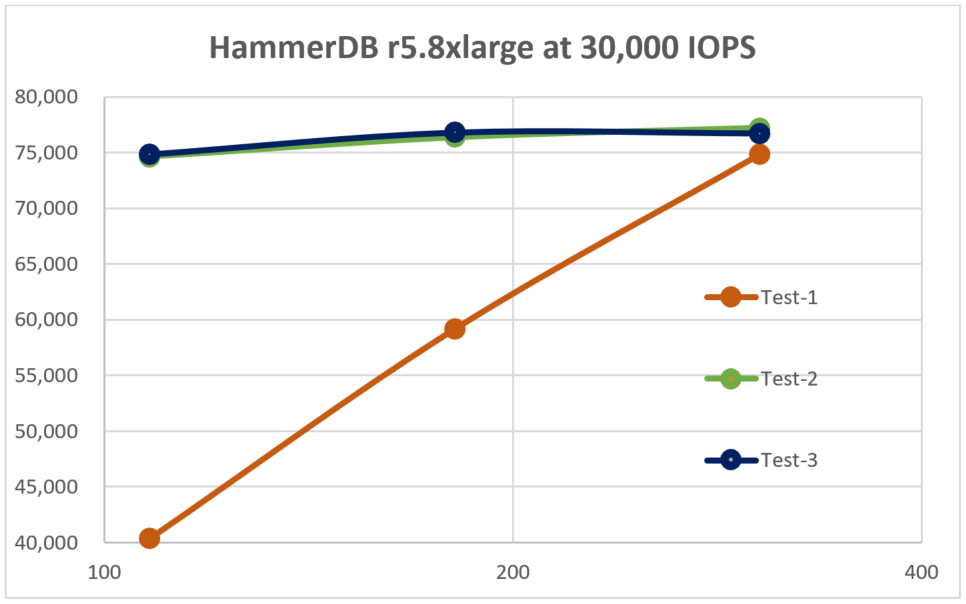

The chart in Figure 3 shows the results of the second and third series, in addition to their average, at a larger vertical axis scale. The performance gain from increasing the workload level plateaus at 304 virtual users, where the load saturates SQL Server. Thus, we use 304 virtual users as the baseline workload in subsequent tests.

Figure 3: HammerDB performance results for the last two series on r5.8xlarge at 30,000 IOPS

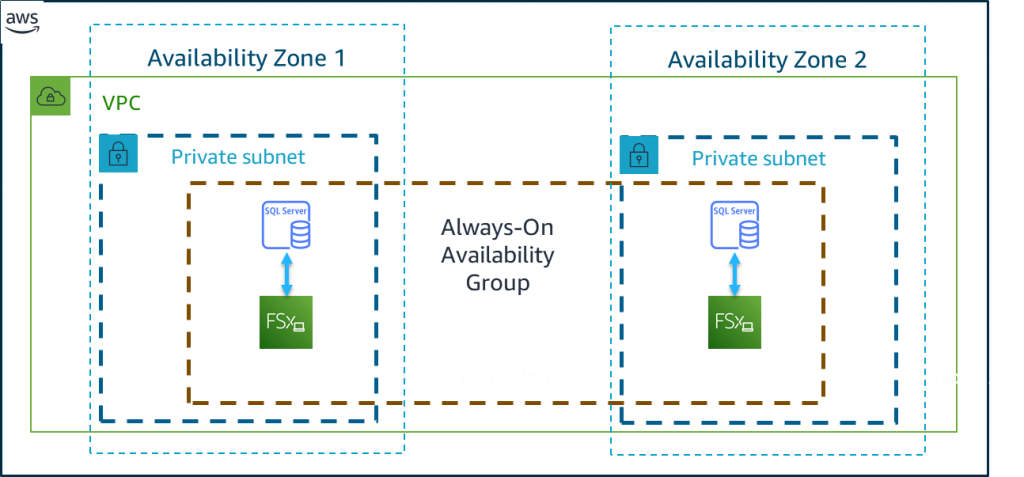

For the rest of the analysis, we configured HammerDB to run five tests with the load level set to 304 users. The first two tests are discarded, and the results of the subsequent three tests are averaged to establish the performance baseline. The results of these tests and the plot of the data are presented in Table 3 and Figure 4, respectively. These tests show that each individual node in the SQL Server environment presented in Figure 1 can provide a performance level of 77,670 NOPM. We use this value to compare with the performance levels achieved by other architectures.

During these tests, CPU utilization stayed consistently low, confirming that the SQL Server workload is IO-bound.
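The baseline procedure described above (five runs, discard the first two, average the rest) can be sketched as follows. This is a minimal illustration, not the tooling used for this post: the function names are hypothetical, the sample NOPM values are placeholders rather than measurements, and the 5% tolerance used for comparing a candidate architecture is an assumed default.

```python
from statistics import mean

WARMUP_RUNS = 2  # the first two runs are discarded while SQL Server warms up


def baseline_nopm(run_results: list[float], warmup_runs: int = WARMUP_RUNS) -> float:
    """Average NOPM over the runs that remain after dropping the warm-up runs."""
    steady_state = run_results[warmup_runs:]
    if not steady_state:
        raise ValueError("need at least one run after the warm-up runs")
    return mean(steady_state)


def within_tolerance(candidate: float, baseline: float, tolerance: float = 0.05) -> bool:
    """True if the candidate is no more than `tolerance` below the baseline."""
    return candidate >= baseline * (1.0 - tolerance)


if __name__ == "__main__":
    # Placeholder NOPM values for illustration only, NOT measurements from this post.
    runs = [50_000, 70_000, 80_000, 81_000, 79_000]
    baseline = baseline_nopm(runs)  # mean of the last three runs
    print(f"Baseline: {baseline:,.0f} NOPM")
    print("Candidate acceptable:", within_tolerance(77_000, baseline))
```

Keeping the warm-up count and tolerance as parameters makes it easy to apply the same yardstick to every architecture under test.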

Table 3: Baseline performance test results

Figure 4: Baseline performance test results

Optimizing cost using the Amazon EC2 r5b family

For our initial deployment, we selected an Amazon EC2 r5.8xlarge instance to host SQL Server because of the requirement to provide sustained throughput of 30,000 IOPS to Amazon EBS. What about other instance families? At re:Invent 2020, a new instance family, r5b, was introduced which offers three times higher throughput to Amazon EBS storage. Table 4 lists instances of various sizes in the r5b family and respective supported IOPS level. From this table, we can see that r5b.4xlarge can support the required 30,000 IOPS level.

Again, we use HammerDB to test SQL Server performance on an r5b.4xlarge instance with the same Amazon EBS volume provisioned at 30,000 IOPS. Our initial test showed slightly lower performance. We attribute this to the much lower amount of RAM available on r5b.4xlarge (128 GB vs. 256 GB) and, thus, a lower cache hit ratio and more frequent buffer flushing.

Table 4: r5b instance family IOPS levels.

The r5b.4xlarge instance supports up to 43,333 IOPS, so what if we increase Provisioned IOPS on our EBS volume? Table 5 captures performance testing results for SQL Server deployed on a r5b.4xlarge instance with Amazon EBS volume provisioned at 36,000 IOPS, that is 20% higher than in the original configuration. When compared, the data in Table 3 and in Table 5 show that a SQL Server deployed on r5b.4xlarge with Amazon EBS volume provisioned at 20% higher IOPS can provide the same level of performance as SQL Server on r5.8xlarge instance.

Table 5: r5b.4xlarge at 36,000 IOPS performance test results

Let us see how this change affects the cost. By going from r5.8xlarge with an EBS volume at 30,000 IOPS to r5b.4xlarge with a volume provisioned at 36,000 IOPS, we reduce the monthly cost per AO-AG node from $13,764.24 to $8,557.44. The total monthly savings for a 2-node cluster are $10,413.60, or in other words, a 38% cost reduction! The savings come primarily from switching from an r5.8xlarge instance to an r5b.4xlarge instance. Doing so enables us to cut the number of vCPUs from 32 to 16, drastically reducing SQL Server Enterprise Edition license costs!
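The savings arithmetic can be reproduced with a few lines of Python. The per-node prices below are the figures quoted in this post; the function name is hypothetical, and `Decimal` is used only to avoid floating-point rounding on currency values.

```python
from decimal import Decimal

NODES = 2  # two-node AO-AG cluster

# Monthly cost per AO-AG node, as quoted in this post (USD).
R5_8XLARGE_NODE = Decimal("13764.24")  # r5.8xlarge + EBS at 30,000 IOPS
R5B_4XLARGE_NODE = Decimal("8557.44")  # r5b.4xlarge + EBS at 36,000 IOPS


def cluster_savings(before: Decimal, after: Decimal,
                    nodes: int = NODES) -> tuple[Decimal, Decimal]:
    """Return (monthly savings for the whole cluster, savings in percent)."""
    per_node = before - after
    percent = per_node / before * 100
    return per_node * nodes, percent


if __name__ == "__main__":
    savings, percent = cluster_savings(R5_8XLARGE_NODE, R5B_4XLARGE_NODE)
    # prints "Cluster savings: $10,413.60 per month (38%)"
    print(f"Cluster savings: ${savings:,.2f} per month ({percent:.0f}%)")
```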

Optimizing cost using Amazon FSx for Windows File Server

Can we further reduce cost by going to an even smaller instance in the Amazon EC2 r5b family? Unfortunately not: the r5b.2xlarge can scale up to 43,333 IOPS, but only in short-term "burst" mode. The sustained IOPS level for this instance is only 21,667 IOPS, which is not enough for this workload.
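The selection logic used so far (pick the instance with the fewest vCPUs whose sustained, not burst, IOPS covers the requirement) can be sketched as below. The candidate list contains only instances discussed in this post; the class and function names are hypothetical, and a real selection would draw on the full instance tables in the Amazon EC2 documentation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InstanceOption:
    name: str
    vcpus: int
    sustained_iops: int  # baseline EBS IOPS, not the short-term burst figure


# Only the instances discussed in this post; not a complete catalog.
CANDIDATES = [
    InstanceOption("r5.8xlarge", vcpus=32, sustained_iops=30_000),
    InstanceOption("r5b.4xlarge", vcpus=16, sustained_iops=43_333),
    InstanceOption("r5b.2xlarge", vcpus=8, sustained_iops=21_667),
]


def smallest_sufficient(candidates: list[InstanceOption],
                        required_iops: int) -> Optional[InstanceOption]:
    """Return the candidate with the fewest vCPUs that sustains required_iops."""
    eligible = [c for c in candidates if c.sustained_iops >= required_iops]
    return min(eligible, key=lambda c: c.vcpus, default=None)


if __name__ == "__main__":
    for required in (30_000, 36_000):
        choice = smallest_sufficient(CANDIDATES, required)
        print(required, "->", choice.name if choice else "no match")
```

Ranking by vCPU count reflects the fact that SQL Server Enterprise Edition licensing, the dominant cost here, scales with the number of vCPUs.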

But what if we separate compute and storage requirements by offloading storage to Amazon FSx for Windows File Server (Amazon FSx)? Amazon FSx provides fully managed, highly reliable, and scalable file storage that is accessible over the industry-standard Server Message Block (SMB) protocol. It offers Single-AZ and Multi-AZ deployment options, fully managed backups, and encryption of data at rest and in transit. By offloading I/O to Amazon FSx, we now have an option to scale down our compute node instance without affecting storage throughput.

For this step, we selected instances in the r5dn family, which provides enhanced networking throughput. This feature is important as we swapped access to Amazon EBS for network access to Amazon FSx. This family of instances also provides locally attached fast Non-Volatile Memory Express (NVMe) storage, which we use to store TempDB and extend SQL Server buffer pool to compensate for lower amount of RAM on smaller instances. Details for the r5dn family are provided in Table 6.

Table 6: r5dn instance family.

As we saw in the case of r5b, to achieve the same SQL Server performance from the smaller r5b.4xlarge instance we had to increase Amazon EBS IOPS by 20%. As we plan to scale down instance size even further to r5dn.2xlarge, we decided to configure Amazon FSx with 14 TB of storage at 1024 MB/s, providing roughly 40,000 IOPS. With Amazon FSx for Windows File Server as the storage layer, our new architecture resembles the following:

Figure 5: SQL Server Always-On Availability Group with storage in Amazon FSx

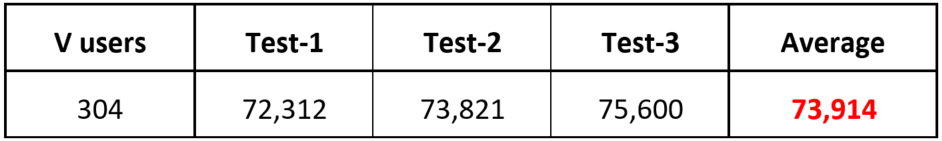

We ran our tests on an r5dn.2xlarge instance using the same HammerDB setup and the same 30,000-warehouse database. Our performance test results are presented in Table 7:

Table 7: Performance Test Results for r5dn.2xlarge over Amazon FSx

The r5dn.2xlarge instance with 8 vCPUs and 64 GB of RAM, running over Amazon FSx as the storage layer, achieved a level of performance within just a few percentage points of the original configuration. Keep in mind that the original configuration used an r5.8xlarge instance with 32 vCPUs and 256 GB of RAM! With this change, our monthly cost per AO-AG node came down to $2,954.28 for the compute component plus $4,097.84 for Amazon FSx, for a total per-node monthly cost of $7,052.12. If we compare this with the initial per-node cost of $13,764.24, our monthly savings for a 2-node cluster reach $13,424.24, which represents 49% cost savings!
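To see the three configurations side by side, the following sketch sums the compute and storage components for the Amazon FSx option and compares every configuration against the original one. All prices are the per-node monthly figures quoted in this post; the structure and names are illustrative assumptions.

```python
from decimal import Decimal

NODES = 2  # two-node AO-AG cluster
BASELINE = Decimal("13764.24")  # r5.8xlarge + EBS at 30,000 IOPS, per node (USD)

# r5dn.2xlarge compute component plus Amazon FSx component, per node (USD).
FSX_NODE = Decimal("2954.28") + Decimal("4097.84")

CONFIGS = {
    "r5.8xlarge + EBS (30,000 IOPS)": BASELINE,
    "r5b.4xlarge + EBS (36,000 IOPS)": Decimal("8557.44"),
    "r5dn.2xlarge + Amazon FSx": FSX_NODE,
}


def summarize(configs: dict[str, Decimal], baseline: Decimal = BASELINE,
              nodes: int = NODES) -> list[tuple[str, Decimal, Decimal, Decimal]]:
    """Return (name, per-node cost, cluster savings, savings %) for each config."""
    rows = []
    for name, per_node in configs.items():
        saved = (baseline - per_node) * nodes
        percent = (baseline - per_node) / baseline * 100
        rows.append((name, per_node, saved, percent))
    return rows


if __name__ == "__main__":
    for name, per_node, saved, percent in summarize(CONFIGS):
        print(f"{name:<34} ${per_node:>10,.2f}  "
              f"saves ${saved:>10,.2f}/month ({percent:.0f}%)")
```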

Summary

In this blog, we discussed several techniques to reduce the cost of SQL Server on AWS for a very typical scenario. We started our optimization effort with a SQL Server Enterprise Edition 2-node cluster configured with an Always-On Availability Group using Amazon EC2 r5.8xlarge instances with 32 vCPUs and 256 GB of RAM. From there, we:

- Reviewed switching cluster nodes to the new Amazon EC2 r5b family, which offers three times higher Amazon EBS throughput. For the case analyzed in this blog, this switch resulted in a 38% cost reduction versus the original configuration.

- Implemented separation of compute and storage requirements by offloading storage to Amazon FSx for Windows File Server and switching to even smaller compute instances. This resulted in a 49% cost reduction versus the original configuration.

Our cost optimization results are summarized in the chart in Figure 6:

Figure 6: Results of AO-AG node optimization

In all reviewed cases, the cost savings came from reducing expensive SQL Server Enterprise Edition licenses by moving to instances with fewer vCPUs. If a performance reduction of under 5% is acceptable, moving to r5dn.2xlarge instances with the storage layer supplied by Amazon FSx provides the largest cost reduction, reaching 49%.

Thanks for reading this blog post! Your scenario might be different from the one we analyzed in this blog. However, the details in this blog, analyzing your performance requirements, and checking out the following listed resources can help you achieve cost optimization for your SQL Server deployment on AWS:

- Amazon EC2 families and instances

- Amazon EBS volume types and characteristics

- Amazon FSx for Windows File Server

If you have any comments or questions, please leave them in the comments section.