AWS Partner Network (APN) Blog

Re-Hosting Mainframe Applications to AWS with NTT DATA Services

By Edward Joo, Strategic Advisor at NTT DATA Services

Legacy mainframe applications aren’t typically the first workloads that come to mind when discussing cloud computing. Most mainframe applications have reliably supported business-critical processes for years or even decades, but due to perceived cost and complexity, many organizations have not yet incorporated these venerable workloads into their Amazon Web Services (AWS) strategy.

NTT DATA Services provides a mainframe re-host solution that minimizes application code changes while letting customers benefit from the agility AWS has to offer. Based on 25 years of production experience and more than 1,300 implementations worldwide, our re-hosting reference architecture, migration best practices, and extensive technology feature set streamline mainframe migrations to AWS.

NTT DATA Services is an AWS Partner Network (APN) Advanced Consulting Partner that helps clients navigate and simplify the modern complexities of business and technology, delivering the insights, solutions, and outcomes that matter most to their objectives.

In this post, I will discuss the benefits of re-hosting mainframe applications to AWS, our established mainframe re-hosting technology, and our reference architecture, which addresses the full scope of a migration. Our technology preserves existing business logic, applications, end-user interfaces, and IT skill investments. When implemented through our experienced services team, our approach minimizes disruption, risk, and transition time.

Why Re-Host to AWS?

Although mainframe applications are not natively cloud-aware, re-hosting them on AWS is an excellent way to take advantage of key cloud features such as flexibility and scalability. Whether the driver is a corporate mandate to move workloads to the cloud or simply a desire to reduce operating costs, re-hosting part or all of a mainframe application environment is a great step toward modernization.

Re-hosting opens possibilities to utilize many AWS services and benefits with little or no code modification. The move can also serve as a bridge to longer-term strategic digital transformation initiatives, such as a rewrite or re-architecture of legacy applications to better support new business models.

After a workload is re-hosted to AWS, further analysis can inform a plan for code modifications as part of a long-term goal. Some level of application decomposition will be necessary so that the application can run on distributed instances or containers. With NTT DATA’s Repository and Analyzer tool, application components can be evaluated and then migrated in groups to modern technology platforms and languages such as Java or C#.

NTT DATA Mainframe Re-Hosting Software Components

Mainframe re-hosting software from NTT DATA provides an alternative environment for natively running migrated CICS transactions, IMS applications, batch, and JCL workloads on AWS. We also offer solutions for converting IDMS, Natural, and related application environments to run within our re-hosting solution.

Figure 1 – Mainframe software stack maps to NTT DATA re-host solution components.

The primary components of NTT DATA’s mainframe re-hosting technology include:

NTT DATA Transaction Processing Environment (TPE)

Our TPE software runs re-hosted online CICS transactions, IMS Transaction Manager (TM) applications, transformed IDMS DC programs, and related resources on common servers and operating systems such as Red Hat Linux. As with other advanced transaction processing systems, NTT DATA TPE software manages application resources like programs, files, queues, transactions, screens, and terminals to provide a robust execution environment for business applications. This software includes support for a variety of client devices, including 3270 SNA, TN3270, ECI, EPI, and Java technology-based clients. CICS Client and Universal Client products are also supported.

Figure 2 – TPE components along with interfaces and integration options.

NTT DATA Batch Processing Environment (BPE)

Our BPE software provides a complete Job Entry Subsystem (JES) environment for the administration, execution, and management of batch workloads on virtualized servers. It supports concepts such as job step-level management, workload classes, and priorities, as well as file types such as COBOL, VSAM, concatenated datasets, and Generation Data Groups (GDGs). This software also includes facilities for migrating JCL job streams.
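Workload classes and priorities are easiest to picture as a set of queues. The Python sketch below is a generic, hypothetical model of JES-style job selection, not BPE's implementation: each initiator serves an ordered list of job classes, and within a class the highest-priority job is selected first, with submission order breaking ties. The class names, priorities, and job names are made up.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class _QueuedJob:
    sort_key: tuple                      # (-priority, submission sequence)
    name: str = field(compare=False)
    job_class: str = field(compare=False)


class JobQueue:
    """Hypothetical JES-style input queue: jobs wait per class, and within a
    class the highest priority runs first (FIFO among equal priorities)."""

    def __init__(self):
        self._queues = {}                # job class -> heap of _QueuedJob
        self._seq = itertools.count()    # tie-breaker preserving submission order

    def submit(self, name, job_class, priority):
        # heapq is a min-heap, so the priority is negated to pop the highest first.
        entry = _QueuedJob((-priority, next(self._seq)), name, job_class)
        heapq.heappush(self._queues.setdefault(job_class, []), entry)

    def next_job(self, initiator_classes):
        """Return the next job for an initiator serving the given classes,
        checked in the initiator's class order, or None if nothing is eligible."""
        for job_class in initiator_classes:
            queue = self._queues.get(job_class)
            if queue:
                return heapq.heappop(queue).name
        return None
```

For example, after submitting PAYROLL1 (class A, priority 5), REPORT01 (class B, priority 9), and PAYROLL2 (class A, priority 8), an initiator serving classes A then B would pick PAYROLL2, PAYROLL1, and REPORT01 in that order, even though REPORT01 has the highest priority overall, because class order is checked before priority.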

Figure 3 – BPE components along with interfaces and integration options.

NTT DATA Transaction Security Facility (TSF)

TSF provides the administration and runtime services of an External Security Manager (ESM) for TPE software environments. With a role-based access control security model, an inclusive permissions model, multiple user profile choices, and adaptable hierarchical relationship options, TSF delivers fine-grained security protection.
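To make the role-based model concrete, here is a minimal, generic RBAC sketch in Python with role inheritance. It is an illustration under assumptions, not TSF's data model or API: the role names, the transaction IDs used as resources, and the deny-unless-granted rule are hypothetical choices.

```python
class AccessModel:
    """Hypothetical role-based access control with role inheritance.
    A user may perform an action on a resource only if some role reachable
    from the user's assigned roles explicitly grants it."""

    def __init__(self):
        self.role_permissions = {}  # role -> set of (resource, action)
        self.role_parents = {}      # role -> set of roles it inherits from
        self.user_roles = {}        # user -> set of directly assigned roles

    def grant(self, role, resource, action):
        self.role_permissions.setdefault(role, set()).add((resource, action))

    def inherit(self, role, parent):
        self.role_parents.setdefault(role, set()).add(parent)

    def assign(self, user, role):
        self.user_roles.setdefault(user, set()).add(role)

    def _effective_roles(self, user):
        # Walk the role hierarchy; 'seen' also guards against cycles.
        seen, stack = set(), list(self.user_roles.get(user, ()))
        while stack:
            role = stack.pop()
            if role not in seen:
                seen.add(role)
                stack.extend(self.role_parents.get(role, ()))
        return seen

    def is_allowed(self, user, resource, action):
        return any((resource, action) in self.role_permissions.get(role, set())
                   for role in self._effective_roles(user))
```

In this sketch a "supervisor" role that inherits from "teller" can run everything a teller can plus its own grants, while a teller cannot run supervisor-only transactions.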

NTT DATA TPE/BPE Manager

TPE/BPE Manager provides a graphical window into TPE software regions and BPE subsystems, allowing operators to view and monitor system events and performance. The TPE/BPE Manager software’s interface provides centralized, real-time information for determining system status, processing rates, potential bottlenecks, and configuration enhancements that can aid in ensuring performance levels and user response times.

NTT DATA Enterprise COBOL

Our COBOL technology includes a compiler, a runtime environment, a graphical source-level debugger, and JISAM, a 100 percent portable indexed file system. Because neither the file handling nor the files themselves are machine specific, JISAM files can be moved to any system that can run Java. Our COBOL technology supports the ANSI-85 standard and legacy COBOL dialects, and complies with the standard EXTFH (external file handler) interface.

Migration Approach

Migrating legacy mainframe workloads to AWS can be a complex task. NTT DATA’s five-step methodology is designed to proactively mitigate risk, take a holistic approach to the complete environment, and allow for the managed sharing of migration responsibility based on the specific project requirements.

Every mainframe migration project we manage begins with the Discovery and Design phases, which analyze all the factors affecting a migration or modernization project and help build a detailed plan for smooth and efficient execution. It’s important to use a methodology that begins with a full understanding of the project’s feasibility and impact on the business. We begin by getting a clear picture of clients’ applications, infrastructure, interfaces, and processes.

This comprehensive inventory of business and technical assets, and their interdependencies, provides insight into how the legacy applications are currently meeting organizational objectives. A detailed software mapping exercise to replace certain mainframe functions, such as scheduling and message queuing, is also required.

Figure 4 – Overview of NTT DATA’s mainframe re-hosting methodology process.

In general, mainframe application and data migration involves the following aspects:

Migrating Programs and JCL

Most COBOL programs can be migrated with limited changes and recompiled for a distributed operating environment. Code changes are typically needed where a program uses hexadecimal constants that assume EBCDIC encoding or relies on print control characters. Applications written in C, C++, and Java can also be executed in this environment.
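The EBCDIC issue is easy to see with Python's built-in `cp037` codec (IBM EBCDIC, US/Canada). This is a sketch assuming cp037 as the source code page; the actual mainframe may use another EBCDIC code page such as cp500 or cp1140. It shows that a hexadecimal literal for the same character differs between EBCDIC and ASCII, and that byte-wise ordering of letters and digits is reversed.

```python
# Sketch: why EBCDIC-dependent hex constants break after re-hosting.
# cp037 (IBM EBCDIC, US/Canada) is assumed as the mainframe code page.


def hex_of(text: str, encoding: str) -> str:
    """Return the bytes of 'text' in 'encoding' as an uppercase hex string,
    i.e. what a COBOL X'..' literal for that text would need to contain."""
    return text.encode(encoding).hex().upper()


def collate(keys, encoding: str):
    """Sort keys by their raw byte values under the given encoding
    (a binary collating sequence)."""
    return sorted(keys, key=lambda k: k.encode(encoding))


print(hex_of("A", "cp037"))  # C1: a literal X'C1' means "A" on the mainframe
print(hex_of("A", "ascii"))  # 41: the same character on an ASCII platform

keys = ["A1", "1A", "B2"]
print(collate(keys, "cp037"))  # ['A1', 'B2', '1A']: letters sort before digits
print(collate(keys, "ascii"))  # ['1A', 'A1', 'B2']: digits sort before letters
```

A program that compares a field against X'C1', or that depends on letters sorting ahead of digits, therefore behaves differently after an ASCII re-host unless the constant or the logic is adjusted.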

NTT DATA BPE can run existing job control language (JCL) with limited modifications. For instance, modifications are necessary when JCL uses mainframe-specific constructs or utilities that have no equivalent on Linux. JCL references to datasets, libraries, and Generation Data Groups (GDGs) are then mapped to the new directories and file names in the target platform’s file system, where the migrated resources reside.
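Relative GDG references in JCL, such as `PROD.PAYROLL(0)` for the current generation or `PROD.PAYROLL(+1)` for a new one, have to resolve to concrete files after migration. Below is a minimal sketch, assuming a hypothetical layout in which each generation is stored as one file named after its absolute generation name (`base.GnnnnVnn`) under a `/data/gdg` directory; BPE's actual mapping rules may differ.

```python
import re
from pathlib import PurePosixPath


def resolve_gdg(base, relative, existing, root="/data/gdg"):
    """Resolve a relative GDG reference BASE(relative) to a file path.

    existing: absolute generation names already present, e.g.
              ["PROD.PAYROLL.G0001V00", "PROD.PAYROLL.G0002V00"].
    The root directory and one-file-per-generation layout are assumptions.
    """
    pattern = re.compile(re.escape(base) + r"\.G(\d{4})V(\d{2})")
    generations = {}
    for name in existing:
        match = pattern.fullmatch(name)
        if match:
            generations[int(match.group(1))] = name
    ordered = sorted(generations)

    if relative > 0:
        # New generation(s): numbered past the newest one, version V00.
        newest = ordered[-1] if ordered else 0
        dsn = f"{base}.G{newest + relative:04d}V00"
    else:
        # (0) is the newest existing generation, (-1) the one before it, ...
        index = len(ordered) - 1 + relative
        if index < 0:
            raise LookupError(f"{base}({relative}) does not exist")
        dsn = generations[ordered[index]]
    return str(PurePosixPath(root) / dsn)
```

With generations G0001 through G0003 present, `(0)` resolves to `/data/gdg/PROD.PAYROLL.G0003V00`, `(-1)` to G0002, and `(+1)` to a new G0004 file. Generation-number wraparound after G9999 and GDG limits are ignored to keep the sketch short.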

Migrating Data

Data stored in VSAM files as well as IMS databases (DB), IDMS, Adabas, and DB2 databases can be migrated to AWS. In addition to supporting database technologies on Amazon Elastic Compute Cloud (Amazon EC2) instances or Amazon Relational Database Service (Amazon RDS), including Amazon Aurora, our technologies provide VSAM file services.

Since IMS DB is a hierarchical database, its structure is translated to a relational database environment before the data can be migrated. If done manually, this process can be time intensive and error prone. By leveraging the NTT DATA H-RDB automated migration tools, we save time and effort during migration projects and deliver current relational database designs that are optimized for system performance.
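The core of any hierarchical-to-relational translation is turning parent/child segment relationships into foreign keys. The sketch below shows that generic technique, not the H-RDB tools: each segment type becomes a table, each segment occurrence gets a surrogate key, and child rows carry the key of their parent. The record layout and the segment and field names (CUSTOMER, ORDER, ITEM) are hypothetical.

```python
from itertools import count


def flatten(segment, tables=None, parent=None, ids=None):
    """Flatten one hierarchical record into relational rows.

    segment: {"type": str, "fields": dict, "children": [segment, ...]}
    Returns {table name: [row, ...]}. Every row gets a surrogate "id";
    non-root rows also get "<parent type>_id" referencing their parent row.
    """
    tables = {} if tables is None else tables
    ids = count(1) if ids is None else ids
    row_id = next(ids)
    row = {"id": row_id, **segment["fields"]}
    if parent is not None:
        parent_type, parent_id = parent
        row[f"{parent_type.lower()}_id"] = parent_id  # foreign key to parent
    tables.setdefault(segment["type"], []).append(row)
    for child in segment.get("children", []):
        flatten(child, tables, (segment["type"], row_id), ids)
    return tables


record = {
    "type": "CUSTOMER", "fields": {"name": "ACME"},
    "children": [
        {"type": "ORDER", "fields": {"order_no": "A100"},
         "children": [{"type": "ITEM", "fields": {"sku": "X1", "qty": 2}}]},
        {"type": "ORDER", "fields": {"order_no": "A101"}},
    ],
}
tables = flatten(record)
# CUSTOMER: one row; ORDER: two rows with customer_id=1; ITEM: one row with order_id=2
```

A production translation also has to handle segment keys, repeating groups, and logical relationships, which is where automated tooling saves the most effort.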

NTT DATA Mainframe Re-Hosting Reference Architecture on AWS

NTT DATA’s TPE and BPE re-hosting technologies are available to, and used by, AWS customers. Our Mainframe Re-hosting Reference Architecture (MRRA) showcases a real-world, re-hosted workload running on AWS and is available for demonstrations.

Figure 5 – Logical illustration of a reference architecture example on AWS.

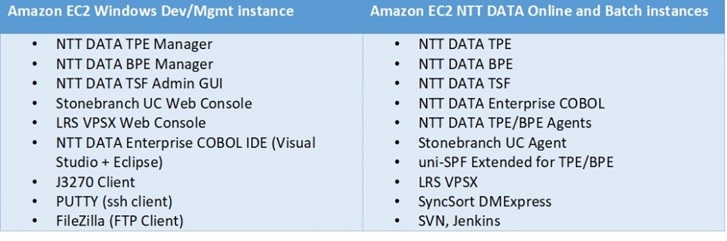

The table in Figure 6 lists the software components of both the development/management instance and the online and batch instances.

Figure 6 – Software components

NTT DATA’s MRRA is composed of a development and management server, application servers running our re-hosting software, and a backend database server for shared data. This reference architecture highlights our re-hosting technologies by demonstrating two key functional areas—operations and development.

The operations view provides a look into the administration and monitoring of the online and batch subsystems. The development view shows a software development lifecycle from creating a new CICS/COBOL project in Visual Studio to deploying and debugging applications.

AWS Load Balancing and Horizontal Scaling

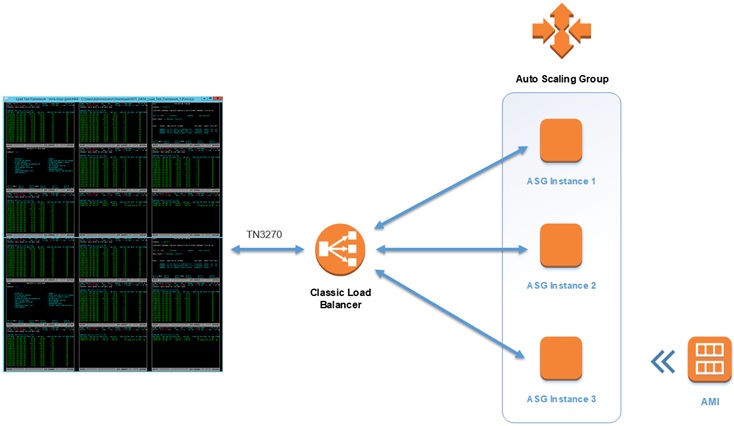

For certain online workloads, our TPE application servers can run behind an AWS load balancer and participate in an AWS Auto Scaling Group. This requires that the application have no affinity to its requestors (clients), so that any instance can serve any request, and that the data the application accesses reside in an AWS shared data store reachable from every instance.

This opens up the possibility of scaling horizontally across many instances, either through AWS Auto Scaling Groups or by manual operator intervention. As shown in Figure 7, client 3270 sessions connect to the AWS load balancer, which then routes traffic to the TPE regions using a round-robin algorithm. This configuration not only adds resiliency to the legacy online application but also allows TPE nodes to be scaled in and out dynamically to meet demand.
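Round-robin routing hands each new session to the next region in turn. The following sketch illustrates the idea, with the added assumption that regions failing a health check are skipped; the region names are hypothetical, and on AWS the load balancer performs this selection itself.

```python
class RoundRobinBalancer:
    """Minimal round-robin router: each new 3270 session goes to the next
    healthy TPE region in turn. Region names are hypothetical."""

    def __init__(self, regions):
        self.regions = list(regions)
        self._next = 0  # index of the region to try first on the next call

    def route(self, healthy):
        # Try each region at most once, skipping those not in 'healthy'.
        for _ in range(len(self.regions)):
            region = self.regions[self._next]
            self._next = (self._next + 1) % len(self.regions)
            if region in healthy:
                return region
        raise RuntimeError("no healthy TPE region available")
```

With regions `tpe-a`, `tpe-b`, and `tpe-c` all healthy, four sessions are routed to a, b, c, and then a again; if `tpe-b` fails its health check, sessions alternate between a and c.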

Figure 7 – Illustration of 3270 connections using an AWS load balancer to route traffic.

As load increases, a new TPE instance can be added automatically or manually to the group to meet demand. The new instance is provisioned from a customized Amazon Machine Image (AMI) and then automatically added into service rotation as soon as it comes online.

Conversely, as load decreases, an instance can be removed from the group. During this process, the load balancer stops sending new traffic to the instance marked for removal and waits for existing connections to drain. Once all connections to the instance are closed or the specified timeout is reached, whichever occurs first, the instance is shut down and terminated.
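The scale-in rule described above, terminating once connections have drained or a timeout expires, whichever comes first, can be sketched as a polling loop. The callbacks, the 300-second timeout, and the 5-second poll interval are assumptions for illustration; on AWS, the load balancer and Auto Scaling carry out this process. The clock and sleep functions are injectable so the logic can be exercised without actually waiting.

```python
import time


def drain_and_terminate(instance, active_connections, terminate,
                        timeout_s=300.0, poll_s=5.0,
                        clock=time.monotonic, sleep=time.sleep):
    """Sketch of scale-in connection draining.

    Assumes new sessions are no longer routed to 'instance'. Waits until it
    has no open connections or the timeout expires, whichever comes first,
    then terminates it. Returns True if it drained cleanly, False on timeout.
    """
    deadline = clock() + timeout_s
    while active_connections(instance) > 0:
        if clock() >= deadline:
            terminate(instance)  # timeout reached with sessions still open
            return False
        sleep(poll_s)
    terminate(instance)          # all connections closed before the timeout
    return True
```

Returning whether the drain completed cleanly lets an operator distinguish a quiet scale-in from one that cut off active 3270 sessions at the timeout.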

Amazon Aurora Configuration

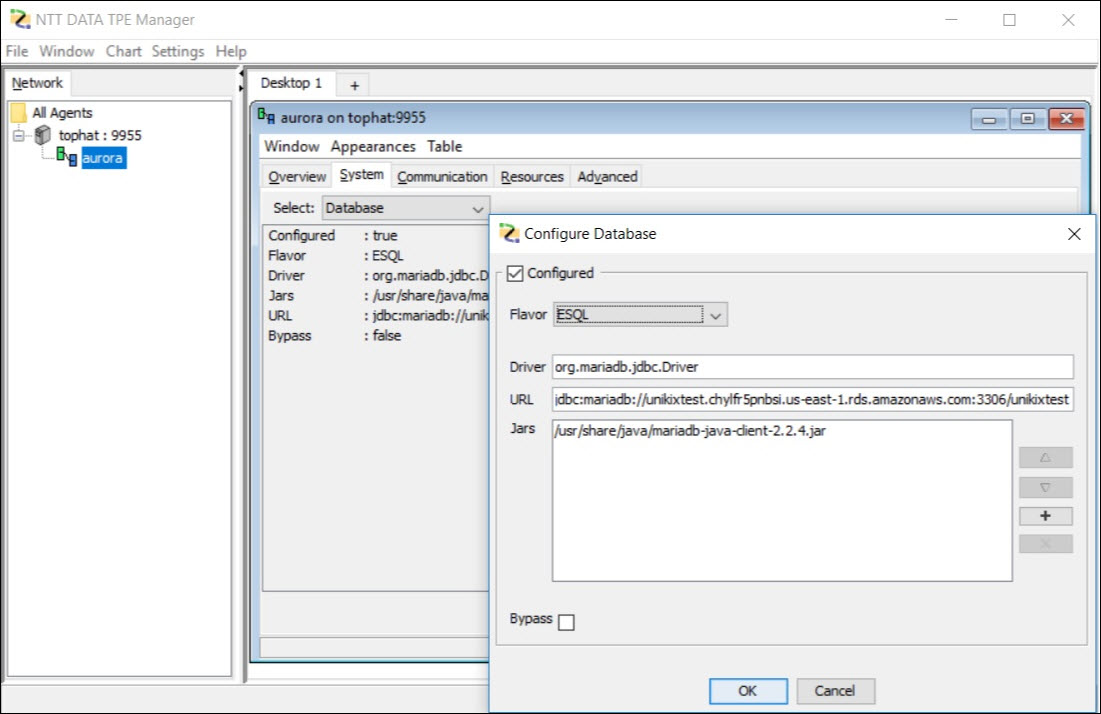

Amazon Aurora is a supported database and can serve as the single relational database for a TPE/BPE deployment on AWS, without requiring any third-party databases.

Aurora is supported through the ESQL/JDBC interface, and only when using NTT DATA Enterprise COBOL. To connect to Aurora MySQL-Compatible Edition, TPE/BPE uses the MariaDB Connector/J JDBC driver. This requires NTT DATA TPE and BPE version 14 or higher and MariaDB Connector/J v2.2 or higher.

For TPE specifically, the region database is configured with ESQL flavor and MariaDB JDBC driver. The Aurora connection string is entered in the URL field with the format: jdbc:mariadb://auroraEndpoint:auroraPort/auroraIdentifier
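As a small illustration of the URL format, the helper below assembles the Connector/J connection string from its three parts. The endpoint and database name in the example are hypothetical, and port 3306 is assumed as the default because it is the standard MySQL port that Aurora MySQL uses unless configured otherwise.

```python
def aurora_jdbc_url(endpoint: str, database: str, port: int = 3306) -> str:
    """Assemble jdbc:mariadb://auroraEndpoint:auroraPort/auroraIdentifier.
    The 3306 default is an assumption (the standard MySQL port)."""
    if not endpoint or not database:
        raise ValueError("endpoint and database identifier are required")
    if not 0 < port < 65536:
        raise ValueError(f"invalid port: {port}")
    return f"jdbc:mariadb://{endpoint}:{port}/{database}"


# Hypothetical cluster endpoint and database name, for illustration only.
url = aurora_jdbc_url("mycluster.cluster-abc123.us-east-1.rds.amazonaws.com", "tpedb")
print(url)
# jdbc:mariadb://mycluster.cluster-abc123.us-east-1.rds.amazonaws.com:3306/tpedb
```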

Figure 8 – TPE region showing Amazon Aurora configuration.

The Aurora database access credentials are stored in the TPE region System Initialization Table (SIT). The details needed are the user name (UserID) and the password (PassWd).

Figure 9 – TPE region System Initialization Table showing RDBMS PassWd and UserID fields.

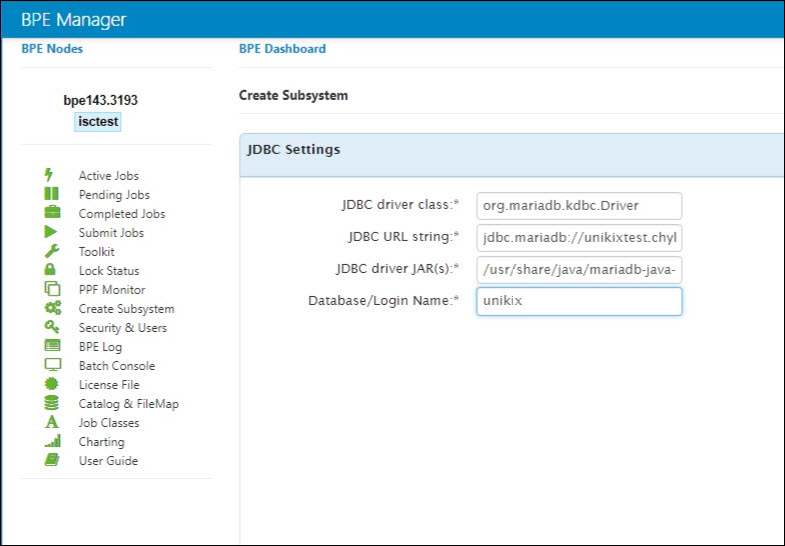

For BPE specifically, the region basic settings are configured with NTT DATA COBOL and JDBC.

Figure 10 – BPE region showing JDBC configuration.

Next, the ESQL JDBC settings are configured with the JDBC driver’s Java information, the URL string (the same one used for TPE), and the database user name.

Figure 11 – BPE region showing JDBC settings.

For the BPE database connection password, either the TPE SIT configuration described earlier is reused (if TPE and BPE are collocated on the same instance), or the environment variables $RDBMS_USERNAME and $RDBMS_USERPASS are set to the Aurora user name and password, respectively, and exported in the subsystem’s $USER_SETUP.
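When the environment-variable option is used, a preflight check can confirm that both variables are exported before jobs are submitted. This Python helper is a hypothetical sketch, not part of BPE; only the variable names RDBMS_USERNAME and RDBMS_USERPASS come from the configuration described above.

```python
import os
from typing import Mapping, Optional, Tuple

REQUIRED = ("RDBMS_USERNAME", "RDBMS_USERPASS")


def bpe_db_credentials(env: Optional[Mapping[str, str]] = None) -> Tuple[str, str]:
    """Hypothetical preflight check: return (user name, password) from the
    environment, failing fast if either variable is unset or empty."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        # Report only the variable names, never the secret values.
        raise RuntimeError("not exported: " + ", ".join(missing))
    return env["RDBMS_USERNAME"], env["RDBMS_USERPASS"]
```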

When a TPE transaction or BPE job is run, database connection messages will be visible in the file “unikixmain.log” when the database user exit makes the connection with the Aurora database. In the sample log below, these database connection messages can be seen during a BPE job execution.

Learn More About NTT DATA Re-Hosting

NTT DATA’s TPE and BPE technology gives customers a platform to confidently run legacy mainframe applications on AWS. Our MRRA on AWS, along with our established migration approach and proven best practices, accelerates migration projects and minimizes risks.

To request a demonstration of the NTT DATA technologies discussed here, or for more information, please contact us.

The content and opinions in this blog are those of the third party author and AWS is not responsible for the content or accuracy of this post.

NTT DATA Services – APN Partner Spotlight

NTT DATA Services is an APN Advanced Technology Partner. They help clients navigate and simplify the modern complexities of business and technology, delivering the insights, solutions, and outcomes that matter most. As a division of NTT DATA Corporation, they wrap deep industry expertise around a comprehensive portfolio of infrastructure, applications, and business process services.
