AWS for M&E Blog

Lower latency with AWS Elemental MediaStore chunked object transfer

Reducing the latency of live streams provides a better experience for audiences and, in some cases, is essential to what the live stream application provides. For live sports, you want viewers to see the action with as little delay as possible. For gaming, auctions, and other live video use cases, having the lowest latency possible is essential to the success of the service.

In a previous series of posts, we detailed how to compete with broadcast latency using current adaptive bitrate (ABR) technologies. In April, AWS Elemental MediaStore announced support for workflows that use chunked object transfer to deliver objects; this means you can improve the end-to-end or “glass-to-glass” latency while also reducing the trade-offs that the current ABR technology usually demands for low-latency workflows.

This support enables ultra-low latency end-to-end workflows for over-the-top (OTT) video when using chunked CMAF or transport streams encoded for DASH or HLS distribution.

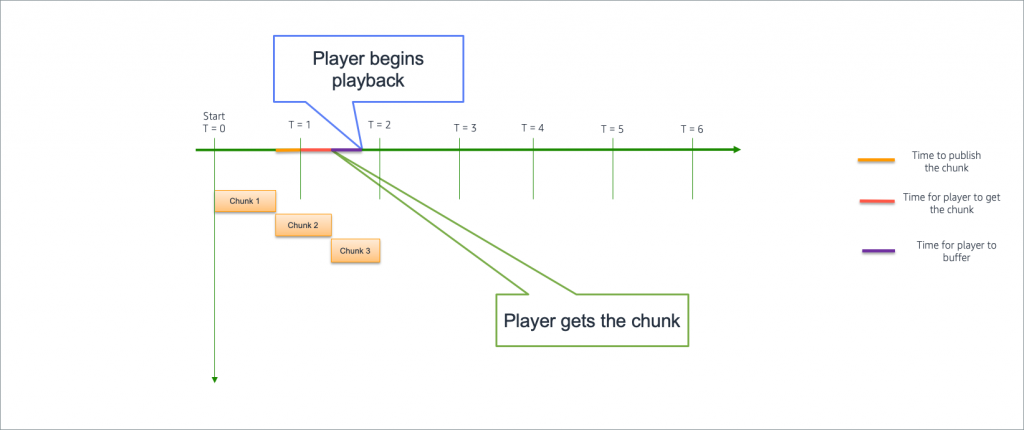

When using chunked object transfer to deliver segmented objects, your video segments are split into smaller chunks that can be played before the complete segment is delivered. Video players begin playback by requesting the video from a CDN, such as Amazon CloudFront, which in turn starts downloading the beginning of a video segment from MediaStore while the encoder is still in the process of writing the end of that same segment.

For example, consider a workflow that uses MediaStore and CloudFront to deliver video with Apple’s HTTP Live Streaming (HLS) delivery protocol using six-second video segments. With chunked object transfer, you no longer need to wait for the complete six-second segment to be written to MediaStore before you begin delivery to clients supporting chunked transfer coding delivery. As chunked segments are written to MediaStore, chunks within the segment immediately become available for delivery via CloudFront to viewers on the internet. This reduces the end-to-end latency, measured from source to screen, without lowering bitrates or shortening the video segment duration, which can reduce video quality and increase the likelihood of rebuffering.
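As a rough back-of-the-envelope illustration of the six-second example above, the following sketch compares how much media must be written to the origin before delivery of a segment can start. The 0.5-second chunk duration is an assumed value (this post does not specify one), and the model deliberately ignores encoding, network, CDN, and player buffer delays.

```python
from typing import Optional


def earliest_delivery_delay(segment_seconds: float,
                            chunk_seconds: Optional[float] = None) -> float:
    """Seconds of media the origin must receive before delivery can begin.

    Without chunking, the whole segment has to be written first.
    With chunking, only the first chunk has to be written.
    """
    if chunk_seconds is None:
        return segment_seconds
    if not 0 < chunk_seconds <= segment_seconds:
        raise ValueError("chunk duration must be in (0, segment duration]")
    return chunk_seconds


segment = 6.0  # six-second HLS segments, as in the example above
chunk = 0.5    # hypothetical chunk duration, chosen only for illustration

print("whole-segment delivery:", earliest_delivery_delay(segment), "s")
print("chunked delivery:      ", earliest_delivery_delay(segment, chunk), "s")
```

The segment duration stays at six seconds in both cases; only the unit that has to be complete before delivery changes.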

Overview

Prerequisites:

- An encoder that is capable of packaging segments into chunks

- An origin that supports chunked transfer coding

- A player that supports chunked transfer coding playback

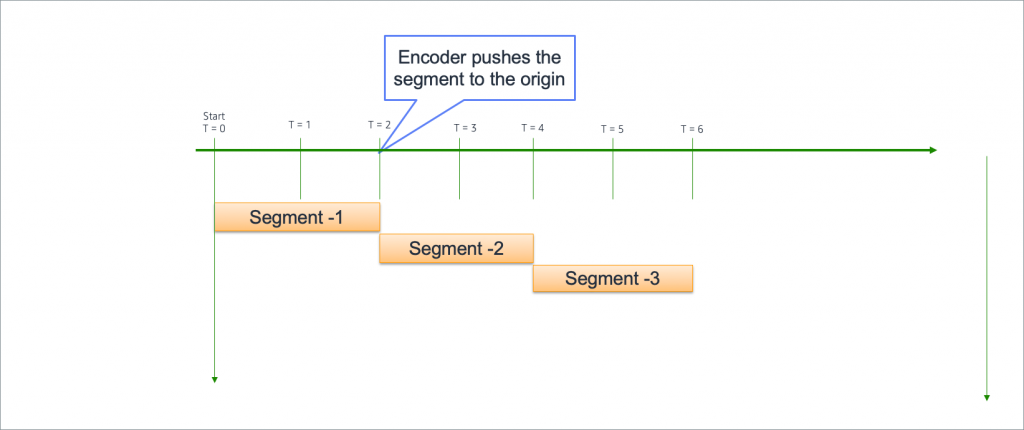

With traditional segmented HTTP video delivery, the encoder completes an entire segment before pushing it to the origin. When optimizing for low latency, this has drawbacks: it forces the encoder to use shorter segment durations, which compromises encoder efficiency and video quality. You also cannot use segments shorter than one second in duration, because encoders need a minimum buffer size.

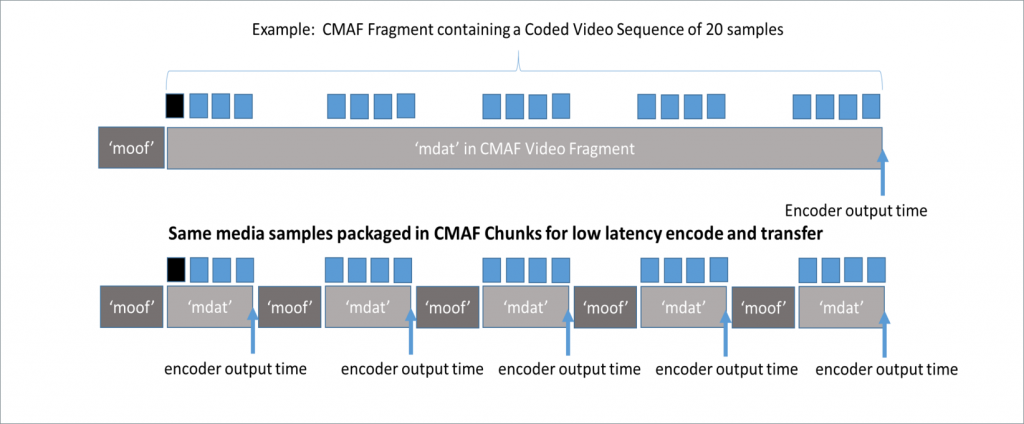

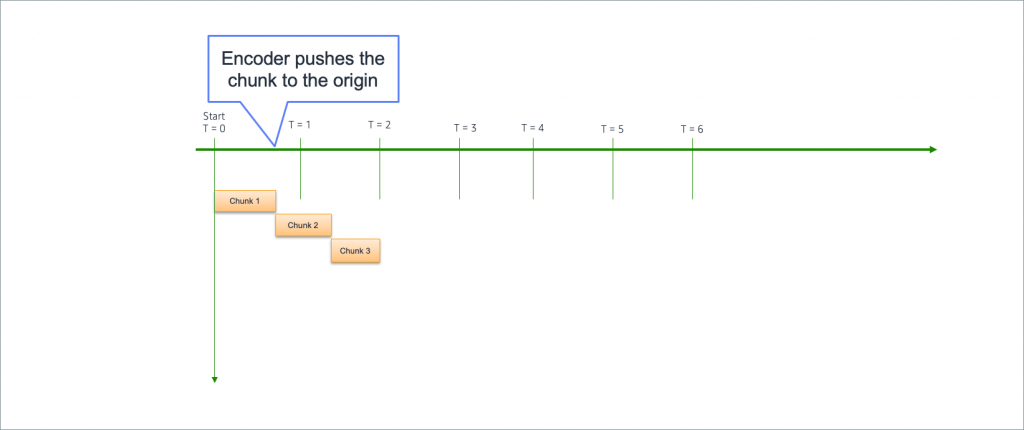

When using chunked transfer coding for segmented video delivery, the segments are produced as normal and are then packaged into chunks. Each chunk is playable without the full segment. This is one of the essential properties that make the ultra-low latency architecture described later work. Playable video chunks can move across the video workflow as soon as they are ready, instead of having to wait for a complete segment to be delivered at each step in the workflow. Fig. 1 shows the breakdown of chunks into metadata (moof) and video payload (mdat), which make each chunk playable. The CMAF specification allows the video payload to then be used for DASH or HLS delivery to clients.
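To make the moof/mdat structure concrete, here is a minimal sketch that walks the top-level ISO BMFF boxes of a segment and groups each `moof` with the `mdat` that follows it. The helper names (`iter_boxes`, `split_chunks`, `make_box`) are hypothetical, and the sample segment is built from placeholder bytes rather than real video.

```python
import struct
from typing import Iterator, List, Tuple


def iter_boxes(data: bytes) -> Iterator[Tuple[str, int, int]]:
    """Yield (type, offset, size) for each top-level ISO BMFF box."""
    offset = 0
    while offset + 8 <= len(data):
        # Box header: 32-bit big-endian size, then a 4-character type.
        size, box_type = struct.unpack_from(">I4s", data, offset)
        header = 8
        if size == 1:
            # A 64-bit "largesize" follows the type field.
            if offset + 16 > len(data):
                break
            (size,) = struct.unpack_from(">Q", data, offset + 8)
            header = 16
        elif size == 0:
            # The box extends to the end of the data.
            size = len(data) - offset
        if size < header or offset + size > len(data):
            break  # truncated or malformed box: stop parsing
        yield box_type.decode("ascii", "replace"), offset, size
        offset += size


def split_chunks(data: bytes) -> List[bytes]:
    """Return each moof box together with the mdat box that follows it."""
    chunks = []
    moof_start = None
    for box_type, offset, size in iter_boxes(data):
        if box_type == "moof":
            moof_start = offset
        elif box_type == "mdat" and moof_start is not None:
            chunks.append(data[moof_start:offset + size])
            moof_start = None
    return chunks


def make_box(box_type: bytes, payload: bytes) -> bytes:
    """Build a box with an 8-byte header (placeholder payload, for the demo)."""
    return struct.pack(">I4s", 8 + len(payload), box_type) + payload


# A synthetic segment: a segment type box followed by two moof+mdat pairs.
segment = (
    make_box(b"styp", b"\x00" * 8)
    + make_box(b"moof", b"m1") + make_box(b"mdat", b"video-1")
    + make_box(b"moof", b"m2") + make_box(b"mdat", b"video-2")
)

for i, chunk in enumerate(split_chunks(segment), start=1):
    print(f"chunk {i}: {len(chunk)} bytes")
```

Because each pair carries its own metadata, a downstream component can forward or decode a pair without waiting for the rest of the segment.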

Deep Dive

With traditional segmented video delivery, a segment must be complete before any delivery can commence.

When using chunked transfer encoding, video delivery can start much sooner.

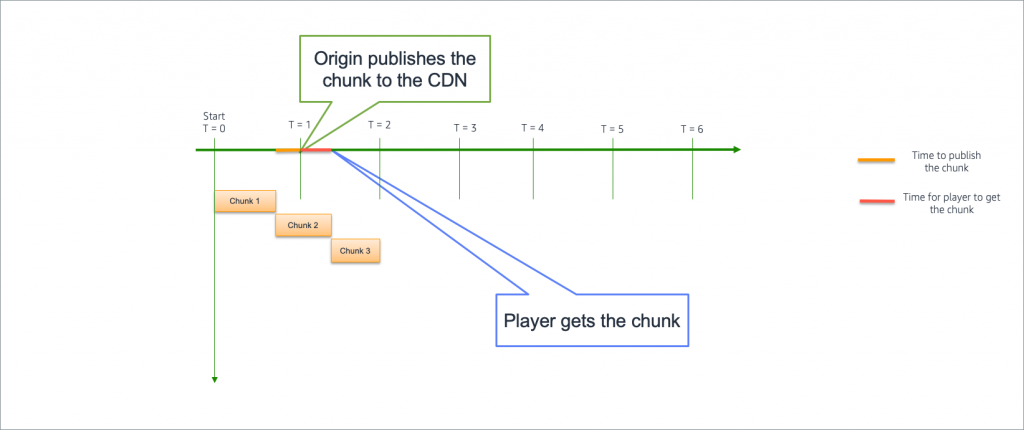

Once the chunk is pushed to MediaStore, it is available for delivery via a CDN like CloudFront. CloudFront can then pull this chunk and collapse the viewer requests for it.

The player can then pull this chunk from CloudFront, create a small buffer to guard against network issues, and begin playback.
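The transport underneath this flow is HTTP/1.1 chunked transfer coding, where each piece of the body is sent as a hexadecimal length line followed by the data, and a zero-length chunk ends the body. The sketch below shows that wire framing only; it is not MediaStore or CloudFront API code, the function names are hypothetical, and in practice an HTTP library handles this framing for you. HTTP chunks also do not have to line up with CMAF chunks; they are aligned here only to keep the example readable.

```python
from typing import Iterable, Iterator


def encode_chunked(parts: Iterable[bytes]) -> bytes:
    """Frame each non-empty part using HTTP/1.1 chunked transfer coding."""
    out = bytearray()
    for part in parts:
        if not part:
            continue  # a zero-length chunk would terminate the body early
        out += f"{len(part):X}\r\n".encode("ascii") + part + b"\r\n"
    out += b"0\r\n\r\n"  # last chunk, with no trailer fields
    return bytes(out)


def decode_chunked(body: bytes) -> Iterator[bytes]:
    """Yield each chunk payload from a complete chunked-coded body."""
    pos = 0
    while True:
        line_end = body.index(b"\r\n", pos)
        # Ignore any chunk extensions after ';' on the size line.
        size_field = body[pos:line_end].split(b";", 1)[0]
        size = int(size_field.decode("ascii").strip(), 16)
        pos = line_end + 2
        if size == 0:
            return
        yield body[pos:pos + size]
        pos += size + 2  # skip the payload and its trailing CRLF


wire = encode_chunked([b"moof+mdat #1", b"moof+mdat #2"])
print(wire)
for payload in decode_chunked(wire):
    print(payload)
```

A receiver can hand each payload on as soon as its length line and data have arrived, which is what lets a CDN or player start working before the sender has finished the object.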

Testing Workflow Setup

To test the workflow, we set up a live stream using Videon’s Shavano product running preview firmware to send chunked HLS CMAF fMP4 into AWS Elemental MediaStore. Videon also offers its new Edgecaster encoder, for which the same firmware is generally available.

We configured the Videon encoder for 2-second segments with a 10-segment window and 2 Mbps encoding. After creating our MediaStore container, we were able to set up the Videon encoder to send chunked HLS CMAF fMP4 to it (this guest post has details on how to set up a Videon encoder to write to a MediaStore container). Amazon CloudFront points to our MediaStore container as the origin for the distribution endpoint.
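For a sense of scale, the encoder settings above work out roughly as follows. This is an approximation that assumes a constant 2 Mbps with decimal megabits and ignores container overhead, audio, and bitrate variation.

```python
SEGMENT_SECONDS = 2        # segment duration from the test setup
WINDOW_SEGMENTS = 10       # segments kept in the playlist window
BITRATE_BPS = 2_000_000    # 2 Mbps, assuming decimal megabits

# Total media duration covered by the playlist window.
window_seconds = SEGMENT_SECONDS * WINDOW_SEGMENTS

# Approximate payload sizes (bits to bytes), excluding any overhead.
segment_bytes = BITRATE_BPS * SEGMENT_SECONDS // 8
window_bytes = segment_bytes * WINDOW_SEGMENTS

print(f"window duration: {window_seconds} s")
print(f"approx. segment size: {segment_bytes / 1_000_000:.1f} MB")
print(f"approx. window size:  {window_bytes / 1_000_000:.1f} MB")
```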

Players that support HLS fMP4 over chunked transfer coding and CMAF are available from CastLabs, NexStream, and TheoPlayer. For this demo, we are using the NexPlayer multi-platform player, which supports Android, iOS, and HTML5.

With the demo setup explained above, we saw under 3 seconds of latency end-to-end. The screenshot shows a latency of under 2 seconds: 57.217 seconds – 55.439 seconds = 1.778 seconds.

Conclusion

Ensuring scalable, reliable, low-latency streaming for OTT video providers gives audiences the best service and lets providers focus on offering premium content and experiences.

“Chunked CMAF (Ultra Low Latency) allows Simplestream to offer its customers another cutting-edge solution to help solve their problems. From live sports to teleshopping – latency is an issue. Now we can eliminate this headache with an end-to-end solution, from ingest through to the end user; with sub 3 second latency!

Gaining access to the AWS [Elemental] Media Store pre-release allowed us to bring this to market for our clients. The requirement was to expand our existing cloud architecture and Amazon CloudFront CDN to support CMAF – opposed to having a new workflow and this is what the team at AWS were able to achieve. We expect to start active delivery of CMAF from April 2019.” – Adam Smith – Founder & CEO – Simplestream Ltd