AWS Partner Network (APN) Blog

Amazon VPC for On-Premises Network Engineers – Part 2

Editor’s note: This is the second of a popular two-part series by Nick Matthews. Read Part 1 >>

By Nick Matthews, Partner Solutions Architect, CCIE #23560

In the previous post on Amazon Virtual Private Cloud (Amazon VPC), we covered the basic anatomy of a VPC and the different ways to connect a VPC to the outside world. In this post, we’ll explain advanced VPC concepts in a way that we hope you’ll be able to relate to as a network engineer.

Creating a New VPC

Let’s build a VPC from scratch. There are detailed instructions here. Briefly, here are the steps:

- Create a new VPC. When configuring the VPC, choose a CIDR range within the RFC 1918 addresses that won’t overlap with on-premises addresses or existing VPCs.

- If there’s an overlap, you might need to look at doing a one-way or two-way Network Address Translation (NAT). You might want to consider NAT options on the AWS Marketplace to assist with this, such as solutions from Check Point, Cisco, Fortinet, Palo Alto Networks, and Sophos.

- Determine your subnet segmentation strategy, keeping in mind the number of Availability Zones (2+), network ACLs, what type of routing is required, and how workloads will be divided (dev/prod, by team, by security level, etc.). To start, group subnets as either private or public, and group by security requirements. The goal is to have enough subnets for availability that are large enough to handle growth, but not so large that it’s difficult to create non-overlapping subnets with the rest of your address space. Remember that “private” and “public” are just descriptions for subnets―they are the same components but just configured differently, and they can be changed later.

- Determine your subnet sizing strategy, keeping in mind growth and elasticity of services, how large the VPC is, the five addresses the VPC uses in each subnet, and addresses for services, including Elastic Load Balancing. Remember that there are no broadcasts, so broadcast storms aren’t relevant any longer.

- Create the subnets and spread them across Availability Zones.

- Determine the external connectivity, and attach the Internet gateway, virtual private gateway, and/or NAT gateway to the VPC.

- Create route tables. Consider the NAT gateway Availability Zone requirements and subnet security requirements for routing.

- Add route table entries that point to the Internet gateway, virtual private gateway, or NAT gateway.

- Associate the route tables with the subnets.

- Create any pre-approved security groups that instances should use.
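The address-planning steps above can be sketched in a few lines of Python using only the standard `ipaddress` module. This is a minimal sketch, not an AWS tool: the on-premises ranges, the VPC CIDR, the Availability Zone names, and the /20 subnet size are all hypothetical values chosen for illustration. It checks the VPC range for overlap, carves one subnet per tier and Availability Zone, and subtracts the five addresses the VPC uses in each subnet.

```python
import ipaddress

# Hypothetical address plan; replace with your own ranges.
ON_PREM = [ipaddress.ip_network("10.0.0.0/16"),
           ipaddress.ip_network("192.168.0.0/20")]
VPC_CIDR = ipaddress.ip_network("10.20.0.0/16")
AZS = ["us-east-1a", "us-east-1b"]  # assumed Availability Zone names
AWS_RESERVED_PER_SUBNET = 5         # the five addresses the VPC uses per subnet


def overlaps_any(candidate, existing):
    """Return True if candidate overlaps any network in existing."""
    return any(candidate.overlaps(net) for net in existing)


def plan_subnets(vpc_cidr, azs, tiers, new_prefix):
    """Assign one /new_prefix subnet to every (tier, AZ) pair, in order."""
    blocks = vpc_cidr.subnets(new_prefix=new_prefix)
    plan = {}
    for tier in tiers:
        for az in azs:
            plan[(tier, az)] = next(blocks)
    return plan


def usable_hosts(subnet):
    """Addresses left for workloads after the per-subnet reservation."""
    return subnet.num_addresses - AWS_RESERVED_PER_SUBNET


if overlaps_any(VPC_CIDR, ON_PREM):
    raise SystemExit(f"{VPC_CIDR} overlaps an existing range; pick another")

plan = plan_subnets(VPC_CIDR, AZS, ["public", "private"], new_prefix=20)
for (tier, az), subnet in plan.items():
    print(f"{tier:8} {az:12} {subnet}  usable={usable_hosts(subnet)}")
```

With these sample values the VPC is split into four /20 subnets, which leaves most of the /16 unallocated for future growth.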

It’s beneficial to follow these manual steps a few times to understand the components, but for accuracy and automation, we recommend that you use AWS CloudFormation to manage network configuration.

CloudFormation makes it easy to spin up, spin down, and make changes to networks by using JSON to organize the configuration. Read more in the AWS CloudFormation documentation, and check out these sample VPC templates.
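To give a feel for what such a JSON configuration looks like, here is a minimal sketch that builds a template as a Python dictionary and prints it as JSON. The logical names (`Vpc`, `PublicSubnetA`, and so on), CIDR ranges, and Availability Zone names are placeholders for illustration; a real template would also declare gateways, route tables, and associations.

```python
import json


def vpc_template(vpc_cidr, subnets):
    """Build a minimal CloudFormation template as a dict.

    subnets is a list of (logical_name, cidr, availability_zone) tuples.
    All names and ranges are supplied by the caller; nothing here is
    looked up from AWS.
    """
    resources = {
        "Vpc": {
            "Type": "AWS::EC2::VPC",
            "Properties": {"CidrBlock": vpc_cidr},
        }
    }
    for logical_name, cidr, az in subnets:
        resources[logical_name] = {
            "Type": "AWS::EC2::Subnet",
            "Properties": {
                "VpcId": {"Ref": "Vpc"},  # reference the VPC declared above
                "CidrBlock": cidr,
                "AvailabilityZone": az,
            },
        }
    return {"AWSTemplateFormatVersion": "2010-09-09", "Resources": resources}


# Hypothetical layout: one public and one private subnet in two zones.
template = vpc_template(
    "10.20.0.0/16",
    [
        ("PublicSubnetA", "10.20.0.0/20", "us-east-1a"),
        ("PublicSubnetB", "10.20.16.0/20", "us-east-1b"),
        ("PrivateSubnetA", "10.20.32.0/20", "us-east-1a"),
        ("PrivateSubnetB", "10.20.48.0/20", "us-east-1b"),
    ],
)
print(json.dumps(template, indent=2))
```

Generating the template from a small data structure keeps the address plan in one place, so adding an Availability Zone is a one-line change rather than a copy-and-paste edit.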

Connecting to the WAN – AWS Direct Connect

If you want to connect the AWS network into your existing WAN architecture, it’s important to understand how to use AWS Direct Connect.

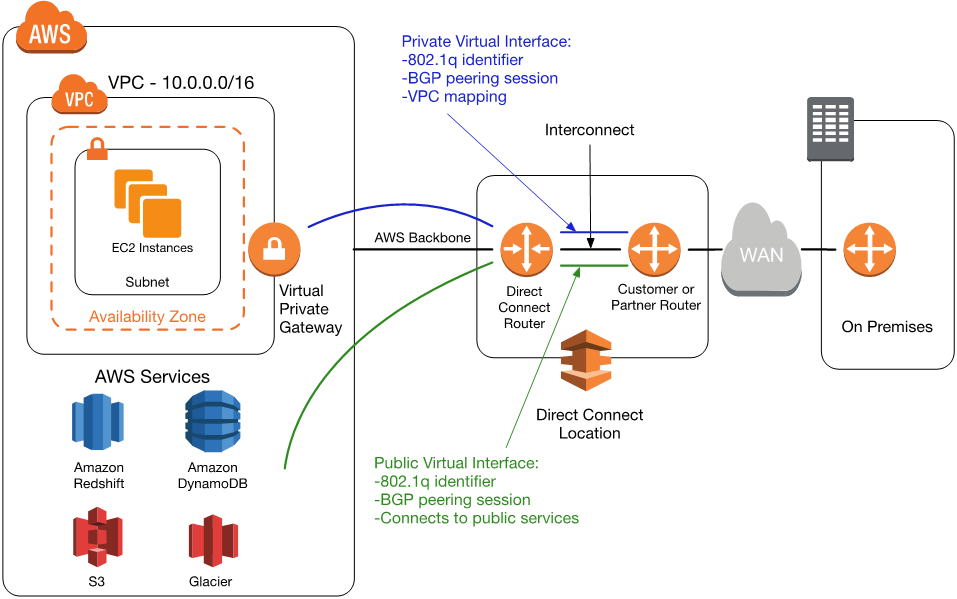

Figure 1 – AWS Direct Connect architecture with both a public and private virtual interface.

AWS Direct Connect: This service allows you to physically connect into the AWS network to reach your AWS services. Direct Connect locations are public datacenters that have AWS-operated private backbone connectivity to AWS Regions.

In these locations, you can connect into the Direct Connect routers to extend your network into AWS. There are Direct Connect points of presence in every region, and each point of presence has one or more routers with 1/10G ports you can request.

Direct Connect allows for private communication over a dedicated line, and can provide additional security, performance, and privacy. It can also bridge the network architecture gap between on-premises networks and AWS. Check out our Direct Connect partners.

The requirements for Direct Connect connectivity are:

- A Border Gateway Protocol (BGP) peering session per virtual interface

- A unique VLAN per virtual interface

- An Ethernet connection (1/10G SMF, 1000BASE-LX/10GBASE-LR)

- A virtual private gateway in the VPC for which you want connectivity
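The per-interface requirements above lend themselves to a quick sanity check before you order anything. The following is a minimal sketch under stated assumptions: the `VirtualInterface` class and its fields are hypothetical and do not mirror any AWS API, the VLAN range is the standard 802.1Q range (1 to 4094), and the private ASN test covers only the 16-bit private range (64512 to 65534).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VirtualInterface:
    """Hypothetical planning record for one virtual interface."""
    name: str
    kind: str          # "private" or "public"
    vlan: int          # 802.1Q tag, unique per virtual interface
    customer_asn: int  # ASN used on the customer side of the BGP session


def is_private_asn(asn):
    """True for 16-bit private ASNs (64512 through 65534)."""
    return 64512 <= asn <= 65534


def validate(vifs):
    """Return a list of human-readable problems; empty means the plan is OK."""
    errors = []
    vlan_owner = {}
    for vif in vifs:
        if vif.kind not in ("private", "public"):
            errors.append(f"{vif.name}: unknown kind {vif.kind!r}")
        if not 1 <= vif.vlan <= 4094:
            errors.append(f"{vif.name}: VLAN {vif.vlan} is outside 1-4094")
        if vif.vlan in vlan_owner:
            errors.append(
                f"{vif.name}: VLAN {vif.vlan} is already used by {vlan_owner[vif.vlan]}"
            )
        else:
            vlan_owner[vif.vlan] = vif.name
    return errors


# Hypothetical plan with one deliberate mistake (VLAN 101 is reused).
vif_plan = [
    VirtualInterface("vpc-prod", "private", 101, 65001),
    VirtualInterface("aws-public", "public", 102, 65001),
    VirtualInterface("vpc-dev", "private", 101, 65001),
]
for problem in validate(vif_plan):
    print(problem)
```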

These requirements offer a good deal of flexibility for connectivity options to your WAN. A simple scenario for an MPLS WAN would be to add an MPLS router to the facility where you want to use Direct Connect. To the rest of the MPLS network, this would look like another branch.

The next step is to acquire an interconnect from your router in the DX location to the DX router, which a number of Direct Connect Partners can supply.

If you have a Layer 2 WAN (like VPLS), then you can do the BGP peering in a different location.

Virtual interface: A virtual interface is like a subinterface on a router in a virtual routing and forwarding (VRF) table, with a route in the VRF toward a specific location. This location could be a single VPC, or the collection of public AWS services such as Amazon S3, Amazon DynamoDB, and more.

Virtual interfaces can be private or public. A single Direct Connect connection can contain many virtual interfaces, each with its own VLAN. The VLAN has only local significance because all connectivity is routed.

Private virtual interfaces are used to route a Direct Connect connection to a specific VPC. On the AWS Direct Connect router, a VLAN is used to define the subinterface, and each subinterface has its own BGP peering session. The private interface is mapped to a VPC, enabling private connectivity. The destination VPC can be in another account. A private IP address and private BGP ASN can be used.

Public virtual interfaces allow for direct routing into AWS’s public services. Connections would originate somewhere outside AWS, come in through the WAN connection in the Direct Connect location, through the Direct Connect router, and then over AWS’s backbone to the correct public service.

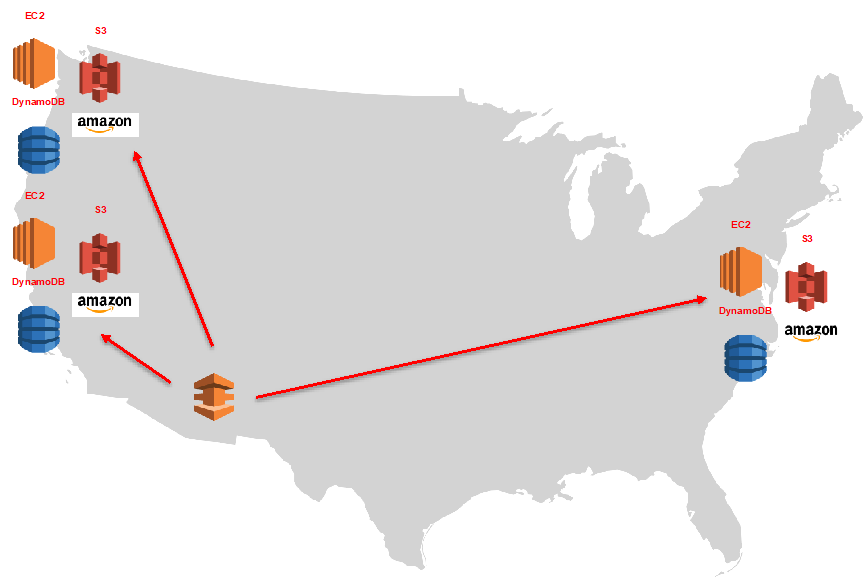

Figure 2 – Public virtual interfaces advertise the addresses for public services in all US Regions.

In the United States, public services from every region are advertised over this connection. For example, this would allow a public virtual interface in a Direct Connect location in N. Virginia to connect to Amazon Simple Storage Service (Amazon S3) buckets hosted in the N. California Region using the AWS backbone instead of the Internet.

Public virtual interfaces should be treated and secured like any other Internet-facing service, and require a public /30 address to be assigned and advertised. If a public ASN is used, it must be owned by the customer; otherwise, a private ASN can be used.
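As a small illustration of the /30 requirement, the sketch below checks that a candidate peering block is exactly a /30, that it does not fall inside the RFC 1918 private ranges, and then lists the two usable addresses, one for each end of the BGP session. The function name is hypothetical, and the sample block comes from a range reserved for documentation, so treat it purely as a placeholder.

```python
import ipaddress

RFC1918 = [ipaddress.ip_network(n)
           for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]


def check_public_peering_block(cidr):
    """Validate a /30 for a public virtual interface and return its two hosts."""
    net = ipaddress.ip_network(cidr)
    if net.prefixlen != 30:
        raise ValueError(f"{net} is not a /30")
    if any(net.overlaps(private) for private in RFC1918):
        raise ValueError(f"{net} is RFC 1918 space, not a public range")
    first, second = net.hosts()  # a /30 has exactly two usable addresses
    return first, second


# Placeholder block from a documentation-only range.
side_a, side_b = check_public_peering_block("203.0.113.8/30")
print(side_a, side_b)
```

Which end of the session takes which address is a deployment decision that this sketch does not model.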

Network-to-Network Interface (NNI): Not everyone wants a dedicated router or 1G connection into AWS. AWS Direct Connect Partners can provide you with varying connection options. An NNI allows a partner to manage the connection and router, and to offer speeds under 1G (50, 100, 200, 300, 400, 500 Mbps).

Hosted virtual interfaces are virtual interfaces offered by APN partners that host NNI connections. They can be public or private, and offer speeds under 1G (50, 100, 200, 300, 400, 500 Mbps).

Advanced Networking and Features

So far, we’ve discussed general use cases for a VPC. Let’s dive into some of the more advanced topics and features.

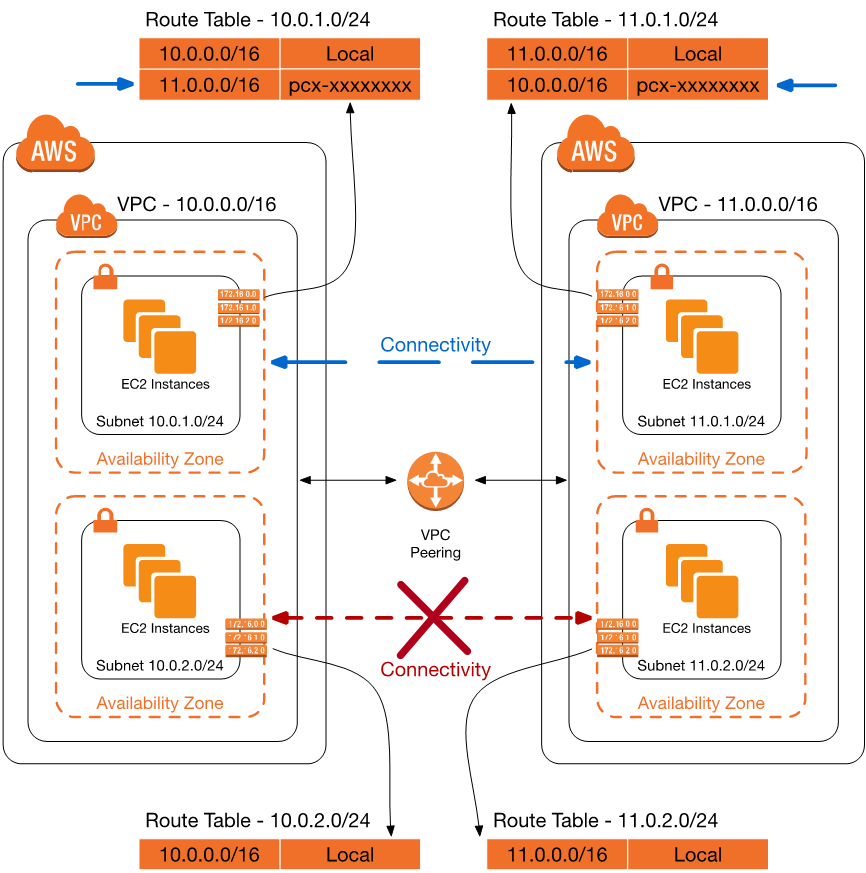

VPC peering: VPC peering enables two VPCs in the same AWS Region to communicate with each other. It’s similar to having a group of subnets share routing reachability with each other―almost like putting a static route on each side of a network that was otherwise partitioned.

Peering simply enables network connectivity between two VPCs to be possible. Any actual reachability is enabled with configuration of route tables, security groups, and network ACLs.

VPC peering allows instances to directly communicate with one another while residing in two different VPCs, even across accounts. Peering is established when one side initiates a request and the other VPC accepts.

Peering adds an option in the routing table to point routes towards the new VPC peering connection. Each subnet that needs connectivity across the peering connection will need a route in the associated routing table.

Figure 3 – A route entry is added in the 10.0.1.0/24 and 11.0.1.0/24 subnets for a VPC peering connection. For the 10.0.2.0/24 and 11.0.2.0/24 subnets to have connectivity, they would need a route entry as well.

In Figure 3, a VPC peering connection has been established between VPC 10.0.0.0/16 and 11.0.0.0/16. To enable subnet 10.0.1.0/24 to communicate with 11.0.1.0/24, a route has been added to each routing table for the respective VPC’s CIDR range. In this example the full CIDR /16 was used, but for more specific routing between the two subnets the /24 range could be used as well.
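The effect of the route entries in Figure 3 can be modeled with a simple longest-prefix-match lookup, which is how a VPC route table selects among matching routes. This is a minimal sketch: the route tables are plain dictionaries, and `pcx-xxxxxxxx` is a placeholder for the peering connection ID rather than a real identifier.

```python
import ipaddress


def lookup(route_table, destination):
    """Return the target of the most specific matching route, or None."""
    dest = ipaddress.ip_address(destination)
    matches = []
    for cidr, target in route_table.items():
        network = ipaddress.ip_network(cidr)
        if dest in network:
            matches.append((network.prefixlen, target))
    if not matches:
        return None  # no route: the packet is dropped
    return max(matches)[1]  # the longest prefix wins


# Route table associated with subnet 10.0.1.0/24 (has the peering route).
ROUTES_10_0_1 = {"10.0.0.0/16": "local", "11.0.0.0/16": "pcx-xxxxxxxx"}
# Route table associated with subnet 10.0.2.0/24 (no peering route yet).
ROUTES_10_0_2 = {"10.0.0.0/16": "local"}

print(lookup(ROUTES_10_0_1, "11.0.1.5"))  # forwarded to the peering connection
print(lookup(ROUTES_10_0_2, "11.0.1.5"))  # None, no route to the peer VPC
```

Replacing the `11.0.0.0/16` entry with `11.0.1.0/24` in the first table would restrict the subnet to reaching only that one peer subnet, which is the more specific routing the text describes.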

There are a few things to keep in mind when using VPC peering: transitive routing, VPC limits, and CIDR overlap. First, VPC peering is not transitive. As detailed in the “Routing and Switching” section in part one of this blog post, traffic that originates outside a VPC can’t pass through that VPC to reach another destination, so VPC A can’t reach VPC C through a shared peer, VPC B. You can use instances that act as routers or proxies to enable these flows.

Furthermore, peering operates only when there are no CIDR conflicts. This could impact your subnet and CIDR design, and using less common (not 10.0.0.0/16 or 172.16.0.0/16!) CIDR ranges reduces this risk. When assessing VPC peering as a connectivity feature, check that the VPC limits meet your requirements. For example, the VPC peering limit is 50 peering connections by default, with 125 as the hard limit.
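Two of these constraints, no transitive routing and no overlapping CIDR ranges, are easy to express as a toy model. The sketch below is an illustration only: the `PeeringMesh` class and the VPC names are hypothetical, and reachability here means only that a direct peering connection exists, before route tables, security groups, and network ACLs are considered.

```python
import ipaddress


class PeeringMesh:
    """Toy model of VPCs and the peering connections between them."""

    def __init__(self):
        self.vpcs = {}         # VPC name -> ip_network
        self.peerings = set()  # frozenset({name_a, name_b})

    def add_vpc(self, name, cidr):
        self.vpcs[name] = ipaddress.ip_network(cidr)

    def peer(self, a, b):
        """Create a peering connection; refuse overlapping CIDR ranges."""
        if self.vpcs[a].overlaps(self.vpcs[b]):
            raise ValueError(f"{a} and {b} have overlapping CIDR ranges")
        self.peerings.add(frozenset((a, b)))

    def can_reach(self, a, b):
        """Peering is not transitive: only directly peered VPCs can talk."""
        return frozenset((a, b)) in self.peerings


mesh = PeeringMesh()
mesh.add_vpc("A", "10.0.0.0/16")
mesh.add_vpc("B", "11.0.0.0/16")
mesh.add_vpc("C", "172.31.0.0/16")
mesh.add_vpc("D", "10.0.0.0/16")  # same range as A

mesh.peer("A", "B")
mesh.peer("B", "C")
print(mesh.can_reach("A", "B"))  # True, directly peered
print(mesh.can_reach("A", "C"))  # False, B does not forward transit traffic
```

Attempting `mesh.peer("A", "D")` raises an error in this model because both VPCs use 10.0.0.0/16, which is the situation that less common CIDR ranges help you avoid.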

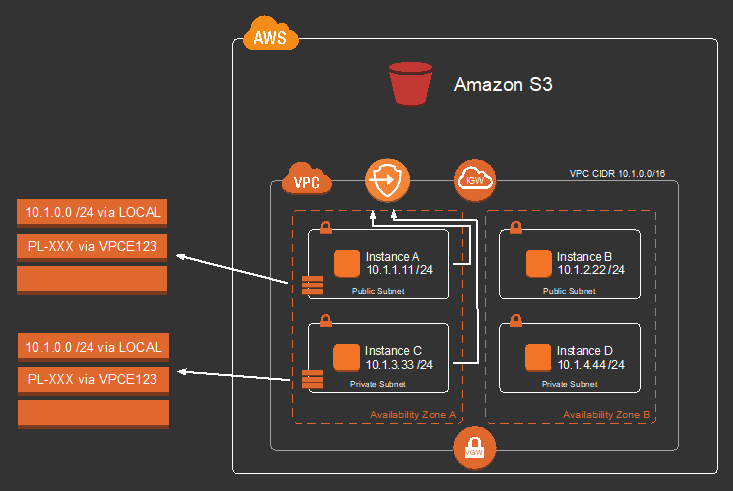

Figure 4 – Route table for two subnets, allowing Instance A and C to reach the S3 endpoint.

Amazon S3 endpoint: There are AWS services that were originally designed to be consumed over the Internet, like Amazon S3. Amazon S3 allows users to store and retrieve objects, and can have high bandwidth requirements for large or commonly accessed files.

Before S3 endpoints were introduced, an instance in a private VPC needed a way to access the Internet for S3 files. You could route requests through a proxy, but the proxy could be a performance and availability bottleneck. This posed a problem for network administrators who wanted to limit Internet access and provide high bandwidth for instances.

With S3 endpoints, you can create a private route in your VPC that allows you to route traffic directly to and from S3 in your VPC. Think of this as adding a collection of /32 routes for all the live S3 IP addresses you want to use, which is called a prefix list (pl-xxxxxxx) in AWS.

The prefix list in Figure 4 points to an endpoint (vpce-xxxxxxxx) that corresponds to the service―in this case, S3. This allows you to forward traffic within a private VPC without any bandwidth or availability bottlenecks.
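One way to picture the prefix list is as a named group of destinations that expands into ordinary routes, all pointing at the endpoint. The sketch below is a simplified model: the member CIDR ranges are placeholders taken from documentation-only address space (the real S3 ranges are maintained by AWS and are not reproduced here), and `pl-xxxxxxx` and `vpce-xxxxxxxx` are the same placeholder identifiers used in the text.

```python
import ipaddress

# Placeholder members; a real prefix list is maintained by AWS.
PREFIX_LISTS = {"pl-xxxxxxx": ["198.51.100.0/24", "203.0.113.0/24"]}


def expand(route_table):
    """Replace each prefix-list destination with its member CIDR ranges."""
    expanded = []
    for destination, target in route_table:
        for cidr in PREFIX_LISTS.get(destination, [destination]):
            expanded.append((ipaddress.ip_network(cidr), target))
    return expanded


def resolve(route_table, address):
    """Return the target of the most specific matching route, or None."""
    dest = ipaddress.ip_address(address)
    candidates = [(net.prefixlen, target)
                  for net, target in expand(route_table) if dest in net]
    return max(candidates)[1] if candidates else None


# A private subnet: no Internet route, only local traffic and the endpoint.
PRIVATE_ROUTES = [("10.0.0.0/16", "local"), ("pl-xxxxxxx", "vpce-xxxxxxxx")]

print(resolve(PRIVATE_ROUTES, "198.51.100.20"))  # sent to the endpoint
print(resolve(PRIVATE_ROUTES, "192.0.2.1"))      # None, no Internet route
```

The point of the model is that instances in the private subnet reach the service without a default route, a proxy, or a NAT device in the path.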

S3 was the first AWS service with endpoints, and AWS is constantly evaluating endpoints for other services. For more detailed information, check out the VPC Endpoints documentation.

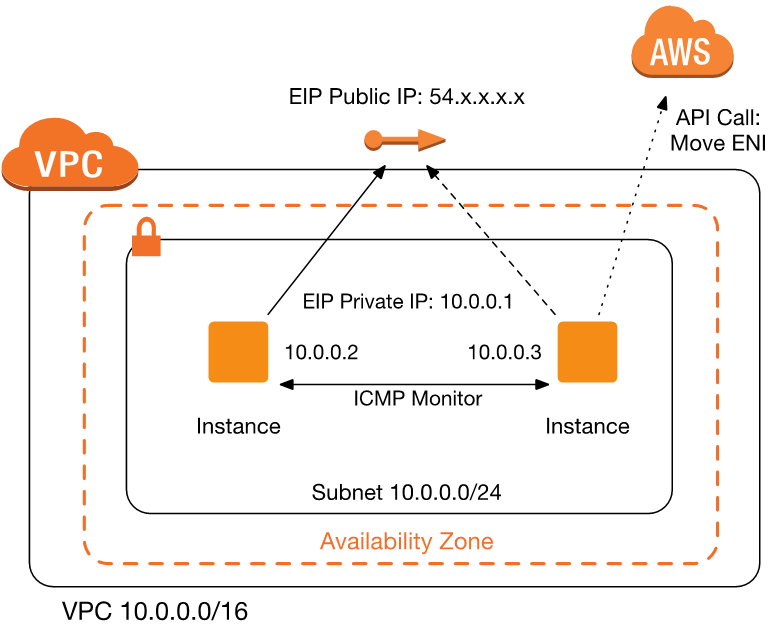

Figure 5 – A single Elastic IP address is assigned to one instance, and is used to forward traffic. The second instance monitors the first instance, and, if failure is detected, makes an API call to move the Elastic IP address to itself. Session traffic is dropped unless the instances are doing state synchronization.

Virtual IP: A virtual IP is a building block of on-premises networks that allows a single IP address to be present on multiple systems at once. An elastic network interface (ENI) that moves between two or more instances is similar to a virtual IP. This is also called a floating ENI, and is one way to emulate a virtual IP. There are a few differences between an ENI and a virtual IP:

- The time to move an ENI is not constant, and may take several seconds.

- We recommend that you use an external monitoring system to do health checks on the instances that are using the shared ENI, though systems can also monitor themselves, similar to Virtual Router Redundancy Protocol (VRRP) and Hot Standby Router Protocol (HSRP).

- The private address of an ENI is specific to a subnet, and thus to an Availability Zone.

- Simply moving the IP address doesn’t mean that all instances will have the same TCP state. Use synchronization features (like clustering) to keep TCP state between instances.

- You can choose to move an Elastic IP address between a set of secondary ENIs, or move an ENI with its associated IP addresses to another machine. In other words, you can move the address or you can move the interface. The interface (ENI) is specific to the subnet, and the IP (Elastic IP) is specific to the region. If you move an Elastic IP address between Availability Zones or subnets, the public address will remain the same and the private address will change.
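The monitoring pattern from Figure 5 can be sketched as a small state machine that runs on the standby instance. This is a minimal sketch under stated assumptions: `FailoverMonitor` is a hypothetical class, the health check and the takeover action are injected as plain callables, and in a real deployment the takeover callable would wrap the API call that reassociates the Elastic IP address or moves the ENI. The threshold of three consecutive failures is an arbitrary example value.

```python
from typing import Callable


class FailoverMonitor:
    """Standby-side logic: take over after N consecutive failed checks."""

    def __init__(self, check_primary: Callable[[], bool],
                 take_over: Callable[[], None], threshold: int = 3):
        self.check_primary = check_primary  # returns True while primary is healthy
        self.take_over = take_over          # moves the address to this instance
        self.threshold = threshold
        self.failures = 0
        self.active = False

    def tick(self) -> bool:
        """Run one health check; return True if this node owns the address."""
        if self.active:
            return True
        if self.check_primary():
            self.failures = 0  # any success resets the failure streak
        else:
            self.failures += 1
            if self.failures >= self.threshold:
                self.take_over()
                self.active = True
        return self.active


# Simulated run: the primary answers once, then stops responding.
health_results = iter([True, False, False, False])
events = []
monitor = FailoverMonitor(lambda: next(health_results),
                          lambda: events.append("address moved"))
history = [monitor.tick() for _ in range(4)]
print(history, events)
```

Requiring several consecutive failures trades a slower takeover for fewer false positives. The sketch does nothing about TCP state, which still needs the synchronization features mentioned above.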

VPC Flow Logs: VPC Flow Logs are similar to scheduled NetFlow/sFlow/IPFIX reports. Flow logs collect the source and destination IP, source and destination ports, protocol, packet counts, and the ACCEPT or REJECT action for a particular VPC, subnet, or ENI. They are currently collected and sent as a report every 10 minutes.
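To show how these records can be consumed, here is a minimal sketch that parses space-separated records in the default flow log layout and totals rejected packets per destination port. The two sample lines are illustrative values invented for this example, not real traffic, and the action field appears in records as ACCEPT or REJECT.

```python
from collections import Counter, namedtuple

# Field order of the default flow log record layout.
FIELDS = ("version account_id interface_id srcaddr dstaddr srcport dstport "
          "protocol packets bytes start end action log_status").split()
FlowRecord = namedtuple("FlowRecord", FIELDS)


def parse(line):
    """Split one record into named fields; all values stay as strings."""
    parts = line.split()
    if len(parts) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(parts)}")
    return FlowRecord(*parts)


def rejected_packets_by_port(lines):
    """Total packets of REJECT records, keyed by destination port."""
    totals = Counter()
    for line in lines:
        record = parse(line)
        if record.action == "REJECT":
            totals[int(record.dstport)] += int(record.packets)
    return totals


# Illustrative records only (made-up account, interface, and addresses).
SAMPLE = [
    "2 123456789010 eni-abc123de 172.31.16.139 172.31.16.21 20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK",
    "2 123456789010 eni-abc123de 172.31.9.69 172.31.9.12 49761 3389 6 20 4249 1418530010 1418530070 REJECT OK",
]
print(rejected_packets_by_port(SAMPLE))
```

A summary like this is a quick way to spot ports that security groups or network ACLs are blocking more often than expected.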

Enhanced networking: Enhanced networking is available on particular instance types and enables faster network performance when the operating system supports it. This feature can reduce network latency and CPU overhead, and enable more efficient packet processing. For more information, see the Amazon EC2 documentation.

Limits: When designing VPC networks, we recommend that you review the VPC limits to determine whether your network design fits the capabilities of the VPC. The documentation specifies which limits you can raise by contacting support, and which limits you can’t change.

For example, VPC peering may solve many of your problems, but if you need more than 125 peering connections, that limit may affect your design. As another example, if your subnet and CIDR blocks are highly fragmented, you will want to find a way to summarize them so that they stay under the 100 routes that can be propagated into a VPC.
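For the fragmented-routes case, summarization is often the first thing to try. The sketch below uses `ipaddress.collapse_addresses` from the Python standard library to merge adjacent prefixes and then compares the result with the 100-route figure quoted above. The list of on-premises prefixes is hypothetical.

```python
import ipaddress

ROUTE_LIMIT = 100  # propagated-route figure quoted in this post


def summarize(cidrs):
    """Merge adjacent and overlapping prefixes into the fewest networks."""
    networks = [ipaddress.ip_network(c) for c in cidrs]
    return list(ipaddress.collapse_addresses(networks))


# Hypothetical plan: 128 contiguous /24s plus two stray /24s.
fragmented = [f"10.50.{i}.0/24" for i in range(128)]
fragmented += ["10.60.1.0/24", "10.60.3.0/24"]

summary = summarize(fragmented)
print(f"{len(fragmented)} prefixes before, {len(summary)} after")
for network in summary:
    print(network)
print("fits" if len(summary) <= ROUTE_LIMIT else "still over the limit")
```

Here the contiguous block collapses into a single 10.50.0.0/17 while the two stray prefixes stay separate, because they are not adjacent to anything that can be merged.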

Wrapping Up

There are many ways to build your network on AWS. In this post, I’ve explained common building blocks to understand as you build a VPC, how these components work, and how they relate to concepts in the traditional networking world. If you’re hungry for more details, check out these additional resources:

- VPC Best Practices: Video

- Direct Connect Redundancy Design: Video

- Another Day, Another Billion Packets: Video

- Amazon VPC Documentation

In the comments section, please tell us what you would like to hear more about. We’re always interested in hearing about network problems we can help you solve.