AWS Partner Network (APN) Blog

Building Serverless SaaS Applications on AWS

By Tod Golding, Partner Solutions Architect at AWS

Software as a service (SaaS) solutions often present architects with a diverse mix of scaling and optimization requirements. With SaaS, your application’s architecture must accommodate a continually shifting landscape of customers and load profiles.

The number of customers in the system and their usage patterns can change dramatically on a daily—or even hourly—basis. These dynamics make it challenging for SaaS architects to identify a model that can efficiently anticipate and respond to these variations.

Dynamically scaling servers and containers have certainly given SaaS architects a range of tools to accommodate these scaling patterns. And now, with the advent of serverless computing and AWS Lambda functions, architects have a computing and consumption model that aligns more precisely with the demands of SaaS environments.

In this blog post, we’ll discuss how serverless computing and AWS Lambda influence the compute, deployment, management, and operational profiles of your SaaS solution.

It’s All About Managed Functions

Adopting a serverless model requires developers to adopt a new mindset. Serverless touches nearly every dimension of how developers decompose application domains, build and package code, deploy services, version releases, and manage environments. The key contributor to this shift is the notion that serverless computing relies on a much more granular decomposition of your system, requiring each function of a service to be built, deployed, and managed independently. In many respects, serverless takes the spirit of microservices to the extreme.

While making this move requires a paradigm shift, the payoff is significant, especially for SaaS solutions. This more granular model provides us with a much richer set of opportunities to align tenant activity with resource consumption. It is at the core of your ability to tackle many of the challenges associated with SaaS cost and performance optimization.

The impact of serverless reaches beyond your code and services. It completely removes the notion of servers from your view. Gone is the need to provision, configure, patch, and manage instances or containers. In fact, as a developer of serverless applications, you are intentionally shielded from the details of how and where your application’s functions are executed. Instead, you must rely on the managed service—AWS Lambda—to control and scale the execution of your functions.

This notion of moving away from the awareness of any specific instance or container sets the stage for all the goodness we are looking for in our SaaS environments. It also frees you up to focus more of your attention on the functionality of your system.

Escaping the Policy Challenge

The ability to dynamically scale environments is essential to SaaS. Being able to respond quickly to changes in tenant load is key to maximizing the customer experience while still optimizing the cost footprint of your solution. Achieving these scaling goals with server-based environments can be challenging. With instances and containers, the responsibility for defining effective and efficient scaling policies lands squarely on your shoulders. The diagram below illustrates the complexity that is often associated with configuring the policies in traditional server-based SaaS environments.

In this example, we have decomposed an e-commerce application into a set of services. This decomposition was partly motivated by the desire to have each service scale independently. This is illustrated by the specific policies that are attached to each service. Here, for example, the search service might be scaling on memory, while the checkout service might be scaling on CPU.

This is a perfectly valid model. However, it puts significant pressure on the SaaS architect to continually refine and tune these policies to align them with the evolving usage patterns of your multi-tenant environment. The policies that are valid today might not be valid tomorrow. As new tenants come on board, the profile and behavior of the system can change. Ultimately, you might end up over-allocating resources to accommodate these variations in load. The end result is often higher per-tenant costs.

Now, as you move beyond thinking about instances and start implementing your solutions as a series of serverless functions, you can imagine how this influences your approach to managing scale. With AWS Lambda, you can mostly remove yourself from the policy management equation. Instead, scaling and responding effectively to load becomes the job of the managed service.

The Power of Granularity

The sections above outlined the value and impact of decomposing your system into a series of independent functions. Let’s dig a bit deeper into a real-world example that provides a more detailed view of how a serverless model influences the profile of an application service that is implemented with Lambda.

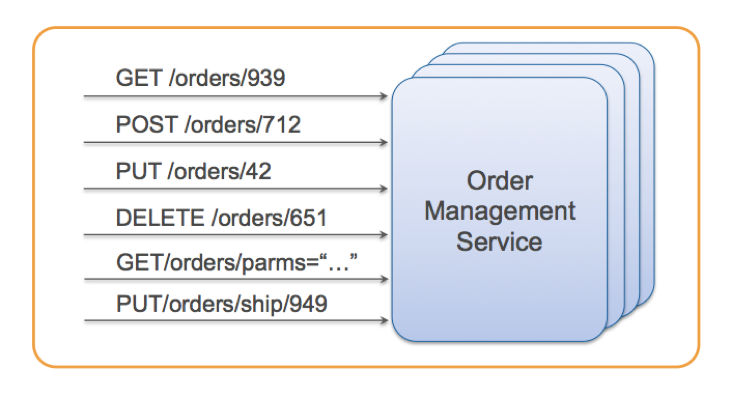

The image below provides an example of an order management service that might be deployed as a REST service hosted on an instance or container. This service supports a collection of methods that encapsulate the basic operations needed to store, retrieve, and control the state of orders in an e-commerce system.

This service includes a range of straightforward capabilities. In a typical scenario, the service would likely support a more detailed set of operations. Still, as you look at the scope of this service, it seems to meet most of the reasonable criteria. It’s relatively focused and is likely loosely coupled to other services.

While the service seems fine, it could present problems when it comes to scaling in a SaaS environment. Suppose, for example, that the DELETE operation of this service is very CPU-intensive while the PUT operation tends to be more memory-intensive. And, from our profiling, we see that some tenants are pushing the GET operation hard while others are using PUT operations more heavily. This creates a challenge when figuring out how to scale this service effectively without over-allocating resources. Essentially, with this more coarse-grained surface, your options for scaling the service can be somewhat limited. Without more control over your scaling granularity, you’ll be unable to match usage of the service to potential variations in tenant activity. Instead, you’re left with a best guess approach to picking a scaling model with the hope that it might represent an efficient consumption of resources.

Now, let’s see what it would mean to deliver this order management service in a serverless model. The following diagram illustrates how scale would be achieved in an environment where each of the service’s operations (functions) is implemented as a separate Lambda function.

As load is placed on an operation, that operation can scale out independently of the others. More calls to GetOrders(), for example, force the scale out of that function. Meanwhile, DeleteOrder() consumes almost no resources. The beauty of this model is that you no longer need to think about how best to decompose your services to find the right balance of consumption and scale. Instead, by representing your service as a series of separately deployed functions, you directly align the consumption of each function with the real-time activity of tenants. If there’s tremendous demand for order searches right now, the system will scale that specific method to meet the demands of that load. Meanwhile, if other functions are going untouched, these functions will not generate any compute costs.
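To make this concrete, here is a minimal Python sketch of two of the order operations written as separate Lambda handlers. The handler names, the event fields (`tenant`, `orderId`), and the in-memory `ORDERS` store are assumptions made for illustration. A real service would read from a database, and each handler would be packaged and deployed as its own function.

```python
import json

# Hypothetical in-memory store standing in for a real order database.
ORDERS = {"o-1": {"id": "o-1", "tenant": "tenant-a", "status": "open"}}


def get_orders_handler(event, context):
    """Entry point for a hypothetical GetOrders function."""
    tenant = event["tenant"]
    orders = [o for o in ORDERS.values() if o["tenant"] == tenant]
    return {"statusCode": 200, "body": json.dumps(orders)}


def delete_order_handler(event, context):
    """Entry point for a hypothetical DeleteOrder function, deployed separately."""
    order = ORDERS.get(event["orderId"])
    # Only delete orders that belong to the calling tenant.
    if order is None or order["tenant"] != event["tenant"]:
        return {"statusCode": 404, "body": json.dumps({"deleted": False})}
    del ORDERS[event["orderId"]]
    return {"statusCode": 200, "body": json.dumps({"deleted": True})}
```

Because the two handlers share no process or server, heavy traffic on the first has no bearing on how the second is provisioned.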

You can imagine the value this model brings to SaaS environments where the activity of existing and new tenants is constantly changing. With traditional SaaS implementations, it would not be uncommon to have idle services that are rarely exercised or only pushed during specific windows of the day. Now, with a serverless architecture, this is no longer an issue. You can simply deploy your functions and let them respond to actual tenant load. If a group of functions is not called for a day, it will incur no compute costs for remaining idle. Then, if a new tenant suddenly pushes these same functions, Lambda will be responsible for providing the required scale.

Serverless Management and Monitoring

The more granular nature of serverless applications also adds value to the SaaS management and monitoring experience. With SaaS applications, it’s essential to proactively detect—with precision—any anomalies that may exist in your system. Imagine the dashboard and operational view that could show you the health of your system at the function level. The following image provides a conceptual view of how a serverless system could help you analyze your system’s health and activity more effectively:

The heat map on the left provides a coarse-grained representation of the services. The health of each service is represented by a range of colors that convey the current status of a service. In this example, you’ll notice that the order management service is red, indicating that there is some kind of issue with the health of that service. However, we won’t know which aspect of this service is actually failing without drilling into logs and other metrics.

The view on the right represents the health of the system in a serverless model. Here, each square in the grid corresponds to a Lambda function. Now, when the health of any aspect of the system starts to diminish, you get a more granular view of what may be failing. This makes it easier to develop proactive policies and streamlines the troubleshooting process, both of which are essential in SaaS environments where an outage could impact all your customers.
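The difference between the two views can be sketched with a small aggregation. The record shape used here (`service`, `function`, and `ok` fields) is a hypothetical stand-in for whatever invocation data your monitoring tooling collects.

```python
from collections import defaultdict


def error_rates(records, key):
    """Return the error rate per group, where `key` picks the grouping field."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for rec in records:
        group = rec[key]
        totals[group] += 1
        if not rec["ok"]:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Hypothetical invocation records for one service with two functions.
records = [
    {"service": "orders", "function": "GetOrders", "ok": True},
    {"service": "orders", "function": "GetOrders", "ok": True},
    {"service": "orders", "function": "DeleteOrder", "ok": False},
    {"service": "orders", "function": "DeleteOrder", "ok": False},
]

by_service = error_rates(records, "service")    # one number for the whole service
by_function = error_rates(records, "function")  # one number per function
```

Grouped by service, the data only says that half of the order calls fail. Grouped by function, it shows that every failure comes from the hypothetical `DeleteOrder` function while `GetOrders` is healthy.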

More Chances to Impact Availability

With SaaS applications, you’re always looking for opportunities to improve the availability profile of your application. Most SaaS solutions lean heavily on building in fault tolerance mechanisms that allow an application to continue to function, even when some portions of the system could be failing.

Imagine, for example, that your e-commerce application has a ratings service that provides customer reviews about products. Although this feature is valuable to customers, the system could continue to function when this service is down. In this scenario, your system could either temporarily remove the display of the ratings or use a cached copy of the latest ratings data during the failure.

This approach to fault tolerance is a common technique that is used in many SaaS architectures. However, more coarse-grained services often undermine your ability to introduce effective fault tolerance strategies. The outage of an entire service can be more difficult to overcome. This is an area where the serverless model shines. The decomposition of your system into independently executable functions now gives you a much more diverse set of options for introducing fault-tolerant policies.
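The ratings fallback described above might look something like the following sketch. The `get_ratings` function, the `fetch` callable that stands in for a call to the ratings function, and the module-level cache are all assumptions for illustration. A production version would likely use a shared cache and catch narrower exception types.

```python
# Hypothetical cache of the most recent ratings seen per product.
_ratings_cache = {}


def get_ratings(product_id, fetch):
    """Return fresh ratings when possible, otherwise fall back gracefully.

    `fetch` is any callable that returns ratings for a product ID and
    raises an exception when the ratings function is unavailable.
    """
    try:
        ratings = fetch(product_id)
        _ratings_cache[product_id] = ratings
        return {"ratings": ratings, "stale": False}
    except Exception:
        # Broad catch for brevity: any failure triggers the fallback.
        if product_id in _ratings_cache:
            return {"ratings": _ratings_cache[product_id], "stale": True}
        # Nothing cached: the caller can hide the ratings display entirely.
        return {"ratings": None, "stale": True}
```

The caller can use the `stale` flag to decide whether to show cached ratings with a notice or to hide the ratings display until the function recovers.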

Supporting Siloed Tenants

SaaS providers are often required to deliver some or all of their system in a siloed model where each tenant has its own unique set of infrastructure resources. This may be driven by any number of factors, including compliance, regulatory, or legacy architecture requirements. There are a number of downsides to operating a SaaS product in this model. Cost often rises to the top of this list, because the overhead associated with provisioning, operating, and managing separate tenant infrastructure can be substantial.

Serverless computing often represents a compelling alternative for these siloed solutions. With this model, the execution of each tenant’s functions can be completely isolated from other tenants. In fact, you can leverage AWS Identity and Access Management (IAM) policies to ensure that a Lambda function is executed in the context of a specific tenant, which helps address any concerns customers may have about cross-tenant access.
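As one possible illustration, the sketch below builds an IAM policy document that limits access to a single tenant's items. It assumes, purely for the example, that tenant data lives in an Amazon DynamoDB table whose partition key holds the tenant ID; the table ARN and tenant ID in the usage line are hypothetical. The `dynamodb:LeadingKeys` condition key restricts access to items whose partition key matches the listed values.

```python
import json


def tenant_scoped_policy(table_arn, tenant_id):
    """Build an IAM policy document limiting table access to one tenant's items.

    Assumes (hypothetically) that the table's partition key is the tenant ID.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["dynamodb:GetItem", "dynamodb:Query", "dynamodb:PutItem"],
                "Resource": table_arn,
                "Condition": {
                    "ForAllValues:StringEquals": {
                        "dynamodb:LeadingKeys": [tenant_id]
                    }
                },
            }
        ],
    }


# Hypothetical table ARN and tenant ID, for illustration only.
policy = tenant_scoped_policy(
    "arn:aws:dynamodb:us-east-1:123456789012:table/Orders", "tenant-a"
)
policy_json = json.dumps(policy, indent=2)
```

How such a policy is attached, for example to a per-tenant execution role, depends on how your siloed environment is provisioned.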

The other key upside of using serverless computing in a siloed SaaS model is its impact on costs. If you’ve used virtual machines or containers as your underlying infrastructure, each tenant will require some idle footprint, even if the tenant isn’t exercising any of the system’s functionality. Meanwhile, with serverless computing, your tenant costs will be directly correlated to their consumption of the functions you’ve deployed. And, if there are areas of the system that tenants aren’t using, there will be no compute costs associated with these unused features. This can amount to significant savings in a siloed environment.
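A rough way to reason about this difference is to model both cost shapes side by side. The sketch below assumes Lambda-style billing per request and per GB-second of execution. All prices are passed in as parameters, because the figures you would plug in are your own and none are implied here. The `TenantUsage` type and both function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TenantUsage:
    invocations: int        # function calls in the billing period
    avg_duration_s: float   # average run time per call, in seconds
    memory_gb: float        # memory configured for the function, in GB


def serverless_cost(usage, price_per_request, price_per_gb_second):
    """Cost that scales with usage: zero invocations means zero compute cost."""
    gb_seconds = usage.invocations * usage.avg_duration_s * usage.memory_gb
    return usage.invocations * price_per_request + gb_seconds * price_per_gb_second


def always_on_cost(hours, price_per_instance_hour, instances=1):
    """Cost of a dedicated footprint that is billed whether or not it is used."""
    return hours * price_per_instance_hour * instances
```

For a tenant with no activity in a period, `serverless_cost` returns zero, while `always_on_cost` returns the same amount regardless of how much the tenant used the system.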

The API Gateway and SaaS Agility

Amazon API Gateway is a key piece of the AWS serverless model. It provides a managed REST entry point to the functions of your application. It also offloads concerns like metering, DDoS protection, and throttling, allowing your services to focus more on their implementation and less on managing and routing requests.

In addition to providing API fundamentals, API Gateway also includes mechanisms to manage the deployment of functions to one or more environments. API Gateway includes support for stage variables that allow you to associate functions with a specific environment. So, for example, you could define separate DEV and PROD stages in the gateway and point these stages at specific versions of your functions. This can simplify both deployment and rollback of releases. It can also simplify the tooling you’ll need to build for your deployment pipeline.
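The stage variable mechanism can be pictured with a small simulation. API Gateway lets an integration reference a variable with the `${stageVariables.name}` syntax and substitutes the value for the stage handling the request. The sketch below mimics that substitution locally; the variable name `lambdaAlias`, the alias values, and the `GetOrders` function name are assumptions for illustration.

```python
# Hypothetical stage variables, one set per API Gateway stage.
STAGE_VARIABLES = {
    "DEV": {"lambdaAlias": "dev"},
    "PROD": {"lambdaAlias": "prod"},
}

# A function reference whose alias is filled in per stage.
FUNCTION_REF_TEMPLATE = "GetOrders:${stageVariables.lambdaAlias}"


def resolve_function_ref(template, stage):
    """Mimic stage variable substitution for the given stage."""
    ref = template
    for name, value in STAGE_VARIABLES[stage].items():
        ref = ref.replace("${stageVariables." + name + "}", value)
    return ref


dev_ref = resolve_function_ref(FUNCTION_REF_TEMPLATE, "DEV")
prod_ref = resolve_function_ref(FUNCTION_REF_TEMPLATE, "PROD")
```

With this arrangement, promoting or rolling back a release can be a matter of changing which function version an alias points to, rather than editing the API definition itself.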

As you move into a serverless model, you’ll also find that the function-based model aligns nicely with your SaaS agility goals. The following diagram illustrates how the move to more granular functions impacts your continuous delivery pipeline. Since functions are executed in isolation, they can also be deployed separately.

This smaller unit of deployment is especially helpful in SaaS environments where there is an even higher premium on maximizing uptime. It also narrows the scope of potential impact for each item you deploy, promoting more frequent releases of product features and fixes.

Focus on What Matters

While there are a number of technical, agility, and economic advantages to building a SaaS solution with a serverless architecture, the biggest advantage of serverless is that it frees you up to focus more of your energy on your application’s features and functionality. Serverless computing takes the entire notion of managing servers off your plate, allowing you to create applications that can continually change their scaling profile based on the real-time activity of your tenants.

For many teams, the real challenge of serverless computing is making the shift to a function-based application decomposition. This transition represents a fairly fundamental change in the mental model for building solutions. It may also have you reconsidering your choice of languages and tooling.

Challenges aside, the natural alignment between the values of SaaS and the principles of the serverless model is very compelling. The upsides of cost, fault tolerance, deployment agility, and managed scale make serverless computing an attractive model for SaaS providers.

About AWS SaaS Factory

AWS SaaS Factory helps organizations at any stage of the SaaS journey. Whether looking to build new products, migrate existing applications, or optimize SaaS solutions on AWS, we can help. Visit the AWS SaaS Factory Insights Hub to discover more technical and business content and best practices.

SaaS builders are encouraged to reach out to their account representative to inquire about engagement models and to work with the AWS SaaS Factory team.
