AWS Partner Network (APN) Blog

Harnessing Generative AI and Semantic Search to Revolutionize Enterprise Knowledge Management

By Nicholas Burden, Technical Evangelist – TensorIoT

By Yuxin Yang, Machine Learning Practice Manager – TensorIoT

By Tony Trinh, Sr. Partner Success Solutions Architect – AWS

By David Min, Sr. Partner Success Solutions Architect – AWS


Modern enterprises face the challenge of providing seamless access, retrieval, capture, and organization of rapidly proliferating knowledge, which is often scattered across disconnected data sources. Fortunately, generative AI and semantic search are transforming the way businesses organize, discover, and use these knowledge assets.

Large language models (LLMs), a subset of generative AI, excel at understanding unstructured data and deriving valuable insights from it. Semantic search, meanwhile, brings context awareness to retrieval: it looks past the literal keywords of a query to its underlying meaning, delivering precise and highly relevant results.

Together, generative AI and semantic search form an integrated ecosystem for intelligent knowledge management. In this post, we’ll explore how generative AI and semantic search can be harnessed to improve productivity and operational efficiency by democratizing enhanced search capabilities and generating actionable insights.

As the digitization of businesses accelerates, the value of effective knowledge management continues to rise. However, as with any substantial technological advancement, implementing these technologies presents certain challenges. The complexity of LLMs and semantic search demands a modern data strategy and specific technical skill sets.

TensorIoT is an AWS Advanced Tier Services Partner and AWS Marketplace Seller with many AWS Competencies, including Machine Learning and Conversational AI. TensorIoT enables digital transformation and greater sustainability for customers through Internet of Things (IoT), AI/ML, data and analytics, and app modernization.

Generative AI: Knowledge Extraction and Insight Generation

Large language models such as Amazon Titan Text, Anthropic Claude, and OpenAI GPT-4 are a subset of generative AI that takes text input (a prompt) and produces text output. These models are trained on internet-scale volumes of data, including news, articles, books, financial data, open-ended customer feedback, social media comments, and more, and they can generalize from what they have learned.

Building upon this knowledge, the models can perform a range of actions such as generating text, answering questions, and synthesizing long-form content into concise summaries. This helps improve operational efficiency, productivity, and overall customer experience in multiple use cases.

For instance, LLMs can condense lengthy research papers into short abstracts, allowing researchers to grasp the key points without reading the entire paper. Another example is sentiment analysis in ecommerce: LLMs can sift through thousands of reviews to help ecommerce websites understand customer sentiment toward their flagship products and make swift, informed decisions.
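As a rough illustration of the review-analysis pattern, the following Python sketch sends each review to an LLM with a one-word classification prompt and tallies the answers. The prompt wording, the three-label scheme, and the `llm` callable (any function that takes a prompt and returns the model's text reply) are assumptions made for this example, not part of a specific product.

```python
from collections import Counter
from typing import Callable, Iterable

# Labels the prompt asks the model to choose from (assumed scheme for this sketch).
LABELS = ("positive", "negative", "neutral")


def build_sentiment_prompt(review: str) -> str:
    """Build a one-review classification prompt for an LLM."""
    return (
        "Classify the sentiment of the following product review as "
        "positive, negative, or neutral. Answer with one word.\n\n"
        f"Review: {review}"
    )


def tally_sentiment(reviews: Iterable[str], llm: Callable[[str], str]) -> Counter:
    """Send each review to `llm` and count the normalized sentiment labels.

    `llm` is any function that takes a prompt string and returns the model's
    text reply. Replies that do not start with a known label count as "unparsed".
    """
    counts: Counter = Counter()
    for review in reviews:
        reply = llm(build_sentiment_prompt(review)).strip().lower()
        label = next((name for name in LABELS if reply.startswith(name)), "unparsed")
        counts[label] += 1
    return counts
```

Counting unrecognized replies as `unparsed` instead of guessing a label keeps prompt or model problems visible in the totals.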

Amazon Bedrock is a fully managed service that makes foundation models (FMs), including LLMs from Amazon and leading AI startups, available through an API. This lets you choose from a wide range of LLMs to find the model that's best suited for your use case.
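A minimal sketch of calling a model through Amazon Bedrock with the AWS SDK for Python (boto3) and the Bedrock Converse API, here asking for a summary. The model ID, Region, prompt wording, and inference settings are example values chosen for illustration; which models you can call depends on the model access enabled in your account and Region.

```python
# Example model ID (an assumption); check which models are enabled for your account.
MODEL_ID = "amazon.titan-text-express-v1"


def build_summary_messages(document: str, max_words: int = 100) -> list:
    """Build a Converse API `messages` list asking for a short summary."""
    prompt = (
        f"Summarize the following text in at most {max_words} words, "
        f"keeping only the key points.\n\n{document}"
    )
    return [{"role": "user", "content": [{"text": prompt}]}]


def summarize(document: str, model_id: str = MODEL_ID, region: str = "us-east-1") -> str:
    """Call Amazon Bedrock through boto3 and return the model's summary text."""
    import boto3  # imported here so the prompt helper works without AWS dependencies

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(
        modelId=model_id,
        messages=build_summary_messages(document),
        inferenceConfig={"maxTokens": 512, "temperature": 0.2},
    )
    # The Converse API returns the assistant message under output.message.
    return response["output"]["message"]["content"][0]["text"]
```

Because the Converse API uses the same request shape across the models it supports, trying a different LLM is largely a matter of changing `model_id`.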

Additionally, Amazon SageMaker JumpStart provides pre-trained, open-source FMs from Amazon and other leading AI companies suitable for solving a wide range of problem types to help you get a head start on your machine learning journey.

Semantic Search: Navigating Information with Precision

Semantic search provides a dramatic improvement in information retrieval by comprehending the meaning and context of words in search queries and documents. Consequently, it can deliver highly relevant and accurate results.

Semantic search transcends the limitations of traditional keyword-based search. Unlike conventional methods that rely solely on exact matches, semantic search understands the context and meaning behind words (semantic relationships) and the structure that connects words within a query or passage (syntactic relationships). This makes search tools more intuitive and efficient: they retrieve more accurate results (improved precision) and miss fewer relevant results (enhanced recall).
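To make the two metrics concrete, here is a small Python helper that computes precision and recall for a single query. The document IDs in the example are hypothetical.

```python
def precision_recall(retrieved: list, relevant: set) -> tuple:
    """Return (precision, recall) for one query, assuming no duplicate results.

    precision = relevant results retrieved / all results retrieved
    recall    = relevant results retrieved / all relevant results
    """
    hits = sum(1 for doc in retrieved if doc in relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical query: 4 documents returned, 3 documents actually relevant.
p, r = precision_recall(["doc-a", "doc-b", "doc-c", "doc-d"], {"doc-a", "doc-c", "doc-e"})
print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.50 recall=0.67
```

A search system that returned fewer irrelevant documents would raise precision; one that also surfaced `doc-e` would raise recall.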

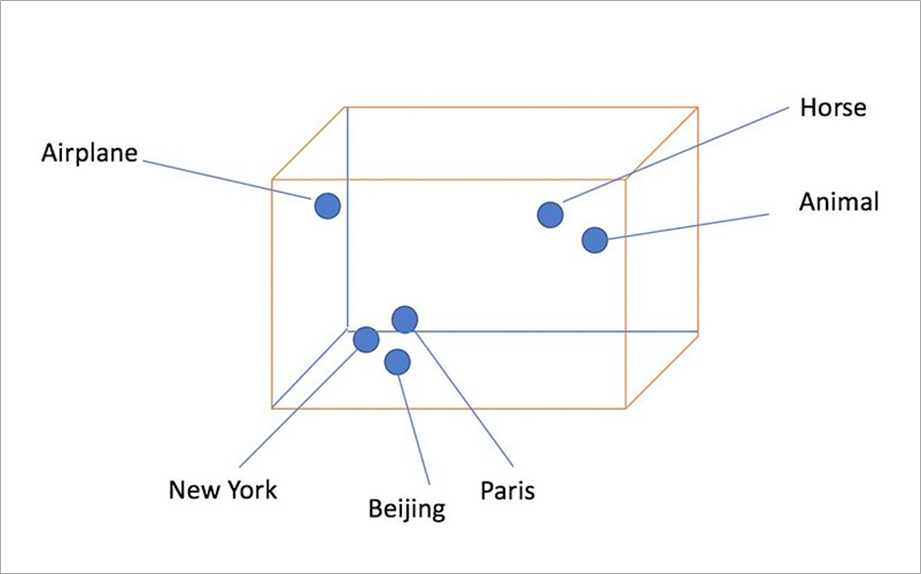

The following diagram provides a visual representation of what semantic relationships look like based on similarity of word meaning and context.

Figure 1 – Words that are semantically similar are close together in the embedding space.

To understand the mechanisms underpinning semantic search, it helps to know two techniques: semantic indexing and embedding. Together, they enable efficient information retrieval based on similarity, producing comprehensive and relevant results.

- Semantic indexing is a technique used in information retrieval to organize and categorize documents based on their meaning rather than just their words. It involves analyzing the content of each document and assigning it to a set of keywords or concepts that describe its main ideas.

- Embedding is the process of representing words or documents as numerical vectors in a high-dimensional space, in a way that captures their meaning.
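The sketch below shows the embedding step in Python: it requests an embedding for a piece of text from an Amazon Titan text embedding model on Amazon Bedrock, and compares two vectors with cosine similarity, a common way to measure how close two embeddings are. The model ID and Region are example values; the `inputText` request field and `embedding` response field follow the Titan Text Embeddings format, but check the Bedrock documentation for the model version you use.

```python
import json
import math
from typing import List, Sequence

# Example embedding model ID (an assumption); check availability in your Region.
EMBED_MODEL_ID = "amazon.titan-embed-text-v1"


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def embed(text: str, region: str = "us-east-1") -> List[float]:
    """Return a Titan text embedding for `text` via Amazon Bedrock."""
    import boto3  # only needed when actually calling AWS

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=EMBED_MODEL_ID,
        body=json.dumps({"inputText": text}),
        contentType="application/json",
        accept="application/json",
    )
    return json.loads(response["body"].read())["embedding"]
```

With a real embedding model, you would expect `cosine_similarity(embed("car"), embed("automobile"))` to be noticeably higher than `cosine_similarity(embed("car"), embed("banana"))`, which is the effect pictured in Figure 1.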

Combining LLMs and Semantic Search with Enterprise Data

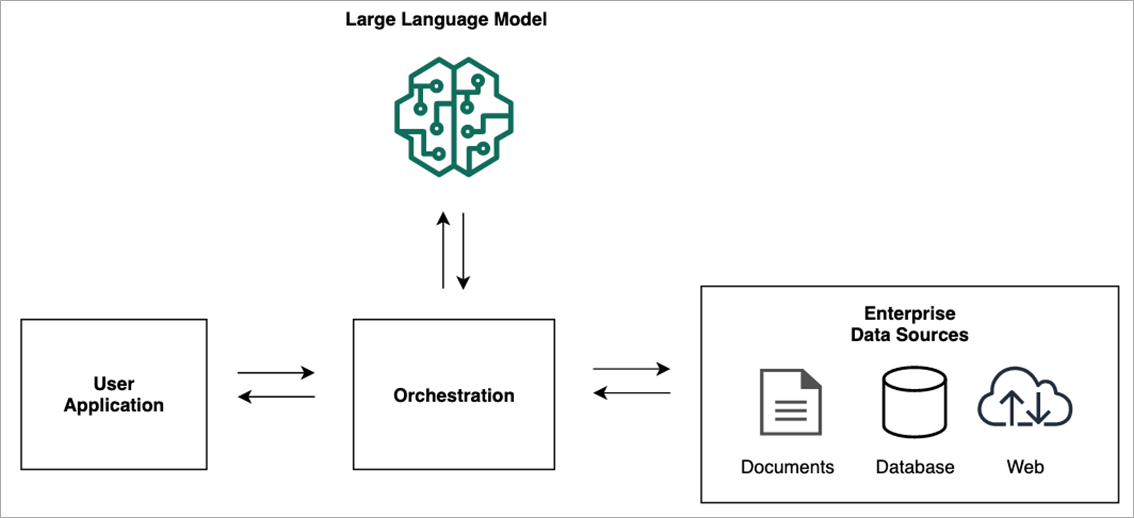

Retrieval-augmented generation (RAG) is a framework for building LLM-powered systems that make use of external or proprietary data sources. RAG integrates with many types of data sources including documents, wikis, expert systems, web pages, databases, and vector stores. Its comprehensive approach lends itself to integration with managed solutions like Agents for Amazon Bedrock, which can further streamline the data interaction process.

RAG plays a pivotal role in orchestrating the interplay between LLMs and semantic search. Through RAG, the system first retrieves relevant documents, and the model then generates a response grounded in them, combining the advantages of retrieval-based and generative methods. As a result, LLM systems can handle a wide array of topics while maintaining a specific, context-driven interaction with users.

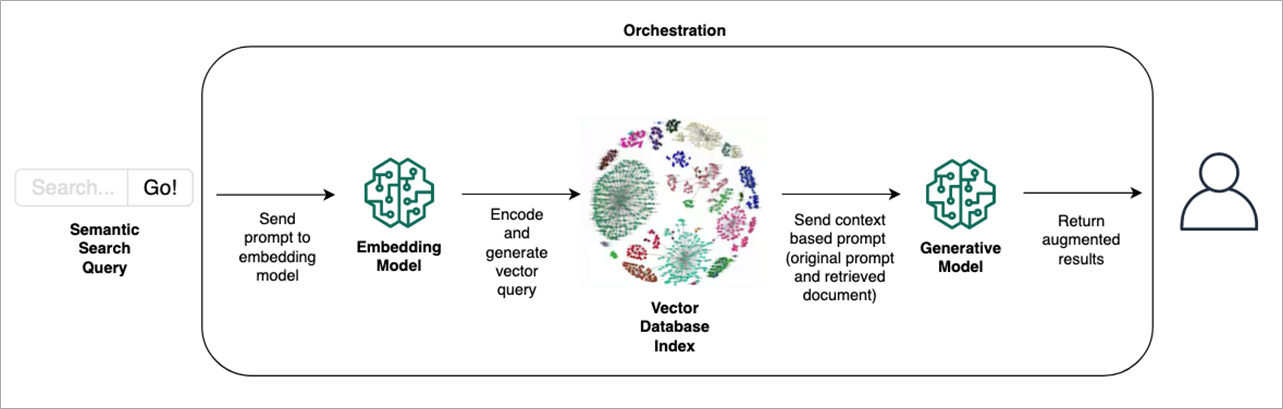

Within a RAG orchestration workflow, relevant documents or information pieces are retrieved from a vast dataset based on the user’s original prompt.

Figure 2 – Retrieval-augmented generation concise workflow.
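The retrieval step can be sketched as a nearest-neighbor search over document embeddings. In this Python example the three-dimensional vectors and file names are made up for illustration; in practice the vectors would come from an embedding model and be stored in a vector store rather than a dictionary.

```python
import heapq
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec: Sequence[float], index: Dict[str, Sequence[float]],
             k: int = 3) -> List[Tuple[str, float]]:
    """Return the k (doc_id, score) pairs whose vectors are most similar to the query."""
    scored = ((doc_id, cosine(query_vec, vec)) for doc_id, vec in index.items())
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


# Made-up 3-dimensional "embeddings" for three internal documents.
toy_index = {
    "vacation-policy.md": [0.9, 0.1, 0.0],
    "expense-guidelines.md": [0.2, 0.9, 0.1],
    "vpn-setup.md": [0.0, 0.1, 0.95],
}
# Made-up vector standing in for the embedded prompt "How many days off do I get?"
query = [0.85, 0.2, 0.05]

top = retrieve(query, toy_index, k=2)
print([doc_id for doc_id, _ in top])  # ['vacation-policy.md', 'expense-guidelines.md']
```

A linear scan like this is fine for a handful of documents; production systems typically rely on an approximate nearest-neighbor index to search millions of vectors.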

The original prompt is used to provide the model with a general overview of the task, while the retrieved documents provide the model with specific factual information that can be used to generate more accurate and informative text.

The combination of the original prompt and retrieved documents is called the “context-based” prompt. This prompt will be fed to the language model, which generates the final output. For more details, see Amazon SageMaker JumpStart’s RAG documentation and the example notebooks.
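Assembling the context-based prompt is mostly string construction. The Python sketch below numbers the retrieved passages, places them ahead of the user's question, and hands the result to a generator. The instruction wording and the `retrieve`/`generate` callables are illustrative assumptions; `generate` could, for example, wrap an Amazon Bedrock or SageMaker JumpStart model.

```python
from typing import Callable, List


def build_context_prompt(question: str, passages: List[str]) -> str:
    """Combine the user's original prompt with the retrieved passages."""
    context = "\n\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using only the information in the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


def answer(question: str,
           retrieve: Callable[[str], List[str]],
           generate: Callable[[str], str]) -> str:
    """Minimal RAG loop: retrieve passages, build the context-based prompt, generate."""
    passages = retrieve(question)
    return generate(build_context_prompt(question, passages))
```

Numbering the passages makes it easy to ask the model to cite which passage supports each part of its answer, and the "say you don't know" instruction discourages answers that go beyond the retrieved facts.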

Agents for Amazon Bedrock can complement this process, automatically converting data into a machine-readable format and augmenting the request with relevant information to generate a more accurate response.

Figure 3 – Retrieval-augmented generation detailed workflow.

For the remainder of this post, we’ll use the term “generative AI” to represent a RAG system consisting of an LLM and semantic search, with the potential for enhancement through tools like Agents for Amazon Bedrock.

Applying Generative AI in Enterprise Knowledge Management

With the rise of generative AI, we are witnessing an explosion of highly efficient conversational chatbots, personalized virtual assistants, and search systems. These applications, embedded with generative AI capabilities, are the linchpins of effective knowledge sharing, informed decision-making, improved user experience, and enhanced productivity.

A variety of real-world use cases, both internal and customer-facing, demonstrate the power and versatility of generative AI for businesses. In large organizations, for instance, locating specific information can be daunting given the limitations of keyword-matching search systems. Semantic search streamlines this process by understanding the meaning of an information request and returning a list of the most relevant documents, articles, or resources.

Another key area of application is market research and competitive intelligence. Semantic search can help businesses find relevant information about market trends, customer preferences, and competitor activities.

By understanding the semantics of business queries, generative AI systems can generate insights from diverse sources, such as news articles, social media posts, and industry reports. Having consolidated access to data across a wide range of sources can also guide investment decisions and help anticipate upcoming trends.

For customer-facing use cases, generative AI can power chatbots and virtual assistants to interpret and respond to customers’ queries with greater precision by understanding the context and intent. This improves customer satisfaction and reduces the need for human intervention, optimizing the overall process and freeing human agents to focus on more complex issues.

Additionally, in personalized marketing, generative AI can create content that resonates with specific customer segments.

Conclusion

This post highlighted the transformative potential of generative AI in revolutionizing enterprise knowledge management, both internally and externally. The technology is shifting the paradigms of how businesses handle their knowledge assets. As generative AI evolves and its adoption proliferates, its role in shaping the future of enterprise knowledge management will only become more prominent.

TensorIoT is dedicated to achieving successful generative AI outcomes for customers on AWS. Using Amazon SageMaker JumpStart, AWS Marketplace, Amazon Bedrock, and other cutting-edge AWS services, TensorIoT has a proven track record of helping customers move quickly from idea to reality when deploying large language models (LLMs).

TensorIoT’s offerings include generative AI assessments to develop roadmaps and upskill your team; proof-of-value engagements to rapidly prototype and deploy models using your data; and data science on demand, which provides a data scientist who can supplement your existing team, drive critical initiatives forward, and foster generative AI and data transformation skills within your organization.

There has never been a more opportune time for enterprises to invest in LLMs and semantic search. TensorIoT takes pride in its expertise in data analytics, machine learning, and generative AI, and in its extensive experience developing solutions that best suit customers’ needs.

TensorIoT – AWS Partner Spotlight

TensorIoT is an AWS Advanced Tier Services Partner that enables digital transformation and greater sustainability for customers through IoT, AI/ML, data and analytics, and app modernization.
