AWS Architecture Blog

Detecting solar panel damage with Amazon Rekognition Custom Labels

Enterprises perform quality control to ensure that products meet production standards and to avoid damage to their brand reputation. As sensors become cheaper and connectivity improves, more industries are adopting real-time image analysis to detect quality issues.

At the same time, advances in artificial intelligence (AI) enable greater automation, reduce overall cost and project time, and produce accurate defect detection results in manufacturing plants. As these technologies mature, AI-driven inspections are becoming more common outside the plant environment.

Overview of solution

This post describes our SOLVED (Solar Roving Eye Detector) project leveraging machine learning (ML) to identify damaged solar panels using Amazon Rekognition Custom Labels and alert operators to take corrective action.

As solar adoption increases, so does the need to detect panel damage. Using AWS managed AI services is simpler and more cost-effective than inspecting solar panels manually or building a custom production application.

Customers can capture and process videos from the field and build effective computer vision models without creating a dedicated data science team. This approach can be generalized for use cases across industries to detect defects in wind turbines, cell phone towers, automotive parts, and other field components.

Amazon Rekognition Custom Labels builds on existing Amazon Rekognition capabilities that are already trained to identify objects and scenes in millions of images across many categories. You upload a small set of training images, typically a few hundred or fewer, through the Amazon Rekognition console. Custom Labels then loads and inspects the training data, selects the appropriate ML algorithms, trains a model, and reports model performance metrics. You can then integrate your custom model into your applications through the Amazon Rekognition Custom Labels API.
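As a rough illustration of that API integration, the following is a minimal sketch in Python, assuming a boto3 Rekognition client and a model version that is already running. The helper names, the 80 percent confidence threshold, and the label names ("good" and "defective", matching the training folders described later) are hypothetical. The request builder and response parser are kept separate from the network call so the logic can be checked without AWS credentials.

```python
from typing import Any, Dict


def build_detect_request(model_arn: str, bucket: str, key: str,
                         min_confidence: float = 80.0) -> Dict[str, Any]:
    """Hypothetical helper: keyword arguments for detect_custom_labels on an S3 image."""
    return {
        "ProjectVersionArn": model_arn,
        "Image": {"S3Object": {"Bucket": bucket, "Name": key}},
        "MinConfidence": min_confidence,  # illustrative threshold, tune for your model
    }


def top_label(response: Dict[str, Any]) -> str:
    """Hypothetical helper: most confident label name, or "unknown" if none passed."""
    labels = response.get("CustomLabels", [])
    if not labels:
        return "unknown"  # no label met MinConfidence
    return max(labels, key=lambda label: label["Confidence"])["Name"]


# Usage with a real client (not executed here):
# rekognition = boto3.client("rekognition")
# response = rekognition.detect_custom_labels(**build_detect_request(arn, bucket, key))
# print(top_label(response))
```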

Walkthrough

This post introduces the SOLVED project featured at the re:Invent 2021 Builders Fair. It will:

- Review the need for solar panel damage detection

- Discuss a cloud-based approach to ingest, store, process, analyze, and detect damaged solar panels

- Present an architecture that streams video from a Raspberry Pi, stores it in Amazon Simple Storage Service (Amazon S3), processes it using the Video on Demand on AWS solution, and detects damage using Amazon Rekognition

- Introduce a console that mimics an operations center where operators can take appropriate action

- Demonstrate the integration of AWS IoT Core with a Philips Hue bulb for operator alerts

Prerequisites

Before getting started, review the following prerequisites for this solution:

- This post assumes familiarity with AWS Lambda, Amazon Kinesis Video Streams, AWS IoT Core, and AWS Identity and Access Management (IAM)

- Access to an AWS account with permissions to create the resources described in the installation steps section

- An AWS IAM user with the permissions described later in this post

- Access to a Freenove 4WD Smart Car

- Access to a Raspberry Pi

- Access to a Philips Hue Smart Bulb

The SOLVED project

The SOLVED project leverages ML to identify damaged solar panels using Amazon Rekognition Custom Labels. It involves four steps:

- Data ingestion: Live solar panel video ingested from a moving rover into an Amazon S3 bucket

- Pre-processing: Captured video split into thumbnail images

- Processing and visualization: ML models making real-time inferences to identify defective panels with a dashboard to review images and prediction scores

- Alerting: A detected defective panel triggers an MQTT message that lights a smart bulb

Figure 1 shows the SOLVED project system architecture.

Installation steps

Let’s review each of the steps in this use case.

Data ingestion

The data ingestion layer of the SOLVED project consists of a continuous video stream captured as a rover moves through a field of solar panels.

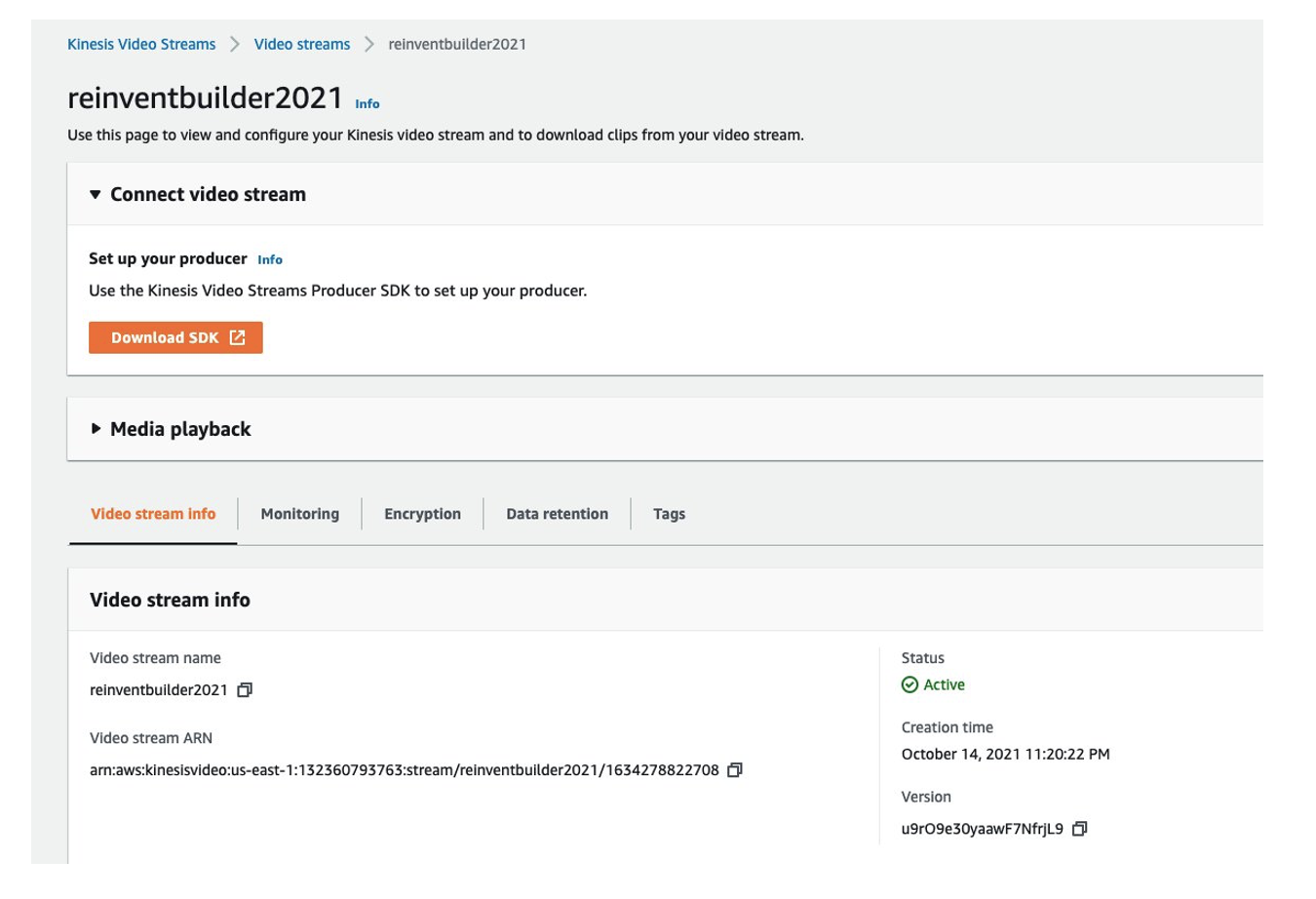

We used a Freenove 4WD Smart Car rover with a Raspberry Pi. The mounted camera captures video as the rover moves through the field. We installed the Amazon Kinesis Video Streams Producer SDK on the Pi and streamed the live video to a Kinesis video stream named reinventbuilder2021.

Figure 2 shows the Kinesis Video Stream setup window for reinventbuilder2021.

To start streaming, use the following steps.

- Create a new Kinesis video stream by following the Amazon Kinesis Video Streams Developer Guide

- Make a note of the stream's Amazon Resource Name (ARN)

- On the Pi, open a command prompt and run aws sts get-session-token to obtain temporary credentials. The IAM user must have permission to call the Kinesis Video Streams PutMedia API.

- Set the following environment variables:

export AWS_DEFAULT_REGION="us-east-1"
export AWS_ACCESS_KEY_ID="xxxxx"
export AWS_SECRET_ACCESS_KEY="yyyyy"
export AWS_SESSION_TOKEN="zzzzz"

- Start the streamer using the following command:

cd ~/amazon-kinesis-video-streams-producer-sdk-cpp/build
./kvs_gstreamer_sample reinventbuilder2021

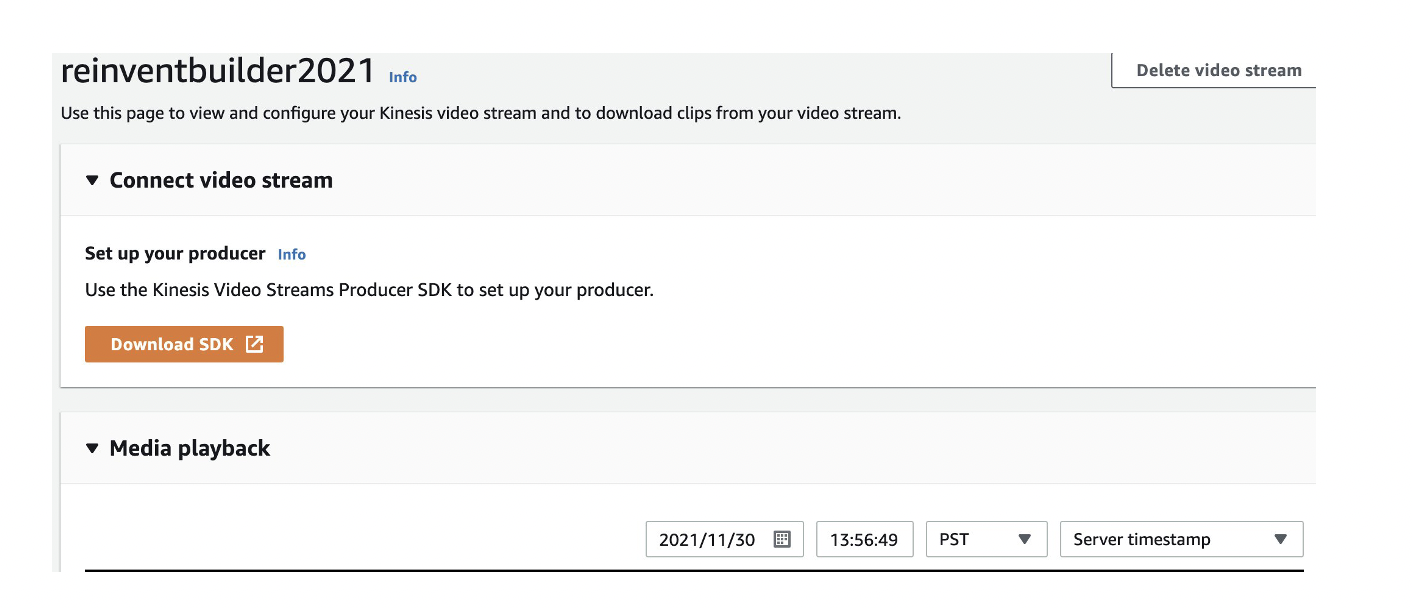

- Validate the captured stream by viewing it in the Media playback pane of the Kinesis Video Streams console.

Figure 3 shows the video stream console, including the Media playback option.
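The temporary-credentials step in the list above can be scripted instead of copying values by hand. The following is a minimal sketch, assuming the default JSON output of aws sts get-session-token is piped or saved to a file; sts_to_exports is a hypothetical helper that prints the same export lines used above.

```python
import json
import shlex
from typing import Dict


def sts_to_exports(sts_json: str, region: str = "us-east-1") -> str:
    """Hypothetical helper: turn `aws sts get-session-token` JSON into export lines."""
    creds: Dict[str, str] = json.loads(sts_json)["Credentials"]
    env = {
        "AWS_DEFAULT_REGION": region,
        "AWS_ACCESS_KEY_ID": creds["AccessKeyId"],
        "AWS_SECRET_ACCESS_KEY": creds["SecretAccessKey"],
        "AWS_SESSION_TOKEN": creds["SessionToken"],
    }
    # shlex.quote adds quoting only when a value contains shell-special characters.
    return "\n".join(f"export {name}={shlex.quote(value)}" for name, value in env.items())
```

You could, for example, read the saved JSON, write the result to a file, and source it in the shell before starting kvs_gstreamer_sample.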

Next, clip video snippets from the stream. There are two ways to do this.

You can use the Download clip button on the video stream console as shown in Figure 4.

Alternatively, you can run the following script from the command line:

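# date -v is BSD/macOS syntax; on GNU/Linux use: date -u -d "1 minute ago" "+%FT%T+0000"
ONE_MIN_AGO=$(date -u -v -1M "+%FT%T+0000")
NOW=$(date -u "+%FT%T+0000")
FILE_NAME=reinventbuilder-solved-$RANDOM.mp4
echo "$FILE_NAME"
S3_PATH=s3://videoondemandsplitter-source-e6lyof9qjv1j/
# GetClip must be called against the stream's data endpoint
KVS_DATA_ENDPOINT=$(aws kinesisvideo get-data-endpoint --stream-name reinventbuilder2021 --api-name GET_CLIP --query DataEndpoint --output text)
echo "Running get-clip for stream"
aws kinesis-video-archived-media get-clip --endpoint-url "$KVS_DATA_ENDPOINT" \
--stream-name reinventbuilder2021 \
--clip-fragment-selector "FragmentSelectorType=SERVER_TIMESTAMP,TimestampRange={StartTimestamp=$ONE_MIN_AGO,EndTimestamp=$NOW}" \
"$FILE_NAME"
echo "Copying file $FILE_NAME to $S3_PATH"
aws s3 cp "$FILE_NAME" "$S3_PATH"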

The clip is then available in the Amazon S3 source bucket created by the AWS CloudFormation stack, as shown in Figure 5.
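The date -v flag used in the clipping script is specific to BSD and macOS. As a portable alternative, here is a minimal sketch in Python that builds the same value for --clip-fragment-selector; clip_selector is a hypothetical helper, and the timestamp format simply mirrors the one the script uses.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def clip_selector(seconds_back: int = 60, now: Optional[datetime] = None) -> str:
    """Hypothetical helper: SERVER_TIMESTAMP selector for the last `seconds_back` seconds."""
    end = (now or datetime.now(timezone.utc)).astimezone(timezone.utc)
    start = end - timedelta(seconds=seconds_back)
    fmt = "%Y-%m-%dT%H:%M:%S+0000"  # same layout as the script's date "+%FT%T+0000"
    return (
        "FragmentSelectorType=SERVER_TIMESTAMP,"
        f"TimestampRange={{StartTimestamp={start.strftime(fmt)},"
        f"EndTimestamp={end.strftime(fmt)}}}"
    )
```

The returned string can be passed directly as the quoted --clip-fragment-selector argument in place of the shell date calls.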

Pre-processing

To process the video, we use the Video on Demand on AWS solution, which encodes video files with AWS Elemental MediaConvert. Out of the box, it:

1. Automatically transcodes videos uploaded to Amazon S3 into formats suitable for playback on a range of devices using MediaConvert

2. Customizes MediaConvert job settings by uploading a custom file and using different settings per input

3. Stores transcoded files in a destination Amazon S3 bucket and uses Amazon CloudFront to deliver them to end viewers

4. Provides outputs in addition to the transcoded video, including input file metadata, job settings, and output details. These outputs are stored in a separate JSON file that is available for further processing.

For our use case, we used the MediaConvert frame capture feature to create a set of thumbnails from the source videos. The thumbnails are stored in the destination Amazon S3 bucket alongside the video output.

To deploy the Video on Demand on AWS solution, launch its AWS CloudFormation stack.
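For reference, MediaConvert frame capture is configured as an output that uses the FRAME_CAPTURE codec. The following is a minimal sketch of such an output definition built in Python, assuming you supply custom job settings; the frame rate, capture limit, quality, and name modifier are illustrative values, not the settings the project used, and both helpers are hypothetical.

```python
from typing import Any, Dict


def frame_capture_output(frames_per_second: int = 1, max_captures: int = 500,
                         quality: int = 80) -> Dict[str, Any]:
    """Hypothetical helper: a MediaConvert output that writes JPEG thumbnails."""
    return {
        "NameModifier": "_thumb",  # illustrative suffix for thumbnail file names
        "Extension": "jpg",
        "ContainerSettings": {"Container": "RAW"},  # frame capture uses a raw container
        "VideoDescription": {
            "CodecSettings": {
                "Codec": "FRAME_CAPTURE",
                "FrameCaptureSettings": {
                    "FramerateNumerator": frames_per_second,
                    "FramerateDenominator": 1,
                    "MaxCaptures": max_captures,
                    "Quality": quality,
                },
            }
        },
    }


def expected_thumbnails(clip_seconds: float, frames_per_second: int, max_captures: int) -> int:
    """Hypothetical helper: approximate thumbnail count for one clip, capped by MaxCaptures."""
    return min(int(clip_seconds * frames_per_second), max_captures)
```

At one frame per second, a one-minute clip from the rover yields roughly 60 thumbnails, which gives a sense of how quickly field footage grows into a training set.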

Processing and visualization

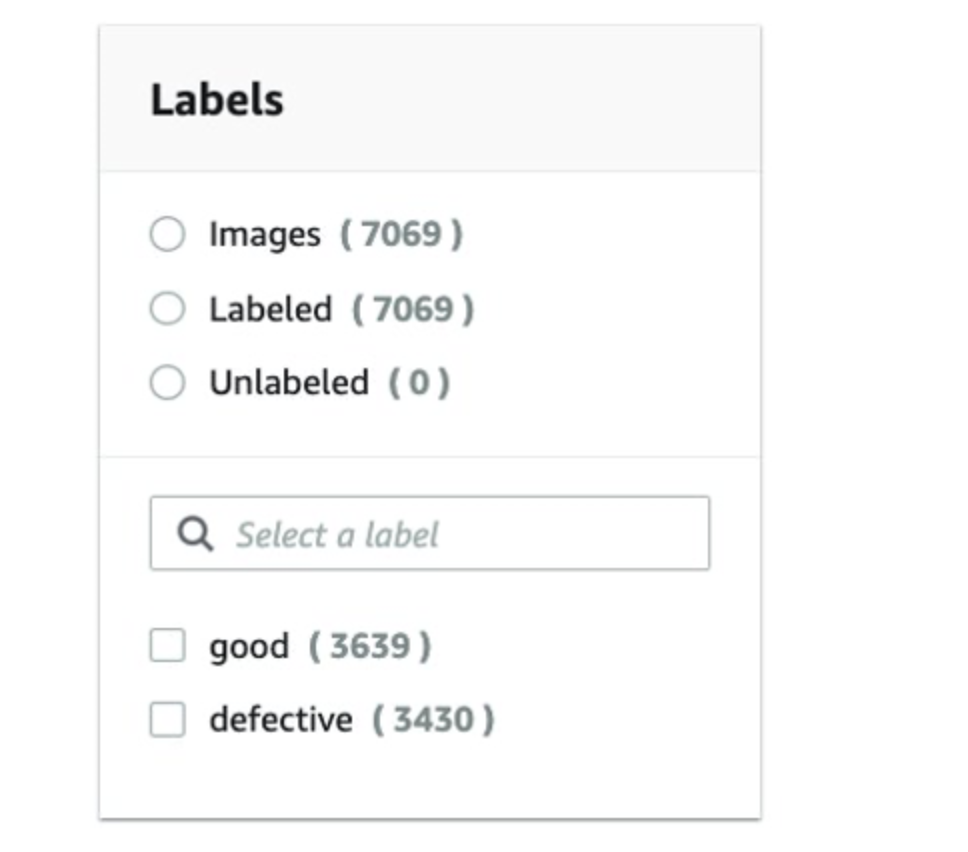

Every ML model requires high-quality training data. We began with publicly available solar panel images categorized as “good” or “defective” and uploaded them to corresponding folders in an Amazon S3 bucket.

Next, we configured Amazon Rekognition Custom Labels to use the folder names as the labels for training and deploying the model. We then tested the model on images captured by the rover.
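Custom Labels can assign image-level labels from the names of the S3 folders that contain the images, which is how the good and defective folders become labels. The sketch below mirrors that convention locally so you can check a dataset before importing it. It assumes keys of the form prefix/label/file.jpg; label_from_key and class_balance are hypothetical helpers, and the balance check is simply a sanity test that both classes are well represented.

```python
from collections import Counter
from typing import Dict, Iterable


def label_from_key(key: str) -> str:
    """Hypothetical helper: use the parent folder of an S3 object key as its label."""
    parts = key.split("/")
    if len(parts) < 2:
        raise ValueError(f"key has no parent folder: {key}")
    return parts[-2]


def class_balance(keys: Iterable[str]) -> Dict[str, float]:
    """Hypothetical helper: fraction of images per label."""
    counts = Counter(label_from_key(k) for k in keys)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}
```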

We then used the rover to record videos of good and damaged solar panels over an extended period and labeled the footage accordingly. MediaConvert split the videos into individual frames, giving us a well-labeled dataset that we used to train our model with Amazon Rekognition Custom Labels.

We used the model endpoint to run inference on solar panels with varying damage footprints across multiple locations. AWS Elemental MediaConvert expedited curating the training set, and creating the model and endpoint with Amazon Rekognition was straightforward.

As shown in Figure 6, we used a training set of 7,000 images with an even mix of good and damaged panels.

Examples of good panel images are depicted in Figure 7.

Examples of damaged panel images are depicted in Figure 8.

In this use case, the model achieved 90 percent accuracy.
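Accuracy is only one view of model quality; Custom Labels also reports metrics such as precision, recall, and F1 score. The following worked sketch shows how these relate, treating "defective" as the positive class. binary_metrics is a hypothetical helper that takes confusion-matrix counts you would read from your own evaluation results.

```python
from typing import Dict


def binary_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Hypothetical helper: accuracy, precision, recall, F1 with "defective" as positive."""
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }
```

When most inspected panels are good, a model can miss a noticeable share of defective panels and still report high accuracy, so recall on the defective class is worth checking alongside accuracy for an alerting use case like this one.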

To visualize the results, we used AWS Amplify to build an operator interface for identifying damaged panels.

Figure 9 shows screenshots from the operator dashboard with output from the Amazon Rekognition Custom Labels model for good and defective panels.

Alerting

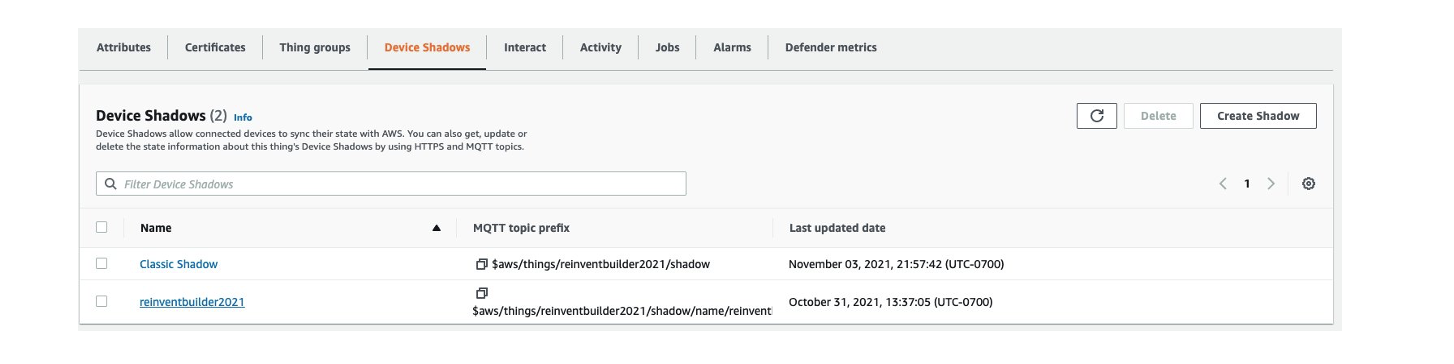

Maintenance teams must be notified of defective panels so they can take corrective action. To create alerts, we configured AWS IoT Core to send MQTT messages that are relayed to a Philips Hue smart bulb, with a red light indicating a defective panel. To set up the Philips Hue API, follow the How to develop for Hue guide.

For example, the following request to the Hue bridge API turns the light green:

PUT https://192.xx.xx.xx/api/xxxxxxx/lights/1/state

{"on":true, "sat":254, "bri":254,"hue":20000}

Sending the same request with the following body turns the light red:

{"on":true, "sat":254, "bri":254,"hue":1000}
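The same Hue request can be built with Python's standard library. The following is a minimal sketch, assuming the Hue bridge REST endpoint shown above; the bridge address and username in the usage note are placeholders, hue_state_request is a hypothetical helper, and the request is only constructed here, not sent.

```python
import json
import urllib.request

# Hue values from the examples above: 20000 was used for green, 1000 for red.
HUE_GREEN = 20000
HUE_RED = 1000


def hue_state_request(bridge: str, username: str, light_id: int, hue: int) -> urllib.request.Request:
    """Hypothetical helper: build (but do not send) a PUT that sets one light's color."""
    body = {"on": True, "sat": 254, "bri": 254, "hue": hue}
    url = f"https://{bridge}/api/{username}/lights/{light_id}/state"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )
```

Once the bridge address and username are filled in, passing the request to urllib.request.urlopen sends it to the bridge.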

We set up a client on the Pi that subscribes to an AWS IoT Core MQTT topic and sends the corresponding request to the Philips Hue API.

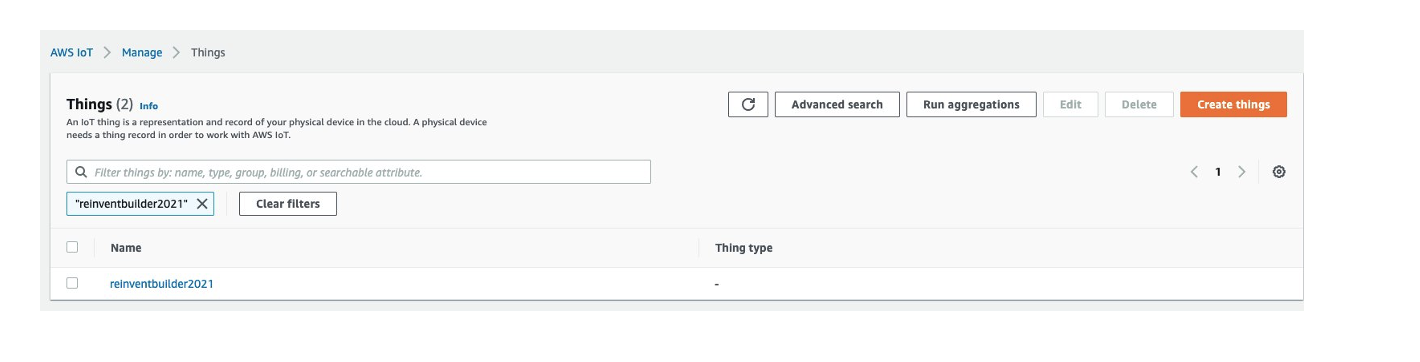

To connect a device to AWS IoT, complete these steps:

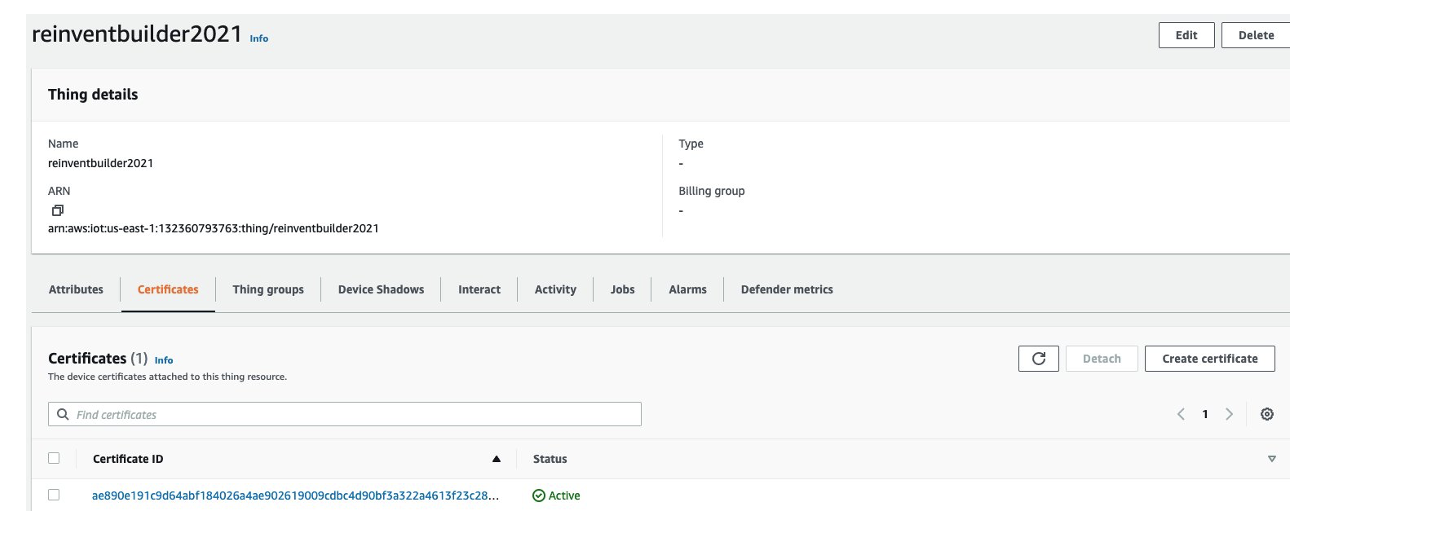

1. Create an AWS IoT thing. An AWS IoT thing represents a physical device (in this case, the Raspberry Pi) and contains static device metadata, as shown in Figure 10.

2. Create a device certificate, which the device needs to connect to and authenticate with AWS IoT. An example is shown in Figure 11.

3. Attach an AWS IoT policy to each device certificate. Policies determine which AWS IoT resources the device can access. In this case, we allowed iot:*, giving the device access to all AWS IoT resources, as shown in Figure 12.
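The permissive policy described in step 3 corresponds to a policy document like the one built below. This is a minimal sketch; iot_policy_document is a hypothetical helper, and its output is what you would supply as the policy document when creating the policy (for example, with aws iot create-policy). The optional actions parameter lets you pass a narrower list instead of iot:*.

```python
import json
from typing import List, Optional


def iot_policy_document(actions: Optional[List[str]] = None, resource: str = "*") -> str:
    """Hypothetical helper: AWS IoT policy JSON; defaults to the iot:* policy from step 3."""
    statement = {
        "Effect": "Allow",
        "Action": actions or ["iot:*"],
        "Resource": resource,
    }
    return json.dumps({"Version": "2012-10-17", "Statement": [statement]}, indent=2)
```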

Devices and other clients use an AWS IoT root CA certificate to authenticate the server they’re communicating with. For more on how devices authenticate with AWS IoT Core, see Server authentication in the AWS IoT Core Developer Guide. Copy the certificate chain to the Raspberry Pi.

For communication with the Philips Hue bridge, we used the Qhue Python wrapper, as shown in Figure 13.
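Putting these pieces together, the Pi client's main job is to map each incoming MQTT message to a light state. The following is a minimal sketch, assuming a hypothetical JSON payload with a status field and a bridge object that follows Qhue's bridge.lights[id].state(...) call style. The MQTT subscription itself (for example, through an AWS IoT Device SDK callback) is left out, and light_state_for and handle_message are hypothetical names.

```python
import json
from typing import Any, Dict

HUE_GREEN = 20000  # values from the Hue examples earlier in this post
HUE_RED = 1000


def light_state_for(payload: bytes) -> Dict[str, Any]:
    """Map a hypothetical payload such as b'{"status": "defective"}' to a light state."""
    message = json.loads(payload)
    hue = HUE_RED if message.get("status") == "defective" else HUE_GREEN
    return {"on": True, "sat": 254, "bri": 254, "hue": hue}


def handle_message(bridge: Any, payload: bytes, light_id: int = 1) -> None:
    """Apply the state through a Qhue-style bridge object (assumed interface)."""
    bridge.lights[light_id].state(**light_state_for(payload))
```

Keeping the payload-to-state mapping separate from the bridge call makes the alert logic easy to test without a bulb attached.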

The authors presented a demo of this solution at the re:Invent 2021 Builders Fair.

Clean up

If you used the CloudFormation stack, delete it to avoid unexpected future charges. Also delete the Amazon S3 buckets and stop any running Amazon Rekognition Custom Labels models, which continue to accrue charges while they run.
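If you prefer to script the cleanup, the following is a minimal sketch, assuming you substitute your own stack name, model version ARN, and stream ARN, and pass in boto3 clients keyed by service name. cleanup_plan and run_plan are hypothetical helpers built on the boto3 operations stop_project_version, delete_stream, and delete_stack. Deleting S3 buckets is left out because a bucket must be emptied before it can be deleted.

```python
from typing import Any, Dict, List, Tuple

Call = Tuple[str, str, Dict[str, str]]


def cleanup_plan(stack_name: str, model_version_arn: str, stream_arn: str) -> List[Call]:
    """Hypothetical helper: list the API calls that release this post's billable resources."""
    return [
        # Stop the running Custom Labels model first; it accrues charges while running.
        ("rekognition", "stop_project_version", {"ProjectVersionArn": model_version_arn}),
        ("kinesisvideo", "delete_stream", {"StreamARN": stream_arn}),
        ("cloudformation", "delete_stack", {"StackName": stack_name}),
    ]


def run_plan(clients: Dict[str, Any], plan: List[Call]) -> None:
    """Hypothetical helper: execute each call with the matching client, e.g. clients["rekognition"]."""
    for service, operation, kwargs in plan:
        getattr(clients[service], operation)(**kwargs)
```

Building the plan separately lets you print and review the calls before running them against real clients, for example clients = {name: boto3.client(name) for name in ("rekognition", "kinesisvideo", "cloudformation")}.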

Conclusion

Amazon Rekognition helps customers collect images in the field and apply AI-based analysis to interpret the condition of assets within the images.

In this post, you learned how to configure the Kinesis Video Streams producer on a Raspberry Pi to stream captured video to Amazon Kinesis Video Streams. You also learned how to save video clips to Amazon S3 and use the Video on Demand on AWS solution.

Using AWS Elemental MediaConvert, we transcoded the videos and created a set of thumbnails from the source videos. We then used Amazon Rekognition Custom Labels to train and deploy models for solar panel damage detection. Finally, we configured AWS IoT Core to send MQTT messages that drive a Philips Hue smart bulb for notifications.

Together, these components form a serverless architecture on AWS for detecting defective solar panels. This reference architecture can be adapted to solve inspection and damage detection problems in other industries.