AWS Architecture Blog

Microservices discovery using Amazon EC2 and HashiCorp Consul

These days, large organizations typically have microservices environments that span across cloud platforms, on-premises data centers, and colocation facilities. The reasons for this vary but frequently include latency, local support structures, and historic architectural decisions. However, due to the complex nature of these environments, efficient mechanisms for service discovery and configuration management must be implemented to support operations at scale. This is an issue also faced by Nomura.

Nomura is a global financial services group with an integrated network spanning over 30 countries and regions. By connecting markets East & West, Nomura services the needs of individuals, institutions, corporates, and governments through its three business divisions: Retail, Investment Management, and Wholesale (Global Markets and Investment Banking). E-Trading Strategy Foreign Exchange sits within Global Markets, and focuses on all quantitative analysis and technical aspects of electronic FX flows. The team builds a number of innovative solutions for clients, all of which need to operate in an ultra-low latency environment to be competitive. The focus is to build high-quality engineered platforms that can handle all aspects of Nomura’s growing 24 hours a day, 5-and-a-half days a week FX business.

In this blog post, we share the solution we developed for Nomura and how you can build a service discovery mechanism that uses a hierarchical rule-based algorithm. We use the flexibility of Amazon Elastic Compute Cloud (Amazon EC2) and third-party software, such as SpringBoot and Consul. The algorithm supports features such as service discovery by service name, Domain Name System (DNS) latency, and custom provided tags. This helps customers automate deployments, since services are able to auto-discover and connect with other services. Based on provided tags, customers can implement environment boundaries so that a service doesn’t connect to an unintended service. Finally, we built a failover mechanism, so that if a service becomes unavailable, an alternative service is provided (based on given criteria).

After reading this post, you can use the provided assets in the open source repository to deploy the solution in your sandbox environment. The Terraform and Java code that accompanies this post can be amended as needed to suit individual requirements.

Overview of solution

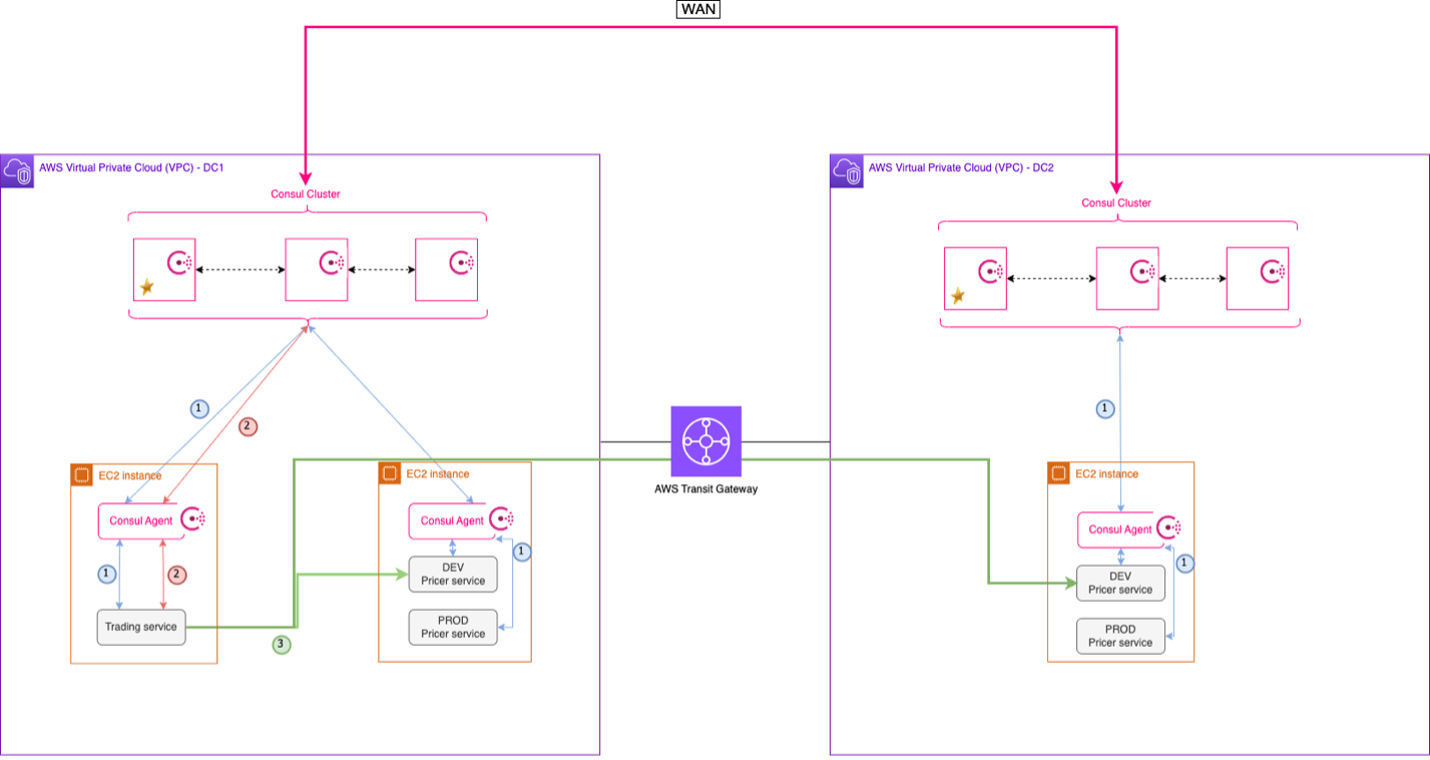

The solution is composed of a microservices platform that is spread over two different data centers, and a Consul cluster per data center. We use two Amazon Virtual Private Clouds (VPCs) to model geographically distributed Consul “data centers”. These VPCs are then connected via an AWS Transit Gateway. By permitting communication across the different data centers, the Consul clusters can form a wide-area network (WAN) and have visibility of service instances deployed to either. The SpringBoot microservices use the Spring Cloud Consul plugin to connect to the Consul cluster. We have built a custom configuration provider that uses Amazon EC2 instance metadata service to retrieve the configuration. The configuration provider mechanism is highly extensible, so anyone can build their own configuration provider.
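As a rough illustration of what such an extension point could look like, the sketch below defines a hypothetical `ConfigurationProvider` interface and one implementation that reads instance tags. The interface and class names are assumptions made for this example and are not taken from the repository. The `fetch` function stands in for an HTTP GET against the instance metadata service, which exposes tags under `/latest/meta-data/tags/instance` only when access to tags in instance metadata is enabled for the instance.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;

/** Hypothetical extension point: anything that can supply key/value configuration. */
interface ConfigurationProvider {
    Map<String, String> load();
}

/** Reads instance tags through a path-to-body fetcher (an HTTP GET in a real deployment). */
final class InstanceTagProvider implements ConfigurationProvider {

    static final String TAGS_PATH = "/latest/meta-data/tags/instance";

    private final Function<String, String> fetch;

    InstanceTagProvider(Function<String, String> fetch) {
        this.fetch = fetch;
    }

    @Override
    public Map<String, String> load() {
        Map<String, String> tags = new LinkedHashMap<>();
        // The tags path lists one tag key per line; each value lives at <path>/<key>.
        for (String line : fetch.apply(TAGS_PATH).split("\n")) {
            String key = line.trim();
            if (!key.isBlank()) {
                tags.put(key, fetch.apply(TAGS_PATH + "/" + key).trim());
            }
        }
        return tags;
    }
}
```

Because the fetcher is injected, the same class can be exercised with a plain map in a unit test, and other sources (a file, environment variables) could implement the same interface.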

The major components of this solution are:

- Sample microservices built using Java and SpringBoot, and deployed in Amazon EC2 with one microservice instance per EC2 instance

- A Consul cluster per data center, with one Consul agent per EC2 instance

- A custom service discovery algorithm

Figure 1. Multi-VPC infrastructure architecture

A typical flow for a microservice would be to (1) boot up, (2) retrieve relevant information from the EC2 Metadata Service (such as tags), and (3) use it to register itself with Consul. Once a service is registered with Consul, it can discover services to integrate with, and it can be discovered by other services.
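In this solution the Spring Cloud Consul plugin handles registration. To make the registration step more concrete, the following sketch builds the kind of JSON body that Consul's agent HTTP API accepts on `PUT /v1/agent/service/register`. The class name, the choice to carry the tags in the `Meta` field, and the example values are assumptions made for illustration; the repository may encode them differently.

```java
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

final class RegistrationSketch {

    /** Builds the JSON body for Consul's PUT /v1/agent/service/register endpoint. */
    static String registrationBody(String name, String id, int port, Map<String, String> tags) {
        // Sort the keys so the output is deterministic, then render them as the "Meta" object.
        String meta = new TreeMap<String, String>(tags).entrySet().stream()
                .map(e -> quote(e.getKey()) + ":" + quote(e.getValue()))
                .collect(Collectors.joining(",", "{", "}"));
        return "{\"Name\":" + quote(name)
                + ",\"ID\":" + quote(id)
                + ",\"Port\":" + port
                + ",\"Meta\":" + meta + "}";
    }

    /** Minimal JSON string quoting: escapes backslashes and double quotes only. */
    private static String quote(String value) {
        return "\"" + value.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }
}
```

A production service would normally rely on a JSON library and on the Spring Cloud Consul configuration rather than on hand-built strings; the sketch only shows which pieces of information travel from the instance tags into the Consul catalog.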

An important component of this service discovery mechanism is a custom algorithm that performs service discovery based on the tags created when registering the service with Consul.

Figure 2. Service discovery flow

The service flow shown in Figure 2 is as follows:

- The Consul agent deployed on the instance registers to the local Consul cluster, and the service registers to its Consul agent.

- The Trading service looks up available Pricer services via API calls.

- The Consul agent returns the list of available Pricer services, so that the Trading service can query a Pricer service.
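The lookup step in this flow can be sketched as a set of queries against the local Consul agent. The example below builds one request URI per Consul datacenter using Consul's health endpoint (`/v1/health/service/<name>` with the `passing` and `dc` query parameters). The class name, the agent address `http://localhost:8500`, and the datacenter names are assumptions for illustration; the sample application may perform the lookup differently, for example through Spring Cloud Consul.

```java
import java.net.URI;
import java.util.List;
import java.util.stream.Collectors;

final class PricerLookupSketch {

    /** Assumed address of the Consul agent running on the same instance. */
    static final String LOCAL_AGENT = "http://localhost:8500";

    /** Builds one health query per datacenter, in the order they should be tried. */
    static List<URI> lookupUris(String service, List<String> datacenters) {
        return datacenters.stream()
                .map(dc -> URI.create(LOCAL_AGENT + "/v1/health/service/" + service
                        + "?passing=true&dc=" + dc))
                .collect(Collectors.toList());
    }
}
```

Calling `PricerLookupSketch.lookupUris("pricer", List.of("dc1", "dc2"))` yields the local datacenter query first and the remote one second, which is one simple way to prefer nearby instances before falling back across the WAN.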

Walkthrough

Following are the steps required to deploy this solution:

- Provision the infrastructure using Terraform. The application .jar file and the Consul configuration are deployed as part of it.

- Test the solution.

- Clean up AWS resources.

The steps are detailed in the next section, and the code can be found in this GitHub repository.

Prerequisites

- Install Git

- Install Terraform

- Install Packer

- Install AWS CLI

- An AWS account

- A Consul Enterprise License. If you don’t have an Enterprise license, you can contact the HashiCorp Support Team to request a trial license.

Deployment steps

Note: The default AWS Region used in this deployment is ap-southeast-1. If you’re working in a different AWS Region, make sure to update it.

Clone the repository

First, clone the repository that contains all the deployment assets:

git clone https://github.com/aws-samples/geographical-hierarchical-service-lookup-with-consul-on-aws

Build Amazon Machine Images (AMIs)

1. Build the Consul Server AMI in AWS

Go to the ~/deployment/scripts/amis/consul-server/ directory and build the AMI by running:

packer build .

The output should look like this:

==> Builds finished. The artifacts of successful builds are:

--> amazon-ebs.ubuntu20-ami: AMIs were created:

ap-southeast-1: ami-12345678910

Make a note of the AMI ID. This will be used as part of the Terraform deployment.

2. Build the Consul Client AMI in AWS

Go to ~/deployment/scripts/amis/consul-client/ directory and build the AMI by running:

packer build .

The output should look like this:

==> Builds finished. The artifacts of successful builds are:

--> amazon-ebs.ubuntu20-ami: AMIs were created:

ap-southeast-1: ami-12345678910

Make a note of the AMI ID. This will be used as part of the Terraform deployment.

Prepare the deployment

There are a few steps that must be accomplished before applying the Terraform configuration.

1. Update deployment variables

- In a text editor, go to the directory ~/deployment/

- Edit the variable file template.var.tfvars.json by adding the variable values, including the AMI IDs previously built for the Consul Server and Client

Note: The key pair name should be entered without the “.pem” extension.

2. Place the application file .jar in the root folder ~/deployment/

Deploy the solution

To deploy the solution, run the following commands from the terminal:

export VAR_FILE=template.var.tfvars.json

terraform init && terraform plan --var-file=$VAR_FILE -out plan.out

terraform apply plan.out

Validate the deployment

All the EC2 instances have been deployed with AWS Systems Manager access, so you can connect privately to the terminal using the AWS Systems Manager Session Manager feature.

To connect to an instance:

1. Select an instance

2. Click Connect

3. Go to Session Manager tab

Using Session Manager, connect to one of the Consul servers and run the following commands:

consul members

This command shows you the list of all Consul servers and clients connected to this cluster.

consul members -wan

This command shows you the list of all Consul servers connected to this WAN environment.

To see the Consul User Interface:

1. Open your terminal and run:

aws ssm start-session --target <instanceID> --document-name AWS-StartPortForwardingSession --parameters '{"portNumber":["8500"],"localPortNumber":["8500"]}' --region <region>

Where <instanceID> is the instance ID of one of the Consul servers, and <region> is the AWS Region.

Using Systems Manager port forwarding allows you to connect privately to the instance via a browser.

2. Open a browser and go to http://localhost:8500/ui

3. Find the Management Token ID in AWS Secrets Manager in the AWS Management Console

4. Log in to the Consul UI using the Management Token ID

Test the solution

Connect to the trading instance and query the different services:

curl http://localhost:9090/v1/discover/service/pricer

curl http://localhost:9090/v1/discover/service/static-data

This deployment assumes that the Trading service queries the Pricer and Static-Data services, and that services are returned based on an order of precedence (see Table 1 following):

| Service | Precedence | Customer | Cluster | Location | Environment |
|---|---|---|---|---|---|
| TRADING | 1 | ACME | ALPHA | DC1 | DEV |
| PRICER | 1 | ACME | ALPHA | DC1 | DEV |
| PRICER | 2 | ACME | ALPHA | DC2 | DEV |
| PRICER | 3 | ACME | BETA | DC1 | DEV |
| PRICER | 4 | ACME | BETA | DC2 | DEV |
| PRICER | 5 | SHARED | ALPHA | DC1 | DEV |
| PRICER | 6 | SHARED | ALPHA | DC2 | DEV |
| STATIC-DATA | 1 | SHARED | SHARED | DC1 | DEV |
| STATIC-DATA | 2 | SHARED | SHARED | DC2 | DEV |
| STATIC-DATA | 2 | SHARED | BETA | DC2 | DEV |
| STATIC-DATA | 2 | SHARED | GAMMA | DC2 | DEV |
| STATIC-DATA | -1 | STARK | ALPHA | DC1 | DEV |
| STATIC-DATA | -1 | ACME | BETA | DC2 | PROD |

Table 1. Service order of precedence
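One rule set that is consistent with Table 1 can be sketched as follows. It is inferred from the table alone and is an assumption, not necessarily the algorithm implemented in the repository: a candidate in a different environment, or owned by a different non-shared customer, is excluded (-1); the remaining candidates are ordered by customer match first, then cluster match, then location match, and candidates with equal scores share a precedence. All class and method names are hypothetical.

```java
import java.util.Arrays;
import java.util.List;

final class PrecedenceSketch {

    /** Tag value that marks a service as usable by every customer. */
    static final String SHARED = "SHARED";

    /** Tags of one service instance; the fields mirror the columns of Table 1. */
    static final class Tags {
        final String customer;
        final String cluster;
        final String location;
        final String environment;

        Tags(String customer, String cluster, String location, String environment) {
            this.customer = customer;
            this.cluster = cluster;
            this.location = location;
            this.environment = environment;
        }
    }

    /** Lower scores are preferred; -1 means the candidate must never be returned. */
    static int score(Tags caller, Tags candidate) {
        if (!candidate.environment.equals(caller.environment)) {
            return -1; // environment boundary
        }
        int customer;
        if (candidate.customer.equals(caller.customer)) {
            customer = 0;
        } else if (candidate.customer.equals(SHARED)) {
            customer = 1;
        } else {
            return -1; // belongs to another customer
        }
        int cluster = candidate.cluster.equals(caller.cluster) ? 0 : 1;
        int location = candidate.location.equals(caller.location) ? 0 : 1;
        // Customer outweighs cluster, which outweighs location.
        return customer * 4 + cluster * 2 + location;
    }

    /** Dense-ranks the candidates: 1 is best, ties share a rank, excluded ones get -1. */
    static int[] precedence(Tags caller, List<Tags> candidates) {
        int[] scores = candidates.stream().mapToInt(c -> score(caller, c)).toArray();
        int[] distinct = Arrays.stream(scores).filter(s -> s >= 0).distinct().sorted().toArray();
        int[] ranks = new int[scores.length];
        for (int i = 0; i < scores.length; i++) {
            ranks[i] = scores[i] < 0 ? -1 : Arrays.binarySearch(distinct, scores[i]) + 1;
        }
        return ranks;
    }
}
```

With the TRADING row as the caller, ranking the PRICER rows and the STATIC-DATA rows separately reproduces the precedence column of Table 1 under these assumed rules.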

To test the solution, switch services off and on in the AWS Management Console, then repeat the Trading queries to see where the traffic is redirected.
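The failover behavior you should observe during this test can be summarized with a small sketch: among the instances that are currently healthy and have a positive precedence, the one with the lowest precedence value is returned. The class, its fields, and the instance identifiers are hypothetical and only illustrate the selection rule; the actual health information would come from Consul.

```java
import java.util.Comparator;
import java.util.List;
import java.util.Optional;

final class FailoverSketch {

    /** One discovered instance with its precedence and its current health state. */
    static final class Instance {
        final String id;
        final int precedence;
        final boolean healthy;

        Instance(String id, int precedence, boolean healthy) {
            this.id = id;
            this.precedence = precedence;
            this.healthy = healthy;
        }
    }

    /** Returns the healthy instance with the best (lowest positive) precedence, if any. */
    static Optional<Instance> choose(List<Instance> instances) {
        return instances.stream()
                .filter(i -> i.healthy && i.precedence > 0) // skip stopped and excluded instances
                .min(Comparator.comparingInt((Instance i) -> i.precedence));
    }
}
```

For example, if the precedence 1 Pricer instance is stopped, the sketch selects the precedence 2 instance, and it returns an empty result when every eligible instance is down.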

Cleaning up

To avoid incurring future charges, delete the solution from ~/deployment/ in the terminal:

terraform destroy --var-file=$VAR_FILE

Conclusion

In this post, we outlined the prevalent challenge of complex globally distributed microservice architectures. We demonstrated how customers can build a hierarchical service discovery mechanism to support such an environment using a combination of Amazon EC2 and third-party software such as SpringBoot and Consul. You can deploy this solution in your sandbox environment to see whether it addresses your current challenge.
