AWS News Blog

Tag: Amazon Elastic Load Balancer

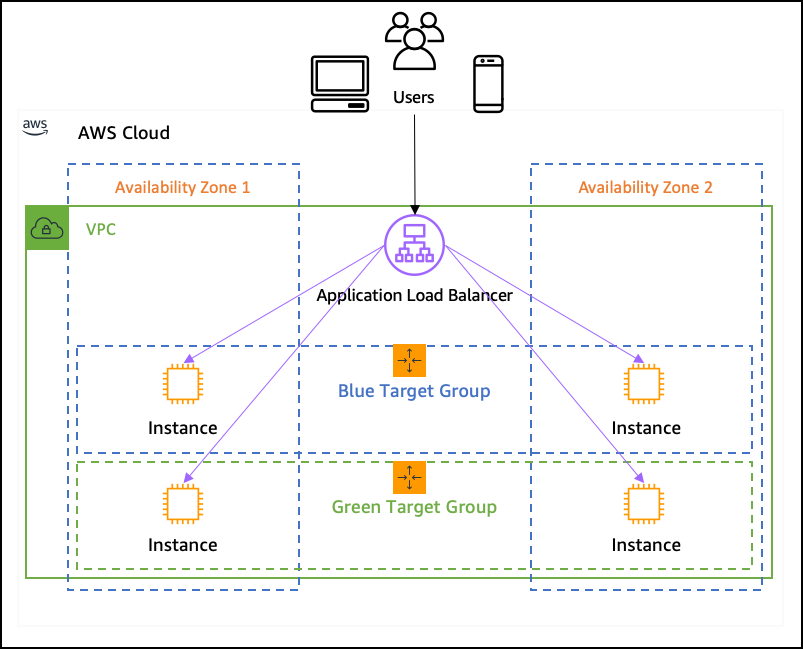

New – Application Load Balancer Simplifies Deployment with Weighted Target Groups

One of the benefits of cloud computing is the ability to create infrastructure programmatically and to tear it down when it is no longer needed. This radically changes the way developers deploy their applications. When developers used to deploy applications on premises, they had to reuse existing infrastructure for new versions of their […]

New – Application Load Balancing via IP Address to AWS & On-Premises Resources

I told you about the new AWS Application Load Balancer last year and showed you how to use it to implement Layer 7 (application) routing to EC2 instances and to microservices running in containers. Some of our customers are building hybrid applications as part of a longer-term move to AWS. These customers have told […]

New – Host-Based Routing Support for AWS Application Load Balancers

Last year I told you about the new AWS Application Load Balancer (an important part of Elastic Load Balancing) and showed you how to set it up to route incoming HTTP and HTTPS traffic based on the path element of the URL in the request. This path-based routing allows you to route requests to, for […]

AWS IPv6 Update – Global Support Spanning 15 Regions & Multiple AWS Services

We’ve been working to add IPv6 support to many different parts of AWS over the last couple of years, starting with Elastic Load Balancing, AWS IoT Core, AWS Direct Connect, Amazon Route 53, Amazon CloudFront, AWS WAF, and S3 Transfer Acceleration, all building up to last month’s announcement of IPv6 support for EC2 instances in […]

AWS Web Application Firewall (WAF) for Application Load Balancers

I’m still catching up on a couple of launches that we made late last year! Today’s post covers two services that I’ve written about in the past — AWS Web Application Firewall (WAF) and AWS Application Load Balancer: AWS Web Application Firewall (WAF) – Helps to protect your web applications from common application-layer exploits that […]

Application Performance Percentiles and Request Tracing for AWS Application Load Balancer

In today’s guest post, my colleague Colm MacCárthaigh discusses the new CloudWatch Percentile Statistics and their implications and benefits for load balancing. He also introduces a new request tracing feature that will make it easier for you to track the progress of individual requests as they arrive at a load balancer and make their way to […]

New – AWS Application Load Balancer

We launched Elastic Load Balancing (ELB) for AWS in the spring of 2009 (see New Features for Amazon EC2: Elastic Load Balancing, Auto Scaling, and Amazon CloudWatch to see just how far AWS has come since then). Elastic Load Balancing has become a key architectural component for many AWS-powered applications. In conjunction with Auto Scaling, […]

Elastic Load Balancing Update – More Ports & Additional Fields in Access Logs

Many AWS applications use Elastic Load Balancing to distribute traffic to a farm of EC2 instances. An architecture of this type is highly scalable since instances can be added, removed, or replaced in a non-disruptive way. Using a load balancer also gives the application the ability to keep on running if an instance encounters an […]