AWS Big Data Blog

Query Snowflake using Athena Federated Query and join with data in your Amazon S3 data lake

This post was last reviewed and updated July, 2022 with updates in Athena federation connector.

If you use data lakes in Amazon Simple Storage Service (Amazon S3) and use Snowflake as your data warehouse solution, you may need to join your data in your data lake with Snowflake. For example, you may want to build a dashboard by joining historical data in your S3 data lake and the latest data in your Snowflake data warehouse or create consolidated reporting.

In such use cases, Amazon Athena Federated Query allows you to seamlessly access the data from Snowflake without building extract, transform, and load (ETL) pipelines to copy or unload the data to the S3 data lake or Snowflake. This removes the overhead of creating additional ETL processes and shortens the development cycle.

In this post, we walk you through a step-by-step configuration to set up Athena Federated Query using AWS Lambda to access data in a Snowflake data warehouse.

Let’s start with discussing the solution and then detailing the steps involved.

Solution overview

Data federation refers to the capability to query data in another data store using a single interface (in our case, Amazon Athena). The following diagram depicts how a single Athena federated query uses Lambda to query the underlying data source and parallelizes query runs across many workers.

Athena is an interactive query service that makes it easy to analyze data in Amazon S3 using standard SQL. If you have data in sources other than Amazon S3, you can use Athena Federated Query to query the data in place or build pipelines to extract data from multiple data sources and store them in Amazon S3. With Athena Federated Query, you can run SQL queries across data stored in relational, non-relational, object, and custom data sources.

When a federated query is run, Athena identifies the parts of the query that should be routed to the data source connector and runs them with Lambda. The data source connector makes the connection to the source, runs the query, and returns the results to Athena. If the data doesn’t fit into Lambda RAM runtime memory, it spills the data to Amazon S3 and is later accessed by Athena.

Athena uses data source connectors that internally use Lambda to run federated queries. Data source connectors are pre-built and can be deployed from the Athena console or from the AWS Serverless Application Repository. Based on the user submitting the query, connectors can provide or restrict access to specific data elements.

To implement this solution, we complete the following steps:

- Create a secret for the Snowflake instance using AWS Secrets Manager.

- Create an S3 bucket and subfolder for Lambda to use.

- Configure Athena federation with the Snowflake instance.

- Run federated queries with Athena.

Prerequisites

Before getting started, make sure you have a Snowflake data warehouse up and running.

Create a secret for the Snowflake instance

Our first step is to create a secret for the Snowflake instance with a user name and password using Secrets Manager.

- On the Secrets Manager console, choose Secrets.

- Choose Store a new secret.

- For Secret type, select Other types of secret.

- Enter the credentials as the following key-value pairs (click +Add row to add extra values).

- Key = username, Value = <Your username>

- Key = password, Value = <Your password>

- For Secret name, enter a name for your secret. Use the prefix

snowflake-athenaso it’s easy to find.

- Leave the remaining fields at their defaults and choose Next.

- Complete your secret creation.

Create an S3 bucket for Lambda

On the Amazon S3 console, create a new S3 bucket and subfolder for Lambda to use. For this post, we use navnis-athena-federation/snowflake.

Configure Athena federation with the Snowflake instance

To configure the Athena data source connector for Snowflake with your Snowflake instance, complete the following steps:

- On the Athena console, choose Data sources in the navigation pane.

- Choose Create data source.

- For Choose a data source, search for the Snowflake connector and choose Next.

- For Data source name, provide a name for the data source (for example,

athena-snowflake). - Under Connection details, choose Create Lambda function.

- For Application name, keep the default

AthenaSnowflakeConnector. - For SecretNamePrefix, enter

snowflake-athena, which you created earlier. - For SpillBucket, enter your S3 bucket name (for example,

navnis-athena-federation). - For JDBCConnectorConfig, use the format

snowflake://jdbc:snowflake://{snowflake_instance_url}/?warehouse={warehousename}&db={databasename}&schema={schemaname}&${secretname}

For example: snowflake://jdbc:snowflake://glb37380.us-east-1.snowflakecomputing.com/?warehouse=COMPUTE_WH&db=SNOWFLAKE_SAMPLE_DATA&schema=TPCH_SF1&${snowflake-athena}

- For LambdaFunctionName, enter

snowflakeathena. - For SecurityGroupID, enter the security group ID that is associated to the VPC ID corresponding to your subnet (a security group with default configuration should work fine).

- For SpillPrefix, create a folder under the S3 bucket you created and specify the name (for example,

snowflake). - For Subnetids, enter the corresponding subnet that the Lambda function can use to access your data source. For example: subnet1, subnet2.

Please refer the Appendix section to learn more about network setting. - Select the I acknowledge check box.

- Choose Deploy.

The deployment of lambda may take 3–4 minutes to complete. Make sure that the AWS Identity and Access Management (IAM) roles have permissions to access AWS Serverless Application Repository, AWS CloudFormation, Amazon S3, Amazon CloudWatch, AWS CloudTrail, Secrets Manager, Lambda, and Athena.

For more information, see Example IAM Permissions Policies to Allow Athena Federated Query.

- After the function is created, choose the function name under Lambda function and keep the other options at their default.

- Choose Next.

- Choose Create Data Source.

Now you can see the newly created data source.

Run federated queries with Athena

Before running your federated query, be sure that you have selected Athena engine version 2. The current Athena engine version for any workgroup can be found on the Athena console.

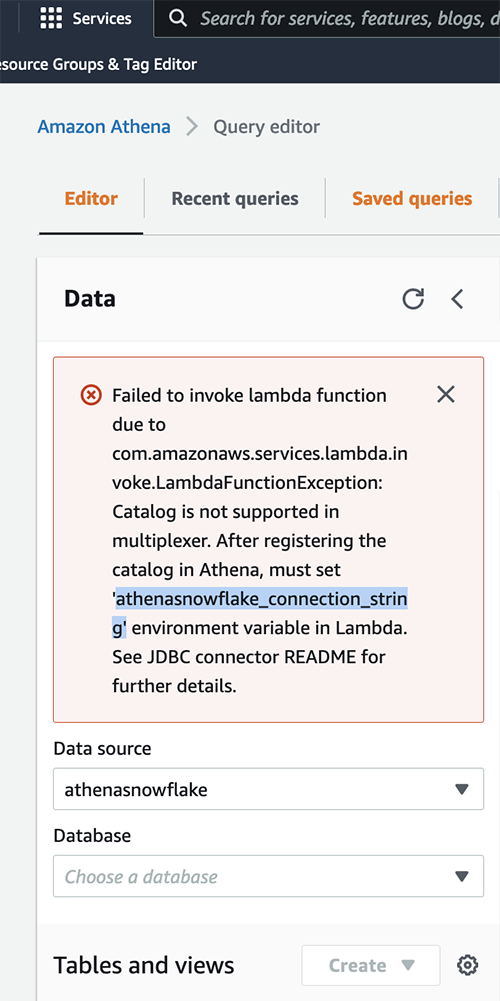

On the Athena query editor, when you first try to refresh the database, you get an error message. Copy the environment variable from this error message.

To fix this issue, complete the following steps:

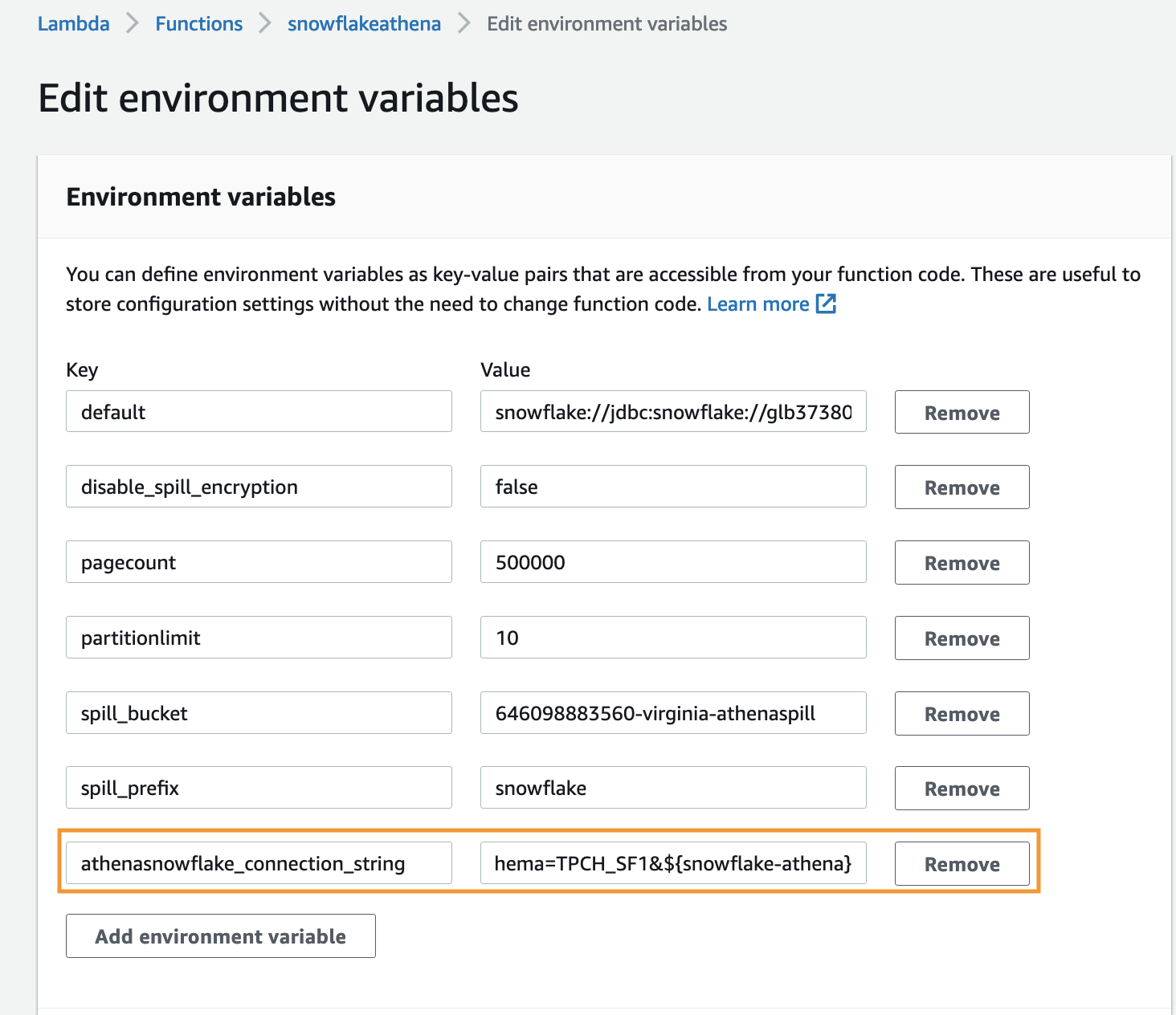

- On the Lambda console, choose Functions in the navigation pane.

- Open the function

snowflakeathena. - Go to Edit environment variables and choose Add environment variable.

- Provide the following values:

- Key –

athenasnowflake_connection_string - Value –

snowflake://jdbc:snowflake://glb37380.us-east-1.snowflakecomputing.com/?warehouse=COMPUTE_WH&db=SNOWFLAKE_SAMPLE_DATA&schema=TPCH_SF1&${snowflake-athena}

- Key –

- Choose Save.

Now, when you refresh you will able to see all the tables.

- Select

TPCH_SF1from the list and it will list all the tables available underTPCH_SF1database and run the below query:

Querying using Serverless Application Repository

Under the hood lambda function has deployed as part of Serverless Application Repository, hence you can query by using lambda:snowflakeathenato run against tables in the Snowflake database. This is the name of lambda function which we have created in step 5 of previous section of this blog.

lambda:snowflakeathenato is a reference data source connector Lambda function using the format lambda:MyLambdaFunctionName. For more information, see Writing Federated Queries.

Key performance best practices

If you’re considering Athena Federated Query with Snowflake, we recommend the following best practices:

- Athena Federated Query works great for queries with predicate filtering because the predicates are pushed down to the Snowflake database. Use filter and limited-range scans in your queries to avoid full table scans.

- If your SQL query requires returning a large volume of data from Snowflake to Athena (which could lead to query timeouts or slow performance), you may consider copying data from Snowflake to your S3 data lake.

- The Snowflake schema, which is an extension of the star schema, is used as a data model in Snowflake. In the Snowflake schema model, unload your large fact tables into your S3 data lake and leave the dimension tables in Snowflake. If large dimension tables are contributing to slow performance or query timeouts, unload those tables to your S3 data lake.

- When you run federated queries, Athena spins up multiple Lambda functions, which causes a spike in database connections. It’s important to monitor the Snowflake database

QUEUED_LOADqueue slots to ensure there is no queuing. Additionally, you can use concurrency scaling on your Snowflake database cluster to benefit from concurrent connections to queue up.

Conclusion

In this post, you learned how to configure and use Athena federated queries with Snowflake using Lambda. With Athena federated queries, you can access all your data to produce analytics and derive business value without building ETL pipelines to bring data from different data stores such as Snowflake to your data lake.

You can use the best practice considerations outlined in the post to help minimize the data transferred from Snowflake for better performance. When queries are well written for federation, the performance penalties are negligible.

For more information, see the Athena User Guide and Using Amazon Athena Federated Query.

Appendix:

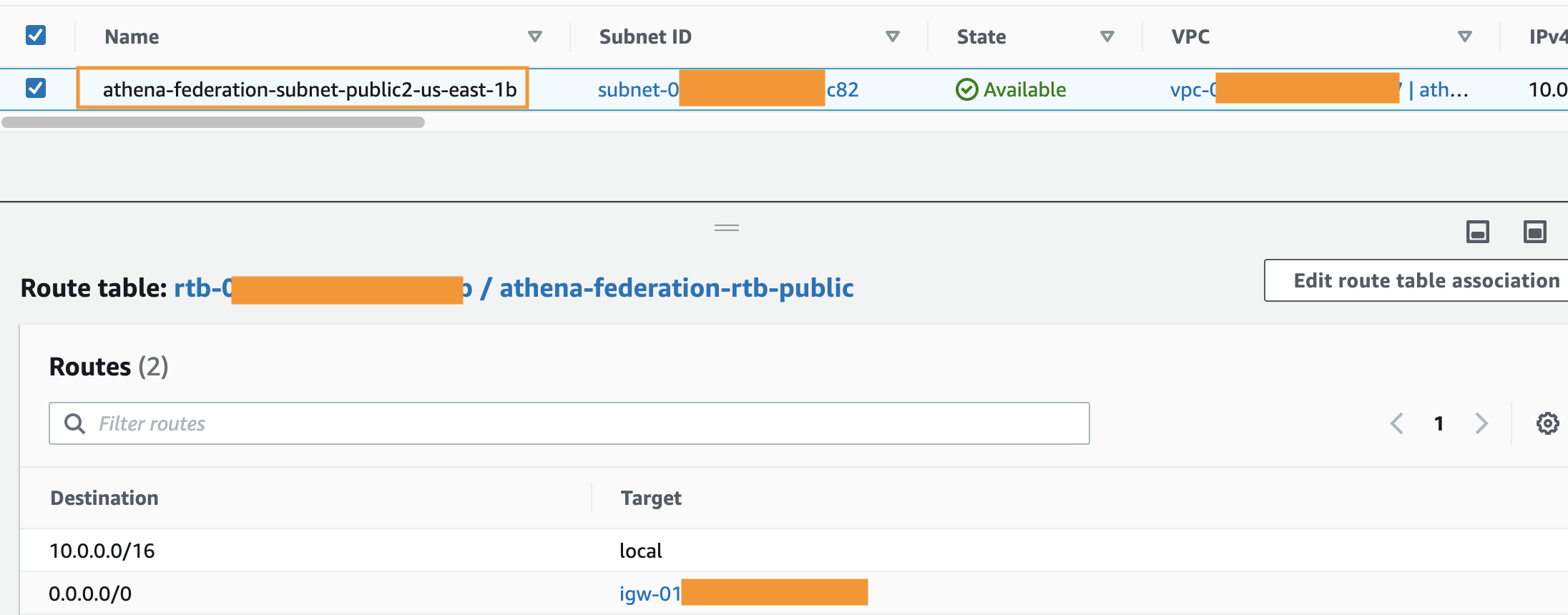

This is guidance for setting up the AWS Lambda function that’s connected to an Amazon Virtual Private Cloud (Amazon VPC) to have access to the internet.

Internet access from a private subnet requires network address translation (NAT). To give internet access to an Amazon VPC-connected Lambda function, route its outbound traffic to a NAT gateway or NAT instance in a public subnet.

- Provide private subnet IDs in your lambda function.

- Private Subnet IDs should have route table with NAT Gateway associated to it.

- NAT Gateway should have Public Subnet attached to it.

- Public gateway should have route table with Internet gateway attached.

For more information, see Internet gateways in the Amazon VPC User Guide. For an example setup, see VPC with public and private subnets (NAT).

You can find additional details here.

About the Author

Navnit Shukla is AWS Specialist Solution Architect in Analytics. He is passionate about helping customers uncover insights from their data. He has been building solutions to help organizations make data-driven decisions.

Navnit Shukla is AWS Specialist Solution Architect in Analytics. He is passionate about helping customers uncover insights from their data. He has been building solutions to help organizations make data-driven decisions.