AWS Database Blog

AWS DMS now supports R4 type instances: learn to choose the right instance for AWS DMS migrations

We are happy to announce support for R4 memory-optimized instances of Amazon EC2 in AWS Database Migration Service (AWS DMS). These instances come with more memory and higher network bandwidth to help support migrations requiring higher throughput and memory-intensive operations.

Here you can see the lineup of instances that is supported in DMS.

Now that DMS supports new instance classes, you might wonder which instance class to choose.

Which instance class is right for my situation?

Before answering this question, let’s look at how you can use each instance class with DMS:

- T2 instances: Designed for light workloads with occasional bursts in performance. We recommend using this instance class to learn about DMS and do test migrations of small, intermittent workloads.

- C4 instances: Designed for compute-intensive workloads with best-in-class CPU performance. At times, DMS can be CPU-intensive, especially when performing heterogeneous migrations and replications (for example, Oracle to PostgreSQL). C4 instances can be a good choice for these situations.

- R4 instances: Designed for memory-intensive workloads. These instances include more memory per vCPU. Ongoing migrations or replications of high-throughput transaction systems using DMS can, at times, consume large amounts of CPU and memory. R4 instances can be a good choice for these situations.
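To make "more memory per vCPU" concrete, here is a small sketch that compares a few C4 and R4 sizes. The vCPU and memory figures are the published EC2 specifications as we recall them, so treat them as assumptions and check the current instance documentation before relying on them. The `INSTANCE_SPECS` table and the `memory_per_vcpu` helper are names made up for this illustration.

```python
# Assumed EC2 specs: (vCPUs, memory in GiB). Verify against current AWS docs.
INSTANCE_SPECS = {
    "c4.2xlarge": (8, 15.0),
    "c4.4xlarge": (16, 30.0),
    "r4.2xlarge": (8, 61.0),
    "r4.4xlarge": (16, 122.0),
}


def memory_per_vcpu(instance_type: str) -> float:
    """Return GiB of memory available per vCPU for a known instance type."""
    vcpus, memory_gib = INSTANCE_SPECS[instance_type]
    return memory_gib / vcpus


if __name__ == "__main__":
    for name in INSTANCE_SPECS:
        print(f"{name}: {memory_per_vcpu(name):.3f} GiB per vCPU")
```

Under these assumed figures, an R4 instance has roughly four times the memory per vCPU of the same-size C4 instance.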

With these points in mind, let’s walk through some examples to understand where C4 and R4 type instances can fit the requirements when running migrations using AWS DMS.

Advantages of R4 in the full load phase

As we discussed in a previous blog post, PostgreSQL is a target engine for which values are handed off using a comma-separated value (CSV) file. This being the case, DMS does the following when starting a migration:

- DMS unloads data from the source table to replication instance memory to prepare a CSV file with the data. The size of this CSV file depends on the parameter maxFileSize.

- DMS saves the CSV file to disk and pushes the data to PostgreSQL using the COPY command.

When you perform this type of migration, increasing the maxFileSize value can significantly increase throughput. In our experience, raising maxFileSize to 1,048,576 KB (about 1.1 GB) significantly improves migration speed. Since version 2.x, DMS has allowed this parameter to be increased up to 30 GB.
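As a rough sketch of how this value might be set, the snippet below builds the extra connection attribute string for a PostgreSQL target endpoint and converts the KB value to decimal gigabytes. It assumes that maxFileSize is expressed in KB with 1 KB = 1,024 bytes. The helper names `max_file_size_attribute` and `kb_to_decimal_gb` are hypothetical.

```python
BYTES_PER_KB = 1024  # assumption: DMS counts 1 KB as 1,024 bytes


def max_file_size_attribute(size_kb: int) -> str:
    """Build the extra connection attribute for a given maxFileSize in KB."""
    if size_kb <= 0:
        raise ValueError("maxFileSize must be a positive number of KB")
    return f"maxFileSize={size_kb}"


def kb_to_decimal_gb(size_kb: int) -> float:
    """Convert KB (1,024-byte units) to decimal gigabytes (10**9 bytes)."""
    return size_kb * BYTES_PER_KB / 1e9


if __name__ == "__main__":
    size_kb = 1_048_576
    print(max_file_size_attribute(size_kb))
    print(f"{kb_to_decimal_gb(size_kb):.2f} GB")
```

The conversion shows why 1,048,576 KB is quoted as about 1.1 GB: it is exactly 1 GiB, which is roughly 1.07 decimal gigabytes.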

The following diagram shows memory utilization on a c4.4xlarge instance when we ran a full load for a database using a higher maxFileSize setting.

After about 30 minutes, we ran out of memory:

From the preceding test, we can conclude that using a larger maxFileSize with more tables requires more memory. This result occurs because the table data is being unloaded into the replication instance memory in parallel for multiple tables.

In comparison, we ran the same migration with the same settings on an r4.4xlarge instance. The instance comes with about four times the memory of the comparable C4 instance (c4.4xlarge). Thus, it completes some of the smaller tables faster and lets the bigger table use the extra memory available to complete the migration.

Given the increased maxFileSize that we use for this test in our environment, here is a summary of our full-load-only tests:

- c4.4xlarge with default maxFileSize: a full load of about 3.6 terabytes of data completed in about 3 days and 12 hours.

- c4.4xlarge with maxFileSize increased to 1.1 GB: the task failed due to memory pressure.

- r4.4xlarge with maxFileSize increased to 1.1 GB: a full load of about 3.6 terabytes of data completed in about 2 days and 6 hours.
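To put the two successful runs side by side, the following sketch works out the average throughput and the relative speedup from the figures quoted above. Note that the runs differ in both instance class and maxFileSize, so the speedup reflects the combination rather than the instance class alone. The function names are illustrative, and 1 TB is taken as 1,000 GB.

```python
DATA_TB = 3.6  # approximate data volume from the tests above


def to_hours(days: int, hours: int = 0) -> int:
    """Convert a days-plus-hours duration to hours."""
    return days * 24 + hours


def throughput_gb_per_hour(data_tb: float, duration_hours: float) -> float:
    """Average throughput in GB per hour, taking 1 TB = 1,000 GB."""
    return data_tb * 1000 / duration_hours


c4_default_hours = to_hours(3, 12)  # c4.4xlarge, default maxFileSize
r4_tuned_hours = to_hours(2, 6)     # r4.4xlarge, maxFileSize at 1.1 GB

if __name__ == "__main__":
    c4_rate = throughput_gb_per_hour(DATA_TB, c4_default_hours)
    r4_rate = throughput_gb_per_hour(DATA_TB, r4_tuned_hours)
    print(f"c4.4xlarge: {c4_default_hours} h, {c4_rate:.1f} GB/h")
    print(f"r4.4xlarge: {r4_tuned_hours} h, {r4_rate:.1f} GB/h")
    print(f"speedup: {c4_default_hours / r4_tuned_hours:.2f}x")
```

With these numbers, the r4.4xlarge run finished in 54 hours instead of 84, which is about 36 percent less elapsed time.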

To conclude, you might look at using the R4 instance class (especially for the full load phase) if you want to migrate huge tables cross-engine faster than C4 instances can. This suggestion applies to only certain target engines, such as MySQL-based and PostgreSQL-based engines and Amazon Redshift. A quick test migration with Amazon CloudWatch monitoring can tell you if your migration requires more memory.

Advantages of R4 in the ongoing replication phase

Before we jump into an example, let’s look at the change data capture (CDC) internals in the DMS engine.

Let’s assume that you’re running a full load plus CDC task (bulk load plus ongoing replication). In this case, the task has its own SQLite repository to store metadata and other information. Before DMS starts a full load, these steps occur:

- DMS starts capturing changes for the tables it’s migrating from the source engine’s transaction log (we call these cached changes). After full load is done, these cached changes are collected and applied on the target. Depending on the volume of cached changes, these changes can directly be applied from memory, where they are collected first, up to a set threshold. Alternatively, they can be applied from disk, where changes are spilled when they can’t be held in memory.

- After cached changes are applied, by default DMS starts a transactional apply on the target instance.
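The memory-then-disk behavior for cached changes can be pictured with a toy buffer like the one below. This is only an illustration of the idea, not how DMS implements it: the `CachedChanges` class, its byte-budget threshold, and the JSON-lines spill file are all assumptions made for the sketch.

```python
import json
import tempfile
from typing import Iterator


class CachedChanges:
    """Toy buffer: holds changes in memory up to a byte budget, then spills to disk."""

    def __init__(self, memory_limit_bytes: int) -> None:
        self.memory_limit_bytes = memory_limit_bytes
        self._in_memory = []      # serialized changes kept in memory
        self._memory_used = 0     # bytes currently held in memory
        self._spill_file = None   # temporary file, created on first spill

    @property
    def spilled(self) -> bool:
        return self._spill_file is not None

    def add(self, change: dict) -> None:
        record = json.dumps(change)
        size = len(record.encode("utf-8"))
        fits = self._memory_used + size <= self.memory_limit_bytes
        # Once spilling has started, every later change goes to disk,
        # so the original arrival order is preserved on replay.
        if not self.spilled and fits:
            self._in_memory.append(record)
            self._memory_used += size
            return
        if self._spill_file is None:
            self._spill_file = tempfile.TemporaryFile(mode="w+", encoding="utf-8")
        self._spill_file.write(record + "\n")

    def replay(self) -> Iterator[dict]:
        """Yield changes in arrival order: memory first, then the spill file."""
        for record in self._in_memory:
            yield json.loads(record)
        if self._spill_file is not None:
            self._spill_file.seek(0)
            for line in self._spill_file:
                yield json.loads(line)


if __name__ == "__main__":
    cache = CachedChanges(memory_limit_bytes=60)
    for seq in range(5):
        cache.add({"seq": seq, "op": "insert"})
    print("spilled to disk:", cache.spilled)
    print([change["seq"] for change in cache.replay()])
```

A larger memory budget keeps more changes off disk, which is the intuition behind giving the replication instance more memory when the volume of cached changes is high.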

During the cached changes apply phase and the ongoing replication phase, DMS uses two stream buffers, one each for incoming and outgoing data. DMS also uses an important component called a sorter, which is another memory buffer. The sorter has several uses; here are two important ones:

- It tracks all transactions and makes sure that it forwards only relevant transactions to the outgoing buffer.

- It makes sure that transactions are forwarded in the same commit order as on the source.

As you can see, we have three important memory buffers in this architecture for CDC in DMS. If any of these buffers experience memory pressure, the migration can have performance issues that can potentially cause failures.
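The two sorter responsibilities described above can be sketched with a small model. This is a simplified illustration and not the DMS implementation: the event tuples, the `Sorter` class, and the table names are hypothetical. The model holds the changes of every open transaction in memory and forwards a transaction only when its commit arrives, which is why many large, long-running transactions translate into memory pressure.

```python
class Sorter:
    """Toy sorter: tracks open transactions and forwards relevant ones in commit order."""

    def __init__(self, replicated_tables):
        self.replicated_tables = set(replicated_tables)
        self._open = {}      # txid -> relevant (table, row) changes seen so far
        self.outgoing = []   # committed, relevant transactions in commit order

    def handle(self, event):
        kind, txid = event[0], event[1]
        if kind == "begin":
            self._open[txid] = []
        elif kind == "change":
            table, row = event[2], event[3]
            # Keep only changes that touch tables selected for replication.
            if table in self.replicated_tables:
                self._open[txid].append((table, row))
        elif kind == "commit":
            changes = self._open.pop(txid)
            # Commits arrive in source order, so appending preserves commit order.
            if changes:
                self.outgoing.append((txid, changes))
        elif kind == "rollback":
            self._open.pop(txid, None)
        else:
            raise ValueError(f"unknown event kind: {kind}")


if __name__ == "__main__":
    sorter = Sorter(replicated_tables=["orders"])
    events = [
        ("begin", "t1"), ("begin", "t2"), ("begin", "t3"),
        ("change", "t1", "orders", {"id": 1}),
        ("change", "t2", "orders", {"id": 2}),
        ("change", "t3", "audit_log", {"id": 9}),
        ("commit", "t2"), ("commit", "t3"), ("commit", "t1"),
    ]
    for event in events:
        sorter.handle(event)
    print([txid for txid, _ in sorter.outgoing])
```

In this example, t3 is dropped because it touches no replicated table, and t2 is forwarded before t1 because it committed first, even though t1 began earlier.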

When you plug heavy workloads with a high number of transactions per second (TPS) into this architecture, you can find the extra memory provided by R4 instances useful. You can use R4 instances to hold a large number of transactions in memory and prevent memory-pressure issues during ongoing replications. Here are some tips to decide if you need to use R4 instances for ongoing replications:

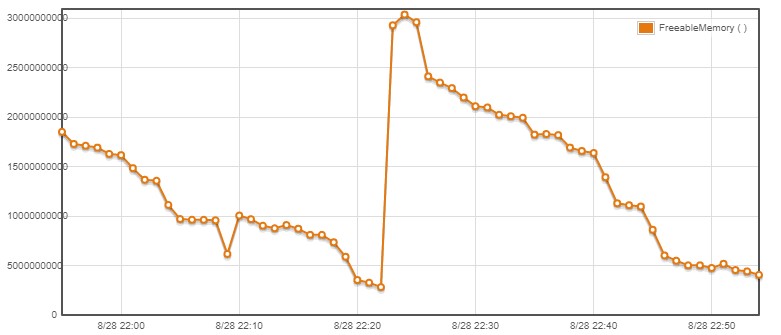

- Review your memory usage using the published CloudWatch metric showing freeable memory to see the memory utilization for an instance and a replication task. If it’s high, look at our best practices to see if the memory utilization is accounted for.

- Review our three-part series on debugging database migrations and do any debugging necessary.

- In our experience, some workloads are more memory-bound than CPU-bound in the CDC phase. If this is true of your workload, you can drop down an instance size when moving from C4 to R4, which gives you more memory and saves on costs. For example, r4.2xlarge costs less than c4.4xlarge and comes with more memory that can be used during CDC.

- Check for memory exhaustion. You can easily see memory exhaustion log messages in the DMS task CloudWatch logs.

- Add a CloudWatch alarm for freeable memory to get notified in case of high memory usage, so you can use an instance class with more memory if required.
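As one possible way to set up the alarm from the last tip, the sketch below builds the parameters for a CloudWatch alarm that fires when freeable memory stays below a threshold. It assumes the metric is published as FreeableMemory (in bytes) in the AWS/DMS namespace with a ReplicationInstanceIdentifier dimension; confirm these names in the CloudWatch console for your account. The instance identifier, SNS topic ARN, and helper names are placeholders.

```python
GIB = 1024 ** 3


def freeable_memory_alarm(instance_id: str, min_free_gib: float, sns_topic_arn: str) -> dict:
    """Build put_metric_alarm parameters for a low-freeable-memory alarm."""
    return {
        "AlarmName": f"dms-{instance_id}-low-freeable-memory",
        "Namespace": "AWS/DMS",              # assumed namespace for DMS metrics
        "MetricName": "FreeableMemory",      # assumed metric name, reported in bytes
        "Dimensions": [
            {"Name": "ReplicationInstanceIdentifier", "Value": instance_id},
        ],
        "Statistic": "Average",
        "Period": 300,                       # seconds per evaluation period
        "EvaluationPeriods": 3,              # alarm after 15 minutes below threshold
        "Threshold": float(min_free_gib * GIB),
        "ComparisonOperator": "LessThanThreshold",
        "AlarmActions": [sns_topic_arn],
    }


def create_alarm(params: dict) -> None:
    """Create the alarm. Requires the third-party boto3 package and AWS credentials."""
    import boto3

    boto3.client("cloudwatch").put_metric_alarm(**params)


if __name__ == "__main__":
    params = freeable_memory_alarm(
        "my-replication-instance",                        # placeholder identifier
        4,
        "arn:aws:sns:us-east-1:123456789012:dms-alerts",  # placeholder topic ARN
    )
    print(params["AlarmName"], params["Threshold"])
```

The alarm compares against a floor on free memory rather than a ceiling on used memory, because the metric reports how much memory is still available.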

If you take these steps and the task legitimately requires more memory, consider a switch to the R4 instance class.

To conclude, we’re excited to launch support for R4 type instances because you can use them to boost throughput on large and high-transaction workloads. R4 instances can help you concentrate more on other complex parts of the migration, like object and code conversion. Additionally, you can choose to put multiple tasks on a single large R4 DMS instance. Doing this means that you can reduce costs and overhead compared to using multiple smaller instances for multiple tasks at a given point in time.

Feel free to drop us a note in the comments section—we’re happy to get back to you as soon as possible. Happy migrating using the new memory-optimized instance class for DMS!

About the Author

Arun Thiagarajan is a database engineer with the Database Migration Service (DMS) and Schema Conversion Tool (SCT) team at Amazon Web Services. He works on database migration challenges and works closely with customers to help them realize the true potential of the DMS service. He has helped migrate hundreds of databases into the AWS Cloud using DMS and SCT.
