AWS Database Blog

Migrating to PostgreSQL Lifecycle

This is a guest post by David Rader, the vice president of product development at OpenSCG.

OpenSCG is an AWS Consulting Partner and a leading expert in PostgreSQL, helping customers migrate to, operate, and optimize PostgreSQL and other data platforms on-premises and in the cloud.

There is a lot of interest these days in migrating data from relational databases to the open source PostgreSQL. PostgreSQL is a great choice for migration, offering advanced features, high performance, rock-solid data integrity, and a flexible open source license. PostgreSQL is ANSI SQL compliant, supports drivers for nearly all development languages, and has a strong community of active committers and companies to provide support.

This post describes the overall approach for migrating an application from your current database to PostgreSQL.

Choosing a database or application

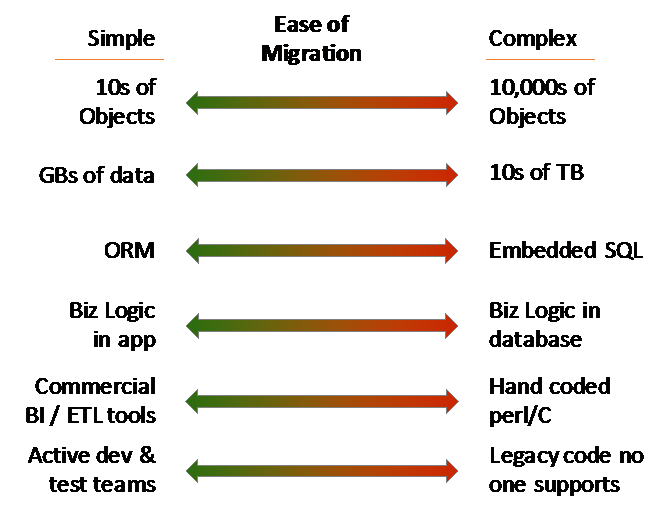

When you’re deciding which of your applications and databases to start with, you should consider several factors. If this is your company’s first PostgreSQL migration, you want to start with a less challenging, lower-risk migration to get your feet wet. Just as with any technology switch, don’t choose your mission-critical, 24/7, high-volume, main revenue generator as the first application you try to run in production on a technology you’re just learning. PostgreSQL is certainly capable of running that important application, but you should build up some experience with the technology before making a “bet-the-company” migration. If, for some reason, you do have to jump into the deep end right away, make sure that you hire experienced database administrators (DBAs) who can help you do it right the first time.

For database migrations, you need to think about the applications that use the database. In general, an in-house application or website that you control the source code for is easier to modify for a new database. A third-party commercial software product that doesn’t provide support for PostgreSQL is usually a non-starter.

Departmental applications or reporting data marts are lower-risk choices that give you an opportunity to introduce the new technology to your organization. Dashboards or extract, transfer, load (ETL) tools that work against multiple databases through ODBC or JDBC connections are great candidates because of the very high compatibility and low modification requirements.

Migration assessment

As a first step in planning your migration, analyze the application and estimate how easy or difficult it will be to migrate it from your existing database to PostgreSQL. This “Migration Assessment” stage should cover several areas, including technology, personnel, and processes.

Technology issues

The technical aspect is the easiest to evaluate. The level of effort needed to migrate the data and database code is determined by the size and complexity of the current database, the type of data access layer that is used, how many database objects there are (functions, triggers, and stored procedures), and whether any database-specific functionality is used.

You should analyze the application code in addition to the database to understand what changes might be required in the application. For example, an application that has a lot of dynamic SQL scattered throughout the code requires more effort to convert than an application with a clean data access layer.

Personnel and tool requirements

In addition to evaluating the technical issues involved, it’s important to ensure that you can continue to deploy, operate, and enhance your application on an ongoing basis. This means understanding the skills and tools that your organization needs to run and support the new database in operations. It also means knowing what skills and tools your development and testing teams will need to support a new database in your development process.

It’s critical that you fully test your converted database and modified application so that you can be confident when you launch the migrated database. This testing effort is often the most time-consuming part of a migration project.

Processes

Finally, you should ensure that your migration plays well with the rest of your business. Analyze the requirements for uptime of the new database, downtime allowed during migration, and project timing in relation to other initiatives. You should also determine whether you can freeze the current application or handle parallel development of new features.

Schema and code migration

The PostgreSQL ANSI standard SQL syntax and datatypes make converting your database schema from other relational databases a fairly straightforward process. The AWS Schema Conversion Tool (AWS SCT) can automate the conversion of most of your tables and columns. Some high-performance applications might need some additional manual tuning of the type mapping for numeric types to optimized PostgreSQL types.

AWS SCT can convert many of the triggers, procedures, sequences, permissions, and other database objects from your source database. However, due to differences in database programming languages (for example, Microsoft T-SQL or Oracle pl/SQL versus PostgreSQL PL/pgSQL), many items in the migrated code require manual resolution. There are usually simple workarounds or near equivalents that can be applied. In some cases, the functionality might not be available, or the details of how the functionality works is different enough that you have to modify the application logic for it to work correctly.

In addition to the database code, application code that interfaces with the database has to be modified. In many instances, AWS SCT can identify and make the changes needed, but again, some items might require manual conversion. The automated tooling produces a good first pass on the code, but DBAs and application developers must review and complete the conversion to produce a fully working environment.

Data migration and sync

After you convert the schema, the next step is to migrate the data from your source database. Usually you can take an iterative approach, by migrating a subset or testing dataset multiple times in development and then in a staging environment before performing the full production data migration.

There are different approaches to data migration depending on the size of database to be migrated, how quickly the data is changing, and how much downtime is permitted. The AWS Data Migration Service is a viable option in most circumstances when you are migrating to an Amazon RDS or Amazon EC2 instance. For environments that have limited network bandwidth or very high rates of data change, it may be necessary to try alternative approaches, such as converting on-premises first and then replicating to Amazon RDS, or using AWS Snowball.

If a customized approach is required, verifying that all the data is correctly synchronized via data quality scripts is critical to ensuring data integrity in the migrated database.

Testing

Testing is usually one of the largest efforts during a database migration because nearly the entire application must be validated. You must test any business logic in the database thoroughly and identify any differences in the resulting data. Automated testing scripts that work against your UI or APIs are great because they enable you to retest your application quickly when correcting issues.

In addition to functional testing, you should plan for and allocate enough time to execute performance and load testing of your migrated database and modified application. To deliver optimized performance, you often have to make small adjustments in SQL queries or application behavior.

Testing in a migration also includes testing your backup and restore procedures, high availability configuration, deployment processes, and operational monitoring.

Training

If your organization is new to PostgreSQL, your developers, DBAs, and operations teams will need training. In fact, the people who are executing the migration may need training before they begin the migration process!

During the training planning and process, you should address how to integrate PostgreSQL with your existing operations management and support processes. Many commercial monitoring tools have support for PostgreSQL, and there are also many open source options.

Production go-live

If your migration process, data synchronization, and testing efforts are thorough, the actual production go-live should be uneventful. But it’s important to have a carefully thought-out plan for the final data synchronization, configuring the application to switch to PostgreSQL, modifying DNS entries or AWS service definitions, and turning on writes to the migrated database.

If possible, you should look for ways to break up a migration go-live to reduce the “big bang” nature. For example, a common approach is to migrate a reporting database as the first step and modify ETL, application dashboards, and reports as a first phase before you migrate the main transactional part of your application database. If your application has a modular design or employs micro-services, you may be able to migrate one service (and associated database) at a time.

By breaking up the conversion into multiple phases, you can test both your application and your processes without the risk of a full database cutover. However, in some situations it’s not possible to migrate part of your data layer if multiple application modules share the same data and database tables. In these cases, ensure that you test the modified application and database objects and test your actual cutover process. Yes, you should do the migration and cutover two times—at least once in test and then on your scheduled production move.

Database tuning and DBAs

To support your new PostgreSQL database, ensure that basic backups and reliability measures are in place and continue to work well. As your application is running and your traffic volumes start increasing, you will inevitably learn that some parts of your database need tuning. You may need specific data type mappings, different index types, or query tuning to optimize your application performance. As you learn how your application behaves with the PostgreSQL database engine, you can tune the vacuum, memory, and worker settings to improve application response times and throughput.

When you use the Amazon RDS for PostgreSQL managed service, many of the daily operations are handled automatically for you—allowing your DBAs to spend more time on new feature development or proactive operations. However, they still have to ensure that the performance tuning and ad hoc maintenance items are scheduled, executed, and reviewed on a regular basis.

Support and health check

When your database is critical to your business, you should ensure that you can quickly address problems that may occur and proactively prevent problems when you can. Review the expertise that your team has. Then determine what level of support you need from AWS and a dedicated PostgreSQL company so that you can respond to problems and either prevent downtime or get your database back up and running when necessary.

We recommend that you schedule your first database health check about six months after your migration. Review your database usage and performance, growth pattern, and tuning requirements. Identify when you can perform minor and major version upgrades.

Keep learning

The PostgreSQL tagline is “The world’s most advanced open source database” for good reason. PostgreSQL offers powerful features that can make your application faster, easier, more secure, and more scalable. And the community adds new features with every release. As your application and database grow and your business expands, keep exploring to make sure you’re getting the best database solution possible.

And, start looking for your next application or database migration target!