AWS for Games Blog

Building Perforce Helix Core on AWS (Part 1)

This is the first article in a two-part series on building Perforce Helix Core on AWS. Updated information is available in the second part of this series; please start there.

Version control is essential to software development, yet many developers struggle with running a version control system, whether because of its administration, its performance, or its cost. Some teams use a hosting platform such as GitHub, and others build and operate their own system on premises. Have you considered hosting it in the cloud instead?

Perforce is a proprietary version control system known for fast sync operations. It is used mainly in development environments and is particularly popular in the game industry.

Perforce is commonly set up in an on-premises environment. In this article, however, I explain the benefits of building Perforce on AWS and show you how to build the environment. AWS CloudFormation templates will also be available in the next post. Perforce and AWS offer a free tier, Perforce Helix Core Studio Pack for AWS, which allows customers to start running Perforce on AWS.

What is Perforce Helix Core and why use it?

Git, Subversion (SVN), CVS, Mercurial, and Perforce are well-known version control systems (VCS). Some of them, including Subversion and CVS, use a centralized management server. Others, such as Git and Mercurial, are distributed systems that store source code on each developer’s local PC and share it when needed. Perforce Helix Core (Perforce) belongs to the former group: it is a centrally managed version control system.

The main benefit of using Perforce is its performance. For text files, Perforce stores only the changes between revisions. For binary files, it compresses each version and saves them as chunks, known as versioned files. Even when a large development project increases the number of files or commits, the size of the repository (depot) is not prone to explode*. It also has mechanisms that keep operations fast even when clients are physically far from the master server**. For these reasons, Perforce is used for version control not only of text files, but also of large binary files, such as images. Perforce also integrates with Git, which allows you to keep the source code managed by Git while only binary files are saved in Perforce.

Benefits of building Perforce on AWS

Server Availability

Perforce supports replica servers. You can place the master server and the replica server in separate Availability Zones to achieve high availability and redundancy. This design offers higher availability than placing on-premises master and replica machines in the same data center.

Elasticity of Disk Size

Amazon Elastic Block Store (Amazon EBS) provides block storage volumes that attach to Amazon Elastic Compute Cloud (Amazon EC2) instances, and it allows you to change the volume size (disk size) and volume type (disk type) dynamically. This elasticity lets you enlarge a disk with ease when the data grows unexpectedly, and it prevents the disk from filling up in the middle of development.
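As a rough illustration of resizing a volume, the following Python sketch builds the arguments for the EC2 `ModifyVolume` API and, optionally, sends them with boto3. The volume ID, the Region, and the helper names are hypothetical; boto3 and AWS credentials are assumed to be available only if you call `apply_modify_volume`.

```python
from typing import Optional


def build_modify_volume_request(volume_id: str,
                                new_size_gib: Optional[int] = None,
                                new_type: Optional[str] = None) -> dict:
    """Build keyword arguments for the EC2 ModifyVolume API call."""
    if new_size_gib is None and new_type is None:
        raise ValueError("specify a new size, a new volume type, or both")
    request = {"VolumeId": volume_id}
    if new_size_gib is not None:
        if new_size_gib <= 0:
            raise ValueError("size must be a positive number of GiB")
        request["Size"] = new_size_gib
    if new_type is not None:
        request["VolumeType"] = new_type
    return request


def apply_modify_volume(request: dict, region: str) -> dict:
    """Send the request to AWS (assumes boto3 is installed and configured)."""
    import boto3  # imported lazily so the builder works without boto3

    ec2 = boto3.client("ec2", region_name=region)
    return ec2.modify_volume(**request)


# Hypothetical volume ID: grow a depot volume to 2 TiB.
request = build_modify_volume_request("vol-0123456789abcdef0", new_size_gib=2048)
print(request)
```

Note that an EBS volume can only be grown, not shrunk, and that the file system on the instance still has to be extended at the operating system level after the volume itself has been resized.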

Amazon Elastic File System (Amazon EFS) provides an elastic, fully managed Network File System (NFS) file system that scales automatically. With EFS, you don’t have to provision the volume size in advance, and you can reduce costs by setting a lifecycle policy that transitions files not accessed for a set period of time to a more cost-effective storage tier.
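A lifecycle policy of this kind could be set along the following lines. This is a minimal sketch: the file system ID and helper names are hypothetical, and the table of transition periods is only a subset of the values the EFS API accepts, so check the current API documentation before relying on it.

```python
# Subset of the TransitionToIA values accepted by the EFS API, keyed by days.
TRANSITION_TO_IA = {
    7: "AFTER_7_DAYS",
    14: "AFTER_14_DAYS",
    30: "AFTER_30_DAYS",
    60: "AFTER_60_DAYS",
    90: "AFTER_90_DAYS",
}


def build_lifecycle_request(file_system_id: str, days_until_ia: int) -> dict:
    """Build keyword arguments for the EFS PutLifecycleConfiguration call."""
    if days_until_ia not in TRANSITION_TO_IA:
        raise ValueError(f"unsupported period in this sketch: {days_until_ia} days")
    return {
        "FileSystemId": file_system_id,
        "LifecyclePolicies": [
            {"TransitionToIA": TRANSITION_TO_IA[days_until_ia]},
        ],
    }


def apply_lifecycle(request: dict, region: str) -> dict:
    """Send the request to AWS (assumes boto3 is installed and configured)."""
    import boto3  # imported lazily so the builder works without boto3

    efs = boto3.client("efs", region_name=region)
    return efs.put_lifecycle_configuration(**request)


# Hypothetical file system ID: move files untouched for 30 days to the
# lower-cost Infrequent Access storage class.
print(build_lifecycle_request("fs-0123456789abcdef0", 30))
```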

AWS Global Infrastructure

If your developers are distributed across the globe, AWS global infrastructure benefits distributed development. Perforce Proxy (P4P), placed on a network near the clients, mediates between client applications and the versioning service and caches frequently transferred versioned files, which improves performance.

If your developers are distributed all over the world and access a single Perforce master server in a single Region, you can build Perforce proxies in AWS Regions closer to the developers to improve data transfer performance. In this case, you can use inter-Region VPC peering, which improves performance between the master server and the proxy servers by using the AWS global network backbone instead of general internet connections.

AWS Service Integration

Hosting Perforce on AWS enables you to work with other AWS services and take advantage of the AWS ecosystem. For example, you can build a pipeline of EC2 instances on AWS that covers everything from build to test and fetches the code directly from Perforce hosted on AWS. EC2 instances can be launched as needed and terminated when the build is finished, and you pay only for the hours you use. By contrast, building the equivalent pipeline on premises incurs an up-front, fixed server cost. You can choose from a wide range of EC2 instances: instances with GPUs, with CPUs of up to 4.0 GHz, or with up to 24 TiB of memory.

By scaling out the number of EC2 instances to run builds in parallel, you also benefit from fast local connections within the AWS Region between Perforce and the build machines, further reducing overall compile times. AWS Batch can also be used to manage your build pipeline, and you have the option of using EC2 Spot Instances to save on cost.
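To make the idea of short-lived build machines concrete, here is a minimal Python sketch that assembles the arguments for the EC2 `RunInstances` API. The AMI ID, instance type, and helper names are hypothetical, and the choice to terminate instances on shutdown is an assumption about how a build agent would clean up after itself.

```python
def build_agent_request(ami_id: str, instance_type: str, count: int,
                        use_spot: bool = True) -> dict:
    """Build keyword arguments for the EC2 RunInstances API call."""
    if count < 1:
        raise ValueError("count must be at least 1")
    request = {
        "ImageId": ami_id,
        "InstanceType": instance_type,
        "MinCount": count,
        "MaxCount": count,
        # Terminate (rather than stop) when the agent shuts itself down
        # after the build, so no idle instance keeps accruing charges.
        "InstanceInitiatedShutdownBehavior": "terminate",
    }
    if use_spot:
        # Request Spot capacity instead of On-Demand to reduce cost.
        request["InstanceMarketOptions"] = {"MarketType": "spot"}
    return request


def launch(request: dict, region: str) -> dict:
    """Send the request to AWS (assumes boto3 is installed and configured)."""
    import boto3  # imported lazily so the builder works without boto3

    ec2 = boto3.client("ec2", region_name=region)
    return ec2.run_instances(**request)


# Hypothetical AMI ID: four Spot build agents for one parallel build.
print(build_agent_request("ami-0123456789abcdef0", "c5.4xlarge", 4))
```

Spot Instances can be interrupted, so this approach fits build jobs that can be retried.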

Perforce Design Patterns on AWS

We will discuss four design patterns, including an all-in pattern where everything is built in AWS, and hybrid patterns that aim to enhance performance by using both AWS and on-premises environments. Further details on architecting Perforce on AWS can be found in Perforce’s technical guide, Best Practices for Deploying Helix Core on AWS.

1. Building only Perforce Servers (P4D) on AWS

This method follows a classic all-in pattern where we create a Perforce master server and a replica server for redundancy.

All source code and data assets are stored on AWS. Developers connect directly to the AWS-hosted server or, for a more secure connection, use a VPN from their PCs to Amazon VPC or AWS Direct Connect, and commit data directly to the Perforce master server.

In this simple and basic pattern, you can also start by running only the Perforce master server on AWS to save costs at the beginning of your project, and then optionally move step by step to a hybrid pattern described below.

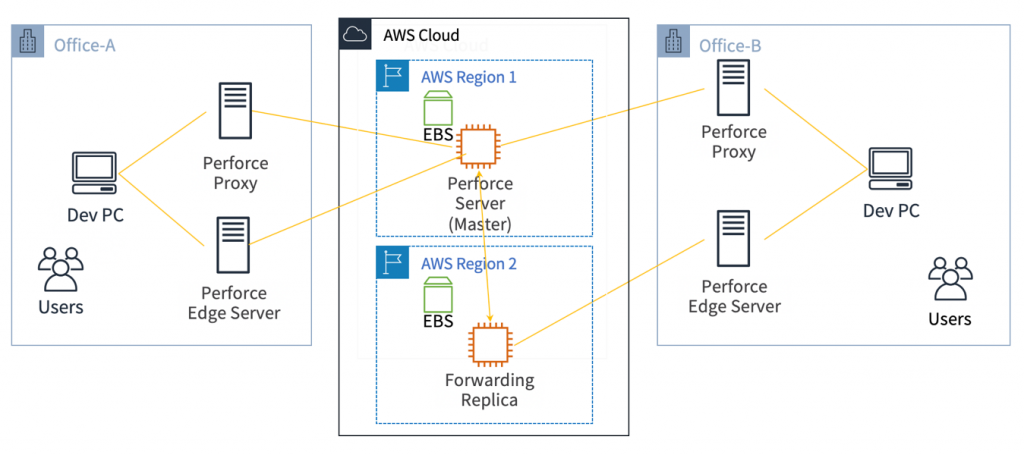

2. Hybrid pattern on AWS

While you build a master server the same way as the pattern above, you can build Perforce proxy servers (P4P) on on-premises machines at the edge of a user network or at your office.

In this hybrid configuration (building both AWS and on-premises infrastructure), you can improve the performance for users by setting up proxy servers (P4P) or edge servers on on-premises machines that are close to users. This will also reduce costs as local servers will cache data, reducing data being sent from the AWS Region.

- Perforce Proxy Server (P4P): This is a proxy usually located at the developers’ edge, such as an office network, to cache the versioned files that developers access frequently. This server cannot process submit requests, so the client (P4) sends submit requests directly to the master server.

- Perforce Edge Server: This is a replica of the Perforce master server that can also be placed on the developers’ network. An edge server holds a partial replica of the master server together with its own metadata (database) that is independent of the master server. An update request from a p4 client completes on the edge server as long as the update touches only this independent metadata. This improves performance for most update requests because they are processed closer to developers without being sent to the master server. Submit requests are still forwarded to the master server.

- Forwarding Replica: This is a read-only replica of the Perforce master server that holds a full or partial replica of the master server (versioned files, metadata, and journal files). This replica directly returns data for read requests, such as sync requests from the p4 client, unless the database needs to be updated. Requests from clients that update metadata are forwarded to the master server instead of being processed by the replica itself.
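As a small illustration of the proxy option described above, the following Python sketch assembles a `p4p` command line using its `-p` (listen port), `-t` (target server), and `-r` (cache directory) options. The host name, port, and cache path are hypothetical, and a real deployment would normally run the proxy as a managed service rather than from an ad hoc script.

```python
import shlex


def build_p4p_command(listen_port: int, target: str, cache_dir: str) -> list:
    """Return the argument list for starting a Perforce Proxy (p4p)."""
    if not 0 < listen_port < 65536:
        raise ValueError("listen_port must be a valid TCP port")
    return [
        "p4p",
        "-p", str(listen_port),  # port the proxy listens on for clients
        "-t", target,            # master server the proxy forwards to
        "-r", cache_dir,         # directory where versioned files are cached
    ]


# Hypothetical master server address and cache location.
command = build_p4p_command(1666, "perforce-master.example.com:1666",
                            "/p4/proxy-cache")
print(shlex.join(command))
```

Developers near the proxy would then point their clients at the proxy address instead of the master server.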

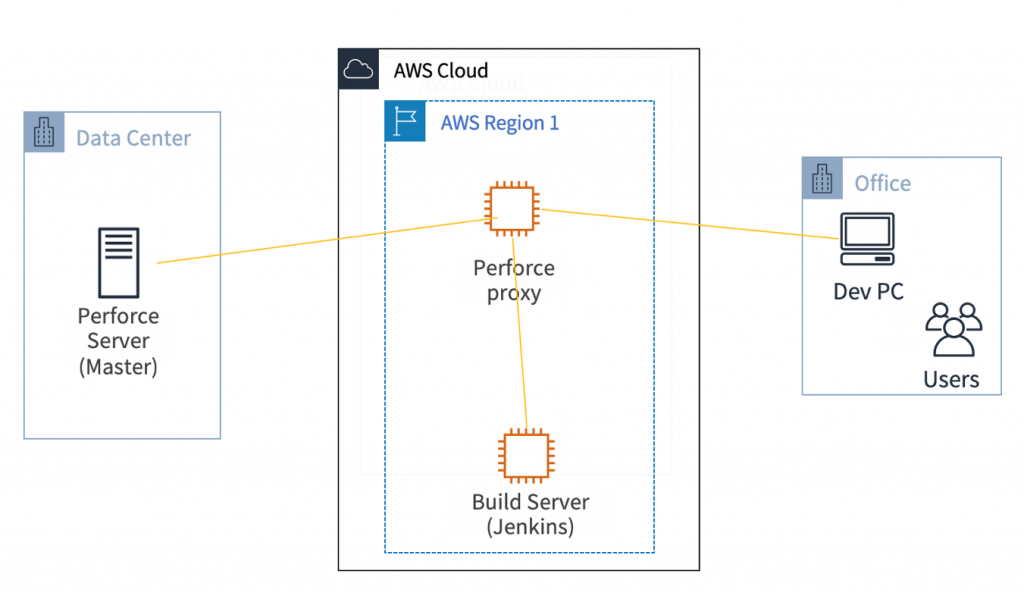

3. Building only Perforce Proxy Server (P4P) on AWS

If you already have a Perforce master server running on an on-premises machine in your data center or office, this design pattern helps developers improve performance with Perforce proxy servers hosted on AWS.

For developers who are working geographically away from the Perforce master server in the on-premises data center, you can set up Perforce proxy servers or Edge servers in AWS regions closest to developers to improve performance for remote developers.

In another case, if you already have build servers on AWS, you can set up a Perforce proxy server in the same AWS Region to speed up syncing data between the data center and AWS. You can also choose AWS Direct Connect between the data center and AWS, depending on the location of your data center.

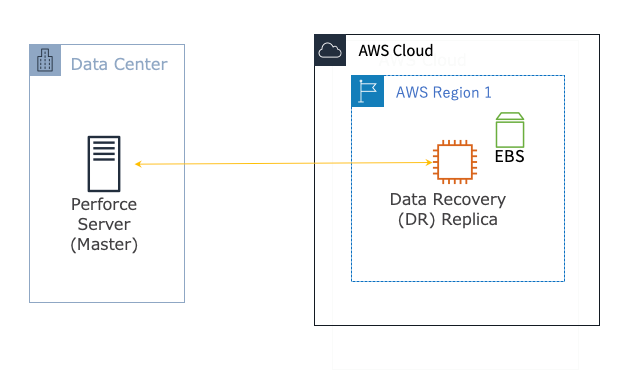

4. Building only Perforce Replica on AWS

If you already have a Perforce master server running on premises, you can set up only a hot standby replica on AWS for disaster recovery. This is a special use case, but it can be the first step toward migration if you are looking to build the master server on AWS in the future. For additional architectures and best practices, see the Best Practices for Deploying Helix Core on AWS Technical Guide.

Technical Points for Configuring Perforce Master Server

Here, I explain the main technical points for configuring a Perforce server built on AWS. For more detailed instructions on the setup process, see the second post in this two-part series.

EBS Storage Configuration

| Volume Name | Usage | Volume Size (Base Size) | EBS Volume Type | EBS Encryption Options |
| --- | --- | --- | --- | --- |
| hxdepots | Archive file storage volume for depot | 1 TB | st1 | Encrypted |
| hxmetadata | Volume for metadata | 64 GB | gp2 | Unencrypted |
| hxlogs | Volume for journal files, access logs, etc. | 128 GB | gp2 | Unencrypted |

Because Perforce is a repository server that stores data, it is important to set up storage properly, whether you are building a master server or a replica server for high availability.

The data in Perforce consists of three parts: 1/ the versioned files, 2/ metadata (database), and 3/ live journals.

For this reason, it is recommended to create the following three volumes, one for each type of data, in addition to the OS root volume of the EC2 instance. Although the right values depend on how large your project is, the Perforce Technical Guide recommends the following volume types and sizes:

- hxdepots: depot volume to store all Perforce archive files. Archive files can amount to a massive volume of data and are accessed mostly sequentially. For the volume type, Throughput Optimized HDD (st1) is recommended because of its low cost per unit of storage and its guaranteed throughput. Expect the size to reach multiple terabytes or more, depending on the scale of the software developed with this server. The Perforce Technical Guide recommends a volume of at least 1 TB.

- hxmetadata: metadata volume with frequent random I/O. Metadata databases are accessed frequently with random I/O patterns. For the volume type, the Perforce Technical Guide recommends low-latency General Purpose SSD (gp2). When the number of users increases and further scaling is required, Provisioned IOPS SSD (io1) can be considered. For the volume size, the technical guide recommends 64 GB.

- hxlogs: volume for journal files, access logs, and other logs. The Perforce Technical Guide recommends low-latency General Purpose SSD (gp2) for the volume type and 128 GB for the volume size.
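The three-volume layout above can be expressed as the `BlockDeviceMappings` argument of the EC2 `RunInstances` API. The sketch below is one possible encoding: the device names and the choice to keep volumes after instance termination are assumptions, and 1 TB is approximated as 1,024 GiB.

```python
# Volume layout taken from the table above; device names are assumptions.
VOLUME_LAYOUT = [
    {"name": "hxdepots", "device": "/dev/sdf", "size_gib": 1024,
     "type": "st1", "encrypted": True},
    {"name": "hxmetadata", "device": "/dev/sdg", "size_gib": 64,
     "type": "gp2", "encrypted": False},
    {"name": "hxlogs", "device": "/dev/sdh", "size_gib": 128,
     "type": "gp2", "encrypted": False},
]


def to_block_device_mappings(layout: list) -> list:
    """Convert the layout into EC2 BlockDeviceMappings entries."""
    return [
        {
            "DeviceName": volume["device"],
            "Ebs": {
                "VolumeSize": volume["size_gib"],
                "VolumeType": volume["type"],
                "Encrypted": volume["encrypted"],
                # Assumption: keep repository data if the instance is
                # terminated by mistake.
                "DeleteOnTermination": False,
            },
        }
        for volume in layout
    ]


for mapping in to_block_device_mappings(VOLUME_LAYOUT):
    print(mapping["DeviceName"], mapping["Ebs"])
```

Keeping the layout in one data structure makes it easy to adjust sizes per project without touching the code that launches the instance.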

EC2 Instance Configuration

A Perforce server is normally a light consumer of system resources. However, as your installation grows, it is useful to take the following two elements into account when you implement and tune Perforce.

CPU Resources

If you work with many binary files, such as large digital assets in game development, CPU performance is an important factor because of the large number of compression and decompression operations. Compute-optimized EC2 instances in the C5 family meet this requirement.

Memory (RAM) Resources

Sufficient memory prevents the server from paging when it handles a large number of queries and lets it cache some of the database tables stored on the metadata volume. Memory-optimized EC2 instances in the R5 family meet this requirement.

In addition, the C5 and R5 instance families support EBS optimization and offer network bandwidth of “up to 10 Gbps” or more.

The right instance type depends on your project size, but the Perforce Technical Guide recommends c5.4xlarge, which provides 32 GiB of RAM, because a compute-optimized instance with 32 GiB of RAM meets the requirements above and delivers the best overall performance for Helix Core in game development.

Security Groups

The EC2 instance that runs the Perforce server requires inbound access on the following ports.

- Port 1666: Allow port 1666. The p4broker process listens on port 1666.

- Port 1999: Allow port 1999. The p4d process listens on port 1999.

- Port 22 (SSH): You must allow port 22 to connect to the Perforce server instance over SSH.

The p4d process runs on port 1999 and the optional p4broker process runs on port 1666. If you do not use p4broker, the p4d process runs on port 1666 instead. In that case, you no longer need to open port 1999.

In addition, if you want to allow access only from your corporate network, it is highly recommended that you restrict the inbound rules so that only your corporate source IP addresses are allowed.
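The port rules above, restricted to a corporate address range, might be written as the `IpPermissions` argument of the EC2 `AuthorizeSecurityGroupIngress` API. In this sketch the CIDR block is a documentation-only example range and the helper name is hypothetical.

```python
import ipaddress


def build_ingress_rules(corporate_cidr: str, use_broker: bool = True) -> list:
    """Build IpPermissions entries for a Perforce server security group."""
    # Raises ValueError if the CIDR block is malformed.
    ipaddress.ip_network(corporate_cidr)

    # SSH, plus 1666 for p4broker (or for p4d when no broker is used).
    ports = [22, 1666]
    if use_broker:
        # With p4broker on 1666, p4d listens on 1999.
        ports.append(1999)

    return [
        {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            "IpRanges": [{"CidrIp": corporate_cidr}],
        }
        for port in ports
    ]


# 203.0.113.0/24 is a reserved documentation range used here as a placeholder.
for rule in build_ingress_rules("203.0.113.0/24"):
    print(rule["FromPort"], rule["IpRanges"][0]["CidrIp"])
```

The resulting list could be passed as `IpPermissions` together with a `GroupId` to boto3’s `authorize_security_group_ingress`, which is not called here so that the sketch runs without AWS credentials.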

Get started with Perforce Helix Core Studio Pack for AWS

Recently, Perforce and AWS Game Tech together launched Perforce Helix Core Studio Pack for AWS. This is a free starter package for users who want to build and try Perforce Helix Core on AWS. The package offers:

- Free access for up to 5 users and up to 20 workspaces on Helix Core

- 12-month free tier on AWS Cloud

- Best Practices For Deploying Helix Core on AWS, a free technical guide to help you get started faster

Get started by registering for Perforce Helix Core Studio Pack for AWS and start using the free tier.

Summary

Thank you for reading the first article in this two-part series on building Perforce Helix Core on AWS. Now that we’ve discussed the benefits of building it on AWS, the configuration patterns, and the considerations for each, look for the next blog post, where I will explain how to build a Perforce master server (P4D) with the design patterns discussed above. I’ll also provide CloudFormation templates to help you get started.

Keep reading with part two of the series, Centralize your Game Production Assets on AWS With Perforce Helix Core.

About the author

Zenta Hori

Solutions Architect

Zenta Hori started his career as an engineer and researcher at a semiconductor-related company. He has experience in development in the mobile games industry and in financial technology, as well as experience as a CTO at startups. He is passionate about helping customers get the most out of cloud technology in the game industry, and is currently interested in leveraging machine learning for game development and anomaly detection in the security domain.

*Git offers an excellent workflow for distributed development. However, it keeps one snapshot per commit instead of keeping only the changes to updated content. If large data such as binaries are added and deleted frequently, the repository tends to grow, and operations become slower as the repository becomes larger. For this reason, Git is not well suited to working with binary data, and Perforce is worth considering for version control of binary data. Git also has the Git LFS extension for dealing with large files. However, it stores those files in separate storage called Large File Storage (LFS), which requires an extra fee, while the main repository stores only pointers to them. Note also that it is an extension that every client needs to install to benefit from it.

**If developers are distributed across separate locations around the world, they all access a single centralized master server. Every time a sync or submit (commit) is executed, data is transferred between the server and the client. For clients in very remote locations or with limited bandwidth, sync and submit performance declines. To improve performance for these remote clients, Perforce provides the Perforce Proxy (P4P) and edge server mechanisms.