AWS for Industries

GxP Continuous Compliance on AWS

In today’s fast-changing cloud landscape, many customers prioritize reducing risks related to regulations and compliance standards alongside advancing innovative solutions. Risk has traditionally been one of the reasons for slower change release cycles in validated systems. Organizations in the Healthcare and Life Sciences industry are looking at ways to increase the pace of product innovation while optimizing the processes needed to ensure compliance with regulatory requirements.

The objective of this blog is to share compliance recommendations and best practices for running GxP workloads in the Amazon Web Services (AWS) cloud that could help:

- minimize risks,

- reduce overall qualification timeline,

- provide point-in-time traceability, and

- ultimately accelerate product time to market.

GxP

GxP is an acronym which refers to the good practice guidelines applicable to Life Sciences organizations that manufacture medical products. Organizations in the Healthcare and Life Sciences industry are subject to GxP regulatory requirements such as FDA 21 CFR Part 11.

Continuous Compliance Reference Architecture

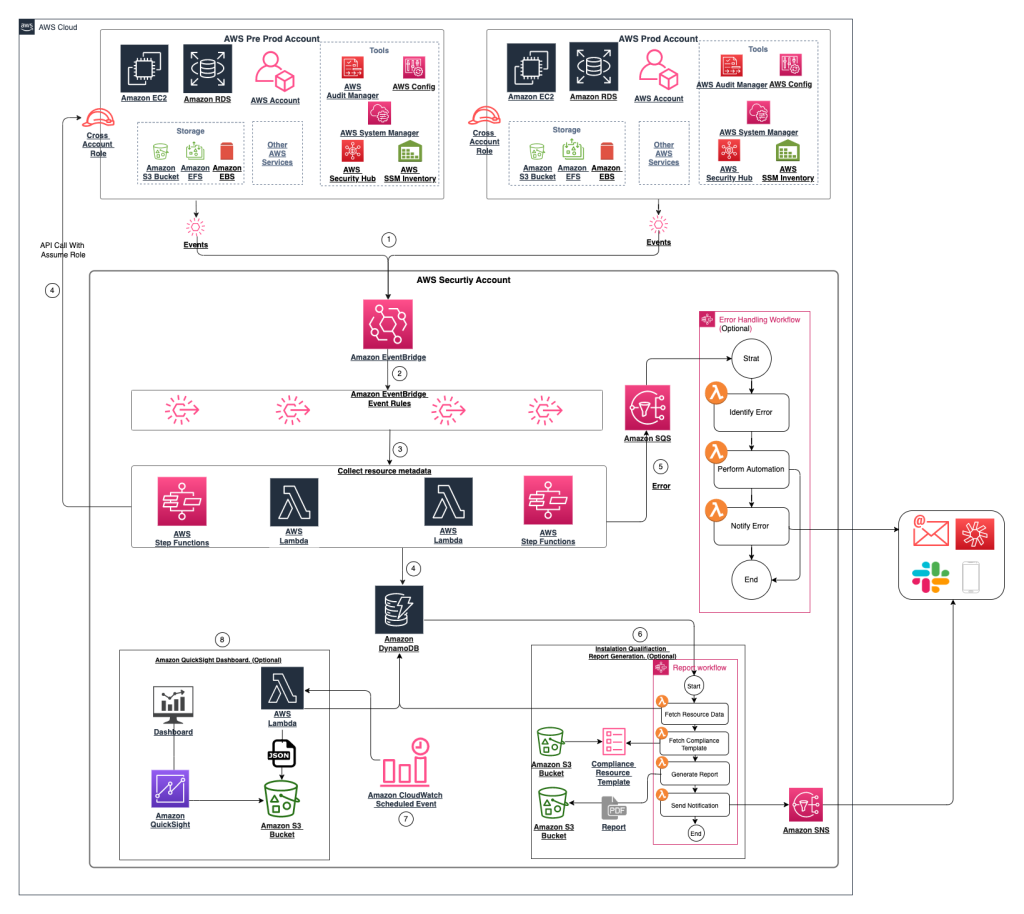

The diagram below shows a continuous compliance reference architecture for GxP workloads. Let’s walk through the steps, and components in the architecture diagram.

Figure 1: Continuous Compliance reference architecture for GxP workloads

Step 1: AWS Resource’s Attributes Change Trigger

AWS CloudTrail (CloudTrail) supports logging events for many AWS services. You can find the specifics for each supported service in the CloudTrail service’s guide. Actions taken by a user, role, or an AWS service are recorded as events in CloudTrail. With CloudTrail, you can trace account activities, and continuously monitor events of supported services in your AWS infrastructure. CloudTrail is a service that enables you to maintain governance, compliance, and risk auditing of your AWS account.

CloudTrail provides event history of your AWS account activity, including actions taken through the AWS Management Console, AWS SDKs, command line tools, and other AWS services. This event history simplifies security analysis, resource change tracking, and troubleshooting. AWS Control Tower (Control Tower) is a service to set up and govern a secure, compliant multi-account AWS environment. Control Tower automates the setup of your landing zone based on best-practice blueprints and applies guardrails for governance over your AWS workloads. In a multi-account setup, it is recommended that you send the CloudTrail logs to a centralized account, such as a “Log Archive” account created by Control Tower, to prevent logs from being accessed by unauthorized users.

You will be able to filter particular events that are captured in CloudTrail for subsequent processing via Amazon EventBridge, which will be discussed further in Step 2.
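As an illustration of the traceability CloudTrail provides, the following minimal sketch reduces one CloudTrail record to the "who did what, when" fields a compliance workflow typically needs. The field names (`eventTime`, `eventSource`, `eventName`, `awsRegion`, `userIdentity`, `requestParameters`) are standard CloudTrail record fields. The function name, the output keys, and the sample record are assumptions made for this example.

```python
from typing import Any, Dict


def summarize_cloudtrail_record(record: Dict[str, Any]) -> Dict[str, Any]:
    """Reduce one CloudTrail record to the fields used for change tracing."""
    identity = record.get("userIdentity") or {}
    return {
        "when": record.get("eventTime"),
        # Fall back to the identity type when the record carries no ARN.
        "who": identity.get("arn") or identity.get("type"),
        "service": record.get("eventSource"),
        "action": record.get("eventName"),
        "region": record.get("awsRegion"),
        "request": record.get("requestParameters") or {},
    }


# Hypothetical record, trimmed to the fields used above.
sample_record = {
    "eventTime": "2023-01-15T10:30:00Z",
    "eventSource": "ec2.amazonaws.com",
    "eventName": "ModifyInstanceAttribute",
    "awsRegion": "us-east-1",
    "userIdentity": {
        "type": "AssumedRole",
        "arn": "arn:aws:sts::111122223333:assumed-role/example-role/example-session",
    },
    "requestParameters": {"instanceId": "i-0123456789abcdef0"},
}

summary = summarize_cloudtrail_record(sample_record)
print(summary["who"], summary["action"])
```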

Step 2: AWS Event Rules

Amazon EventBridge (EventBridge) enables you to retrieve and filter events for subsequent continuous compliance processing. It is a serverless event bus that makes it easier to build event-driven applications, at scale, using events generated from applications, integrated Software-as-a-Service (SaaS), and AWS services.

Here are a few examples of events that you can define in EventBridge based on the data captured in CloudTrail:

- Non-compliance detection from AWS Config

- Security findings from AWS Security Hub (Security Hub)

- Changes in AWS Systems Manager (Systems Manager), such as Inventory resources and Parameter Store

- Attribute changes on AWS services, such as the instance type of an Amazon Elastic Compute Cloud (Amazon EC2) instance or the deletion protection setting of an Amazon Relational Database Service (Amazon RDS) cluster

In this step, you define EventBridge rules to filter for compliance-related events. The incoming event structure may vary depending on the input sources and AWS services.

Here are some recommendations for when you create EventBridge rules:

- Create a rule for each AWS service type, because each service generates different event structures.

- Keep the rule simple for debugging purposes.

- Utilize the Input Transformer feature in EventBridge’s rules to help standardize and transform the event structure.
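The recommendations above can be sketched as one small event pattern per service plus a shared input transformer. This is a minimal sketch, not a definitive rule set: the helper names and the output field names are hypothetical, and the `aws.<service>` / `<service>.amazonaws.com` naming is assumed to hold for the services you target (it does for Amazon EC2 and Amazon RDS). The resulting dictionaries have the shape that EventBridge expects for a rule's event pattern and a target's input transformer.

```python
import json
from typing import Any, Dict, List


def build_api_call_pattern(service_prefix: str, event_names: List[str]) -> Dict[str, Any]:
    """Event pattern matching selected API calls that CloudTrail recorded for one service."""
    return {
        "source": [f"aws.{service_prefix}"],
        "detail-type": ["AWS API Call via CloudTrail"],
        "detail": {
            "eventSource": [f"{service_prefix}.amazonaws.com"],
            "eventName": event_names,
        },
    }


def build_input_transformer() -> Dict[str, str]:
    """Input transformer that maps every matched event to one common structure."""
    return {
        "InputPathsMap": {
            "service": "$.detail.eventSource",
            "action": "$.detail.eventName",
            "time": "$.time",
            "account": "$.account",
        },
        # Placeholders are left unquoted so EventBridge substitutes JSON values.
        "InputTemplate": (
            '{"service": <service>, "action": <action>, '
            '"time": <time>, "account": <account>}'
        ),
    }


# One simple rule per service type keeps each pattern easy to debug.
ec2_pattern = build_api_call_pattern("ec2", ["ModifyInstanceAttribute"])
rds_pattern = build_api_call_pattern("rds", ["ModifyDBCluster"])
print(json.dumps(ec2_pattern, indent=2))
```

The serialized pattern could then be passed as the event pattern when creating the rule, and the transformer attached to the rule's target.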

Step 3: Collect AWS Resource Controls

AWS Software Development Kits (SDKs), which are available in multiple programming languages, provide you with the capabilities to query for AWS resources’ metadata.

A Describe API returns metadata pertaining to a particular resource. In some cases, you might need to run multiple Describe APIs across different AWS services to retrieve the full metadata related to the resource. For example, to have a complete view of your EC2 instance, along with the corresponding attached Amazon Elastic Block Store (Amazon EBS) storage, two Describe APIs are needed to retrieve the attributes of the EC2 instance and the EBS storage.
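The EC2 and EBS example can be sketched as follows. The function takes an EC2 client as a parameter (for instance, one created with `boto3.client("ec2")`) and combines the responses of `describe_instances` and `describe_volumes` into a single record. The function name and the shape of the returned record are assumptions for illustration; only a few attributes are kept to keep the sketch short.

```python
from typing import Any, Dict, List


def collect_instance_metadata(ec2_client: Any, instance_id: str) -> Dict[str, Any]:
    """Combine two Describe calls into one view of an instance and its EBS volumes."""
    reservations = ec2_client.describe_instances(InstanceIds=[instance_id])["Reservations"]
    instance = reservations[0]["Instances"][0]

    # Volume IDs come from the instance's block device mappings.
    volume_ids: List[str] = [
        mapping["Ebs"]["VolumeId"]
        for mapping in instance.get("BlockDeviceMappings", [])
        if "Ebs" in mapping
    ]
    volumes = (
        ec2_client.describe_volumes(VolumeIds=volume_ids)["Volumes"] if volume_ids else []
    )

    return {
        "InstanceId": instance["InstanceId"],
        "InstanceType": instance["InstanceType"],
        "Volumes": [
            {
                "VolumeId": volume["VolumeId"],
                "SizeGiB": volume["Size"],
                "VolumeType": volume["VolumeType"],
                "Encrypted": volume["Encrypted"],
            }
            for volume in volumes
        ],
    }
```

Passing the client in, rather than creating it inside the function, makes it straightforward to exercise the logic with a stub during testing.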

In certain scenarios, you might need to collect metadata beyond the infrastructure’s metadata, such as installed software, software configurations, running services, and other settings. AWS Systems Manager Inventory is the capability that you can use to collect this metadata from managed instances.

Once the resource metadata is collected, you process the control configurations by using an AWS serverless compute service such as AWS Lambda (Lambda), or a serverless container service like Amazon Elastic Container Service (Amazon ECS) on AWS Fargate (Fargate). You can choose between these serverless compute services depending on your compute power requirements.

AWS Step Functions allows you to combine multiple serverless processing steps into a single state machine that supports parallelization, wait conditions, approval workflows, and error handling with a retry mechanism.
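A minimal sketch of such a state machine, written as a Python dictionary in Amazon States Language, might look like the following. The state names and the Lambda function ARNs are hypothetical placeholders. The `Retry` and `Catch` fields are standard Amazon States Language error-handling fields.

```python
import json
from typing import Any, Dict

# Hypothetical ARN prefix; replace with the functions in your own account.
LAMBDA_ARN_PREFIX = "arn:aws:lambda:us-east-1:111122223333:function:"


def build_state_machine_definition() -> Dict[str, Any]:
    """State machine that collects and stores metadata, with retry and a failure path."""
    return {
        "Comment": "Collect resource controls, then store them",
        "StartAt": "CollectMetadata",
        "States": {
            "CollectMetadata": {
                "Type": "Task",
                "Resource": LAMBDA_ARN_PREFIX + "collect-metadata",
                # Retry failed tasks up to three times with exponential backoff.
                "Retry": [
                    {
                        "ErrorEquals": ["States.TaskFailed"],
                        "IntervalSeconds": 5,
                        "MaxAttempts": 3,
                        "BackoffRate": 2.0,
                    }
                ],
                # Route any remaining error to a notification step.
                "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "NotifyFailure"}],
                "Next": "StoreMetadata",
            },
            "StoreMetadata": {
                "Type": "Task",
                "Resource": LAMBDA_ARN_PREFIX + "store-metadata",
                "End": True,
            },
            "NotifyFailure": {
                "Type": "Task",
                "Resource": LAMBDA_ARN_PREFIX + "notify-failure",
                "End": True,
            },
        },
    }


print(json.dumps(build_state_machine_definition(), indent=2))
```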

Below are the AWS services that you can use to collect more system controls besides the ones listed above:

- AWS Config (Config) Conformance Pack for FDA Title 21 CFR Part 11 Operational Best Practices maps AWS Config rules to one or more FDA Title 21 CFR Part 11 controls.

- AWS Systems Manager (Systems Manager) executes bash commands remotely by using a Systems Manager Run Command. It securely runs bash commands as a Systems Manager Document that queries the metadata at an Operating System level.

- Amazon Inspector continually scans workloads for software vulnerabilities and unintended network exposure.

- AWS Security Hub’s custom actions allow you to configure AWS Security Hub to send selected insights and security findings to Amazon CloudWatch (CloudWatch). CloudWatch is a monitoring and management service that provides data and actionable insights for AWS, hybrid and on-premises applications and infrastructure resources. CloudWatch collects monitoring and operational data in the form of logs, metrics, and events.

- AWS Audit Manager (Audit Manager) automates evidence collection. It helps translate evidence from cloud services into auditor-friendly reports. It maps your AWS resources to the requirements of regulations such as the General Data Protection Regulation (GDPR). Based on the framework you select, Audit Manager launches an assessment that continuously collects relevant evidence from your AWS accounts and resources. Audit Manager assesses whether your policies, procedures, and activities are operating effectively. It also helps you manage stakeholder reviews of your controls and enables you to build audit-ready reports with much less manual effort.

Step 4: Storing Resource Metadata into a Centralized Location

Amazon DynamoDB (DynamoDB) allows you to store the metadata collected in Step 3 for traceability, and reporting purposes. DynamoDB is a fully managed NoSQL database service which supports key-value pairs and document data structures.

Since DynamoDB, in this reference architecture, is the central location to store resource metadata that may contain sensitive information, we recommend that you follow the principle of least privilege to restrict access and permission policies. The principle of least privilege is a security concept in which the minimum access is defined in policies to successfully complete a job.
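One possible way to shape the stored records for point-in-time traceability is sketched below. It assumes a table keyed on a resource identifier (partition key) and a capture timestamp (sort key), so every observed state of a resource is kept as its own item. The attribute names `resource_arn`, `captured_at`, `configuration`, and `config_hash` are hypothetical and are not taken from the reference architecture.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def build_metadata_item(
    resource_arn: str,
    configuration: Dict[str, Any],
    captured_at: Optional[datetime] = None,
) -> Dict[str, str]:
    """Build one item describing a resource's state at a point in time."""
    captured_at = captured_at or datetime.now(timezone.utc)
    # Sorting keys makes the serialized form, and therefore the hash, stable.
    serialized = json.dumps(configuration, sort_keys=True, default=str)
    return {
        "resource_arn": resource_arn,             # assumed partition key
        "captured_at": captured_at.isoformat(),   # assumed sort key
        "configuration": serialized,
        # The hash lets a consumer detect a change without comparing every field.
        "config_hash": hashlib.sha256(serialized.encode("utf-8")).hexdigest(),
    }


item = build_metadata_item(
    "arn:aws:ec2:us-east-1:111122223333:instance/i-0123456789abcdef0",
    {"InstanceType": "m5.large", "Encrypted": True},
)
print(item["captured_at"], item["config_hash"][:12])
```

The resulting dictionary could be written with the DynamoDB `put_item` operation by a role that is granted only that action on the one table, in line with the least privilege recommendation.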

Optionally, you can use Amazon OpenSearch and Kibana for near real-time compliance monitoring, dashboards and alerts of GxP workloads.

Step 5: Error Handling Automation

In unforeseen scenarios, you might encounter errors in the processing workflow. When errors are encountered, you will need error handling automation, such as a retry mechanism and exception logs for debugging purposes. The objective of the error handling automation is to enable you to determine the error source, type, and status. Amazon Simple Notification Service (Amazon SNS), which is a fully managed publish and subscribe messaging service, allows you to send error notifications to configured channels such as Slack, Amazon Chime, or an incident management system.

Besides sending error notifications, integrating the Amazon SNS error topics with an Amazon Simple Queue Service (Amazon SQS) dead-letter queue lets you hold unprocessed error messages for further review and investigation. You can implement a clean-up routine that polls the dead-letter queue at regular intervals, investigates the issue, and handles the error messages.
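A clean-up routine of this kind could be sketched as follows. The function takes an SQS client (for instance, one created with `boto3.client("sqs")`) and uses the `receive_message` and `delete_message` operations. The function name, the `handle_message` callback, and the batch limit are assumptions for illustration.

```python
from typing import Any, Callable


def drain_dead_letter_queue(
    sqs_client: Any,
    queue_url: str,
    handle_message: Callable[[str], bool],
    max_batches: int = 10,
) -> int:
    """Poll the dead-letter queue and delete each message that was handled."""
    handled = 0
    for _ in range(max_batches):
        response = sqs_client.receive_message(
            QueueUrl=queue_url,
            MaxNumberOfMessages=10,
            WaitTimeSeconds=1,
        )
        messages = response.get("Messages", [])
        if not messages:
            break  # Queue is empty for now.
        for message in messages:
            # Delete only after successful handling; otherwise the message
            # becomes visible again after the visibility timeout.
            if handle_message(message["Body"]):
                sqs_client.delete_message(
                    QueueUrl=queue_url,
                    ReceiptHandle=message["ReceiptHandle"],
                )
                handled += 1
    return handled
```

Such a routine could run on a schedule, with `handle_message` recording the error details before returning `True`.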

Step 6: Automated Installation Qualification Report Generation (Optional)

The automated Installation Qualification (IQ) process creates documents that list the deployed AWS resources together with verification test results showing that the infrastructure is configured as intended. The automated process allows you to run a list of tests and collect detailed attributes of deployed resources. It helps reduce the overall time for the qualification process and provides accurate deployment attributes, which would otherwise need to be collected manually. Automated IQ report generation is an optional step that you can set to run as needed or upon resource changes. Some organizations already have a well-defined process for IQ report creation. In these scenarios, the automation process is a supplemental step that produces detailed deployment and test results for AWS resources.

Having an automated solution for infrastructure IQ improves consistency due to automation of development, deployment and testing processes. Besides the benefits stated above, the following are some of the advantages of automating the IQ process:

- Cost reduction: Cost of managing infrastructure compliance can be reduced by decreasing manual efforts of updating documentation.

- Version control: The infrastructure environment can be governed by keeping the Infrastructure as Code scripts under version control and rolling back to the last good state upon failure.

- Repeatable: You can replicate, re-deploy, and re-purpose the infrastructure. This solution can be centralized at the management account or organizational unit level, or set up on a per-application basis.

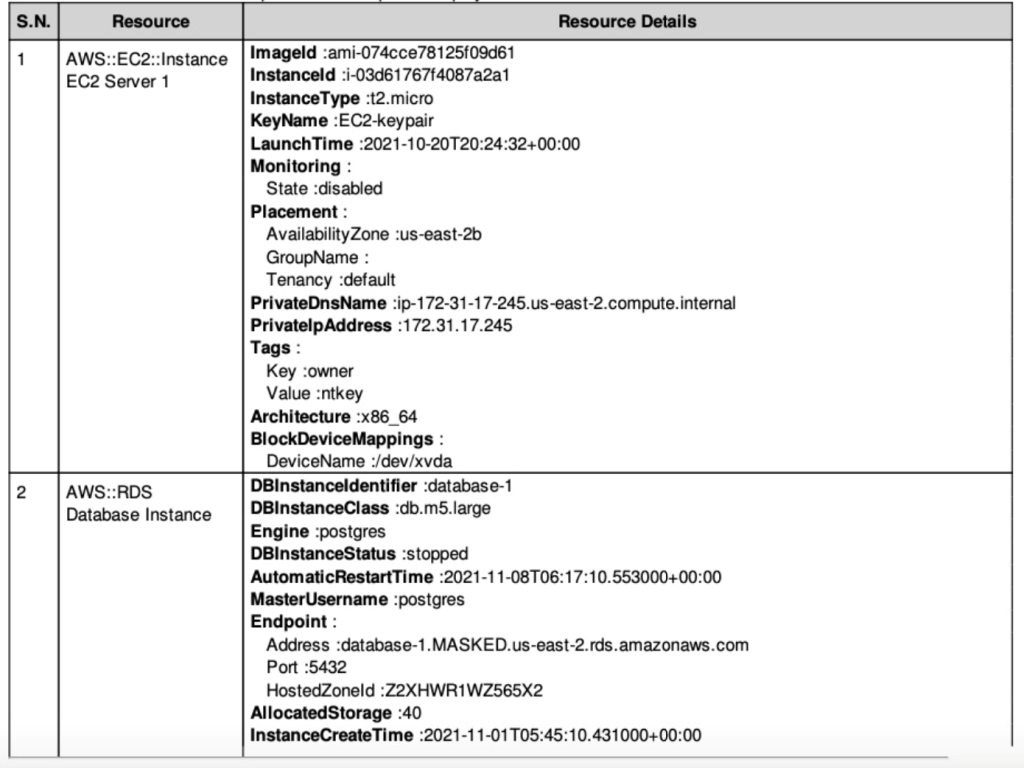

In our reference architecture diagram, you can leverage Amazon DynamoDB (DynamoDB) Streams to trigger automated IQ report generation on any record change. Each record in the DynamoDB refers to an AWS resource. The resource’s current state that has been collected and stored in DynamoDB (Step 4) will be compared against the expected configuration specifications stored as JSON files in a secured and version enabled Amazon Simple Storage Service (Amazon S3) bucket. You may have different build specification files for the same resource type depending on requirements. For example, in a regulated SAP environment, there are different SAP systems and roles requiring different EC2 families and expected control values.

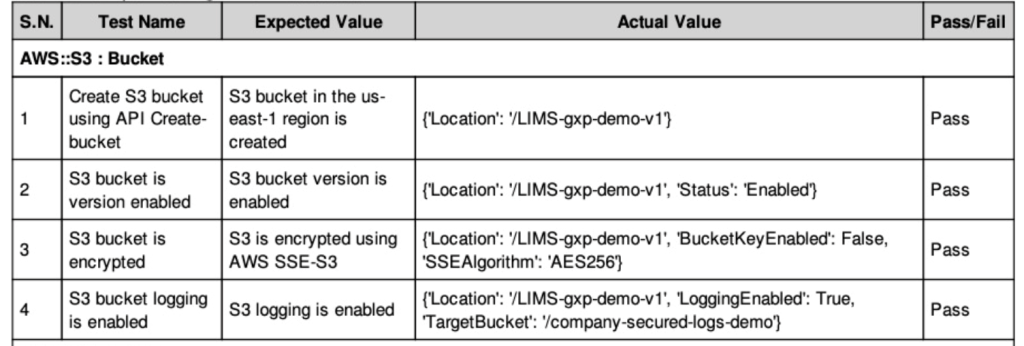

You will need to tag the AWS resources accordingly, as tags will be used to identify resources that are part of the qualification scope. It compares the actual configuration values with expected values. This process iterates through all the deployed resources and generates a PDF report. The report contains details of the deployed resources and verification results, comparing attributes of deployed resources against planned attributes documented in the Configuration Specifications. The comparison condition between expected value and actual value could be a range or a specific value depending on the test cases. The PDF report generation process can be setup to run as needed or upon any resource changes.

Figure 2: An example of an automated Installation Qualification report displaying deployed resources

Figure 3: An example of an automated Installation Qualification report displaying build specification test results
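The comparison of actual values against expected values, either as a specific value or as a range, can be sketched as follows. The specification format (`equals`, `min`, `max`) and the attribute names are hypothetical. The actual JSON layout of the configuration specifications is not described in this blog.

```python
from typing import Any, Dict, List


def evaluate_control(rule: Dict[str, Any], actual: Any) -> bool:
    """Return True when the actual value satisfies the expected rule."""
    if actual is None:
        return False  # A missing attribute always fails verification.
    if "equals" in rule:
        return actual == rule["equals"]
    if "min" in rule or "max" in rule:
        lower = rule.get("min", float("-inf"))
        upper = rule.get("max", float("inf"))
        return lower <= actual <= upper
    raise ValueError(f"Unsupported rule: {rule}")


def run_iq_checks(spec: Dict[str, Dict[str, Any]], actual: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Compare every attribute in the specification with the deployed value."""
    return [
        {
            "attribute": name,
            "expected": rule,
            "actual": actual.get(name),
            "result": "PASS" if evaluate_control(rule, actual.get(name)) else "FAIL",
        }
        for name, rule in spec.items()
    ]


# Hypothetical build specification and deployed state for one EC2 instance.
expected_spec = {
    "InstanceType": {"equals": "r5.large"},
    "RootVolumeSizeGiB": {"min": 100, "max": 500},
    "Encrypted": {"equals": True},
}
deployed = {"InstanceType": "r5.large", "RootVolumeSizeGiB": 50, "Encrypted": True}

for row in run_iq_checks(expected_spec, deployed):
    print(row["attribute"], row["result"])
```

The list of result rows is the kind of structure a report generator could render into the test results table of an IQ report.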

Step 7: Scheduled Event for Data Sync and Reporting

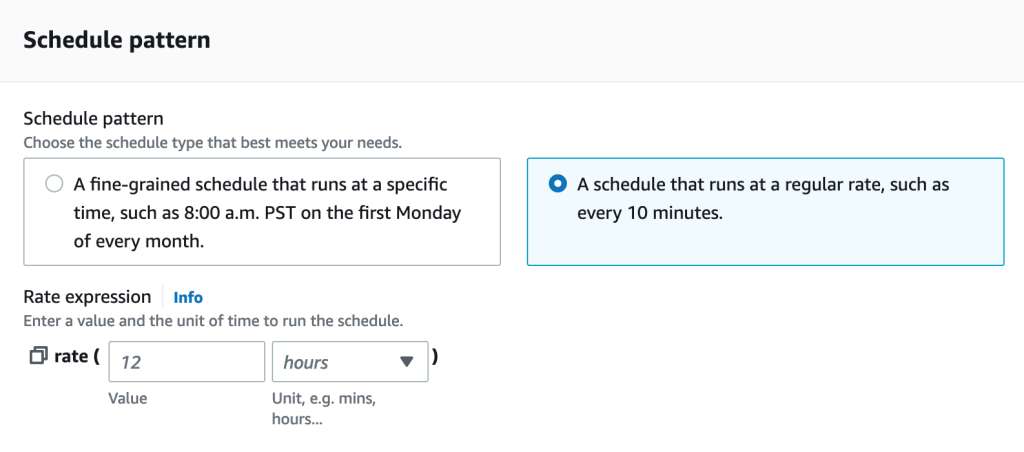

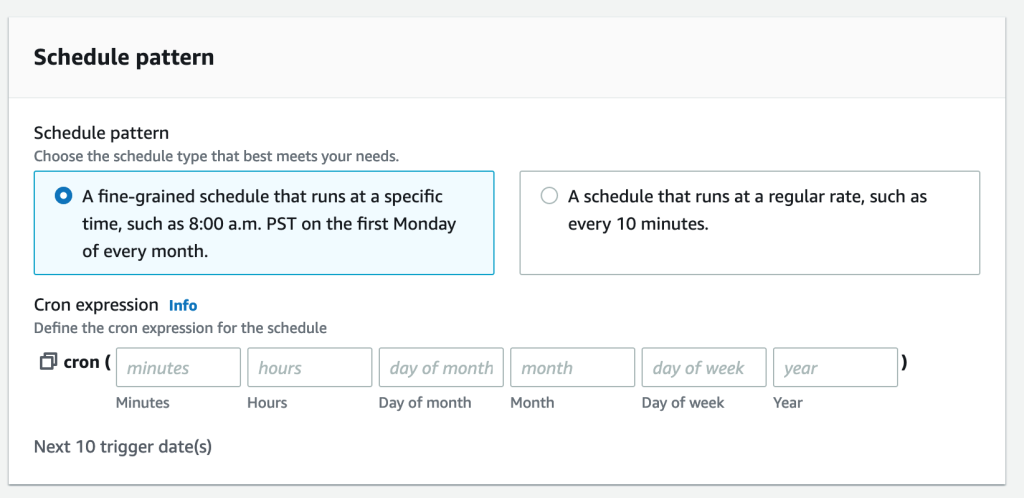

In order to support analytics and dashboarding capabilities, you will need to sync data to an Amazon S3 bucket dedicated for reporting purposes. EventBridge events support cron expressions and rate expressions to enable data synchronization scheduling. The difference between a cron expression and a schedule expression is a cron expression offers fine-grained schedule definition by minutes, hours, day, month, and year but rate expressions are interval definition in minutes, hours or days as shown in Figure 5.

Figure 4: Amazon EventBridge event schedule configuration

Figure 4: Amazon EventBridge event schedule configuration

Figure 5: Amazon EventBridge event schedule with rate expression in minutes, hours, and days
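The two kinds of schedule expression can be illustrated with a pair of small helpers. The helper names are hypothetical. The expression syntax follows the EventBridge format: a rate expression uses a singular unit when the value is 1, and a cron expression has six fields (minutes, hours, day of month, month, day of week, year), with `?` in one of the two day fields.

```python
def rate_expression(value: int, unit: str) -> str:
    """Build a rate expression such as rate(5 minutes) or rate(1 hour)."""
    if unit not in ("minute", "hour", "day"):
        raise ValueError("unit must be 'minute', 'hour', or 'day'")
    if value < 1:
        raise ValueError("value must be a positive integer")
    # EventBridge expects the singular unit for 1 and the plural otherwise.
    suffix = "" if value == 1 else "s"
    return f"rate({value} {unit}{suffix})"


def daily_cron_expression(hour_utc: int, minute: int = 0) -> str:
    """Build a cron expression that fires once a day at the given UTC time."""
    if not (0 <= hour_utc <= 23 and 0 <= minute <= 59):
        raise ValueError("hour_utc must be 0-23 and minute must be 0-59")
    return f"cron({minute} {hour_utc} * * ? *)"


print(rate_expression(15, "minute"))
print(daily_cron_expression(2, 30))
```

A rate expression suits a simple recurring sync, while a cron expression suits a sync that must run at a specific time, such as outside business hours.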

Step 8: Dashboard Workflow

In certain scenarios, you might want to continuously monitor deployed AWS resources to ensure that there are no configuration deviations since the last successful deployment. The automated Installation Qualification process discussed in Step 6 provides a point-in-time artifact of the resource’s post-deployment state. The IQ report is produced as part of the deployment process. In contrast to a point-in-time artifact, a dashboard provides you with visibility into your resources’ current state. With a continuous compliance monitoring dashboard, you could identify resources that have drifted from the last successful Infrastructure as Code deployment.

Amazon QuickSight (QuickSight) is a cloud-scale Business Intelligence visualization tool that you can use to build compliance dashboards for your GxP workload. QuickSight can be integrated with Amazon Athena (Athena), data stored on Amazon S3, or directly from DynamoDB. For data queries from Amazon S3, you can leverage AWS Glue to crawl through the data and specify tables and columns as data sources for Athena. Please note that you will need to perform data transformation steps such as labelling, aggregation, and metrics calculation before data is ready to be used in the QuickSight dashboard.
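As an illustration of the aggregation and metrics calculation mentioned above, the sketch below rolls individual check results up into a compliance percentage per resource type. The record fields `resource_type` and `result` are hypothetical. In practice, this kind of transformation might instead be expressed as an Athena query over the data in Amazon S3.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable


def summarize_compliance(records: Iterable[Dict[str, Any]]) -> Dict[str, Dict[str, Any]]:
    """Aggregate PASS/FAIL records into totals and a percentage per resource type."""
    totals: Dict[str, Dict[str, int]] = defaultdict(lambda: {"total": 0, "compliant": 0})
    for record in records:
        bucket = totals[record["resource_type"]]
        bucket["total"] += 1
        if record["result"] == "PASS":
            bucket["compliant"] += 1
    return {
        resource_type: {
            **counts,
            "compliant_pct": round(100.0 * counts["compliant"] / counts["total"], 1),
        }
        for resource_type, counts in totals.items()
    }


# Hypothetical check results exported for reporting.
sample_results = [
    {"resource_type": "AWS::EC2::Instance", "result": "PASS"},
    {"resource_type": "AWS::EC2::Instance", "result": "FAIL"},
    {"resource_type": "AWS::RDS::DBCluster", "result": "PASS"},
]
print(summarize_compliance(sample_results))
```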

Security Considerations

With regard to security and permissions, you should grant non-administrators read-only access to the AWS Management Console in the production account. Also, any deployment to a production environment should go through an Infrastructure as Code pipeline after the change management request and pull request are approved. You should enforce the principle of least privilege when setting up Service Control Policies (SCPs), AWS Identity and Access Management (IAM) policies, and resource-level policies.

As part of Control Tower, guardrails for preventive and detective controls are available to help you govern your resources and monitor compliance across a multi-account setup. There are two guardrail behaviors:

- Preventive: A preventive guardrail ensures that your accounts maintain compliance, because it disallows actions that lead to policy violations.

- Detective: A detective guardrail detects noncompliance of resources within your accounts, such as policy violations, and provides alerts through the dashboard.

We highly recommend that you explore the list of guardrails available in Control Tower and enable the ones applicable to your requirements. In addition to the guardrails available in Control Tower, you can also define custom preventive guardrails within AWS Organizations (Organizations) by using SCPs. SCPs help you to ensure your accounts stay within your organization’s access control guidelines.

Enabling AWS Security Hub and AWS Backup organization-wide, together with a tagging strategy, plays a key role in organizing your resources, allocating cost, automating processes, controlling access, and managing security risk. We also recommend having EventBridge policies in place to avoid any accidental deletion of event bus rules and configuration.
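One way to guard against accidental deletion of EventBridge rules is a deny policy, sketched below as a Python dictionary in IAM policy syntax. The role name `example-pipeline-role` is a hypothetical placeholder for the deployment pipeline's role, and the set of denied actions is an assumption that you would adjust to your own requirements.

```python
import json
from typing import Any, Dict


def build_eventbridge_protection_policy(exempt_role_name: str) -> Dict[str, Any]:
    """Deny rule deletion and disabling for every principal except one exempt role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ProtectEventBridgeRules",
                "Effect": "Deny",
                "Action": [
                    "events:DeleteRule",
                    "events:DisableRule",
                    "events:RemoveTargets",
                ],
                "Resource": "*",
                # Only the exempt role (assumed to be the IaC pipeline) may change rules.
                "Condition": {
                    "ArnNotLike": {
                        "aws:PrincipalArn": f"arn:aws:iam::*:role/{exempt_role_name}"
                    }
                },
            }
        ],
    }


policy = build_eventbridge_protection_policy("example-pipeline-role")
print(json.dumps(policy, indent=2))
```

Attached as an SCP, a policy of this shape would act as a custom preventive guardrail while still allowing the approved pipeline to manage the rules.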

Conclusion

Risk has traditionally been one of the reasons for slower change release cycles in validated systems. This blog proposed a risk-based approach to automate an end-to-end continuous compliance process including automated Installation Qualification to help optimize qualification time, and improve overall system compliance.

The steps discussed in this blog include suggestions on compliance automation, non-compliance event detection, continuous monitoring of defined attributes, and alert notification. Automating the GxP continuous compliance process will help strengthen the security and compliance posture of your cloud environment. Continuous compliance automation enables you to be more responsive and agile, and makes it more efficient to work closely with developer and operations teams to create and deploy code faster and more securely. Compliance automation also reduces human configuration errors, which improves security and gives your team more time to focus on the work that is critical to the business.

We recommend consulting with your technical and quality teams before implementing automated GxP controls to ensure that best practices are aligned with your existing Quality Management System. For help in implementing the reference architecture, please contact your AWS Account team.

Please refer to the documentation on AWS Security Hub, AWS Audit Manager, Amazon Inspector, and Amazon Detective for further reading about each service’s capabilities to help improve your compliance posture. For more detailed information about ways you could collect information at the operating system level using Systems Manager, please read Maintain an SAP landscape inventory with AWS Systems Manager and Amazon Athena.