AWS Storage Blog

Optimizing SAS Grid on AWS with Amazon FSx for Lustre

Many customers use the SAS Grid platform on premises to run complex, high-performance SAS-based applications for large-scale analytics. Customers with a strategy to move to open-source or cloud-native solutions often consider refactoring these applications to Python or R to lower their total cost of ownership. However, refactoring applications as part of a cloud migration journey is often complex, expensive, and time consuming. Migrating SAS platforms to AWS without refactoring is an alternative that increases agility and reduces data center footprint. In addition, customers often achieve similar or better performance and cost with this approach.

AWS Partner SAS provides SAS Grid, a shared analytic computing environment that enables high availability and accelerates processing for high-performance workloads such as machine learning, statistical processing, and big data analytics. In this post, we walk through common scenarios of a SAS migration project moving from on-premises compute clusters and storage arrays to Amazon EC2 and Amazon FSx for Lustre. We also cover best practices for disaster recovery, optimizing storage costs, and the additional business impact you can achieve by migrating your SAS Grid workloads to AWS.

Solution overview

SAS-based workloads generate a high volume of predominantly large-block, sequential I/O, typically large sequential reads and writes. Storage performance is the most critical component of implementing SAS in a grid environment. If your storage system is not built on a file system capable of handling these high-performance workloads, the application performs poorly. Even with enough compute, communication with the storage system adds latency, which amounts to “adding slow.” This is where Amazon FSx for Lustre shines. FSx for Lustre provides cost-effective, high-performance, scalable shared storage with sub-millisecond latencies, up to hundreds of gigabytes per second of throughput, and millions of IOPS. This makes it a great fit for compute-intensive workloads, such as financial analysis, at massive scale. FSx for Lustre “adds fast” by removing slow. By using Amazon EC2 for the SAS Grid compute nodes and FSx for Lustre for shared storage, you can accelerate your SAS workloads without long-term hardware commitments.
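Throughput on SSD-based FSx for Lustre file systems scales with provisioned storage capacity. Because of this, a first-pass sizing estimate is simple arithmetic. The following is a minimal sketch, not a sizing method from SAS or AWS. The core count (64) and per-core rate (150 MB/s) are hypothetical inputs; replace them with measurements from your own grid. The tier values are the PERSISTENT_2 per-unit-storage throughput options.

```python
# Illustrative FSx for Lustre sizing sketch. The per-core rate and core count
# are hypothetical inputs; substitute figures from your own SAS workload.

def required_throughput_mbps(physical_cores: int, mbps_per_core: float) -> float:
    """Aggregate storage throughput (MB/s) the SAS Grid nodes would demand."""
    return physical_cores * mbps_per_core


def min_storage_tib(required_mbps: float, mbps_per_tib: float) -> float:
    """Smallest capacity (TiB) whose baseline throughput meets the demand.

    SSD-based FSx for Lustre provisions baseline throughput as
    (storage capacity in TiB) x (per-unit-storage throughput in MB/s/TiB).
    The result still has to be rounded up to the deployment type's
    capacity increments.
    """
    return required_mbps / mbps_per_tib


if __name__ == "__main__":
    demand = required_throughput_mbps(physical_cores=64, mbps_per_core=150)
    # Per-unit-storage throughput options for the PERSISTENT_2 deployment type.
    for tier in (125, 250, 500, 1000):
        print(f"{tier:>4} MB/s/TiB -> at least {min_storage_tib(demand, tier):.1f} TiB")
```

Choosing a higher throughput tier trades a smaller capacity for a higher price per TiB. Compare both totals before settling on a configuration.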

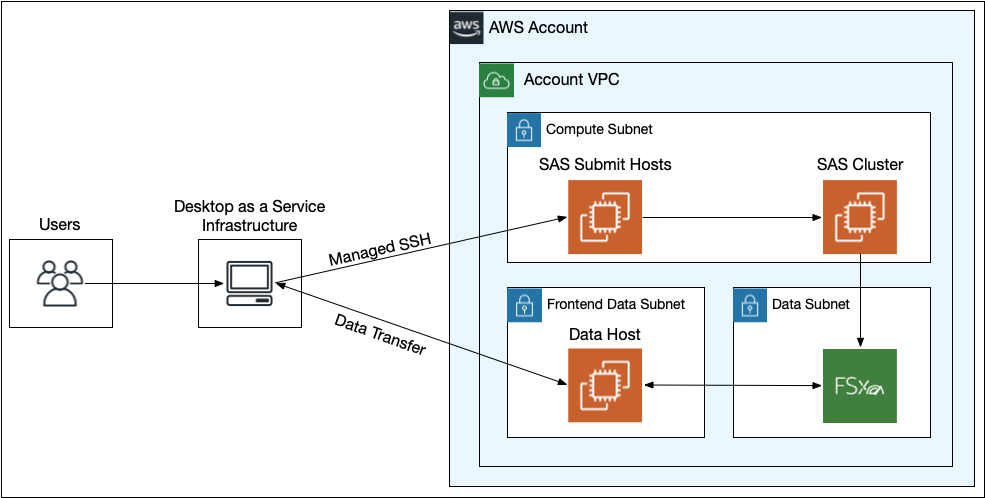

Figure 1: Running SAS Grid on AWS architecture

Data transfer planning

When planning a SAS Grid data migration, the predominant question is “What will it take to get X petabytes of data to the cloud?” This question breaks down into a few considerations.

- POSIX permissions must be preserved throughout the transfer, because SAS relies on them to present each user with only the files and directories they are entitled to use.

- The data being moved or copied may contain Non-Public Personal Information (NPI) and Personally Identifiable Information (PII). If the data might contain either, work closely with your Information Security team on how to handle it; it may be necessary to redact, exclude, or scan certain datasets. Many levels of approval will likely be required before the data migration can begin.

- Use encryption at rest and in transit throughout the data migration. To achieve this, consider the pattern of your transfer. Although the FSx for Lustre file system can be mounted on an on-premises virtual machine (VM), data on that path would not be encrypted in transit. Instead, mount the file system on an EC2 instance type that supports transparent encryption in transit.
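After a copy, it can help to spot-check that the first requirement in the list, preserved POSIX permissions, actually held. This is a minimal sketch that assumes a single host can see both trees, for example the source over an NFS mount and the FSx for Lustre mount point. It compares numeric mode bits, uid, and gid for every entry. The function name and the command-line usage are hypothetical.

```python
import os
import stat
import sys


def permission_mismatches(src_root: str, dst_root: str) -> list:
    """Compare mode bits, owner (uid), and group (gid) of every entry under
    src_root with the same relative path under dst_root."""
    problems = []
    for dirpath, dirnames, filenames in os.walk(src_root):
        for name in sorted(dirnames + filenames):
            src_path = os.path.join(dirpath, name)
            rel = os.path.relpath(src_path, src_root)
            # lstat() so symbolic links are compared as links, not followed.
            try:
                d = os.lstat(os.path.join(dst_root, rel))
            except FileNotFoundError:
                problems.append(f"missing: {rel}")
                continue
            s = os.lstat(src_path)
            src_attrs = (stat.S_IMODE(s.st_mode), s.st_uid, s.st_gid)
            dst_attrs = (stat.S_IMODE(d.st_mode), d.st_uid, d.st_gid)
            if src_attrs != dst_attrs:
                problems.append(f"differs: {rel}")
    return problems


if __name__ == "__main__" and len(sys.argv) == 3:
    issues = permission_mismatches(sys.argv[1], sys.argv[2])
    print("\n".join(issues) if issues else "POSIX permissions match")
    sys.exit(1 if issues else 0)
```

The check compares numeric uid and gid values rather than user names. It therefore assumes the on-premises hosts and the EC2 instances resolve the same IDs.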

Given the preceding requirements, you can use fpsync, a tool that runs multiple rsync processes in parallel, to migrate data from the on-premises host to an EC2 instance. That instance must support transparent encryption in transit and have the FSx for Lustre file system mounted.

Take into account how much network bandwidth is available and how much of it you can safely use without saturating the network. Obtaining the necessary approvals to start the transfer, and monitoring the transfer appropriately once it starts, are also critical.
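To make “What will it take to get X petabytes of data to the cloud?” concrete, you can estimate wall-clock time from the data size and the approved share of the link. This is a back-of-the-envelope sketch, not a planning tool. The `efficiency` factor is a hypothetical allowance for protocol overhead, small-file costs, and retries. Calibrate it against your own test transfers.

```python
def transfer_days(data_tib: float, link_gbps: float, usable_fraction: float,
                  efficiency: float = 0.8) -> float:
    """Rough wall-clock days to copy data_tib over a link_gbps network link.

    usable_fraction: share of the link the networking team approves (0-1).
    efficiency: hypothetical allowance for protocol overhead, small files,
    and retries; 1.0 means the approved bandwidth is fully used.
    """
    bits_to_move = data_tib * 2**40 * 8
    effective_bps = link_gbps * 1e9 * usable_fraction * efficiency
    return bits_to_move / effective_bps / 86_400


if __name__ == "__main__":
    # 1 PiB (1,024 TiB) over a 10 Gbps link, half of which is approved for the migration.
    print(f"{transfer_days(1024, 10, 0.5):.1f} days")
```

Even at perfect efficiency, 1 PiB at 5 Gbps takes roughly three weeks. This is why the bandwidth budget discussed above deserves early attention.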

Data transfer execution

To determine how much bandwidth is available between the on-premises host and the EC2 instance in AWS, run preliminary tests with a network throughput benchmark tool such as iperf. Once you know the total available bandwidth, work with your networking team(s) to decide how much of it you can safely consume without saturating and impacting the production corporate network. You can set bandwidth limits in fpsync to accommodate any restrictions. Close coordination with your networking team is necessary to monitor the transfer and overall network health. Designate someone with 24/7 availability who can start and stop the transfer, in case it must be halted due to network disruption.
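fpsync runs several rsync jobs at once, so a total bandwidth budget has to be split across them. This sketch divides an approved budget in megabits per second evenly across `-n` jobs. It then converts each share to KiB/s for rsync's `--bwlimit` option, passed through fpsync's `-o` (tool options) flag. The rsync option string reflects our understanding of fpsync's defaults; confirm it with `fpsync -h` for your version. The paths, host name, job count, and budget are hypothetical.

```python
import shlex

# rsync options that preserve links, permissions, times, group, owner and
# device files and keep numeric uid/gid values. We believe these match
# fpsync's default rsync options; confirm with `fpsync -h` for your version.
RSYNC_OPTS = "-lptgoD -v --numeric-ids"


def per_job_bwlimit_kib(total_budget_mbps: float, jobs: int) -> int:
    """Split the approved budget (megabits/s) evenly across fpsync's
    parallel rsync jobs, converted to rsync's --bwlimit unit (KiB/s)."""
    bytes_per_job = total_budget_mbps * 1_000_000 / 8 / jobs
    return int(bytes_per_job // 1024)


def fpsync_command(src: str, dst: str, jobs: int, total_budget_mbps: float) -> list:
    """Build an fpsync argument list with -n parallel jobs and a per-job cap."""
    limit = per_job_bwlimit_kib(total_budget_mbps, jobs)
    return ["fpsync", "-n", str(jobs), "-o", f"{RSYNC_OPTS} --bwlimit={limit}", src, dst]


if __name__ == "__main__":
    # Hypothetical paths: an on-premises SAS data directory and an EC2 host
    # (reached over SSH) with FSx for Lustre mounted at /fsx.
    cmd = fpsync_command("/sasdata/", "ec2-user@transfer-host:/fsx/sasdata/", 16, 4000)
    print(shlex.join(cmd))
```

Because each rsync process is capped separately, the combined rate stays at or below the approved budget, however the files are distributed across jobs.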

Disaster recovery planning

Given that SAS is a production system, there are disaster recovery (DR) considerations that must be accounted for when planning the migration. In order to achieve a low Recovery Time Objective (RTO) and short Recovery Point Objective (RPO) while minimizing extraneous cost, you can implement a backup and restore DR strategy with replication between two AWS Regions. While SAS is installed on EC2 instances, all SAS-related data is stored on the shared FSx for Lustre file system to accommodate the storage and performance needs of the SAS Grid platform.

You can add AWS Backup to your SAS deployment to support a low RTO and short RPO with cross-Region or cross-account backups. With AWS Backup, you can create hourly incremental backups of the FSx for Lustre file system. These backups are automatically copied from the primary Region to a backup vault in the secondary Region. To further lower your RTO, you can automate the SAS Grid installation process with an AWS CloudFormation template so that minimal post-installation work is needed. In a DR scenario, you can quickly create a new FSx for Lustre file system from your AWS Backup vault in the secondary Region. Once the file system is created, use your CloudFormation template to create the necessary infrastructure and bootstrap the Amazon EC2 instances. This approach supports an RTO of less than 6 hours and an RPO of one hour.
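The hourly backup with a cross-Region copy can be expressed as an AWS Backup plan. This sketch builds the `BackupPlan` structure accepted by the `CreateBackupPlan` API. It also shows, in an uncalled helper, how boto3's `create_backup_plan` and `create_backup_selection` could attach the file system. The vault names, ARNs, IAM role, window lengths, and 35-day retention are hypothetical choices; adjust them to your own RPO and retention policy.

```python
import json


def hourly_backup_plan(plan_name: str, vault_name: str, dr_vault_arn: str,
                       retain_days: int = 35) -> dict:
    """BackupPlan structure for AWS Backup CreateBackupPlan: hourly backups
    into vault_name, each copied to a vault in the DR Region."""
    return {
        "BackupPlanName": plan_name,
        "Rules": [{
            "RuleName": "hourly-fsx-lustre",
            "TargetBackupVaultName": vault_name,
            "ScheduleExpression": "cron(0 * ? * * *)",  # minute 0 of every hour (UTC)
            "StartWindowMinutes": 60,
            "CompletionWindowMinutes": 120,
            "Lifecycle": {"DeleteAfterDays": retain_days},
            "CopyActions": [{
                "DestinationBackupVaultArn": dr_vault_arn,
                "Lifecycle": {"DeleteAfterDays": retain_days},
            }],
        }],
    }


def apply_plan(plan: dict, file_system_arn: str, role_arn: str) -> str:
    """Create the plan and assign the FSx for Lustre file system to it
    (requires boto3 and AWS credentials; not called in this sketch)."""
    import boto3
    backup = boto3.client("backup")
    plan_id = backup.create_backup_plan(BackupPlan=plan)["BackupPlanId"]
    backup.create_backup_selection(
        BackupPlanId=plan_id,
        BackupSelection={"SelectionName": "sas-fsx", "IamRoleArn": role_arn,
                         "Resources": [file_system_arn]},
    )
    return plan_id


if __name__ == "__main__":
    # Hypothetical names and ARN; replace with your own.
    print(json.dumps(hourly_backup_plan(
        "sas-grid-fsx-hourly", "sas-primary-vault",
        "arn:aws:backup:us-west-2:111122223333:backup-vault:sas-dr-vault"), indent=2))
```

Keeping the plan as data makes it easy to review or port into the same CloudFormation templates used to rebuild the SAS Grid infrastructure.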

Optimizing storage costs with FSx for Lustre

With the FSx for Lustre data compression feature, you can reduce your data footprint, which lowers storage cost and can improve effective file system throughput. FSx for Lustre automatically compresses newly written files before they are written to disk and automatically decompresses them when they are read. For compressible data, this means less storage space. It can also mean faster data access, because less data moves between the file servers and storage. Different data compresses at different rates: some data types (such as text) compress by a large percentage, while others (such as imagery like scanned documents) barely compress at all.

You can enable compression on new or existing file systems through the console, the AWS CLI, or the Amazon FSx API. In the console, compression type is an option under “File System Details.” The create-file-system and update-file-system API operations include a DataCompressionType option, which you set to either NONE or LZ4. LZ4 compression is optimized to deliver high levels of compression without adversely impacting file system performance. LZ4 is a performance-oriented algorithm trusted by the Lustre community, and it balances compression speed against compressed file size.
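As an illustration of the API route, this sketch builds the arguments for boto3's FSx `update_file_system` call, with `DataCompressionType` set inside `LustreConfiguration`. It rejects values other than `NONE` and `LZ4`. The file system ID is a placeholder, and the boto3 call sits in a helper that the sketch does not invoke.

```python
VALID_COMPRESSION_TYPES = {"NONE", "LZ4"}


def compression_update_request(file_system_id: str, compression: str = "LZ4") -> dict:
    """Keyword arguments for the FSx UpdateFileSystem call that sets
    DataCompressionType on an FSx for Lustre file system."""
    value = compression.upper()
    if value not in VALID_COMPRESSION_TYPES:
        raise ValueError(f"DataCompressionType must be one of {sorted(VALID_COMPRESSION_TYPES)}")
    return {"FileSystemId": file_system_id,
            "LustreConfiguration": {"DataCompressionType": value}}


def set_compression(file_system_id: str, compression: str = "LZ4") -> dict:
    """Send the update (requires boto3 and AWS credentials; not called here)."""
    import boto3
    return boto3.client("fsx").update_file_system(
        **compression_update_request(file_system_id, compression))


if __name__ == "__main__":
    print(compression_update_request("fs-0123456789abcdef0"))
```

The equivalent AWS CLI call is `aws fsx update-file-system --file-system-id fs-0123456789abcdef0 --lustre-configuration DataCompressionType=LZ4`.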

You can change the compression setting of an existing file system by using the update-file-system API operation or the console, but the change has no effect on data that already exists in the file system. To compress existing data, run lfs migrate against the files in the file system; this rewrites them in place, compressing the data as it is written. You can observe data compression rates by using du --apparent-size to view the uncompressed size and du to view the compressed size.
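The du comparison can also be scripted when you want a single ratio for a directory tree. This sketch sums each regular file's apparent size (`st_size`, as `du --apparent-size` reports) and its allocated size (`st_blocks` × 512 bytes, as plain `du` reports). It assumes a Linux client where `st_blocks` counts 512-byte units. Unlike du, it counts a hard-linked file once per link.

```python
import os
import stat
import sys


def disk_usage(root: str) -> tuple:
    """Return (apparent_bytes, allocated_bytes) for regular files under root.

    apparent_bytes mirrors `du --apparent-size`; allocated_bytes mirrors plain
    `du`, which on a compressed FSx for Lustre file system reflects the
    compressed size. Unlike du, hard-linked files are counted once per link.
    """
    apparent = allocated = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            st = os.lstat(os.path.join(dirpath, name))
            if not stat.S_ISREG(st.st_mode):
                continue  # skip symlinks, sockets, and other special files
            apparent += st.st_size
            allocated += st.st_blocks * 512  # st_blocks is in 512-byte units on Linux
    return apparent, allocated


if __name__ == "__main__" and len(sys.argv) == 2:
    logical, physical = disk_usage(sys.argv[1])
    print(f"apparent {logical} B, allocated {physical} B")
    if physical:
        print(f"compression ratio {logical / physical:.2f}:1")
```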

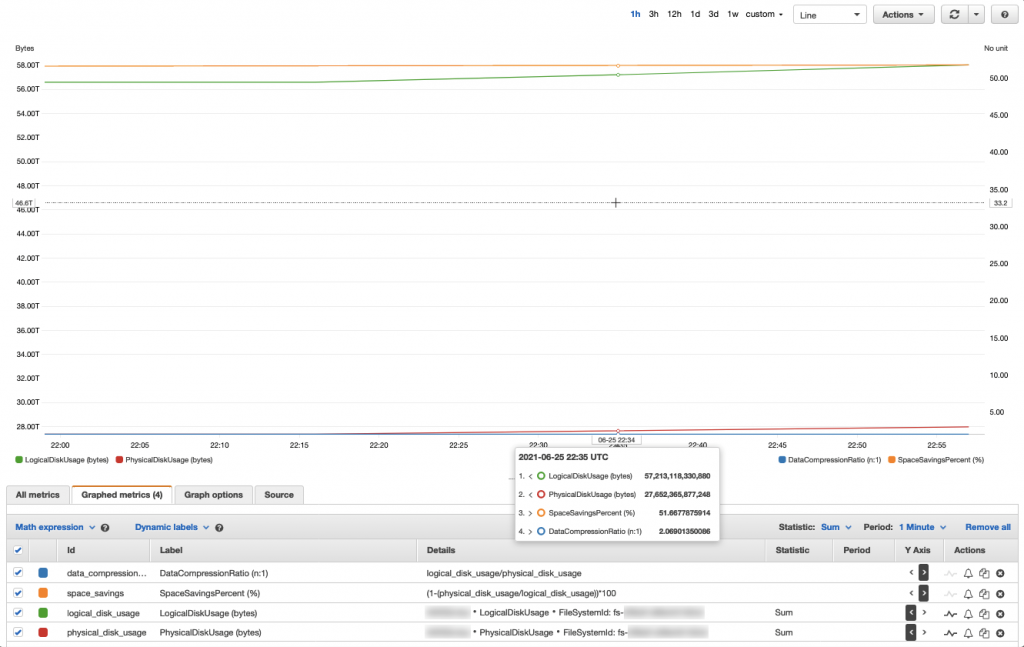

Figure 2: FSx for Lustre data compression usage and savings metrics example

Data compression rates can also be viewed in an Amazon CloudWatch dashboard (Figure 2). If a file system has, or has ever had, data compression enabled (you can enable or disable data compression at any time), two additional CloudWatch metrics are available to monitor disk usage. The LogicalDiskUsage metric shows the total logical disk usage (without compression), and the PhysicalDiskUsage metric shows the total physical disk usage (with compression). In Figure 2, the customer’s space savings is 51.66%, which translates to a data compression ratio of 2.06. In other words, roughly 57 TiB of data takes up only roughly 27 TiB with the data compression feature enabled.

To calculate the file system’s compression ratio, divide the Sum of the LogicalDiskUsage statistic by the Sum of the PhysicalDiskUsage statistic. The result is typically expressed as an N:1 ratio (for example, 3.75:1). To calculate the file system’s space savings, divide the Sum of the PhysicalDiskUsage statistic by the Sum of the LogicalDiskUsage statistic and subtract that quotient from 1. The result is typically expressed as a percentage (for example, 73.33%, which corresponds to a 3.75:1 ratio). More best practices for this feature are covered in the Spend less while increasing performance with Amazon FSx for Lustre data compression blog post.
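The two formulas translate directly into code. This sketch implements them and includes an uncalled helper that reads a metric's latest `Sum` datapoint with boto3's CloudWatch `get_metric_statistics`. The helper assumes the `AWS/FSx` namespace, a `FileSystemId` dimension, and a 60-second period. When you compute the ratio, read both metrics for the same period.

```python
def compression_ratio(logical: float, physical: float) -> float:
    """Sum(LogicalDiskUsage) / Sum(PhysicalDiskUsage), read as N:1."""
    return logical / physical


def space_savings(logical: float, physical: float) -> float:
    """1 - Sum(PhysicalDiskUsage) / Sum(LogicalDiskUsage), as a fraction."""
    return 1 - physical / logical


def latest_sum(file_system_id: str, metric_name: str, minutes: int = 60):
    """Most recent Sum datapoint for an AWS/FSx metric (requires boto3 and
    AWS credentials; not called in this sketch)."""
    from datetime import datetime, timedelta, timezone
    import boto3
    end = datetime.now(timezone.utc)
    resp = boto3.client("cloudwatch").get_metric_statistics(
        Namespace="AWS/FSx",
        MetricName=metric_name,
        Dimensions=[{"Name": "FileSystemId", "Value": file_system_id}],
        StartTime=end - timedelta(minutes=minutes),
        EndTime=end,
        Period=60,
        Statistics=["Sum"],
    )
    points = sorted(resp["Datapoints"], key=lambda p: p["Timestamp"])
    return points[-1]["Sum"] if points else None


if __name__ == "__main__":
    # Worked example from the text: a 3.75:1 ratio corresponds to 73.33% savings.
    print(f"{compression_ratio(3.75, 1):.2f}:1, {space_savings(3.75, 1):.2%} saved")
```

The two values are linked: space savings equals 1 − 1/ratio. A 2:1 ratio, for example, always means 50% savings.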

Performance impact

In our experience at AWS, SAS applications regularly run over 45% faster for customers without any code changes or application modifications. Customers see these benefits through a combination of modern EC2 instance types and the high bandwidth of Amazon FSx for Lustre. For example, we have observed SAS application runtimes drop from over 15 minutes to just over 7 minutes, an improvement of more than 50% with zero code changes. Many customers continue to use SAS because of complex applications, developed over many years, that are critical to their operations and need to be carried forward. They need to maintain this code and the business processes it enables while continuing to improve security, cost efficiency, and reliability. By migrating their SAS cluster and the SAS applications running on it to the cloud, they remove a major impediment to closing data centers.

By moving SAS to the cloud, you enable your business units to write SAS applications that work in concert with cloud-native tools such as Python, R, and Amazon SageMaker. This lets your business units expand the use of your data with new workloads and solve problems efficiently with purpose-built tools, while integrating the established logic and processing in SAS. By running SAS Grid on AWS, your developers can use the latest AWS high performance compute instances, networking, and storage for their workloads, potentially increasing performance over what you observe on premises.

Conclusion

In this blog post, we described best practices for migrating SAS Grid to AWS with Amazon FSx for Lustre and showed how its data compression feature reduces cost. FSx for Lustre can help you migrate your SAS infrastructure to the cloud while reducing data storage costs. Many customers can benefit from moving applications that require high performance and shared, POSIX-compliant cluster storage to the cloud.

AWS Compute and Storage solutions help our customers easily integrate SAS Grid into their existing cloud environments. This can help reduce their IT total cost of ownership (TCO), increase overall performance of jobs running on SAS in AWS, and reduce the footprint of their data by leveraging Amazon FSx for Lustre and its data compression feature.

Thanks for reading this blog post. If you have any questions or comments, leave them in the comments section!