AWS Partner Network (APN) Blog

Amazon Redshift Benchmarking: Comparison of RA3 vs. DS2 Instance Types

By Jayaraman Palaniappan, CTO & Head of Innovation Labs at Agilisium

By Smitha Basavaraju, Big Data Architect at Agilisium

By Saunak Chandra, Sr. Solutions Architect at AWS

Agilisium Consulting, an AWS Advanced Consulting Partner with the Amazon Redshift Service Delivery designation, is excited to provide an early look at Amazon Redshift’s ra3.4xlarge instance type (RA3).

This post details the result of various tests comparing the performance and cost for the RA3 and DS2 instance types. It will help Amazon Web Services (AWS) customers make an informed decision on choosing the instance type best suited to their data storage and compute needs.

As a result of choosing the appropriate instance, your applications can perform better while also optimizing costs.

The test runs are based on the industry-standard benchmarking kit from the Transaction Processing Performance Council (TPC).

Amazon Redshift RA3 Architecture

The new RA3 instance type can scale data warehouse storage capacity automatically, without manual intervention and without adding compute resources.

RA3 is based on AWS Nitro and includes support for Amazon Redshift managed storage, which automatically manages data placement across tiers of storage and caches the hottest data in high-performance local storage.

The instance type also offloads colder data to Amazon Simple Storage Service (Amazon S3) storage managed by Amazon Redshift.

RA3 optimizes performance using techniques based on block temperature, data-block age, and workload patterns. An RA3 cluster can be resized with elastic resize to add or remove compute capacity; if elastic resize is unavailable for the chosen configuration, classic resize can be used instead.

RA3 nodes with managed storage are an excellent fit for analytics workloads that require high storage capacity. They are especially well suited to workloads such as operational analytics, where the subset of data that’s most important continually evolves over time. In the past, fixed storage limits created pressure to offload or archive historical data to other storage.

You can upgrade to RA3 instances within minutes, regardless of the size of your current Amazon Redshift clusters. To learn more, please refer to the RA3 documentation.

Infrastructure

Amazon Redshift’s ra3.16xlarge node type, released during re:Invent 2019, was the first Amazon Redshift node type to separate compute and storage. Because of heavy demand from customers with less compute-intensive workloads, Amazon Redshift then launched the ra3.4xlarge instance type in April 2020.

Customers using the existing DS2 (dense storage) clusters are encouraged to upgrade to RA3 clusters. A benchmarking exercise like this can quantify the benefits offered by the RA3 cluster.

For this benchmarking exercise, we chose two Amazon Redshift clusters: one with RA3 nodes and one with DS2 nodes. Their specifications are summarized in the infrastructure table in the WLM Configuration section below. Both clusters cost roughly the same to run.

Note: A cluster using the ra3.4xlarge node type can be created with up to 32 nodes, and elastic resize can scale it to a maximum of 64 nodes.

WLM Configuration

We decided to use TPC-DS data as a baseline because it’s the industry standard. The volume of uncompressed data was 3 TB. After ingestion into the Amazon Redshift database, the compressed data size was 1.5 TB.

We sized the RA3 and DS2 clusters to handle 1.5 TB of compressed data. All testing used manual WLM (workload management) with the following settings to establish baseline performance:

- Queue: Default

- Concurrency on main: 10

- Concurrency auto scaling: Auto

- Short query acceleration: Enabled
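To reproduce this setup, these settings can be expressed as the `wlm_json_configuration` parameter of a cluster parameter group. The sketch below builds that JSON value. The property names (`query_concurrency`, `concurrency_scaling`, `short_query_queue`) reflect our understanding of the documented WLM JSON format and should be checked against the Amazon Redshift documentation. The parameter group name is a hypothetical placeholder, and the boto3 step requires AWS credentials.

```python
import json


def build_wlm_json(main_concurrency: int = 10) -> str:
    """Return a manual WLM configuration mirroring the benchmark settings.

    Assumption: property names follow the wlm_json_configuration format
    described in the Amazon Redshift documentation.
    """
    queues = [
        {
            # Default queue: no user groups or query groups routed explicitly.
            "query_group": [],
            "user_group": [],
            "query_concurrency": main_concurrency,  # "Concurrency on main: 10"
            "concurrency_scaling": "auto",          # "Concurrency auto scaling: Auto"
        },
        # Enables short query acceleration (SQA).
        {"short_query_queue": True},
    ]
    return json.dumps(queues)


def apply_wlm(parameter_group: str, wlm_json: str) -> None:
    """Push the WLM JSON to a parameter group (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so build_wlm_json() works without boto3

    client = boto3.client("redshift")
    client.modify_cluster_parameter_group(
        ParameterGroupName=parameter_group,
        Parameters=[
            {
                "ParameterName": "wlm_json_configuration",
                "ParameterValue": wlm_json,
            }
        ],
    )


if __name__ == "__main__":
    print(build_wlm_json())
    # apply_wlm("benchmark-wlm-params", build_wlm_json())  # hypothetical group name
```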

The table below summarizes the infrastructure specifications used for the benchmarking:

| Node Type | Number of Nodes | Total vCPU | Total Data Storage (TB) | Total Memory (GiB) | I/O (GB/s) | Cost per Hour per Node (On-Demand) | Total Cluster Cost per Hour |
|---|---|---|---|---|---|---|---|
| ra3.4xlarge | 2 | 24 | 128 | 192 | 4 | $3.26 + $0.024 per GB-month | $6.67 |
| ds2.xlarge | 8 | 32 | 16 | 104 | 3.2 | $0.85 | $6.80 |
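The hourly totals in the table can be checked with simple arithmetic. The sketch below multiplies the per-node on-demand rates by the node counts. For RA3, it adds a managed storage charge prorated from the per-GB-month rate. The 730-hours-per-month proration is our assumption, and the managed storage volume is an input you supply.

```python
HOURS_PER_MONTH = 730  # assumption: a common convention for prorating monthly prices


def ds2_cluster_cost_per_hour(nodes: int, node_rate: float = 0.85) -> float:
    """On-demand hourly cost of a DS2 cluster (local storage is included in the node price)."""
    return round(nodes * node_rate, 2)


def ra3_cluster_cost_per_hour(
    nodes: int,
    managed_storage_gb: float,
    node_rate: float = 3.26,
    storage_rate_gb_month: float = 0.024,
) -> float:
    """On-demand hourly cost of an RA3 cluster: compute per node plus managed storage."""
    compute = nodes * node_rate
    storage = managed_storage_gb * storage_rate_gb_month / HOURS_PER_MONTH
    return round(compute + storage, 2)


print(ds2_cluster_cost_per_hour(8))                        # 6.8
print(ra3_cluster_cost_per_hour(2, managed_storage_gb=0))  # 6.52 (compute only)
```

The DS2 result matches the table's $6.80. For RA3, compute accounts for $6.52 per hour, and managed storage is billed on top of that.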

Test Data

For this test, we used the TPC Benchmark DS (TPC-DS), which is intended for general performance benchmarking. We decided the TPC-DS queries were the best fit for our benchmarking needs.

For the test, we imported the 3 TB dataset from the public S3 buckets referenced in the AWS Cloud DW Benchmark repository on GitHub.
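Loading TPC-DS data from Amazon S3 into Amazon Redshift is typically done with the `COPY` command. The sketch below generates one `COPY` statement per table. The bucket prefix, IAM role ARN, Region, and file format options (`GZIP`, pipe delimiter) are hypothetical placeholders, not the exact values used in this benchmark. Check the benchmark repository for the actual locations and formats.

```python
# Hypothetical values: replace with the dataset location and role for your account.
S3_PREFIX = "s3://example-tpcds-bucket/3TB"
IAM_ROLE = "arn:aws:iam::123456789012:role/ExampleRedshiftCopyRole"
REGION = "us-east-1"

# A subset of the TPC-DS tables; the full schema has 24 tables.
TABLES = ["store_sales", "catalog_sales", "web_sales", "customer", "date_dim"]


def copy_statement(table: str) -> str:
    """Build a COPY command that loads gzip-compressed, pipe-delimited files."""
    return (
        f"COPY {table} "
        f"FROM '{S3_PREFIX}/{table}/' "
        f"IAM_ROLE '{IAM_ROLE}' "
        "GZIP DELIMITER '|' EMPTYASNULL "
        f"REGION '{REGION}';"
    )


for table in TABLES:
    print(copy_statement(table))
```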

Test Scope

We measured and compared the results of the following parameters on both cluster types:

- CPU

- Query performance

- I/O – read and write

The following scenarios were executed on different Amazon Redshift clusters to gauge performance:

| Test Type | Number of Users | Queries per User | Total Queries |
|---|---|---|---|
| Single user | 1 | 99 | 99 |
| Concurrent users | 5 | 99 | 495 |
| Concurrent users | 15 | 99 | 1,485 |
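The scenarios above could be driven by a small harness. In this sketch, each simulated user runs the full set of 99 TPC-DS queries, and overall throughput is the total number of completed queries divided by wall-clock time. This is a hypothetical harness, not the tooling used for this benchmark, and the throughput definition is our assumption. `run_query` is a stand-in to be replaced with a call through your database driver.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence

QUERIES_PER_USER = 99  # one full pass over the TPC-DS query set


def run_user(queries: Sequence[str], run_query: Callable[[str], None]) -> int:
    """Run every query sequentially for one simulated user; return how many ran."""
    for sql in queries:
        run_query(sql)
    return len(queries)


def run_scenario(users: int, queries: Sequence[str],
                 run_query: Callable[[str], None]) -> tuple[int, float]:
    """Run `users` concurrent users; return (total queries, queries per second)."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=users) as pool:
        futures = [pool.submit(run_user, queries, run_query) for _ in range(users)]
        total = sum(f.result() for f in futures)
    elapsed = time.perf_counter() - start
    return total, total / elapsed


if __name__ == "__main__":
    # Placeholder workload: replace with real TPC-DS SQL and a real driver call.
    fake_queries = [f"-- TPC-DS query {i}" for i in range(1, QUERIES_PER_USER + 1)]
    for users in (1, 5, 15):
        total, qps = run_scenario(users, fake_queries, lambda sql: time.sleep(0.001))
        print(f"{users} user(s): {total} queries, {qps:.1f} queries/sec")
```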

Query Performance

With the improved I/O performance of ra3.4xlarge instances, we observed the following query performance results:

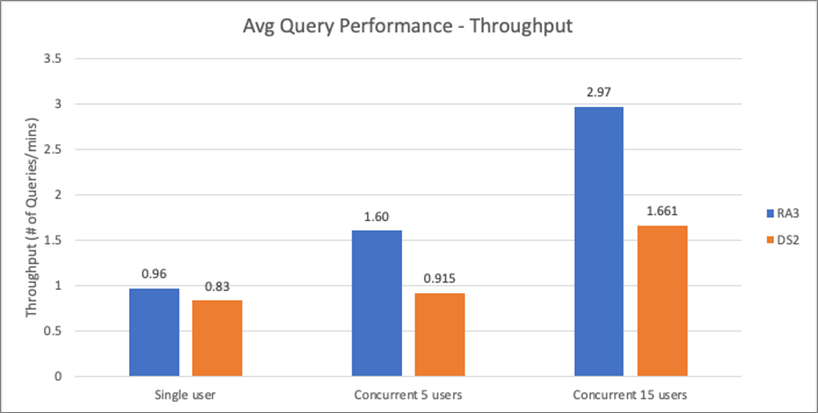

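- Overall query throughput was substantially higher on RA3 for concurrent users (both five and 15 users). DS2 delivered only 56 to 57 percent of RA3's throughput, so RA3's throughput was roughly 1.75 to 1.79 times that of DS2.

- The difference was smaller for the single-user test, where DS2 reached 86 percent of RA3's throughput. In real-world scenarios, single-user test results do not provide much value.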

| Test | RA3 Throughput | DS2 Throughput | DS2 as % of RA3 |
|---|---|---|---|
| Single user | 0.964 | 0.830 | 86% |
| Concurrent 5 users | 1.601 | 0.915 | 57% |
| Concurrent 15 users | 2.966 | 1.661 | 56% |
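The last column of the table gives DS2 throughput as a percentage of RA3 throughput. Inverting the ratio gives RA3's speedup over DS2. Here is a short check using the values from the table:

```python
# Throughput values (RA3, DS2) copied from the table above; higher is better.
results = {
    "Single user": (0.964, 0.830),
    "Concurrent 5 users": (1.601, 0.915),
    "Concurrent 15 users": (2.966, 1.661),
}

for test, (ra3, ds2) in results.items():
    ds2_pct = round(100 * ds2 / ra3)  # reproduces the percentage column
    speedup = ra3 / ds2               # how many times higher RA3 throughput is
    print(f"{test}: DS2 = {ds2_pct}% of RA3, RA3 speedup = {speedup:.2f}x")
```

The output shows speedups of 1.16x, 1.75x, and 1.79x. For concurrent workloads, RA3 delivered roughly 1.75 to 1.79 times the throughput of DS2 at a similar hourly cost.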

The graph below shows that RA3 consistently outperformed DS2 instances across both the single-user and concurrent-user tests.

Figure 1 – Query performance metrics: throughput (higher is better).

CPU Performance – Single and Concurrent User

RA3 instance types use solid state drives (SSDs) for local storage, whereas DS2 instances use hard disk drives (HDDs). We wanted to measure the impact this change in the storage layer has on CPU utilization.

Customers often track the CPU utilization metric over time as an indicator of when to resize their cluster. For example, CPU utilization hovering around 90 percent implies the cluster is processing at its peak compute capacity.

In this case, a suitable action may be to resize the cluster, adding nodes to provide more compute capacity. This is particularly important for RA3 instances, because storage is separate from compute and customers can add or remove compute capacity independently.
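One way to track this signal is to pull the cluster's `CPUUtilization` metric from Amazon CloudWatch (namespace `AWS/Redshift`, dimension `ClusterIdentifier`) and flag sustained high averages. The fetch step in the sketch below assumes boto3 and AWS credentials. The 90 percent threshold mirrors the example above. The "sustained" rule (mean over the window) and any cluster identifier you pass in are hypothetical choices, not an AWS recommendation.

```python
from datetime import datetime, timedelta, timezone
from typing import Iterable


def fetch_cpu_averages(cluster_id: str, hours: int = 24, period: int = 300) -> list[float]:
    """Return average CPUUtilization datapoints (percent) for the last `hours`, oldest first."""
    import boto3  # lazy import: only needed when talking to AWS

    cloudwatch = boto3.client("cloudwatch")
    end = datetime.now(timezone.utc)
    resp = cloudwatch.get_metric_statistics(
        Namespace="AWS/Redshift",
        MetricName="CPUUtilization",
        Dimensions=[{"Name": "ClusterIdentifier", "Value": cluster_id}],
        StartTime=end - timedelta(hours=hours),
        EndTime=end,
        Period=period,
        Statistics=["Average"],
    )
    points = sorted(resp["Datapoints"], key=lambda p: p["Timestamp"])
    return [p["Average"] for p in points]


def needs_more_compute(cpu_averages: Iterable[float], threshold: float = 90.0) -> bool:
    """Hypothetical rule: flag a resize when mean CPU over the window >= threshold."""
    values = list(cpu_averages)
    return bool(values) and sum(values) / len(values) >= threshold


if __name__ == "__main__":
    print(needs_more_compute([88.0, 92.5, 91.0]))  # mean 90.5 -> True
    print(needs_more_compute([45.0, 60.0]))        # mean 52.5 -> False
```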

For this setup, we chose a manual WLM configuration. The graph below shows CPU utilization measured under three scenarios: single user, 5 concurrent users, and 15 concurrent users.

Figure 2 – CPU performance metrics.

This graph shows that CPU utilization remained the same regardless of the number of users. Considering that the RA3 setup provides 25 percent fewer vCPUs than the DS2 setup (24 vs. 32, as shown in the infrastructure table above), this observation is not surprising.

I/O Performance – Read and Write IOPS

With ample SSD storage, ra3.4xlarge has a higher provisioned I/O of 2 GB/s per node, compared to 0.4 GB/s per node for ds2.xlarge, which uses HDD storage.
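The cluster-level I/O figures in the infrastructure table follow from these per-node rates multiplied by the node counts:

```python
# Per-node provisioned I/O (GB/s) and node counts, as given in this post.
clusters = {
    "ra3.4xlarge": {"io_per_node": 2.0, "nodes": 2},
    "ds2.xlarge": {"io_per_node": 0.4, "nodes": 8},
}

for node_type, spec in clusters.items():
    total_io = spec["io_per_node"] * spec["nodes"]
    print(f"{node_type}: {spec['nodes']} x {spec['io_per_node']} GB/s = {total_io:.1f} GB/s")

# Per node, RA3 provisions 5x the I/O of DS2. At the cluster level, the totals are
# 4.0 vs. 3.2 GB/s because the DS2 cluster has four times as many nodes.
ratio = clusters["ra3.4xlarge"]["io_per_node"] / clusters["ds2.xlarge"]["io_per_node"]
print(f"Per-node I/O ratio: {ratio:.0f}x")
```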

The benchmark results clearly indicate that RA3 delivers significantly higher I/O throughput than DS2.

From this benchmarking exercise, we observe that:

- The Read IOPS of the ra3.4xlarge cluster was 220 to 250 percent better than that of the ds2.xlarge cluster for concurrent user tests.

- The Write IOPS of the ra3.4xlarge cluster was 140 to 150 percent better than that of the ds2.xlarge cluster for concurrent user tests.

Figure 3 – I/O performance metrics: Read IOPS (higher is better); Write IOPS (higher is better).

Other Key Observations

Disk Utilization

The average disk utilization for the RA3 instance type remained below 2 percent for all tests. Its peak utilization almost doubled for the concurrent users tests, reaching 2.5 percent. In comparison, DS2’s average utilization remained at 10 percent for all tests, and its peak utilization almost doubled for the concurrent users tests, reaching 20 percent.

Temporary space usage almost doubled for both RA3 and DS2 during the concurrent user tests.

Figure 4 – Disk utilization: RA3 (lower is better); DS2 (lower is better).

Read and Write Latency

We also compared read and write latency. The read latency of ra3.4xlarge showed a 1,000 percent improvement over ds2.xlarge, and its write latency showed a 300 to 400 percent improvement.

This improved read and write latency contributes to better query performance. The graph below compares read and write latency for concurrent users. RA3’s read and write latency is lower than DS2’s across both single-user and concurrent-user tests.

Figure 5 – Read and write latency: RA3 cluster type (lower is better).

Concurrency Scaling

For the single-user and five-concurrent-user tests, concurrency scaling did not kick in on either cluster. It kicked in on both the RA3 and DS2 clusters for the 15-concurrent-user test.

Figure 6 – Concurrency scaling active clusters (for two iterations) – RA3 cluster type.

We observed that scaling was stable and consistent on RA3, holding steady at one cluster. On DS2, however, it peaked at two clusters and scaled in and out frequently (eager scaling).

Figures 6 and 7 depict concurrency scaling across the test’s two iterations on the RA3 and DS2 clusters, respectively.

Figure 7 – Concurrency scaling active clusters (for two iterations) – DS2 cluster type.

Workload Concurrency

The workload concurrency test was executed with the following manual WLM settings:

- Queue: Default

- Concurrency on main: 10

- Concurrency auto scaling: Auto

- Short query acceleration: Enabled

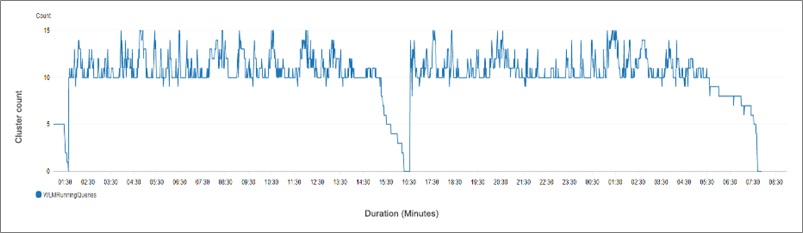

On the RA3 cluster, the number of concurrently running queries remained at 15 for most of the test execution, because concurrency scaling was stable and consistent during the tests.

Total concurrency scaling usage was 97.95 minutes across the two iterations.

Figure 8 – WLM running queries (for two iterations) – RA3 cluster type.

On the DS2 cluster, however, the number of concurrently running queries fluctuated between 10 and 15 and reached 15 only briefly. This can be attributed to the intermittent concurrency scaling behavior described in the Concurrency Scaling section above.

Total concurrency scaling usage was 121.44 minutes across the two iterations.

Figure 9 – WLM running queries (for two iterations) – DS2 cluster type.
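Concurrency scaling usage can also be read from the cluster's system tables. The sketch below includes a query against the `SVCS_CONCURRENCY_SCALING_USAGE` view, which, as we understand it, records a `usage_in_seconds` value for each usage period. Verify the column names against the system table reference for your cluster version. The SQL is meant to be run through your own SQL client, and the helper name is hypothetical. The script then compares the two totals reported above.

```python
# Run this in a SQL client connected to each cluster to total scaling usage.
USAGE_SQL = """
SELECT SUM(usage_in_seconds) / 60.0 AS scaling_minutes
FROM svcs_concurrency_scaling_usage;
"""


def compare_scaling_minutes(ra3_minutes: float, ds2_minutes: float) -> tuple[float, float]:
    """Return (extra minutes DS2 used, DS2 usage as a percent of RA3 usage)."""
    extra = ds2_minutes - ra3_minutes
    pct = 100 * ds2_minutes / ra3_minutes
    return round(extra, 2), round(pct, 1)


# Totals for the two iterations of the 15-user test, as reported in this post.
print(compare_scaling_minutes(97.95, 121.44))  # (23.49, 124.0)
```

In other words, DS2 consumed about 24 percent more concurrency scaling time than RA3 for the same workload.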

Conclusion

This post can help AWS customers see data-backed benefits offered by the RA3 instance type. As a result of choosing the appropriate instance, your applications can perform better while also optimizing costs.

Based on Agilisium’s observations of the test results, we conclude that the newly introduced RA3 node type consistently outperforms DS2 across all test parameters and provides better price performance (2x performance improvement).

We highly recommend that customers running on DS2 instance types migrate to RA3 instances at the earliest opportunity for better performance and cost benefits.

Agilisium – AWS Partner Spotlight

Agilisium is an AWS Advanced Consulting Partner and big data and analytics company with a focus on helping organizations accelerate their “data-to-insights leap.”
