AWS Partner Network (APN) Blog

Dremio Cloud is a Lakehouse Platform on AWS That Democratizes Data

By Tomer Shiran, Founder and CPO – Dremio

By Shashi Raina, Partner Solutions Architect – AWS


Organizations today need to become data-driven urgently, because competitors aren’t waiting and customers expect a digital-first experience.

However, it’s difficult to be data-driven when you constantly need to move data around. Traditional data architectures that require you to extract, transform, load (ETL), and copy data into and out of data warehouses and maintain extracts and cubes for performance are brittle, hinder data democratization, and don’t scale as your data grows.

It’s time to challenge this paradigm and shift to an open data architecture that unlocks new use cases and future-proofs your data-driven organization.

Dremio Cloud is a cloud lakehouse platform on Amazon Web Services (AWS) that democratizes data and provides self-service access to data consumers by connecting business intelligence (BI) users and analysts directly to data on Amazon Simple Storage Service (Amazon S3) and beyond.

With Dremio Cloud, you can use Amazon S3 as your lakehouse and run workloads ranging from ad-hoc analytics to mission-critical BI, while other engines handle ETL and streaming on the same data in Amazon S3.

In this post, you’ll learn about the benefits of Dremio Cloud and how to set it up and start using Dremio’s high-performance lakehouse platform in less than 15 minutes. We’ll review Dremio Cloud’s key features and walk through a getting-started tutorial with sample datasets.

Dremio Corporation is an AWS Data and Analytics Competency Partner.

Dremio Overview and Key Features

Dremio Cloud delivers Dremio’s sub-second query acceleration, self-service semantic layer, and seamless integration with your favorite BI tools in a fully managed service.

Key features of Dremio Cloud include:

- Fully managed and frictionless SaaS: With Dremio Cloud, you don’t have to install and manage software or think about versions and upgrades. You bring your data and queries, and Dremio takes care of the rest. You pay only for what you consume.

- Limitless elasticity and concurrency: Dremio Cloud scales automatically to meet your workload requirements, whether you’re running one query a day or a thousand queries per second.

- Global control plane: The control plane, a multi-tenant, always-on service, enables global query planning, routing, and management. It provides a single pane of glass for end-to-end data management.

- Execution plane: The control plane automatically provisions physically isolated, auto-scaling execution engines, and workloads are isolated by project. Workloads can be routed to specific engines to meet query response-time targets.

- Data ownership: Dremio Cloud gives you full ownership of your data, which is important from both a cost standpoint and a strategic perspective.

- Enterprise-grade security: Dremio Cloud provides security controls at multiple levels, including the organization, cloud, project, and data entity levels.

For additional information about Dremio Cloud, read more in the data sheet or watch this short video.

Dremio Cloud Architecture

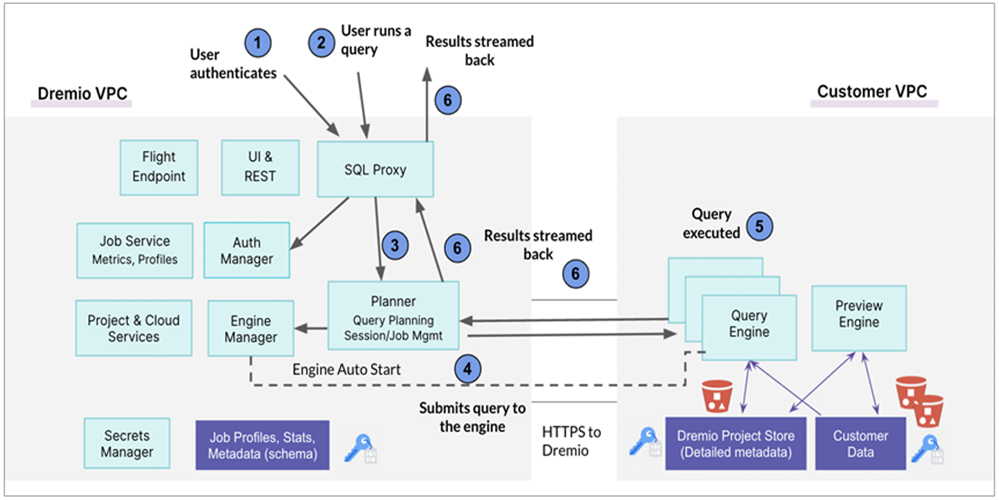

Dremio Cloud’s functions are divided between Dremio’s virtual private cloud (VPC) and the customer’s VPC. Dremio’s VPC acts as the control plane, while the customer’s VPC acts as an execution plane. If the customer uses multiple cloud accounts with Dremio Cloud, each account’s VPC acts as an execution plane.

Figure 1 – Dremio Cloud architecture.

Dremio Cloud’s Global Control Plane

The Dremio Cloud control plane serves as the single pane of glass for you to manage your Dremio Cloud deployment, including users, security, and integrations. A control plane makes data management easier, especially for global companies with large data footprints.

The control plane hosts Dremio Cloud’s web interface and client endpoints (including JDBC, ODBC, REST, and Arrow Flight); manages the lifecycle (including provisioning, scaling, and quiescing) of the compute engines that reside in customer VPCs; and receives, plans, and coordinates the execution of queries.

Customers can use the Dremio Cloud control plane to define their organization’s semantic layer, create isolated compute engines so that different workloads across the organization run without resource contention, manage auto-scaling policies for their engines to minimize compute costs, and monitor all jobs running on their system.

The Dremio Cloud control plane is a multi-tenant, always-on service hosted within a Dremio-managed cloud account. Dremio currently maintains two control planes for data security: one in the United States, and one in Europe.
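Because every client request goes through a regional control plane, a script has to know which one to address. The Python sketch below builds, without sending, a request that submits SQL to a project through the REST endpoint of the chosen control plane. The base URLs, the `/v0/projects/{project_id}/sql` path, and the use of a personal access token as a bearer token are assumptions to check against the Dremio Cloud API reference; `build_sql_request` is a hypothetical helper.

```python
import json
import urllib.request

# ASSUMPTION: base URLs of the US and EU control planes' REST APIs.
# Check the Dremio Cloud API reference before relying on these values.
CONTROL_PLANE_URLS = {
    "us": "https://api.dremio.cloud",
    "eu": "https://api.eu.dremio.cloud",
}


def build_sql_request(region: str, project_id: str, token: str,
                      sql: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that submits SQL to a project.

    The /v0/projects/{project_id}/sql path and the bearer-token scheme are
    assumptions about the Dremio Cloud REST API, not verified here.
    """
    try:
        base_url = CONTROL_PLANE_URLS[region]
    except KeyError:
        raise ValueError(f"unknown control plane region: {region!r}") from None
    return urllib.request.Request(
        url=f"{base_url}/v0/projects/{project_id}/sql",
        data=json.dumps({"sql": sql}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned request to `urllib.request.urlopen` would send it. An organization that signed up through the European control plane would pass `"eu"` so that its requests stay on that control plane.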

The Execution Plane

The Dremio Cloud execution plane consists of one or more compute engines per subnet and resides in the customer’s VPC.

Dremio Cloud provisions engines automatically as needed to execute queries. For example, on AWS the Dremio Cloud control plane deploys compute engines as Amazon Elastic Compute Cloud (Amazon EC2) instances within the customer’s VPC. Customer data, as well as the metadata for Dremio Cloud projects, is stored in the customer’s AWS account.

Dremio Cloud also automatically scales your compute engines, so you don’t need to scale servers manually to meet your workload requirements. Define auto-scaling policies for your engines in the control plane, and Dremio handles the rest.
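To make the idea of an auto-scaling policy concrete, the following Python sketch computes how many replicas an engine should run for a given number of active queries, clamped between a minimum and a maximum. The `ScalingPolicy` fields and the queries-per-replica rule are hypothetical and do not describe how Dremio Cloud actually sizes engines; they only illustrate why policy bounds matter for cost.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingPolicy:
    """Hypothetical policy: replica bounds and target load per replica."""
    min_replicas: int
    max_replicas: int
    queries_per_replica: int

    def __post_init__(self) -> None:
        if not 0 <= self.min_replicas <= self.max_replicas:
            raise ValueError("require 0 <= min_replicas <= max_replicas")
        if self.queries_per_replica <= 0:
            raise ValueError("queries_per_replica must be positive")


def desired_replicas(policy: ScalingPolicy, active_queries: int) -> int:
    """Replicas needed for the current load, clamped to the policy bounds."""
    needed = math.ceil(active_queries / policy.queries_per_replica)
    return max(policy.min_replicas, min(policy.max_replicas, needed))
```

A minimum of zero lets an idle engine scale down completely, similar in spirit to the quiescing the control plane performs, while the maximum caps compute spend during load spikes.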

To learn more about how queries are executed, please refer to the Dremio documentation.

Getting Started with Dremio Cloud

To get started, first ensure you have your own AWS account. This post assumes you have the required permissions to configure Dremio and create AWS resources in your account.

We will create a Dremio organization, which is connected to your AWS account, and run a query on a large dataset in a sample Amazon S3 bucket without copying any data.

These are the steps in this procedure:

- Create a Dremio organization.

- Connect your AWS account to Dremio using AWS CloudFormation.

- Connect to sample data and prepare your dataset.

- Run your first query on the sample dataset.

If you need help along the way, documentation is available here.

Step 1: Create a Dremio Organization

Go to the Dremio signup page. Users with data in European AWS regions can sign up through the European Dremio control plane.

Create an organization by entering your email and creating a password, or sign up with a social identity provider.

Verify your email address.

Step 2: Connect your AWS Account to Dremio Using CloudFormation

Once you have verified your email, connect your AWS account to Dremio through the Dremio onboarding wizard, which will launch an AWS CloudFormation stack in your AWS account.

The CloudFormation template creates:

- An Amazon S3 bucket that will be used as the project metadata store.

- A cross-account AWS Identity and Access Management (IAM) role required to access the project store.

- Cross-account IAM roles required for Dremio to create engines (EC2 instances).
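Cross-account roles like these rely on an IAM trust policy that lets principals in one AWS account assume a role in another. The Python sketch below generates a generic version of that pattern, using `sts:AssumeRole` with an external ID condition. It is not the policy in Dremio’s CloudFormation template, which may differ; the account ID and external ID are placeholders, and `cross_account_trust_policy` is a hypothetical helper.

```python
import json


def cross_account_trust_policy(trusted_account_id: str, external_id: str) -> dict:
    """Return a trust policy that lets another account assume a role."""
    # AWS account IDs are exactly 12 ASCII digits.
    if not (len(trusted_account_id) == 12 and trusted_account_id.isascii()
            and trusted_account_id.isdigit()):
        raise ValueError(f"not a 12-digit AWS account ID: {trusted_account_id!r}")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # The trusted account's root principal delegates to its own IAM
                # users and roles, which still need sts:AssumeRole permission.
                "Principal": {"AWS": f"arn:aws:iam::{trusted_account_id}:root"},
                "Action": "sts:AssumeRole",
                # The external ID guards against the "confused deputy" problem.
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder values only; these are not Dremio's account or external ID.
    print(json.dumps(cross_account_trust_policy("111111111111", "example-external-id"), indent=2))
```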

Provide a stack name, along with the VPC and subnet (private or public) in which Dremio will provision the EC2 instances that run your Dremio workloads.

After the stack completes, which takes around six minutes, Dremio creates a project within your organization that is linked to this account and to the project store S3 bucket.
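If you would rather watch the stack from a script than from the CloudFormation console, the Python sketch below polls `describe_stacks` until the stack reaches a terminal status. It accepts any boto3-compatible CloudFormation client (for example `boto3.client("cloudformation")`), so it can be exercised with a stub. The polling interval and timeout are arbitrary assumptions, the stack name is whatever you entered in the wizard, and `wait_for_stack` is a hypothetical helper.

```python
import time
from typing import Callable

# CloudFormation statuses that end a stack creation. ROLLBACK_IN_PROGRESS is
# treated as a failure too, so the loop stops as soon as a rollback starts.
SUCCESS_STATUSES = {"CREATE_COMPLETE"}
FAILURE_STATUSES = {"CREATE_FAILED", "ROLLBACK_IN_PROGRESS",
                    "ROLLBACK_COMPLETE", "ROLLBACK_FAILED"}


def wait_for_stack(client, stack_name: str, poll_seconds: float = 30.0,
                   timeout_seconds: float = 1800.0,
                   sleep: Callable[[float], None] = time.sleep) -> str:
    """Poll describe_stacks until the stack succeeds, fails, or times out."""
    waited = 0.0
    while True:
        stack = client.describe_stacks(StackName=stack_name)["Stacks"][0]
        status = stack["StackStatus"]
        if status in SUCCESS_STATUSES:
            return status
        if status in FAILURE_STATUSES:
            raise RuntimeError(f"stack {stack_name!r} failed: {status}")
        if waited >= timeout_seconds:
            raise TimeoutError(f"stack {stack_name!r} still {status} after {waited:.0f}s")
        sleep(poll_seconds)
        waited += poll_seconds
```

In practice, boto3’s built-in waiter, `client.get_waiter("stack_create_complete").wait(StackName=...)`, does the same job; the explicit loop only shows which statuses matter.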

Step 3: Connect to Sample Data and Prepare Your Dataset

Now that you’ve created a Dremio organization that’s linked to your AWS account, you will connect Dremio to your Amazon S3 data lake storage and create your physical dataset.

While on the Dremio home screen, navigate to the bottom left corner of the page and click the + icon next to Data Lakes. A pop-up window will appear with the list of data lake sources you can connect to.

Click on Sample Source, which will create a live connection to an S3 bucket with sample data for you to explore. Once this is complete, you’ll see Samples under your data lake connections.

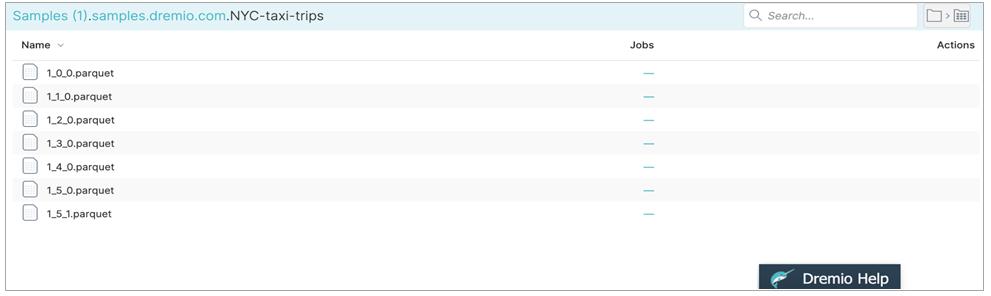

Navigate to the NYC-taxi-trips folder by clicking Samples > samples.dremio.com > NYC-taxi-trips. In the NYC-taxi-trips folder, you will see a series of Parquet files.

Figure 2 – View the Parquet files that comprise the sample dataset.

These Parquet files comprise a dataset containing information about taxi trips that occurred in New York City over the course of a few years. You can analyze these files as a single dataset by promoting them to a physical dataset for Dremio to query.
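Promoting the folder tells Dremio to treat every Parquet file under it as one table. To see which objects make up that table, the following Python sketch lists the files under an S3 prefix with boto3’s `list_objects_v2` paginator. The bucket name `samples.dremio.com`, the `NYC-taxi-trips/` prefix, the `.parquet` suffix, and your ability to list that bucket with your own credentials are all assumptions inferred from the folder names in the Dremio UI. `list_parquet_files` is a hypothetical helper and does not replicate Dremio’s format detection.

```python
def list_parquet_files(s3_client, bucket: str, prefix: str,
                       suffix: str = ".parquet") -> list[tuple[str, int]]:
    """Return (key, size_in_bytes) for every object under prefix ending in suffix."""
    files = []
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket, Prefix=prefix):
        # Pages with no matching objects omit the "Contents" key entirely.
        for obj in page.get("Contents", []):
            if obj["Key"].endswith(suffix):
                files.append((obj["Key"], obj["Size"]))
    return files
```

With credentials configured, `list_parquet_files(boto3.client("s3"), "samples.dremio.com", "NYC-taxi-trips/")` would return each matching file’s key and size, assuming the bucket is readable from your account.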

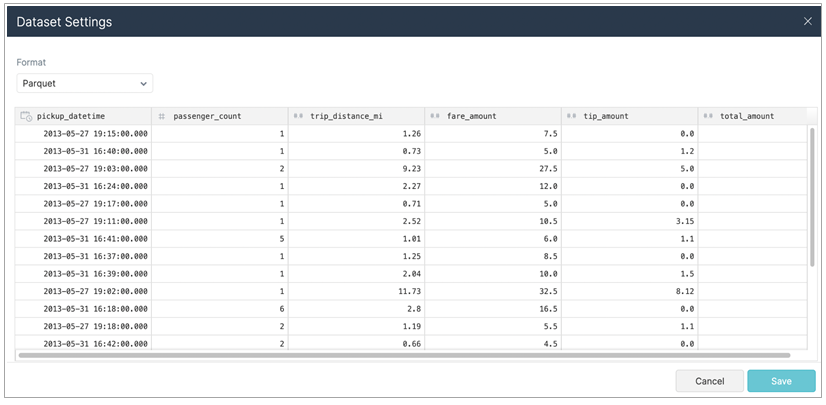

In the top-right corner of the screen, click the folder icon to create a dataset for Dremio to query. Dremio automatically recognizes the data as Parquet and creates a table from the Parquet files. Click Save.

Figure 3 – Promote your Parquet files into a single physical dataset you can query with Dremio.

Once you click Save, you’ll be automatically brought to the SQL Runner so you can query your dataset.

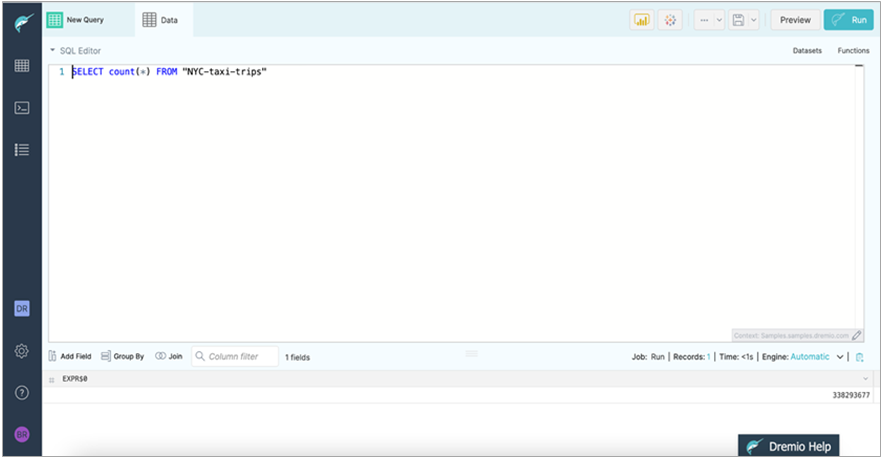

Figure 4 – Easily query your data using the SQL Runner.

Step 4: Run Your First Query on the Sample Dataset

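To query this data, enter the following in the SQL Runner:

```sql
SELECT count(*) FROM "NYC-taxi-trips"
```

If the SQL Runner’s context is not set to the folder that contains the dataset, reference it by its full path instead: `Samples."samples.dremio.com"."NYC-taxi-trips"`.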

Click the Run button.
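The same query can also be submitted programmatically. The Python sketch below submits SQL, polls the resulting job until it reaches a terminal state, and fetches the first page of results. The endpoint paths, the response fields (`id`, `jobState`, `errorMessage`, `rows`), and the set of terminal states are assumptions modeled on the Dremio Cloud REST API; `run_query` and the injected `fetch_json` transport are hypothetical, and `fetch_json` is left to you so the sketch stays independent of any HTTP library.

```python
import time
from typing import Callable, Optional

# ASSUMPTION: terminal job states reported by the Dremio Cloud jobs API.
TERMINAL_STATES = {"COMPLETED", "FAILED", "CANCELED"}

# fetch_json(method, path, body) sends an authenticated request to the
# control plane and returns the decoded JSON response as a dict.
FetchJson = Callable[[str, str, Optional[dict]], dict]


def run_query(fetch_json: FetchJson, project_id: str, sql: str,
              poll_seconds: float = 1.0, max_polls: int = 600,
              sleep: Callable[[float], None] = time.sleep) -> list:
    """Submit SQL, wait for the job to finish, and return its first rows."""
    base = f"/v0/projects/{project_id}"
    job_id = fetch_json("POST", f"{base}/sql", {"sql": sql})["id"]
    for _ in range(max_polls):
        job = fetch_json("GET", f"{base}/job/{job_id}", None)
        state = job["jobState"]
        if state in TERMINAL_STATES:
            break
        sleep(poll_seconds)
    else:
        # The loop ran out of polls without seeing a terminal state.
        raise TimeoutError(f"job {job_id} did not finish after {max_polls} polls")
    if state != "COMPLETED":
        raise RuntimeError(f"job {job_id} ended in {state}: {job.get('errorMessage', '')}")
    results = fetch_json("GET", f"{base}/job/{job_id}/results?offset=0&limit=100", None)
    return results["rows"]
```

Injecting `fetch_json` and `sleep` keeps the polling logic testable with stubs; a real `fetch_json` would prepend the control plane’s base URL and attach your access token.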

Figure 5 – Run your first query on the sample dataset.

Congratulations! You’ve now set up Dremio Cloud and have run your first query on data that lives in Amazon S3.

Where to next? Here are a few things that you might want to try:

- Run more SQL queries on this sample dataset: Keep using the SQL Runner to run as many queries on this dataset as you like.

- Connect to your own data sources: Beyond the sample data we’ve provided, you can also connect to your own Amazon S3 and AWS Glue sources. Dremio works closely with numerous AWS service teams, including the Amazon S3 and AWS Glue teams, to make analyzing data in those services as easy as possible.

Figure 6 – Connect to your Amazon S3 account.

- Configure more engines: Dremio Cloud lets you set up multiple physically isolated engines so you can handle different workloads across your organization without worrying about resource contention. You can manage engines, their scaling rules, and engine routing rules through the Dremio Cloud control plane.
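Engine routing can be thought of as an ordered list of rules in which the first matching rule picks the engine. The Python sketch below models that idea with plain predicates. The `Query` fields, the rule format, and the engine names `bi-engine` and `batch-engine` are hypothetical; Dremio Cloud defines routing rules in the control plane with its own condition syntax, which this sketch does not reproduce.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Query:
    """Hypothetical view of a submitted query, as seen by the router."""
    user: str
    sql: str
    tags: frozenset[str] = frozenset()


# A hypothetical rule pairs a predicate with the name of a target engine.
Rule = tuple[Callable[[Query], bool], str]


def route(query: Query, rules: list[Rule], default_engine: str) -> str:
    """Return the engine of the first matching rule, else the default engine."""
    for matches, engine in rules:
        if matches(query):
            return engine
    return default_engine


RULES: list[Rule] = [
    (lambda q: "dashboard" in q.tags, "bi-engine"),       # interactive BI traffic
    (lambda q: q.user.startswith("etl_"), "batch-engine"),  # service accounts
]
```

First-match ordering means more specific rules should come first, and the default engine catches everything that no rule claims.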

Conclusion

Dremio Cloud enables you to run mission-critical BI and analytics directly on Amazon S3, resulting in a simpler and more streamlined data architecture that greatly reduces time to value while enhancing data security and eliminating vendor lock-in.

Dremio Cloud’s unique architecture provides infinite scale and limitless concurrency through a cost-efficient, fully-managed service, while ensuring you maintain full purview and ownership of your organization’s data.

Sign up for Dremio Cloud today, and start delivering meaningful insights on your Amazon S3 data lake in minutes.

Dremio – AWS Partner Spotlight

Dremio is an AWS Data and Analytics Competency Partner that provides a cloud lakehouse platform on AWS that democratizes data and provides self-service access to data.
