AWS Partner Network (APN) Blog

Enabling Tiering and Throttling in a Multi-Tenant Amazon EKS SaaS Solution Using Amazon API Gateway

By Ranjith Raman, Sr. Partner Solutions Architect – AWS

By Peter Yang, Sr. Partner Solutions Architect – AWS

Software-as-a-service (SaaS) solutions often rely on a multi-tenant model where some or all of a system’s resources are shared. In these environments, you often have to be concerned about noisy neighbor conditions, where the load of one tenant can adversely impact the performance experienced by other tenants.

Every SaaS architecture must introduce mechanisms and policies that prevent noisy neighbor conditions. Getting these policies right is essential to building a robust SaaS solution that delivers a consistent experience to customers.

This post looks at the different strategies that can be used to introduce the throttles (transaction rate) and quotas (transaction volume) that manage each tenant’s activity, exploring the various AWS services that can be used to bring these concepts to life.

The concepts covered here are part of a full working solution that outlines the various strategies we discuss in this post. The GitHub repo for this solution can be found here.

Why Throttling is Important for a SaaS Solution

SaaS providers introduce throttling policies for a variety of reasons. Operationally, they can be used to help ensure no single tenant can put load on the system in a way that impacts the performance and experience of other tenants. They can also be part of a business strategy where your solution defines throttles and quotas. This allows the SaaS provider to create different tiering strategies that offer different experiences to a range of customers and personas.

These options for tiers and pricing allow customers to choose the right solution for their needs and budget. The ability to forecast transaction rate and volume helps SaaS providers keep the cost of supporting infrastructure aligned with customer needs. More importantly, applying throttling policies ensures tenants can’t do something—at any tier—that could impact the availability and stability of your SaaS environment.

High-Level Architecture

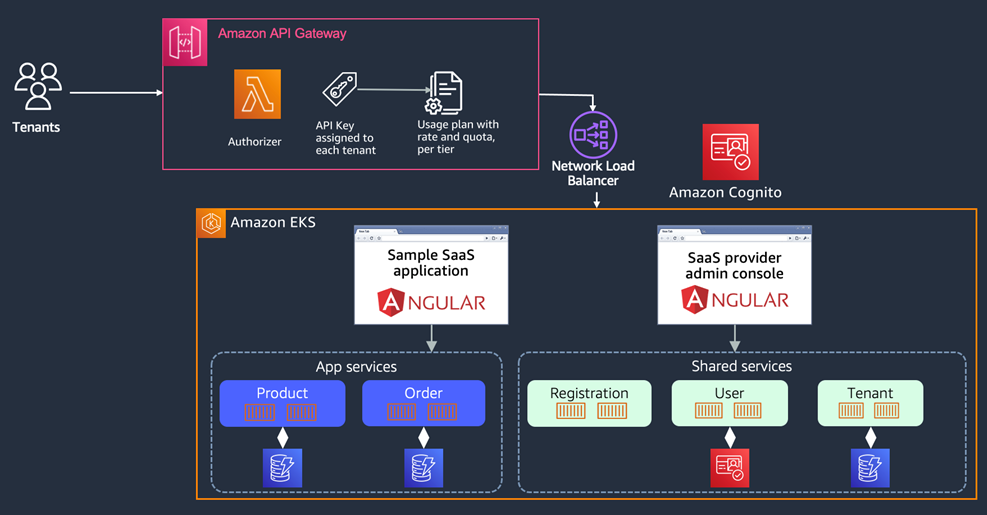

Before we dive into the details of our sample solution, let’s look at the high-level elements of the architecture that are employed by this environment. Below, you’ll see the different layers that are part of the EKS SaaS solution.

Figure 1 – High-level architecture.

The solution consists of an Amazon Elastic Kubernetes Service (Amazon EKS) cluster hosting the various microservices of our SaaS environment. We have a SaaS provider application that provides a user interface (UI) that allows SaaS admins to deploy new tenants and look at the details of already deployed tenants. We also provision a UI application for SaaS tenants to log in, along with their corresponding microservices that are part of our application plane.

Our core, or shared services, are part of the SaaS control plane which provides the horizontal, cross-cutting services used across our SaaS environment. They’re not specific to any one tenant, but rather provide the support infrastructure for onboarding new tenants and the management and monitoring of those tenants. Here, you’ll see we have Registration, Tenant Management, and User Management microservices deployed into our cluster.

The microservices in this architecture all run within the same Amazon EKS cluster. While it’s possible for an EKS cluster to have limits defined on compute resources such as CPU and memory, the workload request must reach the cluster before EKS can determine whether there is sufficient CPU or memory to allocate.

In our architecture diagram, you’ll notice we have introduced Amazon API Gateway. This allows us to apply throttling to requests before they reach the EKS cluster. It also gives us a way to manage the flow of requests to the cluster and manage/evaluate activity on a per-tenant basis.

Amazon API Gateway is a fully managed service that makes it easy to expose RESTful APIs that act as the “front door” of an application that exposes data and functionality. In addition to exposing RESTful APIs, Amazon API Gateway provides the mechanisms you’ll need to enforce throttles and quotas with usage plans and API keys:

- A usage plan controls which APIs and methods are accessible and defines the target request rate and quota for each API and method. You can use a usage plan to configure throttling and quota limits, which are enforced on individual client API keys.

- An API key is an alphanumeric string that Amazon API Gateway uses to identify a requestor who uses your REST or WebSocket API. API Gateway can generate API keys on your behalf, or you can import them from a CSV file. You can use API keys together with AWS Lambda authorizers or usage plans to control access to your APIs.

A usage plan uses API keys to identify the client and determine access. While API keys are traditionally focused on authorizing access to resources, in our example we’ll be leveraging API keys to map a tenant to a given usage plan that implements our tiering strategy. A separate usage plan will be assigned to each tenant tier, providing different throttling policies based on the tenant’s designated tier.
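To make the one-usage-plan-per-tier idea concrete, here is a minimal sketch of how a tier catalog might be modeled. The tier names and all rate, burst, and quota values are hypothetical (the post does not publish the values it uses); `to_usage_plan_kwargs` only produces the `throttle` and `quota` arguments in the shape that boto3’s `apigateway` `create_usage_plan` call accepts, and leaves out `apiStages`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPlan:
    usage_plan_name: str  # hypothetical name of the usage plan for this tier
    rate_limit: float     # steady-state requests per second
    burst_limit: int      # maximum requests accepted in a single burst
    quota_limit: int      # requests allowed per quota period
    quota_period: str     # "DAY", "WEEK", or "MONTH"

    def to_usage_plan_kwargs(self) -> dict:
        """Throttle and quota arguments shaped for boto3's create_usage_plan."""
        return {
            "name": self.usage_plan_name,
            "throttle": {"rateLimit": self.rate_limit, "burstLimit": self.burst_limit},
            "quota": {"limit": self.quota_limit, "period": self.quota_period},
        }


# Hypothetical tier catalog: every tenant in a tier shares these settings,
# but each tenant's own API key gets its own allocation of them.
TIER_PLANS = {
    "basic": TierPlan("basic-tier", 5, 10, 10_000, "DAY"),
    "standard": TierPlan("standard-tier", 50, 100, 100_000, "DAY"),
    "premium": TierPlan("premium-tier", 500, 1_000, 1_000_000, "DAY"),
}


def plan_for_tenant(tenant_tier: str) -> TierPlan:
    """Return the usage plan settings a tenant's API key should be attached to."""
    try:
        return TIER_PLANS[tenant_tier.lower()]
    except KeyError:
        raise ValueError(f"unknown tier: {tenant_tier!r}") from None
```

Keeping the catalog in one place means onboarding only needs the tenant’s tier to decide which usage plan its API key joins.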

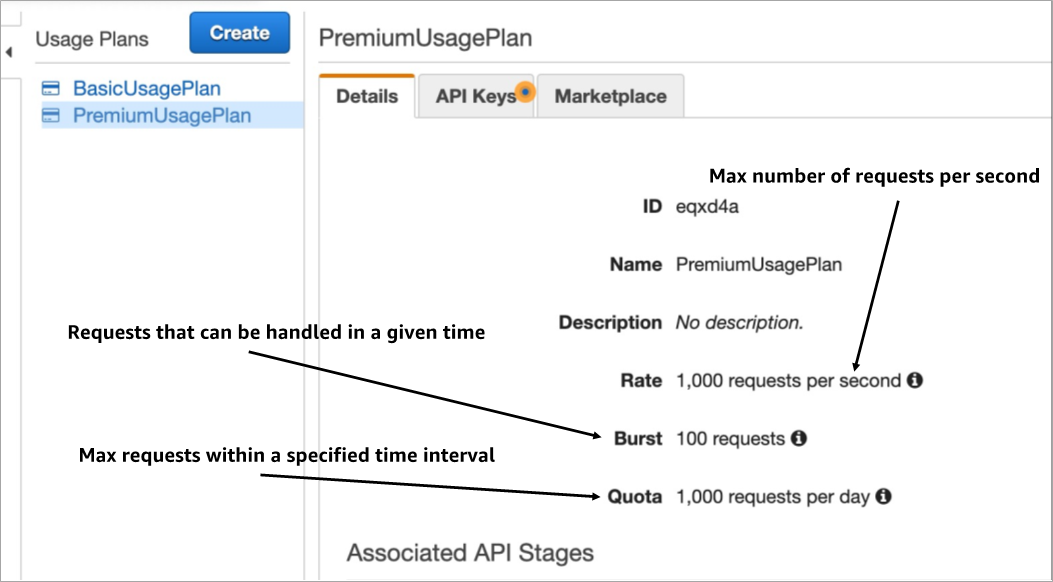

Configuring Usage Plans

Now that we’ve had an introduction to usage plans and API keys, let’s take a look at how to configure them and start using them in our requests. Below, you can see Rate, Burst, and Quota as options when creating a usage plan.

Figure 2 – Rate, Burst, and Quota definitions in a usage plan.

- Rate is the maximum number of requests per second. With rates configured for a usage plan, we can control how fast requests can be submitted by a particular customer, and we can set different values for different tiers. For example, a basic tier may only be used by end users logging in to the SaaS application, so its rate can be set lower. Premium tiers may have use cases that require higher request rates, so their rate can be set to a higher level.

- Burst represents the target maximum number of concurrent requests Amazon API Gateway will allow. This parameter affects concurrent users and requests. For a basic tier, we may only want to allow a certain number of concurrent users on the system. We can configure burst to have a limit of 10 requests, which means Amazon API Gateway will only allow 10 concurrent requests. If a basic tier customer has too many users in the system, it’s likely some of the users will be throttled because the number of concurrent requests exceeds the burst value. For premium tier customers, this parameter can have a much higher value to accommodate more users and API integrations.

- Quota is a third parameter that can be used as part of the throttling strategy. It limits how many requests can be submitted in a defined window, such as a day, week, or month. With this parameter configured, the tenants associated with an API key are limited in the number of interactions allowed with the system.
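API Gateway documents its throttling as a token bucket algorithm: rate is how fast tokens refill and burst is the bucket size. The sketch below is a simplified, single-process model of how rate, burst, and quota interact for one API key. It is not API Gateway’s implementation, which is distributed and enforces limits on a best-effort basis, and the choice to count only accepted requests against the quota is an assumption of this model.

```python
class TenantThrottle:
    """Simplified model of rate + burst (token bucket) plus a quota counter."""

    def __init__(self, rate: float, burst: int, quota: int):
        self.rate = rate            # tokens refilled per second
        self.burst = burst          # bucket capacity
        self.quota = quota          # requests allowed in the current quota window
        self.tokens = float(burst)  # the bucket starts full
        self.last = 0.0             # time of the last refill, in seconds
        self.used = 0               # accepted requests counted against the quota

    def allow(self, now: float) -> str:
        # Refill for the elapsed time, never beyond the burst capacity.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last = now
        # API Gateway answers both of the following cases with HTTP 429.
        if self.used >= self.quota:
            return "quota_exceeded"
        if self.tokens < 1:
            return "throttled"
        self.tokens -= 1
        self.used += 1
        return "allowed"


# A hypothetical basic-tier key: 1 request/second, burst of 3, quota of 5.
key = TenantThrottle(rate=1, burst=3, quota=5)
first_burst = [key.allow(0.0) for _ in range(4)]   # burst drained, 4th throttled
two_seconds_later = [key.allow(2.0) for _ in range(3)]  # 2 tokens refilled, then quota hit
```

In this run the fourth request at `t=0` is throttled because the burst is spent; two seconds later two tokens have refilled, and the third request fails on the quota rather than the rate.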

Using API Keys

In our solution, we’ve picked a strategy that requires a unique API key for each tenant. This API key is created during our tenant onboarding process. Once the key is created, it’s stored in Amazon DynamoDB as part of the tenant record. The value is also attached to each user in our identity provider (in this case, Amazon Cognito).

Each time a user authenticates, the identity provider creates an encoded JSON Web Token (JWT) that includes this API key. The client application can extract this value from the JWT and include the key in the HTTP request to Amazon API Gateway.
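A client can read a claim from a JWT by base64url-decoding the token’s middle (payload) segment. The sketch below assumes the claim is named `custom:api-key`, which is hypothetical; Cognito does prefix custom attributes with `custom:` and includes them in the ID token. The decode deliberately skips signature verification, which is acceptable on the client only for reading values; the server side must still verify the token.

```python
import base64
import json


def read_claims(jwt: str) -> dict:
    """Decode a JWT payload WITHOUT verifying its signature (client-side use only)."""
    try:
        _header, payload, _signature = jwt.split(".")
    except ValueError:
        raise ValueError("not a JWT: expected three dot-separated segments") from None
    padded = payload + "=" * (-len(payload) % 4)  # JWTs strip base64 padding
    return json.loads(base64.urlsafe_b64decode(padded))


def api_key_from_token(jwt: str, claim: str = "custom:api-key") -> str:
    # "custom:api-key" is a hypothetical claim name for the tenant's API key.
    return read_claims(jwt)[claim]
```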

The image below provides an example of how an API key is passed as part of the request header for all requests to Amazon API Gateway, which uses the API key from the header to track usage and ensure the request is within the defined thresholds for rate, burst, and quota.

Figure 3 – API key part of the request header.

In this model, keep in mind that there’s a limit of 10,000 API keys per account per region. If you anticipate more than 10,000 tenants per region in a single AWS account, you could combine the solution covered in this post with another strategy, such as setting a threshold of tenants per account and creating separate AWS accounts to accommodate additional tenants.
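The per-account threshold strategy is simple arithmetic. In the sketch below, the threshold value (8,000 tenants per account, leaving headroom below the 10,000-key limit for any other keys in the account) and the fill-accounts-in-order placement are hypothetical choices, not part of the sample solution.

```python
API_KEYS_PER_ACCOUNT_REGION = 10_000  # limit cited in this post


def accounts_needed(tenant_count: int, tenants_per_account: int) -> int:
    """Accounts required in one region when each account holds at most tenants_per_account tenants."""
    if not 0 < tenants_per_account <= API_KEYS_PER_ACCOUNT_REGION:
        raise ValueError("threshold must be between 1 and the API key limit")
    # Integer ceiling division: a partially filled account still counts.
    return (tenant_count + tenants_per_account - 1) // tenants_per_account


def account_slot(tenant_number: int, tenants_per_account: int) -> int:
    """Zero-based account index for the Nth onboarded tenant, filling accounts in order."""
    return tenant_number // tenants_per_account
```

With a threshold of 8,000, 25,000 tenants need four accounts, and tenant number 8,000 (counting from zero) is the first to land in the second account.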

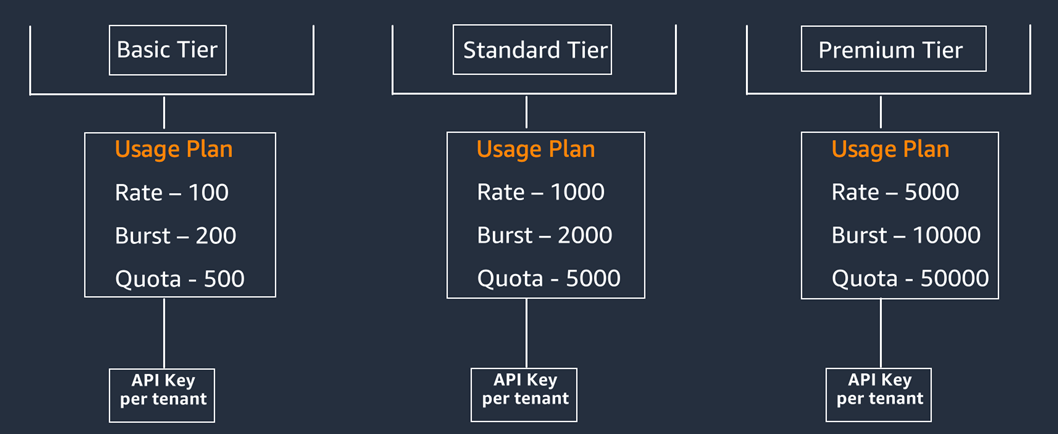

As you can see in Figure 4, we illustrate how a usage plan is associated with each tier of the SaaS solution. With an API key assigned to each tenant, every tenant gets their own allocation of the rate, burst, and quota values that have been configured within the usage plan for the tier associated with the tenant.

Figure 4 – Usage plan per tier and API key per tenant.

By placing Amazon API Gateway in front of the Amazon EKS cluster, each request is evaluated before it reaches the cluster, which minimizes unnecessary and unauthorized workload reaching the compute resources.

While the example provided in this post assigns a unique API key to each tenant, another implementation approach is to create one API key per tier and have all the tenants in that tier share it. If we opt not to configure a quota that limits the number of requests within a window, all tenants in the same tier could share an API key and the same rate and burst configuration.

While sharing an API key across all tenants in a tier means fewer API keys to manage, it also makes the solution more susceptible to the noisy neighbor effect. One tenant could saturate the system with requests and exhaust the shared rate and burst allowance, leaving other tenants unable to interact with the system.

Having a unique API key per tenant gives each tenant its own allocation of rate and burst, and it allows a quota to be enforced for each tenant. The trade-off is that there will be more API keys to manage.
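The noisy neighbor trade-off can be shown with a toy simulation in which every request arrives in the same instant, so only the burst capacity matters and no tokens refill. The tenant names, key names, and burst value are hypothetical.

```python
from collections import defaultdict


def simulate_burst(requests, burst, key_for):
    """Count accepted requests per tenant when all requests arrive at once.

    requests: tenant IDs in arrival order.
    burst:    capacity per API key (no refill within one instant).
    key_for:  maps a tenant ID to the API key that tenant sends.
    """
    remaining = defaultdict(lambda: burst)  # tokens left per API key
    accepted = defaultdict(int)             # accepted requests per tenant
    for tenant in requests:
        key = key_for(tenant)
        if remaining[key] > 0:
            remaining[key] -= 1
            accepted[tenant] += 1
    return dict(accepted)


# Tenant A sends 10 requests just before tenant B sends one.
traffic = ["tenant-a"] * 10 + ["tenant-b"]

# One key per tier: both tenants draw from the same bucket.
shared = simulate_burst(traffic, burst=10, key_for=lambda t: "basic-tier-key")
# One key per tenant: each tenant has its own bucket.
per_tenant = simulate_burst(traffic, burst=10, key_for=lambda t: f"{t}-key")
```

With a shared key, tenant A consumes the whole burst and tenant B’s request is rejected; with a key per tenant, tenant B’s request goes through.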

API Key Creation During Tenant Onboarding

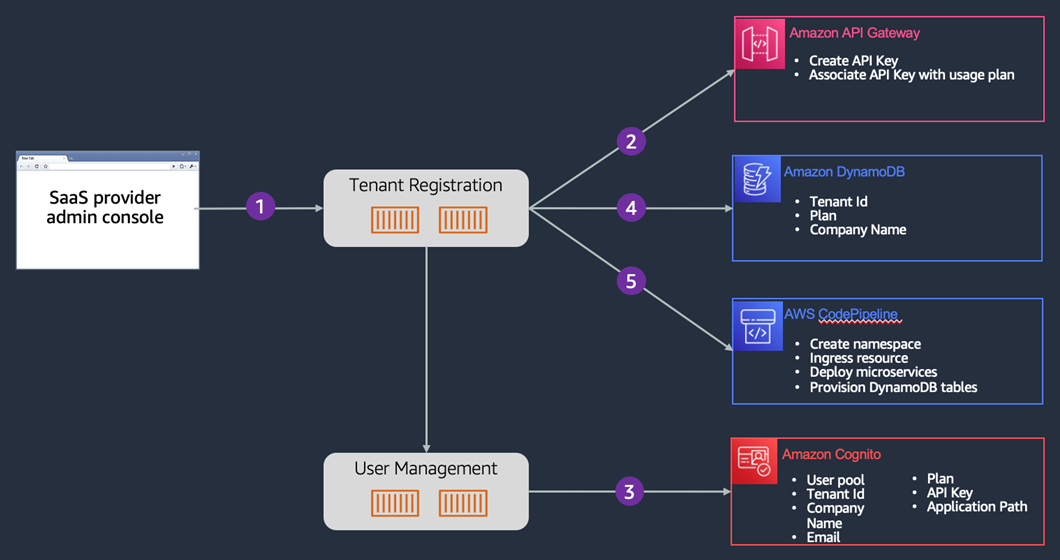

Creating and assigning an API key to a tenant is a big part of this experience. The diagram below provides an overview of the onboarding flow that illustrates how API keys get created during this process.

Figure 5 – Tenant onboarding.

In the diagram above, you’ll see the onboarding process starts with a SaaS admin filling out a sign-up form in the administration application (Step 1). When this form is submitted, the system invokes the tenant registration service. The registration service assumes responsibility for coordinating and ensuring all elements of the onboarding process are successfully created and configured.

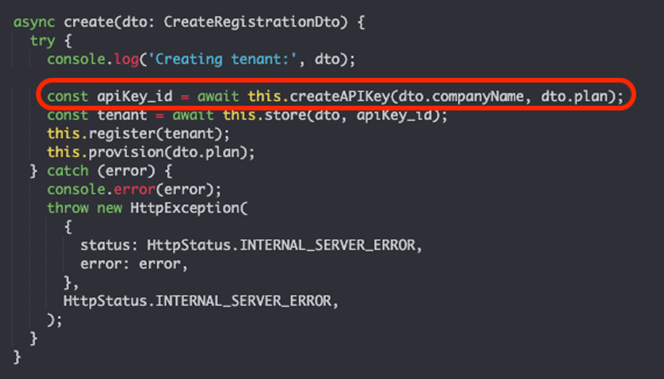

In Step 2, the registration service first creates an API key and associates it with a usage plan. Remember, a usage plan controls which APIs and methods are accessible and defines the target request rate and quota for each API and method.

Figure 6 – Creation of API key during registration.
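Step 2 maps onto two API Gateway calls: `create_api_key` and `create_usage_plan_key`. The sketch below is a hedged illustration, not the sample solution’s code. It takes the client as a parameter (in practice, `boto3.client("apigateway")`) so it can be exercised without AWS credentials; the key naming convention and the `tenant-id` tag are hypothetical.

```python
def create_tenant_api_key(apigw, tenant_id: str, usage_plan_id: str) -> dict:
    """Create a tenant's API key and attach it to its tier's usage plan.

    apigw is expected to behave like boto3.client("apigateway").
    """
    key = apigw.create_api_key(
        name=f"tenant-{tenant_id}",   # hypothetical naming convention
        enabled=True,
        tags={"tenant-id": tenant_id},
    )
    # Associating the key with the usage plan is what applies the tier's
    # rate, burst, and quota to this tenant.
    apigw.create_usage_plan_key(
        usagePlanId=usage_plan_id,
        keyId=key["id"],
        keyType="API_KEY",
    )
    # "id" identifies the key inside API Gateway; "value" is what clients send.
    return {"api_key_id": key["id"], "api_key_value": key["value"]}
```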

In Step 3, we provision a new Amazon Cognito User Pool (if required, depending on the tier) as well as add the first user for the tenant. As part of creating a new user, custom claims are populated with data about the tenant to assist with correlating users with their corresponding tenants to create a SaaS identity.
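Creating the tenant’s first user with tenant context attached might look like the sketch below, which uses the Cognito `admin_create_user` call. The client is passed in (in practice, `boto3.client("cognito-idp")`). The attribute names `custom:tenant-id`, `custom:tenant-tier`, and `custom:api-key` are hypothetical and would have to be defined in the user pool schema beforehand; using the email address as the username also assumes the pool is configured that way.

```python
def create_tenant_admin_user(cognito, user_pool_id: str, email: str,
                             tenant_id: str, tier: str, api_key_value: str) -> dict:
    """Create the tenant's first user with tenant context as custom attributes.

    cognito is expected to behave like boto3.client("cognito-idp").
    """
    attributes = {
        "email": email,
        "custom:tenant-id": tenant_id,      # hypothetical custom attribute
        "custom:tenant-tier": tier,         # hypothetical custom attribute
        "custom:api-key": api_key_value,    # hypothetical custom attribute
    }
    return cognito.admin_create_user(
        UserPoolId=user_pool_id,
        Username=email,
        UserAttributes=[{"Name": n, "Value": v} for n, v in attributes.items()],
    )
```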

The tenant registration service captures and stores the fundamental attributes of a tenant in a DynamoDB table, along with data from the creation of the user (Step 3) that’s essential to the authentication flow (user pool, AppId, etc.).
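A tenant record holding what the authentication flow later needs could be written as in this sketch. The table object is expected to behave like `boto3.resource("dynamodb").Table(...)`, and the attribute names are hypothetical. The condition expression makes the write fail rather than silently overwrite an existing tenant.

```python
def save_tenant_record(table, tenant_id: str, tier: str, api_key_id: str,
                       user_pool_id: str, app_client_id: str) -> dict:
    """Persist tenant attributes used later by the Lambda authorizer.

    table is expected to behave like boto3.resource("dynamodb").Table(...).
    """
    item = {
        "tenantId": tenant_id,          # hypothetical partition key name
        "tier": tier,
        "apiKeyId": api_key_id,
        "userPoolId": user_pool_id,     # needed to validate the tenant's tokens
        "appClientId": app_client_id,
    }
    # Refuse to overwrite a tenant that already exists.
    table.put_item(Item=item, ConditionExpression="attribute_not_exists(tenantId)")
    return item
```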

Request Authentication Flow

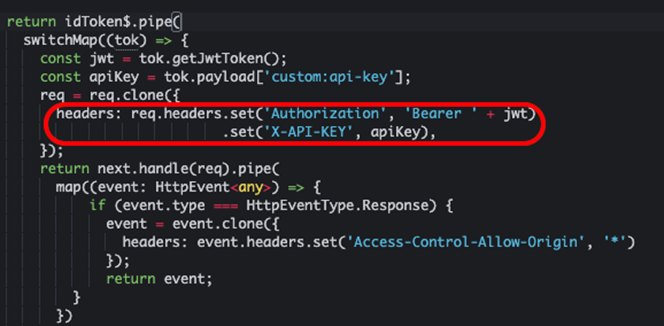

As shown in Figure 7, one of the custom claims we populate in Amazon Cognito is the API key associated with the tenant. An interceptor on the client side adds the X-API-KEY HTTP header and populates it with the value from Cognito’s custom claim.

Figure 7 – Associating API key with request header.
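The sample client’s interceptor runs in the browser. The Python sketch below only mirrors the idea: it wraps a hypothetical `send` transport function so every outgoing request carries the API key. The request dictionary shape is an assumption. HTTP header names are case-insensitive, and API Gateway reads the key from the `x-api-key` header when the API’s key source is `HEADER`.

```python
from typing import Any, Callable, Dict

Request = Dict[str, Any]  # hypothetical shape: {"url": ..., "method": ..., "headers": {...}}


def with_api_key(send: Callable[[Request], Any],
                 get_api_key: Callable[[], str]) -> Callable[[Request], Any]:
    """Wrap a transport function so each request includes the tenant's API key.

    get_api_key would read the custom claim from the signed-in user's token.
    """
    def intercepted(request: Request) -> Any:
        headers = dict(request.get("headers", {}))  # copy; leave the caller's dict untouched
        headers["x-api-key"] = get_api_key()
        return send({**request, "headers": headers})
    return intercepted
```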

There are different options for where to add the API key to the request. While we show the interceptor as an example, it’s also possible to add the API key within a Lambda authorizer associated with the API Gateway instance.
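For the authorizer option, REST APIs let a Lambda authorizer return the key in a `usageIdentifierKey` field. API Gateway uses it only when the API’s key source is set to `AUTHORIZER`. The sketch below builds such a response. The principal, tenant ID, and key value are whatever your authorizer resolved earlier (for example, from the tenant record), and the `tenantId` context entry is a hypothetical addition.

```python
def build_authorizer_response(principal_id: str, method_arn: str,
                              tenant_id: str, api_key_value: str) -> dict:
    """Allow policy for a REST API Lambda authorizer that also supplies the API key."""
    return {
        "principalId": principal_id,
        "policyDocument": {
            "Version": "2012-10-17",
            "Statement": [{
                "Action": "execute-api:Invoke",
                "Effect": "Allow",
                "Resource": method_arn,
            }],
        },
        # Context values are passed to the integration; keep them to strings, numbers, or booleans.
        "context": {"tenantId": tenant_id},
        # Read by API Gateway only when the API key source is AUTHORIZER.
        "usageIdentifierKey": api_key_value,
    }
```

With this approach the client never handles the API key, at the cost of an extra lookup inside the authorizer.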

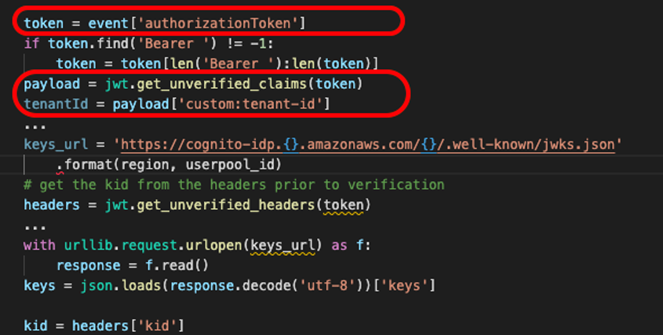

As you can see in Figure 8, the solution uses a Lambda authorizer that validates JWT tokens against different user pools. When a new tenant is created with our sample solution, one of the attributes we store in DynamoDB is information about the tenant’s IdP; in our case, the User Pool ID and App Client ID from Amazon Cognito. This gives our Lambda authorizer the information it needs to validate the JWT token from the client.

Figure 8 – Lambda authorizer and JWT token handling.

When the client request is submitted to Amazon API Gateway, the authorizer identifies the tenant based on the tenant-id custom claim in the JWT token and retrieves the IdP information from DynamoDB based on the tenant ID. Because the JWT token is validated before the request reaches the SaaS workload behind API Gateway, the services can trust the token and don’t have to deal with the complexity of validating tokens issued by different IdPs.
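The tenant-aware part of that flow can be sketched as follows: look up the tenant’s user pool and app client, then check that the token was issued by that pool for that client. This is deliberately incomplete. A real authorizer must also verify the token’s signature against the JWKS of the user pool returned by the lookup and check expiry, and both steps are omitted here. `lookup_tenant` stands in for the DynamoDB read, and the `custom:tenant-id` claim name is hypothetical. The issuer format and the use of `aud` for the app client ID follow Cognito ID tokens.

```python
def expected_issuer(region: str, user_pool_id: str) -> str:
    # Issuer format of tokens from an Amazon Cognito user pool.
    return f"https://cognito-idp.{region}.amazonaws.com/{user_pool_id}"


def check_tenant_claims(claims: dict, lookup_tenant, region: str) -> dict:
    """Match a token's issuer and audience against the tenant's stored IdP settings.

    lookup_tenant(tenant_id) returns {"userPoolId": ..., "appClientId": ...} or None.
    Signature and expiry verification are NOT performed here.
    """
    tenant_id = claims.get("custom:tenant-id")
    config = lookup_tenant(tenant_id) if tenant_id else None
    if config is None:
        raise PermissionError("unknown tenant")
    if claims.get("token_use") != "id":
        raise PermissionError("expected a Cognito ID token")
    if claims.get("iss") != expected_issuer(region, config["userPoolId"]):
        raise PermissionError("token was not issued by this tenant's user pool")
    if claims.get("aud") != config["appClientId"]:  # ID tokens carry the app client ID in "aud"
        raise PermissionError("token was issued for a different app client")
    return {"tenantId": tenant_id, **config}
```

Checking the issuer per tenant is what stops a valid token from one tenant’s user pool being accepted on behalf of another tenant.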

Conclusion

In this post, we examined key considerations for implementing throttling, tiering, and authentication in a multi-tenant Amazon EKS environment using Amazon API Gateway.

Amazon EKS offers a range of constructs to implement multitenancy in your SaaS solution, as we have previously seen in the AWS SaaS Factory EKS Reference Architecture and also the EKS workshop. This post dives into tiering and throttling challenges and the value that API Gateway brings in addressing those challenges.

Some key takeaways from the proposed architecture are:

- Use Amazon API Gateway, usage plans, and API keys for throttling tenant requests in your EKS SaaS environment.

- Tiering and tenant isolation, using a usage plan per tier and an API key per tenant.

- Authentication and authorization using Lambda authorizers.

As you dig into the sample application we have shared in this GitHub repository, you’ll get a better sense of the various building blocks of the solution that we have presented. The repo provides a more in-depth view of the solution and has detailed instructions to set up and deploy a sample environment, which helps in understanding all of the moving pieces of the environment.