AWS Big Data Blog

Use Amazon CloudWatch as a destination for Amazon Redshift audit logs

Amazon Redshift is a fast, scalable, secure, and fully-managed cloud data warehouse that makes it simple and cost-effective to analyze all of your data using standard SQL. Amazon Redshift has comprehensive security capabilities to satisfy the most demanding requirements. To help you to monitor the database for security and troubleshooting purposes, Amazon Redshift logs information about connections and user activities in your database. This process is called database auditing.

Amazon Redshift audit logging is useful for troubleshooting, monitoring, and security. It helps you identify suspicious queries by checking the connection and user logs to see who is connecting to the database. The logs include information such as the IP address of the user’s computer, the type of authentication used, and the timestamp of the request. Audit logs make it easy to identify who modified the data. Amazon Redshift logs SQL operations, including connection attempts, queries, and changes to your data warehouse. You can access these logs through SQL queries against system tables, save them to a secure Amazon Simple Storage Service (Amazon S3) location, or export them to Amazon CloudWatch. You can view your Amazon Redshift cluster’s operational metrics on the Amazon Redshift console, use CloudWatch, and query Amazon Redshift system tables directly from your cluster.

This post walks you through the process of configuring CloudWatch as an audit log destination. It also shows that enhanced Amazon Redshift audit logging reduces the latency of log delivery to either Amazon S3 or CloudWatch to a few minutes. You can enable audit logging to Amazon CloudWatch through the AWS Management Console, the AWS CLI, or the Amazon Redshift API.

Solution overview

Amazon Redshift logs information to two locations: system tables and log files.

- System tables: Amazon Redshift logs data to system tables automatically, and history data is available for two to five days based on log usage and available disk space. To extend the log data retention period in system tables, use the Amazon Redshift system object persistence utility from AWS Labs on GitHub. Analyzing logs through system tables requires Amazon Redshift database access and compute resources.

- Log files: Audit logging to CloudWatch or to Amazon S3 is an optional process. When you turn on logging on your cluster, you can choose to export audit logs to Amazon CloudWatch or Amazon S3. Once logging is enabled, it captures data from the time audit logging is enabled to the present time. Each logging update is a continuation of the previous logging update. Access to audit log files doesn’t require access to the Amazon Redshift database, and reviewing logs stored in Amazon S3 doesn’t require database computing resources. Audit log files are stored indefinitely in CloudWatch logs or Amazon S3 by default.

Amazon Redshift logs information in the following log files:

- Connection log – Provides information to monitor users connecting to the database, along with related connection information such as their IP address.

- User log – Logs information about changes to database user definitions.

- User activity log – Tracks information about the types of queries that both the users and the system perform in the database. It’s useful primarily for troubleshooting purposes.

Benefits of enhanced audit logging

For a better customer experience, the existing architecture of the audit logging solution has been improved to make audit logging more consistent across AWS services. This enhancement reduces log export latency from hours to minutes and provides fine-grained access control. Enhanced audit logging also improves the robustness of the existing delivery mechanism, which reduces the risk of data loss. With enhanced audit logging, you can export logs either to Amazon S3 or to CloudWatch.

The following section will show you how to configure audit logging using CloudWatch and its benefits.

Setting up CloudWatch as a log destination

Using CloudWatch to view logs is a recommended alternative to storing log files in Amazon S3. It’s simple to configure, and it may suit your monitoring requirements, especially if you already use it to monitor other services and applications.

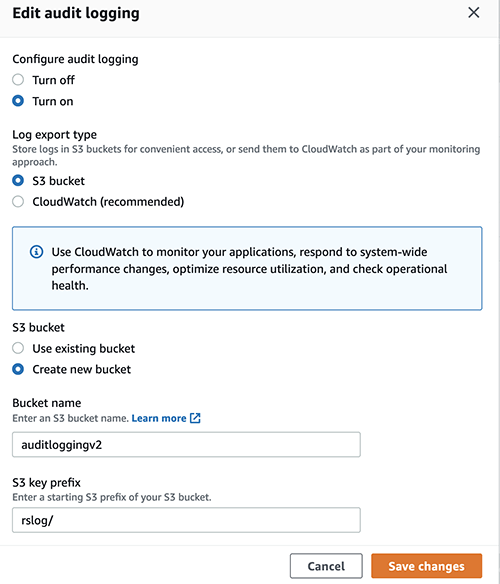

To set up CloudWatch as your log destination, complete the following steps:

- On the Amazon Redshift console, choose Clusters in the navigation pane.

This page lists the clusters in your account in the current Region. A subset of properties of each cluster is also displayed.

- Choose the cluster where you want to configure CloudWatch logs.

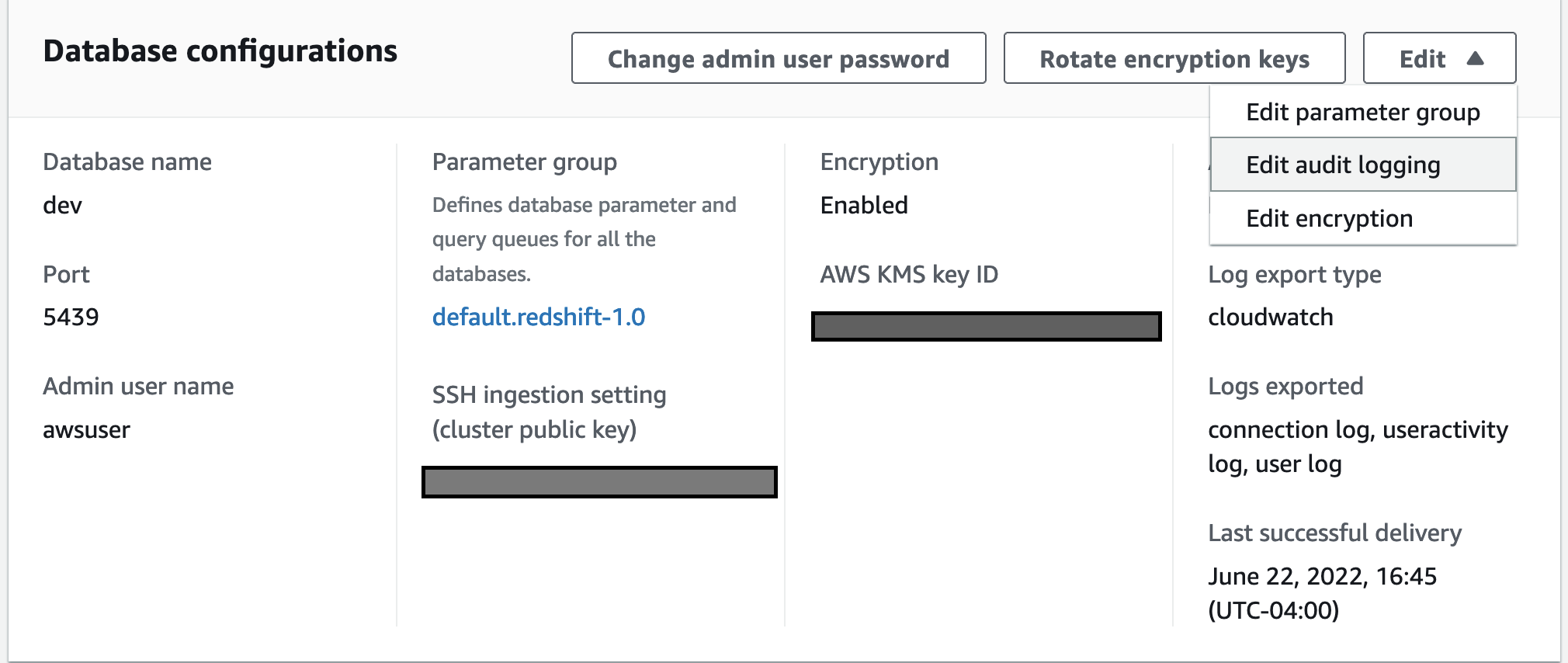

- Choose Properties to edit audit logging.

- For Configure audit logging, choose Turn on, and for Log export type, choose CloudWatch.

- Choose Save changes.
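
The same configuration can also be applied programmatically. The following is a minimal sketch using the `enable_logging` call of the boto3 Redshift client, assuming boto3 is installed and AWS credentials are configured. The helper names and the cluster identifier `my-redshift-cluster` are hypothetical and only serve as illustration.

```python
from typing import Any, Dict, List

# Log types that Amazon Redshift can export to CloudWatch.
CLOUDWATCH_LOG_TYPES = ("connectionlog", "userlog", "useractivitylog")


def build_cloudwatch_logging_request(cluster_id: str, log_types: List[str]) -> Dict[str, Any]:
    """Build the keyword arguments for the Redshift EnableLogging call."""
    unknown = sorted(set(log_types) - set(CLOUDWATCH_LOG_TYPES))
    if unknown:
        raise ValueError(f"Unsupported log types: {unknown}")
    return {
        "ClusterIdentifier": cluster_id,
        "LogDestinationType": "cloudwatch",
        "LogExports": list(log_types),
    }


def enable_cloudwatch_audit_logging(cluster_id: str, log_types: List[str]) -> Dict[str, Any]:
    """Turn on audit logging to CloudWatch (requires AWS credentials)."""
    import boto3  # imported lazily so the request builder works without boto3

    client = boto3.client("redshift")
    return client.enable_logging(**build_cloudwatch_logging_request(cluster_id, log_types))


if __name__ == "__main__":
    # "my-redshift-cluster" is a placeholder cluster identifier.
    print(build_cloudwatch_logging_request("my-redshift-cluster", ["connectionlog", "userlog"]))
```

Separating the request builder from the API call makes it possible to review or test the parameters before anything is changed on the cluster. Note that the user activity log is only produced when the `enable_user_activity_logging` parameter is turned on in the cluster's parameter group.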

Analyzing audit log in near real-time

To run SQL commands, we use Amazon Redshift query editor v2, a web-based tool that you can use to explore, analyze, share, and collaborate on data stored on Amazon Redshift. However, you can use any client tool of your choice to run SQL queries.

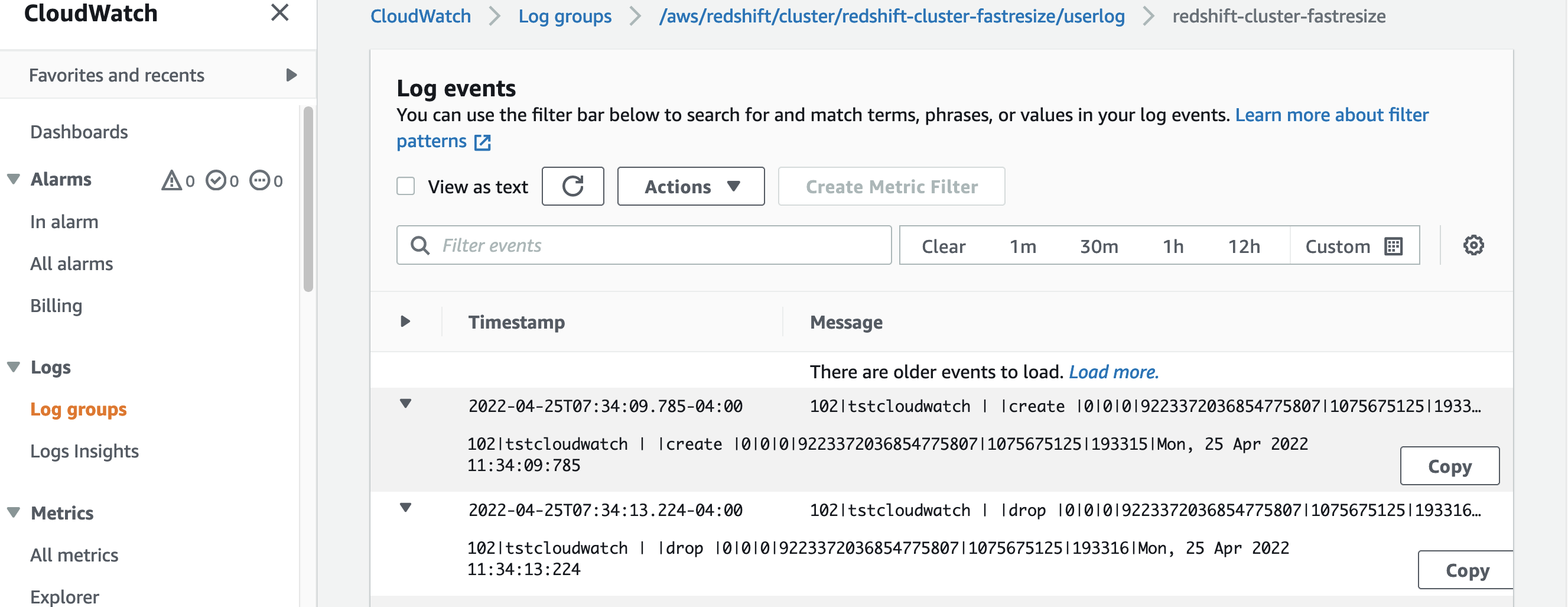

Now we’ll run some simple SQL statements and analyze the logs in CloudWatch in near real time.

- Run test SQL statements to create and drop a user.

- On the AWS Management Console, choose CloudWatch under Services, and then choose Log groups in the navigation pane.

- Select the userlog log group to see the user log entries, created in near real time in CloudWatch, for the test user that we just created and dropped.
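
The steps above can also be sketched in code. The example below lists a pair of test statements and searches the user log in CloudWatch for the test user with the boto3 `filter_log_events` call. It assumes boto3 and AWS credentials are available. The user name `test_audit_user`, the helper names, and the log group naming pattern `/aws/redshift/cluster/<cluster_name>/<log_type>` are assumptions to verify against your own account.

```python
import time
from typing import Any, Dict, List, Optional

# Hypothetical test statements; the user name is a placeholder.
TEST_SQL = (
    "CREATE USER test_audit_user PASSWORD DISABLE;",
    "DROP USER test_audit_user;",
)


def audit_log_group_name(cluster_id: str, log_type: str) -> str:
    """Assumed log group naming pattern for a provisioned cluster."""
    return f"/aws/redshift/cluster/{cluster_id}/{log_type}"


def build_filter_request(
    cluster_id: str,
    log_type: str,
    search_term: str,
    minutes_back: int = 15,
    now_ms: Optional[int] = None,
) -> Dict[str, Any]:
    """Build the arguments for a CloudWatch Logs FilterLogEvents call."""
    if now_ms is None:
        now_ms = int(time.time() * 1000)
    return {
        "logGroupName": audit_log_group_name(cluster_id, log_type),
        # A quoted term matches the exact phrase in the log message.
        "filterPattern": f'"{search_term}"',
        "startTime": now_ms - minutes_back * 60 * 1000,
    }


def find_audit_events(cluster_id: str, log_type: str, search_term: str) -> List[str]:
    """Return matching log messages (first page only; requires AWS credentials)."""
    import boto3  # imported lazily so the helpers above work without boto3

    logs = boto3.client("logs")
    response = logs.filter_log_events(**build_filter_request(cluster_id, log_type, search_term))
    return [event["message"] for event in response["events"]]
```

After running the statements in `TEST_SQL` from your SQL client, a call such as `find_audit_events("my-redshift-cluster", "userlog", "test_audit_user")` should return the matching entries once they have been delivered. The sketch reads only the first page of results; a longer search would follow the `nextToken` value in the response.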

Benefits of using CloudWatch as a log destination

- It’s easy to configure, as it doesn’t require you to modify bucket policies.

- It’s easy to view logs and search through logs for specific errors, patterns, fields, etc.

- You can have a centralized log solution across all AWS services.

- No need to build a custom solution such as AWS Lambda or Amazon Athena to analyze the logs.

- Logs will appear in near real-time.

- It has improved log latency from hours to just minutes.

- By default, log groups are encrypted in CloudWatch and you also have the option to use your own custom key.

- Fine-grained configuration of what log types to export based on your specific auditing requirements.

- It lets you export log groups’ logs to Amazon S3 if needed.

Setting up Amazon S3 as a log destination

Although using CloudWatch as a log destination is the recommended approach, you also have the option to use Amazon S3 as a log destination. When the log destination is set to an Amazon S3 location, enhanced audit logging checks the logs every 15 minutes and exports them to Amazon S3. You can configure Amazon S3 as a log destination from the console or through the AWS CLI.

Once you save the changes, the bucket policy is set using the Amazon Redshift service principal.

For additional details, refer to Amazon Redshift audit logging.

To enable logging through the AWS CLI, refer to db-auditing-cli-api.
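
As an illustration of the API route, the following minimal sketch enables logging to Amazon S3 with the boto3 `enable_logging` call and then reads the status back with `describe_logging_status`. It assumes boto3 and AWS credentials are available and that the bucket already grants Amazon Redshift the required access. The helper names, bucket name, and key prefix are hypothetical.

```python
from typing import Any, Dict


def build_s3_logging_request(cluster_id: str, bucket_name: str, key_prefix: str = "") -> Dict[str, Any]:
    """Build the keyword arguments for EnableLogging with an S3 destination."""
    request: Dict[str, Any] = {
        "ClusterIdentifier": cluster_id,
        "LogDestinationType": "s3",
        "BucketName": bucket_name,
    }
    if key_prefix:
        # The prefix is optional; it is prepended to the log object keys.
        request["S3KeyPrefix"] = key_prefix
    return request


def enable_s3_audit_logging(cluster_id: str, bucket_name: str, key_prefix: str = "") -> bool:
    """Enable logging to S3 and report whether logging is on (requires AWS credentials)."""
    import boto3  # imported lazily so the request builder works without boto3

    client = boto3.client("redshift")
    client.enable_logging(**build_s3_logging_request(cluster_id, bucket_name, key_prefix))
    status = client.describe_logging_status(ClusterIdentifier=cluster_id)
    return bool(status["LoggingEnabled"])


if __name__ == "__main__":
    # Placeholder identifiers for illustration only.
    print(build_s3_logging_request("my-redshift-cluster", "my-audit-log-bucket", "redshift-audit/"))
```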

Cost

Exporting logs to Amazon S3 can be more cost-efficient. However, given the benefits that CloudWatch provides, such as search, real-time access to data, and dashboards built from search results, CloudWatch can better suit those who perform log analysis.

For further details, refer to the following:

Best practices

Amazon Redshift uses the AWS security frameworks to implement industry-leading security in the areas of authentication, access control, auditing, logging, compliance, data protection, and network security. For more information, refer to Security in Amazon Redshift.

Audit logging to CloudWatch or to Amazon S3 is an optional process, but to have the complete picture of your Amazon Redshift usage, we always recommend enabling audit logging, particularly in cases where there are compliance requirements.

Log data is stored indefinitely in CloudWatch Logs or Amazon S3 by default. This may incur high, unexpected costs. We recommend that you configure how long to store log data in a log group or Amazon S3 to balance costs with compliance retention requirements. Apply the right compression to reduce the log file size.
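
For a CloudWatch log group, the retention period can be set with the boto3 `put_retention_policy` call. The sketch below assumes boto3 and AWS credentials are available. The helper names are hypothetical, and the list of accepted retention values reflects the CloudWatch Logs documentation at the time of writing, so verify it before relying on it.

```python
import bisect
from typing import Any, Dict

# Retention periods (in days) accepted by CloudWatch Logs; assumed list, verify in the docs.
ALLOWED_RETENTION_DAYS = (
    1, 3, 5, 7, 14, 30, 60, 90, 120, 150, 180, 365, 400, 545, 731,
    1096, 1827, 2192, 2557, 2922, 3288, 3653,
)


def nearest_allowed_retention(days: int) -> int:
    """Round a required retention period up to the next accepted value."""
    if days < 1:
        raise ValueError("Retention must be at least 1 day")
    index = bisect.bisect_left(ALLOWED_RETENTION_DAYS, days)
    if index == len(ALLOWED_RETENTION_DAYS):
        raise ValueError(f"{days} days exceeds the longest supported retention period")
    return ALLOWED_RETENTION_DAYS[index]


def build_retention_request(log_group_name: str, required_days: int) -> Dict[str, Any]:
    """Build the keyword arguments for a PutRetentionPolicy call."""
    return {
        "logGroupName": log_group_name,
        "retentionInDays": nearest_allowed_retention(required_days),
    }


def apply_retention(log_group_name: str, required_days: int) -> None:
    """Set the retention policy on a log group (requires AWS credentials)."""
    import boto3  # imported lazily so the helpers above work without boto3

    boto3.client("logs").put_retention_policy(**build_retention_request(log_group_name, required_days))
```

Rounding up rather than down keeps the configured period at least as long as the compliance requirement, at the cost of storing logs slightly longer than strictly needed.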

Conclusion

This post demonstrated how to get near real-time Amazon Redshift logs by using CloudWatch as a log destination with enhanced audit logging. This new functionality makes Amazon Redshift audit logging easier than ever, without the need to implement a custom solution to analyze logs. We also discussed how enhanced audit logging significantly reduces log latency on Amazon S3 and adds fine-grained access control compared to the previous version of audit logging.

Unauthorized access is a serious problem for most systems. As an administrator, you can start exporting logs to help prevent future occurrences of system failures, outages, corruption of information, and other security risks.

About the Authors

Nita Shah is an Analytics Specialist Solutions Architect at AWS based out of New York. She has been building data warehouse solutions for over 20 years and specializes in Amazon Redshift. She is focused on helping customers design and build enterprise-scale well-architected analytics and decision support platforms.

Evgenii Rublev is a Software Development Engineer on the Amazon Redshift team. He has worked on building end-to-end applications for over 10 years. He is passionate about innovations in building high-availability and high-performance applications to drive a better customer experience. Outside of work, Evgenii enjoys spending time with his family, traveling, and reading books.

Yanzhu Ji is a Product Manager on the Amazon Redshift team. She worked on the Amazon Redshift team as a Software Engineer before becoming a Product Manager. She has rich experience of how customer-facing Amazon Redshift features are built, from planning to launch, and always treats customers’ requirements as the first priority. In her personal life, Yanzhu likes painting, photography, and playing tennis.

Ryan Liddle is a Software Development Engineer on the Amazon Redshift team. His current focus is on delivering new features and behind the scenes improvements to best service Amazon Redshift customers. On the weekend he enjoys reading, exploring new running trails and discovering local restaurants.
