AWS Database Blog

How caresyntax uses managed database services for better surgical outcomes

This is a guest post from Ken Wu, Chief Technology Officer, and Steve Gordon, Director of Engineering at caresyntax.

caresyntax provides the needed tools to make surgery smarter and safer. Our solutions use IoT, analytics, and AI technologies to automate clinical and operational decision support for surgical teams and support all outcome contributors. We help caregivers better identify and manage risk, increase workflow efficiency, reduce surgical variability, and improve operational or clinical outcomes at the point of care. As we navigate COVID-19, our solutions provide strategies and insight for restarting and managing elective surgeries, tools to improve quality and safety, and virtual options for the new “normal.” As of this writing, caresyntax technologies are used in more than 8,000 operating rooms worldwide and support surgical teams in over 13 million procedures per year.

Our tool, Periop Insight, is a surgical data analytics solution for today’s busy perioperative leaders to improve their operating room (OR) performance. It is an enterprise software-as-a-service (SaaS) web application that works on desktop, tablet, and phone form factors. This application helps our customers drive meaningful and data-driven improvement to surgical workflow efficiency and financial performance. Periop Insight runs on AWS, and its data services architecture includes Amazon Aurora, Amazon DynamoDB, Amazon Redshift, and self-hosted open-source Redis on Amazon Elastic Compute Cloud (Amazon EC2).

The Periop Insight challenge

Although many modern data-centric applications deal with extremely large volumes of data, Periop Insight’s challenges stemmed from the complexity of the data, not volume. The largest customers rarely report more than a thousand surgical procedures in a day. Although the dataset is small, it’s extremely complex because it encompasses OR schedules, delays, cancellations, complications, exceptions, and cause/effect relationships between events in a highly dynamic environment. Our challenge is how to uncover meaningful and actionable insight from this small yet complex dataset to improve surgical efficiency. One example would be block utilization (how well hospitals are utilizing operating rooms to increase patient flow and improve care).
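To make the block utilization example concrete, here is a minimal sketch. It assumes one common definition (minutes of surgical cases that fall inside an allocated block, divided by the block's total minutes); the exact formula used in Periop Insight is not stated here, and the `Case` shape and `block_utilization` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    """One surgical case, with times in minutes since midnight (hypothetical shape)."""
    room: str
    start_min: int
    end_min: int


def block_utilization(cases, block_start_min, block_end_min):
    """Fraction of an allocated block that is occupied by cases.

    Assumes the cases passed in belong to one room and do not overlap each other.
    """
    allocated = block_end_min - block_start_min
    if allocated <= 0:
        raise ValueError("block must have positive length")
    used = 0
    for case in cases:
        # Count only the part of each case that falls inside the block.
        overlap = min(case.end_min, block_end_min) - max(case.start_min, block_start_min)
        used += max(0, overlap)
    return used / allocated


# A block from 07:00 to 15:00 (480 minutes) in a single room.
cases = [
    Case("OR-1", 7 * 60 + 30, 9 * 60 + 30),  # 120 minutes inside the block
    Case("OR-1", 10 * 60, 12 * 60),          # 120 minutes inside the block
    Case("OR-1", 14 * 60, 16 * 60),          # runs past 15:00, so only 60 minutes count
]
utilization = block_utilization(cases, 7 * 60, 15 * 60)
print(f"{utilization:.1%}")  # prints 62.5%
```

Even this simplified version shows why the data is complex rather than large: delays, overruns, and cancellations all change which minutes count toward the block.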

We needed a platform that could perform complex computations on relatively small volumes of data to produce an extremely versatile result set, optimized for both dashboard and deep drill-down reporting. It was also critical that the Periop Insight application be simple, intuitive, and responsive for end-users.

The data journey: From raw to meaningful

In its raw format, data doesn’t have much value until it goes through a long and painful journey of transformation, enrichment, and aggregation, and comes out at the other end as a new object (an insight), which is highly valued by the user. The question we faced was what tools and technologies we should choose to build a platform that not only meets our extremely challenging goal, but is also cost-effective. We knew there probably wasn’t a single technology that would allow us to accomplish what we needed; our solution required a combination of tools, or a “box of tools.”

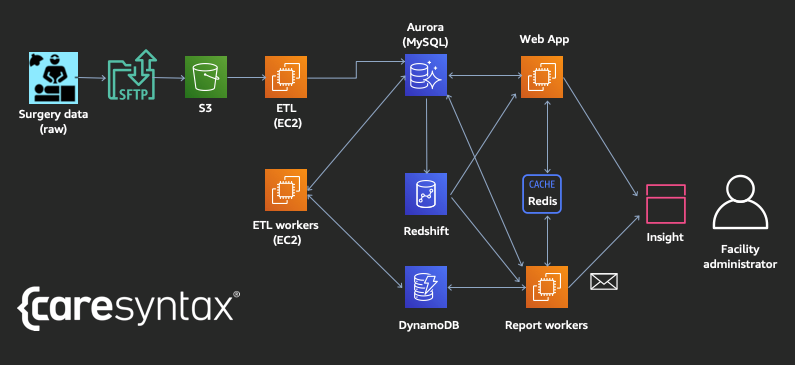

For us, AWS was an obvious choice for cloud infrastructure given its vast database and analytics services that we can use to solve different challenges along the data transformation journey. The following is the high-level system diagram for Periop Insight, composed of different AWS database services that help form a map that guides the data (such as facility scheduling data, timing of key phases of surgical procedures, or medical supply and implant materials used) as it travels from one end to the other, reborn as insights.

Our “box of tools” approach

Our “box of tools” approach on AWS was driven by the challenging requirement of building a highly scalable, fault-tolerant, responsive, and cost-effective platform for Periop Insight that can “slice and dice” the data to present a rich set of analytics. In this section, we share our reasons for choosing each service.

Amazon S3

Periop Insight uses Amazon Simple Storage Service (Amazon S3) to maintain long-term storage of raw primary data from clients in a cost-effective manner. In the rare instance where a reporting discrepancy occurs, we can reconcile it with the original data provided by the client to quickly determine where the discrepancy was introduced. More importantly, archiving source data provides flexibility to make design changes to the product and data pipeline without concern for any loss of data fidelity. Any data that was mutated or omitted during the ETL process can always be recovered.

ETL on Amazon EC2

From Amazon S3, a Pentaho ETL process was implemented to read the data, clean it, and load it into an operational data store in Aurora (MySQL-compatible edition). ETL worker EC2 instances are dynamically launched to perform the deep analysis and transform the operational data into a dimensional model fit for reporting. ETL and scheduled reports occur at specific designated times of day. Periop Insight reduces our costs by launching EC2 instances for these workloads only when necessary. As an added benefit, ETL work is divided and executed on several EC2 instances in parallel, thereby reducing the overall time to complete ETL and allowing us to scale well beyond current demand.
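The idea of dividing ETL work across several workers can be sketched as follows. This is an illustration under stated assumptions, not the Pentaho pipeline itself: splitting by customer facility is an assumption, the facility IDs and the `partition` and `run_etl_shard` functions are hypothetical, and local threads stand in for separately launched EC2 instances.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(items, worker_count):
    """Split items round-robin into worker_count lists (some may be empty)."""
    if worker_count < 1:
        raise ValueError("worker_count must be at least 1")
    shards = [[] for _ in range(worker_count)]
    for index, item in enumerate(items):
        shards[index % worker_count].append(item)
    return shards


def run_etl_shard(facility_ids):
    """Placeholder for the transform step that one worker would run."""
    return {facility_id: "transformed" for facility_id in facility_ids}


facility_ids = ["fac-a", "fac-b", "fac-c", "fac-d", "fac-e"]  # hypothetical IDs
shards = partition(facility_ids, worker_count=2)

# Threads stand in for worker instances that would be launched on demand.
with ThreadPoolExecutor(max_workers=len(shards)) as pool:
    shard_results = list(pool.map(run_etl_shard, shards))

# Combine the per-shard results once every worker has finished.
merged = {}
for result in shard_results:
    merged.update(result)
print(sorted(merged))
```

Because each shard is independent, adding workers shortens the overall run without changing the transform logic, which is the scaling property described above.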

Amazon Aurora

We chose Aurora for its performance and scalability. We built an Aurora cluster to host a set of dimensional tables in addition to using it as a transactional database. All of our data preparation is done in Aurora, which is more than capable of managing transactional data such as users, roles, and customer metadata. Although Aurora may not be the obvious choice for analytical workloads, it’s effective at these smaller data volumes. With Aurora, the app can also use a MySQL-compliant database and benefit from cloud-native features like autoscaling (great for occasional workloads like ETL and offline reporting) and managed backups.

Amazon Redshift

For certain higher-volume datasets, namely those related to the fine details of a surgical procedure including supplies and implants used, and associated cost, Aurora was limited in what it could do within reasonable response times. These larger and more complex datasets are loaded into Amazon Redshift. Periop Insight can achieve reporting response times of just a few seconds on Amazon Redshift. In addition, the platform uses Amazon Redshift for benchmark computation, which requires intensive calculations on data from many different dimensional tables and aggregates the data across many different layers. Amazon Redshift provides the performance the platform requires.

Amazon DynamoDB

In some use cases, you need to store highly transient or very dynamic data like report parameters. We have more than a dozen types of reports, and each scheduled report can have dozens of parameters associated with it. Maintaining a strict schema for each kind of report would create a rigid system where enhancements to reports involve changes to schema with data migrations. Instead, we use DynamoDB because it doesn’t require a strict schema. DynamoDB allows the app to store reporting parameters in a flexible manner without having to make heavy schema changes whenever reports are modified.
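A small sketch of what schema flexibility means for report parameters: two report types share the same key attributes but carry entirely different parameter sets. The attribute names (`schedule_id`, `report_type`, `parameters`), the report types, and the `build_report_item` helper are all hypothetical; the actual table design is not described here.

```python
def build_report_item(schedule_id, report_type, parameters):
    """Build one item; only the key attributes are fixed across report types."""
    if not schedule_id or not report_type:
        raise ValueError("schedule_id and report_type are required")
    return {
        "schedule_id": schedule_id,      # hypothetical partition key
        "report_type": report_type,
        # Free-form map: each report type decides which parameters it needs.
        "parameters": dict(parameters),
    }


utilization_item = build_report_item(
    "sched-001",
    "block_utilization",
    {"facility": "fac-a", "rooms": ["OR-1", "OR-2"], "lookback_days": 30},
)
delay_item = build_report_item(
    "sched-002",
    "first_case_delays",
    {"facility": "fac-a", "threshold_minutes": 10, "group_by": "surgeon"},
)

for item in (utilization_item, delay_item):
    print(item["report_type"], sorted(item["parameters"]))
```

With boto3, a dictionary like this could be written with `Table.put_item(Item=item)`. Adding a new parameter to one report type then requires no table change and no migration of existing items.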

Open-source Redis

Finally, as users navigate our application, they are bound to request the same data multiple times in their sessions. Multiple users of the same organization can log in throughout the day, and often multiple reports require the same subset of data. Periop Insight uses open-source Redis on Amazon EC2 as a caching layer for query results. By caching query results, it reduces the demand for more expensive database resources while simultaneously improving response times for our users.
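The caching described above follows the general cache-aside pattern, which can be sketched as below. To keep the example self-contained, an in-memory `TtlCache` class stands in for a Redis client; the key format, the five-minute expiry, and all function names are assumptions for illustration rather than details of the Periop Insight implementation.

```python
import hashlib
import json
import time


class TtlCache:
    """In-memory stand-in for a Redis client: get, and set with an expiry."""

    def __init__(self):
        self._entries = {}

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if time.monotonic() >= expires_at:
            del self._entries[key]  # expired entries behave like misses
            return None
        return value

    def set(self, key, value, ttl_seconds):
        self._entries[key] = (value, time.monotonic() + ttl_seconds)


def cache_key(org_id, query_name, params):
    """The same organization, query, and parameters always give the same key."""
    payload = json.dumps([org_id, query_name, params], sort_keys=True)
    return "report:" + hashlib.sha256(payload.encode("utf-8")).hexdigest()


def cached_query(cache, org_id, query_name, params, run_query, ttl_seconds=300):
    """Return a cached result if present; otherwise run the query and store it."""
    key = cache_key(org_id, query_name, params)
    hit = cache.get(key)
    if hit is not None:
        return json.loads(hit)
    result = run_query(params)
    cache.set(key, json.dumps(result), ttl_seconds)
    return result


calls = []


def slow_query(params):
    """Placeholder for an expensive database query; records each invocation."""
    calls.append(params)
    return {"rows": [["OR-1", 0.625]]}


cache = TtlCache()
first = cached_query(cache, "org-1", "block_utilization", {"facility": "fac-a"}, slow_query)
second = cached_query(cache, "org-1", "block_utilization", {"facility": "fac-a"}, slow_query)
print(len(calls))  # prints 1: the second request was served from the cache
```

Including the organization in the key keeps one customer's cached results from being served to another, and the expiry bounds how long a result can outlive the next ETL run.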

Summary

On the surface, Periop Insight is a typical analytical platform that analyzes data and presents it in a clean, user-friendly interface. Under the hood, a rich set of analytics is powered by a variety of AWS database services, each of which was chosen carefully for its strengths to solve specific issues or meet specific needs. AWS and its managed database services enable caresyntax to focus on serving more customers and developing new insights for better outcomes. In the future, with the vast historical data our platform has collected and continues to collect, we could use AWS AI and machine learning capabilities such as Amazon Forecast to predict things like case volume and block utilization to improve operation planning, and ultimately improve hospitals’ financial performance and patient satisfaction.

The content and opinions in this post are those of the third-party author and AWS is not responsible for the content or accuracy of this post.

About the Authors

Ken Wu is the Chief Technology Officer at caresyntax, responsible for the development and operation of the software products and SaaS platform that make surgery smarter and safer for healthcare providers and patients across the world. Ken has more than a decade of experience in developing enabling technology in the healthcare industry for providers, payers, and life science companies.

Steve Gordon is the Director of Engineering at caresyntax, responsible for the development and operation of Periop Insight and other data solutions. For 20 years, Steve has built world-class systems across a variety of industries including music, education, finance, business administration, marketing, and healthcare.